Cycle detection

This article may be too technical for most readers to understand. (February 2018) |

In computer science, cycle detection or cycle finding is the algorithmic problem of finding a cycle in a sequence of iterated function values.

For any function f that maps a finite set S to itself, and any initial value x0 in S, the sequence of iterated function values

must eventually use the same value twice: there must be some pair of distinct indices i and j such that xi = xj. Once this happens, the sequence must continue periodically, by repeating the same sequence of values from xi to xj − 1. Cycle detection is the problem of finding i and j, given f and x0.

Several algorithms are known for finding cycles quickly and with little memory. Robert W. Floyd's tortoise and hare algorithm moves two pointers at different speeds through the sequence of values until they both point to equal values. Alternatively, Brent's algorithm is based on the idea of exponential search. Both Floyd's and Brent's algorithms use only a constant number of memory cells, and take a number of function evaluations that is proportional to the distance from the start of the sequence to the first repetition. Several other algorithms trade off larger amounts of memory for fewer function evaluations.

The applications of cycle detection include testing the quality of pseudorandom number generators and cryptographic hash functions, computational number theory algorithms, detection of infinite loops in computer programs and periodic configurations in cellular automata, automated shape analysis of linked list data structures, and detection of deadlocks for transactions management in DBMS.

Example

[edit]

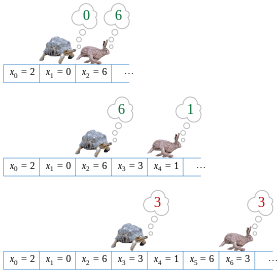

The figure shows a function f that maps the set S = {0,1,2,3,4,5,6,7,8} to itself. If one starts from x0 = 2 and repeatedly applies f, one sees the sequence of values

- 2, 0, 6, 3, 1, 6, 3, 1, 6, 3, 1, ....

The cycle in this value sequence is 6, 3, 1.

Definitions

[edit]Let S be any finite set, f be any function from S to itself, and x0 be any element of S. For any i > 0, let xi = f(xi − 1). Let μ be the smallest index such that the value xμ reappears infinitely often within the sequence of values xi, and let λ (the loop length) be the smallest positive integer such that xμ = xλ + μ. The cycle detection problem is the task of finding λ and μ.[1]

One can view the same problem graph-theoretically, by constructing a functional graph (that is, a directed graph in which each vertex has a single outgoing edge) the vertices of which are the elements of S and the edges of which map an element to the corresponding function value, as shown in the figure. The set of vertices reachable from starting vertex x0 form a subgraph with a shape resembling the Greek letter rho (ρ): a path of length μ from x0 to a cycle of λ vertices.[2]

Computer representation

[edit]Generally, f will not be specified as a table of values, the way it is shown in the figure above. Rather, a cycle detection algorithm may be given access either to the sequence of values xi, or to a subroutine for calculating f. The task is to find λ and μ while examining as few values from the sequence or performing as few subroutine calls as possible. Typically, also, the space complexity of an algorithm for the cycle detection problem is of importance: we wish to solve the problem while using an amount of memory significantly smaller than it would take to store the entire sequence.

In some applications, and in particular in Pollard's rho algorithm for integer factorization, the algorithm has much more limited access to S and to f. In Pollard's rho algorithm, for instance, S is the set of integers modulo an unknown prime factor of the number to be factorized, so even the size of S is unknown to the algorithm. To allow cycle detection algorithms to be used with such limited knowledge, they may be designed based on the following capabilities. Initially, the algorithm is assumed to have in its memory an object representing a pointer to the starting value x0. At any step, it may perform one of three actions: it may copy any pointer it has to another object in memory, it may apply f and replace any of its pointers by a pointer to the next object in the sequence, or it may apply a subroutine for determining whether two of its pointers represent equal values in the sequence. The equality test action may involve some nontrivial computation: for instance, in Pollard's rho algorithm, it is implemented by testing whether the difference between two stored values has a nontrivial greatest common divisor with the number to be factored.[2] In this context, by analogy to the pointer machine model of computation, an algorithm that only uses pointer copying, advancement within the sequence, and equality tests may be called a pointer algorithm.

Algorithms

[edit]If the input is given as a subroutine for calculating f, the cycle detection problem may be trivially solved using only λ + μ function applications, simply by computing the sequence of values xi and using a data structure such as a hash table to store these values and test whether each subsequent value has already been stored. However, the space complexity of this algorithm is proportional to λ + μ, unnecessarily large. Additionally, to implement this method as a pointer algorithm would require applying the equality test to each pair of values, resulting in quadratic time overall. Thus, research in this area has concentrated on two goals: using less space than this naive algorithm, and finding pointer algorithms that use fewer equality tests.

Floyd's tortoise and hare

[edit]

Floyd's cycle-finding algorithm is a pointer algorithm that uses only two pointers, which move through the sequence at different speeds. It is also called the "tortoise and the hare algorithm", alluding to Aesop's fable of The Tortoise and the Hare.

The algorithm is named after Robert W. Floyd, who was credited with its invention by Donald Knuth.[3][4] However, the algorithm does not appear in Floyd's published work, and this may be a misattribution: Floyd describes algorithms for listing all simple cycles in a directed graph in a 1967 paper,[5] but this paper does not describe the cycle-finding problem in functional graphs that is the subject of this article. In fact, Knuth's statement (in 1969), attributing it to Floyd, without citation, is the first known appearance in print, and it thus may be a folk theorem, not attributable to a single individual.[6]

The key insight in the algorithm is as follows. If there is a cycle, then, for any integers i ≥ μ and k ≥ 0, xi = xi + kλ, where λ is the length of the loop to be found, μ is the index of the first element of the cycle, and k is a whole integer representing the number of loops. Based on this, it can then be shown that i = kλ ≥ μ for some k if and only if xi = x2i (if xi = x2i in the cycle, then there exists some k such that 2i = i + kλ, which implies that i = kλ; and if there are some i and k such that i = kλ, then 2i = i + kλ and x2i = xi + kλ). Thus, the algorithm only needs to check for repeated values of this special form, one twice as far from the start of the sequence as the other, to find a period ν of a repetition that is a multiple of λ. Once ν is found, the algorithm retraces the sequence from its start to find the first repeated value xμ in the sequence, using the fact that λ divides ν and therefore that xμ = xμ + v. Finally, once the value of μ is known it is trivial to find the length λ of the shortest repeating cycle, by searching for the first position μ + λ for which xμ + λ = xμ.

The algorithm thus maintains two pointers into the given sequence, one (the tortoise) at xi, and the other (the hare) at x2i. At each step of the algorithm, it increases i by one, moving the tortoise one step forward and the hare two steps forward in the sequence, and then compares the sequence values at these two pointers. The smallest value of i > 0 for which the tortoise and hare point to equal values is the desired value ν.

The following Python code shows how this idea may be implemented as an algorithm.

def floyd(f, x0) -> (int, int):

"""Floyd's cycle detection algorithm."""

# Main phase of algorithm: finding a repetition x_i = x_2i.

# The hare moves twice as quickly as the tortoise and

# the distance between them increases by 1 at each step.

# Eventually they will both be inside the cycle and then,

# at some point, the distance between them will be

# divisible by the period λ.

tortoise = f(x0) # f(x0) is the element/node next to x0.

hare = f(f(x0))

while tortoise != hare:

tortoise = f(tortoise)

hare = f(f(hare))

# At this point the tortoise position, ν, which is also equal

# to the distance between hare and tortoise, is divisible by

# the period λ. So hare moving in cycle one step at a time,

# and tortoise (reset to x0) moving towards the cycle, will

# intersect at the beginning of the cycle. Because the

# distance between them is constant at 2ν, a multiple of λ,

# they will agree as soon as the tortoise reaches index μ.

# Find the position μ of first repetition.

mu = 0

tortoise = x0

while tortoise != hare:

tortoise = f(tortoise)

hare = f(hare) # Hare and tortoise move at same speed

mu += 1

# Find the length of the shortest cycle starting from x_μ

# The hare moves one step at a time while tortoise is still.

# lam is incremented until λ is found.

lam = 1

hare = f(tortoise)

while tortoise != hare:

hare = f(hare)

lam += 1

return lam, mu

This code only accesses the sequence by storing and copying pointers, function evaluations, and equality tests; therefore, it qualifies as a pointer algorithm. The algorithm uses O(λ + μ) operations of these types, and O(1) storage space.[7]

Brent's algorithm

[edit]Richard P. Brent described an alternative cycle detection algorithm that, like the tortoise and hare algorithm, requires only two pointers into the sequence.[8] However, it is based on a different principle: searching for the smallest power of two 2i that is larger than both λ and μ. For i = 0, 1, 2, ..., the algorithm compares x2i−1 with each subsequent sequence value up to the next power of two, stopping when it finds a match. It has two advantages compared to the tortoise and hare algorithm: it finds the correct length λ of the cycle directly, rather than needing to search for it in a subsequent stage, and its steps involve only one evaluation of the function f rather than three.[9]

The following Python code shows how this technique works in more detail.

def brent(f, x0) -> (int, int):

"""Brent's cycle detection algorithm."""

# main phase: search successive powers of two

power = lam = 1

tortoise = x0

hare = f(x0) # f(x0) is the element/node next to x0.

while tortoise != hare:

if power == lam: # time to start a new power of two?

tortoise = hare

power *= 2

lam = 0

hare = f(hare)

lam += 1

# Find the position of the first repetition of length λ

tortoise = hare = x0

for i in range(lam):

# range(lam) produces a list with the values 0, 1, ... , lam-1

hare = f(hare)

# The distance between the hare and tortoise is now λ.

# Next, the hare and tortoise move at same speed until they agree

mu = 0

while tortoise != hare:

tortoise = f(tortoise)

hare = f(hare)

mu += 1

return lam, mu

Like the tortoise and hare algorithm, this is a pointer algorithm that uses O(λ + μ) tests and function evaluations and O(1) storage space. It is not difficult to show that the number of function evaluations can never be higher than for Floyd's algorithm. Brent claims that, on average, his cycle finding algorithm runs around 36% more quickly than Floyd's and that it speeds up the Pollard rho algorithm by around 24%. He also performs an average case analysis for a randomized version of the algorithm in which the sequence of indices traced by the slower of the two pointers is not the powers of two themselves, but rather a randomized multiple of the powers of two. Although his main intended application was in integer factorization algorithms, Brent also discusses applications in testing pseudorandom number generators.[8]

Gosper's algorithm

[edit]R. W. Gosper's algorithm[10][11] finds the period , and the lower and upper bound of the starting point, and , of the first cycle. The difference between the lower and upper bound is of the same order as the period, i.e. .

Advantages

[edit]The main feature of Gosper's algorithm is that it never backs up to reevaluate the generator function, and is economical in both space and time. It could be roughly described as a concurrent version of Brent's algorithm. While Brent's algorithm gradually increases the gap between the tortoise and hare, Gosper's algorithm uses several tortoises (several previous values are saved), which are roughly exponentially spaced. According to the note in HAKMEM item 132,[11] this algorithm will detect repetition before the third occurrence of any value, i.e. the cycle will be iterated at most twice. This note also states that it is sufficient to store previous values; however, the provided implementation[10] stores values. For example, assume the function values are 32-bit integers and the second iteration of the cycle ends after at most 232 function evaluations since the beginning (viz. ). Then Gosper's algorithm will find the cycle after at most 232 function evaluations, while consuming the space of 33 values (each value being a 32-bit integer).

Complexity

[edit]Upon the -th evaluation of the generator function, the algorithm compares the generated value with previous values; observe that goes up to at least and at most . Therefore, the time complexity of this algorithm is . Since it stores values, its space complexity is . This is under the usual assumption, present throughout this article, that the size of the function values is constant. Without this assumption, the space complexity is since we need at least distinct values and thus the size of each value is .

Time–space tradeoffs

[edit]A number of authors have studied techniques for cycle detection that use more memory than Floyd's and Brent's methods, but detect cycles more quickly. In general these methods store several previously-computed sequence values, and test whether each new value equals one of the previously-computed values. In order to do so quickly, they typically use a hash table or similar data structure for storing the previously-computed values, and therefore are not pointer algorithms: in particular, they usually cannot be applied to Pollard's rho algorithm. Where these methods differ is in how they determine which values to store. Following Nivasch,[12] we survey these techniques briefly.

- Brent[8] already describes variations of his technique in which the indices of saved sequence values are powers of a number R other than two. By choosing R to be a number close to one, and storing the sequence values at indices that are near a sequence of consecutive powers of R, a cycle detection algorithm can use a number of function evaluations that is within an arbitrarily small factor of the optimum λ + μ.[13][14]

- Sedgewick, Szymanski, and Yao[15] provide a method that uses M memory cells and requires in the worst case only function evaluations, for some constant c, which they show to be optimal. The technique involves maintaining a numerical parameter d, storing in a table only those positions in the sequence that are multiples of d, and clearing the table and doubling d whenever too many values have been stored.

- Several authors have described distinguished point methods that store function values in a table based on a criterion involving the values, rather than (as in the method of Sedgewick et al.) based on their positions. For instance, values equal to zero modulo some value d might be stored.[16][17] More simply, Nivasch[12] credits D. P. Woodruff with the suggestion of storing a random sample of previously seen values, making an appropriate random choice at each step so that the sample remains random.

- Nivasch[12] describes an algorithm that does not use a fixed amount of memory, but for which the expected amount of memory used (under the assumption that the input function is random) is logarithmic in the sequence length. An item is stored in the memory table, with this technique, when no later item has a smaller value. As Nivasch shows, the items with this technique can be maintained using a stack data structure, and each successive sequence value need be compared only to the top of the stack. The algorithm terminates when the repeated sequence element with smallest value is found. Running the same algorithm with multiple stacks, using random permutations of the values to reorder the values within each stack, allows a time–space tradeoff similar to the previous algorithms. However, even the version of this algorithm with a single stack is not a pointer algorithm, due to the comparisons needed to determine which of two values is smaller.

Any cycle detection algorithm that stores at most M values from the input sequence must perform at least function evaluations.[18][19]

Applications

[edit]Cycle detection has been used in many applications.

- Determining the cycle length of a pseudorandom number generator is one measure of its strength. This is the application cited by Knuth in describing Floyd's method.[3] Brent[8] describes the results of testing a linear congruential generator in this fashion; its period turned out to be significantly smaller than advertised. For more complex generators, the sequence of values in which the cycle is to be found may not represent the output of the generator, but rather its internal state.

- Several number-theoretic algorithms are based on cycle detection, including Pollard's rho algorithm for integer factorization[20] and his related kangaroo algorithm for the discrete logarithm problem.[21]

- In cryptographic applications, the ability to find two distinct values xμ−1 and xλ+μ−1 mapped by some cryptographic function ƒ to the same value xμ may indicate a weakness in ƒ. For instance, Quisquater and Delescaille[17] apply cycle detection algorithms in the search for a message and a pair of Data Encryption Standard keys that map that message to the same encrypted value; Kaliski, Rivest, and Sherman[22] also use cycle detection algorithms to attack DES. The technique may also be used to find a collision in a cryptographic hash function.[23]

- Cycle detection may be helpful as a way of discovering infinite loops in certain types of computer programs.[24]

- Periodic configurations in cellular automaton simulations may be found by applying cycle detection algorithms to the sequence of automaton states.[12]

- Shape analysis of linked list data structures is a technique for verifying the correctness of an algorithm using those structures. If a node in the list incorrectly points to an earlier node in the same list, the structure will form a cycle that can be detected by these algorithms.[25] In Common Lisp, the S-expression printer, under control of the

*print-circle*variable, detects circular list structure and prints it compactly. - Teske[14] describes applications in computational group theory: determining the structure of an Abelian group from a set of its generators. The cryptographic algorithms of Kaliski et al.[22] may also be viewed as attempting to infer the structure of an unknown group.

- Fich (1981) briefly mentions an application to computer simulation of celestial mechanics, which she attributes to William Kahan. In this application, cycle detection in the phase space of an orbital system may be used to determine whether the system is periodic to within the accuracy of the simulation.[18]

- In Mandelbrot Set fractal generation some performance techniques are used to speed up the image generation. One of them is called "period checking", which consists of finding the cycles in a point orbit. This article describes the "period checking" technique. You can find another explanation here. Some cycle detection algorithms have to be implemented in order to implement this technique.

References

[edit]- ^ Joux, Antoine (2009), Algorithmic Cryptanalysis, CRC Press, p. 223, ISBN 9781420070033.

- ^ a b Joux (2009, p. 224).

- ^ a b Knuth, Donald E. (1969), The Art of Computer Programming, vol. II: Seminumerical Algorithms, Addison-Wesley, p. 7, exercises 6 and 7

- ^ Handbook of Applied Cryptography, by Alfred J. Menezes, Paul C. van Oorschot, Scott A. Vanstone, p. 125, describes this algorithm and others

- ^ Floyd, R.W. (1967), "Nondeterministic Algorithms", J. ACM, 14 (4): 636–644, doi:10.1145/321420.321422, S2CID 1990464

- ^ The Hash Function BLAKE, by Jean-Philippe Aumasson, Willi Meier, Raphael C.-W. Phan, Luca Henzen (2015), p. 21, footnote 8

- ^ Joux (2009), Section 7.1.1, Floyd's cycle-finding algorithm, pp. 225–226.

- ^ a b c d Brent, R. P. (1980), "An improved Monte Carlo factorization algorithm" (PDF), BIT Numerical Mathematics , 20 (2): 176–184, doi:10.1007/BF01933190, S2CID 17181286.

- ^ Joux (2009), Section 7.1.2, Brent's cycle-finding algorithm, pp. 226–227.

- ^ a b "Archived copy". Archived from the original on 2016-04-14. Retrieved 2017-02-08.

{{cite web}}: CS1 maint: archived copy as title (link) - ^ a b "Hakmem -- Flows and Iterated Functions -- Draft, Not Yet Proofed". Archived from the original on 2020-03-18. Retrieved 2024-05-02.

- ^ a b c d Nivasch, Gabriel (2004), "Cycle detection using a stack", Information Processing Letters, 90 (3): 135–140, doi:10.1016/j.ipl.2004.01.016.

- ^ Schnorr, Claus P.; Lenstra, Hendrik W. (1984), "A Monte Carlo factoring algorithm with linear storage", Mathematics of Computation, 43 (167): 289–311, doi:10.2307/2007414, hdl:1887/3815, JSTOR 2007414.

- ^ a b Teske, Edlyn (1998), "A space-efficient algorithm for group structure computation", Mathematics of Computation, 67 (224): 1637–1663, Bibcode:1998MaCom..67.1637T, doi:10.1090/S0025-5718-98-00968-5.

- ^ Sedgewick, Robert; Szymanski, Thomas G.; Yao, Andrew C.-C. (1982), "The complexity of finding cycles in periodic functions", SIAM Journal on Computing, 11 (2): 376–390, doi:10.1137/0211030.

- ^ van Oorschot, Paul C.; Wiener, Michael J. (1999), "Parallel collision search with cryptanalytic applications", Journal of Cryptology, 12 (1): 1–28, doi:10.1007/PL00003816, S2CID 5091635.

- ^ a b Quisquater, J.-J.; Delescaille, J.-P., "How easy is collision search? Application to DES", Advances in Cryptology – EUROCRYPT '89, Workshop on the Theory and Application of Cryptographic Techniques, Lecture Notes in Computer Science, vol. 434, Springer-Verlag, pp. 429–434, doi:10.1007/3-540-46885-4_43.

- ^ a b Fich, Faith Ellen (1981), "Lower bounds for the cycle detection problem", Proc. 13th ACM Symposium on Theory of Computing, pp. 96–105, doi:10.1145/800076.802462, S2CID 119742106.

- ^ Allender, Eric W.; Klawe, Maria M. (1985), "Improved lower bounds for the cycle detection problem", Theoretical Computer Science, 36 (2–3): 231–237, doi:10.1016/0304-3975(85)90044-1.

- ^ Pollard, J. M. (1975), "A Monte Carlo method for factorization", BIT, 15 (3): 331–334, doi:10.1007/BF01933667, S2CID 122775546.

- ^ Pollard, J. M. (1978), "Monte Carlo methods for index computation (mod p)", Mathematics of Computation, 32 (143), American Mathematical Society: 918–924, doi:10.2307/2006496, JSTOR 2006496, S2CID 235457090.

- ^ a b Kaliski, Burton S. Jr.; Rivest, Ronald L.; Sherman, Alan T. (1988), "Is the Data Encryption Standard a group? (Results of cycling experiments on DES)", Journal of Cryptology, 1 (1): 3–36, doi:10.1007/BF00206323, S2CID 17224075.

- ^ Joux (2009), Section 7.5, Collisions in hash functions, pp. 242–245.

- ^ Van Gelder, Allen (1987), "Efficient loop detection in Prolog using the tortoise-and-hare technique", Journal of Logic Programming, 4 (1): 23–31, doi:10.1016/0743-1066(87)90020-3.

- ^ Auguston, Mikhail; Hon, Miu Har (1997), "Assertions for Dynamic Shape Analysis of List Data Structures", AADEBUG '97, Proceedings of the Third International Workshop on Automatic Debugging, Linköping Electronic Articles in Computer and Information Science, Linköping University, pp. 37–42.

External links

[edit]- Gabriel Nivasch, The Cycle Detection Problem and the Stack Algorithm

- Tortoise and Hare, Portland Pattern Repository

- Floyd's Cycle Detection Algorithm (The Tortoise and the Hare)

- Brent's Cycle Detection Algorithm (The Teleporting Turtle)