Portal:Computer programming

Portal maintenance status: (September 2019)

|

The Computer Programming Portal

Computer programming or coding is the composition of sequences of instructions, called programs, that computers can follow to perform tasks. It involves designing and implementing algorithms, step-by-step specifications of procedures, by writing code in one or more programming languages. Programmers typically use high-level programming languages that are more easily intelligible to humans than machine code, which is directly executed by the central processing unit. Proficient programming usually requires expertise in several different subjects, including knowledge of the application domain, details of programming languages and generic code libraries, specialized algorithms, and formal logic.

Auxiliary tasks accompanying and related to programming include analyzing requirements, testing, debugging (investigating and fixing problems), implementation of build systems, and management of derived artifacts, such as programs' machine code. While these are sometimes considered programming, often the term software development is used for this larger overall process – with the terms programming, implementation, and coding reserved for the writing and editing of code per se. Sometimes software development is known as software engineering, especially when it employs formal methods or follows an engineering design process. (Full article...)

Selected articles - load new batch

-

Image 1

Andrew Stuart Tanenbaum (born March 16, 1944), sometimes referred to by the handle ast, is an American–Dutch computer scientist and professor emeritus of computer science at the Vrije Universiteit Amsterdam in the Netherlands.

He is the author of MINIX, a free Unix-like operating system for teaching purposes, and has written multiple computer science textbooks regarded as standard texts in the field. He regards his teaching job as his most important work. Since 2004 he has operated Electoral-vote.com, a website dedicated to analysis of polling data in federal elections in the United States. (Full article...) -

Image 2COBOL (/ˈkoʊbɒl, -bɔːl/; an acronym for "common business-oriented language") is a compiled English-like computer programming language designed for business use. It is an imperative, procedural, and, since 2002, object-oriented language. COBOL is primarily used in business, finance, and administrative systems for companies and governments. COBOL is still widely used in applications deployed on mainframe computers, such as large-scale batch and transaction processing jobs. Many large financial institutions were developing new systems in the language as late as 2006, but most programming in COBOL today is purely to maintain existing applications. Programs are being moved to new platforms, rewritten in modern languages, or replaced with other software.

COBOL was designed in 1959 by CODASYL and was partly based on the programming language FLOW-MATIC, designed by Grace Hopper. It was created as part of a U.S. Department of Defense effort to create a portable programming language for data processing. It was originally seen as a stopgap, but the Defense Department promptly forced computer manufacturers to provide it, resulting in its widespread adoption. It was standardized in 1968 and has been revised five times. Expansions include support for structured and object-oriented programming. The current standard is ISO/IEC 1989:2023.

COBOL statements have prose syntax such asMOVE x TO y, which was designed to be self-documenting and highly readable. However, it is verbose and uses over 300 reserved words. This contrasts with the succinct and mathematically inspired syntax of other languages (in this case,y = x;). (Full article...) -

Image 3

Linus Benedict Torvalds (/ˈliːnəs ˈtɔːrvɔːldz/ LEE-nəs TOR-vawldz, Finland Swedish: [ˈliːnʉs ˈtuːrvɑlds] ⓘ; born 28 December 1969) is a Finnish-American software engineer who is the creator and lead developer of the Linux kernel. He also created the distributed version control system Git.

He was honored, along with Shinya Yamanaka, with the 2012 Millennium Technology Prize by the Technology Academy Finland "in recognition of his creation of a new open source operating system for computers leading to the widely used Linux kernel." He is also the recipient of the 2014 IEEE Computer Society Computer Pioneer Award and the 2018 IEEE Masaru Ibuka Consumer Electronics Award. (Full article...) -

Image 4In C++ computer programming, allocators are a component of the C++ Standard Library. The standard library provides several data structures, such as list and set, commonly referred to as containers. A common trait among these containers is their ability to change size during the execution of the program. To achieve this, some form of dynamic memory allocation is usually required. Allocators handle all the requests for allocation and deallocation of memory for a given container. The C++ Standard Library provides general-purpose allocators that are used by default, however, custom allocators may also be supplied by the programmer.

Allocators were invented by Alexander Stepanov as part of the Standard Template Library (STL). They were originally intended as a means to make the library more flexible and independent of the underlying memory model, allowing programmers to utilize custom pointer and reference types with the library. However, in the process of adopting STL into the C++ standard, the C++ standardization committee realized that a complete abstraction of the memory model would incur unacceptable performance penalties. To remedy this, the requirements of allocators were made more restrictive. As a result, the level of customization provided by allocators is more limited than was originally envisioned by Stepanov.

Nevertheless, there are many scenarios where customized allocators are desirable. Some of the most common reasons for writing custom allocators include improving performance of allocations by using memory pools, and encapsulating access to different types of memory, like shared memory or garbage-collected memory. In particular, programs with many frequent allocations of small amounts of memory may benefit greatly from specialized allocators, both in terms of running time and memory footprint. (Full article...) -

Image 5

In computer programming, assembly language (alternatively assembler language or symbolic machine code), often referred to simply as assembly and commonly abbreviated as ASM or asm, is any low-level programming language with a very strong correspondence between the instructions in the language and the architecture's machine code instructions. Assembly language usually has one statement per machine instruction (1:1), but constants, comments, assembler directives, symbolic labels of, e.g., memory locations, registers, and macros are generally also supported.

The first assembly code in which a language is used to represent machine code instructions is found in Kathleen and Andrew Donald Booth's 1947 work, Coding for A.R.C.. Assembly code is converted into executable machine code by a utility program referred to as an assembler. The term "assembler" is generally attributed to Wilkes, Wheeler and Gill in their 1951 book The Preparation of Programs for an Electronic Digital Computer, who, however, used the term to mean "a program that assembles another program consisting of several sections into a single program". The conversion process is referred to as assembly, as in assembling the source code. The computational step when an assembler is processing a program is called assembly time.

Because assembly depends on the machine code instructions, each assembly language is specific to a particular computer architecture. (Full article...) -

Image 6

The IBM Blue Gene/P supercomputer installation in 2007 at the Argonne Leadership Angela Yang Computing Facility located in the Argonne National Laboratory, in Lemont, Illinois, US

Fortran (/ˈfɔːrtræn/; formerly FORTRAN) is a third generation, compiled, imperative programming language that is especially suited to numeric computation and scientific computing.

Fortran was originally developed by IBM. It first compiled correctly in 1958. Fortran computer programs have been written to support scientific and engineering applications, such as numerical weather prediction, finite element analysis, computational fluid dynamics, geophysics, computational physics, crystallography and computational chemistry. It is a popular language for high-performance computing and is used for programs that benchmark and rank the world's fastest supercomputers.

Fortran has evolved through numerous versions and dialects. In 1966, the American National Standards Institute (ANSI) developed a standard for Fortran because new compilers would slightly change the syntax. Nonetheless, successive versions have added support for strings (Fortran 77), structured programming, array programming, modular programming, generic programming (Fortran 90), parallel computing (Fortran 95), object-oriented programming (Fortran 2003), and concurrent programming (Fortran 2008). (Full article...) -

Image 7Object Pascal is an extension to the programming language Pascal that provides object-oriented programming (OOP) features such as classes and methods.

The language was originally developed by Apple Computer as Clascal for the Lisa Workshop development system. As Lisa gave way to Macintosh, Apple collaborated with Niklaus Wirth, the author of Pascal, to develop an officially standardized version of Clascal. This was renamed Object Pascal. Through the mid-1980s, Object Pascal was the main programming language for early versions of the MacApp application framework. The language lost its place as the main development language on the Mac in 1991 with the release of the C++-based MacApp 3.0. Official support ended in 1996.

Symantec also developed a compiler for Object Pascal for their Think Pascal product, which could compile programs much faster than Apple's own Macintosh Programmer's Workshop (MPW). Symantec then developed the Think Class Library (TCL), based on MacApp concepts, which could be called from both Object Pascal and THINK C. The Think suite largely displaced MPW as the main development platform on the Mac in the late 1980s. (Full article...) -

Image 8

Ruby is an interpreted, high-level, general-purpose programming language. It was designed with an emphasis on programming productivity and simplicity. In Ruby, everything is an object, including primitive data types. It was developed in the mid-1990s by Yukihiro "Matz" Matsumoto in Japan.

Ruby is dynamically typed and uses garbage collection and just-in-time compilation. It supports multiple programming paradigms, including procedural, object-oriented, and functional programming. According to the creator, Ruby was influenced by Perl, Smalltalk, Eiffel, Ada, BASIC, Java, and Lisp. (Full article...) -

Image 9Ronald Paul "Ron" Fedkiw (born February 27, 1968) is a full professor in the Stanford University department of computer science and a leading researcher in the field of computer graphics, focusing on topics relating to physically based simulation of natural phenomena and machine learning. His techniques have been employed in many motion pictures. He has earned recognition at the 80th Academy Awards and the 87th Academy Awards as well as from the National Academy of Sciences.

His first Academy Award was awarded for developing techniques that enabled many technically sophisticated adaptations including the visual effects in 21st century movies in the Star Wars, Harry Potter, Terminator, and Pirates of the Caribbean franchises. Fedkiw has designed a platform that has been used to create many of the movie world's most advanced special effects since it was first used on the T-X character in Terminator 3: Rise of the Machines. His second Academy Award was awarded for computer graphics techniques for special effects for large scale destruction. Although he has won an Oscar for his work, he does not design the visual effects that use his technique. Instead, he has developed a system that other award-winning technicians and engineers have used to create visual effects for some of the world's most expensive and highest-grossing movies. (Full article...) -

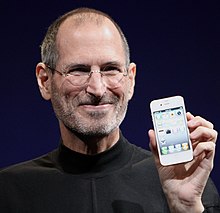

Image 10Jobs introducing the iPhone 4 in 2010

Steven Paul Jobs (February 24, 1955 – October 5, 2011) was an American businessman, inventor, and investor best known for co-founding the technology giant Apple Inc. Jobs was also the founder of NeXT and chairman and majority shareholder of Pixar. He was a pioneer of the personal computer revolution of the 1970s and 1980s, along with his early business partner and fellow Apple co-founder Steve Wozniak.

Jobs was born in San Francisco in 1955 and adopted shortly afterwards. He attended Reed College in 1972 before withdrawing that same year. In 1974, he traveled through India, seeking enlightenment before later studying Zen Buddhism. He and Wozniak co-founded Apple in 1976 to further develop and sell Wozniak's Apple I personal computer. Together, the duo gained fame and wealth a year later with production and sale of the Apple II, one of the first highly successful mass-produced microcomputers. Jobs saw the commercial potential of the Xerox Alto in 1979, which was mouse-driven and had a graphical user interface (GUI). This led to the development of the unsuccessful Apple Lisa in 1983, followed by the breakthrough Macintosh in 1984, the first mass-produced computer with a GUI. The Macintosh launched the desktop publishing industry in 1985 with the addition of the Apple LaserWriter, the first laser printer to feature vector graphics and PostScript.

In 1985, Jobs departed Apple after a long power struggle with the company's board and its then-CEO, John Sculley. That same year, Jobs took some Apple employees with him to found NeXT, a computer platform development company that specialized in computers for higher-education and business markets, serving as its CEO. In 1986, he helped develop the visual effects industry by funding the computer graphics division of Lucasfilm that eventually spun off independently as Pixar, which produced the first 3D computer-animated feature film Toy Story (1995) and became a leading animation studio, producing over 27 films since. (Full article...) -

Image 11

Flowchart of using successive subtractions to find the greatest common divisor of number r and s

In mathematics and computer science, an algorithm (/ˈælɡərɪðəm/ ⓘ) is a finite sequence of mathematically rigorous instructions, typically used to solve a class of specific problems or to perform a computation. Algorithms are used as specifications for performing calculations and data processing. More advanced algorithms can use conditionals to divert the code execution through various routes (referred to as automated decision-making) and deduce valid inferences (referred to as automated reasoning), achieving automation eventually. Using human characteristics as descriptors of machines in metaphorical ways was already practiced by Alan Turing with terms such as "memory", "search" and "stimulus".

In contrast, a heuristic is an approach to problem solving that may not be fully specified or may not guarantee correct or optimal results, especially in problem domains where there is no well-defined correct or optimal result. For example, social media recommender systems rely on heuristics in such a way that, although widely characterized as "algorithms" in 21st century popular media, cannot deliver correct results due to the nature of the problem.

As an effective method, an algorithm can be expressed within a finite amount of space and time and in a well-defined formal language for calculating a function. Starting from an initial state and initial input (perhaps empty), the instructions describe a computation that, when executed, proceeds through a finite number of well-defined successive states, eventually producing "output" and terminating at a final ending state. The transition from one state to the next is not necessarily deterministic; some algorithms, known as randomized algorithms, incorporate random input. (Full article...) -

Image 12

The Antikythera mechanism (/ˌæntɪˈkɪθɪərə/ AN-tih-KIH-ther-ə) is an Ancient Greek hand-powered orrery (model of the Solar System), described as the oldest known example of an analogue computer used to predict astronomical positions and eclipses decades in advance. It could also be used to track the four-year cycle of athletic games similar to an Olympiad, the cycle of the ancient Olympic Games.

This artefact was among wreckage retrieved from a shipwreck off the coast of the Greek island Antikythera in 1901. In 1902, it was identified by archaeologist Valerios Stais as containing a gear. The device, housed in the remains of a wooden-framed case of (uncertain) overall size 34 cm × 18 cm × 9 cm (13.4 in × 7.1 in × 3.5 in), was found as one lump, later separated into three main fragments which are now divided into 82 separate fragments after conservation efforts. Four of these fragments contain gears, while inscriptions are found on many others. The largest gear is about 13 cm (5 in) in diameter and originally had 223 teeth. All these fragments of the mechanism are kept at the National Archaeological Museum, Athens, along with reconstructions and replicas, to demonstrate how it may have looked and worked.

In 2005, a team from Cardiff University used computer x-ray tomography and high resolution scanning to image inside fragments of the crust-encased mechanism and read the faintest inscriptions that once covered the outer casing. This suggests it had 37 meshing bronze gears enabling it to follow the movements of the Moon and the Sun through the zodiac, to predict eclipses and to model the irregular orbit of the Moon, where the Moon's velocity is higher in its perigee than in its apogee. This motion was studied in the 2nd century BC by astronomer Hipparchus of Rhodes, and he may have been consulted in the machine's construction. There is speculation that a portion of the mechanism is missing and it calculated the positions of the five classical planets. The inscriptions were further deciphered in 2016, revealing numbers connected with the synodic cycles of Venus and Saturn. (Full article...) -

Image 13

Lisp (historically LISP, an abbreviation of "list processing") is a family of programming languages with a long history and a distinctive, fully parenthesized prefix notation.

Originally specified in the late 1950s, it is the second-oldest high-level programming language still in common use, after Fortran. Lisp has changed since its early days, and many dialects have existed over its history. Today, the best-known general-purpose Lisp dialects are Common Lisp, Scheme, Racket, and Clojure.

Lisp was originally created as a practical mathematical notation for computer programs, influenced by (though not originally derived from) the notation of Alonzo Church's lambda calculus. It quickly became a favored programming language for artificial intelligence (AI) research. As one of the earliest programming languages, Lisp pioneered many ideas in computer science, including tree data structures, automatic storage management, dynamic typing, conditionals, higher-order functions, recursion, the self-hosting compiler, and the read–eval–print loop.

The name LISP derives from "LISt Processor". Linked lists are one of Lisp's major data structures, and Lisp source code is made of lists. Thus, Lisp programs can manipulate source code as a data structure, giving rise to the macro systems that allow programmers to create new syntax or new domain-specific languages embedded in Lisp. (Full article...) -

Image 14

F# (pronounced F sharp) is a general-purpose, high-level, strongly typed, multi-paradigm programming language that encompasses functional, imperative, and object-oriented programming methods. It is most often used as a cross-platform Common Language Infrastructure (CLI) language on .NET, but can also generate JavaScript and graphics processing unit (GPU) code.

F# is developed by the F# Software Foundation, Microsoft and open contributors. An open source, cross-platform compiler for F# is available from the F# Software Foundation. F# is a fully supported language in Visual Studio and JetBrains Rider. Plug-ins supporting F# exist for many widely used editors including Visual Studio Code, Vim, and Emacs.

F# is a member of the ML language family and originated as a .NET Framework implementation of a core of the programming language OCaml. It has also been influenced by C#,

Python, Haskell, Scala and Erlang. (Full article...) -

Image 15

W3sDesign Interpreter Design Pattern UML

In computer science, an interpreter is a computer program that directly executes instructions written in a programming or scripting language, without requiring them previously to have been compiled into a machine language program. An interpreter generally uses one of the following strategies for program execution:- Parse the source code and perform its behavior directly;

- Translate source code into some efficient intermediate representation or object code and immediately execute that;

- Explicitly execute stored precompiled bytecode made by a compiler and matched with the interpreter's Virtual Machine.

Early versions of Lisp programming language and minicomputer and microcomputer BASIC dialects would be examples of the first type. Perl, Raku, Python, MATLAB, and Ruby are examples of the second, while UCSD Pascal is an example of the third type. Source programs are compiled ahead of time and stored as machine independent code, which is then linked at run-time and executed by an interpreter and/or compiler (for JIT systems). Some systems, such as Smalltalk and contemporary versions of BASIC and Java, may also combine two and three types. Interpreters of various types have also been constructed for many languages traditionally associated with compilation, such as Algol, Fortran, Cobol, C and C++. (Full article...)

Selected images

-

Image 1An IBM Port-A-Punch punched card

-

Image 2Output from a (linearised) shallow water equation model of water in a bathtub. The water experiences 5 splashes which generate surface gravity waves that propagate away from the splash locations and reflect off of the bathtub walls.

-

Image 3Margaret Hamilton standing next to the navigation software that she and her MIT team produced for the Apollo Project.

-

Image 4GNOME Shell, GNOME Clocks, Evince, gThumb and GNOME Files at version 3.30, in a dark theme

-

Image 5A view of the GNU nano Text editor version 6.0

-

Image 6Stephen Wolfram is a British-American computer scientist, physicist, and businessman. He is known for his work in computer science, mathematics, and in theoretical physics.

-

Image 8Deep Blue was a chess-playing expert system run on a unique purpose-built IBM supercomputer. It was the first computer to win a game, and the first to win a match, against a reigning world champion under regular time controls. Photo taken at the Computer History Museum.

-

Image 9Partial map of the Internet based on the January 15, 2005 data found on opte.org. Each line is drawn between two nodes, representing two IP addresses. The length of the lines are indicative of the delay between those two nodes. This graph represents less than 30% of the Class C networks reachable by the data collection program in early 2005.

-

Image 10Ada Lovelace was an English mathematician and writer, chiefly known for her work on Charles Babbage's proposed mechanical general-purpose computer, the Analytical Engine. She was the first to recognize that the machine had applications beyond pure calculation, and to have published the first algorithm intended to be carried out by such a machine. As a result, she is often regarded as the first computer programmer.

-

Image 11Grace Hopper at the UNIVAC keyboard, c. 1960. Grace Brewster Murray: American mathematician and rear admiral in the U.S. Navy who was a pioneer in developing computer technology, helping to devise UNIVAC I. the first commercial electronic computer, and naval applications for COBOL (common-business-oriented language).

-

Image 14A lone house. An image made using Blender 3D.

-

Image 15Partial view of the Mandelbrot set. Step 1 of a zoom sequence: Gap between the "head" and the "body" also called the "seahorse valley".

-

Image 16A head crash on a modern hard disk drive

-

Image 18This image (when viewed in full size, 1000 pixels wide) contains 1 million pixels, each of a different color.

Did you know? - load more entries

- ... that Phil Fletcher as Hacker T. Dog caused Lauren Layfield to make the "most famous snort" in the United Kingdom in 2016?

- ... that Guy Parmelin, now President of Switzerland, opened the study program of cyber security of the Lucerne School of Information Technology in 2018?

- ... that Rust has been named the "most loved programming language" every year for seven years since 2016 by annual surveys conducted by Stack Overflow?

- ... that the software-testing framework pytest has been described as a key ecosystem project for the Python programming language?

- ... that Earth 300 has designed a climate research vessel that would include a molten salt reactor and a quantum computer?

- ... that Makoto Soejima is the only International Mathematical Olympiad gold medalist with a perfect score to have won both the Google Code Jam and the Facebook Hacker Cup?

Subcategories

WikiProjects

- There are many users interested in computer programming, join them.

Computer programming news

No recent news

Topics

Related portals

Associated Wikimedia

The following Wikimedia Foundation sister projects provide more on this subject:

-

Commons

Free media repository -

Wikibooks

Free textbooks and manuals -

Wikidata

Free knowledge base -

Wikinews

Free-content news -

Wikiquote

Collection of quotations -

Wikisource

Free-content library -

Wikiversity

Free learning tools -

Wiktionary

Dictionary and thesaurus