Conditional expectation


Revision as of 01:42, 9 January 2021

This article includes a list of general references, but it lacks sufficient corresponding inline citations. (September 2020)

In probability theory, the conditional expectation, conditional expected value, or conditional mean of a random variable is its expected value – the value it would take “on average” over an arbitrarily large number of occurrences – given that a certain set of "conditions" is known to occur. If the random variable can take on only a finite number of values, the “conditions” are that the variable can only take on a subset of those values. More formally, in the case when the random variable is defined over a discrete probability space, the "conditions" are a partition of this probability space.

With multiple random variables, one random variable is said to be mean independent of all the others, both individually and collectively, if each conditional expectation equals that random variable's (unconditional) expected value. This always holds if the variables are independent, but mean independence is a weaker condition.

Depending on the nature of the conditioning, the conditional expectation can be either a random variable itself or a fixed value. With two random variables, if the expectation of a random variable $X$ is expressed conditional on another random variable $Y$ (without a particular value of $Y$ being specified), then the expectation of $X$ conditional on $Y$, denoted $\operatorname{E}(X\mid Y)$,[1] is a function of the random variable $Y$ and hence is itself a random variable.[2] Alternatively, if the expectation of $X$ is expressed conditional on the occurrence of a particular value of $Y$, denoted $y$, then the conditional expectation $\operatorname{E}(X\mid Y=y)$ is a fixed value.

Examples

Example 1: Dice rolling

Consider the roll of a fair die and let A = 1 if the number is even (i.e., 2, 4, or 6) and A = 0 otherwise. Furthermore, let B = 1 if the number is prime (i.e., 2, 3, or 5) and B = 0 otherwise.

| Die roll | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| A | 0 | 1 | 0 | 1 | 0 | 1 |
| B | 0 | 1 | 1 | 0 | 1 | 0 |

The unconditional expectation of A is $E[A] = (0+1+0+1+0+1)/6 = 1/2$, but the expectation of A conditional on B = 1 (i.e., conditional on the die roll being 2, 3, or 5) is $E[A\mid B=1] = (1+0+0)/3 = 1/3$, and the expectation of A conditional on B = 0 (i.e., conditional on the die roll being 1, 4, or 6) is $E[A\mid B=0] = (0+1+1)/3 = 2/3$. Likewise, the expectation of B conditional on A = 1 is $E[B\mid A=1] = (1+0+0)/3 = 1/3$, and the expectation of B conditional on A = 0 is $E[B\mid A=0] = (0+1+1)/3 = 2/3$.
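These values can be checked by brute-force enumeration over the six equally likely rolls. The following is a minimal Python sketch, assuming a fair die; the helper names `A`, `B` and `cond_mean` are hypothetical and chosen only for illustration. Exact fractions are used so that the results match the hand computation without rounding.

```python
from fractions import Fraction

ROLLS = range(1, 7)  # outcomes of a fair die, each with probability 1/6

def A(roll: int) -> int:
    """Indicator that the roll is even."""
    return 1 if roll % 2 == 0 else 0

def B(roll: int) -> int:
    """Indicator that the roll is prime (2, 3 or 5)."""
    return 1 if roll in (2, 3, 5) else 0

def cond_mean(f, g, value):
    """E[f | g = value]: plain average of f over the equally likely rolls with g == value."""
    matching = [r for r in ROLLS if g(r) == value]
    return Fraction(sum(f(r) for r in matching), len(matching))

e_a = Fraction(sum(A(r) for r in ROLLS), len(ROLLS))  # unconditional E[A]
print(e_a, cond_mean(A, B, 1), cond_mean(A, B, 0))   # 1/2 1/3 2/3
print(cond_mean(B, A, 1), cond_mean(B, A, 0))        # 1/3 2/3
```

A plain average over matching rolls is only valid here because every roll has the same probability; with unequal probabilities each term would need its own weight.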

Example 2: Rainfall data

Suppose we have daily rainfall data (mm of rain each day) collected by a weather station on every day of the ten-year (3652-day) period from January 1, 1990 to December 31, 1999. The unconditional expectation of rainfall for an unspecified day is the average of the rainfall amounts for those 3652 days. The conditional expectation of rainfall for an otherwise unspecified day known to be (conditional on being) in the month of March is the average of daily rainfall over all 310 days of the ten-year period that fall in March. Similarly, the conditional expectation of rainfall for days dated March 2 is the average of the rainfall amounts that occurred on the ten days with that specific date.
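The day counts in this example, and the idea that each conditional expectation is just an average over the days satisfying the condition, can be sketched in Python. The rainfall amounts below are synthetic placeholders drawn from a seeded random generator, not real station data, and the helper `mean_rain` is hypothetical.

```python
import datetime
import random

# Every day from 1 January 1990 to 31 December 1999 inclusive.
start, end = datetime.date(1990, 1, 1), datetime.date(1999, 12, 31)
days = [start + datetime.timedelta(n) for n in range((end - start).days + 1)]

# Synthetic rainfall amounts in mm (placeholder data, NOT real measurements).
rng = random.Random(42)
rain = {d: round(rng.expovariate(1 / 3.0), 1) for d in days}

def mean_rain(condition):
    """Average rainfall over the days satisfying `condition`, plus how many days matched."""
    selected = [rain[d] for d in days if condition(d)]
    return sum(selected) / len(selected), len(selected)

overall, n_all = mean_rain(lambda d: True)                      # unconditional
march, n_march = mean_rain(lambda d: d.month == 3)              # given "in March"
march2, n_march2 = mean_rain(lambda d: (d.month, d.day) == (3, 2))  # given "dated March 2"
print(n_all, n_march, n_march2)  # 3652 310 10
```

Finer conditions average over fewer days: the March 2 estimate uses only ten observations.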

History

The related concept of conditional probability dates back at least to Laplace, who calculated conditional distributions. It was Andrey Kolmogorov who, in 1933, formalized it using the Radon–Nikodym theorem.[3] In works of Paul Halmos[4] and Joseph L. Doob[5] from 1953, conditional expectation was generalized to its modern definition using sub-σ-algebras.[6]

Definitions

Conditioning on an event

If $A$ is an event with nonzero probability and $X$ is a discrete random variable, the conditional expectation of $X$ given $A$ is

$$\operatorname{E}(X\mid A) = \sum_x x\, P(X=x\mid A) = \sum_x x\,\frac{P(\{X=x\}\cap A)}{P(A)},$$

where the sum is taken over all possible outcomes of $X$.

Note that if $P(A) = 0$, the conditional expectation is undefined due to the division by zero.
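The formula can be evaluated directly on a finite sample space. This Python sketch assumes two fair dice, takes $X$ to be the first die and $A$ the event that the sum is at least 10 (so $A$ is not determined by $X$ alone); `cond_exp_given_event` is a hypothetical helper name.

```python
from fractions import Fraction
from itertools import product

def cond_exp_given_event(omega, X, A):
    """E(X | A) = sum over x of x * P({X = x} ∩ A) / P(A).

    omega -- dict mapping each outcome w to its probability P({w})
    X     -- function giving the value of the random variable at w
    A     -- predicate returning True when w belongs to the event A
    """
    p_a = sum(p for w, p in omega.items() if A(w))
    if p_a == 0:
        raise ZeroDivisionError("E(X | A) is undefined when P(A) = 0")
    total = 0
    for x in {X(w) for w in omega}:  # all possible outcomes of X
        p_x_and_a = sum(p for w, p in omega.items() if X(w) == x and A(w))
        total += x * p_x_and_a
    return total / p_a

# Two fair dice; X is the first die, A is the event "the sum is at least 10".
two_dice = {w: Fraction(1, 36) for w in product(range(1, 7), repeat=2)}

def first(w):
    return w[0]

print(cond_exp_given_event(two_dice, first, lambda w: w[0] + w[1] >= 10))  # 16/3

try:
    cond_exp_given_event(two_dice, first, lambda w: w[0] + w[1] > 12)  # impossible event
except ZeroDivisionError as err:
    print(err)
```

The second call illustrates the undefined case: no outcome has a sum above 12, so $P(A) = 0$.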

Discrete random variables

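If $X$ and $Y$ are discrete random variables, the conditional expectation of $X$ given $Y = y$ is

$$\operatorname{E}(X\mid Y=y) = \sum_x x\, P(X=x\mid Y=y) = \sum_x x\,\frac{P(X=x, Y=y)}{P(Y=y)},$$

where $P(X=x, Y=y)$ is the joint probability mass function of $X$ and $Y$. The sum is taken over all possible outcomes of $X$, and the expression is undefined when $P(Y=y) = 0$.

Note that conditioning on a discrete random variable is the same as conditioning on the corresponding event:

$$\operatorname{E}(X\mid Y=y) = \operatorname{E}(X\mid A),$$

where $A$ is the set $\{Y=y\}$.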
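Computed for every value $y$, the formula yields a function of $y$; evaluating that function at $Y$ gives the random variable $\operatorname{E}(X\mid Y)$. The Python sketch below assumes an illustrative setup that is not from the text: $Y$ is the first of two fair dice and $X$ is their sum, so one expects $\operatorname{E}(X\mid Y=y) = y + 7/2$. The names `joint` and `cond_exp` are hypothetical.

```python
from fractions import Fraction
from itertools import product

# Joint pmf P(X = x, Y = y), where Y is the first of two fair dice and X is their sum.
joint = {}
for d1, d2 in product(range(1, 7), repeat=2):
    key = (d1 + d2, d1)  # (x, y)
    joint[key] = joint.get(key, 0) + Fraction(1, 36)

def cond_exp(joint, y):
    """E(X | Y = y) = sum_x x * P(X = x, Y = y) / P(Y = y)."""
    p_y = sum(p for (_, yy), p in joint.items() if yy == y)
    if p_y == 0:
        raise ZeroDivisionError("undefined when P(Y = y) = 0")
    return sum(x * p for (x, yy), p in joint.items() if yy == y) / p_y

# The function y -> E(X | Y = y); composing it with Y gives the random variable E(X | Y).
e_x = {y: cond_exp(joint, y) for y in range(1, 7)}
print(e_x[3])  # 13/2
```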

Continuous random variables

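If $X$ and $Y$ are continuous random variables with joint density $f_{X,Y}(x,y)$, the conditional expectation of $X$ given $Y = y$ is

$$\operatorname{E}(X\mid Y=y) = \int_{-\infty}^{\infty} x\, f_{X\mid Y}(x\mid y)\,dx = \frac{1}{f_Y(y)}\int_{-\infty}^{\infty} x\, f_{X,Y}(x,y)\,dx,$$

where $f_Y(y) = \int_{-\infty}^{\infty} f_{X,Y}(x,y)\,dx$ is the marginal density of $Y$ and $f_{X\mid Y}(x\mid y) = f_{X,Y}(x,y)/f_Y(y)$ is the conditional density. When the denominator is zero, the expression is undefined.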

Note that conditioning on a continuous random variable is not the same as conditioning on the event $\{Y=y\}$, as it was in the discrete case. For a discussion, see Conditioning on an event of probability zero. Not respecting this distinction can lead to contradictory conclusions, as illustrated by the Borel–Kolmogorov paradox.
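The ratio of integrals can be approximated numerically. This Python sketch assumes, purely for illustration, that $(X, Y)$ is a standard bivariate normal pair with correlation $\rho$, for which the conditional mean is known to be $\rho y$. The truncation interval $[-12, 12]$, the grid size and the helper names are all assumptions of the sketch.

```python
import math

def bivariate_normal_pdf(x, y, rho):
    """Joint density of a standard bivariate normal pair with correlation rho."""
    q = (x * x - 2 * rho * x * y + y * y) / (1 - rho * rho)
    return math.exp(-q / 2) / (2 * math.pi * math.sqrt(1 - rho * rho))

def cond_exp_continuous(f, y, lo=-12.0, hi=12.0, n=4000):
    """E(X | Y = y) = ∫ x f(x, y) dx / ∫ f(x, y) dx, both integrals by the trapezoidal rule."""
    h = (hi - lo) / n
    num = den = 0.0
    for i in range(n + 1):
        x = lo + i * h
        w = 0.5 if i in (0, n) else 1.0  # trapezoid end-point weights
        fx = f(x, y)
        num += w * x * fx
        den += w * fx
    if den == 0.0:
        raise ZeroDivisionError("marginal density f_Y(y) is zero")
    return num / den  # the common factor h cancels in the ratio

rho = 0.6
approx = cond_exp_continuous(lambda x, y: bivariate_normal_pdf(x, y, rho), 1.5)
print(approx)  # approximately rho * 1.5 = 0.9
```

Dividing by the numerically integrated marginal $f_Y(y)$, rather than a closed-form one, keeps the sketch usable for any joint density that can be evaluated pointwise.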

L2 random variables

In this section it is assumed that the random variables are square integrable. In its full generality, conditional expectation is developed without this assumption; see below under Conditional expectation with respect to a sub-σ-algebra. The $L^2$ theory is, however, considered more intuitive[7] and admits important generalizations.

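In what follows let $(\Omega, \mathcal{F}, P)$ be a probability space, and $X\colon\Omega\to\mathbb{R}$ in $L^2(\Omega, \mathcal{F}, P)$. Its expectation satisfies

- $\operatorname{E}(X) = \arg\min_{x\in\mathbb{R}} \operatorname{E}\left((X-x)^2\right)$.

In words, the expectation minimizes the mean squared error.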
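A short expansion around $\operatorname{E}(X)$ shows why, using only $\operatorname{E}\left(X-\operatorname{E}(X)\right) = 0$:

```latex
\begin{aligned}
\operatorname{E}\left((X-x)^2\right)
  &= \operatorname{E}\left(\left((X-\operatorname{E}(X)) + (\operatorname{E}(X)-x)\right)^2\right) \\
  &= \operatorname{Var}(X) + 2\,(\operatorname{E}(X)-x)\,\operatorname{E}\left(X-\operatorname{E}(X)\right) + (\operatorname{E}(X)-x)^2 \\
  &= \operatorname{Var}(X) + (\operatorname{E}(X)-x)^2 .
\end{aligned}
```

The last term is nonnegative and vanishes only at $x = \operatorname{E}(X)$, so the minimum value of the mean squared error is $\operatorname{Var}(X)$.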

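The conditional expectation of $X$ is defined analogously, except that instead of a single number $\operatorname{E}(X)$, the result is a function $e_X$. Let $Y$ be a random variable (or random vector, as in Example 2 below) also in $L^2(\Omega, \mathcal{F}, P)$. The conditional expectation $e_X$ is a measurable function such that

- $e_X = \arg\min_{g \text{ measurable}} \operatorname{E}\left((X-g(Y))^2\right)$.

In this setting, conditional expectation is also called the regression of $X$ on $Y$.

Note that unlike $\operatorname{E}(X)$, the function $e_X$ is not unique in general: there may be multiple minimizers of the mean squared error.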
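For a discrete pair $(X, Y)$ the minimizer is $e_X(y) = \operatorname{E}(X\mid Y=y)$, and any change to it at a value $y$ with $P(Y=y) > 0$ raises the mean squared error. The Python sketch below checks this for a small joint pmf chosen only for illustration; the helper names `mse` and `conditional_mean` are hypothetical.

```python
from fractions import Fraction

# An illustrative joint pmf P(X = x, Y = y); the probabilities sum to 1.
joint = {(0, 0): Fraction(1, 4), (2, 0): Fraction(1, 4),
         (1, 1): Fraction(1, 6), (4, 1): Fraction(1, 3)}

def mse(g):
    """Mean squared error E((X - g(Y))^2) of a predictor g given as a dict y -> g(y)."""
    return sum(p * (x - g[y]) ** 2 for (x, y), p in joint.items())

def conditional_mean():
    """e_X(y) = sum_x x P(X = x, Y = y) / P(Y = y) for each value y of Y."""
    p_y, s_y = {}, {}
    for (x, y), p in joint.items():
        p_y[y] = p_y.get(y, 0) + p
        s_y[y] = s_y.get(y, 0) + x * p
    return {y: s_y[y] / p_y[y] for y in p_y}

e_x = conditional_mean()
print(e_x[0], e_x[1], mse(e_x))  # 1 3 3/2

# Perturbing e_X at any y with P(Y = y) > 0 strictly increases the error.
for y in e_x:
    for delta in (Fraction(-1, 10), Fraction(1, 10)):
        g = dict(e_x)
        g[y] += delta
        assert mse(g) > mse(e_x)
```

The increase equals $P(Y=y)\,\delta^2$, which is why changes at values of $y$ with probability zero would go unnoticed.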

Uniqueness

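Example 1: Consider the case where $Y$ is the constant random variable that is always 1. Then the mean squared error is minimized by any function of the form

$$e_X(y) = \begin{cases} \operatorname{E}(X) & \text{if } y = 1, \\ \text{any number} & \text{otherwise.} \end{cases}$$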

Example 2: Consider the case where $Y$ is the 2-dimensional random vector $(X, 2X)$. Then clearly

$$\operatorname{E}(X\mid Y) = X,$$

but in terms of functions it can be expressed as $e_X(y_1, y_2) = 3y_1 - y_2$ or $e'_X(y_1, y_2) = 2y_2 - 3y_1$, or in infinitely many other ways. In the context of linear regression, this lack of uniqueness is called multicollinearity.

Conditional expectation is unique up to a set of measure zero in the space where $Y$ takes its values. The measure used is the pushforward measure induced by $Y$.

In the first example, the pushforward measure is a point mass at 1. In the second it is concentrated on the "diagonal" $\{y : y_2 = 2y_1\}$, so that any set not intersecting it has measure 0.
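The second example can be checked numerically. This Python sketch takes $Y = (X, 2X)$ with $X$ drawn from a standard normal distribution (an assumption made only to have sample points) and compares the two representations $3y_1 - y_2$ and $2y_2 - 3y_1$; the function names `e1` and `e2` are hypothetical.

```python
import random

def e1(y1, y2):
    """One version of e_X for Y = (X, 2X)."""
    return 3 * y1 - y2

def e2(y1, y2):
    """Another version of e_X for Y = (X, 2X)."""
    return 2 * y2 - 3 * y1

rng = random.Random(0)
xs = [rng.gauss(0.0, 1.0) for _ in range(1000)]  # samples of X
ys = [(x, 2 * x) for x in xs]                     # Y = (X, 2X) lies on {y2 = 2 * y1}

# On the support of Y both versions return X itself ...
assert all(abs(e1(*y) - x) < 1e-9 and abs(e2(*y) - x) < 1e-9 for x, y in zip(xs, ys))
# ... but off that line, a set of pushforward measure zero, they disagree.
print(e1(1.0, 0.0), e2(1.0, 0.0))  # 3.0 -3.0
```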

Existence

The existence of a minimizer to

$$\min_{g} \operatorname{E}\left((X-g(Y))^2\right)$$

is non-trivial and can be proved using several techniques.

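It can be shown that

$$M := \{g(Y) : g \text{ is measurable and } \operatorname{E}\left(g(Y)^2\right) < \infty\} = L^2(\Omega, \sigma(Y), P)$$

is a closed subspace of the Hilbert space $L^2(\Omega, \mathcal{F}, P)$.[8] By the Hilbert projection theorem, the necessary and sufficient condition for $e_X$ to be a minimizer is that for all $f(Y)$ in $M$ we have

- $\langle X - e_X(Y), f(Y)\rangle = 0$.

Expanding the Hilbert space inner product, this is equivalent to

- $\operatorname{E}\left(X f(Y)\right) = \operatorname{E}\left(e_X(Y) f(Y)\right)$.

This orthogonality condition, applied to the indicator functions $f(Y) = 1_{Y\in H}$, is used below to define conditional expectation in the case that $X$ is not necessarily in $L^2$.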
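For a finite joint distribution the orthogonality condition can be verified exhaustively: for every set $H$ of values of $Y$, $\operatorname{E}(X 1_{Y\in H}) = \operatorname{E}(e_X(Y) 1_{Y\in H})$. The joint pmf in this Python sketch is invented for illustration, and `cond_mean` is a hypothetical helper.

```python
from fractions import Fraction
from itertools import chain, combinations

# Illustrative joint pmf P(X = x, Y = y); the probabilities sum to 1.
joint = {(0, 'a'): Fraction(1, 8), (3, 'a'): Fraction(1, 8),
         (1, 'b'): Fraction(1, 4), (5, 'b'): Fraction(1, 4),
         (2, 'c'): Fraction(1, 4)}

def cond_mean(joint):
    """e_X(y) = E(X | Y = y) for each value y of Y."""
    p_y, s_y = {}, {}
    for (x, y), p in joint.items():
        p_y[y] = p_y.get(y, 0) + p
        s_y[y] = s_y.get(y, 0) + x * p
    return {y: s_y[y] / p_y[y] for y in p_y}

e_x = cond_mean(joint)
y_values = sorted({y for _, y in joint})

# E(X 1_{Y in H}) == E(e_X(Y) 1_{Y in H}) for every subset H of the values of Y.
all_subsets = chain.from_iterable(combinations(y_values, r) for r in range(len(y_values) + 1))
for H in all_subsets:
    lhs = sum(p * x for (x, y), p in joint.items() if y in H)
    rhs = sum(p * e_x[y] for (x, y), p in joint.items() if y in H)
    assert lhs == rhs
print(e_x['a'], e_x['b'], e_x['c'])  # 3/2 3 2
```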

Connections to regression

The conditional expectation is often approximated in applied mathematics and statistics due to the difficulties in analytically calculating it, and for interpolation.

The Hilbert subspace

$$M = \{g(Y) : \operatorname{E}\left(g(Y)^2\right) < \infty\}$$

defined above is replaced with various restricted subspaces by restricting the functional form of $g$, rather than allowing any measurable function. For example, in decision tree regression $g$ is required to be a simple function, in linear regression $g$ is required to be linear, and so on.

These generalizations of conditional expectation come at the cost of many of its properties no longer holding, e.g. the tower property.

An important special case is when $X$ and $Y$ are jointly normally distributed. In this case it can be shown that the conditional expectation is equivalent to linear regression:

$$e_X(Y) = \alpha_0 + \sum_i \alpha_i Y_i$$

for some coefficients $\{\alpha_i\}_{i=0..n}$.
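In the scalar case the coefficients are $\alpha_1 = \rho\,\sigma_X/\sigma_Y$ and $\alpha_0 = \mu_X - \alpha_1\mu_Y$. The following Monte Carlo sketch in Python is only an empirical illustration, not a proof: it samples a jointly normal pair with parameters chosen arbitrarily, fits ordinary least squares of $X$ on $Y$, and compares the fitted line with those closed-form coefficients. All parameter values and the seed are assumptions.

```python
import random

rng = random.Random(1)
rho, sigma_x, sigma_y, mu_x, mu_y = 0.8, 2.0, 1.0, 1.0, -1.0
n = 200_000

# Sample (X, Y) jointly normal with correlation rho from two independent standard normals.
xs, ys = [], []
for _ in range(n):
    z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
    ys.append(mu_y + sigma_y * z1)
    xs.append(mu_x + sigma_x * (rho * z1 + (1 - rho ** 2) ** 0.5 * z2))

# Ordinary least squares fit of X on Y.
mean_x, mean_y = sum(xs) / n, sum(ys) / n
cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / n
var_y = sum((y - mean_y) ** 2 for y in ys) / n
slope = cov / var_y
intercept = mean_x - slope * mean_y

# Closed form for jointly normal (X, Y): E(X | Y) = mu_x + rho * sigma_x / sigma_y * (Y - mu_y).
print(slope, rho * sigma_x / sigma_y)                    # both close to 1.6
print(intercept, mu_x - rho * sigma_x / sigma_y * mu_y)  # both close to 2.6
```

For non-normal pairs the least-squares line is still the best linear predictor, but it need not coincide with $\operatorname{E}(X\mid Y)$.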

Conditional expectation with respect to a sub-σ-algebra

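Consider the following:

- $(\Omega, \mathcal{F}, P)$ is a probability space.

- $X\colon\Omega\to\mathbb{R}$ is a random variable on that probability space with finite expectation.

- $\mathcal{H}\subseteq\mathcal{F}$ is a sub-σ-algebra of $\mathcal{F}$.

Since $\mathcal{H}$ is a sub-σ-algebra of $\mathcal{F}$, the function $X\colon\Omega\to\mathbb{R}$ is usually not $\mathcal{H}$-measurable, thus the existence of the integrals of the form $\int_H X\,dP|_\mathcal{H}$, where $H\in\mathcal{H}$ and $P|_\mathcal{H}$ is the restriction of $P$ to $\mathcal{H}$, cannot be stated in general. However, the local averages $\int_H X\,dP$ can be recovered in $(\Omega, \mathcal{H}, P|_\mathcal{H})$ with the help of the conditional expectation. A conditional expectation of $X$ given $\mathcal{H}$, denoted as $\operatorname{E}(X\mid\mathcal{H})$, is any $\mathcal{H}$-measurable function $\Omega\to\mathbb{R}$ which satisfies

$$\int_H \operatorname{E}(X\mid\mathcal{H})\,dP = \int_H X\,dP$$

for each $H\in\mathcal{H}$.[9]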

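As noted in the $L^2$ discussion above, this condition is equivalent to saying that the residual $X - \operatorname{E}(X\mid\mathcal{H})$ is orthogonal to the indicator functions $1_H$:

$$\langle X - \operatorname{E}(X\mid\mathcal{H}), 1_H\rangle = 0.$$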
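On a finite sample space where $\mathcal{H}$ is generated by a partition into cells of positive probability (an assumption made for this sketch), $\operatorname{E}(X\mid\mathcal{H})$ is the probability-weighted average of $X$ over each cell. The Python code below builds it for a fair die with $X(\omega) = \omega^2$ and an arbitrarily chosen partition, then checks the defining identity on every event of $\mathcal{H}$; `cond_exp_partition` is a hypothetical helper.

```python
from fractions import Fraction
from itertools import combinations

# Finite probability space: a fair die.
omega = [1, 2, 3, 4, 5, 6]
P = {w: Fraction(1, 6) for w in omega}
X = {w: w * w for w in omega}  # an arbitrary random variable, here X(w) = w^2

# H is generated by the partition {1,2}, {3,4,5}, {6}; its events are all unions of cells.
cells = [frozenset({1, 2}), frozenset({3, 4, 5}), frozenset({6})]
H_events = [frozenset().union(*c) for r in range(len(cells) + 1) for c in combinations(cells, r)]

def cond_exp_partition(X, P, cells):
    """E(X | H): on each cell C, the P-weighted average of X over C (assumes P(C) > 0)."""
    result = {}
    for C in cells:
        p_c = sum(P[w] for w in C)
        avg = sum(X[w] * P[w] for w in C) / p_c
        for w in C:
            result[w] = avg  # constant on each cell, hence H-measurable
    return result

E_X_H = cond_exp_partition(X, P, cells)
# Defining property: the integrals of E(X | H) and X agree on every H in the sigma-algebra.
for H in H_events:
    assert sum(E_X_H[w] * P[w] for w in H) == sum(X[w] * P[w] for w in H)
print(*[E_X_H[w] for w in omega])  # 5/2 5/2 50/3 50/3 50/3 36
```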

Existence

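The existence of $\operatorname{E}(X\mid\mathcal{H})$ can be established by noting that $\mu^X\colon F\mapsto\int_F X\,dP$ for $F\in\mathcal{F}$ is a finite signed measure on $(\Omega, \mathcal{F})$ that is absolutely continuous with respect to $P$. If $h$ is the natural injection from $\mathcal{H}$ to $\mathcal{F}$, then $\mu^X\circ h = \mu^X|_\mathcal{H}$ is the restriction of $\mu^X$ to $\mathcal{H}$ and $P\circ h = P|_\mathcal{H}$ is the restriction of $P$ to $\mathcal{H}$. Furthermore, $\mu^X\circ h$ is absolutely continuous with respect to $P\circ h$, because the condition

$$P\circ h(H) = 0 \iff P(h(H)) = 0$$

implies

$$\mu^X(h(H)) = 0 \iff \mu^X\circ h(H) = 0.$$

Thus, we have

$$\operatorname{E}(X\mid\mathcal{H}) = \frac{d\mu^X|_\mathcal{H}}{dP|_\mathcal{H}} = \frac{d(\mu^X\circ h)}{d(P\circ h)},$$

where the derivatives are Radon–Nikodym derivatives of measures.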
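As a worked special case, assume $\mathcal{H}$ is generated by a finite partition $C_1, \dots, C_k$ of $\Omega$ with $P(C_i) > 0$, and write $\mu^X(F) = \int_F X\,dP$. Every $\mathcal{H}$-measurable function is constant on each $C_i$, so the Radon–Nikodym derivative is the ratio of masses on each cell, and the defining identity can be checked on any $H = \bigcup_{i\in I} C_i$:

```latex
\begin{aligned}
\operatorname{E}(X\mid\mathcal{H})(\omega)
  &= \frac{\mu^X(C_i)}{P(C_i)} = \frac{1}{P(C_i)}\int_{C_i} X\,dP
  \qquad \text{for } \omega \in C_i, \\
\int_H \operatorname{E}(X\mid\mathcal{H})\,dP
  &= \sum_{i\in I} \frac{\mu^X(C_i)}{P(C_i)}\,P(C_i)
   = \sum_{i\in I} \mu^X(C_i) = \mu^X(H) = \int_H X\,dP .
\end{aligned}
```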

Conditional expectation with respect to a random variable

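Consider, in addition to the above,

- A measurable space $(U, \Sigma)$, and

- A random variable $Y\colon\Omega\to U$.

The conditional expectation of $X$ given $Y$ is defined by applying the above construction on the σ-algebra generated by $Y$:

- $\operatorname{E}(X\mid Y) := \operatorname{E}(X\mid\sigma(Y))$.

By the Doob–Dynkin lemma, there exists a function $e_X\colon U\to\mathbb{R}$ such that

- $\operatorname{E}(X\mid Y) = e_X(Y)$.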
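On a finite sample space the Doob–Dynkin factorization $\operatorname{E}(X\mid Y) = e_X(Y)$ can be made explicit: $\operatorname{E}(X\mid\sigma(Y))$ is constant on each level set $\{Y = u\}$, and $e_X(u)$ is that constant. This Python sketch assumes two fair coin flips, with $X$ the number of heads and $Y$ the first flip (so $U = \{\mathrm{H}, \mathrm{T}\}$); the helper names are hypothetical.

```python
from fractions import Fraction
from itertools import product

# Omega: two fair coin flips; X counts heads, Y is the first flip (a value in U = {'H', 'T'}).
omega = list(product('HT', repeat=2))
P = {w: Fraction(1, 4) for w in omega}
X = {w: w.count('H') for w in omega}
Y = {w: w[0] for w in omega}

def cond_exp_sigma_Y(w):
    """E(X | sigma(Y))(w): average of X over the level set {Y = Y(w)}."""
    level = [v for v in omega if Y[v] == Y[w]]
    return sum(X[v] * P[v] for v in level) / sum(P[v] for v in level)

# The factor e_X : U -> R, read off on one representative outcome per value of Y.
e_X = {u: cond_exp_sigma_Y(next(w for w in omega if Y[w] == u)) for u in set(Y.values())}

# E(X | Y) = e_X(Y) holds pointwise on Omega.
assert all(cond_exp_sigma_Y(w) == e_X[Y[w]] for w in omega)
print(e_X['H'], e_X['T'])  # 3/2 1/2
```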

Discussion

- This is not a constructive definition; we are merely given the required property that a conditional expectation must satisfy.

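- The definition of $\operatorname{E}(X\mid\mathcal{H})$ may resemble that of $\operatorname{E}(X\mid H)$ for an event $H$, but these are very different objects. The former is an $\mathcal{H}$-measurable function $\Omega\to\mathbb{R}$, while the latter is an element of $\mathbb{R}$ and $\operatorname{E}(X\mid H)\,P(H) = \int_H X\,dP$ for $H\in\mathcal{H}$.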

- Uniqueness can be shown to be almost sure: that is, versions of the same conditional expectation will only differ on a set of probability zero.

- The σ-algebra controls the "granularity" of the conditioning. A conditional expectation over a finer (larger) σ-algebra retains information about the probabilities of a larger class of events. A conditional expectation over a coarser (smaller) σ-algebra averages over more events.

Conditional probability

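For a Borel subset $B$ in $\mathcal{B}(\mathbb{R})$, one can consider the collection of random variables

- $\kappa_\mathcal{H}(\omega, B) := \operatorname{E}(1_{X\in B}\mid\mathcal{H})(\omega)$.

It can be shown that, for a suitable choice of versions, they form a Markov kernel, that is, for almost all $\omega$, $\kappa_\mathcal{H}(\omega, -)$ is a probability measure.[10]

The Law of the unconscious statistician is then

- $\operatorname{E}(f(X)\mid\mathcal{H}) = \int f(x)\,\kappa_\mathcal{H}(-, \mathrm{d}x)$.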

This shows that conditional expectations are, like their unconditional counterparts, integrals against a conditional measure.
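On a finite sample space every version of $\kappa_\mathcal{H}(\omega, B) = \operatorname{E}(1_{X\in B}\mid\mathcal{H})(\omega)$ is an honest probability measure in $B$, so both claims can be checked directly. This Python sketch assumes two fair dice, $X$ the sum and $\mathcal{H} = \sigma(\text{first die})$, with $f(x) = x^2$ as an arbitrary test function; `kernel` and `cond_exp_f` are hypothetical names.

```python
from fractions import Fraction
from itertools import product

# Omega: two fair dice. X is the sum; H = sigma(first die).
omega = list(product(range(1, 7), repeat=2))
P = {w: Fraction(1, 36) for w in omega}

def X(w):
    return w[0] + w[1]

def kernel(w, B):
    """kappa_H(w, B) = E(1_{X in B} | H)(w): conditional probability given the first die."""
    cell = [v for v in omega if v[0] == w[0]]
    return sum(P[v] for v in cell if X(v) in B) / sum(P[v] for v in cell)

def cond_exp_f(w, f):
    """E(f(X) | H)(w) computed directly as a cell average."""
    cell = [v for v in omega if v[0] == w[0]]
    return sum(f(X(v)) * P[v] for v in cell) / sum(P[v] for v in cell)

support = range(2, 13)  # possible values of X

def f(x):
    return x * x

for w in omega:
    # kappa_H(w, .) is a probability measure on the values of X ...
    assert sum(kernel(w, {x}) for x in support) == 1
    # ... and integrating f against it reproduces E(f(X) | H)(w).
    assert sum(f(x) * kernel(w, {x}) for x in support) == cond_exp_f(w, f)
print(kernel((3, 1), {7, 8, 9}))  # 1/2
```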

Conditioning as factorization

This article may require cleanup to meet Wikipedia's quality standards. The specific problem is: this section is redundant with the previous section, and contains flaws. (June 2017)

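In the definition of conditional expectation that we provided above, the fact that $Y$ is a real random element is irrelevant. Let $(U, \Sigma)$ be a measurable space, where $\Sigma$ is a σ-algebra on $U$. A $U$-valued random element is a measurable function $Y\colon\Omega\to U$, i.e. $Y^{-1}(B)\in\mathcal{F}$ for all $B\in\Sigma$. The distribution of $Y$ is the probability measure $P_Y\colon\Sigma\to\mathbb{R}$ defined as the pushforward measure $Y_*P$, that is, such that $P_Y(B) = P(Y^{-1}(B))$.

Theorem. If $X\colon\Omega\to\mathbb{R}$ is an integrable random variable, then there exists a unique integrable random element $\operatorname{E}(X\mid Y)\colon U\to\mathbb{R}$, defined $P_Y$-almost surely, such that

$$\int_{Y^{-1}(B)} X\,dP = \int_B \operatorname{E}(X\mid Y)\,dP_Y$$

for all $B\in\Sigma$.

Proof sketch. Let $\mu\colon\Sigma\to\mathbb{R}$ be such that $\mu(B) = \int_{Y^{-1}(B)} X\,dP$. Then $\mu$ is a signed measure which is absolutely continuous with respect to $P_Y$. Indeed $P_Y(B) = 0$ means exactly that $P(Y^{-1}(B)) = 0$, and since the integral of an integrable function on a set of probability 0 is 0, this proves absolute continuity. The Radon–Nikodym theorem then proves the existence of a density of $\mu$ with respect to $P_Y$. This density is $\operatorname{E}(X\mid Y)$.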

Comparing with conditional expectation with respect to sub-σ-algebras, it holds that

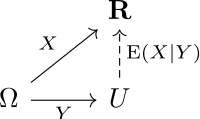

We can further interpret this equality by considering the abstract change of variables formula to transport the integral on the right hand side to an integral over Ω:

The equation means that the integrals of and the composition over sets of the form , for , are identical.

This equation can be interpreted to say that the following diagram is commutative on average.

Basic properties

All the following formulas are to be understood in an almost sure sense. The σ-algebra $\mathcal{H}$ could be replaced by a random variable $Z$, i.e. $\mathcal{H} = \sigma(Z)$.

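- Pulling out independent factors:

- If $X$ is independent of $\mathcal{H}$, then $\operatorname{E}(X\mid\mathcal{H}) = \operatorname{E}(X)$.

Let $B\in\mathcal{H}$. Then $X$ is independent of $1_B$, so we get that

$$\int_B X\,dP = \operatorname{E}(X 1_B) = \operatorname{E}(X)\operatorname{E}(1_B) = \operatorname{E}(X)P(B) = \int_B \operatorname{E}(X)\,dP.$$

Thus the definition of conditional expectation is satisfied by the constant random variable $\operatorname{E}(X)$, as desired.

- If $X$ is independent of $\sigma(Y, \mathcal{H})$, then $\operatorname{E}(XY\mid\mathcal{H}) = \operatorname{E}(X)\operatorname{E}(Y\mid\mathcal{H})$. Note that this is not necessarily the case if $X$ is only independent of $\mathcal{H}$ and of $Y$.

- If $X, Y$ are independent, $\mathcal{G}, \mathcal{H}$ are independent, $X$ is independent of $\mathcal{H}$ and $Y$ is independent of $\mathcal{G}$, then $\operatorname{E}(\operatorname{E}(XY\mid\mathcal{G})\mid\mathcal{H}) = \operatorname{E}(X)\operatorname{E}(Y) = \operatorname{E}(\operatorname{E}(XY\mid\mathcal{H})\mid\mathcal{G})$.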

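- Stability:

- If $X$ is $\mathcal{H}$-measurable, then $\operatorname{E}(X\mid\mathcal{H}) = X$.

- If $Z$ is a random variable, then $\operatorname{E}(f(Z)\mid Z) = f(Z)$. In its simplest form, this says $\operatorname{E}(Z\mid Z) = Z$.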

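- Pulling out known factors:

- If $X$ is $\mathcal{H}$-measurable, then $\operatorname{E}(XY\mid\mathcal{H}) = X\operatorname{E}(Y\mid\mathcal{H})$.

- If $Z$ is a random variable, then $\operatorname{E}(f(Z)Y\mid Z) = f(Z)\operatorname{E}(Y\mid Z)$.

- Law of total expectation: $\operatorname{E}(\operatorname{E}(X\mid\mathcal{H})) = \operatorname{E}(X)$.[11]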

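- Tower property:

- For sub-σ-algebras $\mathcal{H}_1\subset\mathcal{H}_2\subset\mathcal{F}$ we have $\operatorname{E}(\operatorname{E}(X\mid\mathcal{H}_2)\mid\mathcal{H}_1) = \operatorname{E}(X\mid\mathcal{H}_1)$.

- A special case is when $Z$ is an $\mathcal{H}$-measurable random variable. Then $\sigma(Z)\subset\mathcal{H}$ and thus $\operatorname{E}(\operatorname{E}(X\mid\mathcal{H})\mid Z) = \operatorname{E}(X\mid Z)$.

- Doob martingale property: the above with $Z = \operatorname{E}(X\mid\mathcal{H})$ (which is $\mathcal{H}$-measurable), and using also $\operatorname{E}(Z\mid Z) = Z$, gives $\operatorname{E}(X\mid\operatorname{E}(X\mid\mathcal{H})) = \operatorname{E}(X\mid\mathcal{H})$.

- For random variables $X, Y$ we have $\operatorname{E}(\operatorname{E}(X\mid Y)\mid f(Y)) = \operatorname{E}(X\mid f(Y))$.

- For random variables $X, Y, Z$ we have $\operatorname{E}(\operatorname{E}(X\mid Y, Z)\mid Y) = \operatorname{E}(X\mid Y)$.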

- For sub-σ-algebras we have .

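- Linearity: we have $\operatorname{E}(X_1 + X_2\mid\mathcal{H}) = \operatorname{E}(X_1\mid\mathcal{H}) + \operatorname{E}(X_2\mid\mathcal{H})$ and $\operatorname{E}(aX\mid\mathcal{H}) = a\operatorname{E}(X\mid\mathcal{H})$ for $a\in\mathbb{R}$.

- Positivity: If $X\ge 0$ then $\operatorname{E}(X\mid\mathcal{H})\ge 0$.

- Monotonicity: If $X_1\le X_2$ then $\operatorname{E}(X_1\mid\mathcal{H})\le\operatorname{E}(X_2\mid\mathcal{H})$.

- Monotone convergence: If $0\le X_n\uparrow X$ then $\operatorname{E}(X_n\mid\mathcal{H})\uparrow\operatorname{E}(X\mid\mathcal{H})$.

- Dominated convergence: If $X_n\to X$ and $|X_n|\le Y$ with $Y\in L^1$, then $\operatorname{E}(X_n\mid\mathcal{H})\to\operatorname{E}(X\mid\mathcal{H})$.

- Fatou's lemma: If $\operatorname{E}(\inf_n X_n\mid\mathcal{H}) > -\infty$ then $\operatorname{E}(\liminf_{n\to\infty} X_n\mid\mathcal{H})\le\liminf_{n\to\infty}\operatorname{E}(X_n\mid\mathcal{H})$.

- Jensen's inequality: If $f\colon\mathbb{R}\to\mathbb{R}$ is a convex function, then $f(\operatorname{E}(X\mid\mathcal{H}))\le\operatorname{E}(f(X)\mid\mathcal{H})$.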

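- Conditional variance: Using the conditional expectation we can define, by analogy with the definition of the variance as the mean square deviation from the average, the conditional variance

- Definition: $\operatorname{Var}(X\mid\mathcal{H}) = \operatorname{E}\left((X - \operatorname{E}(X\mid\mathcal{H}))^2\mid\mathcal{H}\right)$

- Algebraic formula for the variance: $\operatorname{Var}(X\mid\mathcal{H}) = \operatorname{E}(X^2\mid\mathcal{H}) - \left(\operatorname{E}(X\mid\mathcal{H})\right)^2$

- Law of total variance: $\operatorname{Var}(X) = \operatorname{E}(\operatorname{Var}(X\mid\mathcal{H})) + \operatorname{Var}(\operatorname{E}(X\mid\mathcal{H}))$.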

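- Martingale convergence: For a random variable $X$ that has finite expectation, we have $\operatorname{E}(X\mid\mathcal{H}_n)\to\operatorname{E}(X\mid\mathcal{H})$ if either $\mathcal{H}_1\subset\mathcal{H}_2\subset\dotsb$ is an increasing sequence of sub-σ-algebras and $\mathcal{H} = \sigma\left(\bigcup_{n=1}^\infty\mathcal{H}_n\right)$, or $\mathcal{H}_1\supset\mathcal{H}_2\supset\dotsb$ is a decreasing sequence of sub-σ-algebras and $\mathcal{H} = \bigcap_{n=1}^\infty\mathcal{H}_n$.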

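- Conditional expectation as $L^2$-projection: If $X, Y$ are in the Hilbert space $L^2$ of square-integrable real random variables (real random variables with finite second moment) then

- for $\mathcal{H}$-measurable $Y$, we have $\operatorname{E}(Y(X - \operatorname{E}(X\mid\mathcal{H}))) = 0$, i.e. the conditional expectation $\operatorname{E}(X\mid\mathcal{H})$ is, in the sense of the $L^2(P)$ scalar product, the orthogonal projection from $X$ to the linear subspace of $\mathcal{H}$-measurable functions. (This allows one to define and prove the existence of the conditional expectation based on the Hilbert projection theorem.)

- the mapping $X\mapsto\operatorname{E}(X\mid\mathcal{H})$ is self-adjoint: $\operatorname{E}(X\operatorname{E}(Y\mid\mathcal{H})) = \operatorname{E}(\operatorname{E}(X\mid\mathcal{H})\operatorname{E}(Y\mid\mathcal{H})) = \operatorname{E}(\operatorname{E}(X\mid\mathcal{H})Y)$

- Conditioning is a contractive projection of $L^p$ spaces $L^p(\Omega, \mathcal{F}, P)\to L^p(\Omega, \mathcal{H}, P)$, i.e. $\operatorname{E}|\operatorname{E}(X\mid\mathcal{H})|^p\le\operatorname{E}|X|^p$ for any $p\ge 1$.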

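- Doob's conditional independence property:[12] If $X, Y$ are conditionally independent given $Z$, then $P(X\in B\mid Y, Z) = P(X\in B\mid Z)$ (equivalently, $\operatorname{E}(1_{X\in B}\mid Y, Z) = \operatorname{E}(1_{X\in B}\mid Z)$).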
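Several of these properties can be checked exactly on a small finite space. The Python sketch below assumes non-uniform probabilities on eight outcomes, an arbitrary integer-valued $X$, and two σ-algebras generated by nested partitions ($\mathcal{H}_1$ coarser than $\mathcal{H}_2$); all of these choices, and the helper names `E` and `cond`, are illustrative assumptions. It verifies the law of total expectation, the tower property, pulling out known factors, the law of total variance and the conditional Jensen inequality for $f(x) = x^2$.

```python
from fractions import Fraction

# Finite probability space with non-uniform weights (illustrative).
omega = list(range(8))
P = {w: Fraction(w + 1, 36) for w in omega}  # weights 1/36, ..., 8/36 sum to 1
X = {w: (w * 7) % 5 - 2 for w in omega}      # an arbitrary random variable

# H1 is coarser than H2: every cell of H1 is a union of cells of H2.
H2 = [{0, 1}, {2, 3}, {4, 5}, {6, 7}]
H1 = [{0, 1, 2, 3}, {4, 5, 6, 7}]

def E(Z):
    """Unconditional expectation of a random variable given as a dict."""
    return sum(Z[w] * P[w] for w in omega)

def cond(Z, cells):
    """E(Z | sigma(cells)) for a finite partition whose cells have positive probability."""
    out = {}
    for C in cells:
        avg = sum(Z[w] * P[w] for w in C) / sum(P[w] for w in C)
        out.update({w: avg for w in C})
    return out

Z = {w: w // 4 for w in omega}  # H1-measurable: constant on each cell of H1

assert E(cond(X, H2)) == E(X)                # law of total expectation
assert cond(cond(X, H2), H1) == cond(X, H1)  # tower property
XZ = {w: X[w] * Z[w] for w in omega}
E_X_H1 = cond(X, H1)
assert cond(XZ, H1) == {w: Z[w] * E_X_H1[w] for w in omega}  # pulling out known factors

# Law of total variance: Var(X) = E(Var(X | H2)) + Var(E(X | H2)).
mean_x = E(X)
var_x = E({w: (X[w] - mean_x) ** 2 for w in omega})
m = cond(X, H2)
cond_var = cond({w: (X[w] - m[w]) ** 2 for w in omega}, H2)
var_of_mean = E({w: (m[w] - mean_x) ** 2 for w in omega})
assert var_x == E(cond_var) + var_of_mean

# Conditional Jensen with f(x) = x^2: f(E(X | H2)) <= E(f(X) | H2).
E_Xsq_H2 = cond({w: X[w] ** 2 for w in omega}, H2)
assert all(m[w] ** 2 <= E_Xsq_H2[w] for w in omega)
print("all identities hold")
```

Exact rational arithmetic makes each identity an equality check rather than a tolerance check; properties involving limits, such as monotone or martingale convergence, are not captured by a finite example like this.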

See also

Probability laws

- Law of total cumulance (generalizes the other three)

- Law of total expectation

- Law of total probability

- Law of total variance

Notes

- ^ "List of Probability and Statistics Symbols". Math Vault. 2020-04-26. Retrieved 2020-09-11.

- ^ "Conditional Variance | Conditional Expectation | Iterated Expectations | Independent Random Variables". www.probabilitycourse.com. Retrieved 2020-09-11.

- ^ Kolmogorov, Andrey (1933). Grundbegriffe der Wahrscheinlichkeitsrechnung (in German). Berlin: Julius Springer. p. 46.

- Translation: Kolmogorov, Andrey (1956). Foundations of the Theory of Probability (2nd ed.). New York: Chelsea. p. 53. ISBN 0-8284-0023-7. Archived from the original on 2018-09-14. Retrieved 2009-03-14.

- ^ Oxtoby, J. C. (1953). "Review: Measure theory, by P. R. Halmos" (PDF). Bull. Amer. Math. Soc. 59 (1): 89–91. doi:10.1090/s0002-9904-1953-09662-8.

- ^ J. L. Doob (1953). Stochastic Processes. John Wiley & Sons. ISBN 0-471-52369-0.

- ^ Kallenberg, Olav (2002). Foundations of Modern Probability (2nd ed.). New York: Springer. p. 573. ISBN 0-387-95313-2.

- ^ "probability - Intuition behind Conditional Expectation". Mathematics Stack Exchange.

- ^ Brockwell, Peter J. (1991). Time series : theory and methods (2nd ed.). New York: Springer-Verlag. ISBN 978-1-4419-0320-4.

- ^ Billingsley, Patrick (1995). "Section 34. Conditional Expectation". Probability and Measure (3rd ed.). John Wiley & Sons. p. 445. ISBN 0-471-00710-2.

- ^ Klenke, Achim. Probability theory : a comprehensive course (Second ed.). London. ISBN 978-1-4471-5361-0.

- ^ "Conditional expectation". www.statlect.com. Retrieved 2020-09-11.

- ^ Kallenberg, Olav (2001). Foundations of Modern Probability (2nd ed.). York, PA, USA: Springer. p. 110. ISBN 0-387-95313-2.

References

- William Feller, An Introduction to Probability Theory and its Applications, vol 1, 1950, page 223

- Paul A. Meyer, Probability and Potentials, Blaisdell Publishing Co., 1966, page 28

- Grimmett, Geoffrey; Stirzaker, David (2001). Probability and Random Processes (3rd ed.). Oxford University Press. ISBN 0-19-857222-0., pages 67–69

External links

- Ushakov, N.G. (2001) [1994], "Conditional mathematical expectation", Encyclopedia of Mathematics, EMS Press
