Color quantization

|

|

In computer graphics, color quantization or color image quantization is a process that reduces the number of distinct colors used in an image, usually with the intention that the new image should be as visually similar as possible to the original image. Computer algorithms to perform color quantization on bitmaps have been studied since the 1970s. Color quantization is critical for displaying images with many colors on devices that can only display a limited number of colors, usually due to memory limitations, and enables efficient compression of certain types of images.

The name "color quantization" is primarily used in computer graphics research literature; in applications, terms such as optimized palette generation, optimal palette generation, or decreasing color depth are used. Some of these are misleading, as the palettes generated by standard algorithms are not necessarily the best possible.

Algorithms

Most standard techniques treat color quantization as a problem of clustering points in three-dimensional space, where the points represent colors found in the original image and the three axes represent the three color channels. Almost any three-dimensional clustering algorithm can be applied to color quantization, and vice versa. After the clusters are located, typically the points in each cluster are averaged to obtain the representative color that all colors in that cluster are mapped to. The three color channels are usually red, green, and blue, but another popular choice is the Lab color space, in which Euclidean distance is more consistent with perceptual difference.

The most popular algorithm by far for color quantization, invented by Paul Heckbert in 1979, is the median cut algorithm. Many variations on this scheme are in use. Before this time, most color quantization was done using the population algorithm or population method, which essentially constructs a histogram of equal-sized ranges and assigns colors to the ranges containing the most points. A more modern popular method is clustering using octrees, first conceived by Gervautz and Purgathofer and improved by Xerox PARC researcher Dan Bloomberg.

If the palette is fixed, as is often the case in real-time color quantization systems such as those used in operating systems, color quantization is usually done using the "straight-line distance" or "nearest color" algorithm, which simply takes each color in the original image and finds the closest palette entry, where distance is determined by the distance between the two corresponding points in three-dimensional space. In other words, if the colors are and , we want to minimize the Euclidean distance:

This effectively decomposes the color cube into a Voronoi diagram, where the palette entries are the points and a cell contains all colors mapping to a single palette entry. There are efficient algorithms from computational geometry for computing Voronoi diagrams and determining which region a given point falls in; in practice, indexed palettes are so small that these are usually overkill.

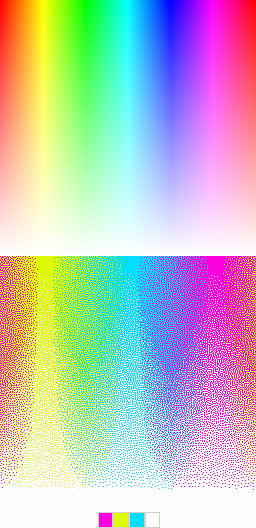

Color quantization is frequently combined with dithering, which can eliminate unpleasant artifacts such as banding that appear when quantizing smooth gradients and give the appearance of a larger number of colors. Some modern schemes for color quantization attempt to combine palette selection with dithering in one stage, rather than perform them independently.

A number of other much less frequently used methods have been invented that use entirely different approaches. The Local K-means algorithm, conceived by Oleg Verevka in 1995, is designed for use in windowing systems where a core set of "reserved colors" is fixed for use by the system and many images with different color schemes might be displayed simultaneously. It is a post-clustering scheme that makes an initial guess at the palette and then iteratively refines it.

The high-quality but slow NeuQuant algorithm reduces images to 256 colors by training a Kohonen neural network "which self-organises through learning to match the distribution of colours in an input image. Taking the position in RGB-space of each neuron gives a high-quality colour map in which adjacent colours are similar." [1] It is particularly advantageous for images with gradients.

Finally, one of the most promising newer methods is spatial color quantization, conceived by Puzicha, Held, Ketterer, Buhmann, and Fellner of the University of Bonn, which combines dithering with palette generation and a simplified model of human perception to produce visually impressive results even for very small numbers of colors. It does not treat palette selection strictly as a clustering problem, in that the colors of nearby pixels in the original image also affect the color of a pixel. See sample images.

History and applications

In the early days of PCs, it was common for video adapters to support only 2, 4, 16, or (eventually) 256 colors due to video memory limitations; they preferred to dedicate the video memory to having more pixels (higher resolution) rather than more colors. Color quantization helped to justify this tradeoff by making it possible to display many high color images in 16- and 256-color modes with limited visual degradation. Many operating systems automatically perform quantization and dithering when viewing high color images in a 256 color video mode, which was important when video devices limited to 256 color modes were dominant. Modern computers can now display millions of colors at once, far more than can be distinguished by the human eye, limiting this application primarily to mobile devices and legacy hardware.

Nowadays, color quantization is mainly used in GIF and PNG images. GIF, for a long time the most popular lossless and animated bitmap format on the World Wide Web, only supports up to 256 colors, necessitating quantization for many images. Some early web browsers constrained images to use a specific palette known as the web colors, leading to severe degradation in quality compared to optimized palettes. PNG images support 24-bit color, but can often be made much smaller in filesize without much visual degradation by application of color quantization, since PNG files use fewer bits per pixel for palettized images.

The infinite number of colors available through the lens of a camera is impossible to display on a computer screen; thus converting any photograph to a digital representation necessarily involves some quantization. Practically speaking, 24-bit color is sufficiently rich to represent almost all colors perceivable by humans with sufficiently small error as to be visually identical (if presented faithfully), within the available color space [citation needed]. However, the digitization of color, either in a camera detector or on a screen, necessarily limits the available color space. Consequently there are many colors that may be impossible to reproduce, regardless of how many bits are used to represent the color. For example, it is impossible in typical RGB color spaces (common on computer monitors) to reproduce the full range of green colors that the human eye is capable of perceiving.

With the few colors available on early computers, different quantization algorithms produced very different-looking output images. As a result, a lot of time was spent on writing sophisticated algorithms to be more lifelike.

Editor support

Many bitmap graphics editors contain built-in support for color quantization, and will automatically perform it when converting an image with many colors to an image format with fewer colors. Most of these implementations allow the user to set exactly the number of desired colors. Examples of such support include:

- Photoshop's Mode→Indexed Color function supplies a number of quantization algorithms ranging from the fixed Windows system and Web palettes to the proprietary Local and Global algorithms for generating palettes suited to a particular image or images.

- Paint Shop Pro, in its Colors→Decrease Color Depth dialog, supplies three standard color quantization algorithms: median cut, octree, and the fixed standard "web safe" palette.

- The GIMP's Generate Optimal Palette with 256 Colours option is known to use the median cut algorithm. There has been some discussion in the developer community of adding support for spatial color quantization.[2]

Color quantization is also used to create posterization effects, although posterization has the slightly different goal of minimizing the number of colors used within the same color space, and typically uses a fixed palette.

Some vector graphics editors also utilize color quantization, especially for raster-to-vector techniques that create tracings of bitmap images with the help of edge detection.

- Inkscape's Path→Trace Bitmap: Multiple Scans: Color function uses octree quantization to create color traces.[3]

See also

- Indexed color

- Palette (computing)

- List of software palettes — Adaptive palettes section.

- Dithering

- Quantization (image processing)

- Image segmentation

References

Further reading

- Paul S. Heckbert. Color Image Quantization for Frame Buffer Display. ACM SIGGRAPH '82 Proceedings. First publication of the median cut algorithm.

- Dan Bloomberg. Color quantization using octrees. Leptonica.

- Oleg Verevka. Color Image Quantization in Windows Systems with Local K-means Algorithm. Proceedings of the Western Computer Graphics Symposium '95.

- J. Puzicha, M. Held, J. Ketterer, J. M. Buhmann, and D. Fellner. On Spatial Quantization of Color Images. (full text .ps.gz) Technical Report IAI-TR-98-1, University of Bonn. 1998.

- A. Schrader. Evolutionäre Algorithmen zur Farbquantisierung und asymmetrischen Codierung digitaler Farbbilder. WVB Verlag, Berlin, 1999. Comprehensive Overview and Comparison of Color Quantization Algorithms.