Continuous-time Markov chain

In probability theory, a continuous-time Markov chain (CTMC[1] or continuous-time Markov process[2]) is a mathematical model which takes values in some finite or countable set and for which the time spent in each state takes non-negative real values and has an exponential distribution. It is a continuous-time stochastic process with the Markov property which means that future behaviour of the model (both remaining time in current state and next state) depends only on the current state of the model and not on historical behaviour. The model is a continuous-time version of the Markov chain model, named because the output from such a process is a sequence (or chain) of states.

Definitions

A continuous-time Markov chain (Xt)t ≥ 0 is defined by a finite or countable state space S, a transition rate matrix Q with dimensions equal to that of the state space and initial probability distribution defined on the state space. For i ≠ j, the elements qij are non-negative and describe the rate the process transitions from state i to state j. The elements qii are chosen such that each row of the transition rate matrix sums to zero.

There are three equivalent definitions of the process.[3]

Infinitesimal definition

Let Xt be the random variable describing the state of the process at time t, and assume that the process is in a state i at time t. Then Xt + h is independent of previous values (Xs : s≤ t) and as h → 0 uniformly in t for all j

using little-o notation. The qij can be seen as measuring how quickly the transition from i to j happens

Jump chain/holding time definition

Define a discrete-time Markov chain Yn to describe the nth jump of the process and variables S1, S2, S3, ... to describe holding times in each of the states where the distribution of Si is given by −qYiYi.

Transition probability definition

For any value n = 0, 1, 2, 3, ... and times indexed up to this value of n: t0, t1, t2, ... and all states recorded at these times i0, i1, i2, i3, ... it holds that

where pij is the solution of the forward equation (a first-order differential equation)

with initial condition P(0) is the identity matrix.

Properties

Irreducibility

The state space S can be partitioned into communicating classes. i and j are said to communicate (and therefore be in the same communicating class) if it is possible to get to state j from state i, that is if

A CTMC is irreducible if there is a single communicating class.[3][4]

Recurrence and transience

A state i is recurrent if, starting in state i, the probability the process returns unboundedly many times to the state is 1, that is[5]

and a state i transient if this quantity has probability zero,[5]

If the expected return time (the time starting in state i until the next visit to state i) is finite the state is positive recurrent, otherwise it is null recurrent.

Transient behaviour

Write P(t) for the matrix with entries pij = P(Xt = j | X0 = i). Then the matrix P(t) satisfies the forward equation, a first-order differential equation

where the prime denotes differentiation with respect to t. The solution to this equation is given by a matrix exponential

In a simple case such as a CTMC on the state space {1,2}. The general Q matrix for such a process is the following 2 × 2 matrix with α,β > 0

The above relation for forward matrix can be solved explicitly in this case to give

However, direct solutions are complicated to compute for larger matrices. The fact that Q is the generator for a semigroup of matrices

is used.

Stationary distribution

The stationary distribution for an irreducible recurrent CTMC is the probability distribution to which the process converges for large values of t. Observe that for the two-state process considered earlier with P(t) given by

as t → ∞ the distribution tends to

Observe that each row has the same distribution as this does not depend on starting state. The row vector π may be found by solving[5]

with the additional constraint that

Example

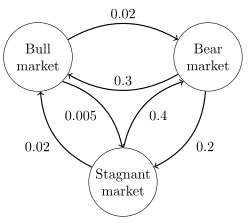

The image to the right describes a continuous-time Markov chain with state-space {Bull market, Bear market, Stagnant market} and transition rate matrix

The stationary distribution of this chain can be found by solving π Q = 0 subject to the contraint that elements must sum to 1 to obtain

Hitting times

The hitting time is the time, starting in a given set of states until the chain arrives in a given state or set of states. The distribution of such a time period has a phase type distribution. The simplest such distribution is that of a single exponentially distributed transition.

Expected hitting times

For a subset of states A ⊆ S, the vector kA of hitting times (where element kAi represents the expected value, starting in state i that the chain enters one of the states in the set A) is the minimal non-negative solution to[5]

Time reversal

For a CTMC Xt, the time-reversed process is defined to be . By Kelly's lemma this process has the same stationary distribution as the forward process.

A chain is said to be reversible if the reversed process is the same as the forward process. Kolmogorov's criterion states that the necessary and sufficient condition for a process to be reversible is that the product of transition rates around a closed loop must be the same in both directions.

Embedded Markov chain

One method of finding the stationary probability distribution, π, of an ergodic continuous-time Markov chain, Q, is by first finding its embedded Markov chain (EMC). Strictly speaking, the EMC is a regular discrete-time Markov chain, sometimes referred to as a jump process. Each element of the one-step transition probability matrix of the EMC, S, is denoted by sij, and represents the conditional probability of transitioning from state i into state j. These conditional probabilities may be found by

From this, S may be written as

where I is the identity matrix and diag(Q) is the diagonal matrix formed by selecting the main diagonal from the matrix Q and setting all other elements to zero.

To find the stationary probability distribution vector, we must next find such that

with being a row vector, such that all elements in are greater than 0 and = 1. From this, π may be found as

Note that S may be periodic, even if Q is not. Once π is found, it must be normalized to a unit vector.

Another discrete-time process that may be derived from a continuous-time Markov chain is a δ-skeleton—the (discrete-time) Markov chain formed by observing X(t) at intervals of δ units of time. The random variables X(0), X(δ), X(2δ), ... give the sequence of states visited by the δ-skeleton.

Applications

Markov chains are used to describe physical processes where a system evolves in constant time. Sometimes, rather than a single systems, they are applied to an ensemble of identical, independent systems, and the probabilities are used to find how many members of the ensemble are in a given state. A master equation treatment is often used to analyse systems that evolve as Markov chains ,[citation needed] with approximations possible for complicated systems .[citation needed]

Chemical reactions

Imagine a large number n of molecules in solution in state A, each of which can undergo a chemical reaction to state B with a certain average rate. Perhaps the molecule is an enzyme, and the states refer to how it is folded. The state of any single enzyme follows a Markov chains, and since the molecules are essentially independent of each other, the number of molecules in state A or B at a time is n times the probability a given molecule is in that state.

Queueing theory

Numerous queueing models use continuous-time Markov chains. For example, an M/M/1 queue is a CTMC on the non-negative integers where upward transitions from i to i + 1 occur at rate λ according to a Poisson process and describe job arrivals, while transitions from i to i – 1 (for i > 1) occur at rate μ (job service times are exponentially distributed) and describe completed services (departures) from the queue.

Extensions

A time dependent (time heterogeneous) CTMC is as above, but with the transition rate matrix a function of time Q(t).

See also

- Master equation (physics)

- Semi-Markov process

- Variable-order Markov model

- Spectral expansion solution

- Matrix geometric solution method

References

- ^ Attention: This template ({{cite doi}}) is deprecated. To cite the publication identified by doi:10.1007/3-540-48320-9_12, please use {{cite journal}} (if it was published in a bona fide academic journal, otherwise {{cite report}} with

|doi=10.1007/3-540-48320-9_12instead. - ^ Attention: This template ({{cite doi}}) is deprecated. To cite the publication identified by doi:10.1287/opre.27.3.616, please use {{cite journal}} (if it was published in a bona fide academic journal, otherwise {{cite report}} with

|doi=10.1287/opre.27.3.616instead. - ^ a b Attention: This template ({{cite doi}}) is deprecated. To cite the publication identified by doi:10.1017/CBO9780511810633.004, please use {{cite journal}} (if it was published in a bona fide academic journal, otherwise {{cite report}} with

|doi=10.1017/CBO9780511810633.004instead. - ^ Attention: This template ({{cite doi}}) is deprecated. To cite the publication identified by doi:10.1017/CBO9780511810633.003, please use {{cite journal}} (if it was published in a bona fide academic journal, otherwise {{cite report}} with

|doi=10.1017/CBO9780511810633.003instead. - ^ a b c d Attention: This template ({{cite doi}}) is deprecated. To cite the publication identified by doi:10.1017/CBO9780511810633.005, please use {{cite journal}} (if it was published in a bona fide academic journal, otherwise {{cite report}} with

|doi=10.1017/CBO9780511810633.005instead.

- William J. Stewart (1994). Introduction to the Numerical Solution of Markov Chains. Princeton University Press. pp. 17–23. ISBN 0-691-03699-3.