Image noise

Image noise is random variation of brightness or color information in images, and is usually an aspect of electronic noise. It can be produced by the image sensor and circuitry of a scanner or digital camera. Image noise can also originate in film grain and in the unavoidable shot noise of an ideal photon detector. Image noise is an undesirable by-product of image capture that obscures the desired information. Typically the term “image noise” is used to refer to noise in 2D images, not 3D images.

The original meaning of "noise" was "unwanted signal"; unwanted electrical fluctuations in signals received by AM radios caused audible acoustic noise ("static"). By analogy, unwanted electrical fluctuations are also called "noise".[1]

Image noise can range from almost imperceptible specks on a digital photograph taken in good light, to optical and radioastronomical images that are almost entirely noise, from which a small amount of information can be derived by sophisticated processing. Such a noise level would be unacceptable in a photograph since it would be impossible even to determine the subject.

Types

Gaussian noise

Principal sources of Gaussian noise in digital images arise during acquisition. The sensor has inherent noise due to the level of illumination and its own temperature, and the electronic circuits connected to the sensor inject their own share of electronic circuit noise.[2]

A typical model of image noise is Gaussian, additive, independent at each pixel, and independent of the signal intensity. It is caused primarily by Johnson–Nyquist noise (thermal noise), including that which comes from the reset noise of capacitors ("kTC noise").[3] Amplifier noise is a major part of the "read noise" of an image sensor, that is, of the constant noise level in dark areas of the image.[4] In color cameras where more amplification is used in the blue color channel than in the green or red channel, there can be more noise in the blue channel.[5] At higher exposures, however, image sensor noise is dominated by shot noise, which is not Gaussian and not independent of signal intensity. Many denoising algorithms have been developed for Gaussian noise.[6]
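The additive, signal-independent model described above can be sketched in a few lines. This is only an illustration: the function name `add_gaussian_noise`, the patch values, and the noise level `sigma` are assumptions chosen for the example, not values from any particular sensor.

```python
import random
import statistics

def add_gaussian_noise(pixels, sigma, seed=0):
    """Return a copy of `pixels` with independent zero-mean Gaussian noise added."""
    rng = random.Random(seed)
    return [p + rng.gauss(0.0, sigma) for p in pixels]

# Two flat patches, one dark and one bright (hypothetical pixel values).
dark = [10.0] * 20000
bright = [200.0] * 20000
sigma = 5.0  # assumed noise standard deviation

noisy_dark = add_gaussian_noise(dark, sigma, seed=1)
noisy_bright = add_gaussian_noise(bright, sigma, seed=2)

# The spread is about the same in both patches, because this noise
# model does not depend on the signal level.
std_dark = statistics.pstdev(noisy_dark)
std_bright = statistics.pstdev(noisy_bright)
print(round(std_dark, 2), round(std_bright, 2))
```

Both measured standard deviations come out close to `sigma`, which is what distinguishes this model from shot noise, where the spread grows with intensity.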

Salt-and-pepper noise

Fat-tail distributed or "impulsive" noise is sometimes called salt-and-pepper noise or spike noise.[7] An image containing salt-and-pepper noise will have dark pixels in bright regions and bright pixels in dark regions.[8] This type of noise can be caused by analog-to-digital converter errors, bit errors in transmission, and similar faults.[9][10] It can be mostly eliminated by using dark frame subtraction, median filtering, combined median and mean filtering,[11] and interpolating around dark/bright pixels.
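Median filtering, one of the remedies listed above, can be sketched as follows. This is a minimal illustration on a tiny grayscale image stored as nested lists; the function name `median_filter`, the 3×3 window, and the truncated window at the borders are choices made for this example only.

```python
import statistics

def median_filter(image, radius=1):
    """Replace each pixel by the median of its neighbourhood.

    Windows are truncated at the image border rather than padded.
    """
    height, width = len(image), len(image[0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            window = [
                image[j][i]
                for j in range(max(0, y - radius), min(height, y + radius + 1))
                for i in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            row.append(statistics.median(window))
        out.append(row)
    return out

# A flat grey patch with one "salt" and one "pepper" pixel.
image = [[100] * 5 for _ in range(5)]
image[1][1] = 255   # bright impulse
image[3][2] = 0     # dark impulse

filtered = median_filter(image)
```

Because each impulse is an outlier within its window, the median ignores it and the flat patch is restored. A mean filter over the same window would instead smear each impulse across its neighbours.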

Dead pixels in an LCD monitor produce a similar, but non-random, display.[12]

Shot noise

The dominant noise in the brighter parts of an image from an image sensor is typically that caused by statistical quantum fluctuations, that is, variation in the number of photons sensed at a given exposure level. This noise is known as photon shot noise.[5] Shot noise follows a Poisson distribution, which can be approximated by a Gaussian distribution for large image intensity. Shot noise has a standard deviation proportional to the square root of the image intensity, and the noise at different pixels is independent.
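As a worked illustration of the square-root relationship, let $N$ denote the mean number of photons collected at a pixel (a symbol introduced here for the example). For Poisson statistics the variance equals the mean, so:

```latex
\sigma_{\text{shot}} = \sqrt{N}, \qquad
\mathrm{SNR} = \frac{N}{\sigma_{\text{shot}}} = \sqrt{N}
% N = 100:    sigma_shot = 10,  SNR = 10
% N = 10000:  sigma_shot = 100, SNR = 100
```

Collecting a hundred times more light therefore raises the absolute noise tenfold but also improves the signal-to-noise ratio tenfold.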

In addition to photon shot noise, there can be additional shot noise from the dark leakage current in the image sensor; this noise is sometimes known as "dark shot noise"[5] or "dark-current shot noise".[13] Dark current is greatest at "hot pixels" within the image sensor. The variable dark charge of normal and hot pixels can be subtracted off (using "dark frame subtraction"), leaving only the shot noise, or random component, of the leakage.[14][15] If dark-frame subtraction is not done, or if the exposure time is long enough that the hot pixel charge exceeds the linear charge capacity, the noise will be more than just shot noise, and hot pixels appear as salt-and-pepper noise.

Quantization noise (uniform noise)

The noise caused by quantizing the pixels of a sensed image to a number of discrete levels is known as quantization noise. It has an approximately uniform distribution. Though it can be signal dependent, it will be signal independent if other noise sources are big enough to cause dithering, or if dithering is explicitly applied.[10]
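A small numerical sketch of the uniform error distribution follows. The step size and the range of the input values are arbitrary assumptions. The check relies on the standard result that a uniform distribution of width `step` has standard deviation `step / sqrt(12)`.

```python
import math
import random
import statistics

def quantize(value, step):
    """Round `value` to the nearest multiple of `step`."""
    return step * round(value / step)

rng = random.Random(0)
step = 4.0  # hypothetical quantization step
samples = [rng.uniform(0.0, 1000.0) for _ in range(50000)]
errors = [quantize(s, step) - s for s in samples]

measured = statistics.pstdev(errors)
predicted = step / math.sqrt(12)  # std of a uniform error on [-step/2, step/2]
print(round(measured, 3), round(predicted, 3))
```

The input here varies over many quantization steps, so it behaves like the dithered case in the text and the error is effectively independent of the signal.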

Film grain

The grain of photographic film is a signal-dependent noise, with similar statistical distribution to shot noise.[16] If film grains are uniformly distributed (equal number per area), and if each grain has an equal and independent probability of developing to a dark silver grain after absorbing photons, then the number of such dark grains in an area will be random with a binomial distribution. In areas where the probability is low, this distribution will be close to the classic Poisson distribution of shot noise. A simple Gaussian distribution is often used as an adequately accurate model.[10]
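The convergence of the binomial grain model to a Poisson distribution at low development probability can be checked numerically. In this sketch the grain counts and probabilities are made-up values chosen so that both cases have the same mean of five developed grains.

```python
import math

def binomial_pmf(k, n, p):
    """Probability that exactly k of n independent grains develop, each with probability p."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

def poisson_pmf(k, mean):
    """Poisson probability of observing k events for the given mean."""
    return math.exp(-mean) * mean**k / math.factorial(k)

def largest_gap(n, p, k_max=30):
    """Largest pointwise difference between the two distributions for k = 0..k_max."""
    mean = n * p
    return max(abs(binomial_pmf(k, n, p) - poisson_pmf(k, mean)) for k in range(k_max + 1))

# Same mean in both cases, but very different per-grain probabilities.
gap_low_p = largest_gap(n=1000, p=0.005)   # many grains, each unlikely to develop
gap_high_p = largest_gap(n=10, p=0.5)      # few grains, each likely to develop
print(gap_low_p, gap_high_p)
```

With a low per-grain probability the two distributions are nearly indistinguishable. With a high probability the binomial is visibly narrower than the Poisson, because its variance `n * p * (1 - p)` falls below the mean.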

Film grain is usually regarded as a nearly isotropic (non-oriented) noise source. Its effect is made worse by the distribution of silver halide grains in the film also being random.[17]

Anisotropic noise

Some noise sources show up with a significant orientation in images. For example, image sensors are sometimes subject to row noise or column noise.[18]

Periodic noise

A common source of periodic noise in an image is electrical interference during the image capturing process.[7] An image affected by periodic noise looks as if a repeating pattern has been added on top of the original image. In the frequency domain, this type of noise appears as discrete spikes, and it can be reduced significantly by applying notch filters in the frequency domain.[7] The following images illustrate an image affected by periodic noise, and the result of reducing the noise using frequency domain filtering. Note that the filtered image still has some noise on the borders. Further filtering could reduce this border noise; however, it may also reduce some of the fine details in the image. The trade-off between noise reduction and preserving fine details is application specific. For example, if the fine details on the castle are not considered important, low-pass filtering could be an appropriate option. If the fine details of the castle are considered important, a viable solution may be to crop off the border of the image entirely.
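The notch-filtering idea can be sketched on a single image row, using a one-dimensional discrete Fourier transform written out by hand. Real images would use a two-dimensional transform, and the spike locations would be found by inspecting the spectrum. In this toy example the interference frequency (bin 16) is known by construction, and all signal values are invented.

```python
import cmath
import math

def dft(x):
    """Naive discrete Fourier transform of a real or complex sequence."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Inverse transform, returning the real part of each sample."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)).real / n
            for t in range(n)]

n = 64
# A slowly varying "scene" plus a periodic interference pattern.
clean = [100 + 20 * math.cos(2 * math.pi * 2 * t / n) for t in range(n)]
interference = [15 * math.sin(2 * math.pi * 16 * t / n) for t in range(n)]
noisy = [c + i for c, i in zip(clean, interference)]

spectrum = dft(noisy)
# Notch filter: zero the spike and its mirror image, leave everything else alone.
for k in (16, n - 16):
    spectrum[k] = 0
restored = idft(spectrum)

max_error = max(abs(r - c) for r, c in zip(restored, clean))
print(max_error)
```

The recovery is essentially exact here only because the scene has no energy at the notched frequency. In a real image the notch also removes whatever genuine detail shares that frequency, which is the trade-off described above.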

In digital cameras

In low light, correct exposure requires the use of a slow shutter speed (i.e. long exposure time), an opened aperture (lower f-number), or both, to increase the amount of light (photons) captured, which in turn reduces the impact of shot noise. If the limits of shutter (motion) and aperture (depth of field) have been reached and the resulting image is still not bright enough, then higher gain (ISO sensitivity) should be used to reduce read noise. On most cameras, slower shutter speeds lead to increased salt-and-pepper noise due to photodiode leakage currents. At the cost of a doubling of read noise variance (41% increase in read noise standard deviation), this salt-and-pepper noise can be mostly eliminated by dark frame subtraction. Banding noise, similar to shadow noise, can be introduced through brightening shadows or through color-balance processing.[19]
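The 41% figure follows from adding variances. The sketch below assumes that the read noise in the light frame and in the dark frame is independent and has the same standard deviation $\sigma_r$ (a symbol introduced here for illustration):

```latex
\sigma_{\text{diff}}^2 = \sigma_r^2 + \sigma_r^2 = 2\,\sigma_r^2
\quad\Longrightarrow\quad
\sigma_{\text{diff}} = \sqrt{2}\,\sigma_r \approx 1.41\,\sigma_r
```

Subtracting one frame from another doubles the variance, so the standard deviation grows by a factor of about 1.41.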

Read noise

In digital photography, incoming photons are converted to a charge in the form of electrons, and this charge is in turn converted to a voltage. This voltage then passes through the signal processing chain of the digital camera and is digitized by an analog-to-digital converter. Any voltage fluctuations in the signal processing chain that cause the digitized value to deviate from the ideal value, which is proportional to the photon count, are called read noise.[20]

Effects of sensor size

The amount of light collected over the whole sensor during the exposure is the largest determinant of signal levels, which in turn determine the signal-to-noise ratio for shot noise and hence apparent noise levels. The f-number is indicative of light density in the focal plane (e.g., photons per square micron). With constant f-numbers, as focal length increases, the lens aperture diameter increases, and the lens collects more light from the subject. As the focal length required to capture a scene at a specific angle of view is roughly proportional to the width of the sensor, at constant f-number the amount of light collected is roughly proportional to the area of the sensor, resulting in a better signal-to-noise ratio for larger sensors. With constant aperture diameters, the amount of light collected is independent of the size of the sensor, and the signal-to-noise ratio for shot noise is independent of sensor size. In the case of images bright enough to be in the shot noise limited regime, when the image is scaled to the same size on screen, or printed at the same size, the pixel count makes little difference to perceptible noise levels: the noise depends primarily on the total light over the whole sensor area, not on how this area is divided into pixels. For images at lower signal levels (higher ISO settings), where read noise (noise floor) is significant, more pixels within a given sensor area will make the image noisier if the per-pixel read noise is the same.

For example, the noise level produced by a Four Thirds sensor at ISO 800 is roughly equivalent to that produced by a full frame sensor (with roughly four times the area) at ISO 3200, and that produced by a 1/2.5" compact camera sensor (with roughly 1/9 the area) at ISO 100. All cameras will have roughly the same ISO setting for a given scene at the same shutter speed and the same f-number, resulting in substantially less noise with the full frame camera. Conversely, if all cameras were using lenses with the same aperture diameter, the ISO settings would be different across the cameras, but the noise levels would be roughly equivalent.[21]
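The arithmetic behind the Four Thirds and full frame comparison can be written out as a rule of thumb. The helper name `equivalent_iso` is hypothetical, and the sketch only restates the scaling described in the text; it is not a measurement of any camera.

```python
import math

def equivalent_iso(iso, area_ratio):
    """ISO at which a sensor `area_ratio` times larger shows roughly the same noise.

    Total light collected sets the shot noise. A sensor with `area_ratio`
    times the area gathers the same total light at an exposure that is
    `area_ratio` times lower per unit area, i.e. at an ISO that is
    `area_ratio` times higher.
    """
    return iso * area_ratio

four_thirds_iso = 800
full_frame_area_ratio = 4  # "roughly four times the area", as stated in the text

full_frame_iso = equivalent_iso(four_thirds_iso, full_frame_area_ratio)
stops = math.log2(full_frame_area_ratio)  # the same advantage expressed in stops
print(full_frame_iso, stops)  # 3200 2.0
```

Expressed in photographic terms, a fourfold area advantage is worth about two stops of ISO at equal noise.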

Sensor fill factor

The image sensor has individual photosites to collect light from a given area. Not all areas of the sensor are used to collect light, due to other circuitry. A higher fill factor of a sensor causes more light to be collected, allowing for better ISO performance based on sensor size.[22]

Sensor heat

Temperature can also affect the amount of noise produced by an image sensor, because leakage current increases with temperature. As a result, a DSLR will generally produce more noise in summer than in winter.[14]

Noise reduction

An image is a picture, photograph or any other form of 2D representation of any scene.[23] Most algorithms for converting image sensor data to an image, whether in-camera or on a computer, involve some form of noise reduction.[24][25] There are many procedures for this, but all attempt to determine whether the actual differences in pixel values constitute noise or real photographic detail, and average out the former while attempting to preserve the latter. However, no algorithm can make this judgment perfectly (for all cases), so there is often a tradeoff made between noise removal and preservation of fine, low-contrast detail that may have characteristics similar to noise.

A simplified example of the impossibility of unambiguous noise reduction: an area of uniform red in an image might have a very small black part. If this is a single pixel, it is likely (but not certain) to be spurious and noise; if it covers a few pixels in an absolutely regular shape, it may be a defect in a group of pixels in the image-taking sensor (spurious and unwanted, but not strictly noise); if it is irregular, it may be more likely to be a true feature of the image. But a definitive answer is not available.

This decision can be assisted by knowing the characteristics of the source image and of human vision. Most noise reduction algorithms reduce chroma noise much more aggressively than luminance noise, since there is little important fine chroma detail that one risks losing. Furthermore, many people find luminance noise less objectionable to the eye, since its textured appearance mimics the appearance of film grain.

The high sensitivity image quality of a given camera (or RAW development workflow) may depend greatly on the quality of the algorithm used for noise reduction. Since noise levels increase as ISO sensitivity is increased, most camera manufacturers increase the noise reduction aggressiveness automatically at higher sensitivities. This leads to a breakdown of image quality at higher sensitivities in two ways: noise levels increase and fine detail is smoothed out by the more aggressive noise reduction.

In cases of extreme noise, such as astronomical images of very distant objects, it is not so much a matter of noise reduction as of extracting a little information buried in a lot of noise; techniques are different, seeking small regularities in massively random data.

Video noise

In video and television, noise refers to the random dot pattern that is superimposed on the picture as a result of electronic noise, the "snow" that is seen with poor (analog) television reception or on VHS tapes. Interference and static are other forms of noise, in the sense that they are unwanted, though not random, which can affect radio and television signals.

Digital noise is sometimes present on videos encoded in MPEG-2 format as a compression artifact.

Useful noise

High levels of noise are almost always undesirable, but there are cases when a certain amount of noise is useful, for example to prevent discretization artifacts (color banding or posterization). Some noise also increases acutance (apparent sharpness). Noise purposely added for such purposes is called dither; it improves the image perceptually, though it degrades the signal-to-noise ratio.
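Both halves of that statement, better perceived quality but a worse signal-to-noise ratio, can be seen in a small sketch. The coarse step of 32, the uniform dither of one step in width, and the use of block averages as a crude stand-in for the eye's local averaging are all assumptions made for this illustration.

```python
import math
import random

def quantize(value, step):
    """Round `value` to the nearest multiple of `step`."""
    return step * round(value / step)

def block_means(values, size):
    """Average consecutive blocks of `size` samples."""
    return [sum(values[i:i + size]) / size for i in range(0, len(values), size)]

def rms(values):
    """Root-mean-square of a sequence."""
    return math.sqrt(sum(v * v for v in values) / len(values))

rng = random.Random(0)
step = 32                                     # deliberately coarse quantization
gradient = [i * 0.01 for i in range(25600)]   # smooth ramp from 0 to about 256

plain = [quantize(v, step) for v in gradient]
dithered = [quantize(v + rng.uniform(-step / 2, step / 2), step) for v in gradient]

# Per-pixel error: dither adds noise, so this gets worse.
rms_plain = rms([q - v for q, v in zip(plain, gradient)])
rms_dithered = rms([q - v for q, v in zip(dithered, gradient)])

# Local-average error: banding shows up here, and dither removes most of it.
true_means = block_means(gradient, 640)
band_plain = max(abs(a - b) for a, b in zip(block_means(plain, 640), true_means))
band_dithered = max(abs(a - b) for a, b in zip(block_means(dithered, 640), true_means))
print(rms_plain, rms_dithered, band_plain, band_dithered)
```

Without dither the ramp collapses into a staircase whose local averages sit far from the true gradient. With dither each pixel is noisier, but local averages track the ramp closely, so the bands disappear.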

Low and high-ISO noise examples

- Images of a flower taken at ISO 100 and ISO 1600 on a Canon 400D digital camera. Both images were shot under similar lighting conditions, varying only the ISO setting and shutter speed.

-

ISO 100, f/5.6, 1/350 s

(click on image for larger version) -

ISO 1600, f/5.6, 1/4000 s

(click on image for larger version) -

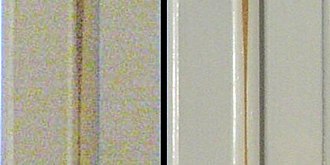

Comparison of both images. This is a crop of a small section of each image displayed at 100%. The top portion was shot at 100 ISO, the bottom portion at 1600 ISO.

Low and high-ISO technical examination

An image sensor in a digital camera contains a fixed number of pixels (which defines the advertised megapixel count of the camera). Each pixel has what is called a well depth.[26] The pixel well can be thought of as a bucket.[27]

The ISO setting on a digital camera is the first (and sometimes only) user-adjustable (analog) gain setting in the signal processing chain. It determines the amount of gain applied to the voltage output from the image sensor and has a direct effect on read noise. All signal processing units within a digital camera system have a noise floor. The ratio of the signal level to the noise floor (their difference when both are expressed in decibels) is called the signal-to-noise ratio. A higher signal-to-noise ratio equates to a better quality image.[28]

In bright sunny conditions, with a slow shutter speed, a wide-open aperture, or some combination of all three, enough photons can reach the image sensor to fill the pixel wells completely or nearly so. If the capacity of the pixel wells is exceeded, the image is overexposed. When the pixel wells are near capacity, the photons striking the image sensor free enough electrons to generate sufficient voltage at the image sensor output,[20] so no ISO gain (an ISO setting above the base setting of the camera) is needed. The signal level from the image sensor is then high enough that, after passing through the remaining signal processing electronics, it yields a high signal-to-noise ratio, that is, low noise and optimal exposure.

Conversely, in darker conditions, with faster shutter speeds, closed apertures, or some combination of all three, too few photons may reach the image sensor to generate a voltage that overcomes the noise floor of the signal chain, resulting in a low signal-to-noise ratio, or high noise (predominantly read noise). In these conditions, increasing ISO gain (higher ISO setting) will increase the image quality of the output image,[29] as the ISO gain will amplify the low voltage from the image sensor and generate a higher signal-to-noise ratio through the remaining signal processing electronics.

It can be seen that a higher ISO setting (applied correctly) does not, in and of itself, generate a higher noise level, and conversely, a higher ISO setting reduces read noise. The increase in noise often found when using a higher ISO setting is a result of the amplification of shot noise and a lower dynamic range as a result of technical limitations in current technology.
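The argument above can be made concrete with a toy noise model. Everything in this sketch is an assumption made for illustration: the split of read noise into a part added before the ISO amplifier (`upstream_read_e`) and a part added after it (`downstream_noise`), the numeric values, and the function name `snr`. It is not a description of any specific camera.

```python
import math

def snr(signal_e, gain, upstream_read_e=2.0, downstream_noise=10.0):
    """Signal-to-noise ratio at the output of a toy two-stage signal chain.

    signal_e         -- collected signal in electrons (shot noise is its square root)
    gain             -- analog ISO gain applied to signal, shot noise and upstream noise
    upstream_read_e  -- assumed read noise added before the amplifier, in electrons
    downstream_noise -- assumed noise added after the amplifier, in output units
    """
    shot = math.sqrt(signal_e)
    noise_out = math.sqrt((gain * shot) ** 2
                          + (gain * upstream_read_e) ** 2
                          + downstream_noise ** 2)
    return gain * signal_e / noise_out

# Dim scene: downstream noise dominates at low gain, so raising the gain helps.
dim_low_gain = snr(25, gain=1)
dim_high_gain = snr(25, gain=16)

# Bright scene: shot noise dominates, so the gain setting hardly matters.
bright_low_gain = snr(10000, gain=1)
bright_high_gain = snr(10000, gain=16)

print(dim_low_gain, dim_high_gain, bright_low_gain, bright_high_gain)
```

In this model raising the gain never lowers the signal-to-noise ratio for a fixed amount of light. It only makes the downstream noise matter less, up to the limit set by shot noise and upstream read noise. The model ignores clipping, which is where the loss of dynamic range mentioned above comes from.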

References

1. Stroebel, Leslie; Zakia, Richard D. (1995). The Focal encyclopedia of photography. Focal Press. p. 507. ISBN 978-0-240-51417-8.

2. Philippe Cattin (2012-04-24). "Image Restoration: Introduction to Signal and Image Processing". MIAC, University of Basel. Archived from the original on 2016-09-18. Retrieved 11 October 2013.

3. Jun Ohta (2008). Smart CMOS Image Sensors and Applications. CRC Press. ISBN 978-0-8493-3681-2.

4. Junichi Nakamura (2005). Image Sensors and Signal Processing for Digital Still Cameras. CRC Press. ISBN 0-8493-3545-0.

5. Lindsay MacDonald (2006). Digital Heritage. Butterworth-Heinemann. ISBN 0-7506-6183-6.

6. Mehdi Mafi, Harold Martin, Jean Andrian, Armando Barreto, Mercedes Cabrerizo, Malek Adjouadi, "A Comprehensive Survey on Impulse and Gaussian Denoising Filters for Digital Images," Signal Processing, vol. 157, pp. 236-260, 2019.

7. Rafael C. Gonzalez; Richard E. Woods (2007). Digital Image Processing. Pearson Prentice Hall. ISBN 978-0-13-168728-8.

8. Alan C. Bovik (2005). Handbook of Image and Video Processing. Academic Press. ISBN 0-12-119792-1.

9. Linda G. Shapiro; George C. Stockman (2001). Computer Vision. Prentice-Hall. ISBN 0-13-030796-3.

10. Boncelet, Charles (2005). "Image Noise Models". In Alan C. Bovik (ed.). Handbook of Image and Video Processing. Academic Press. ISBN 0-12-119792-1.

11. Mehdi Mafi, Hoda Rajaei, Mercedes Cabrerizo, Malek Adjouadi, "A Robust Edge Detection Approach in the Presence of High Impulse Intensity through Switching Adaptive Median and Fixed Weighted Mean Filtering," IEEE Transactions on Image Processing, vol. 27, issue 11, 2018, pp. 5475-5490.

12. Charles Boncelet (2005), Alan C. Bovik. Handbook of Image and Video Processing. Academic Press. ISBN 0-12-119792-1.

13. Janesick, James R. (2001). Scientific Charge-coupled Devices. SPIE Press. ISBN 0-8194-3698-4.

14. Michael A. Covington (2007). Digital SLR Astrophotography. Cambridge University Press. ISBN 978-0-521-70081-8.

15. R. E. Jacobson; S. F. Ray; G. G. Attridge; N. R. Axford (2000). The Manual of Photography. Focal Press. ISBN 0-240-51574-9.

16. Thomas S. Huang (1986). Advances in Computer Vision and Image Processing. JAI Press. ISBN 0-89232-460-0.

17. Brian W. Keelan; Robert E. Cookingham (2002). Handbook of Image Quality. CRC Press. ISBN 0-8247-0770-2.

18. Joseph G. Pellegrino; et al. (2006). "Infrared Camera Characterization". In Joseph D. Bronzino (ed.). Biomedical Engineering Fundamentals. CRC Press. ISBN 0-8493-2122-0.

19. McHugh, Sean. "Digital Cameras: Does Pixel Size Matter? Part 2: Example Images using Different Pixel Sizes (Does Sensor Size Matter?)". Retrieved 2010-06-03.

20. Martinec, Emil (2008-05-22). "Noise, Dynamic Range and Bit Depth in Digital SLRs (read noise)". Retrieved 2020-11-24.

21. Clark, R. N. (2008-12-22). "Digital Cameras: Does Pixel Size Matter? Part 2: Example Images using Different Pixel Sizes (Does Sensor Size Matter?)". Retrieved 2010-06-03.

22. Wrotniak, J. Andrzej (2009-02-26). "Four Thirds Sensor Size and Aspect Ratio". Retrieved 2010-06-03.

23. Singh, Akansha; Singh, K.K. (2012). Digital Image Processing. Umesh Publications. ISBN 978-93-80117-60-7.

24. Priyadarshini, Neha; Sarkar, Mukul (January 2021). "A 2e⁻ rms Temporal Noise CMOS Image Sensor With In-Pixel 1/f Noise Reduction and Conversion Gain Modulation for Low Light Imaging". IEEE Transactions on Circuits and Systems I: Regular Papers. 68 (1): 185–195. doi:10.1109/TCSI.2020.3034377. ISSN 1549-8328. S2CID 229251410.

25. Chervyakov, Nikolay; Lyakhov, Pavel; Nagornov, Nikolay (2020-02-11). "Analysis of the Quantization Noise in Discrete Wavelet Transform Filters for 3D Medical Imaging". Applied Sciences. 10 (4): 1223. doi:10.3390/app10041223. ISSN 2076-3417.

26. "Astrophotography, Pixel-by-Pixel: Part 1 - Well Depth, Pixel Size, and Quantum Efficiency". Cloudbreak Optics. Retrieved 2020-11-24.

27. Clark, Roger N. (2012-07-04). "Exposure and Digital Cameras, Part 1. What is ISO on a digital camera? When is a camera ISOless? ISO Myths and Digital Cameras". Retrieved 2020-11-24.

28. Martinec, Emil (2008-05-22). "Noise, Dynamic Range and Bit Depth in Digital SLRs (S/N ratio vs. exposure, and Dynamic Range)". Retrieved 2020-11-24.

29. Martinec, Emil (2008-05-22). "Noise, Dynamic Range and Bit Depth in Digital SLRs (S/N and Exposure Decisions)". Retrieved 2020-11-24.

External links

- Noise at dpreview glossary

- Compact Camera High ISO modes: Separating the facts from the hype

- Nikon D700 noise measurements

- Lectures on Image Processing, by Alan Peters. Vanderbilt University. Updated 7 January 2016.