Overclocking

In computing, overclocking is the practice of increasing the clock rate of a computer beyond that certified by the manufacturer. Commonly, operating voltage is also increased to maintain a component's operational stability at accelerated speeds. Operating semiconductor devices at higher frequencies and voltages increases power consumption and heat.[1] An overclocked device may be unreliable or fail completely if the additional heat load is not removed or if power delivery components cannot meet the increased power demands. Many device warranties state that overclocking or over-specification[2] voids the warranty, but some manufacturers allow overclocking as long as it is done (relatively) safely.[citation needed]

Overview

The purpose of overclocking is to increase the operating speed of a given component. On modern systems, overclocking usually targets a major chip or subsystem, such as the main processor or graphics controller, but other components, such as system memory (RAM) or system buses (generally on the motherboard), are commonly involved. The trade-offs are increased power consumption (heat), more fan noise (cooling), and a shortened lifespan for the targeted components. Most components are designed with a margin of safety to deal with operating conditions outside of a manufacturer's control, such as ambient temperature and fluctuations in operating voltage. Overclocking techniques in general aim to trade away this safety margin by setting the device to run at the higher end of the margin, with the understanding that the user must monitor and control temperature and voltage more strictly. For example, operating temperature needs tighter control through increased cooling, as the part is less tolerant of high temperatures at the higher speeds. The base operating voltage may also be increased to compensate for unexpected voltage drops and to strengthen signalling and timing signals, as low-voltage excursions are more likely to cause malfunctions at higher operating speeds.

While most modern devices are fairly tolerant of overclocking, all devices have finite limits. Generally, for any given voltage, most parts have a maximum "stable" speed at which they still operate correctly. Past this speed, the device starts giving incorrect results, which can cause malfunctions and sporadic behavior in any system depending on it. While in a PC context the usual result is a system crash, more subtle errors can go undetected and, over a long enough time, cause unpleasant surprises such as data corruption (incorrectly calculated results or, worse, incorrect writes to storage) or a system that fails only during certain specific tasks (for example, general usage such as web browsing and word processing appears fine, but any application that needs advanced graphics crashes the system). There is also a risk of damage to the hardware itself.

At this point, increasing a part's operating voltage may allow more headroom for further increases in clock speed, but the increased voltage can also significantly increase heat output and shorten the lifespan further. Eventually, a limit is imposed by the ability to supply the device with sufficient power, the user's ability to cool the part, and the device's own maximum voltage tolerance before it fails destructively. Overzealous use of voltage or inadequate cooling can rapidly degrade a device's performance to the point of failure, or in extreme cases destroy it outright.

The speed gained by overclocking depends largely on the applications and workloads run on the system and on which components the user overclocks; benchmarks for different purposes are published.

Underclocking

Conversely, the primary goal of underclocking is to reduce power consumption and the resulting heat generation of a device, with the trade-offs being lower clock speeds and reduced performance. Reducing the cooling needed to keep hardware at a given operating temperature has knock-on benefits, such as allowing fewer and slower fans for quieter operation and, in mobile devices, longer battery life per charge. Some manufacturers underclock components of battery-powered equipment to improve battery life, or implement systems that detect when a device is running on battery power and reduce its clock frequency.

Underclocking and undervolting may be attempted on a desktop system to make it operate silently (such as for a home entertainment center) while potentially offering higher performance than the low-voltage processors currently available. This approach uses a "standard-voltage" part and attempts to run it at lower voltages (while trying to keep desktop speeds) to meet an acceptable performance/noise target for the build. It was also attractive because using a "standard-voltage" processor in a "low-voltage" application avoided paying the traditional price premium for an officially certified low-voltage version. However, as with overclocking, there is no guarantee of success, and the builder's time spent researching given system/processor combinations, and especially the time and tedium of performing many iterations of stability testing, need to be considered. The usefulness of underclocking (again, like overclocking) is determined by the processor offerings, prices, and availability at the specific time of the build. Underclocking is also sometimes used when troubleshooting.

Enthusiast culture

Overclocking has become more accessible as motherboard makers offer it as a marketing feature on their mainstream product lines. However, the practice is embraced more by enthusiasts than by professional users, as overclocking carries a risk of reduced reliability and accuracy and of damage to data and equipment. Additionally, most manufacturer warranties and service agreements cover neither overclocked components nor any incidental damage caused by their use. While overclocking can still be an option for increasing personal computing capacity, and thus workflow productivity, for professional users, the importance of thoroughly stability-testing components before deploying them in a production environment cannot be overstated.

Overclocking offers several draws for enthusiasts. It allows testing of components at speeds not currently offered by the manufacturer, or at speeds officially offered only on specialized, higher-priced versions of the product. A general trend in the computing industry is that new technologies debut in the high-end market first, then later trickle down to the performance and mainstream markets. If the high-end part differs only by an increased clock speed, an enthusiast can attempt to overclock a mainstream part to simulate the high-end offering. This can give insight into how upcoming technologies will perform before they are officially available on the mainstream market, which can be especially helpful for other users deciding whether to plan to purchase or upgrade to the new feature when it is officially released.

Some hobbyists enjoy building, tuning, and "hot-rodding" their systems for benchmarking competitions, competing with other like-minded users for high scores in standardized computer benchmark suites. Others purchase a low-cost model of a component in a given product line and attempt to overclock it to match a more expensive model's stock performance. Another approach is overclocking older components to keep pace with increasing system requirements and extend the useful service life of the older part, or at least delay purchasing new hardware solely for performance reasons. Another rationale for overclocking older equipment is that even if overclocking stresses it to the point of failure earlier, little is lost, as it is already depreciated and would have needed to be replaced in any case.[3]

Components

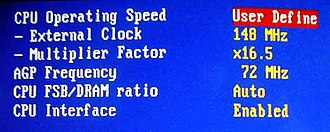

Technically, any component that uses a timer (or clock) to synchronize its internal operations can be overclocked. Most efforts for computer components, however, focus on specific components, such as processors (CPUs), video cards, motherboard chipsets, and RAM. Most modern processors derive their effective operating speed by multiplying a base clock (processor bus speed) by an internal multiplier within the processor (the CPU multiplier).

Computer processors are generally overclocked by manipulating the CPU multiplier, if that option is available, but the processor and other components can also be overclocked by increasing the base speed of the bus clock. Some systems allow additional tuning of other clocks (such as a system clock) that influence the bus clock speed, which is in turn multiplied by the processor, allowing finer adjustment of the final processor speed.
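The clock arithmetic described above can be sketched in a few lines of Python. This is a minimal illustration, assuming round-number base clocks and an integer multiplier range chosen purely for the example; real boards expose different ranges and step sizes, and the function names are hypothetical.

```python
from typing import Iterable, List, Tuple


def effective_clock_mhz(base_clock_mhz: float, multiplier: float) -> float:
    """Effective core clock = base (bus) clock x CPU multiplier."""
    return base_clock_mhz * multiplier


def settings_near_target(
    target_mhz: float,
    base_clocks: Iterable[float],
    multipliers: Iterable[float],
    tolerance_mhz: float = 25.0,
) -> List[Tuple[float, float, float]]:
    """List (base, multiplier, effective) combinations within tolerance of a target.

    The candidate base clocks and multipliers are hypothetical inputs.
    """
    multipliers = list(multipliers)  # allow re-iteration for each base clock
    matches = []
    for base in base_clocks:
        for mult in multipliers:
            freq = effective_clock_mhz(base, mult)
            if abs(freq - target_mhz) <= tolerance_mhz:
                matches.append((base, mult, freq))
    return matches


if __name__ == "__main__":
    print("stock:", effective_clock_mhz(200, 9), "MHz")  # 200 MHz bus x 9
    for base, mult, freq in settings_near_target(2000, [200, 210, 222, 250], range(6, 13)):
        print(f"{base} MHz x {mult} = {freq:.0f} MHz")
```

Run as a script, it prints the stock 1800 MHz figure and three ways to reach about 2000 MHz: raising the multiplier alone (200 × 10), raising the bus clock alone (222 × 9), or trading a lower multiplier for a higher bus clock (250 × 8). The last two also speed up anything else derived from the bus clock.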

Most OEM systems do not expose the adjustments needed to change processor clock speed or voltage in the BIOS of the OEM's motherboard (for warranty and support reasons), which precludes overclocking. The same processor installed on a different motherboard that offers these adjustments allows the user to change them.

Any given component will ultimately stop operating reliably past a certain clock speed. Components will generally show some sort of malfunctioning behavior or other sign of compromised stability that alerts the user that a given speed is not stable, but there is always a possibility that a component will permanently fail without warning, even if voltages are kept within some predetermined safe values. The maximum speed is found by raising the clock until the first instability appears, then settling on the last stable, slower setting. Components are only guaranteed to operate correctly up to their rated values; beyond that, different samples may have different overclocking potential. The end point of a given overclock is determined by parameters such as the available CPU multipliers, bus dividers, and voltages; the user's ability to manage thermal loads and the cooling techniques used; and several factors of the individual device itself, such as semiconductor clock and thermal tolerances and interaction with other components and the rest of the system.
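The search for a maximum stable setting described above can be expressed as a simple loop. In this sketch, `is_stable` is a hypothetical hook standing in for a real stress-test run at a given frequency; the start value, step size, and ceiling are illustrative assumptions.

```python
from typing import Callable, Optional


def find_max_stable(
    start_mhz: int,
    step_mhz: int,
    limit_mhz: int,
    is_stable: Callable[[int], bool],
) -> Optional[int]:
    """Raise the clock in fixed steps until the first failing setting,
    then return the last setting that passed (None if even the start fails).

    `is_stable` is a hypothetical stand-in for running a stress test at
    that frequency; it is not the API of any real tool.
    """
    last_stable = None
    freq = start_mhz
    while freq <= limit_mhz:
        if not is_stable(freq):
            break  # first instability: stop and fall back to last_stable
        last_stable = freq
        freq += step_mhz
    return last_stable


if __name__ == "__main__":
    # Simulated part that happens to be stable up to 4350 MHz.
    simulated_part = lambda mhz: mhz <= 4350
    print(find_max_stable(4000, 100, 5000, simulated_part))
```

A passing result from `is_stable` only means no fault was observed in that run; as discussed in the section on stability and functional correctness, it does not prove the part is correct at that speed.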

Considerations

There are several things to consider when overclocking. The first is to ensure that the component is supplied with adequate power at a voltage sufficient to operate at the new clock rate. Supplying power with improper settings or applying excessive voltage can permanently damage a component.

In a professional production environment, overclocking is only likely to be used where the increase in speed justifies the cost of the expert support required, the possibly reduced reliability, the consequent effect on maintenance contracts and warranties, and the higher power consumption. If more speed is required, buying faster hardware is often cheaper once all costs are considered.

Cooling

All electronic circuits produce heat generated by the movement of electric current. As clock frequencies in digital circuits and applied voltage increase, the heat generated by components running at these higher performance levels also increases. The relationship between clock frequency and thermal design power (TDP) is roughly linear. However, there is a limit to the maximum frequency, called a "wall". To overcome it, overclockers raise the chip voltage to increase overclocking potential. Raising the voltage increases power consumption, and consequently heat generation, significantly (in proportion to the square of the voltage in a linear circuit, for example); this requires more cooling to avoid damaging the hardware by overheating. In addition, some digital circuits slow down at high temperatures due to changes in MOSFET device characteristics. Conversely, the overclocker may decide to decrease the chip voltage while overclocking (a process known as undervolting) to reduce heat output while maintaining performance.
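The voltage and frequency dependence described above is often summarized by the standard CMOS dynamic-power approximation P ≈ C·V²·f, where C is the switched capacitance, V the supply voltage, and f the clock frequency. The sketch below assumes that model and ignores leakage (static) power, so it is only a rough guide; the clocks and voltages are hypothetical.

```python
def relative_dynamic_power(f_new: float, v_new: float, f_old: float, v_old: float) -> float:
    """Ratio of new to old dynamic power under P ~ C * V^2 * f.

    The switched capacitance C cancels out, so only the frequency and
    voltage ratios matter. Static (leakage) power is ignored.
    """
    return (f_new / f_old) * (v_new / v_old) ** 2


if __name__ == "__main__":
    # Hypothetical stock point: 4.0 GHz at 1.20 V.
    overclock = relative_dynamic_power(4.4, 1.32, 4.0, 1.20)  # +10% clock, +10% voltage
    undervolted = relative_dynamic_power(4.4, 1.25, 4.0, 1.20)  # same clock, less voltage
    print(f"4.4 GHz @ 1.32 V: x{overclock:.3f} dynamic power")
    print(f"4.4 GHz @ 1.25 V: x{undervolted:.3f} dynamic power")
```

With these hypothetical numbers, a 10% clock increase that also needs 10% more voltage raises dynamic power by about 33% (1.1 × 1.1²), while reaching the same clock at 1.25 V raises it by only about 19%, which illustrates why undervolting is attractive.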

Stock cooling systems are designed for the amount of power produced during non-overclocked use; overclocked circuits can require more cooling, such as by powerful fans, larger heat sinks, heat pipes and water cooling. Mass, shape, and material all influence the ability of a heatsink to dissipate heat. Efficient heatsinks are often made entirely of copper, which has high thermal conductivity, but is expensive.[4] Aluminium is more widely used; it has good thermal characteristics, though not as good as copper, and is significantly cheaper. Cheaper materials such as steel do not have good thermal characteristics. Heat pipes can be used to improve conductivity. Many heatsinks combine two or more materials to achieve a balance between performance and cost.[4]

Water cooling carries waste heat to a radiator. Thermoelectric cooling devices which actually refrigerate using the Peltier effect can help with high thermal design power (TDP) processors made by Intel and AMD in the early twenty-first century. Thermoelectric cooling devices create temperature differences between two plates by running an electric current through the plates. This method of cooling is highly effective, but itself generates significant heat elsewhere which must be carried away, often by a convection-based heatsink or a water cooling system.

Other cooling methods are forced convection and phase transition cooling, which is used in refrigerators and can be adapted for computer use. Liquid nitrogen, liquid helium, and dry ice are used as coolants in extreme cases,[5] such as record-setting attempts or one-off experiments rather than cooling an everyday system. In June 2006, IBM and the Georgia Institute of Technology jointly announced a new record in silicon-based chip clock rate (the rate a transistor can be switched at, not the CPU clock rate[6]) above 500 GHz, which was done by cooling the chip to 4.5 K (−268.6 °C; −451.6 °F) using liquid helium.[7] As of December 2022, the CPU frequency world record is 9008.82 MHz, set with an Intel Core i9-13900K.[8] These extreme methods are generally impractical in the long term, as they require refilling reservoirs of vaporizing coolant, and condensation can form on chilled components.[5] Moreover, silicon-based junction gate field-effect transistors (JFET) degrade below temperatures of roughly 100 K (−173 °C; −280 °F) and eventually cease to function or "freeze out" at 40 K (−233 °C; −388 °F) since the silicon ceases to be semiconducting,[9] so using extremely cold coolants may cause devices to fail. A blowtorch is sometimes used to temporarily raise the temperature when over-cooling becomes a problem.[10][11]

Submersion cooling, used by the Cray-2 supercomputer, involves submerging part of a computer system directly in a chilled liquid that is thermally conductive but has low electrical conductivity. The advantage of this technique is that no condensation can form on components.[12] A good submersion liquid is Fluorinert, made by 3M, which is expensive. Another option is mineral oil, but impurities such as water might cause it to conduct electricity.[12]

Amateur overclocking enthusiasts have used a mixture of dry ice and a solvent with a low freezing point, such as acetone or isopropyl alcohol.[13] This cooling bath, often used in laboratories, achieves a temperature of −78 °C (−108 °F).[14] However, this practice is discouraged due to its safety risks; the solvents are flammable and volatile, and dry ice can cause frostbite (through contact with exposed skin) and suffocation (due to the large volume of carbon dioxide generated when it sublimes).

Stability and functional correctness

As an overclocked component operates outside of the manufacturer's recommended operating conditions, it may function incorrectly, leading to system instability. Another risk is silent data corruption by undetected errors. Such failures might never be correctly diagnosed and may instead be incorrectly attributed to software bugs in applications, device drivers, or the operating system. Overclocked use may permanently damage components enough to cause them to misbehave (even under normal operating conditions) without becoming totally unusable.

A large-scale 2011 field study of hardware faults causing system crashes in consumer PCs and laptops showed a 4- to 20-fold increase (depending on CPU manufacturer) in crashes due to CPU failure for overclocked computers over an eight-month period.[15]

In general, overclockers claim that testing can ensure that an overclocked system is stable and functioning correctly. Although software tools are available for testing hardware stability, it is generally impossible for any private individual to thoroughly test the functionality of a processor.[16] Achieving good fault coverage requires immense engineering effort; even with all of the resources dedicated to validation by manufacturers, faulty components and even design faults are not always detected.

A particular "stress test" can verify only the functionality of the specific instruction sequence used in combination with the data and may not detect faults in those operations. For example, an arithmetic operation may produce the correct result but incorrect flags; if the flags are not checked, the error will go undetected.
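The flags example can be made concrete with a toy software model. In the sketch below, `faulty_add8` is hypothetical: it represents an adder that produces the correct 8-bit sum but never sets the carry flag. A test that compares only the sum passes, while a test that also compares the flags catches every one of the 32,640 operand pairs that overflow.

```python
from typing import Callable, Tuple

# (8-bit sum, carry flag, zero flag)
AddResult = Tuple[int, bool, bool]


def reference_add8(a: int, b: int) -> AddResult:
    """Correct 8-bit addition: wrapped sum plus carry and zero flags."""
    total = a + b
    result = total & 0xFF
    return result, total > 0xFF, result == 0


def faulty_add8(a: int, b: int) -> AddResult:
    """Hypothetical faulty unit: the sum is right, but carry is never set."""
    result, _carry, zero = reference_add8(a, b)
    return result, False, zero


def count_mismatches(unit: Callable[[int, int], AddResult], check_flags: bool) -> int:
    """Compare `unit` with the reference over every pair of 8-bit operands."""
    mismatches = 0
    for a in range(256):
        for b in range(256):
            got, want = unit(a, b), reference_add8(a, b)
            ok = got == want if check_flags else got[0] == want[0]
            if not ok:
                mismatches += 1
    return mismatches


if __name__ == "__main__":
    print("sum only:   ", count_mismatches(faulty_add8, check_flags=False))
    print("sum + flags:", count_mismatches(faulty_add8, check_flags=True))
```

Exhaustive checking is practical here only because there are 65,536 operand pairs; for 64-bit operands there would be 2^128, which is one reason thorough fault coverage is out of reach for individual testers.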

To further complicate matters, in process technologies such as silicon on insulator (SOI), devices display hysteresis—a circuit's performance is affected by the events of the past, so without carefully targeted tests it is possible for a particular sequence of state changes to work at overclocked rates in one situation but not another even if the voltage and temperature are the same. Often, an overclocked system which passes stress tests experiences instabilities in other programs.[17]

In overclocking circles, "stress tests" or "torture tests" are used to check for correct operation of a component. These workloads are selected as they put a very high load on the component of interest (e.g. a graphically intensive application for testing video cards, or different math-intensive applications for testing general CPUs). Popular stress tests include Prime95, Everest, Superpi, OCCT, AIDA64, Linpack (via the LinX and IntelBurnTest GUIs), SiSoftware Sandra, BOINC, Intel Thermal Analysis Tool and Memtest86. The hope is that any functional-correctness issues with the overclocked component will manifest themselves during these tests, and if no errors are detected during the test, then the component is deemed "stable". Since fault coverage is important in stability testing, the tests are often run for long periods of time, hours or even days. An overclocked computer is sometimes described using the number of hours and the stability program used, such as "prime 12 hours stable".
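A stress test of this kind can be sketched as a heavy, deterministic computation whose correct answers are known in advance, repeated for a fixed time and checked on every pass. The example below uses the Lucas-Lehmer test for Mersenne numbers, in loose reference to Prime95's origins in Mersenne-prime searching; it is a toy, not a reimplementation of Prime95 or any other named tool, and a pure-Python loop loads the CPU far less than those optimized programs do.

```python
import time
from typing import Tuple

# Odd prime exponents p < 130 for which 2**p - 1 is a known Mersenne prime.
KNOWN_MERSENNE_EXPONENTS = {3, 5, 7, 13, 17, 19, 31, 61, 89, 107, 127}

# Odd primes below 130 by trial division (even p >= 4 are rejected by d = 2).
ODD_PRIMES = [p for p in range(3, 130)
              if all(p % d for d in range(2, int(p ** 0.5) + 1))]


def lucas_lehmer(p: int) -> bool:
    """Lucas-Lehmer test: True iff 2**p - 1 is prime (p must be an odd prime)."""
    m = (1 << p) - 1
    s = 4
    for _ in range(p - 2):
        s = (s * s - 2) % m
    return s == 0


def stress_pass() -> int:
    """One pass over all exponents; returns the number of wrong answers."""
    return sum(lucas_lehmer(p) != (p in KNOWN_MERSENNE_EXPONENTS) for p in ODD_PRIMES)


def run_stress(duration_s: float) -> Tuple[int, int]:
    """Repeat passes until the time budget is used up; return (passes, errors)."""
    passes = errors = 0
    deadline = time.monotonic() + duration_s
    while time.monotonic() < deadline:
        errors += stress_pass()
        passes += 1
    return passes, errors


if __name__ == "__main__":
    passes, errors = run_stress(2.0)
    verdict = "no errors observed" if errors == 0 else "UNSTABLE"
    print(f"{passes} passes, {errors} errors: {verdict}")
```

For example, `run_stress(12 * 3600)` would mirror the "12 hours stable" convention; a nonzero error count indicates instability, while zero errors is only evidence of stability.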

Factors allowing overclocking

Overclockability arises in part from the economics of the manufacturing processes of CPUs and other components. In many cases, components are manufactured by the same process and tested after manufacture to determine their actual maximum ratings. Components are then marked with a rating chosen according to the semiconductor manufacturer's market needs. If manufacturing yield is high, more higher-rated components than required may be produced, and the manufacturer may mark and sell higher-performing components as lower-rated for marketing reasons. In some cases, the true maximum rating of a component may exceed even that of the highest-rated component sold. Many devices sold with a lower rating may behave in all ways like higher-rated ones, while in the worst case, operation at the higher rating may be more problematic.
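The binning economics above can be illustrated with a small simulation. The strategy in this sketch is a hypothetical simplification, not any manufacturer's actual process: each tested die is sold at the highest rating it passed, unless demand for that rating is already met, in which case it is down-binned to the next rating that still has demand.

```python
from typing import Dict, List


def bin_parts(tested_max_mhz: List[int], demand: Dict[int, int]) -> Dict[int, List[int]]:
    """Assign each tested die (its measured maximum, in MHz) to a sale rating.

    Ratings and per-rating demand are hypothetical inputs. Dies that fit no
    rating with remaining demand are left unsold.
    """
    ratings = sorted(demand, reverse=True)
    remaining = dict(demand)
    sold: Dict[int, List[int]] = {r: [] for r in ratings}
    for die in sorted(tested_max_mhz, reverse=True):
        for rating in ratings:
            if die >= rating and remaining[rating] > 0:
                sold[rating].append(die)
                remaining[rating] -= 1
                break  # die assigned; move on to the next one
    return sold


if __name__ == "__main__":
    dies = [4700, 4650, 4600, 4550, 4500, 4200]
    # High yield, but the market mostly wants the cheaper 4000 MHz part.
    for rating, parts in bin_parts(dies, {4500: 2, 4000: 4}).items():
        print(rating, parts, "headroom:", [die - rating for die in parts])
```

With the sample inputs, four dies are sold at the 4000 MHz rating even though one of them was tested at 4600 MHz, leaving headroom of 200 to 600 MHz that an overclocker might later find.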

Higher clocks always mean greater waste heat, because circuit nodes are charged high and then discharged to ground more often. In some cases, the chief drawback of an overclocked part is that it dissipates far more heat than the maximum published by the manufacturer. Pentium architect Bob Colwell calls overclocking an "uncontrolled experiment in better-than-worst-case system operation".[18]

Measuring effects of overclocking

Benchmarks are used to evaluate performance, and they can become a kind of "sport" in which users compete for the highest scores. As discussed above, stability and functional correctness may be compromised when overclocking, and meaningful benchmark results depend on the correct execution of the benchmark. Because of this, benchmark scores may be qualified with stability and correctness notes (e.g. an overclocker may report a score, noting that the benchmark only runs to completion 1 in 5 times, or that signs of incorrect execution such as display corruption are visible while running the benchmark). A widely used test of stability is Prime95, which has built-in error checking that fails if the computer is unstable.

Using only the benchmark scores, it may be difficult to judge the difference overclocking makes to the overall performance of a computer. For example, some benchmarks test only one aspect of the system, such as memory bandwidth, without taking into consideration how higher clock rates in this aspect will improve the system performance as a whole. Apart from demanding applications such as video encoding, high-demand databases and scientific computing, memory bandwidth is typically not a bottleneck, so a great increase in memory bandwidth may be unnoticeable to a user depending on the applications used. Other benchmarks, such as 3DMark, attempt to replicate game conditions.

Manufacturer and vendor overclocking

Overclocking is sometimes offered as a legitimate service or feature for consumers, in which a manufacturer or retailer tests the overclocking capability of processors, memory, video cards, and other hardware products. Several video card manufacturers now offer factory-overclocked versions of their graphics accelerators, complete with a warranty, usually at a price between that of the standard product and that of a non-overclocked product of higher performance.

It is speculated that manufacturers implement overclocking prevention mechanisms such as CPU multiplier locking to prevent users from buying lower-priced items and overclocking them. These measures are sometimes marketed as a consumer protection benefit, but are often criticized by buyers.

Many motherboards are sold, and advertised, with extensive facilities for overclocking implemented in hardware and controlled by BIOS settings.[19]

CPU multiplier locking

CPU multiplier locking is the process of permanently setting a CPU's clock multiplier. AMD CPUs are unlocked in early editions of a model and locked in later editions, but nearly all Intel CPUs are locked, and recent[when?] models are very resistant to unlocking, to prevent overclocking by users. AMD ships unlocked CPUs in its Opteron, FX, and Black Series lines and in all Ryzen desktop chips (except 3D variants), while Intel uses the monikers "Extreme Edition" and "K-Series". Intel usually has one or two Extreme Edition CPUs on the market, as well as X-series and K-series CPUs analogous to AMD's Black Edition. AMD offers most of its desktop range in a Black Edition.

Users usually unlock CPUs to allow overclocking, but sometimes to allow underclocking in order to maintain front-side bus speed compatibility with certain motherboards (on older CPUs). Unlocking generally invalidates the manufacturer's warranty, and mistakes can cripple or destroy a CPU. Locking a chip's clock multiplier does not necessarily prevent users from overclocking, as the speed of the front-side bus or PCI multiplier (on newer CPUs) may still be changed to provide a performance increase. AMD Athlon and Athlon XP CPUs are generally unlocked by connecting bridges (jumper-like points) on the top of the CPU with conductive paint or pencil lead. Other CPU models may require different procedures.

Increasing front-side bus or northbridge/PCI clocks can overclock locked CPUs, but this throws many system frequencies out of sync, since the RAM and PCI frequencies are modified as well.
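The side effect described above follows from every clock being derived from the same reference. The sketch below models a hypothetical clock tree with a fixed CPU multiplier, memory ratio, and PCI divider; the 133 MHz bus and the ratios are illustrative assumptions, not values from any specific board.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ClockConfig:
    """Hypothetical clock tree: every clock is derived from one bus clock."""
    bus_mhz: float
    cpu_multiplier: float  # CPU = bus * multiplier (may be locked)
    memory_ratio: float    # RAM = bus * ratio
    pci_divider: float     # PCI = bus / divider

    def derived(self) -> Dict[str, float]:
        return {
            "cpu": self.bus_mhz * self.cpu_multiplier,
            "ram": self.bus_mhz * self.memory_ratio,
            "pci": self.bus_mhz / self.pci_divider,
        }


def overclock_bus(cfg: ClockConfig, percent: float) -> ClockConfig:
    """Raise only the bus clock; the ratios stay fixed, so every derived clock moves."""
    return ClockConfig(cfg.bus_mhz * (1 + percent / 100), cfg.cpu_multiplier,
                       cfg.memory_ratio, cfg.pci_divider)


if __name__ == "__main__":
    stock = ClockConfig(bus_mhz=133.0, cpu_multiplier=15, memory_ratio=1.0, pci_divider=4)
    for name, cfg in (("stock", stock), ("+10% bus", overclock_bus(stock, 10))):
        print(name, {k: round(v, 1) for k, v in cfg.derived().items()})
```

Raising only the bus clock by 10% moves the CPU, RAM, and PCI clocks up by the same 10%, which is why the RAM and PCI buses can end up outside their rated speeds unless their own ratios or dividers are also adjusted.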

One of the easiest ways to unlock older AMD Athlon XP CPUs was the pin mod method, so called because it unlocked the CPU without permanently modifying the bridges: a user could simply insert one wire (or more, for a new multiplier or Vcore) into the socket. More recently, Intel's Skylake (6th-generation Core) processors had a bug that allowed the base clock to be raised past 102.7 MHz, although certain features would then stop working. When designing the Skylake architecture, Intel intended to block base clock (BCLK) overclocking of locked processors, to prevent consumers from purchasing cheaper components and overclocking them to previously unseen heights (since the CPU's BCLK was no longer tied to the PCI buses). However, on LGA1151, the 6th-generation "Skylake" processors could be overclocked past 102.7 MHz, the limit Intel intended, which Intel later enforced through BIOS updates.[original research?] All other unlocked processors for LGA1151 and its v2 revision (including 7th, 8th, and 9th generation) and BGA1440 allow BCLK overclocking (as long as the OEM allows it), while all other locked 7th-, 8th-, and 9th-generation processors cannot go past 102.7 MHz. 10th-generation processors, however, can reach 103 MHz[20] on the BCLK.

Advantages

- Higher performance in games, encoding/decoding, video editing, and system tasks at no additional direct monetary expense, but with increased electrical consumption and thermal output.

- System optimization: Some systems have "bottlenecks", where a small overclock of one component can help realize the full potential of another component to a greater extent than overclocking the limiting hardware itself. For instance, many motherboards with AMD Athlon 64 processors limit the clock rate of four RAM modules to 333 MHz. However, the memory clock rate is computed by dividing the processor clock rate (which is a base clock times a CPU multiplier; for instance, 1.8 GHz is most likely 9×200 MHz) by a fixed integer such that, at a stock clock rate, the RAM would run at a clock rate near 333 MHz. By manipulating how the processor clock rate is set (usually by adjusting the multiplier), it is often possible to overclock the processor by a small amount, around 5–10%, and gain a small increase in RAM clock rate and/or a reduction in RAM latency timings.

- It can be cheaper to purchase a lower performance component and overclock it to the clock rate of a more expensive component.

- Extending the practical service life of old or obsolete equipment.
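The Athlon 64 example in the list above can be sketched with a simplified divider model. The assumption here, which is not a datasheet algorithm, is that the integer divider is the smallest one keeping the RAM at or below 333 MHz with the base clock at its nominal 200 MHz, so the divider depends on the multiplier only; the function name is hypothetical.

```python
import math
from typing import Tuple


def athlon64_style_memory_clock(multiplier: int, base_mhz: float,
                                ram_limit_mhz: float = 333.0,
                                nominal_base_mhz: float = 200.0) -> Tuple[float, int, float]:
    """Return (cpu_mhz, divider, ram_mhz) under a simplified divider model.

    Assumption: divider = ceil(multiplier * nominal base / RAM limit), chosen
    once from the multiplier; the RAM clock is then CPU clock / divider.
    """
    divider = math.ceil(multiplier * nominal_base_mhz / ram_limit_mhz)
    cpu_mhz = multiplier * base_mhz
    return cpu_mhz, divider, cpu_mhz / divider


if __name__ == "__main__":
    for mult, base in ((9, 200), (9, 220), (10, 200)):
        cpu, div, ram = athlon64_style_memory_clock(mult, base)
        print(f"{mult} x {base} MHz = {cpu} MHz CPU, divider {div}, RAM {ram:.1f} MHz")
```

At stock (9 × 200 MHz) the divider is 6 and the RAM runs at 300 MHz. Raising the base clock to 220 MHz (a 10% CPU overclock) keeps the divider at 6 and lifts the RAM to 330 MHz, whereas raising the multiplier to 10 forces a divider of 7 and drops the RAM to about 286 MHz under this model.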

Disadvantages

General

- Higher clock rates and voltages increase power consumption, which also increases electricity cost and heat production. The additional heat raises the air temperature within the system case, which may affect other components. The hot air blown out of the case heats the room.

- Fan noise: High-performance fans running at the maximum speed needed to cool an overclocked machine can be noisy, some producing 50 dB or more. When maximum cooling is not required, in any equipment, fan speeds can be reduced below the maximum: fan noise has been found to be roughly proportional to the fifth power of fan speed, so halving the speed reduces noise by about 15 dB.[21] Fan noise can be reduced by design improvements, such as aerodynamically optimized blades for smoother airflow, which reduce noise to around 20 dB at approximately 1 metre,[citation needed] or larger fans rotating more slowly, which produce less noise than smaller, faster fans with the same airflow. Acoustic insulation inside the case, such as acoustic foam, can reduce noise. Cooling methods that do not use fans, such as liquid and phase-change cooling, can also be used.

- An overclocked computer may become unreliable. For example, Microsoft Windows may appear to work with no problems, but when it is reinstalled or upgraded, error messages such as a "file copy error" may appear during Windows Setup.[22] Because installing Windows is very memory-intensive, decoding errors may occur when files are extracted from the Windows XP CD-ROM.

- The lifespan of semiconductor components may be reduced by increased voltages and heat.

- Warranties may be voided by overclocking.
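The fifth-power rule of thumb for fan noise in the list above converts to decibels as ΔL = 10·log10(r⁵) = 50·log10(r) for a speed ratio r. A minimal sketch of that arithmetic, assuming the rule holds over the speed range of interest:

```python
import math


def fan_noise_change_db(speed_ratio: float, exponent: float = 5.0) -> float:
    """Change in sound level (dB) when fan speed is scaled by `speed_ratio`.

    Assumes radiated sound power scales with speed ** exponent (the
    fifth-power rule of thumb), so the level changes by
    10 * log10(speed_ratio ** exponent) = 10 * exponent * log10(speed_ratio).
    """
    if speed_ratio <= 0:
        raise ValueError("speed_ratio must be positive")
    return 10.0 * exponent * math.log10(speed_ratio)


if __name__ == "__main__":
    for ratio in (1.0, 0.8, 0.5):
        print(f"speed x{ratio}: {fan_noise_change_db(ratio):+.1f} dB")
```

Halving the speed gives 50·log10(0.5) ≈ −15.05 dB, matching the roughly 15 dB figure above, and running at 80% speed gives about −4.8 dB.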

Risks of overclocking

- Increasing the operation frequency of a component will usually increase its thermal output in a linear fashion, while an increase in voltage usually causes thermal power to increase quadratically.[23] Excessive voltages or improper cooling may cause chip temperatures to rise to dangerous levels, causing the chip to be damaged or destroyed.

- Exotic cooling methods used to facilitate overclocking such as water cooling are more likely to cause damage if they malfunction. Sub-ambient cooling methods such as phase-change cooling or liquid nitrogen will cause water condensation, which will cause electrical damage unless controlled; some methods include using kneaded erasers or shop towels to catch the condensation.

Limitations

Overclocking a component can only be of noticeable benefit if the component is on the critical path for a process, that is, if it is a bottleneck. If disk access or the speed of an Internet connection limits the speed of a process, a 20% increase in processor speed is unlikely to be noticed, although in some scenarios increasing the processor's clock speed does allow an SSD to be read from and written to faster. Overclocking a CPU will not noticeably benefit a game when the graphics card's performance is the game's bottleneck.
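The bottleneck argument can be quantified with Amdahl's law, a standard model that the text above does not name. The sketch assumes that the CPU-bound share of a task speeds up in proportion to the clock increase, which is itself optimistic, and that the rest of the task is unaffected.

```python
def overall_speedup(cpu_bound_fraction: float, cpu_speedup: float) -> float:
    """Amdahl's law: whole-task speedup when only the CPU-bound part gets faster.

    cpu_bound_fraction: share of the original run time limited by the CPU (0..1).
    cpu_speedup: how much faster that part runs, e.g. 1.2 for a 20% overclock.
    """
    if not 0.0 <= cpu_bound_fraction <= 1.0:
        raise ValueError("cpu_bound_fraction must be between 0 and 1")
    return 1.0 / ((1.0 - cpu_bound_fraction) + cpu_bound_fraction / cpu_speedup)


if __name__ == "__main__":
    for fraction in (0.1, 0.5, 0.9, 1.0):
        print(f"{fraction:.0%} CPU-bound: x{overall_speedup(fraction, 1.2):.3f}")
```

If only 10% of a task's run time is CPU-bound, a 20% faster processor shortens the whole task by less than 2% (`overall_speedup(0.1, 1.2)` ≈ 1.017), while a task that is 90% CPU-bound gains about 18%.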

Graphics cards

Graphics cards can also be overclocked. There are utilities to achieve this, such as EVGA's Precision, RivaTuner, AMD Overdrive (on AMD cards only), MSI Afterburner, Zotac Firestorm, and the PEG Link Mode on Asus motherboards. Overclocking a GPU will often yield a marked increase in performance in synthetic benchmarks, usually reflected in game performance.[24] It is sometimes possible to see that a graphics card is being pushed beyond its limits, before any permanent damage is done, by observing on-screen artifacts or unexpected system crashes. It is common to run into one of these problems when overclocking graphics cards; both symptoms at the same time usually mean that the card is severely pushed beyond its heat, clock rate, and/or voltage limits. If these symptoms appear when the card is not overclocked, they indicate a faulty card. After a reboot, video settings are reset to the standard values stored in the graphics card firmware, and the maximum clock rate of that specific card has now been found.

Some overclockers attach a potentiometer to the graphics card to manually adjust the voltage (which usually invalidates the warranty). This allows finer adjustments, as overclocking software for graphics cards can only go so far. Excessive voltage increases may damage or destroy components on the graphics card, or the entire card.

Flashing

Alternatives

Flashing and unlocking can be used to improve the performance of a video card without technically overclocking it, but they are much riskier than overclocking through software alone.

Flashing refers to using the firmware of a different card with the same (or sometimes similar) core and compatible firmware, effectively making it a higher-model card; it can be difficult and may be irreversible. Standalone software for modifying firmware files, such as NiBiTor (well regarded for the GeForce 6 and 7 series), can sometimes be found, allowing the firmware to be modified without using firmware from a better model. For example, video cards with 3D accelerators (most, as of 2011) have two voltage and clock rate settings, one for 2D and one for 3D, but were designed to operate with three voltage stages, the third lying between the other two and serving as a fallback when the card overheats or as a middle stage when switching from 2D to 3D mode. It can therefore be wise to set this middle stage before "serious" overclocking, specifically because of this fallback ability: the card can drop to this clock rate, losing a few percent (or sometimes a few dozen percent, depending on the setting) of its performance, and cool down without dropping out of 3D mode, afterwards returning to the desired high-performance clock and voltage settings.
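The three-stage behavior described above can be sketched as a small selection function. Everything here is an assumption for illustration: the profile clocks and voltages are hypothetical, and the two temperature thresholds (a hysteresis band so the card does not toggle rapidly between stages) are not taken from any card's firmware.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ClockProfile:
    name: str
    core_mhz: int
    voltage: float


# Hypothetical values for illustration only; real cards store their own
# profiles in firmware.
PROFILE_2D = ClockProfile("2D", 300, 1.00)
PROFILE_MID = ClockProfile("middle", 450, 1.10)
PROFILE_3D = ClockProfile("3D", 550, 1.30)


def select_profile(in_3d_mode: bool, temp_c: float, throttled: bool,
                   hot_c: float = 95.0, cool_c: float = 85.0) -> Tuple[ClockProfile, bool]:
    """Pick a profile and return (profile, throttled).

    In 3D mode the card drops to the middle stage once it reaches `hot_c`
    and returns to full 3D clocks only after cooling below `cool_c`, so it
    never has to leave 3D mode.
    """
    if not in_3d_mode:
        return PROFILE_2D, False
    if throttled:
        throttled = temp_c >= cool_c  # stay throttled until clearly cooler
    else:
        throttled = temp_c >= hot_c
    return (PROFILE_MID if throttled else PROFILE_3D), throttled


if __name__ == "__main__":
    throttled = False
    for temp in [70, 90, 96, 92, 88, 84, 80]:
        profile, throttled = select_profile(True, temp, throttled)
        print(f"{temp} C -> {profile.name} ({profile.core_mhz} MHz)")
```

With the sample temperature trace, the card stays in 3D mode throughout, dropping to the middle stage at 96 °C and returning to full 3D clocks once it has cooled below 85 °C.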

Some cards have abilities not directly connected with overclocking. For example, the AGP version of Nvidia's GeForce 6600GT has a temperature monitor that the card uses internally but that is invisible to the user when the standard firmware is used. Modifying the firmware can make a 'Temperature' tab appear.

Unlocking refers to enabling extra pipelines or pixel shaders. The 6800LE, the 6800GS and the 6800 (AGP models only) were among the first cards to benefit from unlocking. While these models have either 8 or 12 pipelines enabled, they share the same 16x6 GPU core as the 6800GT and Ultra, with the pipelines and shaders beyond those specified disabled; the GPU may be fully functional, or it may have been found to have faults that do not affect operation at the lower specification. GPUs that are fully functional can be unlocked successfully, although there is no way to be certain that no undiscovered faults remain; in the worst case, the card may become permanently unusable.

See also

References

- ^ Victoria Zhislina (February 19, 2014). "Why has CPU frequency ceased to grow?". Intel. Archived from the original on June 21, 2017. Retrieved August 2, 2017.

- ^ "LIMITED WARRANTY" (PDF).

- ^ Wainner, Scott; Richmond, Robert (2003). The Book of Overclocking. No Starch Press. pp. 1–2. ISBN 978-1-886411-76-0.

- ^ a b Wainner, Scott; Richmond, Robert (2003). The Book of Overclocking. No Starch Press. p. 38. ISBN 978-1-886411-76-0.

- ^ a b Wainner, Scott; Richmond, Robert (2003). The Book of Overclocking. No Starch Press. p. 44. ISBN 978-1-886411-76-0.

- ^ Stokes, Jon (June 22, 2006). "IBM's 500GHz processor? Not so fast…". Ars Technica. Archived from the original on October 20, 2017. Retrieved June 14, 2017.

- ^ Toon, John (June 20, 2006). "Georgia Tech/IBM Announce New Chip Speed Record". Georgia Institute of Technology. Archived from the original on July 1, 2010. Retrieved February 2, 2009.

- ^ "Intel Core i9 13900K Breaks the CPU Frequency World Record". Archived from the original on March 2, 2018. Retrieved December 9, 2022.

- ^ "Extreme-Temperature Electronics: Tutorial – Part 3". 2003. Archived from the original on March 6, 2012. Retrieved November 4, 2007.

- ^ Wes Fenlon (June 9, 2017). "Overclocking a CPU to 7 GHz with the science of liquid nitrogen". PC Gamer. Retrieved November 12, 2023.

- ^ "Overclocking to 7GHz takes more than just liquid nitrogen". Engadget. August 8, 2019. Retrieved November 12, 2023.

- ^ a b Wainner, Scott; Richmond, Robert (2003). The Book of Overclocking. No Starch Press. p. 48. ISBN 978-1-886411-76-0.

- ^ "overclocking with dry ice!". TechPowerUp Forums. August 13, 2009. Archived from the original on December 7, 2019. Retrieved January 7, 2020.

- ^ "Cooling baths". ChemWiki. Chemwiki.ucdavis.edu. Archived from the original on August 28, 2012. Retrieved June 17, 2013.

- ^ Cycles, cells and platters: an empirical analysis of hardware failures on a million consumer PCs (PDF). Proceedings of the sixth conference on Computer systems (EuroSys '11). 2011. pp. 343–356. Archived (PDF) from the original on November 14, 2012. Retrieved December 5, 2012.

- ^ Tasiran, Serdar; Keutzer, Kurt (2001). "Coverage Metrics for Functional Validation of Hardware Designs". IEEE Design & Test of Computers. CiteSeerX 10.1.1.62.9086.

- ^ Chen, Raymond (April 12, 2005). "The Old New Thing: There's an awful lot of overclocking out there". Archived from the original on March 8, 2007. Retrieved March 17, 2007.

- ^ Colwell, Bob (March 2004). "The Zen of Overclocking". Computer. 37 (3). Institute of Electrical and Electronics Engineers: 9–12. doi:10.1109/MC.2004.1273994. S2CID 21582410.

- ^ "ASUS Global". ASUS Global. Archived from the original on May 10, 2021. Retrieved May 10, 2021.

- ^ "Intel Core i5-10400F Review – Six Cores with HT for Under $200". TechPowerUp. May 28, 2020. Archived from the original on December 15, 2021. Retrieved December 15, 2021.

- ^ "UK Health and Safety Executive: Top 10 noise control techniques" (PDF). Archived (PDF) from the original on November 26, 2019. Retrieved December 30, 2011.

- ^ "How to troubleshoot problems during installation when you upgrade from Windows 98 or Windows Millennium Edition to Windows XP". Article ID: 310064, revision 6.2 (last reviewed May 7, 2007). Archived from the original on May 15, 2009. Retrieved September 4, 2008.

- ^ Darche, Philippe (November 2, 2020). Microprocessor 3: Core Concepts – Hardware Aspects. John Wiley & Sons. ISBN 978-1-119-78800-3.

- ^ "Alt+Esc | GTX 780 Overclocking Guide". Archived from the original on June 24, 2013. Retrieved June 18, 2013.

Notes

- Colwell, Bob (March 2004). "The Zen of Overclocking". Computer. 37 (3): 9–12. doi:10.1109/MC.2004.1273994. S2CID 21582410.

External links


- OverClocked inside

- How to Overclock a PC, WikiHow

- Overclocking guide for the Apple iMac G4 main logic board

Overclocking and benchmark databases

- OC Database of all PC hardware for the past decade (applications, modifications and more)

- HWBOT: Worldwide Overclocking League – Overclocking competition and data

- Comprehensive CPU OC Database

- Second National OC Convention (Segunda Convencion Nacional de OC): Extreme Overclocking, by Imperio Gamer

- Tool for overclocking