Decision tree learning

Decision tree learning, used in data mining and machine learning, uses a decision tree as a predictive model which maps observations about an item to conclusions about the item's target value. More descriptive names for such tree models are classification trees or regression trees. In these tree structures, leaves represent classifications and branches represent conjunctions of features that lead to those classifications.

Decision trees are a special tree structure constructed to help with making decisions. In decision theory and decision analysis, a decision tree is a graph or model of decisions and their possible consequences, including chance event outcomes, resource costs, and utility. It can be used to create a plan to reach a goal. Another use of trees is as a descriptive means for calculating conditional probabilities.

In decision analysis, a decision tree can be used to visually and explicitly represent decisions and decision making. In data mining, a decision tree describes data but not decisions; rather the resulting classification tree can be an input for decision making. This page deals with decision trees in data mining.

General

Decision tree learning is a common method used in data mining. The goal is to create a model that predicts the value of a target variable based on several input variables. Each interior node corresponds to one of the input variables; there are edges to children for each of the possible values of that input variable. Each leaf represents a value of the target variable given the values of the input variables represented by the path from the root to the leaf.

A tree can be "learned" by splitting the source set into subsets based on an attribute value test. This process is repeated on each derived subset in a recursive manner called recursive partitioning. The recursion is completed when all records in the subset at a node have the same value of the target variable, or when splitting no longer adds value to the predictions.
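The recursive partitioning described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production learner: the names `build_tree`, `predict` and `majority` are hypothetical, attributes are assumed to be categorical, and the attribute to split on is simply the next one in the list rather than the "best" one.

```python
from collections import Counter

def majority(labels):
    """Most common target value in a list of labels."""
    return Counter(labels).most_common(1)[0][0]

def build_tree(rows, attributes, target):
    """Recursively partition `rows` (a list of dicts) on categorical attributes.

    Returns either a leaf (a target value) or an interior node of the form
    (attribute, {attribute_value: subtree}).
    """
    labels = [row[target] for row in rows]
    # Stop when every record in the subset has the same target value,
    # or when there is no attribute left to split on.
    if len(set(labels)) == 1 or not attributes:
        return majority(labels)

    # Assumption for brevity: split on the first remaining attribute.
    # A real learner would score every candidate and pick the best one.
    attribute, remaining = attributes[0], attributes[1:]

    children = {}
    for value in sorted({row[attribute] for row in rows}):
        subset = [row for row in rows if row[attribute] == value]
        children[value] = build_tree(subset, remaining, target)
    return (attribute, children)

def predict(tree, row):
    """Follow the path from the root to a leaf for one record."""
    while isinstance(tree, tuple):
        attribute, children = tree
        tree = children[row[attribute]]
    return tree

# Hypothetical toy records, invented for this sketch only.
rows = [
    {"outlook": "rain", "windy": "yes", "play": "no"},
    {"outlook": "rain", "windy": "no", "play": "yes"},
    {"outlook": "overcast", "windy": "yes", "play": "yes"},
    {"outlook": "overcast", "windy": "no", "play": "yes"},
]
tree = build_tree(rows, ["outlook", "windy"], "play")
```

Here the "overcast" subset is pure after the first split and becomes a leaf, while the "rain" subset is split once more on "windy". `predict` raises a `KeyError` for an attribute value that was not seen during training; handling that case is left out of the sketch.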

In data mining, trees can also be described as a combination of mathematical and computational techniques that aid the description, categorisation and generalisation of a given set of data.

Data comes in records of the form:

(x, y) = (x1, x2, x3, ..., xk, y)

The dependent variable, y, is the target variable that we are trying to understand, classify or generalise. The vector x is composed of the input variables x1, x2, x3, etc., that are used for that task.

Types

In data mining, trees go by several more descriptive names:

- Classification tree analysis is when the predicted outcome is the class to which the data belongs.

- Regression tree analysis is when the predicted outcome can be considered a real number (e.g. the price of a house, or a patient’s length of stay in a hospital).

- Classification And Regression Tree (CART) analysis is an umbrella term for both of the above procedures, first introduced by Breiman et al. [BFOS84].

- A random forest classifier combines a number of decision trees in order to improve the classification rate.

Practical example

Our friend David is the manager of a famous golf club. Sadly, he is having some trouble with customer attendance. There are days when everyone wants to play golf and the staff are overworked. On other days, for no apparent reason, no one plays golf and the staff have too much slack time. David’s objective is to optimise staff time by predicting when people will play golf. To accomplish that he needs to understand the reasons people decide to play. He assumes that weather must be an important underlying factor, so he decides to use the weather forecast for the upcoming week. Over two weeks he recorded:

- The outlook, whether it was sunny, overcast or raining.

- The temperature (in degrees Fahrenheit).

- The relative humidity in percent.

- Whether it was windy or not.

- Whether people attended the golf club on that day.

David compiled this dataset into a table containing 14 rows and 5 columns as shown below.

He then applied a decision tree model to solve his problem.

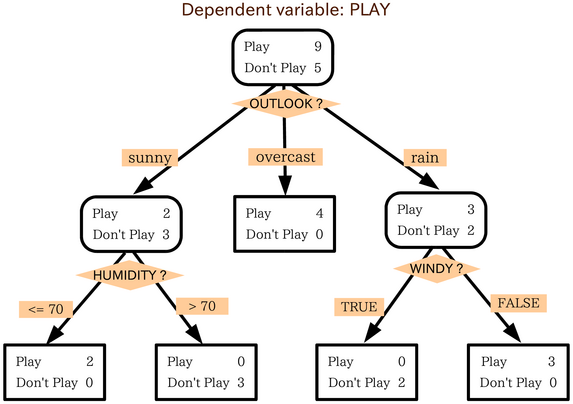

A decision tree is a model of the data that encodes the distribution of the class label (again, y) in terms of the predictor attributes. It is a directed acyclic graph in the form of a tree. The top node represents all the data. The classification tree algorithm concludes that the best way to explain the dependent variable, play, is by using the variable "outlook". Using the categories of the variable outlook, three different groups were found:

- One that plays golf when the weather is sunny,

- One that plays when the weather is overcast, and

- One that plays when it's raining.

David's first conclusion: if the outlook is overcast people always play golf, and there are some fanatics who play golf even in the rain. Then he divided the sunny group in two. He realised that people don't like to play golf if the humidity is higher than seventy percent.

Finally, he divided the rain category in two and found that people will also not play golf if it is windy.

In short, the classification tree gives the following solution: David dismisses most of the staff on days that are sunny and humid or on rainy days that are windy, because almost no one is going to play golf on those days. On days when a lot of people will play golf, he hires extra staff. The decision tree helped David turn a complex set of data into an explanation he could use in decision-making.
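The rules David read off the tree can be written down as a small function. This is only a sketch of the tree as described in the text: the function name `will_play` and the string values used for the outlook are assumptions made for illustration, and temperature is omitted because none of the described splits tests it.

```python
def will_play(outlook, humidity, windy):
    """Predict whether people play golf, following the tree described above.

    outlook  -- "sunny", "overcast" or "rain" (assumed encoding)
    humidity -- relative humidity in percent
    windy    -- True if it is windy
    """
    if outlook == "overcast":
        # People always play when it is overcast.
        return True
    if outlook == "sunny":
        # On sunny days people stay away when humidity exceeds 70 percent.
        return humidity <= 70
    if outlook == "rain":
        # On rainy days people stay away when it is windy.
        return not windy
    raise ValueError(f"unknown outlook: {outlook!r}")
```

Each path from the root to a leaf corresponds to one conjunction of tests, for example "outlook is sunny AND humidity is at most 70".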

Formulae

The algorithms that are used for constructing decision trees work by choosing a variable at each step that is the next best variable to use in splitting the set of items. "Best" is defined by how well the variable splits the set into subsets that have the same value of the target variable. Different algorithms use different formulae for measuring "best". This section presents a few of the most common formulae. These formulae are applied to each candidate subset, and the resulting values are combined (e.g., averaged) to provide a measure of the quality of the split.

Gini impurity

Used by the CART algorithm, Gini impurity is a measure of how often a randomly chosen element from the set would be incorrectly labelled if it were randomly labelled according to the distribution of labels in the subset. Gini impurity can be computed by summing the probability of each item being chosen times the probability of a mistake in categorizing that item. It reaches its minimum (zero) when all cases in the node fall into a single target category.

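To compute Gini impurity for a set of items, suppose y takes on values in {1, 2, ..., m}, and let fi be the fraction of items labelled with value i in the set. Summing, over all values i, the probability fi of choosing an item with that label times the probability (1 - fi) of mislabelling it gives f1(1 - f1) + f2(1 - f2) + ... + fm(1 - fm), which equals 1 - (f1^2 + f2^2 + ... + fm^2).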
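The definition above translates directly into code. The following is a minimal sketch; the function names `gini_impurity` and `weighted_gini` are hypothetical, and combining the subsets by a size-weighted average is one common choice assumed here, not the only possible one.

```python
from collections import Counter

def gini_impurity(labels):
    """Gini impurity of a collection of labels.

    Computed as 1 - sum(f_i ** 2), which equals sum(f_i * (1 - f_i)),
    where f_i is the fraction of items carrying label i.
    """
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def weighted_gini(subsets):
    """Quality of a split: average impurity of the subsets, weighted by size.

    Lower is better; 0.0 means every subset is pure.
    """
    total = sum(len(subset) for subset in subsets)
    return sum(len(subset) / total * gini_impurity(subset) for subset in subsets)
```

For example, `gini_impurity(["yes", "yes", "yes"])` is zero because the set is pure, while a set split evenly between two labels has impurity 0.5.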

Information gain

Information gain is used by the ID3, C4.5 and C5.0 tree generation algorithms. It is based on the concept of entropy from information theory.
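As a hedged sketch of how this measure can be computed: the entropy of a set of labels is taken here as the Shannon entropy in bits, and the information gain of a split as the entropy of the parent set minus the size-weighted entropy of the subsets. The function names `entropy` and `information_gain` are assumptions for illustration.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy, in bits, of the label distribution in `labels`."""
    n = len(labels)
    fractions = [count / n for count in Counter(labels).values()]
    return sum(-f * math.log2(f) for f in fractions)

def information_gain(parent, subsets):
    """Entropy of `parent` minus the size-weighted entropy of its `subsets`.

    `subsets` is assumed to be a partition of `parent` produced by a split.
    """
    n = len(parent)
    remainder = sum(len(subset) / n * entropy(subset) for subset in subsets)
    return entropy(parent) - remainder
```

A split that separates two equally frequent classes perfectly gains one full bit, whereas a split whose subsets have the same class mix as the parent gains nothing.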

Decision tree advantages

Compared with other data mining methods, decision trees have several advantages:

- Simple to understand and interpret. People are able to understand decision tree models after a brief explanation.

- Requires little data preparation. Other techniques often require data normalisation, the creation of dummy variables and the removal of blank values.

- Able to handle both numerical and categorical data. Other techniques are usually specialised in analysing datasets that have only one type of variable. For example, relation rules can be used only with nominal variables, while neural networks can be used only with numerical variables.

- Uses a white box model. If a given situation is observable in a model, the explanation for the condition is easily expressed in Boolean logic. An artificial neural network, by contrast, is a black box model, since the explanation for its results is difficult to understand.

- Possible to validate a model using statistical tests. That makes it possible to account for the reliability of the model.

- Robust.

- Performs well with large data in a short time. Large amounts of data can be analysed using personal computers in a time short enough to enable stakeholders to take decisions based on the analysis.

Limitations

- The problem of learning an optimal decision tree is known to be NP-complete.[1] Consequently, practical decision-tree learning algorithms are based on heuristics such as the greedy algorithm, in which locally optimal decisions are made at each node. Such algorithms cannot guarantee to return the globally optimal decision tree.

- Decision-tree learners can create over-complex trees that do not generalise well from the training data. This is called overfitting.[2] Mechanisms such as pruning are necessary to avoid this problem.

- There are concepts that are hard to learn because decision trees do not express them easily, such as XOR, parity or multiplexer problems. In such cases, the decision tree becomes prohibitively large. Approaches to solving the problem involve either changing the representation of the problem domain, known as propositionalisation,[3] or using learning algorithms based on more expressive representations instead, such as statistical relational learning or inductive logic programming.
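The XOR case can be illustrated with a short experiment. The sketch below assumes a greedy learner that scores splits by information gain; the helper names `entropy` and `gain` are hypothetical. For two-input XOR, splitting on either input alone leaves the class mix unchanged, so neither split looks useful to such a learner even though the two inputs together determine the output exactly.

```python
import math
from collections import Counter
from itertools import product

def entropy(labels):
    """Shannon entropy, in bits, of the label distribution in `labels`."""
    n = len(labels)
    fractions = [count / n for count in Counter(labels).values()]
    return sum(-f * math.log2(f) for f in fractions)

def gain(rows, index):
    """Information gain from splitting (inputs, label) rows on input `index`."""
    labels = [label for _, label in rows]
    remainder = 0.0
    for value in {inputs[index] for inputs, _ in rows}:
        subset = [label for inputs, label in rows if inputs[index] == value]
        remainder += len(subset) / len(rows) * entropy(subset)
    return entropy(labels) - remainder

# All four input combinations of a two-input XOR, each paired with its output.
xor_rows = [((a, b), a ^ b) for a, b in product([0, 1], repeat=2)]

# Gain of splitting on the first input, then on the second input.
gains = [gain(xor_rows, index) for index in range(2)]
```

Both entries of `gains` are zero. A tree that represents XOR exactly has to test both inputs on every path, and for parity over n inputs the number of leaves grows as 2 to the power n, which is why such trees become large.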

Extending decision trees with decision graphs

In a decision tree, all paths from the root node to the leaf node proceed by way of conjunction, or AND. In a decision graph, it is possible to use disjunctions (ORs) to join two or more paths together using Minimum Message Length (MML).[4] Decision graphs have been further extended to allow for previously unstated new attributes to be learnt dynamically and used at different places within the graph.[5] The more general coding scheme results in better predictive accuracy and log-loss probabilistic scoring. In general, decision graphs infer models with fewer leaves than decision trees.

See also

- Decision-tree pruning

- Pruning (algorithm)

- Binary decision diagram

- CART

- ID3 algorithm

- C4.5 algorithm

- Random forest

- Decision stump

External sources

- V. Berikov, A. Litvinenko, "Methods for statistical data analysis with decision trees". Novosibirsk, Sobolev Institute of Mathematics, 2003.

- [BFOS84] L. Breiman, J. Friedman, R. A. Olshen and C. J. Stone, "Classification and regression trees". Wadsworth, 1984.

- T. Menzies, Y. Hu, "Data Mining For Very Busy People". IEEE Computer, October 2003, pp. 18-25.

- Decision Tree Analysis mindtools.com

- J.W. Comley and D.L. Dowe, "Minimum Message Length, MDL and Generalised Bayesian Networks with Asymmetric Languages", chapter 11 (pp. 265-294) in P. Grunwald, M.A. Pitt and I.J. Myung (eds.), Advances in Minimum Description Length: Theory and Applications, M.I.T. Press, April 2005, ISBN 0-262-07262-9. (This paper puts decision trees in internal nodes of Bayesian networks using Minimum Message Length (MML). An earlier version is Comley and Dowe (2003).)

- P.J. Tan and D.L. Dowe (2003), MML Inference of Decision Graphs with Multi-Way Joins and Dynamic Attributes, Proc. 16th Australian Joint Conference on Artificial Intelligence (AI'03), Perth, Australia, 3-5 Dec. 2003, Published in Lecture Notes in Artificial Intelligence (LNAI) 2903, Springer-Verlag, pp269-281.

- P.J. Tan and D.L. Dowe (2004), MML Inference of Oblique Decision Trees, Lecture Notes in Artificial Intelligence (LNAI) 3339, Springer-Verlag, pp1082-1088. (This paper uses Minimum Message Length and actually incorporates probabilistic support vector machines in the leaves of the decision trees.)

- decisiontrees.net Interactive Tutorial

- Building Decision Trees in Python From O'Reilly.

- Decision Trees page at aaai.org, a page with commented links.

- Bayesian Networks Applied in Real World Troubleshooting Scenario Dezide

- Practical Application of Decision Trees by Robin Barnwell

- Decision tree implementation in Ruby (AI4R)

- Machine Learning Thomas G. Dietterich Department of Computer Science, Oregon State University, Corvallis, OR 97331

References

- [1] L. Hyafil and R. L. Rivest, "Constructing Optimal Binary Decision Trees is NP-complete". Information Processing Letters, Vol. 5, No. 1 (1976), pp. 15-17.

- [2] doi:10.1007/978-1-84628-766-4

- [3] doi:10.1007/b13700

- [4] http://citeseer.ist.psu.edu/oliver93decision.html

- [5] Tan & Dowe (2003)