Newton's method

In numerical analysis, Newton's method (or the Newton–Raphson method or the Newton–Fourier method) is an efficient algorithm for finding approximations to the zeros (or roots) of a real-valued function. As such, it is an example of a root-finding algorithm. It can also be used to find a minimum or maximum of such a function, by finding a zero of the function's first derivative (see Newton's method as an optimization algorithm).

Description of the method

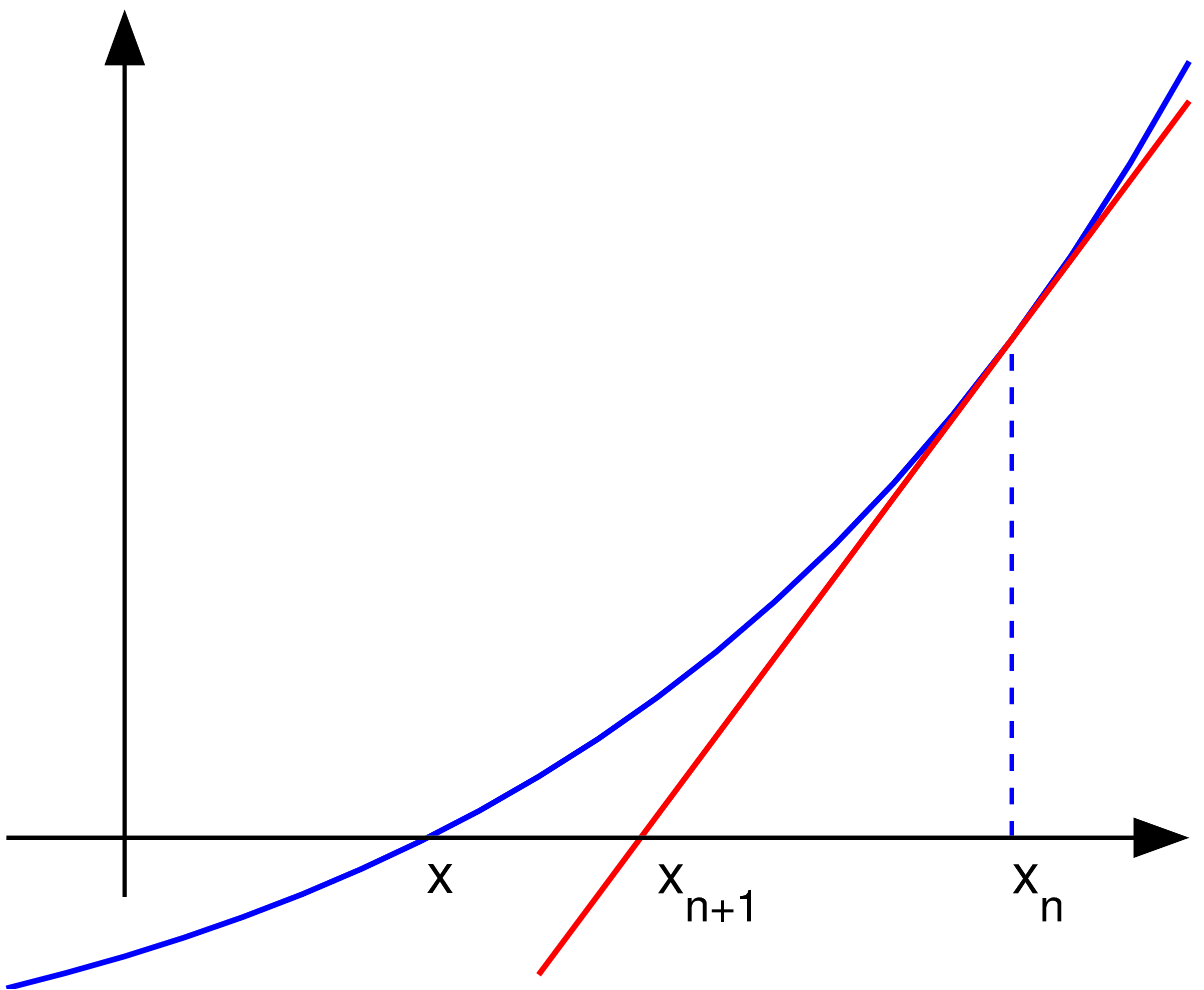

The idea of the method is as follows: one starts with a value which is reasonably close to the true zero, then replaces the function by its tangent (which can be computed using the tools of calculus) and computes the zero of this tangent (which is easily done with elementary algebra). This zero of the tangent will typically be a better approximation to the function's zero, and the method can be iterated.

Suppose f : [a, b] → ℝ is a differentiable function defined on the interval [a, b] with values in the real numbers ℝ. The formula for converging on the zero is easy to derive. Suppose we have some current approximation $x_n$. We can then derive the formula for a better approximation, $x_{n+1}$, by referring to the diagram. By the definition of the derivative, $f'(x_n)$ is the slope of the tangent to the graph of f at the point $(x_n, f(x_n))$, and this tangent crosses the x-axis at $x_{n+1}$. That is,

$$f'(x_n) = \frac{f(x_n) - 0}{x_n - x_{n+1}}.$$

Here, f' denotes the derivative of the function f. Solving for $x_{n+1}$ with simple algebra gives

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}.$$

We start the process with some arbitrary initial value $x_0$ (the closer to the zero, the better). The method will usually converge provided this initial guess is close enough to the unknown zero. Furthermore, for a zero of multiplicity 1, the convergence is at least quadratic in a neighbourhood of the zero, which intuitively means that the number of correct digits roughly doubles, at least, in every step. More details can be found in the "Analysis" section.

An illustration of Newton's method. We see that $x_{n+1}$ is a better guess than $x_n$ for the zero $x^*$ of the function f.
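A minimal Python sketch of the iteration $x_{n+1} = x_n - f(x_n)/f'(x_n)$ follows. The function name `newton`, the step-size stopping rule (`tol`) and the iteration cap (`max_iter`) are choices made for this illustration rather than part of the method itself, and the caller is assumed to supply the derivative as `fprime`.

```python
import math
from typing import Callable


def newton(f: Callable[[float], float],
           fprime: Callable[[float], float],
           x0: float,
           tol: float = 1e-12,
           max_iter: int = 50) -> float:
    """Approximate a zero of f by Newton's iteration started at x0."""
    x = x0
    for _ in range(max_iter):
        slope = fprime(x)
        if slope == 0.0:
            # A horizontal tangent has no zero, so the step is undefined.
            raise ZeroDivisionError(f"f'(x) is zero at x = {x}")
        step = f(x) / slope
        x = x - step  # x_{n+1} = x_n - f(x_n) / f'(x_n)
        # Stop once the correction is negligible relative to the size of x.
        if abs(step) <= tol * max(1.0, abs(x)):
            return x
    raise RuntimeError(f"no convergence after {max_iter} iterations")


# Usage: sqrt(2) as the positive zero of f(x) = x**2 - 2, starting from 1.
root = newton(lambda x: x * x - 2, lambda x: 2 * x, 1.0)
print(root)
```

Raising an exception on a zero derivative or on exhausting the iteration budget is one possible design; a caller could instead return a status flag.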

Example

Consider the problem of finding the positive number x with $\cos(x) = x^3$. We can rephrase that as finding the zero of $f(x) = \cos(x) - x^3$. We have $f'(x) = -\sin(x) - 3x^2$. Since $\cos(x) \le 1$ for all x and $x^3 > 1$ for $x > 1$, we know that our zero lies between 0 and 1. We try a starting value of $x_0 = 0.5$.

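Starting from $x_0 = 0.5$, the iteration gives (to 12 decimal places):

- $x_1 = 1.112141637097$
- $x_2 = 0.909672693736$
- $x_3 = 0.867263818209$
- $x_4 = 0.865477135298$
- $x_5 = 0.865474033111$
- $x_6 = 0.865474033102$

In particular, $x_6$ is correct to the number of decimal places given. The number of correct digits after the decimal point increases from 2 (for $x_3$) to 5 (for $x_4$) and 10 (for $x_5$), illustrating the quadratic convergence.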

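Consider the following example in Python.

```python
import math


def newton_iteration_function(x):
    # One Newton step for f(x) = cos(x) - x^3, whose derivative is -sin(x) - 3x^2
    return x - (math.cos(x) - x**3) / (-math.sin(x) - 3 * x**2)


x = 0.5
for i in range(100):
    print("Iteration:", i)
    print("Guess:", x)
    x_old = x
    x = newton_iteration_function(x)
    # Stop once a step no longer changes the guess (up to rounding error)
    if abs(x - x_old) <= 1e-15 * abs(x):
        print("Solution found!")
        break
```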

Here is the same using a calculator.

History

Newton's method was described by Isaac Newton in De analysi per aequationes numero terminorum infinitas (written in 1669, published in 1711 by William Jones) and in De methodis fluxionum et serierum infinitarum (written in 1671, translated and published as Method of Fluxions in 1736 by John Colson). However, his description differs substantially from the modern description given above: Newton applies the method only to polynomials. He does not compute the successive approximations $x_n$, but computes a sequence of polynomials, and only at the end does he arrive at an approximation for the root x. Finally, Newton views the method as purely algebraic and fails to notice the connection with calculus. Isaac Newton probably derived his method from a similar but less precise method by François Viète. The essence of Viète's method can be found in the work of the Persian mathematician Sharaf al-Din al-Tusi.

Heron of Alexandria used essentially the same method in book 1, chapter 8, of his Metrica to determine the square root of 720.

Newton's method was first published in 1685 in A Treatise of Algebra both Historical and Practical by John Wallis. In 1690, Joseph Raphson published a simplified description in Analysis aequationum universalis. Raphson again viewed Newton's method purely as an algebraic method and restricted its use to polynomials, but he describes the method in terms of the successive approximations xn instead of the more complicated sequence of polynomials used by Newton. Finally, in 1740, Thomas Simpson described Newton's method as an iterative method for solving general nonlinear equations using fluxional calculus, essentially giving the description above. In the same publication, Simpson also gives the generalization to systems of two equations and notes that Newton's method can be used for solving optimization problems by setting the gradient to zero.

In 1879, in The Newton–Fourier imaginary problem, Arthur Cayley was the first to notice the difficulties in generalizing Newton's method to complex roots of polynomials of degree greater than 2 with complex initial values. This opened the way to the study of the theory of iterations of rational functions.

Practical considerations

Near a simple zero the convergence is quadratic: the error is essentially squared at each step (that is, the number of accurate digits roughly doubles in each step). There are some caveats, however. First, Newton's method requires that the derivative be calculated directly. (If the derivative is approximated by the slope of a line through two points on the function, the secant method results; this can be more efficient depending on how one measures computational effort.) Second, if the initial value is too far from the true zero, Newton's method can fail to converge. Because of this, most practical implementations of Newton's method put an upper limit on the number of iterations and perhaps on the size of the iterates. Third, if the root being sought has multiplicity greater than one, the convergence rate is merely linear (errors are reduced by a constant factor at each step) unless special steps are taken.
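The two safeguards described in this paragraph (a cap on the number of iterations and a bound on the size of the iterates) can be sketched as below. The helper `safeguarded_newton` and its limits `max_iter` and `max_abs` are hypothetical, illustrative values. It is tried on f(x) = arctan(x), chosen here only as an illustration, whose only zero is 0: started at 0.5 it converges, while in this sketch the start x0 = 2 produces iterates of rapidly growing magnitude and the bound stops the run.

```python
import math
from typing import Callable, Optional


def safeguarded_newton(f: Callable[[float], float],
                       fprime: Callable[[float], float],
                       x0: float,
                       tol: float = 1e-12,
                       max_iter: int = 50,
                       max_abs: float = 1e6) -> Optional[float]:
    """Newton's iteration that gives up instead of running away.

    Returns an approximate zero, or None if the derivative vanishes, the
    iteration cap is reached, or an iterate leaves the range |x| <= max_abs.
    """
    x = x0
    for _ in range(max_iter):
        slope = fprime(x)
        if slope == 0.0:
            return None
        step = f(x) / slope
        x -= step
        if abs(x) > max_abs:
            return None  # iterates are growing without bound
        if abs(step) <= tol * max(1.0, abs(x)):
            return x
    return None  # no convergence within the iteration budget


# f(x) = arctan(x) has its only zero at 0, and f'(x) = 1 / (1 + x**2).
near = safeguarded_newton(math.atan, lambda x: 1 / (1 + x * x), 0.5)
far = safeguarded_newton(math.atan, lambda x: 1 / (1 + x * x), 2.0)
print(near, far)
```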

Counter examples

In these examples, the desired root is at zero for simplicity. It could have been placed elsewhere.

- If the derivative is not continuous at the root, then convergence may fail to occur.

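Indeed, let

$$f(x) = \begin{cases} 0 & \text{if } x = 0, \\ x + x^2 \sin\left(\frac{2}{x}\right) & \text{if } x \neq 0. \end{cases}$$

Then

$$f'(x) = \begin{cases} 1 & \text{if } x = 0, \\ 1 + 2x \sin\left(\frac{2}{x}\right) - 2\cos\left(\frac{2}{x}\right) & \text{if } x \neq 0. \end{cases}$$

Within any neighborhood of the root, this derivative keeps changing sign as x approaches 0 from the right (or from the left), while $f(x) \ge x - x^2 > 0$ for $0 < x < 1$.

So $f(x)/f'(x)$ is unbounded near the root, and Newton's method will not converge, even though: the function is differentiable everywhere; the derivative is not zero at the root; f is infinitely differentiable except at the root; and the derivative is bounded in a neighborhood of the root (unlike its reciprocal).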
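The sign changes of the derivative in this counterexample can be checked numerically. With $f(x) = x + x^2 \sin(2/x)$ (and $f(0) = 0$), the sketch below evaluates $f'$ at the points $x = 1/(k\pi)$, where $\cos(2/x) = 1$ and so $f'(x) \approx -1$, and at $x = 2/((2k+1)\pi)$, where $\cos(2/x) = -1$ and so $f'(x) \approx 3$. The particular values of k are arbitrary; larger k gives points closer to the root.

```python
import math


def f(x: float) -> float:
    """f(0) = 0 and f(x) = x + x**2 * sin(2/x) elsewhere."""
    return 0.0 if x == 0 else x + x * x * math.sin(2 / x)


def fprime(x: float) -> float:
    """f'(0) = 1 and f'(x) = 1 + 2x sin(2/x) - 2 cos(2/x) elsewhere."""
    if x == 0:
        return 1.0
    return 1 + 2 * x * math.sin(2 / x) - 2 * math.cos(2 / x)


# Points where cos(2/x) = 1 (f' near -1) and cos(2/x) = -1 (f' near 3).
for k in (10, 100, 1000):
    a = 1 / (k * math.pi)
    b = 2 / ((2 * k + 1) * math.pi)
    print(f"k={k}: f'({a:.2e}) = {fprime(a):+.4f}, "
          f"f'({b:.2e}) = {fprime(b):+.4f}, f({a:.2e}) = {f(a):.2e}")
```

Because f stays positive just to the right of 0 while f' passes through zero between such points, the Newton correction $f(x)/f'(x)$ cannot stay bounded there.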

- If there is no second derivative at the root, then convergence may fail to be quadratic.

Indeed, let

$$f(x) = x + x^{4/3}.$$

Then

$$f'(x) = 1 + \tfrac{4}{3} x^{1/3}$$

and

$$f''(x) = \tfrac{4}{9} x^{-2/3}$$

except when $x = 0$, where it is undefined. Given $x_n$,

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)} = \frac{\tfrac{1}{3} x_n^{4/3}}{1 + \tfrac{4}{3} x_n^{1/3}},$$

which has approximately 4/3 times as many bits of precision as $x_n$ has. This is less than the 2 times as many which would be required for quadratic convergence.

So the convergence of Newton's method is not quadratic, even though: the function is continuously differentiable everywhere; the derivative is not zero at the root; and f is infinitely differentiable except at the desired root.
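The 4/3 growth in precision for $f(x) = x + x^{4/3}$ can be observed numerically. Because the root is 0, the error is the iterate itself, so the bits of precision of $x_n$ are $-\log_2 x_n$. The sketch uses the algebraically simplified step $x_{n+1} = \tfrac{1}{3} x_n^{4/3} / (1 + \tfrac{4}{3} x_n^{1/3})$, since evaluating $x_n - f(x_n)/f'(x_n)$ literally would lose all digits to cancellation once $x_n$ is tiny. The start $x_0 = 0.1$ and the cutoff $10^{-200}$ are arbitrary choices.

```python
import math


def step(x: float) -> float:
    """Newton step for f(x) = x + x**(4/3), simplified to avoid cancellation."""
    c = x ** (1 / 3)  # real cube root; valid here because x > 0
    return (x * c / 3) / (1 + 4 * c / 3)


x = 0.1
bits = [-math.log2(x)]  # the error equals x because the root is 0
while x > 1e-200:
    x = step(x)
    bits.append(-math.log2(x))

# Ratio of bits of precision between consecutive iterates.
ratios = [b1 / b0 for b0, b1 in zip(bits, bits[1:])]
for r in ratios:
    print(f"{r:.3f}")
```

The printed ratios settle just above 4/3, well short of the factor 2 that quadratic convergence would give.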

- If the first derivative is zero at the root, then convergence will not be quadratic.

Indeed, let

$$f(x) = x^2.$$

Then $f'(x) = 2x$ and consequently $x - f(x)/f'(x) = x/2$: each step only halves the error. So convergence is linear rather than quadratic, even though the function is infinitely differentiable everywhere.
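For $f(x) = x^2$ the halving can be seen directly. The sketch also tries one well-known remedy for a root of known multiplicity m, the modified step $x - m\,f(x)/f'(x)$; this is a separate technique named here for illustration, and it assumes the multiplicity (here m = 2) is known in advance.

```python
def newton_step(x: float, m: int = 1) -> float:
    """Step x - m * f(x) / f'(x) for f(x) = x**2; m = 1 is plain Newton."""
    return x - m * (x * x) / (2 * x)


x = 1.0
errors = [x]
for _ in range(10):
    x = newton_step(x)
    errors.append(x)  # the error equals x because the root is 0
print(errors)  # each entry is half the previous one: linear convergence

# With the multiplicity m = 2 supplied, the modified step lands on the root.
print(newton_step(1.0, m=2))  # 0.0
```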

Analysis

Suppose that the function f has a zero at α, i.e., f(α) = 0.

If f is continuously differentiable and its derivative does not vanish at α, then there exists a neighborhood of α such that, for all starting values $x_0$ in that neighborhood, the sequence $\{x_n\}$ will converge to α.

If the function is continuously differentiable and its derivative does not vanish at α and it has a second derivative at α then the convergence is quadratic or faster. If the second derivative does not vanish at α then the convergence is merely quadratic.

If the derivative does vanish at α, then the convergence is usually only linear. Specifically, if f is twice continuously differentiable, $f'(\alpha) = 0$ and $f''(\alpha) \neq 0$, then there exists a neighborhood of α such that, for all starting values $x_0$ in that neighborhood, the sequence of iterates converges linearly, with rate $\log_{10} 2$ (Süli & Mayers, Exercise 1.6). Alternatively, if $f'(\alpha) = 0$ and $f'(x) \neq 0$ for $x \neq \alpha$ in a neighborhood U of α, α being a zero of multiplicity r, and if $f \in C^r(U)$, then there exists a neighborhood of α such that, for all starting values $x_0$ in that neighborhood, the sequence of iterates converges linearly.

However, even linear convergence is not guaranteed in pathological situations.

In practice these results are local, and the neighborhood of convergence is not known a priori. There are also some results on global convergence: for instance, given a right neighborhood $U_+$ of α, if f is twice differentiable in $U_+$ and if $f' \neq 0$ and $f \cdot f'' > 0$ in $U_+$, then, for each $x_0$ in $U_+$, the sequence $x_k$ is monotonically decreasing to α.
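The monotone convergence result can be illustrated with $f(x) = x^2 - 2$, an example chosen here for illustration: on $U_+ = (\sqrt{2}, \infty)$ we have $f'(x) = 2x \neq 0$ and $f \cdot f'' = 2(x^2 - 2) > 0$, so Newton's iterates started at $x_0 = 3$ should decrease monotonically toward $\sqrt{2}$. The sketch stops after five steps, before floating-point rounding at the level of one unit in the last place can blur the comparison.

```python
import math

# f(x) = x**2 - 2 on U+ = (sqrt(2), infinity), where f' != 0 and f * f'' > 0.
x = 3.0
iterates = [x]
for _ in range(5):
    x = x - (x * x - 2) / (2 * x)  # Newton step
    iterates.append(x)

for value in iterates:
    print(f"{value:.16f}  exceeds sqrt(2) by {value - math.sqrt(2):.3e}")
```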

Generalizations

Nonlinear systems of equations

One may also use Newton's method to solve systems of k (nonlinear) equations, which amounts to finding the zeros of continuously differentiable functions $F\colon \mathbb{R}^k \to \mathbb{R}^k$. In the formulation given above, one then has to multiply by the inverse of the k-by-k Jacobian matrix $J_F(x_n)$ instead of dividing by $f'(x_n)$. Rather than actually computing the inverse of this matrix, one can save time by solving the system of linear equations

$$J_F(x_n)\,(x_{n+1} - x_n) = -F(x_n)$$

for the unknown $x_{n+1} - x_n$. Again, this method only works if the initial value $x_0$ is close enough to the true zero. Typically, a well-behaved region is first located with some other method, and Newton's method is then used to "polish" a root that is already known approximately.
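A small two-dimensional sketch of this idea follows. The system (the circle $x^2 + y^2 = 4$ intersected with the line $x = y$, whose positive solution is $(\sqrt{2}, \sqrt{2})$), the starting point and the tolerances are hypothetical choices. At each step the 2-by-2 linear system $J\,(\Delta x, \Delta y) = -F$ is solved directly by Cramer's rule instead of forming the inverse Jacobian; for larger k one would use a proper linear solver.

```python
import math


def F(x: float, y: float) -> tuple[float, float]:
    """Hypothetical test system: x^2 + y^2 - 4 = 0 and x - y = 0."""
    return x * x + y * y - 4, x - y


def jacobian(x: float, y: float) -> tuple[tuple[float, float], tuple[float, float]]:
    """Rows of the Jacobian matrix of F at (x, y)."""
    return (2 * x, 2 * y), (1.0, -1.0)


def newton_2d(x: float, y: float, tol: float = 1e-12,
              max_iter: int = 50) -> tuple[float, float]:
    for _ in range(max_iter):
        (a, b), (c, d) = jacobian(x, y)
        f1, f2 = F(x, y)
        det = a * d - b * c
        if det == 0.0:
            raise ZeroDivisionError("singular Jacobian")
        # Solve J * (dx, dy) = -F by Cramer's rule rather than inverting J.
        dx = (-f1 * d + b * f2) / det
        dy = (-a * f2 + c * f1) / det
        x, y = x + dx, y + dy
        if max(abs(dx), abs(dy)) <= tol:
            return x, y
    raise RuntimeError("no convergence")


print(newton_2d(1.0, 0.5))
```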

Nonlinear equations in a Banach space

Another generalization is Newton's method for finding a zero of a function F defined in a Banach space. In this case the formulation is

$$X_{n+1} = X_n - (F'_{X_n})^{-1}[F(X_n)],$$

where $F'_{X_n}$ is the Fréchet derivative applied at the point $X_n$. The Fréchet derivative must be invertible at each $X_n$ for the method to be applicable.

Complex functions

Newton's method can also be applied directly to complex functions to find their zeros. For many complex functions, the boundary of the set of all starting values that cause the method to converge to a particular zero (the basin of attraction of that zero) is a fractal.
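Python's built-in complex numbers are enough to experiment with this. The sketch below uses $f(z) = z^3 - 1$, a standard illustrative choice whose zeros are the three cube roots of unity, and reports which zero the iteration reaches from a given start. The helper name `basin`, the tolerance and the iteration cap are hypothetical choices, and the character map is only a coarse picture of the basins, not a rendering of the fractal boundary.

```python
import math
from typing import Optional

# Zeros of f(z) = z**3 - 1: the three cube roots of unity.
ROOTS = [complex(1, 0),
         complex(-0.5, math.sqrt(3) / 2),
         complex(-0.5, -math.sqrt(3) / 2)]


def basin(z: complex, max_iter: int = 50, tol: float = 1e-9) -> Optional[int]:
    """Index into ROOTS of the zero Newton's method reaches from z, or None."""
    for _ in range(max_iter):
        slope = 3 * z * z  # f'(z) = 3 z^2
        if slope == 0:
            return None  # the step is undefined at z = 0
        z = z - (z ** 3 - 1) / slope
        for i, r in enumerate(ROOTS):
            if abs(z - r) < tol:
                return i
    return None


# Coarse map of the square -2 <= Re z, Im z <= 2; '.' marks no verdict.
for row in range(40, -41, -4):
    line = ""
    for col in range(-40, 41, 2):
        idx = basin(complex(col / 20, row / 20))
        line += "." if idx is None else "ABC"[idx]
    print(line)
```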

References

- Tjalling J. Ypma, Historical development of the Newton-Raphson method, SIAM Review 37 (4), 531–551, 1995.

- P. Deuflhard, Newton Methods for Nonlinear Problems. Affine Invariance and Adaptive Algorithms. Springer Series in Computational Mathematics, Vol. 35. Springer, Berlin, 2004. ISBN 3-540-21099-7.

- C. T. Kelley, Solving Nonlinear Equations with Newton's Method, Fundamentals of Algorithms, no. 1, SIAM, 2003. ISBN 0-89871-546-6.

- J. M. Ortega, W. C. Rheinboldt, Iterative Solution of Nonlinear Equations in Several Variables. Classics in Applied Mathematics, SIAM, 2000. ISBN 0-89871-461-3.

- W. H. Press, B. P. Flannery, S. A. Teukolsky, W. T. Vetterling, Numerical Recipes in C: The Art of Scientific Computing, Cambridge University Press, 1992. ISBN 0-521-43108-5. (Available online, with code samples.)

- W. H. Press, B. P. Flannery, S. A. Teukolsky, W. T. Vetterling, Numerical Recipes in Fortran, Cambridge University Press, 1992. ISBN 0-521-43064-X. (Available online, with code samples.)

- Endre Süli and David Mayers, An Introduction to Numerical Analysis, Cambridge University Press, 2003. ISBN 0-521-00794-1.

External links

- The Generalized Mediant and Newton's method

- Newton's method on Wolfram.com

- Newton's method on the Mathcad Application Server (with animation)

- Newton-Raphson Method Notes, PPT, Mathcad, Maple, Matlab, Mathematica

- worked example
