Chomsky hierarchy

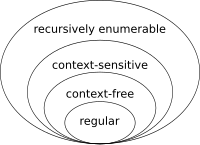

The Chomsky hierarchy (infrequently referred to as the Chomsky–Schützenberger hierarchy[1]), in the fields of formal language theory, computer science, and linguistics, is a containment hierarchy of classes of formal grammars. A formal grammar describes how to form strings from a language's vocabulary (or alphabet) that are valid according to the language's syntax. The linguist Noam Chomsky theorized that four different classes of formal grammars existed that could generate increasingly complex languages. Each class can also generate every language generated by the classes below it, so the classes are nested by set inclusion.

History

The general idea of a hierarchy of grammars was first described by Noam Chomsky in "Three models for the description of language".[2] Marcel-Paul Schützenberger also played a role in the development of the theory of formal languages; the paper "The algebraic theory of context free languages"[3] describes the modern hierarchy, including context-free grammars.[1]

Independently, alongside linguists, mathematicians were developing models of computation (via automata). Parsing a sentence in a language is similar to computation, and the grammars described by Chomsky proved to both resemble and be equivalent in computational power to various machine models.[4]

The hierarchy

The following table summarizes each of Chomsky's four types of grammars, the class of language it generates, the type of automaton that recognizes it, and the form its rules must have. The classes are defined by the constraints on the production rules.

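| Grammar | Languages | Recognizing automaton | Production rules (constraints)* | Examples[5][6] |
|---|---|---|---|---|
| Type-3 | Regular | Finite-state automaton | $A \rightarrow a$, $A \rightarrow aB$ (right regular) or $A \rightarrow a$, $A \rightarrow Ba$ (left regular) | $L = \{a^n \mid n > 0\}$ |
| Type-2 | Context-free | Non-deterministic pushdown automaton | $A \rightarrow \alpha$ | $L = \{a^n b^n \mid n > 0\}$ |
| Type-1 | Context-sensitive | Linear-bounded non-deterministic Turing machine | $\alpha A \beta \rightarrow \alpha \gamma \beta$ | $L = \{a^n b^n c^n \mid n > 0\}$ |
| Type-0 | Recursively enumerable | Turing machine | $\gamma \rightarrow \alpha$ ($\gamma$ non-empty) | $L = \{w \mid w \text{ describes a terminating Turing machine}\}$ |

* Meaning of symbols:

- $a$ = terminal
- $A$, $B$ = nonterminal
- $\alpha$, $\beta$, $\gamma$ = string of terminals and/or nonterminals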

Note that the set of grammars corresponding to recursive languages is not a member of this hierarchy; these would be properly between Type-0 and Type-1.

Every regular language is context-free, every context-free language is context-sensitive, every context-sensitive language is recursive and every recursive language is recursively enumerable. These are all proper inclusions, meaning that there exist recursively enumerable languages that are not context-sensitive, context-sensitive languages that are not context-free and context-free languages that are not regular.[7]

Regular (Type-3) grammars

Type-3 grammars generate the regular languages. Such a grammar restricts its rules to a single nonterminal on the left-hand side and a right-hand side consisting of a single terminal, possibly followed by a single nonterminal, in which case the grammar is right regular. Alternatively, all the rules can have their right-hand sides consist of a single terminal, possibly preceded by a single nonterminal (left regular). These generate the same languages. However, if left-regular rules and right-regular rules are combined, the language need no longer be regular. The rule $S \rightarrow \varepsilon$ is also allowed here if $S$ does not appear on the right side of any rule. These languages are exactly the languages that can be decided by a finite-state automaton. Additionally, this family of formal languages can be described by regular expressions. Regular languages are commonly used to define search patterns and the lexical structure of programming languages.

For example, the regular language $L = \{a^n \mid n > 0\}$ is generated by the Type-3 grammar $G = (\{S\}, \{a\}, P, S)$ with the productions $P$ being the following.

- S → aS

- S → a
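The link between a right-regular grammar and a finite-state automaton can be sketched in a few lines of Python. This is only an illustrative sketch: the rule encoding, the `ACCEPT` state name, and the function `accepts` are assumptions made for this example, not part of any standard library. Each rule $A \rightarrow aB$ becomes a transition from state $A$ to state $B$ on input $a$, and each rule $A \rightarrow a$ becomes a transition into an extra accepting state.

```python
# Right-regular grammar for {a^n | n > 0}: S -> aS | a.
# Each rule is encoded as (nonterminal, terminal, next nonterminal or None).
RULES = [("S", "a", "S"), ("S", "a", None)]
ACCEPT = "<accept>"  # hypothetical name for the extra accepting state


def accepts(word, rules=RULES, start="S"):
    """Simulate the nondeterministic automaton induced by the grammar."""
    states = {start}
    for symbol in word:
        # Follow every rule whose left-hand side is a current state
        # and whose terminal matches the input symbol.
        states = {
            ACCEPT if nxt is None else nxt
            for (lhs, terminal, nxt) in rules
            if lhs in states and terminal == symbol
        }
    return ACCEPT in states
```

Tracking a set of states rather than a single state handles the nondeterminism introduced by the two rules that share the same left-hand side and terminal.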

Context-free (Type-2) grammars

Type-2 grammars generate the context-free languages. These are defined by rules of the form $A \rightarrow \alpha$ with $A$ being a nonterminal and $\alpha$ being a string of terminals and/or nonterminals. These languages are exactly the languages that can be recognized by a non-deterministic pushdown automaton. Context-free languages, or more precisely their subset of deterministic context-free languages, are the theoretical basis for the phrase structure of most programming languages, though their syntax also includes context-sensitive name resolution due to declarations and scope. Often a subset of grammars is used to make parsing easier, such as the grammars that an LL parser can handle.

For example, the context-free language $L = \{a^n b^n \mid n > 0\}$ is generated by the Type-2 grammar $G = (\{S\}, \{a, b\}, P, S)$ with the productions $P$ being the following.

- S → aSb

- S → ab

The language is context-free but not regular (by the pumping lemma for regular languages).
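To illustrate why a stack is enough for this language while finite memory is not, here is a minimal Python sketch of a pushdown-style recognizer for $\{a^n b^n \mid n > 0\}$. The function name `accepts_anbn` and the marker symbol `"A"` are assumptions chosen for this example. The recognizer is deterministic, which suffices for this particular language even though context-free languages in general require nondeterminism.

```python
def accepts_anbn(word):
    """Recognize {a^n b^n | n > 0} using an explicit stack."""
    stack = []
    seen_b = False
    for symbol in word:
        if symbol == "a" and not seen_b:
            stack.append("A")   # push one marker per leading 'a'
        elif symbol == "b" and stack:
            seen_b = True
            stack.pop()         # match each 'b' against one marker
        else:
            return False        # wrong symbol, wrong order, or too many 'b's
    # Accept only if at least one 'b' was read and every 'a' was matched.
    return seen_b and not stack
```

The stack depth grows with the number of leading `a` symbols, which is exactly the unbounded counting that a finite-state automaton cannot perform.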

Context-sensitive (Type-1) grammars

Type-1 grammars generate context-sensitive languages. These grammars have rules of the form $\alpha A \beta \rightarrow \alpha \gamma \beta$ with $A$ a nonterminal and $\alpha$, $\beta$ and $\gamma$ strings of terminals and/or nonterminals. The strings $\alpha$ and $\beta$ may be empty, but $\gamma$ must be nonempty. The rule $S \rightarrow \varepsilon$ is allowed if $S$ does not appear on the right side of any rule. The languages described by these grammars are exactly the languages that can be recognized by a linear bounded automaton (a nondeterministic Turing machine whose tape is bounded by a constant times the length of the input).

For example, the context-sensitive language $L = \{a^n b^n c^n \mid n > 0\}$ is generated by the Type-1 grammar $G = (\{S, B, C, W, Z\}, \{a, b, c\}, P, S)$ with the productions $P$ being the following.

- S → aBC

- S → aSBC

- CB → CZ

- CZ → WZ

- WZ → WC

- WC → BC

- aB → ab

- bB → bb

- bC → bc

- cC → cc

The language is context-sensitive but not context-free (by the pumping lemma for context-free languages). A proof that this grammar generates $L = \{a^n b^n c^n \mid n > 0\}$ is sketched in the article on context-sensitive grammars.
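Because no rule in the grammar above shortens a sentential form, membership can be checked by exhaustive search over forms no longer than the input. The following Python sketch illustrates that idea under stated assumptions: the function name `derives` and the encoding of rules as string pairs are choices made for this example, and the breadth-first search is a simple illustration rather than an efficient parser.

```python
from collections import deque

# The ten productions listed above, encoded as (left side, right side).
RULES = [
    ("S", "aBC"), ("S", "aSBC"),
    ("CB", "CZ"), ("CZ", "WZ"), ("WZ", "WC"), ("WC", "BC"),
    ("aB", "ab"), ("bB", "bb"), ("bC", "bc"), ("cC", "cc"),
]


def derives(target, rules=RULES, start="S"):
    """Breadth-first search over sentential forms up to len(target)."""
    seen = {start}
    queue = deque([start])
    while queue:
        form = queue.popleft()
        if form == target:
            return True
        for lhs, rhs in rules:
            pos = form.find(lhs)
            while pos != -1:
                new = form[:pos] + rhs + form[pos + len(lhs):]
                # Rules never shrink a form, so longer forms are dead ends.
                if len(new) <= len(target) and new not in seen:
                    seen.add(new)
                    queue.append(new)
                pos = form.find(lhs, pos + 1)
    return False
```

The length bound keeps the search space finite, so the search always terminates. This mirrors the bounded tape of a linear bounded automaton.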

Recursively enumerable (Type-0) grammars

Type-0 grammars include all formal grammars. There are no constraints on the production rules. They generate exactly the languages that can be recognized by a Turing machine, so any language that can be generated at all can be generated by a Type-0 grammar.[8] These languages are also known as the recursively enumerable or Turing-recognizable languages.[8] Note that this is different from the recursive languages, which can be decided by an always-halting Turing machine.
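The difference between recognizing and deciding can be sketched with a bounded search over derivations. In the Python sketch below, the toy grammar `TOY_RULES`, the function `semi_decide`, and the `max_forms` budget are all assumptions introduced for illustration and do not come from the cited sources. The grammar contains the length-decreasing rule bD → b, so it is not in Type-1 form, and the search can no longer be cut off at the length of the input.

```python
from collections import deque

# Hypothetical toy Type-0 grammar: S -> aSD | b, bD -> b.
TOY_RULES = [("S", "aSD"), ("S", "b"), ("bD", "b")]


def semi_decide(target, rules=TOY_RULES, start="S", max_forms=10_000):
    """Return True if target is derived, False if the whole derivation
    space was explored without success, None if the budget ran out."""
    seen = {start}
    queue = deque([start])
    explored = 0
    while queue and explored < max_forms:
        form = queue.popleft()
        explored += 1
        if form == target:
            return True
        for lhs, rhs in rules:
            pos = form.find(lhs)
            while pos != -1:
                new = form[:pos] + rhs + form[pos + len(lhs):]
                if new not in seen:  # no length bound is valid here
                    seen.add(new)
                    queue.append(new)
                pos = form.find(lhs, pos + 1)
    return False if not queue else None
```

A string in the language is eventually found, but for a string outside it the search may simply exhaust its budget. For example, `semi_decide("aab")` succeeds, while `semi_decide("ba", max_forms=200)` returns `None` rather than a definite answer.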

Citations

1. Allott, Nicholas; Lohndal, Terje; Rey, Georges (27 April 2021). "Synoptic Introduction". A Companion to Chomsky: 1–17. doi:10.1002/9781119598732.ch1. ISBN 9781119598701. S2CID 241301126.

2. Chomsky 1956.

3. Chomsky & Schützenberger 1963.

4. Kozen, Dexter C. (2007). Automata and computability. Undergraduate Texts in Computer Science. Springer. pp. 3–4. ISBN 978-0-387-94907-9.

5. Geuvers, H.; Rot, J. (2016). "Applications, Chomsky hierarchy, and Recap" (PDF). Regular Languages. Archived (PDF) from the original on 2018-11-19.

6. Sudkamp, Thomas A. (1997) [1988]. Languages and machines: An Introduction to the Theory of Computer Science. Reading, Massachusetts, USA: Addison Wesley Longman. p. 310. ISBN 978-0-201-82136-9.

7. Chomsky, Noam (1963). "Chapter 12: Formal Properties of Grammars". In Luce, R. Duncan; Bush, Robert R.; Galanter, Eugene (eds.). Handbook of Mathematical Psychology. Vol. II. John Wiley and Sons, Inc. pp. 323–418.

8. Sipser, Michael (1997). Introduction to the Theory of Computation (1st ed.). Cengage Learning. p. 130. ISBN 0-534-94728-X. Chapter: "The Church-Turing Thesis".

References

- Chomsky, Noam (1956). "Three models for the description of language" (PDF). IRE Transactions on Information Theory. 2 (3): 113–124. doi:10.1109/TIT.1956.1056813. S2CID 19519474. Archived (PDF) from the original on 2016-03-07.

- Chomsky, Noam (1959). "On certain formal properties of grammars" (PDF). Information and Control. 2 (2): 137–167. doi:10.1016/S0019-9958(59)90362-6.

- Chomsky, Noam; Schützenberger, Marcel P. (1963). "The algebraic theory of context free languages". In Braffort, P.; Hirschberg, D. (eds.). Computer Programming and Formal Systems (PDF). Amsterdam: North Holland. pp. 118–161. Archived (PDF) from the original on 2011-06-13.