Image sensor format

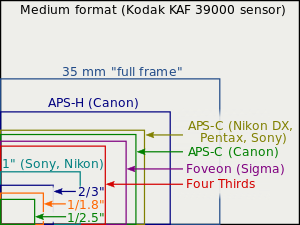

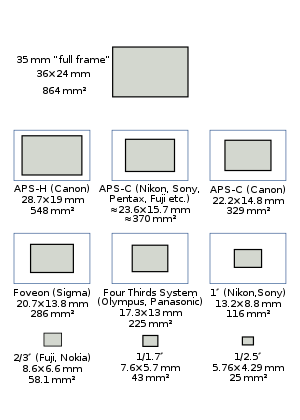

In digital photography, the image sensor format is the shape and size of the image sensor.

The image sensor format of a digital camera determines the angle of view of a particular lens when used with a particular sensor. Because the image sensors in many digital cameras are smaller than the 24 mm × 36 mm image area of full-frame 35 mm cameras, a lens of a given focal length gives a narrower field of view in such cameras.

Sensor size is often expressed as optical format in inches. Other measures are also used; see table of sensor formats and sizes below.

Lenses produced for 35 mm film cameras may mount well on the digital bodies, but the larger image circle of the 35 mm system lens allows unwanted light into the camera body, and the smaller size of the image sensor compared to 35 mm film format results in cropping of the image. This latter effect is known as field-of-view crop. The format size ratio (relative to the 35 mm film format) is known as the field-of-view crop factor, crop factor, lens factor, focal-length conversion factor, focal-length multiplier, or lens multiplier.

Sensor size and depth of field

Three possible depth-of-field comparisons between formats are discussed, applying the formulae derived in the article on depth of field. The depths of field of the two formats may be the same, or different in either order, depending on what is held constant in the comparison.

Considering a picture with the same subject distance and angle of view for two different formats:

$$\frac{\mathrm{DOF}_2}{\mathrm{DOF}_1} \approx \frac{d_1}{d_2},$$

so the DOFs are in inverse proportion to the absolute aperture diameters $d_1$ and $d_2$.

Using the same absolute aperture diameter for both formats with the "same picture" criterion (equal angle of view, magnified to the same final size) yields the same depth of field. This is equivalent to adjusting the f-number inversely in proportion to the crop factor, so a smaller sensor uses a smaller f-number. If the shutter speed is held fixed, the change in f-number needed to equalise depth of field also changes the exposure. But the aperture area is held constant, so sensors of all sizes receive the same total amount of light energy from the subject, and the smaller sensor then operates at a lower ISO setting, lower by the square of the crop factor. This condition of equal field of view, equal depth of field, equal aperture diameter, and equal exposure time is known as "equivalence".[1]

Alternatively, one might compare the depth of field of sensors receiving the same photometric exposure, so that the f-number rather than the aperture diameter is fixed. In that case the sensors operate at the same ISO setting, but the smaller sensor receives less total light, by the ratio of the sensor areas. The ratio of depths of field is then

$$\frac{\mathrm{DOF}_2}{\mathrm{DOF}_1} \approx \frac{l_1}{l_2},$$

where $l_1$ and $l_2$ are the characteristic dimensions of the formats, and thus $l_1/l_2$ is the relative crop factor between the sensors. It is this result that gives rise to the common opinion that small sensors yield greater depth of field than large ones.

An alternative is to consider the depth of field given by the same lens in conjunction with different-sized sensors (changing the angle of view). The change in depth of field is brought about by the requirement for a different degree of enlargement to achieve the same final image size. In this case the ratio of depths of field becomes

$$\frac{\mathrm{DOF}_2}{\mathrm{DOF}_1} \approx \frac{l_2}{l_1}.$$
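The three comparisons can be checked numerically. The sketch below assumes a hypothetical full-frame (diagonal about 43.3 mm) versus Four Thirds (about 21.6 mm) pair, a hypothetical 50 mm lens at f/4 on the larger format, and the approximate ratios given above; the helper names are illustrative only.

```python
def dof_ratio_same_picture(d1_mm: float, d2_mm: float) -> float:
    """Same subject distance and angle of view: DOF2/DOF1 ~ d1/d2."""
    return d1_mm / d2_mm


def dof_ratio_same_lens(l1_mm: float, l2_mm: float) -> float:
    """Same lens on both formats, enlarged to the same final size: DOF2/DOF1 ~ l2/l1."""
    return l2_mm / l1_mm


# Hypothetical example: full frame (l1 ~ 43.3 mm) vs Four Thirds (l2 ~ 21.6 mm)
l1, l2 = 43.3, 21.6
crop = l1 / l2            # relative crop factor l1/l2
f1 = 50.0                 # focal length on the larger format, mm
f2 = f1 / crop            # focal length giving the same angle of view on the smaller format
N = 4.0                   # same f-number on both formats -> same photometric exposure
d1, d2 = f1 / N, f2 / N   # absolute aperture diameters, mm

print("equal diameters:", dof_ratio_same_picture(d1, d1))
print("equal f-number: ", round(dof_ratio_same_picture(d1, d2), 2))
print("same lens:      ", round(dof_ratio_same_lens(l1, l2), 2))
```

With equal f-numbers the diameters differ by the crop factor, so the same-picture ratio $d_1/d_2$ reduces to $l_1/l_2$, which is the photometric-exposure result above.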

In practice, if a lens with a fixed focal length and a fixed aperture, made with an image circle that meets the requirements of a large sensor, is adapted to smaller sensor sizes without changing its physical properties, neither the depth of field nor the light gathering will change.

Sensor size, noise and dynamic range

Discounting photo response non-uniformity (PRNU) and dark noise variation, which are not intrinsically sensor-size dependent, the noises in an image sensor are shot noise, read noise, and dark noise. The overall signal-to-noise ratio (SNR) of a sensor, expressed as signal electrons relative to rms noise in electrons, observed at the scale of a single pixel, and assuming shot noise from a Poisson distribution of signal electrons and dark electrons, is

$$\mathrm{SNR} = \frac{P Q_e t}{\sqrt{P Q_e t + D t + N_r^2}},$$

where $P$ is the incident photon flux (photons per second in the area of a pixel), $Q_e$ is the quantum efficiency, $t$ is the exposure time, $D$ is the pixel dark current in electrons per second and $N_r$ is the pixel read noise in electrons rms.[2]
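A direct transcription of this formula is sketched below. The input values in the usage line are hypothetical and chosen only to show the three noise terms adding in quadrature.

```python
import math


def pixel_snr(P: float, Qe: float, t: float, D: float, Nr: float) -> float:
    """Per-pixel SNR = P*Qe*t / sqrt(P*Qe*t + D*t + Nr**2).

    P  : incident photon flux on the pixel, photons/s
    Qe : quantum efficiency (0..1)
    t  : exposure time, s
    D  : dark current, electrons/s
    Nr : read noise, electrons rms
    """
    signal = P * Qe * t                      # photoelectrons
    variance = signal + D * t + Nr ** 2      # shot, dark shot and read noise variances
    return signal / math.sqrt(variance)


# Hypothetical values, for illustration only
print(round(pixel_snr(P=10_000, Qe=0.5, t=0.01, D=50, Nr=3), 2))
```

Setting `D` and `Nr` to zero leaves only the shot-noise term, which the next subsections treat separately.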

Each of these noises has a different dependency on sensor size.

Exposure and photon flux

Image sensor noise can be compared across formats for a given fixed photon flux per pixel area (the P in the formulas); this analysis is useful for a fixed number of pixels with pixel area proportional to sensor area, and fixed absolute aperture diameter for a fixed imaging situation in terms of depth of field, diffraction limit at the subject, etc. Or it can be compared for a fixed focal-plane illuminance, corresponding to a fixed f-number, in which case P is proportional to pixel area, independent of sensor area. The formulas above and below can be evaluated for either case.

Shot noise

In the above equation, the shot noise SNR is given by

$$\mathrm{SNR} = \frac{P Q_e t}{\sqrt{P Q_e t}} = \sqrt{P Q_e t}.$$

Apart from the quantum efficiency, it depends on the incident photon flux and the exposure time, which together are equivalent to the exposure and the sensor area, since the exposure is the integration time multiplied by the image-plane illuminance, and illuminance is the luminous flux per unit area. Thus for equal exposures, the signal-to-noise ratios of two different size sensors of equal quantum efficiency and pixel count will (for a given final image size) be in proportion to the square root of the sensor area (or the linear scale factor of the sensor). If the exposure is constrained by the need to achieve some required depth of field (with the same shutter speed), then the exposures will be in inverse relation to the sensor area, producing the interesting result that if depth of field is a constraint, image shot noise is not dependent on sensor area.

For lenses of identical f-number, the signal-to-noise ratio increases as the square root of the pixel area, or linearly with pixel pitch. Since typical f-numbers of lenses for cell phones and DSLRs are in the same range, f/1.5–2, it is interesting to compare the performance of cameras with small and big sensors. A good cell phone camera with a typical pixel size of 1.1 μm (Samsung A8) would have about 3 times worse SNR due to shot noise than a 3.7 μm pixel interchangeable-lens camera (Panasonic G85), and 5 times worse than a 6 μm full-frame camera (Sony A7 III). Taking dynamic range into consideration makes the difference even more prominent. As such, the trend of increasing the number of "megapixels" in cell phone cameras during the last 10 years was driven more by a marketing strategy to sell "more megapixels" than by attempts to improve image quality.
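The pixel-pitch comparison can be reproduced with a short sketch. It assumes the same f-number, exposure time and quantum efficiency for every camera, so that photoelectrons per pixel scale with pixel area; the electrons-per-square-micrometre constant is hypothetical and cancels out in the ratios.

```python
import math

E_PER_UM2 = 100.0  # hypothetical photoelectrons per square micrometre for one exposure


def shot_noise_snr(pitch_um: float, e_per_um2: float = E_PER_UM2) -> float:
    """Shot-noise-limited SNR of one square pixel at a fixed focal-plane exposure."""
    signal = e_per_um2 * pitch_um ** 2   # photoelectrons scale with pixel area
    return math.sqrt(signal)             # Poisson statistics: SNR = N / sqrt(N) = sqrt(N)


phone = shot_noise_snr(1.1)
for label, pitch in [("3.7 um pixel", 3.7), ("6 um pixel", 6.0)]:
    print(f"{label}: {shot_noise_snr(pitch) / phone:.2f}x the SNR of a 1.1 um pixel")
```

The ratios come out at roughly 3.4 and 5.5, i.e. simply the ratio of pixel pitches, which the text rounds to "about 3" and "5" times.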

Read noise

The read noise is the total of all the electronic noises in the conversion chain for the pixels in the sensor array. To compare it with photon noise, it must be referred back to its equivalent in photoelectrons, which requires dividing the noise measured in volts by the conversion gain of the pixel. For an active pixel sensor, the conversion gain is the voltage at the input (gate) of the read transistor divided by the charge that generates that voltage, $CG = V_{rt}/Q_{rt}$. This is the inverse of the capacitance of the read transistor gate (and the attached floating diffusion), since capacitance $C = Q/V$.[3] Thus $CG = 1/C_{rt}$.

In general, for a planar structure such as a pixel, capacitance is proportional to area. The read noise referred to photoelectrons (the noise voltage multiplied by $C_{rt}$) is therefore proportional to pixel area, and so falls as sensor area is reduced, as long as pixel area scales with sensor area and that scaling is performed by uniformly scaling the pixel.

Considering the signal to noise ratio due to read noise at a given exposure, the signal will scale as the sensor area along with the read noise and therefore read noise SNR will be unaffected by sensor area. In a depth of field constrained situation, the exposure of the larger sensor will be reduced in proportion to the sensor area, and therefore the read noise SNR will reduce likewise.
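Both scaling arguments can be sketched under the same assumptions: pixel area proportional to sensor area, and read noise in electrons proportional to pixel area. The base signal and base read noise are hypothetical numbers, and a 4× area ratio is used purely for illustration.

```python
def read_noise_snr(exposure: float, area_scale: float,
                   base_signal_e: float = 1000.0, base_read_noise_e: float = 3.0) -> float:
    """Read-noise-limited SNR of one pixel.

    Assumes pixel area scales with sensor area (area_scale = 1 for the reference
    sensor) and that read noise in electrons scales with pixel capacitance, i.e.
    with pixel area. Base values are hypothetical.
    """
    signal = base_signal_e * exposure * area_scale   # photoelectrons
    read_noise = base_read_noise_e * area_scale      # electrons rms
    return signal / read_noise


small = read_noise_snr(exposure=1.0, area_scale=1.0)
large_same_exposure = read_noise_snr(exposure=1.0, area_scale=4.0)
large_dof_limited = read_noise_snr(exposure=1.0 / 4.0, area_scale=4.0)  # exposure cut by area ratio
print(large_same_exposure / small, large_dof_limited / small)
```

At equal exposure the ratio is 1 (unaffected by sensor area); in the depth-of-field-constrained case the larger sensor's read-noise SNR drops by the area ratio, to about 0.25.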

Dark noise

Dark current contributes two kinds of noise: dark offset, which is only partly correlated between pixels, and the shot noise associated with dark offset, which is uncorrelated between pixels. Only the shot-noise component Dt is included in the formula above, since the uncorrelated part of the dark offset is hard to predict, and the correlated or mean part is relatively easy to subtract off. The mean dark current contains contributions proportional both to the area and the linear dimension of the photodiode, with the relative proportions and scale factors depending on the design of the photodiode.[4] Thus in general the dark noise of a sensor may be expected to rise as the size of the sensor increases. However, in most sensors the mean pixel dark current at normal temperatures is small, lower than 50 e- per second,[5] thus for typical photographic exposure times dark current and its associated noises may be discounted. At very long exposure times, however, it may be a limiting factor. And even at short or medium exposure times, a few outliers in the dark-current distribution may show up as "hot pixels". Typically, for astrophotography applications sensors are cooled to reduce dark current in situations where exposures may be measured in several hundreds of seconds.
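To see why dark noise can usually be discounted but matters for long exposures, the sketch below evaluates the dark shot-noise term $\sqrt{Dt}$ from the SNR formula, using the 50 e⁻ per second upper figure quoted above and a few hypothetical exposure times.

```python
import math


def dark_shot_noise(dark_current_e_per_s: float, exposure_s: float) -> float:
    """Shot noise of the dark signal D*t (Poisson statistics), in electrons rms."""
    return math.sqrt(dark_current_e_per_s * exposure_s)


D = 50.0  # e-/s, upper bound quoted for typical sensors at normal temperatures
for t in (1 / 100, 1.0, 300.0):   # hypothetical exposure times, s
    print(f"t = {t:g} s: dark signal {D * t:g} e-, dark shot noise {dark_shot_noise(D, t):.1f} e- rms")
```

At 1/100 s the term is below one electron, far under typical read noise, while a 300 s exposure accumulates over a hundred electrons of dark shot noise, which is why long astrophotography exposures use cooled sensors.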

Dynamic range

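Dynamic range is the ratio of the largest and smallest recordable signal, the smallest being typically defined by the 'noise floor'. In the image sensor literature, the noise floor is taken as the readout noise, so $\mathrm{DR} = Q_\text{max}/\sigma_\text{readout}$,[6] where $Q_\text{max}$ is the largest signal charge the pixel can hold (its full-well capacity) and $\sigma_\text{readout}$ is the read noise (the same quantity as $N_r$ in the SNR calculation[2]).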
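Dynamic range is often quoted in stops (log base 2) or in decibels (20 log₁₀ of the electron ratio). A minimal conversion sketch, using a hypothetical pixel with a 30,000 e⁻ full well and 3 e⁻ rms read noise:

```python
import math


def dynamic_range(full_well_e: float, read_noise_e: float) -> tuple[float, float, float]:
    """Return DR = Q_max / sigma_readout as a plain ratio, in stops (log2) and in dB (20*log10)."""
    ratio = full_well_e / read_noise_e
    return ratio, math.log2(ratio), 20 * math.log10(ratio)


# Hypothetical pixel: 30,000 e- full well, 3 e- rms read noise
ratio, stops, db = dynamic_range(30_000, 3)
print(f"{ratio:.0f}:1, {stops:.1f} stops, {db:.0f} dB")
```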

Sensor size and diffraction

The resolution of all optical systems is limited by diffraction. One way of considering the effect that diffraction has on cameras using different-sized sensors is to consider the modulation transfer function (MTF). Diffraction is one of the factors that contribute to the overall system MTF. Other factors are typically the MTFs of the lens, anti-aliasing filter and sensor sampling window.[7] The spatial cut-off frequency due to diffraction through a lens aperture is

$$\xi_\text{cutoff} = \frac{1}{\lambda N},$$

where $\lambda$ is the wavelength of the light passing through the system and $N$ is the f-number of the lens. If that aperture is circular, as are (approximately) most photographic apertures, then the MTF is given by

$$\mathrm{MTF}\left(\frac{\xi}{\xi_\text{cutoff}}\right) = \frac{2}{\pi}\left\{\cos^{-1}\left(\frac{\xi}{\xi_\text{cutoff}}\right) - \frac{\xi}{\xi_\text{cutoff}}\left[1 - \left(\frac{\xi}{\xi_\text{cutoff}}\right)^2\right]^{1/2}\right\}$$

for $\xi < \xi_\text{cutoff}$ and $\mathrm{MTF} = 0$ for $\xi \ge \xi_\text{cutoff}$.[8] The diffraction-based factor of the system MTF will therefore scale according to $\xi_\text{cutoff}$ and in turn according to $1/N$ (for the same light wavelength).
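The cut-off frequency and circular-aperture MTF above translate directly into code. This sketch assumes an aberration-free lens and a single wavelength (550 nm is used as a hypothetical green); it models only the diffraction factor, not the lens, filter or sampling MTFs.

```python
import math


def diffraction_cutoff(wavelength_mm: float, f_number: float) -> float:
    """xi_cutoff = 1 / (lambda * N), in cycles per mm when lambda is in mm."""
    return 1.0 / (wavelength_mm * f_number)


def diffraction_mtf(xi: float, wavelength_mm: float, f_number: float) -> float:
    """Diffraction MTF of an aberration-free circular aperture at spatial frequency xi."""
    s = xi / diffraction_cutoff(wavelength_mm, f_number)   # normalised frequency
    if s >= 1.0:
        return 0.0
    return (2 / math.pi) * (math.acos(s) - s * math.sqrt(1 - s * s))


wavelength = 550e-6  # mm (550 nm, assumed)
for N in (2.8, 8.0, 16.0):
    c = diffraction_cutoff(wavelength, N)
    print(f"f/{N:g}: cutoff {c:.0f} cy/mm, MTF at 50 cy/mm = {diffraction_mtf(50, wavelength, N):.2f}")
```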

In considering the effect of sensor size, and its effect on the final image, the different magnification required to obtain the same size image for viewing must be accounted for, resulting in an additional scale factor of $1/C$, where $C$ is the relative crop factor, making the overall scale factor $1/(NC)$. Considering the three cases above:

For the 'same picture' conditions (same angle of view, subject distance and depth of field), the f-numbers are in the ratio $1/C$, so the scale factor for the diffraction MTF is 1, leading to the conclusion that the diffraction MTF at a given depth of field is independent of sensor size.

In both the 'same photometric exposure' and 'same lens' conditions, the f-number is not changed, and thus the spatial cutoff and resultant MTF on the sensor are unchanged, leaving the MTF in the viewed image to be scaled as the magnification, or inversely as the crop factor.
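The three cases can be checked with the overall scale factor $1/(NC)$. In this sketch the reference f-number (f/8) and relative crop factor (2) are hypothetical; the printed values are the viewed-image diffraction scale factors relative to the reference format.

```python
def viewed_diffraction_scale(f_number: float, crop: float) -> float:
    """Scale factor of the diffraction MTF in the viewed image: 1 / (N * C)."""
    return 1.0 / (f_number * crop)


N_ref, C = 8.0, 2.0   # hypothetical reference f-number and relative crop factor
reference = viewed_diffraction_scale(N_ref, 1.0)
same_picture = viewed_diffraction_scale(N_ref / C, C)   # f-number reduced in the ratio 1/C
same_f_number = viewed_diffraction_scale(N_ref, C)      # 'same photometric exposure' or 'same lens'
print(same_picture / reference, same_f_number / reference)  # 1.0 0.5
```

The same-picture case cancels exactly, while keeping the f-number leaves a factor of $1/C$.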

Sensor format and lens size

It might be expected that lenses appropriate for a range of sensor sizes could be produced by simply scaling the same designs in proportion to the crop factor.[9] Such an exercise would in theory produce a lens with the same f-number and angle of view, with a size proportional to the sensor crop factor. In practice, simple scaling of lens designs is not always achievable, due to factors such as the non-scalability of manufacturing tolerance, structural integrity of glass lenses of different sizes and available manufacturing techniques and costs. Moreover, to maintain the same absolute amount of information in an image (which can be measured as the space-bandwidth product[10]) the lens for a smaller sensor requires a greater resolving power. The development of the 'Tessar' lens is discussed by Nasse,[11] and shows its transformation from an f/6.3 lens for plate cameras using the original three-group configuration through to an f/2.8 5.2 mm four-element optic with eight extremely aspheric surfaces, economically manufacturable because of its small size. Its performance is 'better than the best 35 mm lenses – but only for a very small image'.

In summary, as sensor size reduces, the accompanying lens designs will change, often quite radically, to take advantage of manufacturing techniques made available due to the reduced size. The functionality of such lenses can also take advantage of these, with extreme zoom ranges becoming possible. These lenses are often very large in relation to sensor size, but with a small sensor can be fitted into a compact package.

A small body requires a small lens, which in turn requires a small sensor, so to keep smartphones slim and light, smartphone manufacturers use tiny sensors, usually smaller than the 1/2.3" sensors used in most bridge cameras. At one time only the Nokia 808 PureView used a 1/1.2" sensor, almost three times the area of a 1/2.3" sensor. Bigger sensors have the advantage of better image quality, but with improvements in sensor technology, smaller sensors can achieve the feats of earlier larger sensors. These improvements allow smartphone manufacturers to use image sensors as small as 1/4" without sacrificing too much image quality compared to budget point-and-shoot cameras.[12]

Active area of the sensor

To calculate a camera's angle of view, one should use the size of the active area of the sensor, that is, the area of the sensor on which the image is formed in a given mode of the camera. The active area may be smaller than the image sensor, and it can differ between modes of operation of the same camera. Its size depends on the aspect ratio of the sensor and the aspect ratio of the camera's output image, and can also depend on the number of pixels used in a given mode. The active area size and the lens focal length determine the angle of view.[13]
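As a minimal sketch, the angle of view across one dimension of the active area can be computed with the standard rectilinear formula $2\arctan\bigl(d/(2f)\bigr)$, assuming a lens focused at infinity. The 36 mm sensor, 50 mm lens and 30 mm cropped mode below are hypothetical values.

```python
import math


def angle_of_view_deg(active_dim_mm: float, focal_length_mm: float) -> float:
    """Angle of view across one dimension of the active area, assuming a
    rectilinear lens focused at infinity: 2 * atan(d / (2 f))."""
    return math.degrees(2 * math.atan(active_dim_mm / (2 * focal_length_mm)))


# Hypothetical camera with a 36 mm wide sensor and a 50 mm lens.
# A hypothetical cropped mode uses only the central 30 mm of the width.
for mode, width in [("full width", 36.0), ("cropped mode", 30.0)]:
    print(f"{mode}: {angle_of_view_deg(width, 50.0):.1f} deg horizontal")
```

The same lens gives a narrower angle of view in the cropped mode, which is why the active area, not the full sensor, should be used.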

Sensor size and shading effects

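Semiconductor image sensors can suffer from shading effects at large apertures and at the periphery of the image field, due to the geometry of the light cone projected from the exit pupil of the lens to a point, or pixel, on the sensor surface. The effects are discussed in detail by Catrysse and Wandell.[14] In the context of this discussion, the most important result from their analysis is that to ensure a full transfer of light energy between two coupled optical systems, such as the lens' exit pupil and a pixel's photoreceptor, the geometrical extent (also known as etendue or light throughput) of the objective lens / pixel system must be smaller than or equal to the geometrical extent of the microlens / photoreceptor system. The geometrical extent of the objective lens / pixel system is given by

$$G_\text{objective} \simeq \frac{w_\text{pixel}}{2\,(f/\#)_\text{objective}},$$

where $w_\text{pixel}$ is the width of the pixel and $(f/\#)_\text{objective}$ is the f-number of the objective lens. The geometrical extent of the microlens / photoreceptor system is given by

$$G_\text{pixel} \simeq \frac{w_\text{photoreceptor}}{2\,(f/\#)_\text{microlens}},$$

where $w_\text{photoreceptor}$ is the width of the photoreceptor and $(f/\#)_\text{microlens}$ is the f-number of the microlens.

In order to avoid shading, therefore

$$G_\text{pixel} \ge G_\text{objective}, \quad\text{i.e.}\quad \frac{w_\text{photoreceptor}}{(f/\#)_\text{microlens}} \ge \frac{w_\text{pixel}}{(f/\#)_\text{objective}}.$$

If $w_\text{photoreceptor}/w_\text{pixel} = ff$, the linear fill factor of the pixel, then the condition becomes

$$(f/\#)_\text{microlens} \le ff \times (f/\#)_\text{objective}.$$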
Thus if shading is to be avoided the f-number of the microlens must be smaller than the f-number of the taking lens by at least a factor equal to the linear fill factor of the pixel. The f-number of the microlens is determined ultimately by the width of the pixel and its height above the silicon, which determines its focal length. In turn, this is determined by the height of the metallisation layers, also known as the 'stack height'. For a given stack height, the f-number of the microlenses will increase as pixel size reduces, and thus the objective lens f-number at which shading occurs will tend to increase.[a]
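The final condition can be turned into a quick check, and rearranged to give the objective f-number at which shading begins. The microlens f-number (1.2) and linear fill factor (0.6) in this sketch are hypothetical values chosen only for illustration.

```python
def shading_free(microlens_f_number: float, objective_f_number: float,
                 linear_fill_factor: float) -> bool:
    """Condition derived above: (f/#)_microlens <= ff * (f/#)_objective."""
    return microlens_f_number <= linear_fill_factor * objective_f_number


def shading_onset_f_number(microlens_f_number: float, linear_fill_factor: float) -> float:
    """Smallest objective f-number that still satisfies the condition."""
    return microlens_f_number / linear_fill_factor


# Hypothetical pixel: f/1.2 microlens, linear fill factor 0.6
print(f"shading below about f/{shading_onset_f_number(1.2, 0.6):.1f}")
for N in (1.8, 2.8):
    print(f"objective f/{N}: shading-free = {shading_free(1.2, N, 0.6)}")
```

Raising the microlens f-number (as happens when pixels shrink at a fixed stack height) pushes the onset to larger objective f-numbers, matching the trend described above.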

In order to maintain pixel counts, smaller sensors will tend to have smaller pixels, while at the same time smaller objective lens f-numbers are required to maximise the amount of light projected on the sensor. To combat the effect discussed above, smaller-format pixels include engineering design features that allow the f-number of their microlenses to be reduced. These may include simplified pixel designs which require less metallisation, 'light pipes' built within the pixel to bring its apparent surface closer to the microlens, and 'back side illumination', in which the wafer is thinned to expose the rear of the photodetectors and the microlens layer is placed directly on that surface, rather than on the front side with its wiring layers.[b]

Common image sensor formats

For interchangeable-lens cameras

Some professional DSLRs, SLTs and mirrorless cameras use full-frame sensors, equivalent to the size of a frame of 35 mm film.

Most consumer-level DSLRs, SLTs and mirrorless cameras use relatively large sensors, either somewhat under the size of a frame of APS-C film, with a crop factor of 1.5–1.6; or 30% smaller than that, with a crop factor of 2.0 (this is the Four Thirds System, adopted by Olympus and Panasonic).

As of November 2013, there is only one mirrorless model equipped with a very small sensor, more typical of compact cameras: the Pentax Q7, with a 1/1.7" sensor (4.55 crop factor). See the Smaller sensors section below.

Many different terms are used in marketing to describe DSLR/SLT/mirrorless sensor formats, including the following:

- 860 mm² area Full-frame digital SLR format, with sensor dimensions nearly equal to those of 35 mm film (36×24 mm) from Pentax, Panasonic, Leica, Nikon, Canon, Sony and Sigma.

- 370 mm² area APS-C standard format from Nikon, Pentax, Sony, Fujifilm, Sigma (crop factor 1.5) (Actual APS-C film is bigger, however.)

- 330 mm² area APS-C smaller format from Canon (crop factor 1.6)

- 225 mm² area Micro Four Thirds System format from Panasonic, Olympus, Blackmagic and Polaroid (crop factor 2.0)

Obsolescent and out-of-production sensor sizes include:

- 548 mm² area Leica's M8 and M8.2 sensor (crop factor 1.33). Current M-series sensors are effectively full-frame (crop factor 1.0).

- 548 mm² area Canon's APS-H format for high-speed pro-level DSLRs (crop factor 1.3). Current 1D/5D-series sensors are effectively full-frame (crop factor 1.0).

- 548 mm² area APS-H format for the high-end mirrorless SD Quattro H from Sigma (crop factor 1.35)

- 370 mm² area APS-C crop factor 1.5 format from Epson, Samsung NX, Konica Minolta.

- 286 mm² area Foveon X3 format used in Sigma SD-series DSLRs and DP-series mirrorless (crop factor 1.7). Later models such as the SD1, DP2 Merrill and most of the Quattro series use a crop factor 1.5 Foveon sensor; the even more recent Quattro H mirrorless uses an APS-H Foveon sensor with a 1.35 crop factor.

- 225 mm² area Four Thirds System format from Olympus (crop factor 2.0)

- 116 mm² area 1" Nikon CX format used in Nikon 1 series[17] and Samsung mini-NX series (crop factor 2.7)

- 43 mm² area 1/1.7" Pentax Q7 (4.55 crop factor)

- 30 mm² area 1/2.3" original Pentax Q (5.6 crop factor). Current Q-series cameras have a crop factor of 4.55.

When full-frame sensors were first introduced, production costs could exceed twenty times the cost of an APS-C sensor. Only twenty full-frame sensors can be produced on an 8-inch (20 cm) silicon wafer, which would fit 100 or more APS-C sensors, and there is a significant reduction in yield due to the large area for contaminants per component. Additionally, full-frame sensor fabrication originally required three separate exposures during each step of the photolithography process, which requires separate masks and quality control steps. Canon selected the intermediate APS-H size, since it was at the time the largest that could be patterned with a single mask, helping to control production costs and manage yields.[18] Newer photolithography equipment now allows single-pass exposures for full-frame sensors, although other size-related production constraints remain much the same.

Due to the ever-changing constraints of semiconductor fabrication and processing, and because camera manufacturers often source sensors from third-party foundries, it is common for sensor dimensions to vary slightly within the same nominal format. For example, the Nikon D3 and D700 cameras' nominally full-frame sensors actually measure 36 × 23.9 mm, slightly smaller than a 36 × 24 mm frame of 35 mm film. As another example, the Pentax K200D's sensor (made by Sony) measures 23.5 × 15.7 mm, while the contemporaneous K20D's sensor (made by Samsung) measures 23.4 × 15.6 mm.

Most of these image sensor formats approximate the 3:2 aspect ratio of 35 mm film. Again, the Four Thirds System is a notable exception, with an aspect ratio of 4:3 as seen in most compact digital cameras (see below).

Smaller sensors

Most sensors are made for camera phones, compact digital cameras, and bridge cameras. Most image sensors equipping compact cameras have an aspect ratio of 4:3. This matches the aspect ratio of the popular SVGA, XGA, and SXGA display resolutions at the time of the first digital cameras, allowing images to be displayed on usual monitors without cropping.

As of December 2010, most compact digital cameras used small 1/2.3" sensors. Such cameras include the Canon PowerShot SX230 IS, Fuji FinePix Z90 and Nikon Coolpix S9100. Some older digital cameras (mostly from 2005–2010) used even smaller 1/2.5" sensors: these include the Panasonic Lumix DMC-FS62, Canon PowerShot SX120 IS, Sony Cyber-shot DSC-S700, and Casio Exilim EX-Z80.

As of 2018, high-end compact cameras using one-inch sensors, which have nearly four times the area of those equipping common compacts, include the Canon PowerShot G-series (G3 X to G9 X), Sony DSC RX100 series, Panasonic Lumix TZ100 and Panasonic DMC-LX15. Canon uses an APS-C sensor in its top model, the PowerShot G1 X Mark III.

Finally, Sony has the DSC-RX1 and DSC-RX1R cameras in their lineup, which have a full-frame sensor usually only used in professional DSLRs, SLTs and MILCs.

Due to the size constraints of powerful zoom objectives, most current bridge cameras have 1/2.3" sensors, as small as those used in common more compact cameras. As lens sizes are proportional to the image sensor size, smaller sensors enable large zoom amounts with moderate size lenses. In 2011 the high-end Fujifilm X-S1 was equipped with a much larger 2/3" sensor. In 2013–2014, both Sony (Cyber-shot DSC-RX10) and Panasonic (Lumix DMC-FZ1000) produced bridge cameras with 1" sensors.

The sensors of camera phones are typically much smaller than those of typical compact cameras, allowing greater miniaturization of the electrical and optical components. Sensor sizes of around 1/6" are common in camera phones, webcams and digital camcorders. The Nokia N8's 1/1.83" sensor was the largest in a phone in late 2011. The Nokia 808 surpasses compact cameras with its 41 million pixels, 1/1.2" sensor.[19]

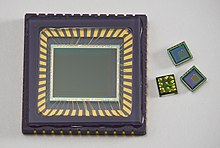

Medium-format digital sensors

The largest digital sensors in commercially available cameras are described as "medium format", in reference to film formats of similar dimensions. Although the most common medium format film, the 120 roll, is 6 cm (2.4 in) wide, and is most commonly shot square, the most common "medium-format" digital sensor sizes are approximately 48 mm × 36 mm (1.9 in × 1.4 in), which is roughly twice the size of a full-frame DSLR sensor format.

Available CCD sensors include Phase One's P65+ digital back with Dalsa's 53.9 mm × 40.4 mm (2.12 in × 1.59 in) sensor containing 60.5 megapixels[20] and Leica's "S-System" DSLR with a 45 mm × 30 mm (1.8 in × 1.2 in) sensor containing 37 megapixels.[21] In 2010, Pentax released the 40MP 645D medium format DSLR with a 44 mm × 33 mm (1.7 in × 1.3 in) CCD sensor;[22] later models of the 645 series kept the same sensor size but replaced the CCD with a CMOS sensor. In 2016, Hasselblad announced the X1D, a 50MP medium-format mirrorless camera, with a 44 mm × 33 mm (1.7 in × 1.3 in) CMOS sensor.[23] In late 2016, Fujifilm also announced its new Fujifilm GFX 50S, a medium-format mirrorless entry into the market, with a 43.8 mm × 32.9 mm (1.72 in × 1.30 in) CMOS sensor and 51.4MP.[24][25]

Table of sensor formats and sizes

Sensor sizes are expressed in inch notation because, at the time of the popularization of digital image sensors, they were used to replace video camera tubes. The common 1" outside diameter circular video camera tubes have a rectangular photosensitive area about 16 mm on the diagonal, so a digital sensor with a 16 mm diagonal size is a 1" video tube equivalent. The name of a 1" digital sensor should more accurately be read as "one inch video camera tube equivalent" sensor. Current digital image sensor size descriptors are the video camera tube equivalency size, not the actual size of the sensor. For example, a 1" sensor has a diagonal measurement of 16 mm.[26][27]

Sizes are often expressed as a fraction of an inch, with a one in the numerator, and a decimal number in the denominator. For example, 1/2.5 converts to 2/5 as a simple fraction, or 0.4 as a decimal number. This "inch" system gives a result approximately 1.5 times the length of the diagonal of the sensor. This "optical format" measure goes back to the way image sizes of video cameras used until the late 1980s were expressed, referring to the outside diameter of the glass envelope of the video camera tube. David Pogue of The New York Times states that "the actual sensor size is much smaller than what the camera companies publish – about one-third smaller." For example, a camera advertising a 1/2.7" sensor does not have a sensor with a diagonal of 0.37 in (9.4 mm); instead, the diagonal is closer to 0.26 in (6.6 mm).[28][29][30] Instead of "formats", these sensor sizes are often called types, as in "1/2-inch-type CCD."
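The fraction arithmetic above can be sketched as follows. The diagonal estimate assumes only the "1-inch type ≈ 16 mm diagonal" convention described earlier; it is a rough rule, not a standard, and for small types it tends to underestimate (the table below lists 7.18 mm for 1/2.5" and 6.72 mm for 1/2.7").

```python
def format_value(denominator: float) -> float:
    """Inch 'type' 1/x expressed as a decimal, e.g. 1/2.5 -> 0.4."""
    return 1.0 / denominator


def approx_diagonal_mm(denominator: float, mm_per_type_inch: float = 16.0) -> float:
    """Very rough diagonal estimate, assuming the 1-inch-type ~ 16 mm convention;
    real sensors of the same nominal type can differ noticeably."""
    return mm_per_type_inch * format_value(denominator)


for x in (2.5, 2.7, 1.0):
    print(f'1/{x:g}" type: {format_value(x) * 25.4:.1f} mm nominal, ~{approx_diagonal_mm(x):.1f} mm diagonal')
```

The gap between the "nominal" inch figure and the estimated diagonal is the roughly 1.5× discrepancy described above.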

Because inch-based sensor formats are not standardized, their exact dimensions may vary, but those listed are typical.[29] The listed sensor areas span more than a factor of 1000 and are proportional to the maximum possible collection of light and image resolution (same lens speed, i.e., minimum f-number), but in practice are not directly proportional to image noise or resolution due to other limitations. See comparisons.[31][32] Film format sizes are also included, for comparison. The application examples of phone or camera may not show the exact sensor sizes.

| Type | Diagonal (mm) | Width (mm) | Height (mm) | Aspect Ratio | Area (mm2) | Stops (area)[A] | Crop factor[B] |

|---|---|---|---|---|---|---|---|

| 1/10" | 1.60 | 1.28 | 0.96 | 4:3 | 1.23 | −9.46 | 27.04 |

| 1/8" (Sony DCR-SR68, DCR-DVD110E) | 2.00 | 1.60 | 1.20 | 4:3 | 1.92 | −8.81 | 21.65 |

| 1/6" (Panasonic SDR-H20, SDR-H200) | 3.00 | 2.40 | 1.80 | 4:3 | 4.32 | −7.64 | 14.14 |

| 1/4"[33] | 4.50 | 3.60 | 2.70 | 4:3 | 9.72 | −6.47 | 10.81 |

| 1/3.6" (Nokia Lumia 720)[34] | 5.00 | 4.00 | 3.00 | 4:3 | 12.0 | −6.17 | 8.65 |

| 1/3.2" (iPhone 5)[35] | 5.68 | 4.54 | 3.42 | 4:3 | 15.50 | −5.80 | 7.61 |

| 1/3.09" Sony EXMOR IMX351[36] | 5.82 | 4.66 | 3.5 | 4:3 | 16.3 | −5.73 | 7.43 |

| Standard 8 mm film frame | 5.94 | 4.8 | 3.5 | 11:8 | 16.8 | −5.68 | 7.28 |

| 1/3" (iPhone 5S, iPhone 6, LG G3[37]) | 6.00 | 4.80 | 3.60 | 4:3 | 17.30 | −5.64 | 7.21 |

| 1/2.9" Sony EXMOR IMX322[38] | 6.23 | 4.98 | 3.74 | 4:3 | 18.63 | −5.54 | 6.92 |

| 1/2.7" Fujifilm 2800 Zoom | 6.72 | 5.37 | 4.04 | 4:3 | 21.70 | −5.32 | 6.44 |

| Super 8 mm film frame | 7.04 | 5.79 | 4.01 | 13:9 | 23.22 | −5.22 | 6.15 |

| 1/2.5" (Nokia Lumia 1520, Sony Cyber-shot DSC-T5, iPhone XS[39]) | 7.18 | 5.76 | 4.29 | 4:3 | 24.70 | −5.13 | 6.02 |

| 1/2.3" (Pentax Q, Sony Cyber-shot DSC-W330, GoPro HERO3, Panasonic HX-A500, Google Pixel/Pixel+, DJI Phantom 3[40]/Mavic 2 Zoom[41]), Nikon P1000/P900 | 7.66 | 6.17 | 4.55 | 4:3 | 28.50 | −4.94 | 5.64 |

| 1/2.3" Sony Exmor IMX220[42] | 7.87 | 6.30 | 4.72 | 4:3 | 29.73 | −4.86 | 5.49 |

| 1/2" (Fujifilm HS30EXR, Xiaomi Mi 9, OnePlus 7, Espros EPC 660, DJI Mavic Air 2) | 8.00 | 6.40 | 4.80 | 4:3 | 30.70 | −4.81 | 5.41 |

| 1/1.8" (Nokia N8) (Olympus C-5050, C-5060, C-7070) | 8.93 | 7.18 | 5.32 | 4:3 | 38.20 | −4.50 | 4.84 |

| 1/1.7" (Pentax Q7, Canon G10, G15, Huawei P20 Pro, Huawei P30 Pro, Huawei Mate 20 Pro) | 9.50 | 7.60 | 5.70 | 4:3 | 43.30 | −4.32 | 4.55 |

| 1/1.6" (Fujifilm f200exr [1]) | 10.07 | 8.08 | 6.01 | 4:3 | 48.56 | −4.15 | 4.30 |

| 2/3" (Nokia Lumia 1020, Fujifilm X10, X20, XF1) | 11.00 | 8.80 | 6.60 | 4:3 | 58.10 | −3.89 | 3.93 |

| 1/1.33" (Samsung Galaxy S20 Ultra)[43] | 12 | 9.6 | 7.2 | 4:3 | 69.12 | −3.64 | 3.58 |

| Standard 16 mm film frame | 12.70 | 10.26 | 7.49 | 11:8 | 76.85 | −3.49 | 3.41 |

| 1/1.2" (Nokia 808 PureView) | 13.33 | 10.67 | 8.00 | 4:3 | 85.33 | −3.34 | 3.24 |

| 1/1.12" (Xiaomi Mi 11 Ultra) | 14.29 | 11.43 | 8.57 | 4:3 | 97.96 | 3.03 | |

| Blackmagic Pocket Cinema Camera & Blackmagic Studio Camera | 14.32 | 12.48 | 7.02 | 16:9 | 87.6 | −3.30 | 3.02 |

| Super 16 mm film frame | 14.54 | 12.52 | 7.41 | 5:3 | 92.80 | −3.22 | 2.97 |

| 1" (Nikon CX, Sony RX100, Sony RX10, Sony ZV1, Samsung NX Mini) | 15.86 | 13.20 | 8.80 | 3:2 | 116 | −2.89 | 2.72 |

| 1" Digital Bolex d16 | 16.00 | 12.80 | 9.60 | 4:3 | 123 | −2.81 | 2.70 |

| 1.1" Sony IMX253[44] | 17.46 | 14.10 | 10.30 | 11:8 | 145 | −2.57 | 2.47 |

| Blackmagic Cinema Camera EF | 18.13 | 15.81 | 8.88 | 16:9 | 140 | −2.62 | 2.38 |

| Blackmagic Pocket Cinema Camera 4K | 21.44 | 18.96 | 10 | 19:10 | 190 | −2.19 | 2.01 |

| Four Thirds, Micro Four Thirds ("4/3", "m4/3") | 21.60 | 17.30 | 13 | 4:3 | 225 | −1.94 | 2.00 |

| Blackmagic Production Camera/URSA/URSA Mini 4K | 24.23 | 21.12 | 11.88 | 16:9 | 251 | −1.78 | 1.79 |

| 1.5" Canon PowerShot G1 X Mark II | 23.36 | 18.70 | 14 | 4:3 | 262 | −1.72 | 1.85 |

| "35mm" 2 Perf Techniscope | 23.85 | 21.95 | 9.35 | 7:3 | 205.23 | −2.07 | 1.81 |

| original Sigma Foveon X3 | 24.90 | 20.70 | 13.80 | 3:2 | 286 | −1.60 | 1.74 |

| RED DRAGON 4.5K (RAVEN) | 25.50 | 23.00 | 10.80 | 19:9 | 248.4 | −1.80 | 1.66 |

| "Super 35mm" 2 Perf | 26.58 | 24.89 | 9.35 | 8:3 | 232.7 | −1.89 | 1.62 |

| Canon EF-S, APS-C | 26.82 | 22.30 | 14.90 | 3:2 | 332 | −1.38 | 1.61 |

| Standard 35 mm film frame (movie) | 27.20 | 22.0 | 16.0 | 11:8 | 352 | −1.30 | 1.59 |

| Blackmagic URSA Mini/Pro 4.6K | 29 | 25.34 | 14.25 | 16:9 | 361 | −1.26 | 1.49 |

| APS-C (Sony α, Sony E, Nikon DX, Pentax K, Samsung NX, Fuji X) | 28.2–28.4 | 23.6–23.7 | 15.60 | 3:2 | 368–370 | −1.23 to −1.22 | 1.52–1.54 |

| Super 35 mm film 3 perf | 28.48 | 24.89 | 13.86 | 9:5 | 344.97 | −1.32 | 1.51 |

| RED DRAGON 5K S35 | 28.9 | 25.6 | 13.5 | 17:9 | 345.6 | −1.32 | 1.49 |

| Super 35mm film 4 perf | 31.11 | 24.89 | 18.66 | 4:3 | 464 | −0.90 | 1.39 |

| Canon APS-H | 33.50 | 27.90 | 18.60 | 3:2 | 519 | −0.74 | 1.29 |

| ARRI ALEV III (ALEXA SXT, ALEXA MINI, AMIRA), RED HELIUM 8K S35 | 33.80 | 29.90 | 15.77 | 17:9 | 471.52 | −0.87 | 1.28 |

| RED DRAGON 6K S35 | 34.50 | 30.7 | 15.8 | 35:18 | 485.06 | −0.83 | 1.25 |

| 35 mm film full-frame, (Canon EF, Nikon FX, Pentax K-1, Sony α, Sony FE, Leica M) | 43.1–43.3 | 35.8–36 | 23.9–24 | 3:2 | 856–864 | 0 | 1.0 |

| ARRI ALEXA LF | 44.71 | 36.70 | 25.54 | 13:9 | 937.32 | 0.12 | 0.96 |

| RED MONSTRO 8K VV, Panavision Millenium DXL2 | 46.31 | 40.96 | 21.60 | 17:9 | 884.74 | 0.03 | 0.93 |

| Leica S | 54 | 45 | 30 | 3:2 | 1350 | 0.64 | 0.80 |

| Pentax 645D, Hasselblad X1D-50c, Hasselblad H6D-50c, CFV-50c, Fuji GFX 50S | 55 | 43.8 | 32.9 | 4:3 | 1452 | 0.75 | 0.79 |

| Standard 65/70 mm film frame | 57.30 | 52.48 | 23.01 | 7:3 | 1208 | 0.48 | 0.76 |

| ARRI ALEXA 65 | 59.86 | 54.12 | 25.58 | 19:9 | 1384.39 | 0.68 | 0.72 |

| Kodak KAF 39000 CCD[47] | 61.30 | 49 | 36.80 | 4:3 | 1803 | 1.06 | 0.71 |

| Leaf AFi 10 | 66.57 | 56 | 36 | 14:9 | 2016 | 1.22 | 0.65 |

| Medium-format (Hasselblad H5D-60c, Hasselblad H6D-100c)[48] | 67.08 | 53.7 | 40.2 | 4:3 | 2159 | 1.32 | 0.65 |

| Phase One P 65+, IQ160, IQ180 | 67.40 | 53.90 | 40.40 | 4:3 | 2178 | 1.33 | 0.64 |

| Medium-format 6×4.5 cm (also called 645 format) | 70 | 42 | 56 | 3:4 | 2352 | 1.44 | 0.614 |

| Medium-format 6×6 cm | 79 | 56 | 56 | 1:1 | 3136 | 1.86 | 0.538 |

| IMAX film frame | 87.91 | 70.41 | 52.63 | 4:3 | 3706 | 2.10 | 0.49 |

| Medium-format 6×7 cm | 89.6 | 70 | 56 | 5:4 | 3920 | 2.18 | 0.469 |

| Medium-format 6×8 cm | 94.4 | 76 | 56 | 4:3 | 4256 | 2.30 | 0.458 |

| Medium-format 6×9 cm | 101 | 84 | 56 | 3:2 | 4704 | 2.44 | 0.43 |

| Large-format film 4×5 inch | 150 | 121 | 97 | 5:4 | 11737 | 3.76 | 0.29 |

| Large-format film 5×7 inch | 210 | 178 | 127 | 7:5 | 22606 | 4.71 | 0.238 |

| Large-format film 8×10 inch | 300 | 254 | 203 | 5:4 | 51562 | 5.90 | 0.143 |
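The derived columns in the table (diagonal, area, stops relative to full frame, and crop factor) follow from each format's width and height. The minimal Python sketch below reproduces them under stated assumptions: a 36 mm × 24 mm full-frame reference (diagonal about 43.27 mm, area 864 mm²), a crop factor defined as the ratio of diagonals, and "stops" taken as the base-2 logarithm of the area ratio. The function name `sensor_metrics` is hypothetical and only for illustration.

```python
import math

# Assumed full-frame reference: 36 mm x 24 mm
# (the table's full-frame row spans 35.8-36 mm x 23.9-24 mm).
FF_WIDTH_MM = 36.0
FF_HEIGHT_MM = 24.0
FF_DIAGONAL_MM = math.hypot(FF_WIDTH_MM, FF_HEIGHT_MM)  # about 43.27 mm
FF_AREA_MM2 = FF_WIDTH_MM * FF_HEIGHT_MM                # 864 mm^2


def sensor_metrics(width_mm: float, height_mm: float) -> dict:
    """Derive diagonal, area, stops and crop factor from width and height (hypothetical helper)."""
    diagonal = math.hypot(width_mm, height_mm)
    area = width_mm * height_mm
    return {
        "diagonal_mm": diagonal,
        "area_mm2": area,
        # Area relative to full frame, in stops (log base 2 of the area ratio).
        "stops": math.log2(area / FF_AREA_MM2),
        # Crop factor as the ratio of diagonals (full frame / this format).
        "crop_factor": FF_DIAGONAL_MM / diagonal,
    }


if __name__ == "__main__":
    # Canon APS-H row: 27.90 mm x 18.60 mm
    m = sensor_metrics(27.90, 18.60)
    print(f"{m['diagonal_mm']:.2f} mm, {m['area_mm2']:.0f} mm^2, "
          f"{m['stops']:+.2f} stops, crop {m['crop_factor']:.2f}")
```

For the Canon APS-H row, this reproduces the table's area (519 mm²), stops (−0.74), and crop factor (1.29) to the precision shown. Some rows, notably the large-format film sizes, list diagonals that differ from the value computed from width and height, so the table may use nominal rather than computed diagonals in places.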

See also

- Full-frame digital SLR

- Sensor size and angle of view

- 35 mm equivalent focal length

- Film format

- Digital versus film photography

- List of large sensor interchangeable-lens video cameras

- List of sensors used in digital cameras

- Angle of view

- Crop factor

- Field of view

Notes

Footnotes and references

- ^ "What is equivalence and why should I care?". DP Review. 2014-07-07. Retrieved 2017-05-03.

- ^ a b Fellers, Thomas J.; Davidson, Michael W. "CCD Noise Sources and Signal-to-Noise Ratio". Hamamatsu Corporation. Retrieved 20 November 2013.

- ^ Aptina Imaging Corporation. "Leveraging Dynamic Response Pixel Technology to Optimize Inter-scene Dynamic Range" (PDF). Aptina Imaging Corporation. Retrieved 17 December 2011.

- ^ Loukianova, Natalia V.; Folkerts, Hein Otto; Maas, Joris P. V.; Verbugt, Daniël W. E.; Mierop, Adri J.; Hoekstra, Willem; Roks, Edwin; Theuwissen, Albert J. P. (January 2003). "Leakage Current Modeling of Test Structures for Characterization of Dark Current in CMOS Image Sensors" (PDF). IEEE Transactions on Electron Devices. 50 (1): 77–83. Bibcode:2003ITED...50...77L. doi:10.1109/TED.2002.807249. Retrieved 17 December 2011.

- ^ "Dark Count". Apogee Imaging Systems. Retrieved 17 December 2011.

- ^ Kavusi, Sam; El Gamal, Abbas (2004). Blouke, Morley M; Sampat, Nitin; Motta, Ricardo J (eds.). "Quantitative Study of High Dynamic Range Image Sensor Architectures" (PDF). Proc. Of SPIE-IS&T Electronic Imaging. Sensors and Camera Systems for Scientific, Industrial, and Digital Photography Applications V. 5301: 264–275. Bibcode:2004SPIE.5301..264K. doi:10.1117/12.544517. S2CID 14550103. Retrieved 17 December 2011.

- ^ Osuna, Rubén; García, Efraín. "Do Sensors "Outresolve" Lenses?". The Luminous Landscape. Archived from the original on 2 January 2010. Retrieved 21 December 2011.

- ^ Boreman, Glenn D. (2001). Modulation Transfer Function in Optical and Electro-Optical Systems. SPIE Press. p. 120. ISBN 978-0-8194-4143-0.

- ^ Ozaktas, Haldun M; Urey, Hakan; Lohmann, Adolf W. (1994). "Scaling of diffractive and refractive lenses for optical computing and interconnections". Applied Optics. 33 (17): 3782–3789. Bibcode:1994ApOpt..33.3782O. doi:10.1364/AO.33.003782. hdl:11693/13640. PMID 20885771. S2CID 1384331.

- ^ Goodman, Joseph W (2005). Introduction to Fourier optics, 3rd edition. Greenwood Village, Colorado: Roberts and Company. p. 26. ISBN 978-0-9747077-2-3.

- ^ Nasse, H. H. "From the Series of Articles on Lens Names: Tessar" (PDF). Carl Zeiss AG. Archived from the original (PDF) on 13 May 2012. Retrieved 19 December 2011.

- ^ Simon Crisp (21 March 2013). "Camera sensor size: Why does it matter and exactly how big are they?". Retrieved January 29, 2014.

- ^ Stanislav Utochkin. "Specifying active area size of the image sensor". Retrieved May 21, 2015.

- ^ Catrysse, Peter B.; Wandell, Brian A. (2005). "Roadmap for CMOS image sensors: Moore meets Planck and Sommerfeld" (PDF). Proceedings of the International Society for Optical Engineering. Digital Photography. 5678 (1): 1. Bibcode:2005SPIE.5678....1C. CiteSeerX 10.1.1.80.1320. doi:10.1117/12.592483. S2CID 7068027. Archived from the original (PDF) on 13 January 2015. Retrieved 29 January 2012.

- ^ DxOmark. "F-stop blues". DxOMark Insights. Retrieved 29 January 2012.

- ^ Aptina Imaging Corporation. "An Objective Look at FSI and BSI" (PDF). Aptina Technology White Paper. Retrieved 29 January 2012.

- ^ "Nikon unveils J1 small sensor mirrorless camera as part of Nikon 1 system", Digital Photography Review.

- ^ "Canon's Full Frame CMOS Sensors" (PDF) (Press release). 2006. Archived from the original (PDF) on 2012-10-28. Retrieved 2013-05-02.

- ^ "Nokia PureView imaging technology whitepaper" (PDF). Nokia. http://europe.nokia.com/PRODUCT_METADATA_0/Products/Phones/8000-series/808/Nokia808PureView_Whitepaper.pdf

- ^ "The Phase One P+ Product Range". PHASE ONE. Archived from the original on 2010-08-12. Retrieved 2010-06-07.

- ^ "Leica S2 with 56% larger sensor than full frame" (Press release). Leica. 2008-09-23. Retrieved 2010-06-07.

- ^ "Pentax unveils 40MP 645D medium format DSLR" (Press release). Pentax. 2010-03-10. Retrieved 2010-12-21.

- ^ Johnson, Allison (2016-06-22). "Medium-format mirrorless: Hasselblad unveils X1D". Digital Photography Review. Retrieved 2016-06-26.

- ^ "Fujifilm announces development of new medium format "GFX" mirrorless camera system" (Press release). Fujifilm. 2016-09-19.

- ^ "Fujifilm's Medium Format GFX 50S to Ship in February for $6,500". 2017-01-19.

- ^ Staff (7 October 2002). "Making (some) sense out of sensor sizes". Digital Photography Review. Retrieved 29 June 2012.

- ^ Staff. "Image Sensor Format". Imaging Glossary Terms and Definitions. SPOT IMAGING SOLUTIONS. Archived from the original on 26 March 2015. Retrieved 3 June 2015.

- ^ Pogue, David (2010-12-22). "Small Cameras With Big Sensors, and How to Compare Them". The New York Times.

- ^ a b Bockaert, Vincent. "Sensor Sizes: Camera System: Glossary: Learn". Digital Photography Review. Archived from the original on 2013-01-25. Retrieved 2012-04-09.

- ^ "Making (Some) sense out of sensor sizes".

- ^ "Camera Sensor Ratings". DxOMark. Archived 2012-03-21 at the Wayback Machine.

- ^ "Sample images Comparometer". Imaging Resource.

- ^ "Unravelling Sensor Sizes – Photo Review". www.photoreview.com.au. Retrieved 2016-09-22.

- ^ Nokia Lumia 720 – Full phone specifications, GSMArena.com, February 25, 2013, retrieved 2013-09-21

- ^ Camera sensor size: Why does it matter and exactly how big are they?, Gizmag, March 21, 2013, retrieved 2013-06-19

- ^ "Diagonal 5.822 mm (Type 1/3.09) 16Mega-Pixel CMOS Image Sensor with Square Pixel for Color Cameras" (PDF). Sony. Archived from the original (PDF) on 16 October 2019. Retrieved 16 October 2019.

- ^ Comparison of iPhone Specs, PhoneArena

- ^ "Diagonal 6.23 mm (Type 1/2.9) CMOS Image Sensor with Square Pixel for Color Cameras" (PDF). Sony. 2015. Retrieved 3 April 2019.

- ^ "iPhone XS Max teardown reveals new sensor with more focus pixels". Digital Photography Review. 27 September 2018. Retrieved 1 March 2019.

- ^ "Phantom 3 Professional - Let your creativity fly with a 4K camera in the sky. - DJI". DJI Official. Retrieved 2019-12-01.

- ^ "DJI - The World Leader in Camera Drones/Quadcopters for Aerial Photography". DJI Official. Retrieved 2019-12-01.

- ^ "Diagonal 7.87mm (Type 1/2.3) 20.7M Pixel CMOS Image Sensor with Square Pixel for Color Cameras" (PDF). Sony. September 2014. Archived from the original (PDF) on 3 April 2019. Retrieved 3 April 2019.

- ^ "Samsung officially unveils 108MP ISOCELL Bright HMX mobile camera sensor". Digital Photography Review. Aug 12, 2019. Retrieved 16 Feb 2021.

- ^ "Diagonal 17.6 mm (Type 1.1) Approx. 12.37M-Effective Pixel Monochrome and Color CMOS Image Sensor" (PDF). Sony. March 2016. Archived from the original (PDF) on 15 December 2017. Retrieved 3 April 2019.

- ^ "Hasselblad X1D-II 50c Datasheet" (PDF). Hasselblad. 2019-06-01. Retrieved 2022-04-09.

- ^ "GFX 50s Specifications". Fujifilm. January 17, 2019. Retrieved 2022-04-09.

- ^ KODAK KAF-39000 IMAGE SENSOR, DEVICE PERFORMANCE SPECIFICATION (PDF), KODAK, April 30, 2010, retrieved 2014-02-09

- ^ Hasselblad H5D-60 medium-format DSLR camera, B&H PHOTO VIDEO, retrieved 2013-06-19

External links

- Eric Fossum: Photons to Bits and Beyond: The Science & Technology of Digital, Oct. 13, 2011 (YouTube Video of lecture)

- Joseph James: Equivalence at Joseph James Photography

- Simon Tindemans: Alternative photographic parameters: a format-independent approach at 21stcenturyshoebox

- Compact Camera High ISO modes: Separating the facts from the hype at dpreview.com, May 2007

- The best compromise for a compact camera is a sensor with 6 million pixels, or better, a sensor with a pixel size of >3 μm at 6mpixel.org

- [2] at hasselblad.com

$$\mathrm{MTF}\left(\frac{\xi}{\xi_{\mathrm{cutoff}}}\right) = \frac{2}{\pi}\left\{\cos^{-1}\left(\frac{\xi}{\xi_{\mathrm{cutoff}}}\right) - \frac{\xi}{\xi_{\mathrm{cutoff}}}\left[1 - \left(\frac{\xi}{\xi_{\mathrm{cutoff}}}\right)^{2}\right]^{1/2}\right\}$$
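The diffraction-limited modulation transfer function (MTF) formula gives contrast as a function of the normalised spatial frequency ξ/ξ_cutoff. The short Python sketch below evaluates it. It assumes an aberration-free lens with a circular aperture under incoherent illumination, with the cutoff frequency taken as ξ_cutoff = 1/(λN) for wavelength λ and f-number N. That cutoff relation is standard for this case but is an assumption here, not something stated in this section. The function name `diffraction_mtf` is hypothetical.

```python
import math


def diffraction_mtf(freq_cyc_per_mm: float, wavelength_mm: float, f_number: float) -> float:
    """Diffraction-limited MTF of an ideal circular-aperture lens (hypothetical helper).

    Assumes incoherent light, no aberrations, and freq_cyc_per_mm >= 0.
    """
    # Assumed cutoff spatial frequency: 1 / (wavelength * f-number), in cycles/mm.
    cutoff = 1.0 / (wavelength_mm * f_number)
    nu = freq_cyc_per_mm / cutoff  # normalised frequency xi / xi_cutoff
    if nu >= 1.0:
        return 0.0  # no contrast is transferred at or beyond the cutoff
    return (2.0 / math.pi) * (math.acos(nu) - nu * math.sqrt(1.0 - nu * nu))


if __name__ == "__main__":
    # Green light (550 nm = 0.00055 mm) at f/8; 125 cycles/mm is roughly the
    # Nyquist frequency of a 4 micrometre pixel pitch (an illustrative choice).
    print(f"MTF at 125 cycles/mm: {diffraction_mtf(125.0, 0.00055, 8.0):.2f}")
```

Because the cutoff scales as 1/(λN), stopping down lowers the contrast at a fixed pixel pitch. This is one reason small sensors with fine pixel pitches become diffraction-limited at wider apertures than large sensors do.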