Seq2seq

Seq2seq is a family of machine learning approaches used for natural language processing.[1] Applications include language translation, image captioning, conversational models, and text summarization.[2] Seq2seq uses sequence transformation: it turns one sequence into another sequence.

History

One naturally wonders if the problem of translation could conceivably be treated as a problem in cryptography. When I look at an article in Russian, I say: 'This is really written in English, but it has been coded in some strange symbols. I will now proceed to decode.'

— Warren Weaver, Letter to Norbert Wiener, March 4, 1947

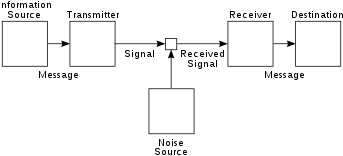

Seq2seq is an approach to machine translation (or, more generally, sequence transduction) with roots in information theory, where communication is understood as an encode-transmit-decode process and machine translation can be studied as a special case of communication. This viewpoint was elaborated, for example, in the noisy channel model of machine translation.

Concretely, seq2seq maps an input sequence into a real-valued vector using a neural network (the encoder), then maps it back to an output sequence using another neural network (the decoder).

The idea of encoder-decoder sequence transduction was developed in the early 2010s (see [3][1] for references to earlier papers). The two papers most commonly cited as the origin of seq2seq were both published in 2014.[3][1]

In the seq2seq models proposed in those papers, both the encoder and the decoder were LSTMs. This design had a "bottleneck" problem: because the encoding vector has a fixed size, information tends to be lost for long input sequences, which are difficult to fit into a fixed-length vector. The attention mechanism, proposed in 2014,[4] resolved the bottleneck problem. Its authors called their model RNNsearch, as it "emulates searching through a source sentence during decoding a translation".

A problem with seq2seq models at this point was that recurrent neural networks are difficult to parallelize. The Transformer architecture, published in 2017,[5] resolved the problem by replacing the encoding RNN with self-attention Transformer blocks ("encoder blocks"), and the decoding RNN with cross-attention causally-masked Transformer blocks ("decoder blocks").

Priority dispute

One of the papers cited as the originator of seq2seq is Sutskever et al. (2014),[1] published by Google Brain researchers while they were working on Google's machine translation project. The research allowed Google to overhaul Google Translate into Google Neural Machine Translation in 2016.[1][6] Tomáš Mikolov claimed to have developed multiple ideas before joining Google Brain, including seq2seq machine translation. He said that he mentioned the idea to Ilya Sutskever and Quoc Le while at Google Brain, and that they failed to acknowledge him in their paper.[7]

Mikolov had worked on RNNLM (using RNNs for language modelling) for his PhD thesis,[8] and is better known for developing word2vec.

Architecture

Encoder

The encoder is responsible for processing the input sequence and capturing its essential information, which is stored as the hidden state of the network and, in a model with an attention mechanism, a context vector. The context vector is the weighted sum of the input hidden states and is generated for every time step of the output sequence.
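The following is a minimal sketch of the idea, not the implementation from the cited papers. It assumes a plain tanh recurrent cell instead of an LSTM, randomly initialized weights, and hypothetical names (`encode`, `W_x`, `W_h`). It illustrates that the final hidden state has the same size for short and long inputs, and that a context vector is a weighted sum of all hidden states. The uniform weights are placeholders only; in a real model they come from the attention mechanism.

```python
import numpy as np

def encode(inputs, W_x, W_h):
    """Run a simple tanh RNN over inputs (T x d_in); return all hidden states (T x d_h)."""
    h = np.zeros(W_h.shape[0])
    states = []
    for x in inputs:
        # Each new hidden state depends on the current input and the previous state.
        h = np.tanh(W_x @ x + W_h @ h)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(0)
d_in, d_h = 4, 3  # illustrative sizes, chosen arbitrarily
W_x = rng.normal(size=(d_h, d_in))
W_h = rng.normal(size=(d_h, d_h))

short = encode(rng.normal(size=(2, d_in)), W_x, W_h)
long = encode(rng.normal(size=(50, d_in)), W_x, W_h)

# The final hidden state has a fixed size regardless of input length.
print(short[-1].shape, long[-1].shape)  # (3,) (3,)

# A context vector is a weighted sum of all hidden states.
# Uniform weights are a placeholder for attention weights.
weights = np.full(len(long), 1.0 / len(long))
context = weights @ long
```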

Decoder

The decoder takes the context vector and hidden states from the encoder and generates the final output sequence. The decoder operates in an autoregressive manner, producing one element of the output sequence at a time. At each step, it considers the previously generated elements, the context vector, and the input sequence information to predict the next element of the output sequence. Specifically, in a model with an attention mechanism, the context vector and the hidden state are concatenated to form an attention hidden vector, which is used as an input for the decoder.
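The autoregressive loop can be sketched as follows. This is an illustration under stated assumptions rather than a specific published decoder: it uses greedy selection (always taking the highest-scoring token), which is only one possible decoding strategy, and `greedy_decode`, `step_fn`, and `toy_step` are hypothetical names. The `state` argument stands in for the decoder hidden state together with whatever encoder information the model passes along.

```python
import numpy as np

def greedy_decode(step_fn, state, bos_id, eos_id, max_len=20):
    """Generate token ids one at a time, feeding each prediction back in."""
    tokens = [bos_id]
    for _ in range(max_len):
        # step_fn maps (previous token, state) to (scores over vocabulary, new state).
        logits, state = step_fn(tokens[-1], state)
        next_id = int(np.argmax(logits))
        tokens.append(next_id)
        if next_id == eos_id:
            break
    return tokens[1:]  # drop the start-of-sequence token

def toy_step(prev_id, state):
    """Hypothetical stand-in for a trained decoder: predicts prev_id + 1, capped at 4."""
    logits = np.zeros(5)
    logits[min(prev_id + 1, 4)] = 1.0
    return logits, state

print(greedy_decode(toy_step, state=None, bos_id=0, eos_id=4))  # [1, 2, 3, 4]
```

In a trained model, `step_fn` would wrap the decoder network, and generation would stop at the end-of-sequence token or at `max_len`, whichever comes first.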

Attention mechanism

The attention mechanism is an enhancement introduced by Bahdanau et al. in 2014 to address a limitation of the basic seq2seq architecture, in which a longer input sequence results in the hidden state output of the encoder becoming irrelevant for the decoder. It enables the model to selectively focus on different parts of the input sequence during the decoding process. At each decoder step, an alignment model calculates the attention scores using the current decoder state and all of the attention hidden vectors as input. The alignment model is another neural network, trained jointly with the seq2seq model, that calculates how well an input, represented by its hidden state, matches the previous output, represented by the attention hidden state. A softmax function is then applied to the attention scores to obtain the attention weights.
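As a rough sketch of one such step, the code below assumes an additive alignment model of the form score_i = v · tanh(W_dec s + W_enc h_i), where s is a decoder state and h_i is the i-th encoder hidden state. The weights are random rather than trained, and the names `additive_attention`, `W_dec`, `W_enc`, and `v` are illustrative assumptions, not taken from the source.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def additive_attention(dec_state, enc_states, W_dec, W_enc, v):
    """Return attention weights over encoder states and the resulting context vector."""
    # One scalar score per encoder state, from a small feed-forward alignment model.
    scores = np.tanh(dec_state @ W_dec.T + enc_states @ W_enc.T) @ v
    weights = softmax(scores)       # non-negative, sums to 1
    context = weights @ enc_states  # weighted sum of encoder states
    return weights, context

rng = np.random.default_rng(0)
T, d_h, d_s, d_a = 5, 4, 3, 6  # illustrative sizes, chosen arbitrarily
enc_states = rng.normal(size=(T, d_h))
dec_state = rng.normal(size=d_s)
W_dec = rng.normal(size=(d_a, d_s))
W_enc = rng.normal(size=(d_a, d_h))
v = rng.normal(size=d_a)

weights, context = additive_attention(dec_state, enc_states, W_dec, W_enc, v)
print(weights.shape, context.shape)  # (5,) (4,)
```

Because the alignment parameters take part in the forward pass, they can be trained jointly with the encoder and decoder by backpropagation.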

In some models, the encoder states are fed directly into an activation function, removing the need for an alignment model. The activation function receives one decoder state and one encoder state and returns a scalar value representing their relevance.[9]
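The dot product is one common choice for such a parameter-free function. The sketch below assumes that the decoder and encoder states have the same dimension; the function name `dot_score` and the example values are made up for illustration.

```python
import numpy as np

def dot_score(dec_state, enc_state):
    """Relevance of one encoder state to one decoder state, as a scalar."""
    return float(dec_state @ enc_state)

dec = np.array([1.0, 0.0])
encs = np.array([[2.0, 0.0], [0.0, 3.0], [-1.0, 1.0]])

scores = np.array([dot_score(dec, h) for h in encs])
print(scores.tolist())  # [2.0, 0.0, -1.0]

# Softmax turns the scores into attention weights, as in the general case.
attn = np.exp(scores) / np.exp(scores).sum()
```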

Other applications

In 2019, Facebook announced the use of seq2seq in symbolic integration and the solution of differential equations. The company claimed that it could solve complex equations more rapidly and with greater accuracy than commercial solutions such as Mathematica, MATLAB and Maple. First, the equation is parsed into a tree structure to avoid notational idiosyncrasies. An LSTM neural network then applies its standard pattern recognition facilities to process the tree.[10]

In 2020, Google released Meena, a 2.6 billion parameter seq2seq-based chatbot trained on a 341 GB data set. Google claimed that the chatbot has 1.7 times greater model capacity than OpenAI's GPT-2.[11] GPT-2's May 2020 successor, the 175 billion parameter GPT-3, was trained on a "45TB dataset of plaintext words (45,000 GB) that was ... filtered down to 570 GB."[12]

In 2022, Amazon introduced AlexaTM 20B, a moderate-sized (20 billion parameter) seq2seq language model. It uses an encoder-decoder to accomplish few-shot learning. The encoder outputs a representation of the input that the decoder uses as input to perform a specific task, such as translating the input into another language. The model outperforms the much larger GPT-3 in language translation and summarization. Training mixes denoising (appropriately inserting missing text in strings) and causal-language-modeling (meaningfully extending an input text). It allows adding features across different languages without massive training workflows. AlexaTM 20B achieved state-of-the-art performance in few-shot-learning tasks across all Flores-101 language pairs, outperforming GPT-3 on several tasks.[13]

References

1. Sutskever, Ilya; Vinyals, Oriol; Le, Quoc Viet (2014). "Sequence to sequence learning with neural networks". arXiv:1409.3215 [cs.CL].

2. Wadhwa, Mani (2018-12-05). "seq2seq model in Machine Learning". GeeksforGeeks. Retrieved 2019-12-17.

3. Cho, Kyunghyun; van Merrienboer, Bart; Gulcehre, Caglar; Bahdanau, Dzmitry; Bougares, Fethi; Schwenk, Holger; Bengio, Yoshua (2014-06-03). "Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation". arXiv:1406.1078 [cs.CL].

4. Bahdanau, Dzmitry; Cho, Kyunghyun; Bengio, Yoshua (2014). "Neural Machine Translation by Jointly Learning to Align and Translate". arXiv:1409.0473 [cs.CL].

5. Vaswani, Ashish; Shazeer, Noam; Parmar, Niki; Uszkoreit, Jakob; Jones, Llion; Gomez, Aidan N; Kaiser, Łukasz; Polosukhin, Illia (2017). "Attention is All you Need". Advances in Neural Information Processing Systems. 30. Curran Associates, Inc.

6. Wu, Yonghui; Schuster, Mike; Chen, Zhifeng; Le, Quoc V.; Norouzi, Mohammad; Macherey, Wolfgang; Krikun, Maxim; Cao, Yuan; Gao, Qin; Macherey, Klaus; Klingner, Jeff; Shah, Apurva; Johnson, Melvin; Liu, Xiaobing; Kaiser, Łukasz (2016). "Google's Neural Machine Translation System: Bridging the Gap between Human and Machine Translation". arXiv:1609.08144 [cs.CL].

7. Mikolov, Tomáš (December 13, 2023). "Yesterday we received a Test of Time Award at NeurIPS for the word2vec paper from ten years ago". Facebook. Archived from the original on 24 Dec 2023.

8. Mikolov, Tomáš (2012). "Statistical language models based on neural networks".

9. Voita, Lena. "Sequence to Sequence (seq2seq) and Attention". Retrieved 2023-12-20.

10. "Facebook has a neural network that can do advanced math". MIT Technology Review. December 17, 2019. Retrieved 2019-12-17.

11. Mehta, Ivan (2020-01-29). "Google claims its new chatbot Meena is the best in the world". The Next Web. Retrieved 2020-02-03.

12. Gage, Justin. "What's GPT-3?". Retrieved August 1, 2020.

13. Rodriguez, Jesus (8 September 2022). "🤘Edge#224: AlexaTM 20B is Amazon's New Language Super Model Also Capable of Few-Shot Learning". thesequence.substack.com. Retrieved 2022-09-08.

External links

- "A ten-minute introduction to sequence-to-sequence learning in Keras". blog.keras.io. Retrieved 2019-12-19.

- Adiwardana, Daniel; Luong, Minh-Thang; So, David R.; Hall, Jamie; Fiedel, Noah; Thoppilan, Romal; Yang, Zi; Kulshreshtha, Apoorv; Nemade, Gaurav; Lu, Yifeng; Le, Quoc V. (2020-01-31). "Towards a Human-like Open-Domain Chatbot". arXiv:2001.09977 [cs.CL].