iCub

| Manufacturer | Italian Institute of Technology |
|---|---|
| Country | Italy |
| Year of creation | 2009–present |
| Type | Humanoid robot |
| Purpose | research, recreational |
| Website | www |

| Developer(s) | Italian Institute of Technology |
|---|---|
| Initial release | 2009 |
| Stable release | 1.13.0 / July 4, 2019 |
| Written in | C++[1] |
| Operating system | Free/Libre operating systems: Linux, FreeBSD, NetBSD, OpenBSD; Non-free operating systems: OS X, Windows |
| Type | Artificial Intelligence, Robotics |
| License | GNU GPL/GNU LGPL[2] (Free software) |
| Website | github |

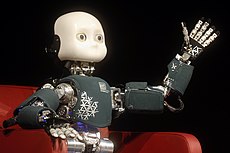

iCub is a one-meter-tall open-source humanoid robot testbed for research into human cognition and artificial intelligence.

It was designed by the RobotCub Consortium of several European universities, was built by the Italian Institute of Technology, and is now supported by other projects such as ITALK.[3] The robot is open source: the hardware design, software and documentation are all released under the GPL license. The name is a partial acronym, with "cub" standing for Cognitive Universal Body. Initial funding for the project was €8.5 million from Unit E5 (Cognitive Systems and Robotics) of the European Commission's Seventh Framework Programme, and this ran for 65 months, from 1 September 2004 until 31 January 2010.

The motivation behind the strongly humanoid design is the embodied cognition hypothesis: that human-like manipulation plays a vital role in the development of human cognition. A baby learns many cognitive skills by interacting with its environment and other humans using its limbs and senses, and consequently its internal model of the world is largely determined by the form of the human body. The robot was designed to test this hypothesis by allowing cognitive learning scenarios to be acted out by an accurate reproduction of the perceptual system and articulation of a small child, so that it could interact with the world in the same way that such a child does.[4]

Specifications

The dimensions of the iCub are similar to those of a 3.5-year-old child. The robot is controlled by an on-board PC104 controller which communicates with actuators and sensors over a CAN bus.

It utilises tendon-driven joints for the hand and shoulder, with the fingers flexed by Teflon-coated cable tendons running inside Teflon-coated tubes and pulling against spring returns. Joint angles are measured using custom-designed Hall-effect sensors, and the robot can be equipped with torque sensors. The fingertips can be equipped with tactile sensors, and a distributed capacitive sensor skin is being developed.

The software library is largely written in C++ and uses YARP for external communication via Gigabit Ethernet with off-board software implementing higher-level functionality, the development of which has been taken over by the RobotCub Consortium.[4] The robot was not designed for autonomous operation and is consequently not equipped with the onboard batteries or processors that this would require; instead, an umbilical cable provides power and a network connection.[4]

In its final version, the robot has 53 actuated degrees of freedom organized as follows:

- 7 in each arm

- 9 in each hand (3 for the thumb, 2 for the index, 2 for the middle finger, 1 for the coupled ring and little finger, 1 for the adduction/abduction)

- 6 in the head (3 for the neck and 3 for the cameras)

- 3 in the torso/waist

- 6 in each leg
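The breakdown listed above can be checked with a little arithmetic. The following is a minimal sketch, not part of the iCub software: the names `DOF_LAYOUT`, `HAND_BREAKDOWN`, `HEAD_BREAKDOWN` and `total_dof` are hypothetical and introduced only for illustration, and the numbers are taken directly from the list.

```python
# Illustrative check of the degrees-of-freedom breakdown listed above.
# All names here are hypothetical; only the numbers come from the article.

# body part -> (actuated DoF per unit, number of units on the robot)
DOF_LAYOUT = {
    "arm": (7, 2),
    "hand": (9, 2),
    "head": (6, 1),
    "torso/waist": (3, 1),
    "leg": (6, 2),
}

# Sub-breakdowns quoted in parentheses for the hand and the head.
HAND_BREAKDOWN = {
    "thumb": 3,
    "index": 2,
    "middle": 2,
    "coupled ring and little finger": 1,
    "adduction/abduction": 1,
}
HEAD_BREAKDOWN = {"neck": 3, "cameras": 3}


def total_dof(layout):
    """Sum the per-unit DoF multiplied by the number of units."""
    return sum(per_unit * count for per_unit, count in layout.values())


if __name__ == "__main__":
    # The sub-breakdowns should add up to the per-part figures.
    assert sum(HAND_BREAKDOWN.values()) == DOF_LAYOUT["hand"][0]
    assert sum(HEAD_BREAKDOWN.values()) == DOF_LAYOUT["head"][0]
    print(total_dof(DOF_LAYOUT))  # prints 53
```

Written out, the sum is 2 × 7 + 2 × 9 + 6 + 3 + 2 × 6 = 53, which agrees with the stated total.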

The head has stereo cameras in a swivel mounting where the eyes would be located on a human, and microphones on the side. It also has lines of red LEDs, representing the mouth and eyebrows, mounted behind the face panel for making facial expressions.

Since the first robots were constructed, the design has undergone several revisions and improvements, for example smaller and more dexterous hands,[5] and lighter, more robust legs with greater joint angles that permit walking rather than just crawling.[6]

Capabilities of iCub

The iCub has been demonstrated performing the following tasks, among others:

- crawling, using visual guidance with an optical marker on the floor[7]

- solving complex 3D mazes[8][9]

- archery, shooting arrows with a bow and learning to hit the center of the target[10][11]

- facial expressions, allowing the iCub to express emotions[12]

- force control, exploiting proximal force/torque sensors[13]

- grasping small objects, such as balls, plastic bottles, etc.[14]

- collision avoidance within non-static environments, as well as self-collision avoidance[15][16][17]

iCubs in the world

These robots were built by the Istituto Italiano di Tecnologia (IIT) in Genoa and are used by a small but lively community of scientists to study embodied cognition in artificial systems. There are about thirty iCubs in various laboratories, mainly in the European Union but also one in the United States.[18] The first researcher in North America to be granted an iCub was Stephen E. Levinson, for studies of computational models of the brain and mind and of language acquisition.[19]

The robots are constructed by IIT and cost about €250,000[20] each, depending upon the version.[21] Most of the financial support comes from the European Commission's Unit E5 or from the Istituto Italiano di Tecnologia (IIT) via the recently created iCub Facility department.[18] The development and construction of iCub at IIT features in an independent documentary film called Plug & Pray, which was released in 2010.[22]

References

- iCub Source Code

- "iCub". Retrieved 27 November 2019. "The iCub is distributed as Open Source following the GPL/LGPL licenses and can now count on a worldwide community of enthusiastic developers."

- "An open source cognitive humanoid robotic platform". Official iCub website. Retrieved 30 July 2010.

- Metta, Giorgio; Sandini Giulio; Vernon David; Natale Lorenzo; Nori Francesco (2008). The iCub humanoid robot: an open platform for research in embodied cognition (PDF). PerMIS’08. Retrieved 1 January 2018.

- June, Laura (12 March 2010). "iCub gets upgraded with tinier hands, better legs". Engadget. Retrieved 30 July 2010.

- Tsagarakis, N.G.; Vanderborght Bram; Laffranchi Matteo; Caldwell D.G. The Mechanical Design of the New Lower Body for the Child Humanoid robot 'iCub' (PDF). IEEE International Conference on Robotics and Automation (ICRA 2009). Archived from the original (PDF) on 20 July 2011. Retrieved 30 July 2010.

- Crawling. iCub HumanoidRobot. 16 April 2010. Archived from the original on 11 June 2016. Retrieved 18 February 2022 – via YouTube.

- Nath, Vishnu; Stephen Levinson. Learning to Fire at Targets by an iCub Humanoid Robot. AAAI Spring Symposium 2013: Designing Intelligent Robots: Reintegrating AI II. Retrieved 29 September 2013.

- iCub autonomously solving a puzzle. Vishnu Nath. 8 March 2013. Archived from the original on 16 May 2016. Retrieved 18 February 2022 – via YouTube.

- Kormushev, Petar; Calinon Sylvain; Saegusa Ryo; Metta Giorgio. Learning the skill of archery by a humanoid robot iCub (PDF). IEEE International Conference on Humanoid Robots (Humanoids 2010). Retrieved 19 March 2011.

- Robot Archer iCub. PetarKormushev. 22 September 2010. Archived from the original on 22 January 2021. Retrieved 18 February 2022 – via YouTube.

- iCub facial expressions. Vislab Lisboa. 17 March 2009. Archived from the original on 5 September 2020. Retrieved 18 February 2022 – via YouTube.

- Force control exploiting proximal force/torque sensors - pt.2. iCub HumanoidRobot. 11 October 2010. Archived from the original on 9 June 2016. Retrieved 18 February 2022 – via YouTube.

- "Toward Intelligent Humanoids". iCub manipulating a variety of objects. Archived from the original on 10 March 2014. Retrieved 22 July 2013.

- Frank, Mikhail; Jürgen Leitner; Marijn Stollenga; Gregor Kaufmann; Simon Harding; Alexander Förster; Jürgen Schmidhuber. The Modular Behavioral Environment for Humanoids & other Robots (MoBeE) (PDF). 9th International Conference on Informatics in Control, Automation and Robotics (ICINCO).

- Leitner, Jürgen ‘Juxi’; Simon Harding; Mikhail Frank; Alexander Förster; Jürgen Schmidhuber. Transferring Spatial Perception Between Robots Operating In A Shared Workspace (PDF). IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2012).

- Stollenga, Marijn; Leo Pape; Mikhail Frank; Jürgen Leitner; Alexander Förster; Jürgen Schmidhuber. Task-Relevant Roadmaps: A Framework for Humanoid Motion Planning. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2013).

- "The iCub humanoid robot project". Istituto Italiano di Tecnologia (IIT). Retrieved 1 January 2018.

- "Humanoid Robot Learns Like a Child". Discovery News. Retrieved 11 February 2013.

- "XE: (EUR/USD) Euro to US Dollar Rate". www.xe.com. Retrieved 20 November 2015.

- "Archived copy". iCub website. Archived from the original on 17 February 2018. Retrieved 30 July 2010.

- Plug & Pray, documentary film about the social impact of robots and related ethical questions

External links

- Nosengo, Nicola (27 August 2009). "Robotics: The bot that plays ball" (PDF). Nature. 460 (7259): 1076–8. doi:10.1038/4601076a. PMID 19713909. Retrieved 30 July 2010. - Nature article about the iCub.

- YouTube Channel - a YouTube channel about the iCub.

- iCub presentations - from the Humanoid robotics symposium 2010.

- IROS'10 - Videos and workshop on iCub research (2010).

- Toward Intelligent Humanoids - Video showing current abilities of the iCub (2012)

- RobotCub Consortium

- the iCub project

| Preceded by RobotCub | Humanoid robots | Succeeded by - |