Theoretical computer science

Theoretical computer science is a subfield of computer science and mathematics that focuses on the abstract and mathematical foundations of computation.

It is difficult to circumscribe the theoretical areas precisely. The ACM's Special Interest Group on Algorithms and Computation Theory (SIGACT) provides the following description:[1]

TCS covers a wide variety of topics including algorithms, data structures, computational complexity, parallel and distributed computation, probabilistic computation, quantum computation, automata theory, information theory, cryptography, program semantics and verification, algorithmic game theory, machine learning, computational biology, computational economics, computational geometry, and computational number theory and algebra. Work in this field is often distinguished by its emphasis on mathematical technique and rigor.

History

While logical inference and mathematical proof had existed previously, in 1931 Kurt Gödel proved with his incompleteness theorem that there are fundamental limitations on what statements could be proved or disproved.

Information theory was added to the field with a 1948 mathematical theory of communication by Claude Shannon. In the same decade, Donald Hebb introduced a mathematical model of learning in the brain. With mounting biological data supporting this hypothesis with some modification, the fields of neural networks and parallel distributed processing were established. In 1971, Stephen Cook and, working independently, Leonid Levin, proved that there exist practically relevant problems that are NP-complete – a landmark result in computational complexity theory.[2]

Modern theoretical computer science research is based on these basic developments, but includes many other mathematical and interdisciplinary problems that have been posed, as shown below:

Topics

Algorithms

An algorithm is a step-by-step procedure for calculations. Algorithms are used for calculation, data processing, and automated reasoning.

An algorithm is an effective method expressed as a finite list[3] of well-defined instructions[4] for calculating a function.[5] Starting from an initial state and initial input (perhaps empty),[6] the instructions describe a computation that, when executed, proceeds through a finite[7] number of well-defined successive states, eventually producing "output"[8] and terminating at a final ending state. The transition from one state to the next is not necessarily deterministic; some algorithms, known as randomized algorithms, incorporate random input.[9]
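As an illustration of this definition, the following minimal sketch implements Euclid's algorithm for the greatest common divisor. The function name `gcd` and the input check are choices made for this example; the point is only that the instructions are finite, each step is well defined, and the computation terminates.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor of two non-negative integers (Euclid's algorithm)."""
    if a < 0 or b < 0:
        raise ValueError("inputs must be non-negative")
    # Each iteration is one well-defined state transition (a, b) -> (b, a mod b).
    # The second component strictly decreases, so the loop runs finitely often.
    while b != 0:
        a, b = b, a % b
    return a


print(gcd(1071, 462))  # 21
```

Here the successive states are (1071, 462), (462, 147), (147, 21), and (21, 0), after which the procedure outputs 21 and halts.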

Automata theory

Automata theory is the study of abstract machines and automata, as well as the computational problems that can be solved using them. It is a theory in theoretical computer science, under discrete mathematics (a section of mathematics and also of computer science). Automata comes from the Greek word αὐτόματα meaning "self-acting".

Automata theory studies self-operating abstract machines as a way to reason logically about how inputs are transformed into outputs, with or without intermediate stages of computation.
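One of the simplest abstract machines is the deterministic finite automaton. The sketch below simulates such a machine; the function `dfa_accepts`, the state names, and the example language (binary strings containing an even number of 1s) are illustrative assumptions, not taken from any particular textbook.

```python
from typing import Dict, Set, Tuple


def dfa_accepts(
    transitions: Dict[Tuple[str, str], str],
    start: str,
    accepting: Set[str],
    word: str,
) -> bool:
    """Run a deterministic finite automaton on `word` and report acceptance."""
    state = start
    for symbol in word:
        # Exactly one next state exists for each (state, symbol) pair.
        state = transitions[(state, symbol)]
    return state in accepting


# Hypothetical example machine: accepts strings with an even number of 1s.
EVEN_ONES = {
    ("even", "0"): "even",
    ("even", "1"): "odd",
    ("odd", "0"): "odd",
    ("odd", "1"): "even",
}

print(dfa_accepts(EVEN_ONES, "even", {"even"}, "1001"))  # True
print(dfa_accepts(EVEN_ONES, "even", {"even"}, "1011"))  # False
```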

Coding theory

Coding theory is the study of the properties of codes and their fitness for a specific application. Codes are used for data compression, cryptography, error correction and more recently also for network coding. Codes are studied by various scientific disciplines – such as information theory, electrical engineering, mathematics, and computer science – for the purpose of designing efficient and reliable data transmission methods. This typically involves the removal of redundancy and the correction (or detection) of errors in the transmitted data.
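A very simple error-correcting code can make the idea of error correction concrete. The sketch below uses a threefold repetition code with majority-vote decoding; it is chosen for brevity rather than efficiency, and the function names are assumptions of this example.

```python
from typing import List


def encode(bits: List[int]) -> List[int]:
    """Repeat every bit three times."""
    return [b for b in bits for _ in range(3)]


def decode(received: List[int]) -> List[int]:
    """Recover each bit by majority vote over its three copies."""
    if len(received) % 3 != 0:
        raise ValueError("length must be a multiple of 3")
    return [
        1 if sum(received[i:i + 3]) >= 2 else 0
        for i in range(0, len(received), 3)
    ]


message = [1, 0, 1]
codeword = encode(message)      # [1, 1, 1, 0, 0, 0, 1, 1, 1]
corrupted = codeword.copy()
corrupted[4] ^= 1               # flip one bit in transit
print(decode(corrupted))        # [1, 0, 1]
```

The code corrects any single flipped bit per block of three, at the cost of tripling the transmitted length; practical codes achieve far better trade-offs.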

Computational complexity theory

Computational complexity theory is a branch of the theory of computation that focuses on classifying computational problems according to their inherent difficulty, and relating those classes to each other. A computational problem is understood to be a task that is in principle amenable to being solved by a computer, which is equivalent to stating that the problem may be solved by mechanical application of mathematical steps, such as an algorithm.

A problem is regarded as inherently difficult if its solution requires significant resources, whatever the algorithm used. The theory formalizes this intuition, by introducing mathematical models of computation to study these problems and quantifying the amount of resources needed to solve them, such as time and storage. Other complexity measures are also used, such as the amount of communication (used in communication complexity), the number of gates in a circuit (used in circuit complexity) and the number of processors (used in parallel computing). One of the roles of computational complexity theory is to determine the practical limits on what computers can and cannot do.
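To give a feel for how resource usage is quantified, the following sketch counts the element inspections performed by two search procedures on a sorted list. It compares two algorithms for the same problem and does not establish any lower bound; the function names and the step-counting convention are assumptions of this example.

```python
from typing import List


def linear_search_steps(items: List[int], target: int) -> int:
    """Number of elements inspected by a left-to-right scan."""
    steps = 0
    for value in items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(items: List[int], target: int) -> int:
    """Number of elements inspected by binary search on a sorted list."""
    lo, hi, steps = 0, len(items) - 1, 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps


data = list(range(1024))
print(linear_search_steps(data, 1023))  # grows linearly with the input size
print(binary_search_steps(data, 1023))  # grows logarithmically with the input size
```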

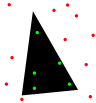

Computational geometry

Computational geometry is a branch of computer science devoted to the study of algorithms that can be stated in terms of geometry. Some purely geometrical problems arise out of the study of computational geometric algorithms, and such problems are also considered to be part of computational geometry.

The main impetus for the development of computational geometry as a discipline was progress in computer graphics and computer-aided design and manufacturing (CAD/CAM), but many problems in computational geometry are classical in nature, and may come from mathematical visualization.

Other important applications of computational geometry include robotics (motion planning and visibility problems), geographic information systems (GIS) (geometrical location and search, route planning), integrated circuit design (IC geometry design and verification), computer-aided engineering (CAE) (mesh generation), computer vision (3D reconstruction).
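A classic problem in the field is computing the convex hull of a set of points in the plane. The sketch below uses Andrew's monotone chain method, one of several standard approaches; the function names and the sample points are chosen for this example.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def cross(o: Point, a: Point, b: Point) -> float:
    """Cross product of vectors OA and OB; positive for a left turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points: List[Point]) -> List[Point]:
    """Convex hull in counterclockwise order (Andrew's monotone chain)."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower: List[Point] = []
    for p in pts:
        # Drop points that would make a right turn or a straight line.
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper: List[Point] = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    # The last point of each chain is the first point of the other.
    return lower[:-1] + upper[:-1]


print(convex_hull([(0, 0), (2, 0), (2, 2), (0, 2), (1, 1)]))
# [(0, 0), (2, 0), (2, 2), (0, 2)]
```

The interior point (1, 1) is discarded, and sorting dominates the running time.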

Computational learning theory

Theoretical results in machine learning mainly deal with a type of inductive learning called supervised learning. In supervised learning, an algorithm is given samples that are labeled in some useful way. For example, the samples might be descriptions of mushrooms, and the labels could be whether or not the mushrooms are edible. The algorithm takes these previously labeled samples and uses them to induce a classifier. This classifier is a function that assigns labels to samples including the samples that have never been previously seen by the algorithm. The goal of the supervised learning algorithm is to optimize some measure of performance such as minimizing the number of mistakes made on new samples.
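The mushroom example can be sketched with a nearest-neighbour rule, one of the simplest ways to induce a classifier from labeled samples. The two numeric features (cap width in centimetres and a 0/1 flag for a ring) and all data values below are invented purely for illustration.

```python
from typing import Callable, List, Sequence


def train_nearest_neighbor(
    samples: List[Sequence[float]], labels: List[str]
) -> Callable[[Sequence[float]], str]:
    """Return a classifier that copies the label of the closest training sample."""

    def classify(x: Sequence[float]) -> str:
        # Squared Euclidean distance to every stored sample.
        best = min(
            range(len(samples)),
            key=lambda i: sum((a - b) ** 2 for a, b in zip(samples[i], x)),
        )
        return labels[best]

    return classify


# Hypothetical training data: (cap width in cm, has ring).
samples = [(5.0, 0.0), (6.0, 0.0), (12.0, 1.0), (11.0, 1.0)]
labels = ["edible", "edible", "poisonous", "poisonous"]

classifier = train_nearest_neighbor(samples, labels)
print(classifier((5.5, 0.0)))   # edible
print(classifier((11.5, 1.0)))  # poisonous
```

Both query points are absent from the training set, so the classifier is labeling samples it has never seen.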

Computational number theory

Computational number theory, also known as algorithmic number theory, is the study of algorithms for performing number theoretic computations. The best known problem in the field is integer factorization.
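Integer factorization can be illustrated with trial division, the most elementary method. It is practical only for small inputs; the algorithms studied in the field are far more sophisticated. The function name `factorize` is an assumption of this sketch.

```python
from typing import List


def factorize(n: int) -> List[int]:
    """Prime factors of n in non-decreasing order, found by trial division."""
    if n < 2:
        raise ValueError("n must be at least 2")
    factors: List[int] = []
    d = 2
    while d * d <= n:
        while n % d == 0:
            factors.append(d)
            n //= d
        d += 1
    if n > 1:
        # Whatever remains has no divisor up to its square root, so it is prime.
        factors.append(n)
    return factors


print(factorize(360))   # [2, 2, 2, 3, 3, 5]
print(factorize(8051))  # [83, 97]
```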

Cryptography

Cryptography is the practice and study of techniques for secure communication in the presence of third parties (called adversaries).[10] More generally, it is about constructing and analyzing protocols that overcome the influence of adversaries[11] and that are related to various aspects in information security such as data confidentiality, data integrity, authentication, and non-repudiation.[12] Modern cryptography intersects the disciplines of mathematics, computer science, and electrical engineering. Applications of cryptography include ATM cards, computer passwords, and electronic commerce.

Modern cryptography is heavily based on mathematical theory and computer science practice; cryptographic algorithms are designed around computational hardness assumptions, making such algorithms hard to break in practice by any adversary. It is theoretically possible to break such a system, but it is infeasible to do so by any known practical means. These schemes are therefore termed computationally secure; theoretical advances, e.g., improvements in integer factorization algorithms, and faster computing technology require these solutions to be continually adapted. There exist information-theoretically secure schemes that provably cannot be broken even with unlimited computing power—an example is the one-time pad—but these schemes are more difficult to implement than the best theoretically breakable but computationally secure mechanisms.
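The one-time pad mentioned above is short enough to sketch directly. The example below XORs the message with a random key of the same length, drawn from Python's standard `secrets` module; the function names are assumptions of this sketch, and it is meant as an illustration rather than production code.

```python
import secrets
from typing import Tuple


def otp_encrypt(message: bytes) -> Tuple[bytes, bytes]:
    """Encrypt with a fresh random key as long as the message."""
    key = secrets.token_bytes(len(message))
    ciphertext = bytes(m ^ k for m, k in zip(message, key))
    return key, ciphertext


def otp_decrypt(key: bytes, ciphertext: bytes) -> bytes:
    """XOR with the same key restores the message."""
    if len(key) != len(ciphertext):
        raise ValueError("key and ciphertext must have equal length")
    return bytes(c ^ k for c, k in zip(ciphertext, key))


key, ciphertext = otp_encrypt(b"attack at dawn")
print(otp_decrypt(key, ciphertext))  # b'attack at dawn'
```

The implementation difficulty noted in the text shows up here: the key must be as long as the message, shared secretly in advance, and never reused.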

Data structures

A data structure is a particular way of organizing data in a computer so that it can be used efficiently.[13][14]

Different kinds of data structures are suited to different kinds of applications, and some are highly specialized to specific tasks. For example, databases use B-tree indexes to retrieve small percentages of their data, and both compilers and databases use dynamic hash tables as lookup tables.

Data structures provide a means to manage large amounts of data efficiently for uses such as large databases and internet indexing services. Usually, efficient data structures are key to designing efficient algorithms. Some formal design methods and programming languages emphasize data structures, rather than algorithms, as the key organizing factor in software design. Storing and retrieving can be carried out on data stored in both main memory and in secondary memory.

Distributed computation

Distributed computing studies distributed systems. A distributed system is a software system in which components located on networked computers communicate and coordinate their actions by passing messages.[15] The components interact with each other in order to achieve a common goal. Three significant characteristics of distributed systems are: concurrency of components, lack of a global clock, and independent failure of components.[15] Examples of distributed systems range from SOA-based systems to massively multiplayer online games, peer-to-peer applications, and blockchain networks such as Bitcoin.

A computer program that runs in a distributed system is called a distributed program, and distributed programming is the process of writing such programs.[16] There are many alternatives for the message passing mechanism, including RPC-like connectors and message queues. An important goal and challenge of distributed systems is location transparency.

Information-based complexity

Information-based complexity (IBC) studies optimal algorithms and computational complexity for continuous problems. IBC has studied such continuous problems as path integration, partial differential equations, systems of ordinary differential equations, nonlinear equations, integral equations, fixed points, and very-high-dimensional integration.

Formal methods

Formal methods are a particular kind of mathematically based techniques for the specification, development and verification of software and hardware systems.[17] The use of formal methods for software and hardware design is motivated by the expectation that, as in other engineering disciplines, performing appropriate mathematical analysis can contribute to the reliability and robustness of a design.[18]

Formal methods are best described as the application of a fairly broad variety of theoretical computer science fundamentals, in particular logic calculi, formal languages, automata theory, and program semantics, but also type systems and algebraic data types to problems in software and hardware specification and verification.[19]

Information theory

Information theory is a branch of applied mathematics, electrical engineering, and computer science involving the quantification of information. Information theory was developed by Claude E. Shannon to find fundamental limits on signal processing operations such as compressing data and on reliably storing and communicating data. Since its inception it has broadened to find applications in many other areas, including statistical inference, natural language processing, cryptography, neurobiology,[20] the evolution[21] and function[22] of molecular codes, model selection in statistics,[23] thermal physics,[24] quantum computing, linguistics, plagiarism detection,[25] pattern recognition, anomaly detection and other forms of data analysis.[26]

Applications of fundamental topics of information theory include lossless data compression (e.g. ZIP files), lossy data compression (e.g. MP3s and JPEGs), and channel coding (e.g. for Digital Subscriber Line (DSL)). The field is at the intersection of mathematics, statistics, computer science, physics, neurobiology, and electrical engineering. Its impact has been crucial to the success of the Voyager missions to deep space, the invention of the compact disc, the feasibility of mobile phones, the development of the Internet, the study of linguistics and of human perception, the understanding of black holes, and numerous other fields. Important sub-fields of information theory are source coding, channel coding, algorithmic complexity theory, algorithmic information theory, information-theoretic security, and measures of information.
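A central measure of information is Shannon entropy, which for a source emitting symbols with probabilities p_i equals the negative sum of p_i log2 p_i, in bits per symbol. The sketch below estimates it from the symbol frequencies of a string; the function name and the use of empirical frequencies as probabilities are assumptions of this example.

```python
import math
from collections import Counter


def shannon_entropy(data: str) -> float:
    """Empirical Shannon entropy of `data` in bits per symbol."""
    if not data:
        return 0.0
    n = len(data)
    counts = Counter(data)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


print(shannon_entropy("aabb"))  # 1.0
print(shannon_entropy("abcd"))  # 2.0
```

A string made of a single repeated symbol has entropy zero, reflecting that it carries no uncertainty. For a source whose symbols are independent, this quantity is the lower bound on the average number of bits per symbol that any lossless code can achieve.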

Machine learning

Machine learning is a scientific discipline that deals with the construction and study of algorithms that can learn from data.[27] Such algorithms operate by building a model based on inputs[28]: 2 and using that to make predictions or decisions, rather than following only explicitly programmed instructions.

Machine learning can be considered a subfield of computer science and statistics. It has strong ties to artificial intelligence and optimization, which deliver methods, theory and application domains to the field. Machine learning is employed in a range of computing tasks where designing and programming explicit, rule-based algorithms is infeasible. Example applications include spam filtering, optical character recognition (OCR),[29] search engines and computer vision. Machine learning is sometimes conflated with data mining,[30] although that focuses more on exploratory data analysis.[31] Machine learning and pattern recognition "can be viewed as two facets of the same field."[28]: vii

Natural computation

Natural computing,[32][33] also called natural computation, is a term introduced to encompass three classes of methods: 1) those that take inspiration from nature for the development of novel problem-solving techniques; 2) those that are based on the use of computers to synthesize natural phenomena; and 3) those that employ natural materials (e.g., molecules) to compute. The main fields of research that compose these three branches are artificial neural networks, evolutionary algorithms, swarm intelligence, artificial immune systems, fractal geometry, artificial life, DNA computing, and quantum computing, among others.

Computational paradigms studied by natural computing are abstracted from natural phenomena as diverse as self-replication, the functioning of the brain, Darwinian evolution, group behavior, the immune system, the defining properties of life forms, cell membranes, and morphogenesis. Besides traditional electronic hardware, these computational paradigms can be implemented on alternative physical media such as biomolecules (DNA, RNA), or trapped-ion quantum computing devices.

Dually, one can view processes occurring in nature as information processing. Such processes include self-assembly, developmental processes, gene regulation networks, protein–protein interaction networks, biological transport (active transport, passive transport) networks, and gene assembly in unicellular organisms. Efforts to understand biological systems also include engineering of semi-synthetic organisms, and understanding the universe itself from the point of view of information processing. Indeed, the idea was even advanced that information is more fundamental than matter or energy. The Zuse-Fredkin thesis, dating back to the 1960s, states that the entire universe is a huge cellular automaton which continuously updates its rules.[34][35] Recently it has been suggested that the whole universe is a quantum computer that computes its own behaviour.[36]

The universe or nature as a computational mechanism is addressed by [37], which explores nature with the help of the ideas of computability, and by [38], which studies natural processes as computations (information processing).

Parallel computation

Parallel computing is a form of computation in which many calculations are carried out simultaneously,[40] operating on the principle that large problems can often be divided into smaller ones, which are then solved "in parallel". There are several different forms of parallel computing: bit-level, instruction level, data, and task parallelism. Parallelism has been employed for many years, mainly in high-performance computing, but interest in it has grown lately due to the physical constraints preventing frequency scaling.[41] As power consumption (and consequently heat generation) by computers has become a concern in recent years,[42] parallel computing has become the dominant paradigm in computer architecture, mainly in the form of multi-core processors.[43]

Parallel computer programs are more difficult to write than sequential ones,[44] because concurrency introduces several new classes of potential software bugs, of which race conditions are the most common. Communication and synchronization between the different subtasks are typically some of the greatest obstacles to getting good parallel program performance.

Amdahl's law gives the maximum possible speed-up of a single program as a result of parallelization.
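In its usual form, Amdahl's law states that if a fraction p of a program's running time can be parallelized perfectly over n processors, the overall speed-up is 1 / ((1 − p) + p / n). The sketch below evaluates this formula; the function and parameter names are chosen for this example.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Speed-up predicted by Amdahl's law for a given parallel fraction."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must lie in [0, 1]")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


for n in (2, 10, 100, 1_000_000):
    print(n, amdahl_speedup(0.9, n))
```

With 90% of the work parallelizable, the speed-up approaches but never reaches 10, however many processors are added, because the serial 10% remains.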

Programming language theory and program semantics

Programming language theory is a branch of computer science that deals with the design, implementation, analysis, characterization, and classification of programming languages and their individual features. It falls within the discipline of theoretical computer science, both depending on and affecting mathematics, software engineering, and linguistics. It is an active research area, with numerous dedicated academic journals.

In programming language theory, semantics is the field concerned with the rigorous mathematical study of the meaning of programming languages. It does so by evaluating the meaning of syntactically legal strings defined by a specific programming language, showing the computation involved. In such a case that the evaluation would be of syntactically illegal strings, the result would be non-computation. Semantics describes the processes a computer follows when executing a program in that specific language. This can be shown by describing the relationship between the input and output of a program, or an explanation of how the program will execute on a certain platform, hence creating a model of computation.
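One way to describe the relationship between a program's input and output is to define an evaluation function over its syntax. The sketch below does this for a tiny, hypothetical expression language with numbers, variables, addition, and multiplication; the tuple encoding and the function name `evaluate` are assumptions of this example.

```python
from typing import Dict, Tuple, Union

Expr = Union[int, float, str, Tuple]


def evaluate(expr: Expr, env: Dict[str, float]) -> float:
    """Meaning of an expression, given values for its variables."""
    if isinstance(expr, (int, float)):
        return expr                      # a literal denotes itself
    if isinstance(expr, str):
        return env[expr]                 # a variable denotes its bound value
    op, left, right = expr
    lhs, rhs = evaluate(left, env), evaluate(right, env)
    if op == "+":
        return lhs + rhs
    if op == "*":
        return lhs * rhs
    # Anything else is not part of this toy language.
    raise ValueError(f"unknown operator: {op!r}")


# Represents x + 2 * 3 with x bound to 4.
print(evaluate(("+", "x", ("*", 2, 3)), {"x": 4}))  # 10
```

Expressions outside the language, such as one with an unknown operator, are rejected rather than given a meaning.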

Quantum computation

A quantum computer is a computation system that makes direct use of quantum-mechanical phenomena, such as superposition and entanglement, to perform operations on data.[45] Quantum computers are different from digital computers based on transistors. Whereas digital computers require data to be encoded into binary digits (bits), each of which is always in one of two definite states (0 or 1), quantum computation uses qubits (quantum bits), which can be in superpositions of states. A theoretical model is the quantum Turing machine, also known as the universal quantum computer. Quantum computers share theoretical similarities with non-deterministic and probabilistic computers; one example is the ability to be in more than one state simultaneously. The field of quantum computing was first introduced by Yuri Manin in 1980[46] and Richard Feynman in 1982.[47][48] A quantum computer with spins as quantum bits was also formulated for use as a quantum space–time in 1968.[49]

Experiments have been carried out in which quantum computational operations were executed on a very small number of qubits.[50] Both practical and theoretical research continues, and many national governments and military funding agencies support quantum computing research to develop quantum computers for both civilian and national security purposes, such as cryptanalysis.[51]
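The notion of a qubit in superposition can be simulated classically for a single qubit. In the sketch below a state is a pair of complex amplitudes for the basis states 0 and 1, and the Hadamard gate turns the definite state 0 into an equal superposition; the function names are assumptions of this example, and the simulation says nothing about how physical devices are built.

```python
import math
from typing import Tuple

State = Tuple[complex, complex]


def apply_hadamard(state: State) -> State:
    """Apply the Hadamard gate to a single-qubit state."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def measurement_probabilities(state: State) -> Tuple[float, float]:
    """Probabilities of observing 0 and 1 when the qubit is measured."""
    return (abs(state[0]) ** 2, abs(state[1]) ** 2)


zero: State = (1.0, 0.0)
plus = apply_hadamard(zero)
print(measurement_probabilities(plus))                  # roughly (0.5, 0.5)
print(measurement_probabilities(apply_hadamard(plus)))  # roughly (1.0, 0.0)
```

Applying the gate twice returns the original state, which a classical coin flip cannot do; this interference between amplitudes is what distinguishes a superposition from ordinary randomness.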

Symbolic computation

Computer algebra, also called symbolic computation or algebraic computation, is a scientific area that refers to the study and development of algorithms and software for manipulating mathematical expressions and other mathematical objects. Although, properly speaking, computer algebra should be a subfield of scientific computing, they are generally considered distinct fields because scientific computing is usually based on numerical computation with approximate floating-point numbers, while symbolic computation emphasizes exact computation with expressions containing variables that have no given value and are thus manipulated as symbols (hence the name symbolic computation).

Software applications that perform symbolic calculations are called computer algebra systems. The term system alludes to the complexity of the main applications, which include at least a method to represent mathematical data in a computer, a user programming language (usually different from the language used for the implementation), a dedicated memory manager, a user interface for the input and output of mathematical expressions, and a large set of routines to perform usual operations such as simplification of expressions, differentiation using the chain rule, polynomial factorization, and indefinite integration.
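Symbolic differentiation shows how expressions can be manipulated without assigning values to their variables. The sketch below differentiates expressions built from numbers, variable names, sums, and products; the tuple representation is a toy assumption of this example, and the result is deliberately left unsimplified, since simplification is a separate routine in real systems.

```python
from typing import Tuple, Union

Expr = Union[int, float, str, Tuple]


def differentiate(expr: Expr, var: str) -> Expr:
    """Symbolic derivative of `expr` with respect to `var`."""
    if isinstance(expr, (int, float)):
        return 0                                   # constant rule
    if isinstance(expr, str):
        return 1 if expr == var else 0             # d(var)/d(var) = 1
    op, u, v = expr
    if op == "+":
        return ("+", differentiate(u, var), differentiate(v, var))
    if op == "*":
        # Product rule: (u * v)' = u' * v + u * v'
        return ("+", ("*", differentiate(u, var), v),
                     ("*", u, differentiate(v, var)))
    raise ValueError(f"unknown operator: {op!r}")


print(differentiate(("*", "x", "x"), "x"))
# ('+', ('*', 1, 'x'), ('*', 'x', 1))
```

The output represents 1·x + x·1, which a simplifier would reduce to 2x.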

Very-large-scale integration

Very-large-scale integration (VLSI) is the process of creating an integrated circuit (IC) by combining thousands of transistors into a single chip. VLSI began in the 1970s when complex semiconductor and communication technologies were being developed. The microprocessor is a VLSI device. Before the introduction of VLSI technology most ICs had a limited set of functions they could perform. An electronic circuit might consist of a CPU, ROM, RAM and other glue logic. VLSI allows IC makers to add all of these circuits into one chip.

Organizations

- European Association for Theoretical Computer Science

- SIGACT

- Simons Institute for the Theory of Computing

Journals and newsletters

- Discrete Mathematics and Theoretical Computer Science

- Information and Computation

- Theory of Computing (open access journal)

- Formal Aspects of Computing

- Journal of the ACM

- SIAM Journal on Computing (SICOMP)

- SIGACT News

- Theoretical Computer Science

- Theory of Computing Systems

- TheoretiCS (open access journal)

- International Journal of Foundations of Computer Science

- Chicago Journal of Theoretical Computer Science (open access journal)

- Foundations and Trends in Theoretical Computer Science

- Journal of Automata, Languages and Combinatorics

- Acta Informatica

- Fundamenta Informaticae

- ACM Transactions on Computation Theory

- Computational Complexity

- Journal of Complexity

- ACM Transactions on Algorithms

- Information Processing Letters

- Open Computer Science (open access journal)

Conferences

- Annual ACM Symposium on Theory of Computing (STOC)[52]

- Annual IEEE Symposium on Foundations of Computer Science (FOCS)[52]

- Innovations in Theoretical Computer Science (ITCS)

- Mathematical Foundations of Computer Science (MFCS)[53]

- International Computer Science Symposium in Russia (CSR)[54]

- ACM–SIAM Symposium on Discrete Algorithms (SODA)[52]

- IEEE Symposium on Logic in Computer Science (LICS)[52]

- Computational Complexity Conference (CCC)[55]

- International Colloquium on Automata, Languages and Programming (ICALP)[55]

- Annual Symposium on Computational Geometry (SoCG)[55]

- ACM Symposium on Principles of Distributed Computing (PODC)[52]

- ACM Symposium on Parallelism in Algorithms and Architectures (SPAA)[55]

- Annual Conference on Learning Theory (COLT)[55]

- International Conference on Current Trends in Theory and Practice of Computer Science (SOFSEM)[56]

- Symposium on Theoretical Aspects of Computer Science (STACS)[55]

- European Symposium on Algorithms (ESA)[55]

- Workshop on Approximation Algorithms for Combinatorial Optimization Problems (APPROX)[55]

- Workshop on Randomization and Computation (RANDOM)[55]

- International Symposium on Algorithms and Computation (ISAAC)[55]

- International Symposium on Fundamentals of Computation Theory (FCT)[57]

- International Workshop on Graph-Theoretic Concepts in Computer Science (WG)

Notes

- ^ "SIGACT". Retrieved 2017-01-19.

- ^ Cook, Stephen A. (1971). "The complexity of theorem-proving procedures". Proceedings of the third annual ACM symposium on Theory of computing - STOC '71. pp. 151–158. doi:10.1145/800157.805047. ISBN 978-1-4503-7464-4.

- ^ "Any classical mathematical algorithm, for example, can be described in a finite number of English words". Rogers, Hartley Jr. (1967). Theory of Recursive Functions and Effective Computability. McGraw-Hill. Page 2.

- ^ Well defined with respect to the agent that executes the algorithm: "There is a computing agent, usually human, which can react to the instructions and carry out the computations" (Rogers 1967, p. 2).

- ^ "an algorithm is a procedure for computing a function (with respect to some chosen notation for integers) ... this limitation (to numerical functions) results in no loss of generality", (Rogers 1967, p. 1).

- ^ "An algorithm has zero or more inputs, i.e., quantities which are given to it initially before the algorithm begins" (Knuth 1973:5).

- ^ "A procedure which has all the characteristics of an algorithm except that it possibly lacks finiteness may be called a 'computational method'" (Knuth 1973:5).

- ^ "An algorithm has one or more outputs, i.e. quantities which have a specified relation to the inputs" (Knuth 1973:5).

- ^ Whether or not a process with random interior processes (not including the input) is an algorithm is debatable. Rogers opines that: "a computation is carried out in a discrete stepwise fashion, without the use of continuous methods or analog devices . . . carried forward deterministically, without resort to random methods or devices, e.g., dice" (Rogers 1967, p. 2).

- ^ Rivest, Ronald L. (1990). "Cryptology". In J. Van Leeuwen (ed.). Handbook of Theoretical Computer Science. Vol. 1. Elsevier.

- ^ Bellare, Mihir; Rogaway, Phillip (21 September 2005). "Introduction". Introduction to Modern Cryptography. p. 10.

- ^ Menezes, A. J.; van Oorschot, P. C.; Vanstone, S. A. (1997). Handbook of Applied Cryptography. Taylor & Francis. ISBN 978-0-8493-8523-0.

- ^ Paul E. Black (ed.), entry for data structure in Dictionary of Algorithms and Data Structures. U.S. National Institute of Standards and Technology. 15 December 2004. Online version Accessed May 21, 2009.

- ^ Entry data structure in the Encyclopædia Britannica (2009) Online entry accessed on May 21, 2009.

- ^ a b Coulouris, George; Jean Dollimore; Tim Kindberg; Gordon Blair (2011). Distributed Systems: Concepts and Design (5th ed.). Boston: Addison-Wesley. ISBN 978-0-132-14301-1.

- ^ Ghosh, Sukumar (2007). Distributed Systems – An Algorithmic Approach. Chapman & Hall/CRC. p. 10. ISBN 978-1-58488-564-1.

- ^ R. W. Butler (2001-08-06). "What is Formal Methods?". Retrieved 2006-11-16.

- ^ C. Michael Holloway. "Why Engineers Should Consider Formal Methods" (PDF). 16th Digital Avionics Systems Conference (27–30 October 1997). Archived from the original (PDF) on 16 November 2006. Retrieved 2006-11-16.

- ^ Monin, pp.3–4

- ^ F. Rieke; D. Warland; R Ruyter van Steveninck; W Bialek (1997). Spikes: Exploring the Neural Code. The MIT press. ISBN 978-0262681087.

- ^ Huelsenbeck, J. P.; Ronquist, F.; Nielsen, R.; Bollback, J. P. (2001-12-14). "Bayesian Inference of Phylogeny and Its Impact on Evolutionary Biology". Science. 294 (5550). American Association for the Advancement of Science (AAAS): 2310–2314. Bibcode:2001Sci...294.2310H. doi:10.1126/science.1065889. ISSN 0036-8075. PMID 11743192. S2CID 2138288.

- ^ Rando Allikmets, Wyeth W. Wasserman, Amy Hutchinson, Philip Smallwood, Jeremy Nathans, Peter K. Rogan, Thomas D. Schneider, Michael Dean (1998) Organization of the ABCR gene: analysis of promoter and splice junction sequences, Gene 215:1, 111–122

- ^ Burnham, K. P. and Anderson D. R. (2002) Model Selection and Multimodel Inference: A Practical Information-Theoretic Approach, Second Edition (Springer Science, New York) ISBN 978-0-387-95364-9.

- ^ Jaynes, E. T. (1957-05-15). "Information Theory and Statistical Mechanics". Physical Review. 106 (4). American Physical Society (APS): 620–630. Bibcode:1957PhRv..106..620J. doi:10.1103/physrev.106.620. ISSN 0031-899X. S2CID 17870175.

- ^ Charles H. Bennett, Ming Li, and Bin Ma (2003) Chain Letters and Evolutionary Histories Archived 2007-10-07 at the Wayback Machine, Scientific American 288:6, 76–81

- ^ David R. Anderson (November 1, 2003). "Some background on why people in the empirical sciences may want to better understand the information-theoretic methods" (PDF). Archived from the original (PDF) on July 23, 2011. Retrieved 2010-06-23.

- ^ Ron Kohavi; Foster Provost (1998). "Glossary of terms". Machine Learning. 30: 271–274. doi:10.1023/A:1007411609915.

- ^ a b C. M. Bishop (2006). Pattern Recognition and Machine Learning. Springer. ISBN 978-0-387-31073-2.

- ^ Wernick, Yang, Brankov, Yourganov and Strother, Machine Learning in Medical Imaging, IEEE Signal Processing Magazine, vol. 27, no. 4, July 2010, pp. 25–38

- ^ Mannila, Heikki (1996). Data mining: machine learning, statistics, and databases. Int'l Conf. Scientific and Statistical Database Management. IEEE Computer Society.

- ^ Friedman, Jerome H. (1998). "Data Mining and Statistics: What's the connection?". Computing Science and Statistics. 29 (1): 3–9.

- ^ G.Rozenberg, T.Back, J.Kok, Editors, Handbook of Natural Computing, Springer Verlag, 2012

- ^ A.Brabazon, M.O'Neill, S.McGarraghy. Natural Computing Algorithms, Springer Verlag, 2015

- ^ Fredkin, F. Digital mechanics: An informational process based on reversible universal CA. Physica D 45 (1990) 254-270

- ^ Zuse, K. Rechnender Raum. Elektronische Datenverarbeitung 8 (1967) 336-344

- ^ Lloyd, S. Programming the Universe: A Quantum Computer Scientist Takes on the Cosmos. Knopf, 2006

- ^ Zenil, H. A Computable Universe: Understanding and Exploring Nature as Computation. World Scientific Publishing Company, 2012

- ^ Dodig-Crnkovic, G. and Giovagnoli, R. COMPUTING NATURE. Springer, 2013

- ^ Rozenberg, Grzegorz (2001). "Natural Computing". Current Trends in Theoretical Computer Science. pp. 543–690. doi:10.1142/9789812810403_0005. ISBN 978-981-02-4473-6.

- ^ Gottlieb, Allan; Almasi, George S. (1989). Highly parallel computing. Redwood City, Calif.: Benjamin/Cummings. ISBN 978-0-8053-0177-9.

- ^ S.V. Adve et al. (November 2008). "Parallel Computing Research at Illinois: The UPCRC Agenda" Archived 2008-12-09 at the Wayback Machine (PDF). Parallel@Illinois, University of Illinois at Urbana-Champaign. "The main techniques for these performance benefits – increased clock frequency and smarter but increasingly complex architectures – are now hitting the so-called power wall. The computer industry has accepted that future performance increases must largely come from increasing the number of processors (or cores) on a die, rather than making a single core go faster."

- ^ Asanovic et al. Old [conventional wisdom]: Power is free, but transistors are expensive. New [conventional wisdom] is [that] power is expensive, but transistors are "free".

- ^ Asanovic, Krste et al. (December 18, 2006). "The Landscape of Parallel Computing Research: A View from Berkeley" (PDF). University of California, Berkeley. Technical Report No. UCB/EECS-2006-183. "Old [conventional wisdom]: Increasing clock frequency is the primary method of improving processor performance. New [conventional wisdom]: Increasing parallelism is the primary method of improving processor performance ... Even representatives from Intel, a company generally associated with the 'higher clock-speed is better' position, warned that traditional approaches to maximizing performance through maximizing clock speed have been pushed to their limit."

- ^ Hennessy, John L.; Patterson, David A.; Larus, James R. (1999). Computer organization and design : the hardware/software interface (2. ed., 3rd print. ed.). San Francisco: Kaufmann. ISBN 978-1-55860-428-5.

- ^ "Quantum Computing with Molecules" article in Scientific American by Neil Gershenfeld and Isaac L. Chuang

- ^ Manin, Yu. I. (1980). Vychislimoe i nevychislimoe [Computable and Noncomputable] (in Russian). Sov.Radio. pp. 13–15. Archived from the original on 10 May 2013. Retrieved 4 March 2013.

- ^ Feynman, R. P. (1982). "Simulating physics with computers". International Journal of Theoretical Physics. 21 (6): 467–488. Bibcode:1982IJTP...21..467F. CiteSeerX 10.1.1.45.9310. doi:10.1007/BF02650179. S2CID 124545445.

- ^ Deutsch, David (1992-01-06). "Quantum computation". Physics World. 5 (6): 57–61. doi:10.1088/2058-7058/5/6/38.

- ^ Finkelstein, David (1968). "Space-Time Structure in High Energy Interactions". In Gudehus, T.; Kaiser, G. (eds.). Fundamental Interactions at High Energy. New York: Gordon & Breach.

- ^ "New qubit control bodes well for future of quantum computing". Retrieved 26 October 2014.

- ^ Quantum Information Science and Technology Roadmap for a sense of where the research is heading.

- ^ a b c d e The 2007 Australian Ranking of ICT Conferences Archived 2009-10-02 at the Wayback Machine: tier A+.

- ^ "MFCS 2017". Archived from the original on 2018-01-10. Retrieved 2018-01-09.

- ^ CSR 2018

- ^ a b c d e f g h i j The 2007 Australian Ranking of ICT Conferences Archived 2009-10-02 at the Wayback Machine: tier A.

- ^ SOFSEM webpage (retrieved 2024-09-03)

- ^ FCT 2011 (retrieved 2013-06-03)

Further reading

- Martin Davis, Ron Sigal, Elaine J. Weyuker, Computability, complexity, and languages: fundamentals of theoretical computer science, 2nd ed., Academic Press, 1994, ISBN 0-12-206382-1. Covers theory of computation, but also program semantics and quantification theory. Aimed at graduate students.

External links

- SIGACT directory of additional theory links (archived 15 July 2017)

- Theory Matters Wiki Theoretical Computer Science (TCS) Advocacy Wiki

- List of academic conferences in the area of theoretical computer science at confsearch

- Theoretical Computer Science – StackExchange, a Question and Answer site for researchers in theoretical computer science

- Computer Science Animated

- Theory of computation at the Massachusetts Institute of Technology