Chatbot

A chatbot (originally chatterbot)[1] is a software application or web interface that is designed to mimic human conversation through text or voice interactions.[2][3][4] Modern chatbots are typically online and use generative artificial intelligence systems that are capable of maintaining a conversation with a user in natural language and simulating the way a human would behave as a conversational partner. Such chatbots often use deep learning and natural language processing, but simpler chatbots have existed for decades.

Although chatbots have existed since the late 1960s, the field gained widespread attention in the early 2020s due to the popularity of OpenAI's ChatGPT,[5][6] followed by alternatives such as Microsoft's Copilot and Google's Gemini.[7] Such examples reflect the recent practice of basing such products upon broad foundational large language models, such as GPT-4 or the Gemini language model, that get fine-tuned so as to target specific tasks or applications (i.e., simulating human conversation, in the case of chatbots). Chatbots can also be designed or customized to further target even more specific situations and/or particular subject-matter domains.[8]

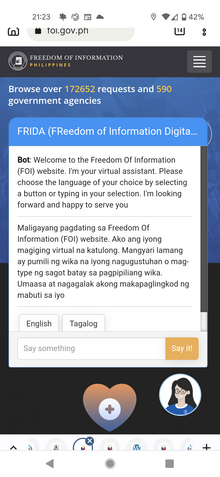

A major area where chatbots have long been used is in customer service and support, with various sorts of virtual assistants.[9] Companies spanning a wide range of industries have begun using the latest generative artificial intelligence technologies to power more advanced developments in such areas.[8]

Because chatbots work by predicting responses rather than knowing the meaning of those responses, they can produce coherent-sounding but inaccurate or fabricated content, referred to as ‘hallucinations’. When humans use and apply chatbot content contaminated with hallucinations, the result is ‘botshit’.[10] Given the increasing adoption and use of chatbots for generating content, there are concerns that this technology will significantly reduce the cost to humans of generating, spreading and consuming botshit.[11]

Background

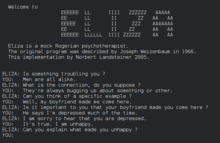

In 1950, Alan Turing's famous article "Computing Machinery and Intelligence" was published,[12] which proposed what is now called the Turing test as a criterion of intelligence. This criterion depends on the ability of a computer program to impersonate a human in a real-time written conversation with a human judge to the extent that the judge is unable to distinguish reliably, on the basis of the conversational content alone, between the program and a real human. The notoriety of Turing's proposed test stimulated great interest in Joseph Weizenbaum's program ELIZA, published in 1966, which seemed to be able to fool users into believing that they were conversing with a real human. However, Weizenbaum himself did not claim that ELIZA was genuinely intelligent, and the introduction to his paper presented it more as a debunking exercise:

In artificial intelligence, machines are made to behave in wondrous ways, often sufficient to dazzle even the most experienced observer. But once a particular program is unmasked, once its inner workings are explained, its magic crumbles away; it stands revealed as a mere collection of procedures. The observer says to himself "I could have written that". With that thought, he moves the program in question from the shelf marked "intelligent", to that reserved for curios. The object of this paper is to cause just such a re-evaluation of the program about to be "explained". Few programs ever needed it more.[13]

ELIZA's key method of operation (copied by chatbot designers ever since) involves the recognition of clue words or phrases in the input, and the output of the corresponding pre-prepared or pre-programmed responses that can move the conversation forward in an apparently meaningful way (e.g. by responding to any input that contains the word 'MOTHER' with 'TELL ME MORE ABOUT YOUR FAMILY').[13] Thus an illusion of understanding is generated, even though the processing involved has been merely superficial. ELIZA showed that such an illusion is surprisingly easy to generate because human judges are so ready to give the benefit of the doubt when conversational responses are capable of being interpreted as "intelligent".
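The keyword-and-canned-response mechanism described above can be sketched in a few lines of Python. This is an illustrative sketch only, not Weizenbaum's original implementation: the `RULES` table, the `respond` function and the fallback reply are assumptions introduced here, and only the 'MOTHER' rule is taken from the text.

```python
import re

# Hypothetical rule table: (keyword pattern, canned reply).
# Only the MOTHER rule comes from the article; the FATHER rule and
# the fallback reply are assumptions added for illustration.
RULES = [
    (re.compile(r"\bmother\b", re.IGNORECASE), "TELL ME MORE ABOUT YOUR FAMILY"),
    (re.compile(r"\bfather\b", re.IGNORECASE), "TELL ME MORE ABOUT YOUR FAMILY"),
]
DEFAULT_REPLY = "PLEASE GO ON"  # assumed reply when no keyword is found


def respond(user_input: str) -> str:
    """Return the canned reply of the first rule whose keyword appears."""
    for pattern, reply in RULES:
        if pattern.search(user_input):
            return reply
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(respond("My mother never listens to me"))
    print(respond("The weather is nice today"))
```

The program never models what the user means; it only checks whether a keyword occurs as a whole word, which is why the resulting impression of understanding is superficial.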

Interface designers have come to appreciate that humans' readiness to interpret computer output as genuinely conversational—even when it is actually based on rather simple pattern-matching—can be exploited for useful purposes. Most people prefer to engage with programs that are human-like, and this gives chatbot-style techniques a potentially useful role in interactive systems that need to elicit information from users, as long as that information is relatively straightforward and falls into predictable categories. Thus, for example, online help systems can usefully employ chatbot techniques to identify the area of help that users require, potentially providing a "friendlier" interface than a more formal search or menu system. This sort of usage holds the prospect of moving chatbot technology from Weizenbaum's "shelf ... reserved for curios" to that marked "genuinely useful computational methods".

Development

Among the most notable early chatbots are ELIZA (1966) and PARRY (1972).[14][15][16][17] More recent notable programs include A.L.I.C.E., Jabberwacky and D.U.D.E (Agence Nationale de la Recherche and CNRS 2006). While ELIZA and PARRY were used exclusively to simulate typed conversation, many chatbots now include other functional features, such as games and web searching abilities. In 1984, a book called The Policeman's Beard is Half Constructed was published, allegedly written by the chatbot Racter (though the program as released would not have been capable of doing so).[18]

From 1978[19] to some time after 1983,[20] the CYRUS project led by Janet Kolodner constructed a chatbot simulating Cyrus Vance (57th United States Secretary of State). It used case-based reasoning, and updated its database daily by parsing wire news from United Press International. The program was unable to process the news items subsequent to the surprise resignation of Cyrus Vance in April 1980, and the team constructed another chatbot simulating his successor, Edmund Muskie.[21][20]

One pertinent field of AI research is natural-language processing. Usually, weak AI fields employ specialized software or programming languages created specifically for the narrow function required. For example, A.L.I.C.E. uses a markup language called AIML,[3] which is specific to its function as a conversational agent, and has since been adopted by various other developers of so-called Alicebots. Nevertheless, A.L.I.C.E. is still based purely on pattern-matching techniques without any reasoning capabilities, the same technique ELIZA used in 1966. This is not strong AI, which would require sapience and logical reasoning abilities.

Jabberwacky learns new responses and context based on real-time user interactions, rather than being driven from a static database. Some more recent chatbots also combine real-time learning with evolutionary algorithms that optimize their ability to communicate based on each conversation held. Still, there is currently no general purpose conversational artificial intelligence, and some software developers focus on the practical aspect, information retrieval.

Chatbot competitions focus on the Turing test or more specific goals. Two such annual contests are the Loebner Prize and The Chatterbox Challenge (the latter has been offline since 2015; however, materials can still be found in web archives).[22]

Chatbots may use artificial neural networks as a language model. For example, generative pre-trained transformers (GPT), which use the transformer architecture, have become a common basis for building sophisticated chatbots. The "pre-training" in the name refers to the initial training process on a large text corpus, which provides a solid foundation for the model to perform well on downstream tasks with limited amounts of task-specific data. An example of a GPT chatbot is ChatGPT.[23] Despite criticism of its accuracy and tendency to “hallucinate” (that is, to confidently output false information and even cite non-existent sources), ChatGPT has gained attention for its detailed responses and historical knowledge. Another example is BioGPT, developed by Microsoft, which focuses on answering biomedical questions.[24][25] In November 2023, Amazon announced a new chatbot, called Q, for people to use at work.[26]

DBpedia created a chatbot during the Google Summer of Code (GSoC) of 2017.[27][28][29] It can communicate through Facebook Messenger (see Master of Code Global article).

Application

Messaging apps

Many companies' chatbots run on messaging apps or simply via SMS. They are used for B2C customer service, sales and marketing.[30]

In 2016, Facebook Messenger allowed developers to place chatbots on their platform. There were 30,000 bots created for Messenger in the first six months, rising to 100,000 by September 2017.[31]

Since September 2017, chatbots have also been available as part of a pilot program on WhatsApp. Airlines KLM and Aeroméxico both announced their participation in the testing;[32][33][34][35] both airlines had previously launched customer services on the Facebook Messenger platform.

The bots usually appear as one of the user's contacts, but can sometimes act as participants in a group chat.

Many banks, insurers, media companies, e-commerce companies, airlines, hotel chains, retailers, health care providers, government entities, and restaurant chains have used chatbots to answer simple questions, increase customer engagement,[36] run promotions, and offer additional ways to order from them.[37] Chatbots are also used in market research to collect short survey responses.[38]

A 2017 study showed 4% of companies used chatbots.[39] According to a 2016 study, 80% of businesses said they intended to have one by 2020.[40]

As part of company apps and websites

Previous generations of chatbots were present on company websites, e.g. Ask Jenn from Alaska Airlines, which debuted in 2008,[41] or Expedia's virtual customer service agent, which launched in 2011.[41][42] The newer generation of chatbots includes IBM Watson-powered "Rocky", introduced in February 2017 by the New York City-based e-commerce company Rare Carat to provide information to prospective diamond buyers.[43][44]

Chatbot sequences

Chatbot sequences are used by marketers to script sequences of messages, much like an autoresponder sequence. Such sequences can be triggered by user opt-in or the use of keywords within user interactions. After a trigger occurs, a sequence of messages is delivered until the next anticipated user response. Each user response is used in the decision tree to help the chatbot navigate the response sequences and deliver the correct response message.
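As a rough illustration of a keyword-triggered sequence driven by a decision tree, the following Python sketch may help. Everything in it is hypothetical: the `SCRIPT` contents, the trigger keyword `subscribe`, and the `Node` and `SequenceBot` names are assumptions made for this example and do not describe any particular marketing platform.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One step of a scripted sequence: messages to send, then branches."""
    messages: list
    # Maps an anticipated user reply (lowercase) to the name of the next node.
    branches: dict = field(default_factory=dict)


# Hypothetical script; the texts and node names are invented for illustration.
SCRIPT = {
    "start": Node(
        ["Welcome!", "Would you like offers or support?"],
        {"offers": "offers", "support": "support"},
    ),
    "offers": Node(["We will send you this week's offers."]),
    "support": Node(["A support agent will contact you shortly."]),
}
TRIGGER_KEYWORD = "subscribe"  # assumed opt-in keyword


class SequenceBot:
    def __init__(self):
        self.current = None  # no sequence is active until the trigger occurs

    def handle(self, text: str) -> list:
        """Return the messages to deliver in response to one user message."""
        reply = text.strip().lower()
        if self.current is None:
            if TRIGGER_KEYWORD in reply:
                self.current = "start"
                return SCRIPT["start"].messages
            return []  # not triggered: stay silent
        next_name = SCRIPT[self.current].branches.get(reply)
        if next_name is None:
            return ["Sorry, I did not understand that."]
        node = SCRIPT[next_name]
        # A node without branches ends the sequence.
        self.current = next_name if node.branches else None
        return node.messages
```

Each call to `handle` delivers messages up to the next anticipated user response, and the reply then selects the branch of the tree to follow.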

Company internal platforms

Other companies explore ways they can use chatbots internally, for example for customer support, human resources, or even in Internet-of-Things (IoT) projects. Overstock.com, for one, has reportedly launched a chatbot named Mila to automate certain simple yet time-consuming processes when requesting sick leave.[45] Other large companies such as Lloyds Banking Group, Royal Bank of Scotland, Renault and Citroën are now using automated online assistants instead of call centres with humans to provide a first point of contact. A SaaS chatbot business ecosystem has been steadily growing since the F8 Conference when Facebook's Mark Zuckerberg announced that Messenger would allow chatbots into the app.[46] In large organizations, such as hospitals and aviation organizations, IT architects are designing reference architectures for intelligent chatbots that are used to unlock and share knowledge and experience in the organization more efficiently, and to significantly reduce the errors in answers from expert service desks.[47] These intelligent chatbots make use of all kinds of artificial intelligence, such as image moderation, natural-language understanding (NLU), natural-language generation (NLG), machine learning and deep learning.

Customer service

Chatbots have great potential to serve as an alternative source for customer service.[48] Many high-tech banking organizations are looking to integrate automated AI-based solutions such as chatbots into their customer service in order to provide faster and cheaper assistance to their clients, who are becoming increasingly comfortable with technology. In particular, chatbots can efficiently conduct a dialogue, usually replacing other communication tools such as email, phone, or SMS. In banking, their major application is related to quick customer service answering common requests, as well as transactional support.

Deep learning techniques can be incorporated into chatbot applications to allow them to map conversations between users and customer service agents, especially in social media.[49] Research has shown that methods incorporating deep learning can learn writing styles from a brand and transfer them to another, promoting the brand's image on social media platforms.[49] Chatbots can create new ways for brands and users to interact, which can help improve the brand's performance and allow users to gain "social, information, and economic benefits".[49]

Several studies report significant reduction in the cost of customer services, expected to lead to billions of dollars of economic savings in the next ten years.[50] In 2019, Gartner predicted that by 2021, 15% of all customer service interactions globally would be handled completely by AI.[51] A 2019 study by Juniper Research estimated that retail sales resulting from chatbot-based interactions would reach $112 billion by 2023.[52]

Since 2016, when Facebook allowed businesses to deliver automated customer support, e-commerce guidance, content, and interactive experiences through chatbots, a large variety of chatbots have been developed for the Facebook Messenger platform.[53]

In 2016, Russia-based Tochka Bank launched the world's first Facebook bot for a range of financial services, including the ability to make payments.[54]

In July 2016, Barclays Africa also launched a Facebook chatbot, making it the first bank to do so in Africa.[55]

Société Générale, France's third-largest bank by total assets,[56] launched its chatbot, called SoBot, in March 2018. While 80% of SoBot users expressed their satisfaction after having tested it, Société Générale deputy director Bertrand Cozzarolo stated that it would never replace the expertise provided by a human advisor.[57]

The advantages of using chatbots for customer interactions in banking include cost reduction, financial advice, and 24/7 support.[58][59]

Healthcare

Chatbots are also appearing in the healthcare industry.[60][61] A study suggested that physicians in the United States believed that chatbots would be most beneficial for scheduling doctor appointments, locating health clinics, or providing medication information.[62]

The GPT chatbot ChatGPT is able to answer user queries related to health promotion and disease prevention such as screening and vaccination.[63] WhatsApp has teamed up with the World Health Organization (WHO) to make a chatbot service that answers users' questions on COVID-19.[64]

In 2020, the Indian government launched a chatbot called MyGov Corona Helpdesk,[65] which worked through WhatsApp and helped people access information about the coronavirus (COVID-19) pandemic.[66][67]

Certain patient groups are still reluctant to use chatbots. A mixed-methods study showed that people are still hesitant to use chatbots for their healthcare due to poor understanding of the technological complexity, the lack of empathy, and concerns about cyber-security.[68] The analysis showed that while 6% had heard of a health chatbot and 3% had experience of using it, 67% perceived themselves as likely to use one within 12 months. The majority of participants would use a health chatbot for seeking general health information (78%), booking a medical appointment (78%), and looking for local health services (80%). However, a health chatbot was perceived as less suitable for seeking results of medical tests and seeking specialist advice such as sexual health.

The analysis of attitudinal variables showed that most participants reported their preference for discussing their health with doctors (73%) and having access to reliable and accurate health information (93%). While 80% were curious about new technologies that could improve their health, 66% reported only seeking a doctor when experiencing a health problem and 65% thought that a chatbot was a good idea. 30% reported disliking talking to computers, 41% felt it would be strange to discuss health matters with a chatbot and about half were unsure if they could trust the advice given by a chatbot. Therefore, perceived trustworthiness, individual attitudes towards bots, and dislike for talking to computers are the main barriers to health chatbots.[63]

Politics

In New Zealand, the chatbot SAM – short for Semantic Analysis Machine[69] (made by Nick Gerritsen of Touchtech[70]) – has been developed. It is designed to share its political thoughts on topics such as climate change, healthcare and education. It talks to people through Facebook Messenger.[71][72][73][74]

In 2022, the chatbot "Leader Lars" or "Leder Lars" was nominated for The Synthetic Party to run in the Danish parliamentary election,[75] and was built by the artist collective Computer Lars.[76] Leader Lars differed from earlier virtual politicians by leading a political party and by not pretending to be an objective candidate.[77] This chatbot engaged in critical discussions on politics with users from around the world.[78]

In India, the state government of Maharashtra has launched a chatbot for its Aaple Sarkar platform,[79] which provides conversational access to information regarding the public services it manages.[80][81]

Government

Chatbots have been used at different levels of government departments, including local, national and regional contexts. Chatbots are used to provide services like citizenship and immigration, court administrations, financial aid, and migrants’ rights inquiries. For example, EMMA answers more than 500,000 inquiries monthly, regarding services on citizenship and immigration in the US.[82]

Toys

Chatbots have also been incorporated into devices not primarily meant for computing, such as toys.[83]

Hello Barbie is an Internet-connected version of the doll that uses a chatbot provided by the company ToyTalk,[84] which previously used the chatbot for a range of smartphone-based characters for children.[85] These characters' behaviors are constrained by a set of rules that in effect emulate a particular character and produce a storyline.[86]

The My Friend Cayla doll was marketed as a line of 18-inch (46 cm) dolls which use speech recognition technology in conjunction with an Android or iOS mobile app to recognize the child's speech and have a conversation. Like the Hello Barbie doll, it attracted controversy due to vulnerabilities with the doll's Bluetooth stack and its use of data collected from the child's speech.

IBM's Watson computer has been used as the basis for chatbot-based educational toys for companies such as CogniToys,[83] intended to interact with children for educational purposes.[87]

Malicious use

Malicious chatbots are frequently used to fill chat rooms with spam and advertisements by mimicking human behavior and conversations or to entice people into revealing personal information, such as bank account numbers. They were commonly found on Yahoo! Messenger, Windows Live Messenger, AOL Instant Messenger and other instant messaging protocols. There has also been a published report of a chatbot used in a fake personal ad on a dating service's website.[88]

Tay, an AI chatbot designed to learn from previous interaction, caused major controversy due to it being targeted by internet trolls on Twitter. Soon after its launch, the bot was exploited, and with its "repeat after me" capability, it started releasing racist, sexist, and controversial responses to Twitter users.[89] This suggests that although the bot learned effectively from experience, adequate protection was not put in place to prevent misuse.[90]

If a text-sending algorithm can pass itself off as a human instead of a chatbot, its message would be more credible. Therefore, human-seeming chatbots with well-crafted online identities could start scattering fake news that seems plausible, for instance making false claims during an election. With enough chatbots, it might be even possible to achieve artificial social proof.[91][92]

Data security

Data security is one of the major concerns of chatbot technologies. Security threats and system vulnerabilities are weaknesses that are often exploited by malicious users. Storage of user data and past communication, which is highly valuable for training and development of chatbots, can also give rise to security threats.[93] Chatbots operating on third-party networks may be subject to various security issues if owners of the third-party applications have policies regarding user data that differ from those of the chatbot.[93] Security threats can be reduced or prevented by incorporating protective mechanisms. User authentication, end-to-end encryption of chats, and self-destructing messages are some effective solutions to resist potential security threats.[93]
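One of the mechanisms named above, self-destructing messages, can be illustrated with a minimal in-memory sketch. The `ExpiringMessageStore` class, its `ttl_seconds` parameter and the injectable `clock` are assumptions made for this example; the sketch does not cover authentication, encryption or secure deletion from persistent storage, which a real deployment would also need to address.

```python
import time


class ExpiringMessageStore:
    """Keeps chat messages only until their time-to-live has elapsed."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        # The clock is injectable so expiry can be exercised without sleeping.
        self.clock = clock
        self._messages = []  # list of (expires_at, text) tuples

    def add(self, text: str) -> None:
        """Store a message together with the moment it should disappear."""
        self._messages.append((self.clock() + self.ttl_seconds, text))

    def read_all(self) -> list:
        """Discard expired messages, then return the remaining texts in order."""
        now = self.clock()
        self._messages = [(t, m) for t, m in self._messages if t > now]
        return [m for _, m in self._messages]
```

Limiting how long past communication is retained reduces the amount of stored user data that could be exposed if the system is compromised.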

Limitations of chatbots

The creation and implementation of chatbots is still a developing area, heavily related to artificial intelligence and machine learning, so the provided solutions, while possessing obvious advantages, have some important limitations in terms of functionalities and use cases. However, this is changing over time.

The most common limitations are listed below:[94]

- As the input/output database is fixed and limited, chatbots can fail while dealing with an unsaved query.[59]

- A chatbot's efficiency highly depends on language processing and is limited because of irregularities, such as accents and mistakes.

- Chatbots are unable to deal with multiple questions at the same time and so conversation opportunities are limited.[94]

- Chatbots require a large amount of conversational data to train. Generative models, which are based on deep learning algorithms to generate new responses word by word based on user input, are usually trained on a large dataset of natural-language phrases.[3]

- Chatbots have difficulty managing non-linear conversations that must go back and forth on a topic with a user.[95]

- As usually happens with technology-led changes in existing services, some consumers, more often than not from older generations, are uncomfortable with chatbots due to their limited understanding, making it obvious that their requests are being dealt with by machines.[94]

- Chatbots sometimes provide plausible-sounding but incorrect or nonsensical answers. They can make up names, dates and historical events, and can get even simple math problems wrong.[96]
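The word-by-word generation mentioned in the list above can be illustrated with a deliberately simple stand-in. The sketch below uses a bigram (Markov chain) model rather than deep learning, so it is not how modern generative chatbots are built; it only shows the idea of choosing each next word from what was observed in training data. The `CORPUS` sentences and the function names are invented for this example.

```python
import random
from collections import defaultdict

# Tiny made-up training corpus (an assumption for illustration only).
CORPUS = [
    "how can i help you today",
    "how can i reset my password",
    "i can help you reset your password",
]


def train_bigrams(sentences):
    """Map each word to the list of words observed directly after it."""
    followers = defaultdict(list)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            followers[current].append(nxt)
    return followers


def generate(followers, start, max_words=8, seed=0):
    """Build a reply one word at a time by sampling an observed follower."""
    rng = random.Random(seed)  # seeded so repeated runs give the same reply
    words = [start]
    # Stop at the length limit or when the last word has no known follower.
    while len(words) < max_words and followers.get(words[-1]):
        words.append(rng.choice(followers[words[-1]]))
    return " ".join(words)


if __name__ == "__main__":
    model = train_bigrams(CORPUS)
    print(generate(model, "how"))
```

Because each word is chosen only for being a plausible continuation, the output can be fluent yet wrong, which mirrors the last limitation in the list.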

In 2023, the US-based National Eating Disorders Association replaced its human helpline staff with a chatbot but had to take it offline after users reported receiving harmful advice from it.[97][98][99]

Impact on jobs

Chatbots are increasingly present in businesses and often are used to automate tasks that do not require skill-based talents. With customer service taking place via messaging apps as well as phone calls, there are growing numbers of use-cases where chatbot deployment gives organizations a clear return on investment. Call center workers may be particularly at risk from AI-driven chatbots.[100]

Chatbot developers create, debug, and maintain applications that automate customer services or other communication processes. Their duties include reviewing and simplifying code when needed. They may also help companies implement bots in their operations.

A study by Forrester (June 2017) predicted that 25% of all jobs would be impacted by AI technologies by 2019.[101]

Prompt engineering, the task of designing and refining prompts (inputs) that lead to desired AI-generated responses, has gained significant demand and popularity in recent years with the advent of sophisticated models, notably OpenAI's GPT series.

Impact on the environment

Generative AI is powered by large amounts of fossil fuels. Such energy use increases air pollution, water pollution, and greenhouse gas emissions. A single question asked in ChatGPT uses 1,567% of the energy used for a single Google search, that is, roughly 15.7 times as much.[102][103][104][105]

See also

- Applications of artificial intelligence

- Artificial intelligence and elections

- Autonomous agent

- Conversational user interface

- Dead Internet theory

- Dialogue system

- Eugene Goostman

- Friendly artificial intelligence

- Generative artificial intelligence

- Hybrid intelligent system

- Intelligent agent

- Internet bot

- List of chatbots

- Multi-agent system

- Natural language processing

- Social bot

- Software agent

- Software bot

- Stochastic parrot

- Twitterbot

References

- ^ Mauldin, Michael (1994), "ChatterBots, TinyMuds, and the Turing Test: Entering the Loebner Prize Competition", Proceedings of the Eleventh National Conference on Artificial Intelligence, AAAI Press, archived from the original on 13 December 2007, retrieved 5 March 2008

- ^ "What is a chatbot?". techtarget.com. Archived from the original on 2 November 2010. Retrieved 30 January 2017.

- ^ a b c Caldarini, Guendalina; Jaf, Sardar; McGarry, Kenneth (2022). "A Literature Survey of Recent Advances in Chatbots". Information. 13 (1). MDPI: 41. arXiv:2201.06657. doi:10.3390/info13010041.

- ^ Adamopoulou, Eleni; Moussiades, Lefteris (2020). "Chatbots: History, technology, and applications". Machine Learning with Applications. 2: 100006. doi:10.1016/j.mlwa.2020.100006.

- ^ Hu, Krystal (2 February 2023). "ChatGPT sets record for fastest-growing user base - analyst note". Reuters.

- ^ Hines, Kristi (4 June 2023). "History Of ChatGPT: A Timeline Of The Meteoric Rise Of Generative AI Chatbots". Search Engine Journal. Retrieved 17 November 2023.

- ^ "ChatGPT vs. Bing vs. Google Bard: Which AI is the Most Helpful?".

- ^ a b "GPT-4 takes the world by storm - List of companies that integrated the chatbot". 21 March 2023.

- ^ "2017 Messenger Bot Landscape, a Public Spreadsheet Gathering 1000+ Messenger Bots". 3 May 2017. Archived from the original on 2 February 2019. Retrieved 1 February 2019.

- ^ Hannigan, Timothy R.; McCarthy, Ian P.; Spicer, André (20 March 2024). "Beware of botshit: How to manage the epistemic risks of generative chatbots". Business Horizons. 67 (5): 471–486. doi:10.1016/j.bushor.2024.03.001. ISSN 0007-6813.

- ^ "Transcript: Ezra Klein Interviews Gary Marcus". The New York Times. 6 January 2023. ISSN 0362-4331. Retrieved 21 April 2024.

- ^ Turing, Alan (1950), "Computing Machinery and Intelligence", Mind, 59 (236): 433–460, doi:10.1093/mind/lix.236.433

- ^ a b Weizenbaum, Joseph (January 1966), "ELIZA – A Computer Program For the Study of Natural Language Communication Between Man And Machine", Communications of the ACM, 9 (1): 36–45, doi:10.1145/365153.365168, S2CID 1896290

- ^ Güzeldere, Güven; Franchi, Stefano (24 July 1995), "Constructions of the Mind", Stanford Humanities Review, SEHR, 4 (2), Stanford University, archived from the original on 11 July 2007, retrieved 5 March 2008

- ^ Computer History Museum (2006), "Internet History – 1970's", Exhibits, Computer History Museum, archived from the original on 21 February 2008, retrieved 5 March 2008

- ^ Sondheim, Alan J (1997), <nettime> Important Documents from the Early Internet (1972), nettime.org, archived from the original on 13 June 2008, retrieved 5 March 2008

- ^ Network Working Group (1973). "PARRY Encounters the DOCTOR". Internet Engineering Task Force. Internet Society. doi:10.17487/RFC0439. RFC 439. – Transcript of a session between Parry and Eliza. (This is not the dialogue from the ICCC, which took place 24–26 October 1972, whereas this session is from 18 September 1972.)

- ^ The Policeman's Beard is Half Constructed Archived 4 February 2010 at the Wayback Machine. everything2.com. 13 November 1999

- ^ Kolodner, Janet L. Memory organization for natural language data-base inquiry. Advanced Research Projects Agency, 1978.

- ^ a b Kolodner, Janet L. (1 October 1983). "Maintaining organization in a dynamic long-term memory". Cognitive Science. 7 (4): 243–280. doi:10.1016/S0364-0213(83)80001-9. ISSN 0364-0213.

- ^ Dennett, Daniel C. (2004), Teuscher, Christof (ed.), "Can Machines Think?", Alan Turing: Life and Legacy of a Great Thinker, Berlin, Heidelberg: Springer, pp. 295–316, doi:10.1007/978-3-662-05642-4_12, ISBN 978-3-662-05642-4, retrieved 23 July 2023

- ^ "Chat Robots Simiulate People". 11 October 2015.

- ^ "What is ChatGPT? - MagicBuddy". MagicBuddy. 2023. Retrieved 1 August 2023.

- ^ Luo, Renqian; Sun, Liai; Xia, Yingce; Qin, Tao; Zhang, Sheng; Poon, Hoifung; Liu, Tie-Yan; et al. (2022). "BioGPT: generative pre-trained transformer for biomedical text generation and mining". Brief Bioinform. 23 (6). arXiv:2210.10341. doi:10.1093/bib/bbac409. PMID 36156661.

- ^ Bastian, Matthias (29 January 2023). "BioGPT is a Microsoft language model trained for biomedical tasks". The Decoder. Archived from the original on 7 February 2023. Retrieved 7 February 2023.

- ^ Novet, Jordan (28 November 2023). "Amazon announces Q, an AI chatbot for businesses". CNBC. Retrieved 28 November 2023.

- ^ "DBpedia Chatbot". chat.dbpedia.org. Archived from the original on 8 September 2019. Retrieved 9 September 2019.

- ^ "Meet the DBpedia Chatbot | DBpedia". wiki.dbpedia.org. 22 August 2018. Archived from the original on 2 September 2019. Retrieved 2 September 2019.

- ^ "Meet the DBpedia Chatbot". dbpedia.org. 22 August 2018. Archived from the original on 2 September 2019. Retrieved 2 September 2019.

- ^ Beaver, Laurie (July 2016). "The Chatbots Explainer". Business Insider. BI Intelligence. Archived from the original on 3 May 2019. Retrieved 4 November 2019.

- ^ "Facebook Messenger Hits 100,000 bots". 18 April 2017. Archived from the original on 22 September 2017. Retrieved 22 September 2017.

- ^ "KLM claims airline first with WhatsApp Business Platform". www.phocuswire.com. Archived from the original on 5 February 2020. Retrieved 12 December 2021.

- ^ Forbes Staff (26 October 2017). "Aeroméxico te atenderá por WhatsApp durante 2018". Archived from the original on 2 July 2018. Retrieved 2 July 2018.

- ^ "Podrás hacer 'check in' y consultar tu vuelo con Aeroméxico a través de WhatsApp". Huffington Post. 27 October 2017. Archived from the original on 10 March 2018. Retrieved 2 July 2018.

- ^ "Building for People, and Now Businesses". WhatsApp.com. Archived from the original on 9 February 2018. Retrieved 2 July 2018.

- ^ "She is the company's most effective employee". Nordea News. September 2017. Archived from the original on 23 March 2023. Retrieved 23 March 2023.

- ^ "Better believe the bot boom is blowing up big for B2B, B2C businesses". VentureBeat. 24 July 2016. Archived from the original on 3 August 2017. Retrieved 30 August 2017.

- ^ Dandapani, Arundati (30 April 2020). "Redesigning Conversations with Artificial Intelligence (Chapter 11)". In Sha, Mandy (ed.). The Essential Role of Language in Survey Research. RTI Press. pp. 221–230. doi:10.3768/rtipress.bk.0023.2004. ISBN 978-1-934831-24-3.

- ^ Capan, Faruk (18 October 2017). "The AI Revolution is Underway!". www.PM360online.com. Archived from the original on 8 March 2018. Retrieved 7 March 2018.

- ^ "80% of businesses want chatbots by 2020". Business Insider. 15 December 2016. Archived from the original on 8 March 2018. Retrieved 7 March 2018.

- ^ a b "A Virtual Travel Agent With All the Answers". The New York Times. 4 March 2008. Archived from the original on 15 June 2017. Retrieved 3 August 2017.

- ^ "Chatbot vendor directory released". www.hypergridbusiness.com. October 2011. Archived from the original on 23 April 2017. Retrieved 23 April 2017.

- ^ "Rare Carat's Watson-powered chatbot will help you put a diamond ring on it". TechCrunch. 15 February 2017. Archived from the original on 22 August 2017. Retrieved 22 August 2017.

- ^ "10 ways you may have already used IBM Watson". VentureBeat. 10 March 2017. Archived from the original on 22 August 2017. Retrieved 22 August 2017.

- ^ Greenfield, Rebecca (5 May 2016). "Chatbots Are Your Newest, Dumbest Co-Workers". Bloomberg.com. Archived from the original on 6 April 2017. Retrieved 6 March 2017.

- ^ "Facebook opens its Messenger platform to chatbots". VentureBeat. 12 April 2016. Archived from the original on 23 June 2017. Retrieved 22 June 2017.

- ^ "Chatbot Reference Architecture". 1 January 2019. Archived from the original on 15 January 2019. Retrieved 14 January 2019.

- ^ Følstad, Asbjørn; Nordheim, Cecilie Bertinussen; Bjørkli, Cato Alexander (2018). "What Makes Users Trust a Chatbot for Customer Service? An Exploratory Interview Study". In Bodrunova, Svetlana S. (ed.). Internet Science. Lecture Notes in Computer Science. Vol. 11193. Cham: Springer International Publishing. pp. 194–208. doi:10.1007/978-3-030-01437-7_16. hdl:11250/2571164. ISBN 978-3-030-01437-7.

- ^ a b c Xu, Anbang; Liu, Zhe; Guo, Yufan; Sinha, Vibha; Akkiraju, Rama (2 May 2017). "A New Chatbot for Customer Service on Social Media". Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems. CHI '17. New York, NY, USA: Association for Computing Machinery. pp. 3506–3510. doi:10.1145/3025453.3025496. ISBN 978-1-4503-4655-9.

- ^ Hingrajia, Mirant. "How Chatbots are Transforming Wall Street and Main Street Banks?". Marutitech. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ "How to Manage Customer Service Technology Innovation". www.gartner.com. Archived from the original on 11 December 2019. Retrieved 2 January 2020.

- ^ Smith, Sam (8 May 2019). "Chatbot Interactions to Reach 22 Billion by 2023, as AI Offers Compelling New Engagement Solutions". Juniper Research. Archived from the original on 2 January 2020. Retrieved 2 January 2020.

- ^ "Facebook launches Messenger platform with chatbots". TechCrunch. 12 April 2016. Archived from the original on 26 October 2019. Retrieved 1 April 2019.

- ^ "Российский банк запустил чат-бота в Facebook" [Russian bank launches chatbot on Facebook]. Vedomosti.ru. 13 July 2016. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ "Absa launches 'world-first' Facebook Messenger banking". 19 July 2016. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ "The Biggest French Banks by Total Assets". Banks around the World. Archived from the original on 9 July 2017. Retrieved 1 April 2019.

- ^ "Gagner du Temps avec le Chatbot Bancaire pour Gagner en Intelligence avec les Conseillers" [Saving time with the banking chatbot to gain intelligence with advisers]. Marketing Client. 19 June 2018. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ Marous, Jim (14 March 2018). "Meet 11 of the Most Interesting Chatbots in Banking". The Financial Brand. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ a b "Chatbots: Boon or Bane?". bluelupin. 9 January 2018. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ Larson, Selena (11 October 2016). "Baidu is bringing AI chatbots to healthcare". CNN Money. Archived from the original on 3 January 2020. Retrieved 3 January 2020.

- ^ "AI chatbots have a future in healthcare, with caveats". AI in Healthcare. Archived from the original on 23 March 2023. Retrieved 17 September 2019.

- ^ Palanica, Adam; Flaschner, Peter; Thommandram, Anirudh; Li, Michael; Fossat, Yan (3 January 2019). "Physicians' Perceptions of Chatbots in Health Care: Cross-Sectional Web-Based Survey". Journal of Medical Internet Research. 21 (4): e12887. doi:10.2196/12887. PMC 6473203. PMID 30950796.

- ^ a b Biswas, Som S. (1 May 2023). "Role of Chat GPT in Public Health". Annals of Biomedical Engineering. 51 (5): 868–869. doi:10.1007/s10439-023-03172-7. ISSN 1573-9686. PMID 36920578.

- ^ Ahaskar, Abhijit (27 March 2020). "How WhatsApp chatbots are helping in the fight against Covid-19". Mint. Archived from the original on 23 July 2020. Retrieved 23 July 2020.

- ^ "India's Coronavirus Chatbot on WhatsApp Crosses 1.7 Crore Users in 10 Days". NDTV Gadgets 360. April 2020. Archived from the original on 21 June 2020. Retrieved 23 July 2020.

- ^ Kurup, Rajesh (21 March 2020). "COVID-19: Govt of India launches a WhatsApp chatbot". Business Line. Archived from the original on 23 July 2020. Retrieved 23 July 2020.

- ^ "In focus: Mumbai-based Haptik which developed India's official WhatsApp chatbot for Covid-19". Hindustan Times. 7 April 2020. Archived from the original on 23 July 2020. Retrieved 23 July 2020.

- ^ Nadarzynski, Tom; Miles, Oliver; Cowie, Aimee; Ridge, Damien (1 January 2019). "Acceptability of artificial intelligence (AI)-led chatbot services in healthcare: A mixed-methods study". Digital Health. 5: 2055207619871808. doi:10.1177/2055207619871808. PMC 6704417. PMID 31467682.

- ^ "Sam, the virtual politician". Tuia Innovation. Archived from the original on 1 September 2019. Retrieved 9 September 2019.

- ^ Victoria University of Wellington (15 December 2017). "Meet the world's first virtual politician". Archived from the original on 3 January 2020. Retrieved 3 January 2020.

- ^ Wagner, Meg (23 November 2017). "This virtual politician wants to run for office". CNN. Archived from the original on 1 September 2019. Retrieved 9 September 2019.

- ^ "Talk with the first-ever robot politician on Facebook Messenger". Engadget. 25 November 2017. Archived from the original on 4 August 2019. Retrieved 9 September 2019.

- ^ Prakash, Abishur (8 August 2018). "AI-Politicians: A Revolution In Politics". Medium. Archived from the original on 10 August 2019. Retrieved 1 September 2019.

- ^ "SAM website". Archived from the original on 11 May 2021. Retrieved 23 May 2021.

- ^ Sternberg, Sarah (20 June 2022). "Danskere vil ind på den politiske scene med kunstig intelligens" [Danes want to enter the political scene with artificial intelligence]. Jyllands-Posten. Archived from the original on 20 June 2022. Retrieved 20 June 2022.

- ^ Diwakar, Amar (22 August 2022). "Can an AI-led Danish party usher in an age of algorithmic politics?". TRT World. Archived from the original on 22 August 2022. Retrieved 22 August 2022.

- ^ Xiang, Chloe (13 October 2022). "This Danish Political Party Is Led by an AI". Vice: Motherboard. Archived from the original on 13 October 2022. Retrieved 13 October 2022.

- ^ Hearing, Alice (14 October 2022). "A.I. chatbot is leading a Danish political party and setting its policies. Now users are grilling it for its stance on political landmines". Fortune. Archived from the original on 22 December 2022. Retrieved 8 December 2022.

- ^ "Maharashtra government launches Aaple Sarkar chatbot to provide info on 1,400 public services". CNBC TV18. 5 March 2019. Archived from the original on 23 July 2020. Retrieved 23 July 2020.

- ^ "Government of Maharashtra launches Aaple Sarkar chatbot with Haptik". The Economic Times. Archived from the original on 16 December 2020. Retrieved 23 July 2020.

- ^ Aggarwal, Varun (5 March 2019). "Maharashtra launches Aaple Sarkar chatbot". Business Line. Archived from the original on 23 July 2020. Retrieved 23 July 2020.

- ^ Senadheera, Sajani; et al. (2024). "Understanding Chatbot Adoption in Local Governments: A Review and Framework". Journal of Urban Technology. doi:10.1080/10630732.2023.2297665.

- ^ a b Amy (23 February 2015). "Conversational Toys – The Latest Trend in Speech Technology". Virtual Agent Chat. Archived from the original on 21 February 2018. Retrieved 11 August 2016.

- ^ Nagy, Evie (13 February 2015). "Using Toy-talk Technology, New Hello Barbie Will Have Real Conversations With Kids". Fast Company. Archived from the original on 15 March 2015. Retrieved 18 March 2015.

- ^ Oren Jacob, the co-founder and CEO of ToyTalk interviewed on the TV show Triangulation on the TWiT.tv network

- ^ "Artificial intelligence script tool". Archived from the original on 12 December 2021. Retrieved 12 December 2021.

- ^ Takahashi, Dean (23 February 2015). "Elemental's smart connected toy taps IBM's Watson supercomputer for its brains". VentureBeat. Archived from the original on 20 May 2015. Retrieved 15 May 2015.

- ^ Epstein, Robert (October 2007). "From Russia With Love: How I got fooled (and somewhat humiliated) by a computer" (PDF). Scientific American: Mind. pp. 16–17. Archived (PDF) from the original on 19 October 2010. Retrieved 9 December 2007. Psychologist Robert Epstein reports how he was initially fooled by a chatterbot posing as an attractive girl in a personal ad he answered on a dating website. In the ad, the girl portrayed herself as being in Southern California and then soon revealed, in poor English, that she was actually in Russia. He became suspicious after a couple of months of email exchanges, sent her an email test of gibberish, and she still replied in general terms. The dating website is not named.

- ^ Neff, Gina; Nagy, Peter (12 October 2016). "Automation, Algorithms, and Politics| Talking to Bots: Symbiotic Agency and the Case of Tay". International Journal of Communication. 10: 17. ISSN 1932-8036.

- ^ Bird, Jordan J.; Ekart, Aniko; Faria, Diego R. (June 2018). "Learning from Interaction: An Intelligent Networked-Based Human-Bot and Bot-Bot Chatbot System". Advances in Computational Intelligence Systems. Advances in Intelligent Systems and Computing. Vol. 840 (1st ed.). Nottingham, UK: Springer. pp. 179–190. doi:10.1007/978-3-319-97982-3_15. ISBN 978-3-319-97982-3. S2CID 52069140.

- ^ Temming, Maria (20 November 2018). "How Twitter bots get people to spread fake news". Science News. Archived from the original on 27 November 2018. Retrieved 20 November 2018.

- ^ Epp, Len (11 May 2016). "Five Potential Malicious Uses For Chatbots". Archived from the original on 24 February 2023. Retrieved 24 February 2023.

- ^ a b c Hasal, Martin; Nowaková, Jana; Ahmed Saghair, Khalifa; Abdulla, Hussam; Snášel, Václav; Ogiela, Lidia (10 October 2021). "Chatbots: Security, privacy, data protection, and social aspects". Concurrency and Computation: Practice and Experience. 33 (19). doi:10.1002/cpe.6426. hdl:10084/145153. ISSN 1532-0626.

- ^ a b c Marous, Jim (14 March 2018). "Meet 11 of the Most Interesting Chatbots in Banking". The Financial Brand. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ Grudin, Jonathan; Jacques, Richard (2019), "Chatbots, Humbots, and the Quest for Artificial General Intelligence", Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems – CHI '19, ACM CHI 2020, pp. 209–219, doi:10.1145/3290605.3300439, ISBN 978-1-4503-5970-2, S2CID 140274744

- ^ Stover, Dawn (3 September 2023). "Will AI make us crazy?". Bulletin of the Atomic Scientists. 79 (5): 299–303. doi:10.1080/00963402.2023.2245247. ISSN 0096-3402.

- ^ Crimmins, Tricia (30 May 2023). "'This robot causes harm': National Eating Disorders Association's new chatbot advises people with disordering eating to lose weight". The Daily Dot. Retrieved 2 June 2023.

- ^ Knight, Taylor (31 May 2023). "Eating disorder helpline fires AI for harmful advice after sacking humans".

- ^ Aratani, Lauren (31 May 2023). "US eating disorder helpline takes down AI chatbot over harmful advice". The Guardian. ISSN 0261-3077. Retrieved 1 June 2023.

- ^ "How talking machines are taking call center jobs". BBC News. 23 August 2018. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ "How chatbots are killing jobs (and creating new ones)". 18 June 2017. Archived from the original on 1 April 2019. Retrieved 1 April 2019.

- ^ Crownhart, Casey. "AI is an energy hog. This is what it means for climate change". MIT Technology Review.

- ^ "Can We Mitigate AI's Environmental Impacts?". Yale School of the Environment.

- ^ Saenko, Kate. "A Computer Scientist Breaks Down Generative AI's Hefty Carbon Footprint". Scientific American.

- ^ Sreedhar, Nitin. "AI and its carbon footprint: How much water does ChatGPT consume?". Mint Lounge.

Further reading

- Gertner, Jon. (2023) "Wikipedia's Moment of Truth: Can the online encyclopedia help teach A.I. chatbots to get their facts right — without destroying itself in the process?" New York Times Magazine (18 July 2023) online

- Searle, John (1980), "Minds, Brains and Programs", Behavioral and Brain Sciences, 3 (3): 417–457, doi:10.1017/S0140525X00005756, S2CID 55303721

- Shevat, Amir (2017). Designing bots: Creating conversational experiences (First ed.). Sebastopol, CA: O'Reilly Media. ISBN 978-1-4919-7482-7. OCLC 962125282.

- Vincent, James, "Horny Robot Baby Voice: James Vincent on AI chatbots", London Review of Books, vol. 46, no. 19 (10 October 2024), pp. 29–32. "[AI chatbot] programs are made possible by new technologies but rely on the timeless human tendency to anthropomorphise." (p. 29.)

External links

- Media related to Chatbots at Wikimedia Commons

- Conversational bots at Wikibooks