Text-to-image model

A text-to-image model is a machine learning model which takes an input natural language description and produces an image matching that description.

Text-to-image models began to be developed in the mid-2010s during the beginnings of the AI boom, as a result of advances in deep neural networks. In 2022, the output of state-of-the-art text-to-image models—such as OpenAI's DALL-E 2, Google Brain's Imagen, Stability AI's Stable Diffusion, and Midjourney—began to be considered to approach the quality of real photographs and human-drawn art.

Text-to-image models are generally latent diffusion models, which combine a language model, which transforms the input text into a latent representation, and a generative image model, which produces an image conditioned on that representation. The most effective models have generally been trained on massive amounts of image and text data scraped from the web.[1]

History

[edit]Before the rise of deep learning,[when?] attempts to build text-to-image models were limited to collages by arranging existing component images, such as from a database of clip art.[2][3]

The inverse task, image captioning, was more tractable, and a number of image captioning deep learning models came prior to the first text-to-image models.[4]

The first modern text-to-image model, alignDRAW, was introduced in 2015 by researchers from the University of Toronto. alignDRAW extended the previously-introduced DRAW architecture (which used a recurrent variational autoencoder with an attention mechanism) to be conditioned on text sequences.[4] Images generated by alignDRAW were in small resolution (32×32 pixels, attained from resizing) and were considered to be 'low in diversity'. The model was able to generalize to objects not represented in the training data (such as a red school bus) and appropriately handled novel prompts such as "a stop sign is flying in blue skies", exhibiting output that it was not merely "memorizing" data from the training set.[4][5]

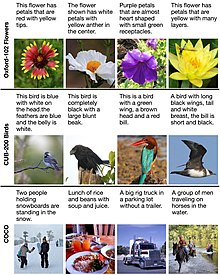

In 2016, Reed, Akata, Yan et al. became the first to use generative adversarial networks for the text-to-image task.[5][7] With models trained on narrow, domain-specific datasets, they were able to generate "visually plausible" images of birds and flowers from text captions like "an all black bird with a distinct thick, rounded bill". A model trained on the more diverse COCO (Common Objects in Context) dataset produced images which were "from a distance... encouraging", but which lacked coherence in their details.[5] Later systems include VQGAN-CLIP,[8] XMC-GAN, and GauGAN2.[9]

One of the first text-to-image models to capture widespread public attention was OpenAI's DALL-E, a transformer system announced in January 2021.[10] A successor capable of generating more complex and realistic images, DALL-E 2, was unveiled in April 2022,[11] followed by Stable Diffusion that was publicly released in August 2022.[12] In August 2022, text-to-image personalization allows to teach the model a new concept using a small set of images of a new object that was not included in the training set of the text-to-image foundation model. This is achieved by textual inversion, namely, finding a new text term that correspond to these images.

Following other text-to-image models, language model-powered text-to-video platforms such as Runway, Make-A-Video,[13] Imagen Video,[14] Midjourney,[15] and Phenaki[16] can generate video from text and/or text/image prompts.[17]

Architecture and training

[edit]

Text-to-image models have been built using a variety of architectures. The text encoding step may be performed with a recurrent neural network such as a long short-term memory (LSTM) network, though transformer models have since become a more popular option. For the image generation step, conditional generative adversarial networks (GANs) have been commonly used, with diffusion models also becoming a popular option in recent years. Rather than directly training a model to output a high-resolution image conditioned on a text embedding, a popular technique is to train a model to generate low-resolution images, and use one or more auxiliary deep learning models to upscale it, filling in finer details.

Text-to-image models are trained on large datasets of (text, image) pairs, often scraped from the web. With their 2022 Imagen model, Google Brain reported positive results from using a large language model trained separately on a text-only corpus (with its weights subsequently frozen), a departure from the theretofore standard approach.[18]

Datasets

[edit]

Training a text-to-image model requires a dataset of images paired with text captions. One dataset commonly used for this purpose is the COCO dataset. Released by Microsoft in 2014, COCO consists of around 123,000 images depicting a diversity of objects with five captions per image, generated by human annotators. Oxford-120 Flowers and CUB-200 Birds are smaller datasets of around 10,000 images each, restricted to flowers and birds, respectively. It is considered less difficult to train a high-quality text-to-image model with these datasets because of their narrow range of subject matter.[7]

Quality evaluation

[edit]Evaluating and comparing the quality of text-to-image models is a problem involving assessing multiple desirable properties. A desideratum specific to text-to-image models is that generated images semantically align with the text captions used to generate them. A number of schemes have been devised for assessing these qualities, some automated and others based on human judgement.[7]

A common algorithmic metric for assessing image quality and diversity is the Inception Score (IS), which is based on the distribution of labels predicted by a pretrained Inceptionv3 image classification model when applied to a sample of images generated by the text-to-image model. The score is increased when the image classification model predicts a single label with high probability, a scheme intended to favour "distinct" generated images. Another popular metric is the related Fréchet inception distance, which compares the distribution of generated images and real training images according to features extracted by one of the final layers of a pretrained image classification model.[7]

Impact and applications

[edit]List of notable text-to-image models

[edit]| Name | Release date | Developer | License |

|---|---|---|---|

| DALL-E | January 2021 | OpenAI | Proprietary |

| DALL-E 2 | April 2022 | ||

| DALL-E 3 | September 2023 | ||

| Ideogram 2.0 | August 2024 | Ideogram | |

| Imagen | April 2023 | ||

| Imagen 2 | December 2023[22] | ||

| Imagen 3 | May 2024 | ||

| Parti | Unreleased | ||

| Firefly | March 2023 | Adobe Inc. | |

| Midjourney | July 2022 | Midjourney, Inc. | |

| Stable Diffusion | August 2022 | Stability AI | Stability AI Community License[note 1] |

| FLUX.1 | August 2024 | Black Forest Labs | Apache License[note 2] |

| RunwayML | 2018 | Runway AI, Inc. | Proprietary |

Explanatory notes

[edit]- ^ This license can be used by individuals and organizations up to $1 million in revenue, for organizations with annual revenue more than $1 million, Stability AI Enterprise License is needed. All outputs are retained by users regardless of revenue

- ^ For the schnell model, the dev model is using a non-commercial license while the pro model is proprietary (only available as API)

See also

[edit]References

[edit]- ^ Vincent, James (May 24, 2022). "All these images were generated by Google's latest text-to-image AI". The Verge. Vox Media. Retrieved May 28, 2022.

- ^ Agnese, Jorge; Herrera, Jonathan; Tao, Haicheng; Zhu, Xingquan (October 2019), A Survey and Taxonomy of Adversarial Neural Networks for Text-to-Image Synthesis, arXiv:1910.09399

- ^ Zhu, Xiaojin; Goldberg, Andrew B.; Eldawy, Mohamed; Dyer, Charles R.; Strock, Bradley (2007). "A text-to-picture synthesis system for augmenting communication" (PDF). AAAI. 7: 1590–1595.

- ^ a b c Mansimov, Elman; Parisotto, Emilio; Lei Ba, Jimmy; Salakhutdinov, Ruslan (November 2015). "Generating Images from Captions with Attention". ICLR. arXiv:1511.02793.

- ^ a b c Reed, Scott; Akata, Zeynep; Logeswaran, Lajanugen; Schiele, Bernt; Lee, Honglak (June 2016). "Generative Adversarial Text to Image Synthesis" (PDF). International Conference on Machine Learning. arXiv:1605.05396.

- ^ Mansimov, Elman; Parisotto, Emilio; Ba, Jimmy Lei; Salakhutdinov, Ruslan (February 29, 2016). "Generating Images from Captions with Attention". International Conference on Learning Representations. arXiv:1511.02793.

- ^ a b c d Frolov, Stanislav; Hinz, Tobias; Raue, Federico; Hees, Jörn; Dengel, Andreas (December 2021). "Adversarial text-to-image synthesis: A review". Neural Networks. 144: 187–209. arXiv:2101.09983. doi:10.1016/j.neunet.2021.07.019. PMID 34500257. S2CID 231698782.

- ^ Rodriguez, Jesus (27 September 2022). "🌅 Edge#229: VQGAN + CLIP". thesequence.substack.com. Retrieved 2022-10-10.

- ^ Rodriguez, Jesus (4 October 2022). "🎆🌆 Edge#231: Text-to-Image Synthesis with GANs". thesequence.substack.com. Retrieved 2022-10-10.

- ^ Coldewey, Devin (5 January 2021). "OpenAI's DALL-E creates plausible images of literally anything you ask it to". TechCrunch.

- ^ Coldewey, Devin (6 April 2022). "OpenAI's new DALL-E model draws anything — but bigger, better and faster than before". TechCrunch.

- ^ "Stable Diffusion Public Release". Stability.Ai. Retrieved 2022-10-27.

- ^ Kumar, Ashish (2022-10-03). "Meta AI Introduces 'Make-A-Video': An Artificial Intelligence System That Generates Videos From Text". MarkTechPost. Retrieved 2022-10-03.

- ^ Edwards, Benj (2022-10-05). "Google's newest AI generator creates HD video from text prompts". Ars Technica. Retrieved 2022-10-25.

- ^ Rodriguez, Jesus (25 October 2022). "🎨 Edge#237: What is Midjourney?". thesequence.substack.com. Retrieved 2022-10-26.

- ^ "Phenaki". phenaki.video. Retrieved 2022-10-03.

- ^ Edwards, Benj (9 September 2022). "Runway teases AI-powered text-to-video editing using written prompts". Ars Technica. Retrieved 12 September 2022.

- ^ Saharia, Chitwan; Chan, William; Saxena, Saurabh; Li, Lala; Whang, Jay; Denton, Emily; Kamyar Seyed Ghasemipour, Seyed; Karagol Ayan, Burcu; Sara Mahdavi, S.; Gontijo Lopes, Rapha; Salimans, Tim; Ho, Jonathan; J Fleet, David; Norouzi, Mohammad (23 May 2022). "Photorealistic Text-to-Image Diffusion Models with Deep Language Understanding". arXiv:2205.11487 [cs.CV].

- ^ a b c Elgan, Mike (1 November 2022). "How 'synthetic media' will transform business forever". Computerworld. Retrieved 9 November 2022.

- ^ Roose, Kevin (21 October 2022). "A.I.-Generated Art Is Already Transforming Creative Work". The New York Times. Retrieved 16 November 2022.

- ^ a b Leswing, Kif. "Why Silicon Valley is so excited about awkward drawings done by artificial intelligence". CNBC. Retrieved 16 November 2022.

- ^ "Imagen 2 on Vertex AI is now generally available". Google Cloud Blog. Retrieved 2024-01-02.