Logistic regression: Difference between revisions

Repairing links to disambiguation pages - You can help! - Degree of freedom |

No edit summary |

||

| (18 intermediate revisions by the same user not shown) | |||

| Line 1: | Line 1: | ||

{{Regression bar}} |

||

In [[statistics]], '''logistic regression''' is a type of [[regression analysis]] used for predicting the outcome of a [[categorical variable|categorical]] [[dependent variable|criterion variable]] (a variable that can take on a limited number of categories) based on one or more predictor variables. Logistic regression can be binomial or multinomial. '''Binomial''' or '''binary logistic regression''' refers to the instance in which the criterion can take on only two possible outcomes (e.g., "dead" vs. "alive", "success" vs. "failure", or "yes" vs. "no"). [[multinomial logit|Multinomial logistic regression]] refers to the instance in which the criterion can take on three or more possible outcomes (e.g., "better" vs. "no change" vs. "worse"). Generally, the criterion is coded as "0" and "1" in binary logistic regression as it leads to the most straightforward interpretation.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> The target group (referred to as a "case") is usually coded as "1" and the reference group (referred to as a "noncase") as "0". For a single binary observation, the [[binomial distribution]] has a mean equal to the proportion of cases, denoted ''P'', and a [[variance]] equal to the product of the proportions of cases and noncases, ''PQ'', wherein ''Q'' is equal to the proportion of noncases or 1 - ''P''.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> Accordingly, the [[standard deviation]] is simply the square root of ''PQ''. Logistic regression is used to predict the [[odds]] of being a case based on the predictor(s). The odds are defined as the probability of a case divided by the probability of a noncase.
The [[odds ratio]] is the primary measure of effect size in logistic regression. It compares the odds of a case outcome for members of one group with the odds of a case outcome for members of another group. The odds ratio (denoted OR) is simply the odds of being a case for one group divided by the odds of being a case for another group. An odds ratio of one indicates that the odds of a case outcome are equal for the two groups under comparison. The further the odds ratio deviates from one, the stronger the relationship. The odds ratio has a floor of zero but no ceiling (upper limit); theoretically, it can increase without bound.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> |
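The definitions of the odds and the odds ratio given above can be illustrated with a short sketch. The group names and the two proportions of cases below are hypothetical values chosen only for illustration; they do not come from any cited source.

```python
def odds(p):
    """Odds of being a case: P / (1 - P), for a probability 0 <= P < 1."""
    return p / (1.0 - p)


def odds_ratio(p_group_a, p_group_b):
    """Odds of a case in group A divided by the odds of a case in group B."""
    return odds(p_group_a) / odds(p_group_b)


# Hypothetical proportions of cases in two groups (illustration only).
p_treated = 0.8
p_control = 0.5

print("odds (treated):", odds(p_treated))
print("odds (control):", odds(p_control))
print("odds ratio    :", odds_ratio(p_treated, p_control))
```

An odds ratio of one is returned whenever the two proportions are equal, and the ratio grows without bound as the first proportion approaches one.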

|||

In [[statistics]], '''logistic regression''' (sometimes called the '''logistic model''' or '''[[logit]] model''') is a type of [[regression analysis]] used for predicting the outcome of a [[binary variable|binary]] [[dependent variable]] (a variable which can take only two possible outcomes, e.g. "yes" vs. "no" or "success" vs. "failure") based on one or more [[independent variable|predictor variable]]s. Logistic regression attempts to model the [[probability]] of a "yes/success" outcome using a [[linear function]] of the predictors. Specifically, the [[log-odds]] of success (the [[logit]] of the probability) is fit to the predictors using [[linear regression]]. Logistic regression is one type of [[discrete choice]] model; such models in general predict [[categorical variable|categorical]] dependent variables, either binary or multi-way. |

|||

Like other forms of regression analysis, logistic regression makes use of one or more predictor variables that may be either [[continuous]] or [[categorical]]. Also, like other linear regression models, the [[expected value]] (average value) of the response variable is fit to the predictors - the expected value of a [[Bernoulli distribution]] is simply the [[probability]] of a case. In other words, in logistic regression the base rate of a case for the null model (the model without any predictors or the intercept-only model) is fit to the model including one or more predictors. Unlike ordinary linear regression, however, logistic regression is used for predicting binary outcomes ([[Bernoulli trials]]) rather than continuous outcomes. Given this difference, it is necessary that logistic regression take the [[natural logarithm]] of the odds (referred to as the [[logit]] or [[log-odds]]) to create a continuous criterion. The logit of success is then fit to the predictors using regression analysis. The results of the logit, however, are not intuitive, so the logit is converted back to the odds via the [[exponential function]] or the inverse of the natural logarithm. Therefore, although the observed variables in logistic regression are [[categorical]], the predicted scores are actually modelled as a continuous variable (the logit). The logit is referred to as the ''link function'' in logistic regression - although the output in logistic regression is binomial and displayed in a [[contingency table]], the logit is an underlying continuous criterion upon which linear regression is conducted. <ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> |

||

For example, logistic regression might be used to predict whether a patient has a given disease (e.g. [[diabetes]]), based on observed characteristics of the patient (age, gender, [[body mass index]], results of various [[blood test]]s, etc.). Another example might be to predict whether a voter will vote Democratic or Republican, based on age, income, gender, race, state of residence, votes in previous elections, etc. Logistic regression is used extensively in numerous disciplines: the medical and social sciences fields, [[natural language processing]], marketing applications such as prediction of a customer's propensity to purchase a product or cease a subscription, etc. In each of these instances, a logistic regression model would compute the relevant [[odds]] for each predictor or interaction term, take the [[natural logarithm]] of the odds (compute the [[logit]]), conduct a linear regression analysis on the predicted values of the [[logit]], and then take the [[exponential function]] of the [[logit]] to compute the [[odds ratio]]. |

||

==Introduction== |

||

Both linear and logistic regression analyses compare the observed values of the criterion with the values predicted with and without the variable(s) in question, in order to determine whether the model that includes the variable(s) predicts the outcome more accurately than the model without that variable (or set of variables).<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> Given that both analyses are guided by the same goal, why is logistic regression needed for analyses with a dichotomous criterion, and why is [[linear regression]] inappropriate? There are several reasons. First, it violates the assumption of linearity. The linear regression line is the expected value of the criterion given the predictor(s) and is equal to the intercept (the value of the criterion when the predictor(s) are equal to zero) plus the product of the regression coefficient and some given value of the predictor. This implies that the expected value of the criterion can take on any value as the predictor(s) range over <math>(-\infty,+\infty)</math>; however, this is not the case with a dichotomous criterion.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> The conditional mean of a dichotomous criterion must be greater than or equal to zero and less than or equal to one; thus, the relationship is not linear but [[sigmoid]] or S-shaped.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> As the predictors approach <math>-\infty</math> the conditional mean asymptotes at zero, and as the predictors approach <math>+\infty</math> it asymptotes at one. Linear regression disregards this information, so predicted probabilities may fall below zero or above one although such values are not theoretically permissible.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> Furthermore, there is no straightforward interpretation of such values. |

|||

Logistic regression is a [[generalized linear model]], specifically a type of [[binomial regression]]. It is often compared with [[probit model|probit regression]], the other main type of binomial regression, which transforms the probability using the [[probit function]] (the [[quantile function]] of the [[normal distribution]]) rather than the [[logit function]]. Both functions have a similar shape, and both serve to transform the limited range of a probability, restricted to the range <math>[0,1]</math>, into the full range <math>(-\infty,+\infty)</math>, which makes the transformed value more suitable for fitting using a linear function. The effect of both functions is to transform the middle of the probability range (near 50%) more or less linearly, while stretching out the extremes (near 0% or 100%) [[exponential growth|exponentially]]. |

|||

Second, conducting linear regression with a dichotomous criterion violates the assumption that the error term is [[homoscedasticity|homoscedastic]].<ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=978-0761922087|edition=2. ed.}}</ref> [[Homoscedasticity]] is the assumption that variance in the criterion is constant at all levels of the predictor(s). This assumption will always be violated when one has a criterion that is distributed binomially. Consider the variance formula: ''e'' = ''PQ'', wherein ''P'' is equal to the proportion of "1's" or "cases" and ''Q'' is equal to (1 - ''P''), the proportion of "0's" or "noncases" in the distribution. Given that there are only two possible outcomes in a binomial distribution, one can determine the proportion of "noncases" from the proportion of "cases" and vice versa. Likewise, one can also determine the variance of the distribution from either the proportion of "cases" or "noncases". Because the proportion of cases changes with the level of the predictor, so does the variance: the error term is not [[homoscedastic]] but [[heteroscedastic]], meaning that the variance is not equal at all levels of the predictor. The variance is greatest when the proportion of cases equals .5: ''e'' = ''PQ'' = .5(1 - .5) = .5(.5) = .25. As the proportion of cases approaches the extremes, however, error approaches zero. For example, when the proportion of cases equals .99, there is almost zero error: ''e'' = ''PQ'' = .99(1 - .99) = .99(.01) = .0099. Therefore, error or variance in the criterion is not independent of the predictor variable(s). |
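The dependence of the variance ''PQ'' on the proportion of cases can be checked numerically. The following is a minimal sketch; the function name and the grid of proportions are illustrative choices, not part of the cited sources.

```python
def bernoulli_variance(p):
    """Variance PQ of a dichotomous criterion whose proportion of cases is P."""
    q = 1.0 - p
    return p * q


# Illustrative grid of proportions of cases.
proportions = [0.01, 0.10, 0.25, 0.50, 0.75, 0.90, 0.99]

for p in proportions:
    print(f"P = {p:.2f}   PQ = {bernoulli_variance(p):.4f}")

# The variance peaks at P = .5 and shrinks toward zero at the extremes,
# so it cannot be constant across levels of a predictor that changes P.
largest = max(proportions, key=bernoulli_variance)
print("largest variance at P =", largest)
```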

|||

This agrees with the intuition that, e.g., if a certain amount of lobbying causes a given senator to increase his/her chances of voting in favor of a bill from 50% to 75% (a change of 25 percentage points), the same amount of lobbying applied to an already highly favorable senator might change his/her chances from 90% to 95% (a change of 5 percentage points) rather than 90% to a nonsensical 115%; when applied to an even more favorable senator, the chances might go from 95% to 97.5% (a change of 2.5 percentage points); and when applied to an extremely unfavorable senator, might change the chances from 5% to 10% (a change of 5 percentage points) or 10% to 20% (a change of 10 percentage points). This shows that it is unreasonable to directly model a probability using a linear function, since this implies that a given change in a predictor variable always causes the same absolute change in the response variable regardless of the previous value of the variable. Rather, it appears that a given change in a predictor causes a proportional change in the distance of the response probability from either extreme (0% or 100%): in the above example, in all cases the probability moved either twice as close to, or twice as far from, one of the extremes. The logit and probit transformations produce approximately this kind of behavior. |

|||

Third, conducting linear regression with a dichotomous variable violates the assumption that error is normally distributed because the criterion has only two values.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> Given that a dichotomous criterion violates these assumptions of linear regression, conducting linear regression with a dichotomous criterion may lead to errors in inference and at the very least, interpretation of the outcome will not be straightforward.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=0805822232|edition=3. ed.}}</ref> |

|||

It is important to note that, although the observed outcomes of the response variables are [[categorical variable|categorical]] — simple "yes" or "no" outcomes — logistic regression actually models a [[continuous variable]] (the [[probability]] of "yes"). This probability is a [[latent variable]] that is assumed to generate the observed yes/no outcomes. At its heart, this is conceptually similar to ordinary [[linear regression]], which predicts the unobserved [[expected value]] of the outcome (e.g. the average income, height, etc.), which in turn generates the observed value of the outcome (which is likely to be somewhere near the average, but may differ by an "error" term). The difference is that for a simple [[normal distribution|normally distributed]] continuous variable, the average (expected) value and observed value are measured with the same units. Thus it is convenient to conceive of the observed value as simply the expected value plus some error term, and often to blur the difference between the two. For logistic regression, however, the expected value and observed value are different types of values (continuous vs. discrete), and visualizing the observed value as expected value plus error does not work. As a result, the distinction between expected and observed value must always be kept in mind. |

|||

Given the shortcomings of the linear regression model for dealing with a dichotomous criterion, it is necessary to use some other analysis. Besides logistic regression, there is at least one additional alternative analysis for dealing with a dichotomous criterion - [[discriminant function analysis]]. Like logistic regression, [[discriminant function analysis]] is a technique in which a set of predictors is used to determine group membership. There are two problems with [[discriminant function analysis]], however: first, like linear regression, [[discriminant function analysis]] may produce probabilities greater than one or less than zero, even though such probabilities are theoretically inadmissible. In addition, [[discriminant function analysis]] assumes that the predictor variables are normally distributed.<ref>{{cite book|last=Howell|first=David C.|title=Statistical methods for psychology|year=2010|publisher=Thomson Wadsworth|location=Belmont, CA|isbn=9780495597841|edition=7th ed.}}</ref> Logistic regression neither produces probabilities that lie below zero or above one, nor imposes restrictive normality assumptions on the predictors. |

|||

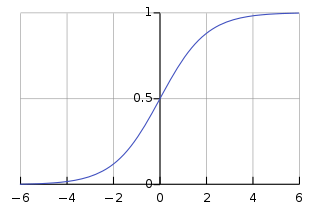

Logistic regression is a [[generalized linear model]], specifically a type of [[binomial regression]]. Logistic regression serves to transform the limited range of a probability, restricted to the range <math>[0,1]</math>, into the full range <math>(-\infty,+\infty)</math>, which makes the transformed value more suitable for fitting using a linear function. The effect of the transformation is to map the middle of the probability range (near 50%) more or less linearly, while stretching out the extremes (near 0% or 100%) [[exponential growth|exponentially]]. This is because in the middle of the probability range, one expects a relatively linear function; it is towards the extremes that the regression line begins to curve as it approaches its asymptotes, hence the sigmoidal shape (see Figure 1). In essence, when conducting logistic regression, one is transforming the probability of a case outcome into the odds of a case outcome and taking the [[natural logarithm]] of the odds to create the [[logit]]. The odds provide an improvement over probability as the criterion because the odds have no fixed upper limit; however, the odds are still limited in that they have a fixed lower limit of zero and their values do not tend to be normally distributed or linearly related to the predictors. Hence, it is necessary to take the [[natural logarithm]] of the [[odds]] to remedy these limitations. |

|||

The [[natural logarithm]] of some value ''Y'' is the power to which the base ''e'' must be raised to produce ''Y''. [[Euler's number]] or ''e'' is a mathematical constant equal to about 2.71828. A simple example of this relationship is when ''Y'' = ''e'' (about 2.71828): then ln(''Y'') = 1, because ''e'' need only be raised to the power of 1 to equal itself. In other words, 1 is the power to which the base ''e'' must be raised to equal ''Y'' (2.71828). Given that the logit is not generally interpreted and that the [[exponential function]] of the [[logit]] (the inverse of the [[natural logarithm]]) is generally interpreted instead, it is also helpful to examine this function (denoted <math>e^{Y}</math>). To illustrate the relationship between the [[exponential function]] and the [[natural logarithm]], consider exponentiating the result of the natural logarithm above. There, the natural logarithm of 2.71828 was equal to 1. Here, if one exponentiates 1, the result is 2.71828; thus, the exponential function is the inverse of the natural logarithm. The [[logit]] can be thought of as a [[latent]] continuous variable that is fit to the predictors analogous to the manner in which a continuous criterion is fit to the predictors in [[linear regression]] analysis. After the criterion (the logit) is fit to the predictors, the result is [[exponential function|exponentiated]], converting the unintuitive logit back into the easily interpretable odds. It is important to note that the [[probability]], the [[odds]], and the [[logit]] all provide the same information. A probability of .5 is equal to odds of 1 and a logit of 0; all three values indicate that "case" and "noncase" outcomes are equally likely. |
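The chain of conversions described above (probability to odds, odds to logit via the natural logarithm, and back again via the exponential function) can be sketched as follows. The function names are illustrative and the probabilities are arbitrary example values.

```python
import math


def probability_to_odds(p):
    """Odds = P / (1 - P)."""
    return p / (1.0 - p)


def odds_to_logit(odds):
    """Logit = natural logarithm of the odds."""
    return math.log(odds)


def logit_to_odds(logit):
    """Exponentiating the logit recovers the odds."""
    return math.exp(logit)


def odds_to_probability(odds):
    """P = odds / (1 + odds)."""
    return odds / (1.0 + odds)


# Arbitrary example probabilities.
for p in (0.1, 0.5, 0.9):
    odds = probability_to_odds(p)
    logit = odds_to_logit(odds)
    # Round trip: logit -> odds -> probability returns the starting value.
    recovered = odds_to_probability(logit_to_odds(logit))
    print(f"P = {p:.2f}  odds = {odds:.4f}  logit = {logit:+.4f}  recovered P = {recovered:.2f}")
```

The three quantities are one-to-one transformations of each other, which is why a fitted logit can always be reported as odds or as a probability.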

|||

It is also important to note that, although the observed outcomes of the response variables are [[categorical variable|categorical]] — simple "yes" or "no" outcomes — logistic regression actually models a [[continuous variable]] (the [[probability]] of "yes"). This probability is a [[latent variable]] that is assumed to generate the observed yes/no outcomes. At its heart, this is conceptually similar to ordinary [[linear regression]], which predicts the unobserved [[expected value]] of the outcome (e.g. the average income, height, etc.), which in turn generates the observed value of the outcome (which is likely to be somewhere near the average, but may differ by an "error" term). The difference is that for a simple [[normal distribution|normally distributed]] continuous variable, the average (expected) value and observed value are measured with the same units. Thus it is convenient to conceive of the observed value as simply the expected value plus some error term, and often to blur the difference between the two. For logistic regression, however, the expected value and observed value are different types of values (continuous vs. discrete), and visualizing the observed value as expected value plus error does not work. As a result, the distinction between expected and observed value must always be kept in mind. |

|||

== Definition == |

||

[[Image:Logistic-curve.svg|thumb|320px|right|Figure 1. The logistic function, with <math>\beta_0 + \beta_1 X_1 + e</math> on the horizontal axis and <math>\pi(x)</math> on the vertical axis]] |

||

An explanation of logistic regression begins with an explanation of the [[logistic function]], which, like probabilities, always takes on values between zero and one: |

||

:<math>\pi(x) = \frac{e^{(\beta_0 + \beta_1 X_1 + e)}} {e^{(\beta_0 + \beta_1 X_1 + e)} + 1} = \frac {1} {e^{-(\beta_0 + \beta_1 X_1 + e)} + 1}</math>

||

AND |

|||

:<math>g(x) = \ln \frac {\pi(x)} {1 - \pi(x)} = \beta_0 + \beta_1 X_1 + e</math>

|||

AND |

|||

:<math>\frac{\pi(x)} {1 - \pi(x)} = e^{(\beta_0 + \beta_1 X_1 + e)}</math><ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> |

|||

A graph of the function is shown in Figure 1. The input is <math>\beta_0 + \beta_1 X_1 + e</math> and the output is <math>\pi(x)</math>. The logistic function is useful because it can take as an input any value from negative infinity to positive infinity, whereas the output is confined to values between 0 and 1. In the above equations, ''g''(''x'') refers to the logit function of some given predictor ''X'', ln denotes the [[natural logarithm]], <math>\pi(x)</math> is the probability of being a case, <math>\beta_0</math> is the [[intercept]] from the linear regression equation (the value of the criterion when the predictor is equal to zero), <math>\beta_1 X_1</math> is the regression coefficient multiplied by some value of the predictor, base ''e'' denotes the [[exponential function]], and ''e'' in the linear regression equation denotes the error term. The first formula illustrates that the probability of being a case is equal to the exponential function of the linear regression equation divided by one plus that same quantity. This is important in that it shows that the input of the logistic regression equation (the linear regression equation) can vary from negative to positive infinity and yet the output will vary between zero and one. The second equation illustrates that the [[logit]] (i.e., log-odds or natural logarithm of the odds) is equivalent to the linear regression equation. Likewise, the third equation illustrates that the odds of being a case are equivalent to the [[exponential function]] of the linear regression equation. This illustrates how the [[logit]] serves as a link function between the probability and the linear regression equation. Given that the logit ranges over <math>(-\infty,+\infty)</math>, it provides an adequate criterion upon which to conduct linear regression, and the logit is easily converted back into the odds.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> |
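The behaviour of the three equations can be sketched numerically. In the sketch below the coefficient values are hypothetical, the helper names are illustrative, and the error term ''e'' is omitted for simplicity (an assumption of this example, not of the equations above).

```python
import math


def linear_predictor(x, beta0, beta1):
    """beta0 + beta1 * x, with the error term left out for simplicity."""
    return beta0 + beta1 * x


def logistic(z):
    """pi = 1 / (1 + e^(-z)); the output always lies between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def logit(p):
    """g = ln(pi / (1 - pi)); the inverse of the logistic function."""
    return math.log(p / (1.0 - p))


# Hypothetical coefficients, chosen only for illustration.
beta0, beta1 = -3.0, 1.5

for x in (-4.0, 0.0, 2.0, 4.0, 8.0):
    z = linear_predictor(x, beta0, beta1)
    p = logistic(z)
    # logit(p) returns the linear predictor, and exp(z) gives the odds.
    print(f"x = {x:+.1f}  z = {z:+.2f}  pi(x) = {p:.5f}  "
          f"logit = {logit(p):+.2f}  odds = {math.exp(z):.5f}")
```

However large or small the linear predictor becomes, the probability stays strictly between zero and one, while the logit recovers the linear predictor.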

|||

This is where it becomes extremely sensible to use reference cell coding ("0" = non case, "1" = case). With this coding scheme the odds ratio is equal to the exponential function of the regression coefficient.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> |

|||

<math>OR = \frac{\left(\frac{e^{\beta_0 + \beta_1}}{1 + e^{\beta_0 + \beta_1}}\right) \div \left(\frac{1}{1 + e^{\beta_0 + \beta_1}}\right)} {\left(\frac{e^{\beta_0}}{1 + e^{\beta_0}}\right) \div \left(\frac{1}{1 + e^{\beta_0}}\right)}</math>

|||

<math> = \frac{e^{\beta_0 + \beta_1}} {e^{\beta_0}}</math> |

|||

<math> = e^{(\beta_0 + \beta_1) - \beta_0}</math> |

|||

<math> = e^{\beta_1}</math><ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> |

|||

Therefore, when one uses a reference coding scheme, the exponentiation of the regression coefficient is the odds ratio and no further calculations are necessary. |
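The identity derived above can be verified numerically for a predictor coded 0/1. The coefficient values in this sketch are hypothetical and the function names are illustrative.

```python
import math


def case_probability(beta0, beta1, x):
    """Probability of a case from the logistic function (no error term)."""
    z = beta0 + beta1 * x
    return math.exp(z) / (1.0 + math.exp(z))


def odds(p):
    """Odds of a case: P / (1 - P)."""
    return p / (1.0 - p)


# Hypothetical coefficients for a predictor coded 0 (reference) or 1.
beta0, beta1 = -1.2, 0.7

odds_x1 = odds(case_probability(beta0, beta1, 1))  # odds when x = 1
odds_x0 = odds(case_probability(beta0, beta1, 0))  # odds when x = 0
odds_ratio = odds_x1 / odds_x0

print("odds ratio from probabilities:", odds_ratio)
print("exp(beta1)                   :", math.exp(beta1))
```

The two printed values agree up to floating-point rounding, whatever value is chosen for the intercept.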

|||

== Model Fitting == |

|||

===Maximum Likelihoods=== |

|||

In linear regression one uses an analytical solution to estimate regression coefficients by finding those values that minimize the sum of squared residuals (error variance).<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=0805822232|edition=3. ed.}}</ref> In other words, there is a series of computations that one can make to derive a solution. In logistic regression there is no set of equations from which one can derive a solution - an analytical solution does not exist. Instead, logistic regression uses the [[maximum likelihood]] procedure to estimate the coefficients that maximize the likelihood of the regression coefficients given the predictors and criterion. <ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> Unlike analytical solutions wherein it is possible to solve directly for the coefficients, the [[maximum likelihood]] solution is an iterative process that begins with a tentative solution, revises it slightly to see if it can be improved, and repeats this process until improvement is minute, at which point the model is said to have converged.<ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> What this means is that the [[maximum likelihood]] procedure has found a solution that maximizes the likelihood of the coefficients given the predictor(s) and criterion. |
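The iterative procedure described above can be sketched for a single predictor. This is a minimal illustration using Newton-Raphson updates, which is one common way of maximizing the likelihood and is assumed here rather than taken from the cited sources. The data set, the function names, the starting values, and the convergence tolerance are all hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def log_likelihood(b0, b1, xs, ys):
    """Log-likelihood of the coefficients given predictor xs and criterion ys."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(b0 + b1 * x)
        total += y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total


def fit_logistic(xs, ys, tol=1e-8, max_iter=50):
    """Start from a tentative solution and revise it until improvement is minute."""
    b0, b1 = 0.0, 0.0  # tentative starting solution
    previous = log_likelihood(b0, b1, xs, ys)
    for iteration in range(1, max_iter + 1):
        g0 = g1 = 0.0          # gradient of the log-likelihood
        h00 = h01 = h11 = 0.0  # negated second derivatives (information matrix)
        for x, y in zip(xs, ys):
            p = sigmoid(b0 + b1 * x)
            w = p * (1.0 - p)
            g0 += y - p
            g1 += (y - p) * x
            h00 += w
            h01 += w * x
            h11 += w * x * x
        # Newton step: solve the 2x2 system (information matrix) * step = gradient.
        det = h00 * h11 - h01 * h01
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
        current = log_likelihood(b0, b1, xs, ys)
        if abs(current - previous) < tol:  # improvement is minute: converged
            return b0, b1, current, iteration
        previous = current
    raise RuntimeError("model did not converge")


# Hypothetical data with overlapping outcomes (no complete separation).
xs = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0]
ys = [0, 0, 0, 1, 0, 1, 0, 1, 1, 1]

b0, b1, final_ll, n_iter = fit_logistic(xs, ys)
print(f"intercept = {b0:.4f}, slope = {b1:.4f}")
print(f"log-likelihood = {final_ll:.4f} after {n_iter} iterations")
```

If the data were completely separated, the slope would grow without limit and the loop would either exhaust its iterations or fail numerically, which corresponds to a model that does not converge.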

|||

In some instances the model may not reach convergence. When a model does not converge this indicates that the coefficients are not reliable as the model never reached a final solution. Lack of convergence may result from a number of problems: having a large ratio of predictors to cases, [[multicollinearity]], [[sparse matrix|sparseness]], or complete separation. Although not a precise number, as a general rule of thumb, logistic regression models require a minimum of 10 cases per variable.<ref>{{cite journal|last=Peduzzi|first=P|coauthors=Concato, J, Kemper, E, Holford, TR, Feinstein, AR|title=A simulation study of the number of events per variable in logistic regression analysis.|journal=Journal of clinical epidemiology|date=1996 Dec|volume=49|issue=12|pages=1373-9|pmid=8970487}}</ref> Having a large proportion of variables to cases results in an overly conservative Wald statistic (discussed below) and can lead to nonconvergence. Multicollinearity refers to unacceptably high correlations between predictors. As multicollinearity increases, coefficients remain unbiased but standard errors increase and the likelihood of model convergence decreases.<ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> To detect multicollinearity amongst the predictors, one can conduct a linear regression analysis with the predictors of interest for the sole purpose of examining the tolerance statistic<ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> used to assess whether multicollinearity is unacceptably high. Sparseness in the data refers to having a large proportion of empty cells (cells with zero counts). Zero cell counts are particularly problematic with categorical predictors. 
With continuous predictors, the model can infer values for the zero cell counts, but this is not the case with categorical predictors. The reason the model will not converge with zero cell counts for categorical predictors is because the natural logarithm of zero is an undefined value, so final solutions to the model cannot be reached. To remedy this problem, researchers may collapse categories in a theoretically meaningful way or may consider adding a constant to all cells.<ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> Another numerical problem that may lead to a lack of convergence is complete separation, which refers to the instance in which the predictors perfectly predict the criterion - all cases are accurately classified. In such instances, one should reexamine the data, as there is likely some kind of error.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> |

|||

=== Deviance and Likelihood Ratio Tests === |

|||

In linear regression analysis, one is concerned with partitioning variance via the [[sum of squares]] calculations - variance in the criterion is essentially divided into variance accounted for by the predictors and residual variance. In logistic regression analysis, deviance is used in lieu of sum of squares calculations.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> Deviance is analogous to the sum of squares calculations in linear regression<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> and is a measure of the lack of fit to the data in a logistic regression model.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> Deviance is calculated by comparing a given model with the saturated model - a model with a theoretically perfect fit.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> This computation is called the [[likelihood ratio test]]: |

|||

<math> D = -2\ln \frac{\text{likelihood of the fitted model}}{\text{likelihood of the saturated model}}</math><ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> |

|||

In the above equation ''D'' represents the deviance and ln represents the [[natural logarithm]]. Because the likelihood of the fitted model cannot exceed that of the saturated model, the [[likelihood ratio]] (the ratio of the fitted model to the saturated model) lies between zero and one and its [[natural logarithm]] is negative; multiplying the logarithm by negative two produces a positive value with an approximate [[chi-square]] distribution.<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> Smaller values indicate better fit as the fitted model deviates less from the saturated model. When assessed upon a chi-square distribution, nonsignificant chi-square values indicate very little unexplained variance and thus, good model fit. Conversely, a significant [[chi-square]] value indicates that a significant amount of the variance is unexplained. Two measures of deviance are particularly important in logistic regression: null deviance and model deviance. The null deviance represents the difference between a model with only the intercept and no predictors and the saturated model. The model deviance represents the difference between a model with at least one predictor and the saturated model.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> In this respect, the null model provides a baseline upon which to compare predictor models. Therefore, to assess the contribution of a predictor or set of predictors, one can subtract the model deviance from the null deviance and assess the difference on a chi-square distribution with [[degrees of freedom|degrees of freedom]] equal to the number of predictors added (one for a single predictor).<ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref> If the model deviance is significantly smaller than the null deviance then one can conclude that the predictor or set of predictors significantly improved model fit. This is analogous to the ''F''-test used in linear regression analysis to assess the significance of prediction.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> |
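The comparison of null and model deviance described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the outcomes and the "fitted" probabilities are made-up numbers rather than the result of an actual maximum-likelihood fit, the function name <code>deviance</code> is hypothetical, and the model is assumed to contain a single predictor, so the difference is referred to a chi-square distribution with one degree of freedom.

```python
import math

def deviance(y, p):
    """Deviance of a binary model: -2 times its log-likelihood.

    For ungrouped 0/1 outcomes the saturated model has likelihood 1,
    so the log of the likelihood ratio is just the model's log-likelihood.
    """
    return -2.0 * sum(
        math.log(pi) if yi == 1 else math.log(1.0 - pi)
        for yi, pi in zip(y, p)
    )

# Hypothetical data: observed 0/1 outcomes and made-up fitted probabilities.
y = [0, 0, 0, 1, 0, 1, 1, 1]
p_model = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]

# The null (intercept-only) model predicts the base rate for every observation.
base_rate = sum(y) / len(y)
p_null = [base_rate] * len(y)

d_null = deviance(y, p_null)
d_model = deviance(y, p_model)
lr_chi_square = d_null - d_model  # reduction in deviance due to the predictor

# Upper-tail probability of a chi-square variable with 1 degree of freedom
# (assumption: exactly one predictor was added to the null model).
p_value = math.erfc(math.sqrt(lr_chi_square / 2.0))

print(f"null deviance  = {d_null:.3f}")
print(f"model deviance = {d_model:.3f}")
print(f"difference     = {lr_chi_square:.3f}, p = {p_value:.4f}")
```

With more than one added predictor the same difference would be compared against a chi-square distribution with correspondingly more degrees of freedom, which requires a general chi-square routine rather than the one-degree-of-freedom shortcut used here.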

|||

=== Pseudo-R<sup>2</sup>s=== |

|||

A graph of the function is shown in figure 1. The input is ''z'' and the output is ''ƒ''(''z''). The logistic function is useful because it can take as an input any value from negative infinity to positive infinity, whereas the output is confined to values between 0 and 1. The variable ''z'' represents the exposure to some set of independent variables, while ''ƒ''(''z'') represents the probability of a particular outcome, given that set of explanatory variables. The variable ''z'' is a measure of the total contribution of all the independent variables used in the model and is known as the [[logit]]. |

|||

In linear regression the squared multiple correlation, ''R''<sup>2</sup> is used to assess goodness of fit as it represents the proportion of variance in the criterion that is explained by the predictors.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> In logistic regression analysis, there is no agreed upon analogous measure, but there are several competing measures each with limitations.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> Three of the most commonly used indices are examined on this page beginning with the likelihood ratio ''R''<sup>2</sup>, ''R''<sup>2</sup><sub>''L''</sub>: |

|||

The variable ''z'' is usually defined as |

|||

:<math>z=\beta_0 + \beta_1x_1 + \beta_2x_2 + \beta_3x_3 + \cdots + \beta_kx_k,</math> |

|||

:<math>R^2_L = \frac{D_{null} - D_{model}} {D_{null}}</math><ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> |

|||

where <math>\beta_0</math> is called the "[[y-intercept|intercept]]" and <math>\beta_1</math>, <math>\beta_2</math>, <math>\beta_3</math>, and so on, are called the "[[regression coefficient]]s" of <math>x_1</math>, <math>x_2</math>, <math>x_3</math> respectively. The intercept is the value of ''z'' when the values of all independent variables are zero (e.g. the value of ''z'' in someone with no risk factors). Each of the regression coefficients describes the size of the contribution of that risk factor. A positive regression coefficient means that the explanatory variable increases the probability of the outcome, while a negative regression coefficient means that the variable decreases the probability of that outcome; a large regression coefficient means that the risk factor strongly influences the probability of that outcome, while a near-zero regression coefficient means that the risk factor has little influence on the probability of that outcome. |

|||

This is the most analogous index to the squared multiple correlation in linear regression.<ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> It represents the proportional reduction in the deviance wherein the deviance is treated as a measure of variation analogous but not identical to the [[variance]] in [[linear regression]] analysis.<ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> One limitation of the [[likelihood ratio]] ''R''<sup>2</sup> is that it is not monotonically related to the odds ratio,<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> meaning that it does not necessarily increase as the odds ratio increases and does not necessarily decrease as the odds ratio decreases. |

|||

Logistic regression is a useful way of describing the relationship between one or more independent variables (e.g., age, sex, etc.) and a binary response variable, expressed as a probability, that has only two values, such as having cancer ("has cancer" or "doesn't have cancer") <!-- previous example (death) is not as instructive; 'death' is easily verifiable and needs no probability-->. |

|||

The Cox and Snell ''R''<sup>2</sup> is an alternative index of goodness of fit related to the ''R''<sup>2</sup> value from linear regression. The Cox and Snell index is problematic as its maximum value is less than one: it is .75 when the [[variance]] is at its maximum (.25). The Nagelkerke ''R''<sup>2</sup> provides a correction to the Cox and Snell ''R''<sup>2</sup> so that the maximum value is equal to one. Nevertheless, the Cox and Snell and likelihood ratio ''R''<sup>2</sup>s show greater agreement with each other than either does with the Nagelkerke ''R''<sup>2</sup>.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> Of course, this might not be the case for values exceeding .75 as the Cox and Snell index is capped at this value. The likelihood ratio ''R''<sup>2</sup> is often preferred to the alternatives as it is most analogous to ''R''<sup>2</sup> in [[linear regression]], is independent of the base rate (both Cox and Snell and Nagelkerke ''R''<sup>2</sup>s increase as the proportion of cases increases from 0 to .5) and varies between 0 and 1. |
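The three indices can be compared numerically with a short Python sketch. The likelihood ratio formula is the one given in this section; the Cox and Snell and Nagelkerke formulas are the commonly cited definitions, rewritten here in terms of deviances under the assumption that the deviance equals negative two times the log-likelihood (ungrouped binary data). The function name <code>pseudo_r_squared</code> and the example numbers are hypothetical.

```python
import math

def pseudo_r_squared(d_null, d_model, n):
    """Return (likelihood ratio, Cox and Snell, Nagelkerke) pseudo-R^2 values.

    d_null, d_model: null and model deviance (assumed equal to -2 * log-likelihood)
    n: number of observations
    """
    r2_l = (d_null - d_model) / d_null
    # Cox and Snell: 1 - (L_null / L_model)^(2/n), expressed through deviances.
    r2_cs = 1.0 - math.exp(-(d_null - d_model) / n)
    # Largest value Cox and Snell can reach (a model with zero deviance).
    r2_cs_max = 1.0 - math.exp(-d_null / n)
    # Nagelkerke rescales Cox and Snell so that its maximum is one.
    r2_n = r2_cs / r2_cs_max
    return r2_l, r2_cs, r2_n

# Hypothetical example: 100 observations, half of them cases, so the
# null deviance is 2 * n * ln(2); the model deviance is a made-up value.
n = 100
d_null = 2 * n * math.log(2)
r2_l, r2_cs, r2_n = pseudo_r_squared(d_null, 100.0, n)
print(f"R2_L = {r2_l:.3f}, Cox-Snell = {r2_cs:.3f}, Nagelkerke = {r2_n:.3f}")
```

Passing a model deviance of zero with a base rate of .5 reproduces the ceiling discussed above: the Cox and Snell value is .75 while the other two indices equal one.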

|||

== Sample size-dependent efficiency == |

|||

Logistic regression tends to systematically overestimate odds ratios or beta coefficients when the sample size is less than about 500. With increasing sample size, the magnitude of overestimation diminishes and the estimated odds ratio asymptotically approaches the true population value. In a single study, overestimation due to small sample size might not have any relevance for the interpretation of the results, since it is much lower than the standard error of the estimate. However, if a number of small studies with systematically overestimated effects are pooled together without consideration of this effect, an effect may be perceived when in reality it does not exist.<ref>Nemes S, Jonasson JM, Genell A, Steineck G. 2009 Bias in odds ratios by logistic regression modelling and sample size. BMC Medical Research Methodology 9:56 [http://www.biomedcentral.com/1471-2288/9/56 BioMedCentral]</ref> |

|||

A word of caution is in order when interpreting pseudo-''R''<sup>2</sup> statistics. The reason these indices of fit are referred to as ''pseudo'' ''R''<sup>2</sup> is because they do not represent the proportionate reduction in error as the ''R''<sup>2</sup> in [[linear regression]] does.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> Linear regression assumes [[homoscedasticity]], that the error variance is the same for all values of the criterion. Logistic regression will always be [[heteroscedastic]] - the error variances differ for each value of the predicted score. For each value of the predicted score there would be a different value of the proportionate reduction in error. Therefore, it is inappropriate to think of ''R''<sup>2</sup> as a proportionate reduction in error in a universal sense in logistic regression.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> |

|||

A minimum of 10 events per independent variable has been recommended.<ref>{{cite journal|author=Peduzzi P, Concato J, Kemper E, Holford TR, Feinstein AR|title=A simulation study of the number of events per variable in logistic regression analysis|journal=J Clin Epidemiol|year=1996|volume=49|issue=12|pages=1373–9|pmid=8970487}}</ref><ref>{{cite book|author=Agresti A|title=An Introduction to Categorical Data Analysis|chapter=Building and applying logistic regression models|year=2007|publisher=Wiley|location=Hoboken, New Jersey|page=138|isbn=978-0-471-22618-5}}</ref> For example, in a study where death is the outcome of interest, and 50 of 100 patients die, the maximum number of independent variables the model can support is 50/10 = 5. |

|||

== |

== Coefficients == |

||

After fitting the model, it is likely that researchers will want to examine the contribution of individual predictors. To do so, they will want to examine the regression coefficients. In linear regression, the regression coefficients represent the change in the criterion for each unit change in the predictor.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> In logistic regression, however, the regression coefficients represent the rate of change in the logit for each unit change in the predictor. Given that the logit is not intuitive, researchers are likely to focus on a predictor's effect on the [[exponential function]] of the regression coefficient - the odds ratio (see [[logistic regression#definition|definition]]). In linear regression, the significance of a regression coefficient is assessed by computing a ''t''-test. In logistic regression, there are a couple of different tests designed to assess the significance of an individual predictor, most notably, the [[likelihood ratio test]] and the [[Wald Test|Wald statistic]]. |

|||

The application of a logistic regression may be illustrated using a fictitious example of death from heart disease. This simplified model uses only three risk factors (age, sex, and blood cholesterol level) to predict the 10-year risk of death from heart disease. These are the parameters that the data fit: |

|||

:<math>\beta_0=-5.0 \text{ (the intercept)}</math> |

|||

:<math>\beta_1=+2.0</math> |

|||

:<math>\beta_2=-1.0</math> |

|||

:<math>\beta_3=+1.2</math> |

|||

:<math>x_1=\text{ age in years, above 50}</math> |

|||

:<math>x_2=\text{ sex, where 0 is male and 1 is female}</math> |

|||

:<math>x_3=\text{ cholesterol level, in mmol/L above 5.0}</math> |

|||

=== Likelihood Ratio Test === |

|||

The model can hence be expressed as |

|||

The [[likelihood ratio test]] discussed above to assess model fit is also the recommended procedure to assess the contribution of individual predictors to a given model.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref><ref>{{cite book|last=Lemeshow|first=David W. Hosmer, Stanley|title=Applied logistic regression|year=2000|publisher=Wiley|location=New York|isbn=0471356328|edition=2nd ed.}}</ref><ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> In the case of a single predictor model, one simply compares the predictor model with the null model on a chi-square distribution with a single degree of freedom. If the predictor model has a significantly smaller chi-square value, then one can conclude that the predictor significantly predicts the criterion. Given that some common statistical packages (e.g., SAS, SPSS) do not provide likelihood ratio test statistics, it can be more difficult to assess the contribution of individual predictors in the multiple logistic regression case. To assess the contribution of individual predictors one can enter the predictors hierarchically, comparing each new model with the previous to determine the contribution of each predictor.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> |

|||

:<math>\text{risk of death} = \frac{1}{1+e^{-z}} \text{, where } z=-5.0 +2.0x_1 -1.0x_2 + 1.2x_3.</math> |

|||

=== Wald Statistic === |

|||

In this model, increasing age is associated with an increasing risk of death from heart disease (''z'' goes up by 2.0 for every year over the age of 50), female sex is associated with a decreased risk of death from heart disease (''z'' goes down by 1.0 if the patient is female), and increasing cholesterol is associated with an increasing risk of death (''z'' goes up by 1.2 for each 1 mmol/L increase in cholesterol above 5 mmol/L). |

|||

Alternatively, when assessing the contribution of individual predictors in a given model, one may examine the significance of the [[Wald Test|Wald statistic]]. The [[Wald Test|Wald statistic]], analogous to the ''t''-test in linear regression, is used to assess the significance of coefficients. The [[Wald Test|Wald statistic]] is the ratio of the square of the regression coefficient to the square of the standard error of the coefficient and is asymptotically distributed as a chi-square distribution.<ref>{{cite book|last=Menard|first=Scott|title=Applied logistic regression analysis|year=2002|publisher=Sage|location=Thousand Oaks, Calif [u.a.]|isbn=9780761922087|edition=2. ed.}}</ref> |

|||

We wish to use this model to predict a particular subject's risk of death from heart disease: he is 50 years old and his cholesterol level is 7.0 mmol/L. The subject's risk of death is therefore |

|||

<math>W_j = \frac{B_j^2}{SE_{B_j}^2}</math> |

|||

Although several statistical packages (e.g., SPSS, SAS) report the [[Wald Test|Wald statistic]] to assess the contribution of individual predictors, the [[Wald Test|Wald statistic]] is not without limitations. When the regression coefficient is large, the standard error of the regression coefficient also tends to be large, which shrinks the statistic and increases the probability of [[Type I and Type II errors|Type-II error]]. The [[Wald Test|Wald statistic]] also tends to be biased when data are sparse.<ref>{{cite book|first=Jacob Cohen|title=Applied multiple regression/correlation analysis for the behavioral sciences|publisher=Erlbaum|location=Mahwah, NJ [u.a.]|isbn=9780805822236|edition=3. ed.}}</ref> |
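As a rough illustration of the Wald statistic, the following Python sketch squares the ratio of a coefficient to its standard error and refers the result to a chi-square distribution with one degree of freedom. The coefficient and standard error are made-up values, and the function name <code>wald_test</code> is hypothetical.

```python
import math

def wald_test(b, se):
    """Wald statistic for one coefficient and its chi-square (1 df) p-value."""
    w = (b / se) ** 2
    # Upper-tail probability of a chi-square variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(w / 2.0))
    return w, p_value

# Hypothetical coefficient and standard error.
w, p = wald_test(1.2, 0.5)
print(f"W = {w:.2f}, p = {p:.4f}")
```

Because the standard error sits in the denominator, an inflated standard error shrinks the statistic even when the coefficient itself is large.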

|||

This means that by this model, the subject's risk of dying from heart disease in the next 10 years is 0.07 (or 7%). |
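The calculation for this subject can be reproduced with a short Python sketch of the fictitious heart disease model. The coefficients and the coding of the predictors are those given in the example; the function name <code>risk_of_death</code> is hypothetical.

```python
import math

def risk_of_death(age, female, cholesterol):
    """10-year risk of death from heart disease under the fictitious model."""
    x1 = age - 50             # age in years above 50
    x2 = 1 if female else 0   # sex: 0 is male, 1 is female
    x3 = cholesterol - 5.0    # cholesterol in mmol/L above 5.0
    z = -5.0 + 2.0 * x1 - 1.0 * x2 + 1.2 * x3
    return 1.0 / (1.0 + math.exp(-z))

# The subject from the example: a 50-year-old man with cholesterol 7.0 mmol/L.
risk = risk_of_death(age=50, female=False, cholesterol=7.0)
print(f"risk = {risk:.2f}")
```

Here ''z'' = -5.0 + 2.0(0) - 1.0(0) + 1.2(2.0) = -2.6, and applying the logistic function to this value gives a risk of about 0.07.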

|||

== Formal mathematical specification == |


||

| Line 483: | Line 520: | ||

| year = 1991 |


||

| isbn = 978-0-8247-8587-1 }} |


||

*{{cite book |

|||

| last = Cohen |

|||

| first = Jacob |

|||

| coauthors = Patricia Cohen, Steven G. West, Leona S. Aiken |

|||

| title = Applied Multiple Regression/Correlation Analysis for the Behavioral Sciences, 3rd ed. |

|||

| publisher = New York: Routledge |

|||

| year = 2003 |

|||

| isbn = 978-0-8058-2223-6 }} |

|||

*{{cite book |


||

| last = Greene |


||

| Line 505: | Line 550: | ||

| year = 2000 |


||

| isbn = 0-471-35632-8 }} |


||

*{{cite book |

|||

| last = Howell |

|||

| first = David C. |

|||

| title = Statistical Methods for Psychology, 7th ed. |

|||

| publisher = Belmont, CA; Thomson Wadsworth |

|||

| year = 2010 |

|||

| isbn = 978-0-495-59786-5 }} |

|||

*{{cite book |

|||

| last = Menard |

|||

| first = Scott W. |

|||

| title = Applied Logistic Regression Analysis, 2nd ed. |

|||

| publisher = Thousand Oaks; SAGE |

|||

| year = 2002 |

|||

| isbn = 9780761922087 }} |

|||

*{{cite journal |

|||

| last = Peduzzi |

|||

| first = P. |

|||

| coauthors = J. Concato, E. Kemper, T.R. Holford, A.R. Feinstein |

|||

| title = "A simulation study of the number of events per variable in logistic regression analysis" |

|||

| journal = Journal of Clinical Epidemiology |

|||

| volume = 49 (12) |

|||

| pages = 1373 - 1379 |

|||

| year = 1996 |

|||

| PMID = 8970487 }} |

|||

==External links== |


||

Revision as of 00:10, 28 April 2012


In statistics, logistic regression is a type of regression analysis used for predicting the outcome of a categorical criterion variable (a variable that can take on a limited number of categories) based on one or more predictor variables. Logistic regression can be bi- or multinomial. Binomial or binary logistic regression refers to the instance in which the criterion can take on only two possible outcomes (e.g., "dead" vs. "alive", "success" vs. "failure", or "yes" vs. "no"). Multinomial logistic regression refers to the instance in which the criterion can take on three or more possible outcomes (e.g., "better" vs. "no change" vs. "worse"). Generally, the criterion is coded as "0" and "1" in binary logistic regression as it leads to the most straightforward interpretation.[1] The target group (referred to as a "case") is usually coded as "1" and the reference group (referred to as a "noncase") as "0". The binomial distribution has a mean equal to the proportion of cases, denoted P, and a variance equal to the product of the proportions of cases and noncases, PQ, wherein Q is equal to the proportion of noncases or 1 - P.[2] Accordingly, the standard deviation is simply the square root of PQ. Logistic regression is used to predict the odds of being a case based on the predictor(s). The odds are defined as the probability of a case divided by the probability of a noncase. The odds ratio is the primary measure of effect size in logistic regression and is computed to compare the odds that membership in one group will lead to a case outcome with the odds that membership in some other group will lead to a case outcome. The odds ratio (denoted OR) is simply the odds of being a case for one group divided by the odds of being a case for another group. An odds ratio of one indicates that the odds of a case outcome are equal for both groups under comparison. The further the odds ratio deviates from one, the stronger the relationship. The odds ratio has a floor of zero but no ceiling (upper limit) - theoretically, the odds ratio can increase infinitely.[3]

Like other forms of regression analysis, logistic regression makes use of one or more predictor variables that may be either continuous or categorical. Also, like other linear regression models, the expected value (average value) of the response variable is fit to the predictors - the expected value of a Bernoulli distribution is simply the probability of a case. In other words, in logistic regression the base rate of a case for the null model (the model without any predictors or the intercept-only model) is fit to the model including one or more predictors. Unlike ordinary linear regression, however, logistic regression is used for predicting binary outcomes (Bernoulli trials) rather than continuous outcomes. Given this difference, it is necessary that logistic regression take the natural logarithm of the odds (referred to as the logit or log-odds) to create a continuous criterion. The logit of success is then fit to the predictors using regression analysis. The results of the logit, however, are not intuitive, so the logit is converted back to the odds via the exponential function or the inverse of the natural logarithm. Therefore, although the observed variables in logistic regression are categorical, the predicted scores are actually modelled as a continuous variable (the logit). The logit is referred to as the link function in logistic regression - although the output in logistic regression is binomial and displayed in a contingency table, the logit is an underlying continuous criterion upon which linear regression is conducted. [4]

For example, logistic regression might be used to predict whether a patient has a given disease (e.g. diabetes), based on observed characteristics of the patient (age, gender, body mass index, results of various blood tests, etc.). Another example might be to predict whether a voter will vote Democratic or Republican, based on age, income, gender, race, state of residence, votes in previous elections, etc. Logistic regression is used extensively in numerous disciplines: the medical and social sciences fields, natural language processing, marketing applications such as prediction of a customer's propensity to purchase a product or cease a subscription, etc. In each of these instances, a logistic regression model would compute the relevant odds for each predictor or interaction term, take the natural logarithm of the odds (compute the logit), conduct a linear regression analysis on the predicted values of the logit, and then take the exponential function of the logit to compute the odds ratio.

Introduction

Both linear and logistic regression analyses compare the observed values of the criterion with the predicted values with and without the variable(s) in question in order to determine if the model that includes the variable(s) more accurately predicts the outcome than the model without that variable (or set of variables).[5] Given that both analyses are guided by the same goal, why is logistic regression needed for analyses with a dichotomous criterion? Why is linear regression inappropriate to use with a dichotomous criterion? There are several reasons. First, it violates the assumption of linearity. The linear regression line is the expected value of the criterion given the predictor(s) and is equal to the intercept (the value of the criterion when the predictor(s) are equal to zero) plus the product of the regression coefficient and some given value of the predictor plus some error term - this implies that it is possible for the expected value of the criterion given the value of the predictor to take on any value as the predictor(s) range from negative infinity to positive infinity; however, this is not the case with a dichotomous criterion.[6] The conditional mean of a dichotomous criterion must be greater than or equal to zero and less than or equal to one; thus, the distribution is not linear but sigmoid or S-shaped.[7] As the predictors approach negative infinity the criterion asymptotes at zero, and as the predictors approach positive infinity the criterion asymptotes at one. Linear regression disregards this information and it becomes possible for the criterion to take on probabilities less than zero and greater than one although such values are not theoretically permissible.[8] Furthermore, there is no straightforward interpretation of such values.

Second, conducting linear regression with a dichotomous criterion violates the assumption that the error term is homoscedastic.[9] Homoscedasticity is the assumption that variance in the criterion is constant at all levels of the predictor(s). This assumption will always be violated when one has a criterion that is distributed binomially. Consider the variance formula: e = PQ, wherein P is equal to the proportion of "1's" or "cases" and Q is equal to (1 - P), the proportion of "0's" or "noncases" in the distribution. Given that there are only two possible outcomes in a binomial distribution, one can determine the proportion of "noncases" from the proportion of "cases" and vice versa. Likewise, one can also determine the variance of the distribution from either the proportion of "cases" or "noncases". That is to say that the variance is not independent of the predictor - the error term is not homoscedastic, but heteroscedastic, meaning that the variance is not equal at all levels of the predictor. The variance is greatest when the proportion of cases equals .5: e = PQ = .5(1 - .5) = .5(.5) = .25. As the proportion of cases approaches the extremes, however, error approaches zero. For example, when the proportion of cases equals .99, there is almost zero error: e = PQ = .99(1 - .99) = .99(.01) = .0099. Therefore, error or variance in the criterion is not independent of the predictor variable(s).
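The dependence of the variance on the proportion of cases can be tabulated with a brief Python sketch. It simply evaluates PQ for a few proportions; the function name <code>bernoulli_variance</code> and the chosen proportions are illustrative assumptions.

```python
def bernoulli_variance(p):
    """Variance PQ of a dichotomous criterion with proportion of cases p."""
    return p * (1.0 - p)

# The variance peaks at P = .5 and shrinks toward zero at the extremes.
for p in (0.5, 0.75, 0.9, 0.99):
    print(f"P = {p:.2f}  PQ = {bernoulli_variance(p):.4f}")
```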

Third, conducting linear regression with a dichotomous variable violates the assumption that error is normally distributed because the criterion has only two values.[10] Given that a dichotomous criterion violates these assumptions of linear regression, conducting linear regression with a dichotomous criterion may lead to errors in inference and at the very least, interpretation of the outcome will not be straightforward.[11]

Given the shortcomings of the linear regression model for dealing with a dichotomous criterion, it is necessary to use some other analysis. Besides logistic regression, there is at least one additional alternative analysis for dealing with a dichotomous criterion - discriminant function analysis. Like logistic regression, discriminant function analysis is a technique in which a set of predictors is used to determine group membership. There are two problems with discriminant function analysis, however: first, like linear regression, discriminant function analysis may produce probabilities greater than one or less than zero, even though such probabilities are theoretically inadmissible. In addition, discriminant function analysis assumes that the predictor variables are normally distributed.[12] Logistic regression neither produces probabilities that lie below zero or above one, nor imposes restrictive normality assumptions on the predictors.

Logistic regression is a generalized linear model, specifically a type of binomial regression. Logistic regression serves to transform the limited range of a probability, restricted to the range from zero to one, into the full range from negative infinity to positive infinity, which makes the transformed value more suitable for fitting using a linear function. The effect of this transformation is to map the middle of the probability range (near 50%) more or less linearly, while stretching out the extremes (near 0% or 100%) exponentially. This is because in the middle of the probability range, one expects a relatively linear function - it is towards the extremes that the regression line begins to curve as it approaches asymptote; hence, the sigmoidal distribution (see Figure 1). In essence, when conducting logistic regression, one is transforming the probability of a case outcome into the odds of a case outcome and taking the natural logarithm of the odds to create the logit. The odds as a criterion provides an improvement over probability as the criterion as the odds has no fixed upper limit; however, the odds is still limited in that it has a fixed lower limit of zero and its values do not tend to be normally distributed or linearly related to the predictors. Hence, it is necessary to take the natural logarithm of the odds to remedy these limitations.

The natural logarithm of some value Y is the power to which the base, e, must be raised to produce Y. Euler's number or e is a mathematical constant equal to about 2.71828. A simple example of this relationship is the case Y = 2.71828 or e. When Y = 2.71828, ln(Y) = 1, because Y equals e in this instance, so e must only be raised to the power of 1 to equal itself. Given that the logit is not generally interpreted and that the inverse of the natural logarithm, the exponential function of the logit, is generally interpreted instead, it is also helpful to examine this function (denoted e^x). To illustrate the relationship between the exponential function and the natural logarithm, consider exponentiating the result of the natural logarithm above. There it was evident that the natural logarithm of 2.71828 was equal to 1. Here, if one exponentiates 1, the result is 2.71828; thus, the exponential function is the inverse of the natural logarithm. The logit can be thought of as a latent continuous variable that is fit to the predictors analogous to the manner in which a continuous criterion is fit to the predictors in linear regression analysis. After the criterion (the logit) is fit to the predictors the result is exponentiated, converting the unintuitive logit back into the easily interpretable odds. It is important to note that the probability, odds, and logit all provide the same information. A probability of .5 is equal to odds of 1 and a logit of 0 - all three values indicate that "case" and "noncase" outcomes are equally likely.
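The correspondence between probability, odds and logit can be checked with a small Python sketch. The function names are hypothetical and the probabilities are arbitrary illustrative values.

```python
import math

def odds_from_probability(p):
    """Odds: probability of a case divided by probability of a noncase."""
    return p / (1.0 - p)

def logit_from_probability(p):
    """Logit: natural logarithm of the odds."""
    return math.log(odds_from_probability(p))

def probability_from_logit(z):
    """Inverse of the logit: exponentiate to get odds, then convert back."""
    odds = math.exp(z)
    return odds / (1.0 + odds)

for p in (0.1, 0.5, 0.9):
    odds = odds_from_probability(p)
    z = logit_from_probability(p)
    print(f"p = {p:.1f}  odds = {odds:.3f}  logit = {z:+.3f}  "
          f"back to p = {probability_from_logit(z):.1f}")
```

The three scales carry the same information, so converting a probability to a logit and back returns the starting value.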

It is also important to note that, although the observed outcomes of the response variables are categorical — simple "yes" or "no" outcomes — logistic regression actually models a continuous variable (the probability of "yes"). This probability is a latent variable that is assumed to generate the observed yes/no outcomes. At its heart, this is conceptually similar to ordinary linear regression, which predicts the unobserved expected value of the outcome (e.g. the average income, height, etc.), which in turn generates the observed value of the outcome (which is likely to be somewhere near the average, but may differ by an "error" term). The difference is that for a simple normally distributed continuous variable, the average (expected) value and observed value are measured with the same units. Thus it is convenient to conceive of the observed value as simply the expected value plus some error term, and often to blur the difference between the two. For logistic regression, however, the expected value and observed value are different types of values (continuous vs. discrete), and visualizing the observed value as expected value plus error does not work. As a result, the distinction between expected and observed value must always be kept in mind.

Definition

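An explanation of logistic regression begins with an explanation of the logistic function, which, like probabilities, always takes on values between zero and one:

$$\pi(x) = \frac{e^{\beta_0 + \beta_1 x}}{e^{\beta_0 + \beta_1 x} + 1} = \frac{1}{1 + e^{-(\beta_0 + \beta_1 x)}},$$

and

$$g(x) = \ln\frac{\pi(x)}{1 - \pi(x)} = \beta_0 + \beta_1 x,$$

and

$$\frac{\pi(x)}{1 - \pi(x)} = e^{\beta_0 + \beta_1 x}.$$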

A graph of the function is shown in figure 1. The input is β₀ + β₁x and the output is π(x). The logistic function is useful because it can take as an input any value from negative infinity to positive infinity, whereas the output is confined to values between 0 and 1. In the above equations, g(x) refers to the logit function of some given predictor x, ln denotes the natural logarithm, π(x) is the probability of being a case, β₀ is the intercept from the linear regression equation (the value of the criterion when the predictor is equal to zero), β₁x is the regression coefficient multiplied by some value of the predictor, and base e denotes the exponential function. The first formula illustrates that the probability of being a case is equal to the exponential function of the linear regression equation divided by one plus that same quantity, that is, the odds divided by one plus the odds. This is important in that it shows that the input of the logistic regression equation (the linear regression equation) can vary from negative to positive infinity and yet the output will vary between zero and one. The second equation illustrates that the logit (i.e., log-odds or natural logarithm of the odds) is equivalent to the linear regression equation. Likewise, the third equation illustrates that the odds of being a case are equivalent to the exponential function of the linear regression equation. This illustrates how the logit serves as a link function between the probability and the linear regression equation. Given that the logit varies from negative infinity to positive infinity, it provides an adequate criterion upon which to conduct linear regression, and the logit is easily converted back into the odds.[14]
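As a rough illustration of how the logistic function maps any real-valued input to a probability, the following Python sketch evaluates it for a single predictor. The coefficient values are hypothetical and were chosen only so that the output is easy to read.

```python
import math

def logistic(t):
    """Logistic function 1 / (1 + e^(-t)), written to avoid overflow for large |t|."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e_t = math.exp(t)
    return e_t / (1.0 + e_t)

# Hypothetical intercept and regression coefficient, for illustration only.
b0, b1 = -3.0, 0.5

for x in (-100, 0, 2, 6, 100):
    t = b0 + b1 * x      # linear regression equation (the logit)
    pi = logistic(t)     # probability of being a case
    print(f"x={x:5d}  logit={t:7.2f}  probability={pi:.6f}")
```

However extreme the predictor value, the computed probability never leaves the interval from 0 to 1 (in floating point it may round to exactly 0 or 1), and taking the log-odds of the output recovers the linear regression equation.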

This is where it becomes sensible to use reference cell coding ("0" = noncase, "1" = case). With this coding scheme the odds ratio is equal to the exponential function of the regression coefficient.[15]

Therefore, when one uses a reference coding scheme, the exponentiation of the regression coefficient is the odds ratio and no further calculations are necessary.
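A short numerical check makes this concrete. The sketch below assumes a single predictor coded 0 (reference) and 1, with hypothetical coefficient values; because the odds are the exponential of the linear regression equation, dividing the odds at 1 by the odds at 0 cancels the intercept and leaves e raised to the regression coefficient.

```python
import math

def odds_of_case(x, b0, b1):
    """Odds of being a case: the exponential of the linear regression equation."""
    return math.exp(b0 + b1 * x)

# Hypothetical coefficients for a predictor coded 0 (reference) or 1.
b0, b1 = -1.2, 0.8

odds_reference = odds_of_case(0, b0, b1)
odds_target = odds_of_case(1, b0, b1)
odds_ratio = odds_target / odds_reference

# The two printed values agree up to floating-point rounding.
print(odds_ratio, math.exp(b1))
```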

Model Fitting

Maximum Likelihood Estimation

In linear regression one uses an analytical solution to estimate regression coefficients by finding those values that minimize the sum of squared residuals.[17] In other words, there is a series of computations that one can make to derive a solution directly. In logistic regression there is no set of equations from which one can derive a solution directly; a closed-form analytical solution does not exist. Instead, logistic regression uses the maximum likelihood procedure to find the coefficients under which the observed criterion values are most likely given the predictors.[18] Unlike analytical solutions, wherein it is possible to solve directly for the coefficients, the maximum likelihood solution is an iterative process that begins with a tentative solution, revises it slightly to see if it can be improved, and repeats this process until the improvement is minute, at which point the model is said to have converged.[19] What this means is that the maximum likelihood procedure has found the coefficients that maximize the likelihood of the observed data.
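The iterative procedure can be sketched for a single predictor as follows. The text does not say which algorithm is used, so this example assumes Newton-Raphson updates, one common choice; the data, the starting values of zero, the tolerance, and the iteration limit are all hypothetical.

```python
import math

def log_likelihood(b0, b1, xs, ys):
    """Log-likelihood of binary outcomes ys under the logistic model."""
    total = 0.0
    for x, y in zip(xs, ys):
        t = b0 + b1 * x
        total += y * t - math.log1p(math.exp(t))
    return total

def fit_logistic(xs, ys, tol=1e-10, max_iter=25):
    """Newton-Raphson estimation of intercept and slope (assumed algorithm)."""
    b0, b1 = 0.0, 0.0                      # tentative starting solution
    ll = log_likelihood(b0, b1, xs, ys)
    for iteration in range(1, max_iter + 1):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, y in zip(xs, ys):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            w = p * (1.0 - p)
            g0 += y - p                    # gradient with respect to the intercept
            g1 += (y - p) * x              # gradient with respect to the slope
            h00 += w                       # entries of the information matrix
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        b0 += (h11 * g0 - h01 * g1) / det  # revise the solution
        b1 += (h00 * g1 - h01 * g0) / det
        new_ll = log_likelihood(b0, b1, xs, ys)
        if abs(new_ll - ll) < tol:         # improvement is minute: converged
            return b0, b1, new_ll, iteration, True
        ll = new_ll
    return b0, b1, ll, max_iter, False     # did not converge

# Hypothetical data in which cases and noncases overlap on the predictor.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [0, 0, 1, 0, 1, 0, 1, 1]
b0, b1, final_ll, n_iter, converged = fit_logistic(xs, ys)
print(b0, b1, final_ll, n_iter, converged)
```

The returned flag distinguishes a converged solution from one that merely ran out of iterations, which is the situation in which the coefficients should not be trusted.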

In some instances the model may not reach convergence. When a model does not converge, the coefficients are not reliable, as the model never reached a final solution. Lack of convergence may result from a number of problems: having a large ratio of predictors to cases, multicollinearity, sparseness, or complete separation. Although not a precise number, as a general rule of thumb, logistic regression models require a minimum of 10 cases per variable.[20] Having a large ratio of variables to cases results in an overly conservative Wald statistic (discussed below) and can lead to nonconvergence. Multicollinearity refers to unacceptably high correlations between predictors. As multicollinearity increases, coefficients remain unbiased but standard errors increase and the likelihood of model convergence decreases.[21] To detect multicollinearity amongst the predictors, one can conduct a linear regression analysis with the predictors of interest for the sole purpose of examining the tolerance statistic,[22] which is used to assess whether multicollinearity is unacceptably high. Sparseness in the data refers to having a large proportion of empty cells (cells with zero counts). Zero cell counts are particularly problematic with categorical predictors. With continuous predictors, the model can infer values for the zero cell counts, but this is not the case with categorical predictors. The model will not converge with zero cell counts for categorical predictors because the natural logarithm of zero is undefined, so a final solution cannot be reached. To remedy this problem, researchers may collapse categories in a theoretically meaningful way or may consider adding a constant to all cells.[23] Another numerical problem that may lead to a lack of convergence is complete separation, which refers to the instance in which the predictors perfectly predict the criterion, so that all cases are accurately classified. In such instances, one should reexamine the data, as there is likely some kind of error.[24]
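The zero-cell problem can be seen directly in a two-by-two table of counts. The sketch below uses a hypothetical table and assumes a constant of 0.5 for the adjustment; the text says only that a constant may be added to all cells, so the specific value is an illustrative choice.

```python
import math

def log_odds_ratio(a, b, c, d):
    """Log odds ratio for a 2x2 table of counts.

    a, b: cases and noncases in the first predictor category
    c, d: cases and noncases in the second predictor category
    """
    return math.log((a * d) / (b * c))

# Hypothetical table with an empty cell: no noncases in the first category.
counts = (12, 0, 5, 9)

try:
    raw = log_odds_ratio(*counts)
except (ZeroDivisionError, ValueError):
    raw = None  # the estimate is undefined when a cell count is zero

# Adding a constant (0.5 here, an assumed choice) to every cell makes it finite.
adjusted = log_odds_ratio(*(n + 0.5 for n in counts))
print(raw, adjusted)
```

With the empty cell the calculation fails outright, whereas the adjusted table yields a large but finite log odds ratio.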

Deviance and Likelihood Ratio Tests

In linear regression analysis, one is concerned with partitioning variance via the sum of squares calculations: variance in the criterion is essentially divided into variance accounted for by the predictors and residual variance. In logistic regression analysis, deviance is used in lieu of sum of squares calculations.[25] Deviance is analogous to the sum of squares calculations in linear regression[26] and is a measure of the lack of fit to the data in a logistic regression model.[27] Deviance is calculated by comparing a given model with the saturated model, a model with a theoretically perfect fit.[28] This computation is called the likelihood ratio test:

$$D = -2\ln\frac{\text{likelihood of the fitted model}}{\text{likelihood of the saturated model}}.$$

In the above equation D represents the deviance and ln represents the natural logarithm. The likelihood ratio (the ratio of the likelihood of the fitted model to that of the saturated model) lies between zero and one, so its natural logarithm is negative; multiplying the logarithm by negative two produces a positive value with an approximate chi-square distribution.[30] Smaller values indicate better fit, as the fitted model deviates less from the saturated model. When assessed upon a chi-square distribution, nonsignificant chi-square values indicate very little unexplained variance and thus good model fit. Conversely, a significant chi-square value indicates that a significant amount of the variance is unexplained. Two measures of deviance are particularly important in logistic regression: null deviance and model deviance. The null deviance represents the difference between a model with only the intercept and no predictors and the saturated model. The model deviance represents the difference between a model with at least one predictor and the saturated model.[31] In this respect, the null model provides a baseline upon which to compare predictor models. Therefore, to assess the contribution of a predictor or set of predictors, one can subtract the model deviance from the null deviance and assess the difference on a chi-square distribution with degrees of freedom equal to the number of predictors added (one degree of freedom for a single predictor).[32] If the model deviance is significantly smaller than the null deviance, then one can conclude that the predictor or set of predictors significantly improved model fit. This is analogous to the F-test used in linear regression analysis to assess the significance of prediction.[33]
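The comparison of null and model deviance can be worked through by hand in the simplest case. The sketch below assumes hypothetical counts and a single binary predictor, for which the fitted probabilities are simply the proportions of cases in each group, so no iterative fitting is needed. It also relies on the fact that, for individual yes/no outcomes, the saturated model has a log-likelihood of zero, so each deviance is negative two times the model's log-likelihood.

```python
import math

def bernoulli_log_likelihood(cases, total, p):
    """Log-likelihood of `cases` cases among `total` binary outcomes,
    each with probability p of being a case."""
    return cases * math.log(p) + (total - cases) * math.log(1.0 - p)

# Hypothetical data: (number of cases, group size) for predictor = 0 and predictor = 1.
groups = [(5, 20), (14, 20)]
n_cases = sum(c for c, _ in groups)
n_total = sum(n for _, n in groups)

# Null model: intercept only, one probability for every observation.
ll_null = bernoulli_log_likelihood(n_cases, n_total, n_cases / n_total)
# Predictor model: fitted probabilities equal the group proportions.
ll_model = sum(bernoulli_log_likelihood(c, n, c / n) for c, n in groups)

# Saturated log-likelihood is 0 for individual binary outcomes, so D = -2 * log-likelihood.
null_deviance = -2.0 * ll_null
model_deviance = -2.0 * ll_model

chi_square = null_deviance - model_deviance
# Upper-tail probability of a chi-square distribution with 1 degree of freedom.
p_value = math.erfc(math.sqrt(chi_square / 2.0))
print(null_deviance, model_deviance, chi_square, p_value)
```

The `erfc` expression is valid only for one degree of freedom; with more predictors a general chi-square distribution function would be needed.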

Pseudo-R²s

In linear regression the squared multiple correlation, R², is used to assess goodness of fit, as it represents the proportion of variance in the criterion that is explained by the predictors.[34] In logistic regression analysis, there is no agreed upon analogous measure, but there are several competing measures, each with limitations.[35] Three of the most commonly used indices are examined on this page, beginning with the likelihood ratio R², denoted R²L:

$$R^2_L = \frac{D_{\text{null}} - D_{\text{model}}}{D_{\text{null}}}.$$

This is the most analogous index to the squared multiple correlation in linear regression.[37] It represents the proportional reduction in the deviance, wherein the deviance is treated as a measure of variation analogous but not identical to the variance in linear regression analysis.[38] One limitation of the likelihood ratio R² is that it is not monotonically related to the odds ratio,[39] meaning that it does not necessarily increase as the odds ratio increases and does not necessarily decrease as the odds ratio decreases.

The Cox and Snell R² is an alternative index of goodness of fit related to the R² value from linear regression. The Cox and Snell index is problematic because its maximum value is less than one: it reaches at most .75, which occurs when the variance of the criterion is at its maximum (.25). The Nagelkerke R² provides a correction to the Cox and Snell R² so that the maximum value is equal to one. Nevertheless, the Cox and Snell and likelihood ratio R²s show greater agreement with each other than either does with the Nagelkerke R².[40] Of course, this might not be the case for values exceeding .75, as the Cox and Snell index is capped at this value. The likelihood ratio R² is often preferred to the alternatives as it is most analogous to R² in linear regression, is independent of the base rate (both the Cox and Snell and the Nagelkerke R²s increase as the proportion of cases increases from 0 to .5), and varies between 0 and 1.
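The three indices can be computed from the null deviance, the model deviance, and the sample size. The sketch below uses the standard formulas for the Cox and Snell and Nagelkerke indices, which are not spelled out in the text, and assumes that each deviance equals negative two times the model's log-likelihood (as is the case for individual binary outcomes). The input values are hypothetical.

```python
import math

def pseudo_r_squared(null_deviance, model_deviance, n):
    """Likelihood ratio, Cox and Snell, and Nagelkerke indices from deviances."""
    reduction = null_deviance - model_deviance
    r2_likelihood_ratio = reduction / null_deviance
    r2_cox_snell = 1.0 - math.exp(-reduction / n)
    r2_cox_snell_max = 1.0 - math.exp(-null_deviance / n)
    r2_nagelkerke = r2_cox_snell / r2_cox_snell_max  # rescaled so the maximum is 1
    return r2_likelihood_ratio, r2_cox_snell, r2_nagelkerke

def cox_snell_ceiling(base_rate):
    """Largest attainable Cox and Snell value for a given proportion of cases."""
    per_observation_ll = (base_rate * math.log(base_rate)
                          + (1.0 - base_rate) * math.log(1.0 - base_rate))
    return 1.0 - math.exp(2.0 * per_observation_ll)

# Hypothetical deviances and sample size.
r2_l, r2_cs, r2_n = pseudo_r_squared(55.35, 46.93, 40)
print(r2_l, r2_cs, r2_n)
print(cox_snell_ceiling(0.5))  # the ceiling when half of the observations are cases
```

Evaluating `cox_snell_ceiling` at a base rate of .5 reproduces the cap of .75 mentioned above, and lower or higher base rates give a smaller ceiling.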

A word of caution is in order when interpreting pseudo-R² statistics. These indices of fit are referred to as pseudo-R² because they do not represent the proportionate reduction in error as the R² in linear regression does.[41] Linear regression assumes homoscedasticity, that the error variance is the same at all values of the predicted score. Logistic regression will always be heteroscedastic: the error variances differ for each value of the predicted score. For each value of the predicted score there would be a different value of the proportionate reduction in error. Therefore, it is inappropriate to think of R² as a proportionate reduction in error in a universal sense in logistic regression.[42]

Coefficients

After fitting the model, it is likely that researchers will want to examine the contribution of individual predictors. To do so, they will want to examine the regression coefficients. In linear regression, the regression coefficients represent the change in the criterion for each unit change in the predictor.[43] In logistic regression, however, the regression coefficients represent the change in the logit for each unit change in the predictor. Given that the logit is not intuitive, researchers are likely to focus instead on the exponential function of the regression coefficient, the odds ratio (see definition), which gives the multiplicative change in the odds for each unit change in the predictor. In linear regression, the significance of a regression coefficient is assessed by computing a t-test. In logistic regression, there are a couple of different tests designed to assess the significance of an individual predictor, most notably the likelihood ratio test and the Wald statistic.

Likelihood Ratio Test

The likelihood ratio test discussed above to assess model fit is also the recommended procedure to assess the contribution of individual predictors to a given model.[44][45][46] In the case of a single predictor model, one simply compares the deviance of the predictor model with that of the null model on a chi-square distribution with a single degree of freedom. If the predictor model has a significantly smaller deviance, then one can conclude that the predictor significantly predicts the criterion. Given that some common statistical packages (e.g., SAS, SPSS) do not provide likelihood ratio test statistics, it can be more difficult to assess the contribution of individual predictors in the multiple logistic regression case. To assess the contribution of individual predictors, one can enter the predictors hierarchically, comparing each new model with the previous one to determine the contribution of each predictor.[47]

Wald Statistic

Alternatively, when assessing the contribution of individual predictors in a given model, one may examine the significance of the Wald statistic. The Wald statistic, analogous to the t-test in linear regression, is used to assess the significance of coefficients. The Wald statistic is the ratio of the square of the regression coefficient to the square of the standard error of the coefficient and is asymptotically distributed as a chi-square distribution.[48]
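As a small worked example, the Wald statistic and its p-value can be computed from a coefficient and its standard error. The numbers below are hypothetical, and the p-value uses the fact that a chi-square variable with one degree of freedom is the square of a standard normal variable.

```python
import math

def wald_test(coefficient, standard_error):
    """Wald statistic (squared coefficient over squared standard error) and its
    p-value on a chi-square distribution with 1 degree of freedom."""
    wald = (coefficient / standard_error) ** 2
    p_value = math.erfc(math.sqrt(wald / 2.0))
    return wald, p_value

# Hypothetical coefficient and standard error.
wald, p_value = wald_test(0.8, 0.3)
print(wald, p_value)
```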