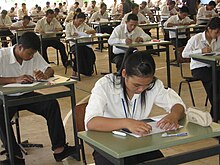

Exam

An examination (exam or evaluation) or test is an educational assessment intended to measure a test-taker's knowledge, skill, aptitude, physical fitness, or classification in many other topics (e.g., beliefs).[1] A test may be administered verbally, on paper, on a computer, or in a predetermined area that requires a test taker to demonstrate or perform a set of skills.

Tests vary in style, rigor and requirements. There is no general consensus or invariable standard for test formats and difficulty. Often, the format and difficulty of a test depend upon the educational philosophy of the instructor, subject matter, class size, policy of the educational institution, and requirements of accreditation or governing bodies.

A test may be administered formally or informally. An example of an informal test is a reading test administered by a parent to a child. A formal test might be a final examination administered by a teacher in a classroom or an IQ test administered by a psychologist in a clinic. Formal testing often results in a grade or a test score.[2] A test score may be interpreted with regard to a norm or criterion, or occasionally both. The norm may be established independently, or by statistical analysis of a large number of participants.

A test may be developed and administered by an instructor, a clinician, a governing body, or a test provider. In some instances, the developer of the test may not be directly responsible for its administration. For example, in the United States, Educational Testing Service (ETS), a nonprofit educational testing and assessment organization, develops standardized tests such as the SAT but may not directly be involved in the administration or proctoring of these tests.

History

Oral and informal examinations

Informal, unofficial, and non-standardized tests and testing systems have existed throughout history. For example, tests of skill such as archery contests have existed in China since the Zhou dynasty (or, more mythologically, Yao).[3] Oral exams were administered in various parts of the world, including ancient China and Europe. A precursor to the later Chinese imperial examinations was in place from the Han dynasty onward, and it was during this period that the Confucian character of the examinations was determined. However, these examinations did not offer an official avenue to government appointment; most posts were filled through recommendations based on qualities such as social status, morals, and ability.

China

Standardized written examinations were first implemented in China. They were commonly known as the imperial examinations (keju).

The bureaucratic imperial examinations as a concept have their origins in the year 605 during the short-lived Sui dynasty. Its successor, the Tang dynasty, implemented imperial examinations on a relatively small scale until the examination system was extensively expanded during the reign of Wu Zetian.[4] The expanded system included a military exam that tested physical ability, but the military exam never had a significant impact on the Chinese officer corps, and military degrees were seen as inferior to their civil counterparts. The exact nature of Wu's influence on the examination system is still a matter of scholarly debate.

During the Song dynasty the emperors expanded both the examinations and the government school system, in part to counter the influence of the hereditary nobility, increasing the number of degree holders to more than four or five times the Tang figure. From the Song dynasty onward, the examinations played the primary role in selecting scholar-officials, who formed the literati elite of society. However, the examinations coexisted with other forms of recruitment, such as direct appointments for the ruling family, nominations, quotas, clerical promotions, sale of official titles, and special procedures for eunuchs. The regular higher-level degree examination cycle was decreed in 1067 to be three years, but this triennial cycle existed only in nominal terms. In practice, both before and after this decree, the examinations were implemented irregularly for significant periods of time, so calculated statistical averages for the number of degrees conferred annually should be understood in this context. The jinshi exams were not a yearly event and should not be treated as one; annual average figures are a necessary artifact of quantitative analysis.[5] The operations of the examination system were part of the imperial record-keeping system, and the date of receiving the jinshi degree is often a key biographical datum: sometimes the date of achieving jinshi is the only firm date known for even some of the most historically prominent persons in Chinese history.

The examinations were briefly interrupted at the beginning of the Mongol Yuan dynasty in the 13th century, but were later reinstated with regional quotas that favored the Mongols and disadvantaged Southern Chinese. During the Ming and Qing dynasties, the system contributed to the narrow and focused nature of intellectual life and enhanced the autocratic power of the emperor. The system continued with some modifications until its abolition in 1905, during the last years of the Qing dynasty. The modern examination system for selecting civil servants also evolved indirectly from the imperial one.[6]

Spread

Japan

Japan implemented the examination system for 200 years during the Heian period (794–1185). As in the Chinese examinations, the curriculum revolved around the Confucian canon. However, unlike in China, it was only ever applied to the minor nobility and so gradually faded away under the hereditary system during the Samurai era.[7]

Korea

The examination system was established in Korea in 958 under the reign of Gwangjong of Goryeo. Any free man (that is, any man not belonging to the nobi, or slave, class) was able to take the examinations. By the Joseon period, high offices were closed to aristocrats who had not passed the exams. The examination system continued until 1894, when it was abolished by the Gabo Reform. As in China, the content of the examinations focused on the Confucian canon and ensured a loyal scholar-bureaucrat class that upheld the throne.[8]

Vietnam

The Confucian examination system in Vietnam was established in 1075 under the Lý dynasty Emperor Lý Nhân Tông and lasted until the Nguyễn dynasty Emperor Khải Định (1919). There were only three levels of examinations in Vietnam: interprovincial, pre-court, and court.[8]

West

The imperial examination system was known to Europeans as early as 1570. It received great attention from the Jesuit Matteo Ricci (1552–1610), who viewed it and its Confucian appeal to rationalism favorably in comparison to religious reliance on "apocalypse." Knowledge of Confucianism and the examination system was disseminated broadly in Europe following the Latin translation of Ricci's journal in 1614. During the 18th century, the imperial examinations were often discussed in conjunction with Confucianism, which attracted great attention from contemporary European thinkers such as Gottfried Wilhelm Leibniz, Voltaire, Montesquieu, Baron d'Holbach, Johann Wolfgang von Goethe, and Friedrich Schiller.[9] In France and Britain, Confucian ideology was used in attacking the privilege of the elite.[10] Figures such as Voltaire claimed that the Chinese had "perfected moral science," and François Quesnay advocated an economic and political system modeled after that of the Chinese. According to Ferdinand Brunetière (1849–1906), followers of Physiocracy such as François Quesnay, whose theory of free trade was based on Chinese classical theory, were sinophiles bent on introducing "l'esprit chinois" to France. He also acknowledged that French education was in fact based on Chinese literary examinations, which had been popularized in France by philosophers, especially Voltaire. In the 18th century, Western observers admired the Chinese bureaucratic system as preferable to European governments for its apparent meritocracy.[11][12] However, those who admired China, such as Christian Wolff, were sometimes persecuted. In 1721 Wolff gave a lecture at the University of Halle praising Confucianism, for which he was accused of atheism and forced to give up his position at the university.[13]

The earliest evidence of examinations in Europe dates to 1215 or 1219 in Bologna. These were chiefly oral, taking the form of question and answer, disputation, determination, defense, or public lecture. The candidate gave a public lecture on two prepared passages assigned to him from civil or canon law, and then doctors asked him questions or raised objections to his answers. Evidence of written examinations does not appear until 1702 at Trinity College, Cambridge. According to Sir Michael Sadler, Europe may have had written examinations since 1518, but he admits the "evidence is not very clear." In Prussia, medical examinations began in 1725. The Mathematical Tripos, founded in 1747, is commonly believed to be the first honor examination, but James Bass Mullinger considered "the candidates not having really undergone any examination whatsoever" because the qualification for a degree was merely four years of residence. France adopted the examination system in 1791 as a result of the French Revolution, but it collapsed after only ten years. Germany implemented the examination system around 1800.[12]

Englishmen in the 18th century such as Eustace Budgell recommended imitating the Chinese examination system, but the first British person to recommend competitive examinations to qualify for employment was Adam Smith in 1776. In 1838, the Congregational church missionary Walter Henry Medhurst considered the Chinese exams to be "worthy of imitating."[12] In 1806, the British established a Civil Service College near London for training the East India Company's administrators in India. This was based on the recommendations of British East India Company officials who had served in China and had seen the imperial examinations. In 1829, the company introduced civil service examinations in India on a limited basis.[14] This established the principle of a qualification process for civil servants in England.[13] In 1847 and 1856, Thomas Taylor Meadows strongly recommended the adoption of the Chinese principle of competitive examinations in Great Britain in his Desultory Notes on the Government and People of China. According to Meadows, "the long duration of the Chinese empire is solely and altogether owing to the good government which consists in the advancement of men of talent and merit only."[15] Both Thomas Babington Macaulay, who was instrumental in passing the Saint Helena Act 1833, and Stafford Northcote, 1st Earl of Iddesleigh, who prepared the Northcote–Trevelyan Report that catalyzed the British civil service, were familiar with Chinese history and institutions. The Northcote–Trevelyan Report of 1854 made four principal recommendations: that recruitment should be on the basis of merit determined through standardized written examination, that candidates should have a solid general education to enable inter-departmental transfers, that recruits should be graded into a hierarchy, and that promotion should be through achievement, rather than 'preferment, patronage, or purchase'.[16]

When the report was brought up in parliament in 1853, Lord Monteagle argued against the implementation of open examinations because it was a Chinese system and China was not an "enlightened country." Lord Stanley called the examinations the "Chinese Principle." The Earl of Granville did not deny this but argued in favor of the examination system, considering that the minority Manchus had been able to rule China with it for over 200 years. In 1854, Edwin Chadwick reported that some noblemen did not agree with the measures introduced because they were Chinese. The examination system was finally implemented in the British Indian Civil Service in 1855, prior to which admission into the civil service was purely a matter of patronage, and in England in 1870. Even as late as ten years after the competitive examination plan was passed, people still attacked it as an "adopted Chinese culture." Alexander Baillie-Cochrane, 1st Baron Lamington insisted that the English "did not know that it was necessary for them to take lessons from the Celestial Empire." In 1875, Archibald Sayce voiced concern over the prevalence of competitive examinations, which he described as "the invasion of this new Chinese culture."[12]

After Great Britain's successful implementation of systematic, open, and competitive examinations in India in the 19th century, similar systems were instituted in the United Kingdom itself and in other Western nations.[17] The development of the French and American civil services was likewise influenced by the Chinese system. When Thomas Jenckes made a Report from the Joint Select Committee on Retrenchment in 1868, it contained a chapter on the civil service in China. In 1870, William Spear wrote a book called The Oldest and the Newest Empire-China and the United States, in which he urged the United States government to adopt the Chinese examination system. As in Britain, many American elites scorned the plan to implement competitive examinations, which they considered foreign, Chinese, and "un-American." As a result, the civil service reform introduced into the House of Representatives in 1868 was not passed until 1883. The Civil Service Commission tried to combat such sentiments in its report:[18]

...with no intention of commending either the religion or the imperialism of China, we could not see why the fact that the most enlightened and enduring government of the Eastern world had acquired an examination as to the merits of candidates for office, should any more deprive the American people of that advantage, if it might be an advantage, than the facts that Confucius had taught political morality, and the people of China had read books, used the compass, gunpowder, and the multiplication table, during centuries when this continent was a wilderness, should deprive our people of those conveniences.[12]

— Civil Service Commission

Modern development

Standardized testing began to influence the method of examination in British universities from the 1850s, where oral exams had been common since the Middle Ages. In the US, the transition happened under the influence of the educational reformer Horace Mann. The shift helped standardize an expansion of the curricula into the sciences and humanities, creating a rationalized method for the evaluation of teachers and institutions and creating a basis for the streaming of students according to ability.[19]

Both World War I and World War II demonstrated the necessity of standardized testing and the benefits associated with these tests. Tests were used to determine the mental aptitude of recruits to the military. The US Army used the Stanford–Binet Intelligence Scale to test the IQ of the soldiers.[20] After the War, industry began using tests to evaluate applicants for various jobs based on performance. In 1952, the first Advanced Placement (AP) test was administered to begin closing the gap between high schools and colleges.[21]

Contemporary tests

Education

Tests are used throughout most educational systems. Tests may range from brief, informal questions chosen by the teacher to major tests that students and teachers spend months preparing for.

Some countries, such as the United Kingdom and France, require all their secondary school students to take a standardized test on individual subjects, such as the General Certificate of Secondary Education (GCSE) in England and the Baccalauréat in France, as a requirement for graduation.[22] These tests are used primarily to assess a student's proficiency in specific subjects such as mathematics, science, or literature. In contrast, high school students in other countries, such as the United States, may not be required to take a standardized test to graduate. Moreover, students in these countries usually take standardized tests only to apply for a place in a university program and are typically given the option of taking different standardized tests, such as the ACT or SAT, which are used primarily to measure a student's reasoning skills.[23][24] High school students in the United States may also take Advanced Placement tests on specific subjects to earn university-level credit. Depending on the policies of the test maker or country, standardized tests may be administered in a large hall, classroom, or testing center. A proctor or invigilator may also be present during the testing period to provide instructions, answer questions, or prevent cheating.

Grades or test scores from standardized tests may also be used by universities to determine whether an applicant should be admitted into one of their academic or professional programs. For example, universities in the United Kingdom admit applicants into their undergraduate programs based primarily or solely on an applicant's grades on pre-university qualifications such as the GCE A-levels or Cambridge Pre-U.[25][26] In contrast, universities in the United States use an applicant's score on the SAT or ACT as just one of many admission criteria. The other criteria in this case may include the applicant's high school grades, extracurricular activities, personal statement, and letters of recommendation.[27] Once admitted, undergraduate students in the United Kingdom or United States may be required by their respective programs to take a comprehensive examination as a requirement for passing their courses or for graduating.

Standardized tests are sometimes used by certain countries to manage the quality of their educational institutions. For example, the No Child Left Behind Act in the United States requires individual states to develop assessments for students in certain grades. In practice, these assessments typically appear in the form of standardized tests. Test scores of students in specific grades of an educational institution are then used to determine the status of that educational institution, i.e., whether it should be allowed to continue to operate in the same way or to receive funding.

Finally, standardized tests are sometimes used to compare proficiencies of students from different institutions or countries. For example, the Organisation for Economic Co-operation and Development (OECD) uses Programme for International Student Assessment (PISA) to evaluate certain skills and knowledge of students from different participating countries.[28]

Licensing and certification

Standardized tests are sometimes used by certain governing bodies to determine whether a test taker is allowed to practice a profession, to use a specific job title, or to claim competency in a specific set of skills. For example, a test taker who intends to become a lawyer is usually required by a governing body such as a governmental bar licensing agency to pass a bar exam.

Immigration and naturalization

Standardized tests are also used in certain countries to regulate immigration. For example, intended immigrants to Australia are legally required to pass a citizenship test as part of that country's naturalization process.[29]

Language testing in naturalization process

When language testing in the naturalization process is analyzed, its ideology can be examined from two distinct but closely related points of view. One concerns the construction and deconstruction of the constitutive elements that make up a nation's identity, while the other takes a narrower view of the notion of a specific language and of the ideologies that may serve a specific purpose.[30]

Intelligence quotient

Competitions

Tests are sometimes used as a tool to select participants who have the potential to succeed in a competition such as a sporting event. For example, skaters who wish to participate in figure skating competitions in the United States must pass official U.S. Figure Skating tests just to qualify.[31]

Group memberships

Tests are sometimes used by a group to select certain types of individuals to join the group. For example, Mensa International is a high-IQ society that requires individuals to score at the 98th percentile or higher on a standardized, supervised IQ test.[32]

Types

Assessment types include:[33][34][35]

- Formative assessment

- Formative assessments are informal and formal tests taken during the learning process. Their results are used to modify later learning activities in order to improve student achievement. They identify strengths and weaknesses and help target areas that need work. The goal of formative assessment is to monitor student learning to provide ongoing feedback that can be used by instructors to improve their teaching and by students to improve their learning.[citation needed]

- Summative assessment

- Summative assessments evaluate competence at the end of an instructional unit, with the goal of determining whether the candidate has assimilated the knowledge or skills to the required standard. Summative assessments may cover a few days' instruction, an entire term's work in cases such as final exams, or even multiple years' study, in the case of high school exit exams, GCE Advanced Level examinations, or professional licensing tests such as the United States Medical Licensing Examination.

- Norm-referenced test

- Norm-referenced tests compare a student's performance against a national or other "norm" group. Only a certain percentage of test takers will receive the best and worst scores. Norm-referencing is usually called grading on a curve when the comparison group is students in the same classroom. Norm-referenced tests report whether test takers performed better or worse than a hypothetical average student, which is determined by comparing scores against the performance results of a statistically selected group of test takers, typically of the same age or grade level, who have already taken the exam.[36]

- Criterion-referenced test

- Criterion-referenced tests are designed to measure student performance against a fixed set of criteria or learning standards. It is possible for all test takers to pass, just as it is possible for all test takers to fail. An individual's scores can be used to focus on improving the skills in which the test taker's comprehension was lacking.[36]

- Performance-based assessments

- Performance-based assessments require students to solve real-world problems or produce something with real-world application. For example, the student can demonstrate baking skills by baking a cake, and having the outcome judged for appearance, flavor, and texture.

- Authentic assessment

- An authentic assessment is the measurement of accomplishments within a realistic, practical context that is relevant outside of the school setting.[37] For example, an authentic assessment of arithmetic skills is figuring out how much the family's groceries will cost this week. This provides as much information about the students' addition skills as a test question that asks what the sum of various numbers is.

- Standardized test

- Standardized tests are all tests that are administered and scored in a consistent manner, regardless of whether it is a quick quiz created by the local teacher or a heavily researched test given to millions of people.[38] Standardized tests are often used in education, professional certification, psychology (e.g., MMPI), the military, and many other fields.

- Non-standardized test

- Non-standardized tests are flexible in scope and format, and variable in difficulty. For example, a teacher may go around the classroom and ask each student a different question. Some questions will inevitably be harder than others, and the teacher may be stricter with the answers from better students. A non-standardized test may be used to determine the proficiency level of students, to motivate students to study, to provide feedback to students, and to modify the curriculum to make it more appropriate for either low- or high-skill students.

- High-stakes test

- High-stakes tests are tests with important consequences for the individual test taker, such as getting a driver's license. A high-stakes test does not need to be a high-stress test, if the test taker is confident of passing.[citation needed]

- Competitive examinations

- Competitive exams are norm-referenced, high-stakes tests in which candidates are ranked according to their grades and/or percentile, and the top-ranked candidates are then selected. If the examination is open for n positions, then the first n candidates in the ranking pass and the others are rejected. They are used as entrance examinations for university and college admissions, such as the Joint Entrance Examination, or for admission to secondary schools. Other types include civil service examinations, which are required for positions in the public sector, the U.S. Foreign Service Exam, and the United Nations Competitive Examination. Competitive examinations are considered an egalitarian way to select worthy applicants without risking influence peddling, bias, or other concerns.

A single test can have multiple qualities. For example, the bar exam for aspiring lawyers may be a norm-referenced, standardized, summative assessment. This means that only the test takers with higher scores will pass, that all of them took the same test under the same circumstances and were graded with the same scoring standards, and that the test is meant to determine whether the law school graduates have learned enough to practice their profession.
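The norm-referenced, criterion-referenced, and competitive rules described above can be contrasted in a short sketch. The following Python is illustrative only, assuming a hypothetical set of norm-group scores, a hypothetical cut score of 70, and a hypothetical candidate pool; the function names `percentile_rank`, `criterion_pass`, and `competitive_select` are invented for this example. Percentile rank is computed here as the percentage of the norm group scoring strictly below the candidate, which is only one of several conventions in use.

```python
from bisect import bisect_left


def percentile_rank(score: float, norm_group: list[float]) -> float:
    """Norm-referenced view: percentage of the norm group scoring strictly below `score`.

    The 'strictly below' convention is an assumption; other definitions
    also count half of any tied scores.
    """
    ranked = sorted(norm_group)
    below = bisect_left(ranked, score)  # number of norm scores < score
    return 100.0 * below / len(ranked)


def criterion_pass(score: float, cut_score: float) -> bool:
    """Criterion-referenced view: pass if the fixed standard is met,
    regardless of how anyone else performed."""
    return score >= cut_score


def competitive_select(scores: dict[str, float], n: int) -> list[str]:
    """Competitive view: rank every candidate and keep only the top n.

    Ties are broken by input order (sorted() is stable), a simplification;
    real examinations publish their own tie-break rules.
    """
    ranked = sorted(scores, key=lambda name: scores[name], reverse=True)
    return ranked[:n]


if __name__ == "__main__":
    norm_group = [52, 61, 64, 70, 75, 78, 81, 85, 90, 94]  # hypothetical data
    print(percentile_rank(80, norm_group))  # 60.0
    print(criterion_pass(80, cut_score=70))  # True
    candidates = {"A": 88, "B": 72, "C": 95, "D": 60}  # hypothetical pool
    print(competitive_select(candidates, n=2))  # ['C', 'A']
```

Under the criterion rule every candidate at or above the cut score passes, so the number of passes can range from none to everyone; under the competitive rule exactly n candidates are selected however well or badly the pool performs.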

Assessment formats

Written tests

Written tests are tests that are administered on paper or on a computer (as an eExam). A test taker who takes a written test could respond to specific test items by writing or typing within a given space of the test or on a separate form or document.

In some tests where knowledge of many constants or technical terms is required to answer questions effectively, such as chemistry or biology tests, the test developer may allow every test taker to bring a cheat sheet.

A test developer's choice of which style or format to use when developing a written test is usually arbitrary, given that there is no single invariant standard for testing. Even so, certain test styles and formats have become more widely used than others. Below is a list of the test item formats most widely used by educators and test developers to construct paper or computer-based tests. A test may consist of only one type of test item format (e.g., multiple-choice test, essay test) or may combine different test item formats (e.g., a test that has multiple-choice and essay items).

Multiple choice

In a test that has items formatted as multiple-choice questions, a candidate would be given a number of set answers for each question, and the candidate must choose which answer or group of answers is correct. There are two families of multiple-choice questions.[39] The first family, known as the True/False question, requires a test taker to choose all answers that are appropriate. The second family, known as the One-Best-Answer question, requires a test taker to choose only one answer from a list.

There are several reasons for using multiple-choice questions in tests. In terms of administration, multiple-choice questions usually require less time for test takers to answer, are easy to score and grade, provide greater coverage of material, allow for a wide range of difficulty, and can easily diagnose a test taker's difficulty with certain concepts.[40] As an educational tool, multiple-choice items test many levels of learning as well as a test taker's ability to integrate information, and they can provide feedback to the test taker about why distractors were wrong and why correct answers were right. Nevertheless, there are difficulties associated with the use of multiple-choice questions. In administrative terms, effective multiple-choice items usually take a long time to construct.[40] As an educational tool, multiple-choice items do not allow test takers to demonstrate knowledge beyond the choices provided and may even encourage guessing or approximation, because at least one of the options is known to be correct. For instance, a test taker might not work out a calculation exactly, but by estimating that the result is roughly 48, they would choose the answer closest to 48. Moreover, test takers may misinterpret these items and, in the process, perceive them as tricky or picky. Finally, multiple-choice items do not test a test taker's attitudes towards learning, because correct responses can be easily faked.
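The two families of multiple-choice questions differ mainly in how a response is scored. The sketch below is a hypothetical Python illustration, not a standard scoring scheme: it assumes that a True/False-family item is marked option by option (one mark for each option correctly selected or correctly left unselected), with an optional all-or-nothing mode, and that a One-Best-Answer item earns one mark only for the keyed option. The function names and option labels are invented for this example.

```python
def score_one_best_answer(chosen: str, key: str) -> int:
    """One-Best-Answer item: exactly one option is keyed as correct."""
    return 1 if chosen == key else 0


def score_true_false_family(
    chosen: set[str],
    key: set[str],
    options: set[str],
    all_or_nothing: bool = False,
) -> int:
    """True/False-family item: the candidate selects every appropriate option.

    By default each option is judged independently (an assumed per-option
    scheme). With all_or_nothing=True, one mark is given only when the
    selection matches the key exactly.
    """
    if all_or_nothing:
        return 1 if chosen == key else 0
    # An option is handled correctly when "selected" agrees with "keyed".
    return sum(1 for opt in options if (opt in chosen) == (opt in key))


if __name__ == "__main__":
    options = {"A", "B", "C", "D"}
    key = {"A", "C"}
    print(score_true_false_family({"A", "B"}, key, options))  # 2
    print(score_true_false_family({"A", "B"}, key, options, all_or_nothing=True))  # 0
    print(score_one_best_answer("B", key="B"))  # 1
```

The per-option scheme rewards partial knowledge (here, correctly selecting A and correctly leaving D alone), while the all-or-nothing mode treats the whole item like a single right-or-wrong answer.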

Alternative response

True/False questions present candidates with a binary choice: a statement is either true or false. This method presents problems because, depending on the number of questions, a significant proportion of candidates could score 100% by guesswork alone, and a candidate who guesses every answer should on average score 50%.
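The guessing problem can be quantified. If a candidate guesses every item independently with a 50% chance of being right, the number of correct answers follows a binomial distribution, so the chance of a perfect score on n items is 0.5^n and the expected score is half the items. The following minimal Python sketch computes these figures; the function names `prob_at_least` and `expected_correct`, and the pass mark used in the note after it, are hypothetical.

```python
from math import comb


def prob_at_least(k: int, n: int, p: float = 0.5) -> float:
    """P(X >= k) for X ~ Binomial(n, p).

    Interpreted here as the chance that a candidate who guesses every one of
    n true/false items (each correct with probability p) gets at least k right.
    """
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def expected_correct(n: int, p: float = 0.5) -> float:
    """Mean of Binomial(n, p): the average number of items a guesser gets right."""
    return n * p


if __name__ == "__main__":
    for n in (5, 10, 20):
        perfect = prob_at_least(n, n)
        print(f"{n:>2} items: P(100%) = {perfect:.6f}, expected = {expected_correct(n):.1f} correct")
```

With 5 items a pure guesser scores 100% about 3% of the time (1 in 32), while with 20 items the chance falls below one in a million. With a hypothetical pass mark of 7 out of 10, `prob_at_least(7, 10)` gives 176/1024, so a guesser would pass roughly 17% of the time.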

Matching type

A matching item is an item that provides a defined term and requires a test taker to match identifying characteristics to the correct term.[41] [example needed]

Completion type

A fill-in-the-blank item provides a test taker with identifying characteristics and requires the test taker to recall the correct term.[41] There are two types of fill-in-the-blank tests. The easier version provides a word bank of possible words that will fill in the blanks. In some exams, every word in the word bank is used exactly once. If a teacher wanted to create a test of medium difficulty, they would provide a word bank in which some words may be used more than once and others not at all. The hardest variety is a fill-in-the-blank test in which no word bank is provided at all. This generally requires a higher level of understanding and memory than a multiple-choice test. Because of this, fill-in-the-blank tests with no word bank are often feared by students.

Essay

Items such as short answer or essay typically require a test taker to write a response to fulfill the requirements of the item. In administrative terms, essay items take less time to construct.[40] As an assessment tool, essay items can test complex learning objectives as well as the processes used to answer the question. The items can also provide a more realistic and generalizable task for testing. Finally, these items make it difficult for test takers to guess the correct answers and require test takers to demonstrate their writing skills as well as correct spelling and grammar.

The difficulties with essay items are primarily administrative: for example, test takers require adequate time to be able to compose their answers.[40] The answers themselves are usually poorly written because test takers may not have time to organize and proofread them. In turn, these items take more time to score or grade. When they are scored or graded, the grading process itself can become subjective, as information unrelated to the test may influence it. Thus, considerable effort is required to minimize the subjectivity of the grading process. Finally, as an assessment tool, essay questions may be unreliable in assessing the entire content of a subject matter.

Instructions to exam candidates rely on the use of command words, which direct the examinee to respond in a particular way, for example by describing or defining a concept, or comparing and contrasting two or more scenarios or events. Some command words require more insight or skill than others: for example, "analyse" and "synthesise" assess higher-level skills than "describe".[42] More demanding command words usually attract greater mark weighting in the examination. In the UK, Ofqual maintains an official list of command words explaining their meaning.[43] The Welsh government's guidance on the use of command words advises that they should be used "consistently and correctly", but notes that some subjects have their own traditions and expectations in regard to candidates' responses,[44] and Cambridge Assessment notes that in some cases, subject-specific command words may be used.[45]

Quizzes

A quiz is a brief assessment that may cover a small amount of material given in a class. Some quizzes cover two or three lectures, or a reading section or exercise that summarized the most important part of the class. A single quiz usually does not count for much, and instructors usually give this type of test as a formative assessment to help determine whether students are learning the material. Taken together, however, quizzes can make up a significant part of the final course grade.[46]

Mathematical questions

Most mathematics questions, and calculation questions from subjects such as chemistry, physics, or economics, employ a style that does not fall into any of the above categories, although some papers, notably the Maths Challenge papers in the United Kingdom, employ multiple choice. Instead, most mathematics questions state a mathematical problem or exercise that requires the student to write a freehand response. Marks are given more for the steps taken than for the correct answer. If a question has multiple parts, later parts may use answers from earlier ones; marks may then be granted when a candidate uses an incorrect earlier answer but follows the correct method and returns an answer that is correct given that incorrect input.
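The follow-through rule described above can be illustrated with a minimal Python sketch. The two-part question, its one-mark-per-part scheme, and the function names are hypothetical, invented for illustration; real mark schemes are typically more detailed.

```python
from math import isclose

# Hypothetical two-part question, invented for illustration:
#   (a) Find the area of a square with side 3 cm.
#   (b) Using your answer to (a), find the total area of 4 such squares.

def mark_part_a(answer_a: float) -> int:
    """Part (a) is marked against the true value only."""
    return 1 if isclose(answer_a, 3 * 3) else 0

def mark_part_b(answer_a: float, answer_b: float) -> int:
    """Part (b) is marked against the candidate's OWN answer to (a),
    so the correct method applied to a wrong input still earns the mark."""
    return 1 if isclose(answer_b, 4 * answer_a) else 0

def mark_question(answer_a: float, answer_b: float) -> int:
    """Total marks (out of 2) for the two-part question."""
    return mark_part_a(answer_a) + mark_part_b(answer_a, answer_b)

print(mark_question(9, 36))  # both parts correct: 2
print(mark_question(6, 24))  # wrong (a), correct method in (b): 1
print(mark_question(6, 10))  # wrong (a), wrong method in (b): 0
```

The key design point is that part (b) is checked against the candidate's own earlier answer rather than the true value, which is what lets an earlier error be "carried forward" without being penalized twice.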

Higher-level mathematical papers may include variations on true/false, where the candidate is given a statement and asked to determine whether it holds, either by proving it directly or by giving a counterexample.

Open-book tests

Though not as popular as the closed-book test, open-book (or open-note) tests are slowly rising in popularity. An open-book test allows the test taker to access textbooks and all of their notes while taking the test.[47] The questions asked on open-book exams are typically more thought-provoking and intellectually demanding than questions on a closed-book exam. Rather than testing which facts test takers know, open-book exams require them to apply those facts to a broader question. The main perceived benefit of open-book tests is that they better prepare test takers for the real world, where one does not have to memorize information and has whatever one needs at one's disposal.[48]

Oral tests

An oral test is a test that is answered orally (verbally). The teacher or oral test assessor asks the student a question aloud, and the student answers it aloud.

Physical fitness tests


A physical fitness test is a test designed to measure physical strength, agility, and endurance. Such tests are commonly employed in educational institutions as part of the physical education curriculum, in medicine as part of diagnostic testing, and as eligibility requirements in fields that focus on physical ability such as military or police. Throughout the 20th century, scientific evidence emerged demonstrating the usefulness of strength training and aerobic exercise in maintaining overall health, and more agencies began to incorporate standardized fitness testing. In the United States, the President's Council on Youth Fitness was established in 1956 as a way to encourage and monitor fitness in schoolchildren.

Common tests[49][50][51] include timed running or the multi-stage fitness test (commonly known as the "beep test"), and the number of push-ups, sit-ups/abdominal crunches, and pull-ups that the individual can perform. More specialised tests may be used to assess the ability to perform a particular job or role. Many gyms, private organisations and event organisers have their own fitness tests, drawing on military techniques developed by the British Army and on modern tests such as the Illinois Agility Run and the Cooper Test.[52]

Stopwatch timing was common until recent years, when hand timing was shown to be inaccurate and inconsistent.[53] Electronic timing has become the new standard, as it promotes accuracy and consistency and lessens bias.[citation needed]

Performance tests

A performance test is an assessment that requires an examinee to actually perform a task or activity, rather than simply answering questions about it. The purpose is to ensure greater fidelity to what is being tested.

An example is a behind-the-wheel driving test to obtain a driver's license. Rather than only answering simple multiple-choice items regarding the driving of an automobile, a student is required to actually drive one while being evaluated.

Performance tests are commonly used in workplace and professional applications, such as professional certification and licensure. When used for personnel selection, the tests might be referred to as a work sample. A licensure example would be cosmetologists being required to demonstrate a haircut or manicure on a live person. The Group–Bourdon test is one of a number of psychometric tests which trainee train drivers in the UK are required to pass.[54]

Some performance tests are simulations. For instance, the assessment to become certified as an ophthalmic technician includes two components, a multiple-choice examination and a computerized skill simulation. The examinee must demonstrate the ability to complete seven tasks commonly performed on the job, such as retinoscopy, that are simulated on a computer.

Midterms and finals

Midterm exam

A midterm exam is an exam given near the middle of an academic grading term, or near the middle of any given quarter or semester.[55] Midterm exams may serve as either formative or summative assessment.[56]

Final examination

A final examination (also called an annual exam, final interview, or simply a final) is a test given to students at the end of a course of study or training. Although the term can be used in the context of physical training, it most often occurs in the academic world. Most high schools, colleges, and universities hold final exams at the end of a particular academic term, typically a quarter or semester, or more traditionally at the end of a complete degree course.

Isolated purpose and common practice

The purpose of the test is to make a final review of the topics covered and to assess each student's knowledge of the subject. A final is essentially a larger form of a "unit test": the two have the same purpose, but finals are larger. Not all courses or curricula culminate in a final exam; instructors may assign a term paper or final project in some courses. The weighting of the final exam also varies. It may be the largest, or only, factor in the student's course grade; in other cases, it may carry the same weight as a midterm exam, or the student may be exempted. Not all finals need be cumulative, however, as some simply cover the material presented since the last exam. For example, if that were the professor's policy, the final exam in a microbiology course might cover only fungi and parasites, and no other subjects presented in the course would be tested on it.

Before the examination period, students in some Commonwealth countries, such as Australia, have a week or so of intense revision and study known as swotvac.

In the UK, most universities hold a single set of "Finals" at the end of the entire degree course. In Australia, the exam period varies, with high schools commonly assigning one or two weeks for final exams, but the university period—sometimes called "exam week" or just "exams"—may stretch to a maximum of three weeks.

Practice varies widely in the United States. At the university level, "finals" or the "finals period" may occupy the two or three weeks after the end of the academic term, but sometimes exams are administered in the last week of instruction. Some institutions designate a "study week" or "reading period" between the end of instruction and the beginning of finals, during which no examinations may be administered. Students at many institutions know the week before finals as "dead week." Most final exams incorporate the reading material that has been assigned throughout the term.

Though common in French tertiary institutions, final exams are not often assigned in French high schools. However, French high school students hoping to continue their studies at university level will sit a national exam, known as the Baccalauréat.

In some countries and locales that hold standardised exams, it is customary for schools to administer mock examinations, with formats modelling the real exam. Students from different schools often exchange mock papers as a means of test preparation.

Take-home finals

A take-home final is an examination at the end of an academic term that is usually too long or complex to be completed in a single session as an in-class final. There is usually a deadline for completion, such as within one or two weeks of the end of the semester. A take-home final differs from a final paper, which often involves research, extended texts and the display of data.[citation needed]

Schedule

In some cases, schools run on a modified schedule for final exams to allow students more time to complete their exams. However, not every institution does this.[citation needed]

Preparations

From the perspective of a test developer, the time and effort needed to prepare a test vary greatly. Likewise, from the perspective of a test taker, the time and effort needed to obtain a desired grade or score on any given test also vary greatly. When a test developer constructs a test, the time and effort required depend on the significance of the test itself, the proficiency of the test takers, the format of the test, class size, the deadline for the test, and the experience of the test developer.

The process of test construction has been aided in several ways. For one, many test developers were themselves students at one time and are therefore able to modify or outright adopt questions from their previous tests. In some countries, book publishers often provide teaching packages that include test banks to university instructors who adopt their published books for their courses.[57] These test banks may contain up to four thousand sample test questions that have been peer-reviewed and time-tested. An instructor who chooses to use such a test bank need only select a fixed number of questions from it to construct a test.

As with test construction, the time a test taker needs to prepare for a test depends on the frequency of the test, the test developer, and the significance of the test. In general, nonstandardized tests that are short, frequent, and do not constitute a major portion of the test taker's overall course grade or score do not require the test taker to spend much time preparing.[58] Conversely, nonstandardized tests that are long, infrequent, and do constitute a major portion of the test taker's overall course grade or score usually require the test taker to spend a great deal of time preparing. To prepare for a nonstandardized test, test takers may rely upon their reference books, class or lecture notes, the Internet, and past experience. Test takers may also use various learning aids to study for tests, such as flashcards and mnemonics.[59] Test takers may even hire tutors to coach them through the process in order to increase the probability of obtaining a desired grade or score. In countries such as the United Kingdom, demand for private tuition has increased significantly in recent years.[60] Finally, test takers may rely upon past copies of a test from previous years or semesters to study for a future test. These past tests may be provided by a friend or a group that has copies of previous tests, by instructors and their institutions, or by the test provider (such as an examination board) itself.[61][62]

Unlike with a nonstandardized test, the time test takers need to prepare for a standardized test is less variable and usually considerable. This is because standardized tests are usually uniform in scope, format, and difficulty and often have important consequences for a test taker's future, such as eligibility to attend a specific university program or to enter a desired profession. It is not unusual for test takers to prepare for standardized tests using commercially available books that provide in-depth coverage of the test, or compilations of previous tests (e.g., the ten-year series in Singapore). In many countries, test takers even enroll in test preparation centers or cram schools that provide extensive or supplementary instruction to help them prepare for a standardized test. In Hong Kong, it has been suggested that the tutors running such centers are celebrities in their own right.[63] Private tuition has also become a popular career choice for new graduates in developed economies.[64][65] Finally, in some countries, instructors and their institutions have also played a significant role in preparing test takers for standardized tests.

Cheating


Cheating on a test is the use of unauthorized means or methods to obtain a desired test score or grade. This may range from bringing and using notes during a closed-book examination, to copying another test taker's answers during an individual test, to sending a paid proxy to take the test.[66]

Several common methods have been employed to combat cheating. They include the use of multiple proctors or invigilators during a testing period to monitor test takers. Test developers may construct multiple variants of the same test to be administered to different test takers at the same time, or write tests with few multiple-choice options, on the theory that fully worked answers are difficult to imitate.[67] In some cases, instructors do not administer their own tests but leave the task to other instructors or invigilators; because these invigilators may not know the candidates, some form of identification may be required. Finally, instructors or test providers may themselves compare the answers of suspected cheaters on the test to determine whether cheating occurred.
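One simple way to build such variants, assumed here for illustration rather than taken from any particular test provider, is to shuffle the order of the questions and of each question's answer options, keeping a separate answer key for every variant. The following minimal Python sketch uses a hypothetical three-question bank; the names `QUESTIONS` and `make_variant` are invented for this example.

```python
import random

# Hypothetical question bank: (prompt, options, index of the correct option).
QUESTIONS = [
    ("2 + 2 = ?", ["3", "4", "5"], 1),
    ("Capital of France?", ["Paris", "Rome", "Madrid"], 0),
    ("Chemical formula of water?", ["NaCl", "H2O", "CO2"], 1),
]

def make_variant(questions, seed):
    """Return (variant, answer_key) with questions and options reordered.

    variant is a list of (prompt, options); answer_key[i] is the index of
    the correct option for variant[i].
    """
    rng = random.Random(seed)  # per-variant seed: same seed, same variant
    order = list(range(len(questions)))
    rng.shuffle(order)
    variant, answer_key = [], []
    for qi in order:
        prompt, options, correct = questions[qi]
        perm = list(range(len(options)))
        rng.shuffle(perm)
        # Position j of the shuffled list holds original option perm[j].
        variant.append((prompt, [options[i] for i in perm]))
        # The correct option now sits where perm places its original index.
        answer_key.append(perm.index(correct))
    return variant, answer_key

# Two variants of the same test, e.g. for neighbouring candidates.
for seed in (1, 2):
    variant, key = make_variant(QUESTIONS, seed)
    for (prompt, options), k in zip(variant, key):
        print(prompt, options, "answer:", options[k])
```

Using a different seed for each variant typically gives different orderings, while reusing a seed reproduces the same variant, so an answer key can be regenerated later for marking.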

See also

- Academic dishonesty – Any type of cheating that occurs in relation to a formal academic exercise

- Homework, also known as Assignment (education) – Educational practice

- Bar examination – Test required to practice law in a specific jurisdiction

- Blue book exam – Used in free-response exams

- Computerized adaptive testing – Form of computer-based test that adapts to the examinee's ability level

- Computerized classification test

- Concept inventory – Knowledge assessment tool

- Cooper test – Physical fitness test used by law enforcement, military, and fire services

- Credential – Qualification or authority issued by a competent third party

- Driver's license – Document allowing one to drive a motorized vehicle

- Electronic assessment – Use of information technology in assessment

- E-scape – A project at Goldsmiths, University of London, on a technology and approach that looks specifically at the assessment of creativity and collaboration

- Educational software – Software intended for an educational purpose

- Test anxiety – Anxiety or stress triggered by exams

- General Educational Development – High school diploma test

- Grading in education – Standardized measurement of academic performance

- Harvard step test – Cardiovascular fitness test

- Law

  - Cross-examination – The interrogation of a witness called by one's opponent

  - Direct examination – The questioning of a witness in a trial by the party who called the witness

- List of standardized tests in the United States

- Matriculation examination – Final examination before school graduation

- Medical College Admission Test – Standardized examination for prospective medical students in the United States and Canada

- Optical mark recognition – Capturing of human-marked data from document forms

- Performance testing – An assessment that requires the subject to actually perform a task or activity

- Physical examination – Process by which a medical professional investigates the body of a patient for signs of disease

- Pilot certification in the United States – Pilot certification

- Progress testing

- Project Talent – Study of US high school students

- Vertical jump – Leg power test measuring how high one can jump vertically

- Trial and error – Method of problem-solving

International examinations

- Abitur – used in Germany.

- GCSE and A-level – used in the UK except Scotland.

- International Baccalaureate Diploma Programme – international examination

- International General Certificate of Secondary Education (IGCSE) – international examinations

- Junior Certificate and Leaving Certificate – Republic of Ireland.

- Matura/Maturita – used in Austria, Bosnia and Herzegovina, Bulgaria, Croatia, the Czech Republic, Italy, Liechtenstein, Hungary, Montenegro, North Macedonia, Poland, Serbia, Slovenia, Switzerland, and Ukraine; previously used in Albania.

- Nationella prov – used in Sweden

- National 5, Higher Grade, and Advanced Higher – used in Scotland

- Uttar Pradesh Subordinate Services Selection Commission – Organization authorized to conduct examinations in Uttar Pradesh state in India

References

- ^ "Definition of test". Merriam-Webster.

- ^ Thissen, D. & Wainer, H. (2001). Test Scoring. Mahwah, NJ: Erlbaum, p. 1.

- ^ Wu, 413–419.

- ^ Paludan, 97.

- ^ Kracke, 252.

- ^ Ebrey, Patricia Buckley (2010). The Cambridge Illustrated History of China. Cambridge: Cambridge University Press, 2nd Ed., pp. 145–147, 198–200.

- ^ Liu, Haifeng (October 2007). "Influence of China’s Imperial Examinations on Japan, Korea and Vietnam". Frontiers of History in China, Volume 2, Issue 4, pp. 493–512.

- ^ a b Ko 2017.

- ^ Kracke, 251.

- ^ Yu 2009, pp. 15–16.

- ^ Schwarz, Bill. (1996). The expansion of England: race, ethnicity and cultural history. Psychology Press; ISBN 0-415-06025-7 p. 232.

- ^ a b c d e Ssu-yu Teng, "Chinese Influence on the Western Examination System", Harvard Journal of Asiatic Studies 7 (1942–1943): 267–312.

- ^ a b Bodde, Derk, Chinese Ideas in the West. Committee on Asiatic Studies in American Education

- ^ Huddleston 1996, p. 9.

- ^ Bodde, Derk. "China: A Teaching Workbook". Columbia University.

- ^ Kazin, Edwards, and Rothman (2010), 142.

- ^ Wu, 417

- ^ Huddleston, Mark W.; William W. Boyer (1996). The Higher Civil Service in the United States: Quest for Reform. University of Pittsburgh Press. p. 15. ISBN 0822974738.

- ^ Russell, David R. (2002). Writing in the Academic Disciplines: A Curricular History. SIU Press. pp. 158–159. ISBN 9780809324675.

- ^ Kaplan, R. M. & Saccuzzo, D. P. (2009) Psychological Testing Belmont, CA: Wadsworth.

- ^ The College Board (2003). "A Brief History of the Advanced Placement Program" (PDF). Archived from the original (PDF) on 2009-02-05. Retrieved 2009-01-29.

- ^ "GCSEs: The official guide to the system" (PDF). Archived from the original (PDF) on 2012-06-04.

- ^ "About the SAT". 2016-11-28.

- ^ "About ACT: History". Archived from the original on October 8, 2006. Retrieved October 31, 2006. Name changed in 1996.

- ^ "Cambridge Pre-U - Post 16 Qualifications". www.cambridgeinternational.org.

- ^ "International Qualifications - University of Oxford". Archived from the original on 2010-08-22.

- ^ "Harvard College Admissions".

- ^ "PISA - PISA". www.oecd.org.

- ^ "Australian Citizenship - Australian Citizenship test".

- ^ Škifić, Sanja (2012). "Language ideology and citizenship: A comparative analysis of language testing in naturalisation processes". European Journal of Language Policy. 4 (2): 217–236. doi:10.3828/ejlp.2012.13.

- ^ "Welcome to U.S. Figure Skating". Archived from the original on 2010-07-27.

- ^ "How do I Join Up?". Mensa International.

- ^ Carnegie Mellon University. "Homepage - CMU - Carnegie Mellon University". www.cmu.edu.

- ^ "Formal vs. Informal Assessments | Scholastic". www.scholastic.com.

- ^ "What Are Some Types of Assessment?". Edutopia.

- ^ a b Kaplan, Robert M. (2018). Psychological testing : principles, applications, & issues. Dennis P. Saccuzzo (Ninth ed.). Boston, MA. ISBN 978-1-337-09813-7. OCLC 982213567.

- ^ Ormiston, Meg (2011). Creating a Digital-Rich Classroom: Teaching & Learning in a Web 2.0 World. Bloomington, IN: Solution Tree Press. pp. 2–3. ISBN 978-1-935249-87-0.

- ^ North Central Regional Educational Laboratory, NCREL.org Archived 2008-03-05 at the Wayback Machine

- ^ "Constructing Written Test Questions For the Basic and Clinical Sciences" (PDF).

- ^ a b c d "Types of Test Item Formats".

- ^ a b "MFO Topic C5: Developing Test Questions".

- ^ NEBOSH, Guidance on command words used in learning outcomes and question papers – Diploma qualifications, version 5, June 2021, accessed 23 September 2023

- ^ AQA, Command words, accessed 27 December 2018

- ^ Welsh Government, Fair access by design, Guidance document 174/2015, issued June 2015, accessed 15 August 2020

- ^ Cambridge Assessment, Understanding Command Words, accessed 23 September 2023

- ^ Tobias, S (1995). Overcoming Math Anxiety. New York: W.W. Norton and Company. p. 85 (Chapter 4).

- ^ "Different Exam Types - Different Approaches". ExamTime. 2012-02-21. Retrieved 2017-12-11.

- ^ Johanns, Beth; Dinkens, Amber; Moore, Jill (2017-11-01). "A systematic review comparing open-book and closed-book examinations: Evaluating effects on development of critical thinking skills". Nurse Education in Practice. 27: 89–94. doi:10.1016/j.nepr.2017.08.018. ISSN 1471-5953. PMID 28881323.

- ^ "Army Fitness Standards".

- ^ "RAF Fitness Standards".

- ^ "USMC Personal Fitness Test (Chapter 2 - Conduct of the PFT)" (PDF).

- ^ "Welcome". Fittest.live. Retrieved 2016-11-10.

- ^ Mayhew, Jerry L.; Houser, Jeremy J.; Briney, Ben B.; Williams, Tyler B.; Piper, Fontaine C.; Brechue, William F. (2010). "Comparison Between Hand and Electronic Timing of 40-yd Dash Performance in College Football Players". Journal of Strength and Conditioning Research. 24 (2): 447–451. doi:10.1519/JSC.0b013e3181c08860. PMID 20072055. S2CID 35100936.

- ^ "Group–Bourdon tool". Digital Reality. Archived from the original on 3 January 2011. Retrieved 2 March 2011.

- ^ "Noun 1. midterm exam". The Free Dictionary. Retrieved 2012-05-12.

- ^ O'Connell, Robert M. "Tests Given Throughout a Course as Formative Assessment Can Improve Student Learning" (PDF). American Society for Engineering Education. Retrieved 6 April 2019.

- ^ Wehmeier, Nicolas. "Oxford University Press | Online Resource Centre | Learn about Test banks". global.oup.com. Retrieved 2016-12-09.

- ^ "How to study for Quizzes and Exams in Biochemistry" (PDF). Archived from the original (PDF) on 2010-12-31.

- ^ "Study strategies". Archived from the original on 2011-10-07.

- ^ Weale, Sally (2016-09-07). "Sharp rise in children receiving private tuition". The Guardian. ISSN 0261-3077. Retrieved 2016-12-09.

- ^ "Past Exam Papers". Archived from the original on 2010-08-10.

- ^ "Past papers and mark schemes". www.aqa.org.uk. AQA. Archived from the original on 2016-12-21. Retrieved 2016-12-09.

- ^ Sharma, Yojana (2012-11-27). "Meet the 'tutor kings and queens'". BBC News. Retrieved 2016-12-09.

- ^ Lomax, Robert. "How to become a private tutor". Retrieved 2016-12-09.

- ^ Cohen, Daniel H. (2013-10-25). "The new boom in home tuition – if you can pay £40 an hour". The Guardian. ISSN 0261-3077. Retrieved 2016-12-09.

- ^ "Proxy test takers, item harvesters and cheaters... be very afraid". ccie-in-3-months.blogspot.co.uk. 24 April 2008. Retrieved 2016-12-09.

- ^ "Easy Ways to Prevent Cheating". TeachHUB. Archived from the original on 2016-12-06. Retrieved 2016-12-09.

Bibliography

- de Bary, William Theodore, ed. (1960) Sources of Chinese Tradition: Volume I (New York: Columbia University Press). ISBN 978-0-231-10939-0.

- Bol, Peter K. (2008), Neo-Confucianism in History

- Chaffee, John (1995), The Thorny Gates of Learning in Sung [Song] China, State University of New York Press

- Christie, Anthony (1968). Chinese Mythology. Feltham: Hamlyn Publishing. ISBN 0600006379.

- Ch'ü, T'ung-tsu (1967 [1957]). "Chinese Class Structure and its Ideology", in Chinese Thoughts & Institutions, John K. Fairbank, editor. Chicago and London: University of Chicago Press.

- Crossley, Pamela Kyle (1997). The Manchus. Cambridge, Mass.: Blackwell. ISBN 1557865604.

- Elman, Benjamin (2002), A Cultural History of Civil Examinations in Late Imperial China, University of California Press, ISBN 0-520-21509-5

- —— (2009), "Civil Service Examinations (Keju)" (PDF), Berkshire Encyclopedia of China, Great Barrington, MA: Berkshire, pp. 405–410

- Chaffee, John W. (1991), The Marriage of Sung Imperial Clanswomen

- Fairbank, John King (1992). China: A New History. Cambridge, Massachusetts: Belknap Press/Harvard University Press. ISBN 0-674-11670-4.

- Franke, Wolfgang (1960). The Reform and Abolition of the Traditional Chinese Examination System. Harvard Univ Asia Center. ISBN 978-0-674-75250-4.

- Gregory, Peter N. (1993), Religion and Society in T'ang and Sung China

- Hinton, David (2008). Classical Chinese Poetry: An Anthology. New York: Farrar, Straus, and Giroux. ISBN 0374105367 / ISBN 9780374105365

- Ho, Ping-Ti (1962), The Ladder of Success in Imperial China Aspects of Social Mobility, 1368–1911, New York: Columbia University Press

- Huddleston, Mark W. (1996), The Higher Civil Service in the United States

- Ko, Kwang Hyun (2017), "A Brief History of Imperial Examination and Its Influences", Society, 54 (3): 272–278, doi:10.1007/s12115-017-0134-9, S2CID 149230149

- Kracke, E. A. (1947). "Family vs. Merit in Chinese Civil Service Examinations under the Empire". Harvard Journal of Asiatic Studies 10 (2): 103–123.

- Kracke, E. A., Jr. (1967 [1957]). "Region, Family, and Individual in the Chinese Examination System", in Chinese Thoughts & Institutions, John K. Fairbank, editor. Chicago: University of Chicago Press.

- Lee, Thomas H.C. Government Education and Examinations in Sung [Song] China (Hong Kong: Chinese University Press, New York: St. Martin's Press, 1985).

- Man-Cheong, Iona (2004). The Class of 1761: Examinations, the State and Elites in Eighteenth-Century China. Stanford: Stanford University Press.

- Miyazaki, Ichisada (1976), China's Examination Hell: The Civil Service Examinations of Imperial China, translated by Conrad Schirokauer, Weatherhill; reprint: Yale University Press, 1981, ISBN 9780300026399

- Murck, Alfreda (2000). Poetry and Painting in Song China: The Subtle Art of Dissent. Cambridge (Massachusetts) and London: Harvard University Asia Center for the Harvard-Yenching Institute. ISBN 0-674-00782-4.

- Paludan, Ann (1998). Chronicle of the Chinese Emperors: The Reign-by-Reign Record of the Rulers of Imperial China. New York, New York: Thames and Hudson. ISBN 0-500-05090-2

- Rossabi, Morris (1988). Khubilai Khan: His Life and Times. Berkeley: University of California Press. ISBN 0-520-05913-1

- Smith, Paul Jakov (2015), A Crisis in the Literati State

- Wang, Rui (2013). The Chinese Imperial Examination System : An Annotated Bibliography. Lanham: Scarecrow Press. ISBN 9780810887022.

- Wu, K. C. (1982). The Chinese Heritage. New York: Crown Publishers. ISBN 0-517-54475-X.

- Yang, C. K. (Yang Ch'ing-k'un). Religion in Chinese Society : A Study of Contemporary Social Functions of Religion and Some of Their Historical Factors (1967 [1961]). Berkeley and Los Angeles: University of California Press.

- Yao, Xinzhong (2003), The Encyclopedia of Confucianism

- Yu, Pauline (2002). "Chinese Poetry and Its Institutions", in Hsiang Lectures on Chinese Poetry, Volume 2, Grace S. Fong, editor. (Montreal: Center for East Asian Research, McGill University).

- Yu, Jianfu (2009), "The influence and enlightenment of Confucian cultural education on modern European civilization", Front. Educ. China, 4 (1): 10–26, doi:10.1007/s11516-009-0002-5, S2CID 143586407

- Etienne Zi. Pratique Des Examens Militaires En Chine. (Shanghai, Variétés Sinologiques. No. 9, 1896). University of Oregon Libraries (not searchable) Archived 2014-04-15 at the Wayback Machine, American Libraries Internet Archive Google Books (Searchable).

- This article incorporates material from the Library of Congress that is believed to be in the public domain.

Further reading

- Airasian, P. (1994) "Classroom Assessment", Second Edition, NY: McGraw-Hill.

- Cangelosi, J. (1990) "Designing Tests for Evaluating Student Achievement". NY: Addison-Wesley.

- Gronlund, N. (1993) "How to make achievement tests and assessments", 5th edition, NY: Allyn and Bacon.

- Haladyna, T.M. & Downing, S.M. (1989) Validity of a Taxonomy of Multiple-Choice Item-Writing Rules. "Applied Measurement in Education", 2(1), 51–78.

- Monahan, T. (1998) The Rise of Standardized Educational Testing in the U.S. – A Bibliographic Overview.

- Phelps, R.P., Ed. (2008) Correcting Fallacies About Educational and Psychological Testing, American Psychological Association.

- Ravitch, Diane, "The Uses and Misuses of Tests" Archived 2017-10-18 at the Wayback Machine, in The Schools We Deserve (New York: Basic Books, 1985), pp. 172–181.

- Wilson, N. (1997) Educational standards and the problem of error. Education Policy Analysis Archives, Vol 6 No 10