Video game crash of 1983


The video game crash of 1983 (known in Japan as the Atari shock)[1] was a large-scale recession in the video game industry that occurred from 1983 to 1985, primarily in the United States. The crash was attributed to several factors, including market saturation in the number of video game consoles and available games, many of which were of poor quality. Waning interest in console games in favor of personal computers also played a role. Home video game revenue peaked at around $3.2 billion in 1983 ($9.79 billion in 2023), then fell to around $100 million by 1985 ($283 million in 2023) (a drop of almost 97 percent). The crash abruptly ended what is retrospectively considered the second generation of console video gaming in North America. To a lesser extent, the arcade video game market also weakened as the golden age of arcade video games came to an end.

Lasting about two years, the crash shook a then-booming video game industry and led to the bankruptcy of several companies producing home computers and video game consoles. Analysts of the time expressed doubts about the long-term viability of video game consoles and software.

The North American video game console industry recovered a few years later, mostly due to the widespread success of Nintendo's Western branding for its Famicom console, the Nintendo Entertainment System (NES), released in October 1985. The NES was designed to avoid the missteps that caused the 1983 crash and the stigma associated with video games at that time.

Causes and factors

Flooded console market

The Atari Video Computer System (renamed the Atari 2600 in late 1982) was not the first home system with swappable game cartridges, but by 1980 it was the most popular second-generation console by a wide margin. Launched in 1977 just ahead of the collapse of the market for home Pong console clones, the Atari VCS experienced modest sales for its first few years. In 1980, Atari's licensed version of Taito's Space Invaders became the console's killer application; sales of the VCS quadrupled, and the game was the first title to sell more than a million copies.[2][3] Spurred by the success of the Atari VCS, both Atari and other companies introduced new consoles: the Odyssey², Intellivision, ColecoVision, Atari 5200, and Vectrex. Notably, Coleco sold an add-on allowing Atari VCS games to be played on its ColecoVision, and bundled the console with a licensed home version of Nintendo's arcade hit Donkey Kong. In 1982, the ColecoVision held roughly 17% of the hardware market, compared to the Atari VCS's 58%. This was the first real threat to Atari's dominance of the home console market.[4]

Each new console had its own library of games produced exclusively by the console maker, while the Atari VCS also had a large selection of titles produced by third-party developers. In 1982, analysts marked trends of saturation, mentioning that the amount of new software coming in would only allow a few big hits, that retailers had devoted too much floor space to systems, and that price drops for home computers could result in an industry shakeup.[5] Atari had a large inventory after significant portions of the 1982 orders were returned.[6]

In addition, the rapid growth of the video game industry led to increased demand, which manufacturers overestimated. In 1983, an analyst for Goldman Sachs stated that demand for video games was up 100% from the previous year, but manufacturing output had increased by 175%, creating a significant surplus. Atari CEO Raymond Kassar recognized in 1982 that the industry's saturation point was imminent. However, Kassar expected this to occur when about half of American households had a video game console. The crash occurred when about 15 million machines had been sold, well short of Kassar's estimate.[7]

Loss of publishing control

Prior to 1979, there were no third-party developers; console manufacturers like Atari published all the games for their respective platforms. This changed with the formation of Activision in 1979. Activision was founded by four former Atari video game programmers who left the company because they felt that Atari's developers should receive the same recognition and accolades (specifically in the form of sales-based royalties and public-facing credits) as the actors, directors, and musicians working for other subsidiaries of Warner Communications (Atari's parent company at the time). Already familiar with the Atari VCS, the four programmers developed their own games and cartridge manufacturing processes. Atari quickly sued to block sales of Activision's products but failed to secure a restraining order, and ultimately settled the case in 1982. While the settlement stipulated that Activision must pay royalties to Atari, the case ultimately legitimized the viability of third-party game developers. Activision's games were as popular as Atari's, with Pitfall! (released in 1982) selling over 4 million units.

Prior to 1982, Activision was one of only a handful of third parties publishing games for the Atari VCS. By 1982, Activision's success had emboldened numerous other competitors to enter the market. However, Activision co-founder David Crane observed that several of these companies were backed by venture capitalists attempting to emulate Activision's success. Lacking the experience and skill of Activision's team, these newcomers mostly created games of poor quality.[8] Crane described these as "the worst games you can imagine".[9] While Activision's success could be attributed to the team's existing familiarity with the Atari VCS, other publishers had no such advantage. They largely relied on industrial espionage (poaching each other's employees, reverse-engineering each other's products, etc.) in their attempts to gain market share. Even Atari itself engaged in such practices, hiring several programmers from Mattel's Intellivision development studio, which prompted a lawsuit that included charges of industrial espionage.

The rapid growth of the third-party game industry was illustrated by the number of vendors present at the semi-annual Consumer Electronics Show (CES). According to Crane, the number of third-party developers jumped from 3 to 30 between two consecutive events.[9] At the Summer 1982 CES,[7] 17 companies, including MCA Inc. and Fox Video Games, announced a combined 90 new Atari games.[10] By 1983, an estimated 100 companies were attempting to leverage the CES into a foothold in the market. AtariAge documented 158 different vendors that had developed for the Atari VCS.[11] In June 1982 there were just 100 Atari games on the market; by December there were over 400. Experts predicted a glut in 1983, with only 10% of games producing 75% of sales.[12]

BYTE stated in December, "in 1982 few games broke new ground in either design or format ... If the public really likes an idea, it is milked for all its worth, and numerous clones of a different color soon crowd the shelves. That is, until the public stops buying or something better comes along. Companies who believe that microcomputer games are the hula hoop of the 1980s only want to play Quick Profit."[13] Bill Kunkel said in January 1983 that companies had "licensed everything that moves, walks, crawls, or tunnels beneath the earth. You have to wonder how tenuous the connection will be between the game and the movie Marathon Man. What are you going to do, present a video game root canal?"[14] By September 1983, The Boston Phoenix stated that 2600 cartridges were "no longer a growth industry".[15] Activision, Atari, and Mattel all had experienced programmers, but many of the new companies rushing to join the market did not have the expertise or talent to create quality games. Titles such as the Kaboom!-like Lost Luggage, the rock band tie-in Journey Escape, and the plate-spinning game Dishaster were examples of games made in the hope of taking advantage of the video game boom that later proved unsuccessful with retailers and potential customers.

The flood of new games was released into a limited competitive space. According to Activision's Jim Levy, the company had projected that the total cartridge market in 1982 would be around 60 million units, and set its production numbers anticipating that Activision could secure between 12% and 15% of that market. However, at least 50 different companies had entered the market, each producing between one and two million cartridges, and Atari itself produced an estimated 60 million cartridges in 1982. Total production was therefore more than double the actual demand for cartridges in 1982, which contributed to the stockpiling of unsold inventory during the crash.[16]

Competition from home computers

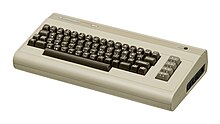

Inexpensive home computers were first introduced in 1977. By 1979, Atari, Inc. had unveiled the Atari 400 and 800 computers, built around a chipset originally meant for use in a game console, which retailed for the same price as their respective names. In 1981, IBM introduced the first IBM Personal Computer with a $1,565 base price[17] (equivalent to $5,245 in 2023), while Sinclair Research introduced its low-end ZX81 microcomputer for £70 (equivalent to £339 in 2023). By 1982, new desktop computer designs commonly provided better color graphics and sound than game consoles, and personal computer sales were booming. The TI-99/4A and the Atari 400 were both priced at $349 (equivalent to $1,102 in 2023), the TRS-80 Color Computer sold for $379 (equivalent to $1,197 in 2023), and Commodore International had just reduced the price of the VIC-20 to $199 (equivalent to $628 in 2023) and the Commodore 64 to $499 (equivalent to $1,575 in 2023).[18][19]

Because computers generally had more memory and faster processors than a console, they permitted more sophisticated games. A 1984 compendium of reviews of Atari 8-bit software used 198 pages for games compared to 167 for all other software types.[20] Home computers could also be used for tasks such as word processing and home accounting. Games were easier to distribute, since they could be sold on floppy disks or cassette tapes instead of ROM cartridges. This opened the field to a cottage industry of third-party software developers. Writeable storage media allowed players to save games in progress, a useful feature for increasingly complex games which was not available on the consoles of the era.

In 1982, a price war that began between Commodore and Texas Instruments led to home computers becoming as inexpensive as video game consoles;[21] after Commodore cut the retail price of the C64 to $300 in June 1983, some stores began selling it for as little as $199.[15] In a 1987 article, Dan Gutman, who had founded Video Games Player magazine in 1982, recalled that in 1983 "People asked themselves, 'Why should I buy a video game system when I can buy a computer that will play games and do so much more?'"[22] Regarding the cancellation of the Intellivision III, The Boston Phoenix asked in September 1983, "Who was going to pay $200-plus for a machine that could only play games?"[15] Commodore explicitly targeted video game players. Spokesman William Shatner asked in VIC-20 commercials "Why buy just a video game from Atari or Intellivision?", stating that "unlike games, it has a real computer keyboard" yet "plays great games too".[23] Commodore's ownership of chip fabricator MOS Technology allowed it to manufacture integrated circuits in-house, so the VIC-20 and C64 sold for much lower prices than competing home computers. In addition, both Commodore computers were designed to use the ubiquitous Atari controllers so they could tap into the existing controller market.

"I've been in retailing 30 years and I have never seen any category of goods get on a self-destruct pattern like this", a Service Merchandise executive told The New York Times in June 1983.[21] The price war was so severe that in September Coleco CEO Arnold Greenberg welcomed rumors of an IBM 'Peanut' home computer because although IBM was a competitor, it "is a company that knows how to make money". "I look back a year or two in the videogame field, or the home-computer field", Greenberg added, "how much better everyone was, when most people were making money, rather than very few".[24] Companies reduced production in the middle of the year because of weak demand even as prices remained low, causing shortages as sales suddenly rose during the Christmas season;[25] only the Commodore 64 was widely available, with more than 500,000 estimated to have been sold during Christmas.[26] The 99/4A was such a disaster for TI that the company's stock immediately rose by 25% after the company discontinued it and exited the home-computer market in late 1983.[27][28] JCPenney announced in December 1983 that it would soon stop selling home computers because of the combination of low supply and low prices.[29]

By that year, Gutman wrote, "Video games were officially dead and computers were hot". He renamed his magazine Computer Games in October 1983, but "I noticed that the word games became a dirty word in the press. We started replacing it with simulations as often as possible". Soon "The computer slump began ... Suddenly, everyone was saying that the home computer was a fad, just another hula hoop". Computer Games published its last issue in late 1984.[22] In 1988, Computer Gaming World founder Russell Sipe noted that "the arcade game crash of 1984 took down the majority of the computer game magazines with it." He stated that, by "the winter of 1984, only a few computer game magazines remained," and by mid-1985, Computer Gaming World "was the only 4-color computer game magazine left."[30]

Immediate effects

With so many new games released in 1982 flooding the market, most stores had insufficient space to carry new games and consoles. As stores tried to return the surplus games to the new publishers, the publishers had neither new products nor cash to issue refunds to the retailers. Many publishers, including Games by Apollo[31] and U.S. Games,[32] quickly folded. Unable to return the unsold games to defunct publishers, stores marked down the titles and placed them in discount bins and on sale tables. Recently released games that initially sold for US$35 (equivalent to $99 in 2021) ended up in bins for $5 ($14 in 2021).[32][33][16]

Third-party sales eroded the console manufacturers' market share. Atari's share of the cartridge-game market fell from 75% in 1981 to less than 40% in 1982, which hurt its finances.[34] The bargain sales of poor-quality titles further drew sales away from more successful third-party companies like Activision, as poorly informed consumers chose bargain titles on price rather than quality. By June 1983, the market for the more expensive games had shrunk dramatically and was replaced by a new market of rushed-to-market, low-budget games.[35] Crane said that "those awful games flooded the market at huge discounts, and ruined the video game business".[36]

A massive industry shakeout resulted. Magnavox abandoned the video game business entirely. Imagic withdrew its IPO the day before its stock was to go public; the company later collapsed. Activision had to downsize across 1984 and 1985 due to loss of revenue, and to stay competitive and maintain financial security, began development of games for the personal computer. Within a few years, Activision no longer produced cartridge-based games and focused solely on personal computer games.[35][16]

Atari was one of the companies most affected by the crash. Its revenue dropped significantly due to dramatically lower sales and the cost of returned stock. By mid-1983, the company had lost US$356 million, was forced to lay off 30% of its 10,000 employees, and moved all manufacturing to Hong Kong and Taiwan.[37] Unsold Pac-Man, E.T. the Extra-Terrestrial, and other 1982 and 1983 games and consoles started to fill its warehouses. In September 1983, Atari discreetly buried much of this excess stock in a landfill near Alamogordo, New Mexico, though it did not comment on the activity at the time. Misinformation related to sales of Pac-Man and E.T. led to the urban legend of the Atari video game burial, which held that millions of unsold cartridges were buried there. Gaming historians received permission to dig up the landfill as part of a documentary in 2014, during which former Atari executive James Heller, who had overseen the original burial, clarified that only about 728,000 cartridges had been buried in 1983; estimates made during the excavation supported this figure, disproving the scale of the urban legend.[38] Atari's burial remains an iconic representation of the 1983 video game crash.[39][40][41] By the end of 1983, Atari had over US$536 million in losses, leading Warner Communications to sell Atari's consumer products division in July 1984 to Jack Tramiel, who had recently departed Commodore International. Tramiel's new company took the name Atari Corporation and directed its efforts toward developing its new personal computer line, the Atari ST, over the console business.[42]

Lack of confidence in the video game sector caused many retailers to stop selling video game consoles or to reduce their stock significantly, reserving floor or shelf space for other products.[43] Retailers established exclusively to sell video games folded, which hurt sales of personal computer games.[16]

The crash also affected video game arcades, which had enjoyed several years of a golden age since the introduction of Space Invaders in 1978 but were waning by 1983 due to the expansion of home consoles, the lack of novel games, and undue attention to teenage delinquency around arcades.[42] While the number of arcades in the United States had doubled to 10,000 from 1980 to 1982, the crash led to the closure of around 1,500 arcades, and the revenue of those that remained open fell by 40%.[7]

The full effects of the industry crash were not felt until 1985.[44] Despite Atari's claim of 1 million worldwide sales of its 2600 game system that year,[45] recovery was slow. Sales of home video games had dropped from $3.2 billion in 1982[46] to $100 million in 1985.[47] Analysts doubted the long-term viability of the video game industry,[48] and, according to Electronic Arts' Trip Hawkins, the stigma of Atari's fall made it very difficult to convince retailers to carry video games until 1985.[16]

Two major events of 1985 helped to revitalize the video game industry. One was increased sales of personal computers from Commodore and Tandy, which helped maintain revenue for game developers like Activision and Electronic Arts, keeping the video game market alive. The other was the initial limited release of the Nintendo Entertainment System in North America in late 1985, followed by the full national release early the following year.[16] After 1986, the industry began recovering, and by 1988 annual industry sales exceeded $2.3 billion, with Nintendo holding 70% of the market.[49] In 1986, Nintendo president Hiroshi Yamauchi noted that "Atari collapsed because they gave too much freedom to third-party developers and the market was swamped with rubbish games". In response, Nintendo limited the number of titles that third-party developers could release for its system each year, and promoted its "Seal of Quality", which it allowed publishers that met its quality standards to use on games and peripherals.[50]

The end of the crash allowed Commodore to raise the price of the C64 for the first time upon the June 1986 introduction of the Commodore 64c—a Commodore 64 redesigned for lower cost of manufacture—which Compute! cited as the end of the home-computer price war,[51][52] one of the causes of the crash.[53]

Long-term effects

The crash in 1983 had the largest impact in the United States. It rippled through all sectors of the global video game market, though video game sales by the beleaguered American companies remained strong in Japan, Europe, and Canada.[55] It took several years for the U.S. industry to recover. The estimated US$42 billion worldwide market in 1982, including arcade, console, and computer games, dropped to US$14 billion by 1985. There was also a significant shift in the home video game market, away from consoles to personal computer software, between 1983 and 1985.[54]

In 1984, some of the longer-term effects began to take a toll on the video game console market. Companies like Magnavox decided to pull out of the video game console industry. The general consensus was that video games were just a fad that would disappear as quickly as it had appeared. Outside of North America, however, the video game industry was doing very well: home consoles were growing in popularity in Japan, while home computers were surging across Europe.

United States sales fell from $3 billion to around $100 million in 1985. Despite the decline, new gaming companies such as Westwood Studios, Code Masters, and SquareSoft, all founded in 1985, began to make their way onto the scene. All of these companies would go on to create numerous genre-defining titles.[56] During the 1985 holiday season, Hiroshi Yamauchi decided to approach small retailers in New York about stocking Nintendo's products. Minoru Arakawa offered a money-back guarantee under which Nintendo would pay retailers back for any stock left unsold. In total, Nintendo sold 50,000 units, about half of the units it shipped to the US.[57]

Japanese domination

The U.S. video game crash had two long-lasting results. The first result was that dominance in the home console market shifted from the United States to Japan. The crash did not directly affect the financial viability of the video game market in Japan, but it still came as a surprise there and created repercussions that changed that industry, and thus became known as the "Atari shock".[58]

Prior to the crash, Jonathan Greenberg of Forbes had predicted in early 1981 that Japanese companies would eventually dominate the North American video game industry, as American video game companies were increasingly licensing products from Japanese companies, who in turn were opening up North American branches.[59] By 1982–1983, Japanese manufacturers had captured a large share of the North American arcade market, which Gene Lipkin of Data East USA partly attributed to Japanese companies having more finances to invest in new ideas.[60]

As the crash was happening in the United States, Japan's game industry started to shift its attention from arcade games to home consoles. Within one month in 1983, two new home consoles were released in Japan: the Nintendo Family Computer (Famicom) and Sega's SG-1000 (which was later supplanted by the Master System), which retrospectively heralded the third generation of home consoles.[61] These two consoles were very popular, buoyed by an economic bubble in Japan. The units readily outsold Atari and Mattel's existing systems, and with both Atari and Mattel focusing on recovering domestic sales, the Japanese consoles effectively went uncontested over the next few years.[61] By 1986, three years after its introduction, 6.5 million Japanese homes (19% of the population) owned a Famicom, and Nintendo began exporting it to the U.S., where the home console industry was only just recovering from the crash.[50]

The crash's impact on the retail sector was the most formidable barrier that confronted Nintendo as it tried to market the Famicom in the United States. A planned deal with Atari to distribute the Famicom in North America fell apart in the wake of the crash, resulting in Nintendo handling the international release itself two years later.[62]: 283–285 Additionally, retailer opposition to video games directly led Nintendo to brand its product the Nintendo Entertainment System (NES) rather than a "video game system", to use terms such as "control deck" and "Game Pak", and to produce a toy robot called R.O.B. to convince toy retailers to allow it in their stores. Furthermore, the NES used a front-loading cartridge slot that mimicked how the video cassette recorders popular at the time were loaded, further distancing it from previous console designs.[43][63][64]

By the time the U.S. video game market recovered in the late 1980s, the NES was by far the dominant console in the United States, leaving only a fraction of the market to Atari. By 1989, home video game sales in the United States had reached $5 billion, surpassing the 1982 peak of $3 billion during the previous generation. Nintendo controlled a large majority of the market; it sold more than 35 million units in the United States, exceeding the sales of other consoles and personal computers by a considerable margin.[65] Other Japanese companies challenged Nintendo's success in the United States with NEC's TurboGrafx-16 and the Sega Genesis, both released in the U.S. in 1989. While the TurboGrafx underwhelmed in the market, the Genesis's release set the stage for a major rivalry between Sega and Nintendo in the U.S. video game market in the early 1990s.

Impact on third-party software development

A second, highly visible result of the crash was the advancement of measures to control third-party software development. Using secrecy to combat industrial espionage had failed to stop rival companies from reverse engineering the Mattel and Atari systems and hiring away their trained game programmers. While Mattel and Coleco implemented lockout measures to control third-party development (the ColecoVision BIOS checked for a copyright string on power-up), the Atari 2600 was completely unprotected, and once information on its hardware became available, little prevented anyone from making games for the system. Nintendo thus instituted a strict licensing policy for the NES that included equipping both cartridge and console with region-specific lockout chips that had to match for a game to work. In addition to preventing the use of unlicensed games, the system was designed to combat software piracy, which was rarely a problem in North America or Western Europe but rampant in East Asia. The concepts of such a control system remain in use on every major video game console produced today, even though fewer consoles are cartridge-based than in the 8/16-bit era. In most modern consoles, the security chips have been replaced by specially encoded optical discs that cannot be copied by most users and can only be read by a particular console under normal circumstances. Accolade won a technical victory against Sega in a court case challenging this control, although it ultimately yielded and signed the Sega licensing agreement. Several publishers, notably Tengen (Atari Games), Color Dreams, and Camerica, challenged Nintendo's control system during the 8-bit era by producing unlicensed NES games.

Initially, Nintendo was the only developer for the Famicom. Under pressure from Namco and Hudson Soft, it opened the Famicom to third-party development, but instituted a license fee of 30% per game cartridge, a system used by console manufacturers to this day.[66] Nintendo maintained strict manufacturing control and required payment in full before manufacturing. Cartridges could not be returned to Nintendo, so publishers assumed all the financial risk of selling all units ordered. Nintendo limited most third-party publishers to five games per year on its systems (some companies tried to get around this by creating additional company labels, like Konami's Ultra Games label). Nintendo ultimately dropped this rule by 1993, after the release of its successor console, the Super Nintendo Entertainment System.[67] Nintendo's strong-armed oversight of Famicom cartridge manufacturing led to unlicensed cartridges, both legitimate and bootleg, being made in Asia. Nintendo placed its Seal of Quality on all licensed games released for the system to promote authenticity and discourage bootleg sales, but it failed to significantly curb these sales.[68]

As Nintendo prepared to release the Famicom in the United States, it wanted to avoid both the bootleg problem it had in Asia and the mistakes that led up to the 1983 crash. The company created the proprietary 10NES system, a lockout chip designed to prevent cartridges made without the chip from being played on the NES. The 10NES lockout was not perfect, as methods to bypass it were found later in the NES's lifecycle, but it was sufficient to let Nintendo strengthen its publishing control, avoid the mistakes Atari had made, and initially prevent bootleg cartridges in Western markets.[69] These strict licensing measures backfired somewhat after Nintendo was accused of monopolistic behavior.[70] In the long run, this pushed many Western third-party publishers such as Electronic Arts away from Nintendo consoles and toward competing consoles such as the Sega Genesis or Sony PlayStation. Most of Nintendo's platform-control measures were adopted by later console manufacturers such as Sega, Sony, and Microsoft, although less stringently.

Computer game growth

With waning interest in consoles in the United States, the computer game market was able to gain a strong foothold in 1983 and beyond.[61] Developers that had worked primarily on console games, like Activision, turned their attention to developing computer game titles to stay viable.[61] Newer companies were also founded to capture the growing interest in computer games, with novel elements that borrowed from console games, as well as taking advantage of low-cost dial-up modems that allowed for multiplayer capabilities.[61] The computer game market grew between 1983 and 1984, overtaking the console market, but overall video game revenue had declined significantly due to the considerable decline of the console market and, to an extent, the arcade market.[54] The home computer industry, however, experienced a downturn in mid-1984,[71] and global computer game sales declined somewhat in 1985.[54]

Microcomputers dominated the European market throughout the 1980s, and with domestic production for those formats thriving over the same period, American game production and trends caused minimal trans-Atlantic ripple.[72] Partly as a distant knock-on effect of the crash and partly due to the continuing quality of homegrown computer and microcomputer games, consoles did not achieve a dominant position in some European markets until the early 1990s.[73] In the United Kingdom, there was a short-lived home console market between 1980 and 1982, but the 1983 crash led to the decline of consoles in the UK, which was offset by the rise of LCD games in 1983 and then the rise of computer games in 1984. It was not until the late 1980s, with the arrival of the Master System and NES, that the home console market recovered in the UK. Computer games remained the dominant sector of the UK home video game market until they were surpassed by Sega and Nintendo consoles in 1991.[73]

References

- ^ "Wikipediaに「アタリショックという言葉を初めて使ったのはトイザらスの副社長」と書くことはできない". Runner's High!. Retrieved November 6, 2023.

- ^ Kent, Steven (2001). "Chapter 12: The Battle for the Home". Ultimate History of Video Games. Three Rivers Press. p. 190. ISBN 0-7615-3643-4.

- ^ Weiss, Brett (2007). Classic home video games, 1972–1984: a complete reference guide. Jefferson, N.C.: McFarland. p. 108. ISBN 978-0-7864-3226-4.

- ^ Gallagher, Scott; Park, Seung Ho (February 2002). "Innovation and Competition in Standard-Based Industries: A Historical Analysis of the U.S. Home Video Game Market". IEEE Transactions on Engineering Management. 49 (1): 67–82. doi:10.1109/17.985749.

- ^ Jones, Robert S. (December 12, 1982). "Home Video Games Are Coming Under a Strong Attack". Gainesville Sun. p. 21F. Archived from the original on June 25, 2020. Retrieved July 26, 2016 – via Google Books.

- ^ Gallagher, Scott; Park, Seung Ho (February 2002). "Innovation and Competition in Standard-Based Industries: A Historical Analysis of the U.S. Home Video Game Market". IEEE Transactions on Engineering Management. 49 (1): 67–82. doi:10.1109/17.985749.

- ^ a b c Kleinfield, N.R. (October 17, 1983). "Video Games Industry Comes Down To Earth". The New York Times. Archived from the original on September 13, 2018. Retrieved September 21, 2018.

- ^ Fleming, Jeffrey. "The History Of Activision". Gamasutra. Archived from the original on December 20, 2016. Retrieved December 30, 2016.

- ^ a b Adrian (May 9, 2016). "INTERVIEW – DAVID CRANE (ATARI/ACTIVISION/SKYWORKS)". Arcade Attack. Archived from the original on May 9, 2016. Retrieved May 10, 2016.

- ^ Goodman, Danny (Spring 1983). "Home Video Games: Video Games Update". Creative Computing Video & Arcade Games. p. 32. Archived from the original on November 7, 2017.

- ^ Ernkvist, Mirko (2006). Down Many Times, but Still Playing the Game: Creative Destruction and Industry Crashes in the Early Video Game Industry 1971-1986 (PDF). XIV International Economic History Congress. Helsinki. Archived (PDF) from the original on August 10, 2020. Retrieved September 11, 2020.

- ^ "Stream of video games is endless". Milwaukee Journal. December 26, 1982. p. Business 1. Archived from the original on March 12, 2016. Retrieved January 10, 2015.

- ^ Clark, Pamela (December 1982). "The Play's the Thing". BYTE. p. 6. Retrieved October 19, 2013.

- ^ Harmetz, Aljean (January 15, 1983). "New Faces, More Profits For Video Games". Times-Union. p. 18. Archived from the original on August 1, 2019. Retrieved February 28, 2012.

- ^ a b c Mitchell, Peter W. (September 6, 1983). "A summer-CES report". Boston Phoenix. p. 4. Archived from the original on February 9, 2021. Retrieved January 10, 2015.

- ^ a b c d e f DeMaria, Rusel; Wilson, Johnny L. (2003). High Score!: The Illustrated History of Electronic Games (2nd ed.). New York: McGraw-Hill/Osborne. pp. 103–105. ISBN 0-07-223172-6.

- ^ "IBM Archives: The birth of the IBM PC". January 23, 2003. Archived from the original on January 2, 2014.

- ^ Ahl, David H. (November 1984). "The first decade of personal computing". Creative Computing. Vol. 10, no. 11. p. 30. Archived from the original on December 10, 2016.

- ^ "The Inflation Calculator". Archived from the original on March 26, 2018.

- ^ Stanton, Jeffrey; Wells, Robert P.; Rochowansky, Sandra; Mellin, Michael (1984). The Addison-Wesley Book of Atari Software 1984. Addison-Wesley. pp. TOC. ISBN 020116454X.

- ^ a b Pollack, Andrew (June 19, 1983). "The Coming Crisis in Home Computers". The New York Times. Archived from the original on January 20, 2015. Retrieved January 19, 2015.

- ^ a b Gutman, Dan (December 1987). "The Fall And Rise of Computer Games". Compute!'s Apple Applications. Vol. 5, no. 2 #6. p. 64. Retrieved August 18, 2014.

- ^ Commodore VIC-20 ad with William Shatner. June 9, 2010. Archived from the original on April 6, 2017.

- ^ Coleco Presents The Adam Computer System. YouTube. May 3, 2016 [1983-09-28]. Event occurs at 1:06:55. Archived from the original on January 3, 2017. "IBM is just not another strong company making a positive statement about the home-computer field's future. IBM is a company that knows how to make money. IBM is a company that knows how to make money in hardware, and makes more money in software. What IBM can bring to the home-computer field is something that the field collectively needs, particularly now: A respect for profitability. A capability to earn money. That is precisely what the field needs ... I look back a year or two in the videogame field, or the home-computer field, how much better everyone was, when most people were making money, rather than very few were making money."

- ^ Rosenberg, Ronald (December 8, 1983). "Home Computer? Maybe Next Year". The Boston Globe.

- ^ "Under 1983 Christmas Tree, Expect the Home Computer". The New York Times. December 10, 1983. ISSN 0362-4331. Archived from the original on November 7, 2017. Retrieved July 2, 2017.

- ^ "IBM's Peanut Begins New Computer Phase". The Boston Globe. Associated Press. November 1, 1983.

- ^ Mace, Scott (November 21, 1983). "TI retires from home-computer market". InfoWorld. pp. 22, 27. Retrieved February 25, 2011.

- ^ "Penney Shelves its Computers". The Boston Globe. December 17, 1983.

- ^ Sipe, Russell (August 1988). "The Greatest Story Ever Told" (PDF). Computer Gaming World. No. 50. pp. 6–7. Archived (PDF) from the original on April 18, 2016.

- ^ Seitz, Lee K. "CVG Nexus: Timeline – 1980s". Archived from the original on October 13, 2007. Retrieved November 16, 2007.

- ^ a b Prince, Suzan (September 1983). "Faded Glory: The Decline, Fall and Possible Salvation of Home Video". Video Games. Pumpkin Press. Retrieved February 24, 2016.

- ^ Daglow, Don L. (August 1988). "The Changing Role of Computer Game Designers". Computer Gaming World. No. 50. pp. 18, 42.

- ^ Rosenberg, Ron (December 11, 1982). "Competitors Claim Role in Warner Setback". The Boston Globe. p. 1. Archived from the original on November 7, 2012. Retrieved March 6, 2012.

- ^ a b Fleming, Jeffrey. "The History Of Activision". Gamasutra. Archived from the original on December 20, 2016. Retrieved December 30, 2016.

- ^ Adrian (May 9, 2016). "INTERVIEW – DAVID CRANE (ATARI/ACTIVISION/SKYWORKS)". Arcade Attack. Archived from the original on May 9, 2016. Retrieved May 10, 2016.

- ^ Kocurek, Carly A. (2015). Coin-operated Americans: rebooting boyhood at the video game arcade. Minneapolis; London: University of Minnesota Press. ISBN 978-0-8166-9183-8.

- ^ "Diggers Find Atari's E.T. Games in Landfill". Associated Press. April 26, 2014. Archived from the original on April 26, 2014. Retrieved April 26, 2014.

- ^ Dvorak, John C. (August 12, 1985). "Is the PCJr Doomed To Be Landfill?". InfoWorld. Vol. 7, no. 32. p. 64. Archived from the original on February 9, 2023. Retrieved September 10, 2011.

- ^ Jary, Simon (August 19, 2011). "HP TouchPads to be dumped in landfill?". PC Advisor. Archived from the original on November 8, 2011. Retrieved September 10, 2011.

- ^ Kennedy, James (August 20, 2011). "Book Review: Super Mario". Wall Street Journal. Archived from the original on September 6, 2017. Retrieved September 10, 2011.

- ^ a b Kent, Steven (2001). "Chapter 14: The Fall". Ultimate History of Video Games. Three Rivers Press. p. 190. ISBN 0-7615-3643-4.

- ^ a b "NES". Icons. Season 4. Episode 5010. December 1, 2005. G4. Archived from the original on October 16, 2012.

- ^ Katz, Arnie (January 1985). "1984: The Year That Shook Electronic Gaming". Electronic Games. Vol. 3, no. 35. pp. 30–31 [30]. Retrieved February 2, 2012.[dead link]

- ^ Halfhill, Tom R. "A Turning Point for Atari? Report from the Winter Consumer Electronics Show". Archived from the original on April 9, 2016.

- ^ Liedholm, Marcus; Liedholm, Mattias. "The Famicom rules the world! – (1983–89)". Nintendo Land. Archived from the original on January 1, 2010. Retrieved February 14, 2006.

- ^ Dvorchak, Robert (July 30, 1989). "NEC out to dazzle Nintendo fans". The Times-News. p. 1D. Archived from the original on May 12, 2016. Retrieved May 11, 2017.

- ^ "Gainesville Sun - Google News Archive Search". Archived from the original on February 1, 2021. Retrieved November 22, 2020.

- ^ "Toy Trends". Orange Coast. Vol. 14, no. 12. Emmis Communications. December 1988. p. 88. ISSN 0279-0483. Archived from the original on February 9, 2023. Retrieved April 26, 2011.

- ^ a b Takiff, Jonathan (June 20, 1986). "Video Games Gain in Japan, Are Due For Assault on U.S." The Vindicator. p. 2. Archived from the original on February 2, 2021. Retrieved April 10, 2012.

- ^ Lock, Robert; Halfhill, Tom R. (July 1986). "Editor's Notes". Compute!. No. 74. p. 6. Retrieved November 8, 2013.

- ^ Leemon, Sheldon (February 1987). "Microfocus". Compute!. No. 81. p. 24. Retrieved November 9, 2013.

- ^ "Ten Facts about the Great Video Game Crash of '83". September 21, 2011. Archived from the original on May 10, 2015. "Around the time home consoles started falling out of favor, home computers like the Commodore VIC-20, the Commodore 64, and the Apple ][ became affordable for the average family. Needless to say, the computer manufacturers of the age seized on the opportunity to ask parents, 'Hey, why are you spending money on a game console when a computer can let you play games and prepare you for a job?'"

- ^ a b c d Nakamura, Yuji (January 23, 2019). "Peak Video Game? Top Analyst Sees Industry Slumping in 2019". Bloomberg L.P. Archived from the original on July 15, 2019. Retrieved January 29, 2019.

- ^ Kent, Steven (2001). "Chapter 17: We Tried to Keep from Laughing". Ultimate History of Video Games. Three Rivers Press. p. 190. ISBN 0-7615-3643-4.

- ^ Boyd, Andy. "No. 3038: The Video Game Crash of 1983". www.uh.edu. Archived from the original on September 27, 2020. Retrieved September 30, 2020.

- ^ Wardyga, Brian (2018). The Video Games Textbook. New York: A K Peters/CRC Press. p. 113. ISBN 9781351172363.

- ^ "Down Many Times, but Still Playing the Game: Creative Destruction and Industry Crashes in the Early Video Game Industry 1971-1986" (PDF). Archived (PDF) from the original on May 1, 2014.

- ^ Greenberg, Jonathan (April 13, 1981). "Japanese invaders: Move over Asteroids and Defenders, the next adversary in the electronic video game wars may be even tougher to beat" (PDF). Forbes. Vol. 127, no. 8. pp. 98, 102. Archived (PDF) from the original on December 2, 2021. Retrieved December 2, 2021.

- ^ "Special Report: Gene Lipkin (Data East USA)". RePlay. Vol. 16, no. 4. January 1991. p. 92.

- ^ a b c d e Parish, Jeremy (August 28, 2014). "Greatest Years in Gaming History: 1983". USGamer. Archived from the original on January 29, 2021. Retrieved September 13, 2019.

- ^ Kent, Steven L. (2001). The Ultimate History of Video Games: The Story Behind the Craze that Touched our Lives and Changed the World. Roseville, California: Prima Publishing. ISBN 0-7615-3643-4.

- ^ "25 Smartest Moments in Gaming". GameSpy. July 21–25, 2003. p. 22. Archived from the original on September 2, 2012.

- ^ O'Kane, Sean (October 18, 2015). "7 things I learned from the designer of the NES". The Verge. Archived from the original on October 19, 2015. Retrieved September 21, 2018.

- ^ Kinder, Marsha (1993). Playing with Power in Movies, Television, and Video Games: From Muppet Babies to Teenage Mutant Ninja Turtles. University of California Press. p. 90. ISBN 0-520-07776-8. Archived from the original on February 9, 2023. Retrieved April 26, 2011.

- ^ Mochizuki, Takashi; Savov, Vlad (August 25, 2020). "Epic's Battle With Apple and Google Actually Dates Back to Pac-Man". Bloomberg News. Archived from the original on November 6, 2021. Retrieved August 25, 2020.

- ^ Plunkett, Luke (July 21, 2012). "Konami's Cheat to Get Around a Silly Nintendo Rule". Kotaku. Archived from the original on September 21, 2018. Retrieved September 21, 2018.

- ^ O'Donnell, Casey (2011). "The Nintendo Entertainment System and the 10NES Chip: Carving the Video Game Industry in Silicon". Games and Culture. 6 (1): 83–100. doi:10.1177/1555412010377319. S2CID 53358125.

- ^ Cunningham, Andrew (July 15, 2013). "The NES turns 30: How it began, worked, and saved an industry". Ars Technica. Archived from the original on July 22, 2021. Retrieved September 21, 2018.

- ^ U.S. Court of Appeals for the Federal Circuit (1992). "Atari Games Corp. v. Nintendo of America Inc". Digital Law Online. Archived from the original on August 8, 2011. Retrieved March 30, 2005.

- ^ Sanger, David E. (June 5, 1984). "Expected boom in home computer market fizzles". Pittsburgh Post-Gazette. Archived from the original on December 2, 2021. Retrieved December 2, 2021.

- ^ Oxford, Nadia (January 18, 2012). "Ten Facts about the Great Video Game Crash of '83". IGN. Archived from the original on January 28, 2021. Retrieved September 11, 2020.

- ^ a b "Market size and market shares". Video Games: A Report on the Supply of Video Games in the UK. United Kingdom: Monopolies and Mergers Commission (MMC), H.M. Stationery Office. April 1995. pp. 66–68. ISBN 978-0-10-127812-6.

Further reading

- DeMaria, Rusel; Wilson, Johnny L. (2003). High Score!: The Illustrated History of Electronic Games (2nd ed.). New York: McGraw-Hill/Osborne. ISBN 0-07-222428-2.

- Gallagher, Scott; Park, Seung Ho (February 2002). "Innovation and Competition in Standard-Based Industries: A Historical Analysis of the U.S. Home Video Game Market". IEEE Transactions on Engineering Management. 49 (1): 67–82. doi:10.1109/17.985749.

External links

- The Dot Eaters.com: "Chronicle of the Great Videogame Crash" Archived June 12, 2018, at the Wayback Machine

- Twin Galaxies Official Video Game & Pinball Book of World Records: "The Golden Age of Video Game Arcades" — story within the 1998 book.

- Intellivisionlives.com: Official Intellivision History Archived January 16, 2018, at the Wayback Machine — by the original programmers.

- The History of Computer Games: The Atari Years — by Chris Crawford, a game designer at Atari during the crash.

- Pctimeline.info: Chronology of the Commodore 64 Computer: Events & Game release dates (1982–1990). Archived August 9, 2012, at the Wayback Machine