CORDIC

CORDIC (coordinate rotation digital computer), also known as Volder's algorithm or the digit-by-digit method, is a simple and efficient algorithm to calculate trigonometric functions, hyperbolic functions, square roots, multiplications, divisions, and exponentials and logarithms with arbitrary base, typically converging with one digit (or bit) per iteration. Its variants include circular CORDIC (Jack E. Volder),[1][2] linear CORDIC, hyperbolic CORDIC (John Stephen Walther),[3][4] and generalized hyperbolic CORDIC (GH CORDIC) (Yuanyong Luo et al.).[5][6] CORDIC is therefore also an example of digit-by-digit algorithms. CORDIC and closely related methods known as pseudo-multiplication and pseudo-division or factor combining are commonly used when no hardware multiplier is available (e.g. in simple microcontrollers and field-programmable gate arrays or FPGAs), as the only operations they require are additions, subtractions, bit shifts and table lookups. As such, they all belong to the class of shift-and-add algorithms. In computer science, CORDIC is often used to implement floating-point arithmetic when the target platform lacks hardware multiply for cost or space reasons.

History

Similar mathematical techniques were published by Henry Briggs as early as 1624[7][8] and Robert Flower in 1771,[9] but CORDIC is better optimized for low-complexity finite-state CPUs.

CORDIC was conceived in 1956[10][11] by Jack E. Volder at the aeroelectronics department of Convair out of necessity to replace the analog resolver in the B-58 bomber's navigation computer with a more accurate and faster real-time digital solution.[11] Therefore, CORDIC is sometimes referred to as a digital resolver.[12][13]

In his research Volder was inspired by a formula in the 1946 edition of the CRC Handbook of Chemistry and Physics:[11]

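$$K_n R \sin(\theta \pm \varphi) = R \sin(\theta) \pm 2^{-n} R \cos(\theta),$$

$$K_n R \cos(\theta \pm \varphi) = R \cos(\theta) \mp 2^{-n} R \sin(\theta),$$

where $\varphi$ is such that $\tan(\varphi) = 2^{-n}$, and $K_n := \sqrt{1 + 2^{-2n}}$.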

His research led to an internal technical report proposing the CORDIC algorithm to solve sine and cosine functions and a prototypical computer implementing it.[10][11] The report also discussed the possibility to compute hyperbolic coordinate rotation, logarithms and exponential functions with modified CORDIC algorithms.[10][11] Utilizing CORDIC for multiplication and division was also conceived at this time.[11] Based on the CORDIC principle, Dan H. Daggett, a colleague of Volder at Convair, developed conversion algorithms between binary and binary-coded decimal (BCD).[11][14]

In 1958, Convair finally started to build a demonstration system to solve radar fix–taking problems named CORDIC I, completed in 1960 without Volder, who had left the company already.[1][11] More universal CORDIC II models A (stationary) and B (airborne) were built and tested by Daggett and Harry Schuss in 1962.[11][15]

Volder's CORDIC algorithm was first described in public in 1959,[1][2][11][13][16] which caused it to be incorporated into navigation computers by companies including Martin-Orlando, Computer Control, Litton, Kearfott, Lear-Siegler, Sperry, Raytheon, and Collins Radio.[11]

Volder teamed up with Malcolm McMillan to build Athena, a fixed-point desktop calculator utilizing his binary CORDIC algorithm.[17] The design was introduced to Hewlett-Packard in June 1965, but not accepted.[17] Still, McMillan introduced David S. Cochran (HP) to Volder's algorithm and when Cochran later met Volder he referred him to a similar approach John E. Meggitt (IBM[18]) had proposed as pseudo-multiplication and pseudo-division in 1961.[18][19] Meggitt's method also suggested the use of base 10[18] rather than base 2, as used by Volder's CORDIC so far. These efforts led to the ROMable logic implementation of a decimal CORDIC prototype machine inside of Hewlett-Packard in 1966,[20][19] built by and conceptually derived from Thomas E. Osborne's prototypical Green Machine, a four-function, floating-point desktop calculator he had completed in DTL logic[17] in December 1964.[21] This project resulted in the public demonstration of Hewlett-Packard's first desktop calculator with scientific functions, the HP 9100A in March 1968, with series production starting later that year.[17][21][22][23]

When Wang Laboratories found that the HP 9100A used an approach similar to the factor combining method in their earlier LOCI-1[24] (September 1964) and LOCI-2 (January 1965)[25][26] Logarithmic Computing Instrument desktop calculators,[27] they unsuccessfully accused Hewlett-Packard of infringement of one of An Wang's patents in 1968.[19][28][29][30]

John Stephen Walther at Hewlett-Packard generalized the algorithm into the Unified CORDIC algorithm in 1971, allowing it to calculate hyperbolic functions, natural exponentials, natural logarithms, multiplications, divisions, and square roots.[31][3][4][32] The CORDIC subroutines for trigonometric and hyperbolic functions could share most of their code.[28] This development resulted in the first scientific handheld calculator, the HP-35 in 1972.[28][33][34][35][36][37] Based on hyperbolic CORDIC, Yuanyong Luo et al. further proposed a Generalized Hyperbolic CORDIC (GH CORDIC) to directly compute logarithms and exponentials with an arbitrary fixed base in 2019.[5][6][38][39][40] Theoretically, Hyperbolic CORDIC is a special case of GH CORDIC.[5]

Originally, CORDIC was implemented only using the binary numeral system and despite Meggitt suggesting the use of the decimal system for his pseudo-multiplication approach, decimal CORDIC continued to remain mostly unheard of for several more years, so that Hermann Schmid and Anthony Bogacki still suggested it as a novelty as late as 1973[16][13][41][42][43] and it was found only later that Hewlett-Packard had implemented it in 1966 already.[11][13][20][28]

Decimal CORDIC became widely used in pocket calculators,[13] most of which operate in binary-coded decimal (BCD) rather than binary. This change in the input and output format did not alter CORDIC's core calculation algorithms. CORDIC is particularly well-suited for handheld calculators, in which low cost – and thus low chip gate count – is much more important than speed.

CORDIC has been implemented in the ARM-based STM32G4, Intel 8087,[43][44][45][46][47] 80287,[47][48] 80387[47][48] up to the 80486[43] coprocessor series as well as in the Motorola 68881[43][44] and 68882 for some kinds of floating-point instructions, mainly as a way to reduce the gate counts (and complexity) of the FPU sub-system.

Applications

CORDIC uses simple shift-add operations for several computing tasks such as the calculation of trigonometric, hyperbolic and logarithmic functions, real and complex multiplications, division, square-root calculation, solution of linear systems, eigenvalue estimation, singular value decomposition, QR factorization and many others. As a consequence, CORDIC has been used for applications in diverse areas such as signal and image processing, communication systems, robotics and 3D graphics apart from general scientific and technical computation.[49][50]

Hardware

The algorithm was used in the navigational system of the Apollo program's Lunar Roving Vehicle to compute bearing and range, or distance from the Lunar module.[51][52] CORDIC was used to implement the Intel 8087 math coprocessor in 1980, avoiding the need to implement hardware multiplication.[53]

CORDIC is generally faster than other approaches when a hardware multiplier is not available (e.g., a microcontroller), or when the number of gates required to implement the functions it supports should be minimized (e.g., in an FPGA or ASIC). In fact, CORDIC is a standard drop-in IP core in FPGA development tools such as Vivado for Xilinx devices, whereas a power series implementation is not, because such a core would be too specific: CORDIC is general-purpose and can compute many different functions, while a hardware multiplier configured to evaluate a power series can only compute the function it was designed for.

On the other hand, when a hardware multiplier is available (e.g., in a DSP microprocessor), table-lookup methods and power series are generally faster than CORDIC. In recent years, the CORDIC algorithm has been used extensively for various biomedical applications, especially in FPGA implementations[citation needed].

The STM32G4 series and certain STM32H7 series of MCUs implement a CORDIC module to accelerate computations in various mixed-signal applications such as graphics for human-machine interfaces and field-oriented control of motors. While not as fast as a power series approximation, CORDIC is faster than interpolating table-based implementations such as the ones provided by the ARM CMSIS and C standard libraries.[54] The results may be slightly less accurate, however, as the CORDIC modules provided achieve only 20 bits of precision in the result. Most of the performance difference compared to the ARM implementation is due to the overhead of the interpolation algorithm, which achieves full floating-point precision (24 bits) and can likely achieve relative error to that precision.[55] Another benefit is that the CORDIC module is a coprocessor and can be run in parallel with other CPU tasks.

The issue with using Taylor series is that while they do provide small absolute error, they do not exhibit well behaved relative error.[56] Other means of polynomial approximation, such as minimax optimization, may be used to control both kinds of error.
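The contrast between absolute and relative error can be illustrated numerically. The sketch below is an illustration under stated assumptions, not taken from the cited source: it truncates the Maclaurin series of sine after six terms (the hypothetical helper `taylor_sin`) and compares it with `math.sin` at a point near 0 and at a point near π, where the true value is close to zero.

```python
from math import factorial, sin


def taylor_sin(x, terms=6):
    """Maclaurin series of sin(x) truncated after `terms` terms (illustrative)."""
    return sum(
        (-1) ** k * x ** (2 * k + 1) / factorial(2 * k + 1) for k in range(terms)
    )


def errors(x, terms=6):
    """Return (absolute error, relative error) of taylor_sin against math.sin."""
    exact = sin(x)
    abs_err = abs(taylor_sin(x, terms) - exact)
    return abs_err, abs_err / abs(exact)


if __name__ == "__main__":
    for x in (0.5, 3.14):
        abs_err, rel_err = errors(x)
        print(f"x = {x}: absolute error {abs_err:.2e}, relative error {rel_err:.2e}")
```

At x = 3.14 the absolute error stays below 10^-3 while the relative error is a sizeable fraction of the result, because sin(x) itself is close to zero there.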

Software

Many older systems with integer-only CPUs have implemented CORDIC to varying extents as part of their IEEE floating-point libraries. As most modern general-purpose CPUs have floating-point registers with common operations such as add, subtract, multiply, divide, sine, cosine, square root, log10, natural log, the need to implement CORDIC in them with software is nearly non-existent. Only microcontroller or special safety and time-constrained software applications would need to consider using CORDIC.

Modes of operation

Rotation mode

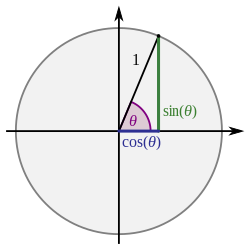

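CORDIC can be used to calculate a number of different functions. This explanation shows how to use CORDIC in rotation mode to calculate the sine and cosine of an angle, assuming that the desired angle is given in radians and represented in a fixed-point format. To determine the sine or cosine for an angle $\beta$, the y or x coordinate of a point on the unit circle corresponding to the desired angle must be found. Using CORDIC, one would start with the vector $v_0$:

$$v_0 = \begin{bmatrix} 1 \\ 0 \end{bmatrix}.$$

In the first iteration, this vector is rotated 45° counterclockwise to get the vector $v_1$. Successive iterations rotate the vector in one or the other direction by size-decreasing steps, until the desired angle has been achieved. Each step angle is $\gamma_i = \arctan(2^{-i})$ for $i = 0, 1, 2, \dots$.

More formally, every iteration calculates a rotation, which is performed by multiplying the vector $v_i$ with the rotation matrix $R_i$:

$$v_{i+1} = R_i v_i.$$

The rotation matrix is given by

$$R_i = \begin{bmatrix} \cos(\gamma_i) & -\sin(\gamma_i) \\ \sin(\gamma_i) & \cos(\gamma_i) \end{bmatrix}.$$

Using the following two trigonometric identities:

$$\cos(\gamma_i) = \frac{1}{\sqrt{1 + \tan^2(\gamma_i)}}, \qquad \sin(\gamma_i) = \frac{\tan(\gamma_i)}{\sqrt{1 + \tan^2(\gamma_i)}},$$

the rotation matrix becomes

$$R_i = \frac{1}{\sqrt{1 + \tan^2(\gamma_i)}} \begin{bmatrix} 1 & -\tan(\gamma_i) \\ \tan(\gamma_i) & 1 \end{bmatrix}.$$

The expression for the rotated vector $v_{i+1} = R_i v_i$ then becomes

$$\begin{bmatrix} x_{i+1} \\ y_{i+1} \end{bmatrix} = \cos(\gamma_i) \begin{bmatrix} 1 & -\tan(\gamma_i) \\ \tan(\gamma_i) & 1 \end{bmatrix} \begin{bmatrix} x_i \\ y_i \end{bmatrix},$$

where $x_i$ and $y_i$ are the components of $v_i$. Restricting each rotation to the angle $\sigma_i \gamma_i$ with $\tan(\gamma_i) = 2^{-i}$ and $\sigma_i = \pm 1$, the multiplication with the tangent can be replaced by a division by a power of two, which is efficiently done in digital computer hardware using a bit shift. The expression then becomes

$$\begin{bmatrix} x_{i+1} \\ y_{i+1} \end{bmatrix} = K_i \begin{bmatrix} 1 & -\sigma_i 2^{-i} \\ \sigma_i 2^{-i} & 1 \end{bmatrix} \begin{bmatrix} x_i \\ y_i \end{bmatrix},$$

where

$$K_i = \frac{1}{\sqrt{1 + 2^{-2i}}},$$

and $\sigma_i$ is used to determine the direction of the rotation: $\sigma_i$ is +1 for a counterclockwise rotation (positive angle), otherwise it is −1.

All $K_i$ factors can be ignored in the iterative process and then applied all at once afterwards with a scaling factor

$$K(n) = \prod_{i=0}^{n-1} K_i = \prod_{i=0}^{n-1} \frac{1}{\sqrt{1 + 2^{-2i}}},$$

which is calculated in advance and stored in a table or as a single constant, if the number of iterations is fixed. This correction could also be made in advance, by scaling $v_0$ and hence saving a multiplication. Additionally, it can be noted that[43]

$$K = \lim_{n \to \infty} K(n) \approx 0.6072529350088812561694$$

to allow further reduction of the algorithm's complexity. Some applications may avoid correcting for $K$ altogether, resulting in a processing gain $A$:[57]

$$A = \frac{1}{K} = \lim_{n \to \infty} \prod_{i=0}^{n-1} \sqrt{1 + 2^{-2i}} \approx 1.64676025812107.$$

After a sufficient number of iterations, the vector's angle will be close to the wanted angle $\beta$. For most ordinary purposes, 40 iterations (n = 40) are sufficient to obtain the correct result to the 10th decimal place.

The only task left is to determine whether the rotation should be clockwise or counterclockwise at each iteration (choosing the value of $\sigma_i$). This is done by keeping track of how much the angle was rotated at each iteration and subtracting that from the wanted angle. To get closer to the wanted angle $\beta$, the rotation is counterclockwise ($\sigma_i = +1$) if the remaining angle $\beta_i$ is positive; otherwise it is clockwise ($\sigma_i = -1$):

$$\beta_0 = \beta, \qquad \beta_{i+1} = \beta_i - \sigma_i \gamma_i, \qquad \gamma_i = \arctan(2^{-i}).$$

The values of $\gamma_i$ must also be precomputed and stored. But for small angles, $\arctan(2^{-i}) = 2^{-i}$ to within the resolution of the fixed-point representation, reducing table size.

As can be seen in the illustration above, the sine of the angle $\beta$ is the y coordinate of the final vector $v_n$, while the x coordinate is the cosine value.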
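The rotation-mode iteration can also be sketched with integer arithmetic only, which is closer to how CORDIC runs on hardware without a multiplier. The following is a minimal sketch, not a reference implementation: the 16-bit fractional format, the iteration count and the names `FRAC_BITS`, `GAMMA`, `X0` and `cordic_sincos_fixed` are assumptions chosen for illustration, and the scale factor K(n) is folded into the start vector so that no final multiplication is needed.

```python
from math import atan, sqrt

FRAC_BITS = 16          # assumed fixed-point format: 16 fractional bits
ONE = 1 << FRAC_BITS    # fixed-point representation of 1.0
N_ITERS = 16            # assumed iteration count

# Table of arctan(2**-i), scaled to fixed point (precomputed once).
GAMMA = [round(atan(2.0 ** -i) * ONE) for i in range(N_ITERS)]

# K(n) is applied in advance by scaling the start vector (K(n), 0).
K_N = 1.0
for i in range(N_ITERS):
    K_N /= sqrt(1.0 + 2.0 ** (-2 * i))
X0 = round(K_N * ONE)


def cordic_sincos_fixed(beta):
    """Return (cos(beta), sin(beta)) for beta in [-pi/2, pi/2] radians."""
    x, y = X0, 0
    z = round(beta * ONE)  # remaining angle, in fixed point
    for i in range(N_ITERS):
        if z >= 0:  # sigma = +1: rotate counterclockwise
            x, y, z = x - (y >> i), y + (x >> i), z - GAMMA[i]
        else:       # sigma = -1: rotate clockwise
            x, y, z = x + (y >> i), y - (x >> i), z + GAMMA[i]
    return x / ONE, y / ONE
```

Floating-point arithmetic is used here only to build the table and to convert the input and output; the loop itself uses additions, subtractions and arithmetic right shifts.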

Vectoring mode

The rotation-mode algorithm described above can rotate any vector (not only a unit vector aligned along the x axis) by an angle between −90° and +90°. Decisions on the direction of the rotation depend on the remaining angle $\beta_i$ being positive or negative.

The vectoring mode of operation requires a slight modification of the algorithm. It starts with a vector whose x coordinate is positive whereas the y coordinate is arbitrary. Successive rotations have the goal of rotating the vector to the x axis (and therefore reducing the y coordinate to zero). At each step, the value of y determines the direction of the rotation. The final value of the angle accumulator $\beta$ contains the total angle of rotation. The final value of x will be the magnitude of the original vector scaled by K. So, an obvious use of the vectoring mode is the transformation from rectangular to polar coordinates.
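The rectangular-to-polar use of vectoring mode can be sketched as follows. This is an illustrative floating-point sketch under stated assumptions: the function name `cordic_to_polar`, the 32-iteration count and the table name `GAMMA` are hypothetical, multiplications by `p2i` (always a power of two) stand in for bit shifts, and the accumulated gain is removed at the end by multiplying with K(n).

```python
from math import atan, sqrt

N_ITERS = 32  # assumed iteration count
GAMMA = [atan(2.0 ** -i) for i in range(N_ITERS)]  # arctan(2**-i) table

K_N = 1.0
for i in range(N_ITERS):
    K_N /= sqrt(1.0 + 2.0 ** (-2 * i))


def cordic_to_polar(x, y):
    """Return (magnitude, angle in radians) of the vector (x, y); requires x > 0."""
    angle = 0.0
    p2i = 1.0  # 2**-i for the current iteration
    for gamma in GAMMA:
        # The sign of y picks the direction: rotate clockwise while y is positive.
        sigma = -1 if y > 0 else +1
        x, y = x - sigma * y * p2i, y + sigma * x * p2i
        angle -= sigma * gamma  # accumulate the total rotation applied
        p2i /= 2
    return x * K_N, angle
```

For example, `cordic_to_polar(3.0, 4.0)` drives y toward zero and returns a magnitude close to 5 together with an angle close to `atan2(4, 3)`.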

Implementation

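Each CORDIC iteration scales a value by a power of two. For floating-point operands this can be done without a general multiplication: in Java the Math class has a scalb(double x, int scale) method to perform such a shift,[58] C has the ldexp function,[59] and the x86 class of processors have the fscale floating-point operation.[60]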
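Python's standard library exposes the same operation as `math.ldexp`. A minimal sketch follows; the helper name `scale_pow2` is hypothetical and only wraps the library call.

```python
from math import ldexp


def scale_pow2(value, i):
    """Return value * 2**-i by adjusting the binary exponent of value."""
    return ldexp(value, -i)
```

For floating-point inputs, `scale_pow2(y, i)` can take the place of the product `y * 2**-i` in a CORDIC loop.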

Software example (Python)

    from math import atan2, sqrt, sin, cos, radians

    ITERS = 16
    theta_table = [atan2(1, 2**i) for i in range(ITERS)]


    def compute_K(n):
        """
        Compute K(n) for n = ITERS. This could also be
        stored as an explicit constant if ITERS above is fixed.
        """
        k = 1.0
        for i in range(n):
            k *= 1 / sqrt(1 + 2 ** (-2 * i))
        return k


    def CORDIC(alpha, n):
        """Return (cos(alpha), sin(alpha)) using n iterations (n <= ITERS)."""
        K_n = compute_K(n)
        theta = 0.0
        x = 1.0
        y = 0.0
        P2i = 1  # This will be 2**(-i) in the loop below
        for arc_tangent in theta_table[:n]:  # iterate n times, matching K_n
            sigma = +1 if theta < alpha else -1
            theta += sigma * arc_tangent
            x, y = x - sigma * y * P2i, sigma * P2i * x + y
            P2i /= 2
        return x * K_n, y * K_n


    if __name__ == "__main__":
        # Print a table of computed sines and cosines, from -90° to +90°, in steps of 15°,
        # comparing against the available math routines.
        print("  x      sin(x)     diff. sine     cos(x)    diff. cosine")
        for x in range(-90, 91, 15):
            cos_x, sin_x = CORDIC(radians(x), ITERS)
            print(
                f"{x:+05.1f}° {sin_x:+.8f} ({sin_x-sin(radians(x)):+.8f}) {cos_x:+.8f} ({cos_x-cos(radians(x)):+.8f})"
            )

Output

    $ python cordic.py
      x      sin(x)     diff. sine     cos(x)    diff. cosine
    -90.0° -1.00000000 (+0.00000000) -0.00001759 (-0.00001759)
    -75.0° -0.96592181 (+0.00000402) +0.25883404 (+0.00001499)
    -60.0° -0.86601812 (+0.00000729) +0.50001262 (+0.00001262)
    -45.0° -0.70711776 (-0.00001098) +0.70709580 (-0.00001098)
    -30.0° -0.50001262 (-0.00001262) +0.86601812 (-0.00000729)
    -15.0° -0.25883404 (-0.00001499) +0.96592181 (-0.00000402)
    +00.0° +0.00001759 (+0.00001759) +1.00000000 (-0.00000000)
    +15.0° +0.25883404 (+0.00001499) +0.96592181 (-0.00000402)
    +30.0° +0.50001262 (+0.00001262) +0.86601812 (-0.00000729)
    +45.0° +0.70709580 (-0.00001098) +0.70711776 (+0.00001098)
    +60.0° +0.86601812 (-0.00000729) +0.50001262 (+0.00001262)
    +75.0° +0.96592181 (-0.00000402) +0.25883404 (+0.00001499)
    +90.0° +1.00000000 (-0.00000000) -0.00001759 (-0.00001759)

Hardware example

The number of logic gates for the implementation of a CORDIC is roughly comparable to the number required for a multiplier as both require combinations of shifts and additions. The choice for a multiplier-based or CORDIC-based implementation will depend on the context. The multiplication of two complex numbers represented by their real and imaginary components (rectangular coordinates), for example, requires 4 multiplications, but could be realized by a single CORDIC operating on complex numbers represented by their polar coordinates, especially if the magnitude of the numbers is not relevant (multiplying a complex vector with a vector on the unit circle actually amounts to a rotation). CORDICs are often used in circuits for telecommunications such as digital down converters.
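The rotation view of complex multiplication can be sketched in floating point as follows. This is an illustration only: the helper name `rotate`, the 32-iteration count and the restriction to phase angles within ±π/2 are assumptions, and multiplications by `p2i` (always a power of two) stand in for the bit shifts a hardware implementation would use.

```python
from math import atan, sqrt

N_ITERS = 32  # assumed iteration count
GAMMA = [atan(2.0 ** -i) for i in range(N_ITERS)]  # arctan(2**-i) table

K_N = 1.0
for i in range(N_ITERS):
    K_N /= sqrt(1.0 + 2.0 ** (-2 * i))


def rotate(z, phi):
    """Return z * exp(1j * phi) for |phi| <= pi/2 using CORDIC micro-rotations."""
    x, y = z.real, z.imag
    p2i = 1.0  # 2**-i for the current iteration
    for gamma in GAMMA:
        sigma = 1 if phi >= 0 else -1  # rotate toward the remaining angle
        x, y = x - sigma * y * p2i, y + sigma * x * p2i
        phi -= sigma * gamma
        p2i /= 2
    return complex(x * K_N, y * K_N)  # undo the accumulated gain
```

Here `rotate(3 + 4j, 0.5)` approximates the product of `3 + 4j` with the unit-circle number `exp(0.5j)` without forming the four real multiplications of the rectangular form; only the final gain correction multiplies by a constant.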

Double iterations CORDIC

In two of the publications by Vladimir Baykov,[61][62] it was proposed to use the double iterations method for the implementation of the functions arcsine, arccosine, natural logarithm and exponential function, as well as for the calculation of the hyperbolic functions. In the classical CORDIC method the iteration step value changes on every iteration; in the double iterations method each step value is used twice and changes only on every second iteration. Hence the designation for the degree indicator for double iterations: $i = 0, 0, 1, 1, 2, 2, \dots$, whereas with ordinary iterations: $i = 0, 1, 2, \dots$. The double iteration method guarantees the convergence of the method throughout the valid range of argument changes.
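The difference between the two schedules can be sketched as a list of shift exponents. The helper name `shift_schedule` and its parameters are hypothetical, chosen only to illustrate that each step value is used once in the ordinary method and twice in the double iterations method.

```python
def shift_schedule(n_steps, repeats=1, start=0):
    """Return the shift exponents i used by successive iterations.

    repeats=1 gives the ordinary schedule, repeats=2 the double-iteration one.
    """
    return [i for i in range(start, start + n_steps) for _ in range(repeats)]
```

With three step values, `shift_schedule(3)` yields each exponent once, while `shift_schedule(3, repeats=2)` yields each exponent twice in a row.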

The generalization of the CORDIC convergence problems for the arbitrary positional number system with radix $R$ showed[63] that for the functions sine, cosine and arctangent, it is enough to perform $(R - 1)$ iterations for each value of $i$ ($i$ = 0 or 1 to n, where n is the number of digits), i.e. for each digit of the result. For the natural logarithm, exponential, hyperbolic sine, cosine and arctangent, $R$ iterations should be performed for each value of $i$. For the functions arcsine and arccosine, $2(R - 1)$ iterations should be performed for each number digit, i.e. for each value of $i$.[63]

For the inverse hyperbolic sine and inverse hyperbolic cosine functions, the number of iterations will be $2R$ for each $i$, that is, for each result digit.

Related algorithms

CORDIC is part of the class of "shift-and-add" algorithms, as are the logarithm and exponential algorithms derived from Henry Briggs' work. Another shift-and-add algorithm which can be used for computing many elementary functions is the BKM algorithm, which is a generalization of the logarithm and exponential algorithms to the complex plane. For instance, BKM can be used to compute the sine and cosine of a real angle $x$ (in radians) by computing the exponential of $0 + ix$, which is $\cos(x) + i \sin(x)$. The BKM algorithm is slightly more complex than CORDIC, but has the advantage that it does not need a scaling factor (K).

See also

- Methods of computing square roots

- IEEE 754

- Floating-point units

- Digital Circuits/CORDIC in Wikibooks

References

- Volder, Jack E. (1959-03-03). "The CORDIC Computing Technique" (PDF). Proceedings of the Western Joint Computer Conference (WJCC) (presentation). San Francisco, California, USA: National Joint Computer Committee (NJCC): 257–261. Retrieved 2016-01-02.

- Volder, Jack E. (1959-05-25). "The CORDIC Trigonometric Computing Technique" (PDF). IRE Transactions on Electronic Computers. 8 (3). The Institute of Radio Engineers, Inc. (IRE) (published September 1959): 330–334 (reprint: 226–230). EC-8(3):330–334. Archived from the original (PDF) on 2021-06-12. Retrieved 2016-01-01.

- Walther, John Stephen (May 1971). Written at Palo Alto, California, USA. "A unified algorithm for elementary functions" (PDF). Proceedings of the Spring Joint Computer Conference. 38. Atlantic City, New Jersey, USA: Hewlett-Packard Company: 379–385. Archived from the original (PDF) on 2021-06-12. Retrieved 2016-01-01 – via American Federation of Information Processing Societies (AFIPS).

- Walther, John Stephen (June 2000). "The Story of Unified CORDIC". The Journal of VLSI Signal Processing. 25 (2 (Special issue on CORDIC)). Hingham, MA, USA: Kluwer Academic Publishers: 107–112. doi:10.1023/A:1008162721424. ISSN 0922-5773. S2CID 26922158.

- Luo, Yuanyong; Wang, Yuxuan; Ha, Yajun; Wang, Zhongfeng; Chen, Siyuan; Pan, Hongbing (September 2019). "Generalized Hyperbolic CORDIC and Its Logarithmic and Exponential Computation With Arbitrary Fixed Base". IEEE Transactions on Very Large Scale Integration (VLSI) Systems. 27 (9): 2156–2169. doi:10.1109/TVLSI.2019.2919557. S2CID 196171166.

- Luo, Yuanyong; Wang, Yuxuan; Ha, Yajun; Wang, Zhongfeng; Chen, Siyuan; Pan, Hongbing (September 2019). "Corrections to "Generalized Hyperbolic CORDIC and Its Logarithmic and Exponential Computation With Arbitrary Fixed Base"". IEEE Transactions on Very Large Scale Integration (VLSI) Systems. 27 (9): 2222. doi:10.1109/TVLSI.2019.2932174. S2CID 201711001.

- Briggs, Henry (1624). Arithmetica Logarithmica. London.

- Laporte, Jacques (2014) [2005]. "Henry Briggs and the HP 35". Paris, France. Archived from the original on 2015-03-09. Retrieved 2016-01-02.

- Flower, Robert (1771). The Radix. A new way of making logarithms. London: J. Beecroft. Retrieved 2016-01-02.

- Volder, Jack E. (1956-06-15), Binary Computation Algorithms for Coordinate Rotation and Function Generation (internal report), Convair, Aeroelectronics group, IAR-1.148

- Volder, Jack E. (June 2000). "The Birth of CORDIC" (PDF). Journal of VLSI Signal Processing. 25 (2 (Special issue on CORDIC)). Hingham, MA, USA: Kluwer Academic Publishers: 101–105. doi:10.1023/A:1008110704586. ISSN 0922-5773. S2CID 112881. Archived from the original (PDF) on 2016-03-04. Retrieved 2016-01-02.

- Perle, Michael D. (June 1971), "CORDIC Technique Reduces Trigonometric Function Look-Up", Computer Design, Boston, MA, USA: Computer Design Publishing Corp.: 72–78 (NB. Some sources erroneously refer to this as by P. Z. Perle or in Component Design.)

- Schmid, Hermann (1983) [1974]. Decimal Computation (1 (reprint) ed.). Malabar, Florida, USA: Robert E. Krieger Publishing Company. pp. 162, 165–176, 181–193. ISBN 0-89874-318-4. Retrieved 2016-01-03. (NB. At least some batches of this reprint edition were misprints with defective pages 115–146.)

- Daggett, Dan H. (September 1959). "Decimal-Binary Conversions in CORDIC". IRE Transactions on Electronic Computers. 8 (3). The Institute of Radio Engineers, Inc. (IRE): 335–339. doi:10.1109/TEC.1959.5222694. ISSN 0367-9950. EC-8(3):335–339. Retrieved 2016-01-02.

- Advanced Systems Group (1962-08-06), Technical Description of Fix-taking Tie-in Equipment (report), Fort Worth, Texas, USA: General Dynamics, FZE-052

- Schmid, Hermann (1974). Decimal Computation (1 ed.). Binghamton, New York, USA: John Wiley & Sons, Inc. pp. 162, 165–176, 181–193. ISBN 0-471-76180-X. Retrieved 2016-01-03.

So far CORDIC has been known to be implemented only in binary form. But, as will be demonstrated here, the algorithm can be easily modified for a decimal system.* […] *In the meantime it has been learned that Hewlett-Packard and other calculator manufacturers employ the decimal CORDIC techniques in their scientific calculators.

- Leibson, Steven (2010). "The HP 9100 Project: An Exothermic Reaction". Retrieved 2016-01-02.

- Meggitt, John E. (1961-08-29). "Pseudo Division and Pseudo Multiplication Processes" (PDF). IBM Journal of Research and Development. 6 (2). Riverton, New Jersey, USA: IBM Corporation (published April 1962): 210–226, 287. doi:10.1147/rd.62.0210. Archived from the original (PDF) on 2022-02-04. Retrieved 2016-01-09.

John E. Meggitt B.A., 1953; PhD, 1958, Cambridge University. Awarded the First Smith Prize at Cambridge in 1955 and elected a Research Fellowship at Emmanuel College. […] Joined IBM British Laboratory at Hursley, Winchester in 1958. Interests include error-correcting codes and small microprogrammed computers.

- Cochran, David S. (2010-11-19). "A Quarter Century at HP" (interview typescript). Computer History Museum / HP Memories. 7: Scientific Calculators, circa 1966. CHM X5992.2011. Retrieved 2016-01-02.

I even flew down to Southern California to talk with Jack Volder who had implemented the transcendental functions in the Athena machine and talked to him for about an hour. He referred me to the original papers by Meggitt where he'd gotten the pseudo division, pseudo multiplication generalized functions. […] I did quite a bit of literary research leading to some very interesting discoveries. […] I found a treatise from 1624 by Henry Briggs discussing the calculation of common logarithms, interestingly used the same pseudo-division/pseudo-multiplication method that MacMillan and Volder used in Athena. […] We had purchased a LOCI-2 from Wang Labs and recognized that Wang Labs LOCI II used the same algorithm to do square root as well as log and exponential. After the introduction of the 9100 our legal department got a letter from Wang saying that we had infringed on their patent. And I just sent a note back with the Briggs reference in Latin and it said, "It looks like prior art to me." We never heard another word.

- Cochran, David S. (1966-03-14), About utilizing CORDIC for computing transcendental functions in BCD (private communication with Jack E. Volder)

- Osborne, Thomas E. (2010) [1994]. "Tom Osborne's Story in His Own Words". Retrieved 2016-01-01.

- Leibson, Steven (2010). "The HP 9100: The Initial Journey". Retrieved 2016-01-02.

- Cochran, David S. (September 1968). "Internal Programming of the 9100A Calculator". Hewlett-Packard Journal. Palo Alto, California, USA: Hewlett-Packard: 14–16. Retrieved 2016-01-02.

- Extend your Personal Computing Power with the new LOCI-1 Logarithmic Computing Instrument, Wang Laboratories, Inc., 1964, pp. 2–3, retrieved 2016-01-03

- Bensene, Rick (2013-08-31) [1997]. "Wang LOCI-2". Old Calculator Web Museum. Beavercreek, Oregon City, Oregon, USA. Retrieved 2016-01-03.

- "Wang LOCI Service Manual" (PDF). Wang Laboratories, Inc. 1967. L55-67. Retrieved 2018-09-14.

- Bensene, Rick (2004-10-23) [1997]. "Wang Model 360SE Calculator System". Old Calculator Web Museum. Beavercreek, Oregon City, Oregon, USA. Retrieved 2016-01-03.

- Cochran, David S. (June 2010). "The HP-35 Design, A Case Study in Innovation". HP Memory Project. Retrieved 2016-01-02.

During the development of the desktop HP 9100 calculator I was responsible for developing the algorithms to fit the architecture suggested by Tom Osborne. Although the suggested methodology for the algorithms came from Malcolm McMillan I did considerable amount of reading to understand the core calculations […] Although Wang Laboratories had used similar methods of calculation, my study found prior art dated 1624 that read on their patents. […] This research enabled the adaption of the transcendental functions through the use of the algorithms to match the needs of the customer within the constraints of the hardware. This proved invaluable during the development of the HP-35, […] Power series, polynomial expansions, continued fractions, and Chebyshev polynomials were all considered for the transcendental functions. All were too slow because of the number of multiplications and divisions required. The generalized algorithm that best suited the requirements of speed and programming efficiency for the HP-35 was an iterative pseudo-division and pseudo-multiplication method first described in 1624 by Henry Briggs in 'Arithmetica Logarithmica' and later by Volder and Meggitt. This is the same type of algorithm that was used in previous HP desktop calculators. […] The complexity of the algorithms made multilevel programming a necessity. This meant the calculator had to have subroutine capability, […] To generate a transcendental function such as Arc-Hyperbolic-Tan required several levels of subroutines. […] Chris Clare later documented this as Algorithmic State Machine (ASM) methodology. Even the simple Sine or Cosine used the Tangent routine, and then calculated the Sine from trigonometric identities. These arduous manipulations were necessary to minimize the number of unique programs and program steps […] The arithmetic instruction set was designed specifically for a decimal transcendental-function calculator. 
The basic arithmetic operations are performed by a 10's complement adder-subtractor which has data paths to three of the registers that are used as working storage.

- US patent 3402285A, Wang, An, "Calculating apparatus", published 1968-09-17, issued 1968-09-17, assigned to Wang Laboratories

- DE patent 1499281B1, Wang, An, "Rechenmaschine fuer logarithmische Rechnungen", published 1970-05-06, issued 1970-05-06, assigned to Wang Laboratories

- Swartzlander, Jr., Earl E. (1990). Computer Arithmetic. Vol. 1 (2 ed.). Los Alamitos: IEEE Computer Society Press. ISBN 9780818689314. 0818689315. Retrieved 2016-01-02.

- Petrocelli, Orlando R., ed. (1972), The Best Computer Papers of 1971, Auerbach Publishers, p. 71, ISBN 0877691274, retrieved 2016-01-02

- Cochran, David S. (June 1972). "Algorithms and Accuracy in the HP-35" (PDF). Hewlett-Packard Journal. 23 (10): 10–11. Archived from the original (PDF) on 2013-10-04. Retrieved 2016-01-02.

- Laporte, Jacques (2005-12-06). "HP35 trigonometric algorithm". Paris, France. Archived from the original on 2015-03-09. Retrieved 2016-01-02.

- Laporte, Jacques (February 2005) [1981]. "The secret of the algorithms". L'Ordinateur Individuel (24). Paris, France. Archived from the original on 2016-08-18. Retrieved 2016-01-02.

- Laporte, Jacques (February 2012) [2006]. "Digit by digit methods". Paris, France. Archived from the original on 2016-08-18. Retrieved 2016-01-02.

- Laporte, Jacques (February 2012) [2007]. "HP 35 Logarithm Algorithm". Paris, France. Archived from the original on 2016-08-18. Retrieved 2016-01-07.

- Wang, Yuxuan; Luo, Yuanyong; Wang, Zhongfeng; Shen, Qinghong; Pan, Hongbing (January 2020). "GH CORDIC-Based Architecture for Computing Nth Root of Single-Precision Floating-Point Number". IEEE Transactions on Very Large Scale Integration (VLSI) Systems. 28 (4): 864–875. doi:10.1109/TVLSI.2019.2959847. S2CID 212975618.

- Mopuri, Suresh; Acharyya, Amit (September 2019). "Low Complexity Generic VLSI Architecture Design Methodology for Nth Root and Nth Power Computations". IEEE Transactions on Circuits and Systems I: Regular Papers. 66 (12): 4673–4686. doi:10.1109/TCSI.2019.2939720. S2CID 203992880.

- Vachhani, Leena (November 2019). "CORDIC as a Switched Nonlinear System". Circuits, Systems and Signal Processing. 39 (6): 3234–3249. doi:10.1007/s00034-019-01295-8. S2CID 209904108.

- Schmid, Hermann; Bogacki, Anthony (1973-02-20). "Use Decimal CORDIC for Generation of Many Transcendental Functions". EDN: 64–73.

- Franke, Richard (1973-05-08). An Analysis of Algorithms for Hardware Evaluation of Elementary Functions (PDF). Monterey, California, USA: Department of the Navy, Naval Postgraduate School. NPS-53FE73051A. Retrieved 2016-01-03.

- Muller, Jean-Michel (2006). Elementary Functions: Algorithms and Implementation (2 ed.). Boston: Birkhäuser. p. 134. ISBN 978-0-8176-4372-0. LCCN 2005048094. Retrieved 2015-12-01.

- Palmer, John F.; Morse, Stephen Paul (1984). The 8087 Primer (1 ed.). John Wiley & Sons Australia, Limited. ISBN 0471875694. 9780471875697. Retrieved 2016-01-02.

- Glass, L. Brent (January 1990). "Math Coprocessors: A look at what they do, and how they do it". Byte. 15 (1): 337–348. ISSN 0360-5280.

- Jarvis, Pitts (1990-10-01). "Implementing CORDIC algorithms – A single compact routine for computing transcendental functions". Dr. Dobb's Journal: 152–156. Archived from the original on 2016-03-04. Retrieved 2016-01-02.

- Yuen, A. K. (1988). "Intel's Floating-Point Processors". Electro/88 Conference Record: 48/5/1–7.

- Meher, Pramod Kumar; Valls, Javier; Juang, Tso-Bing; Sridharan, K.; Maharatna, Koushik (2008-08-22). "50 Years of CORDIC: Algorithms, Architectures and Applications" (PDF). IEEE Transactions on Circuits and Systems I: Regular Papers. 56 (9) (published 2009-09-09): 1893–1907. doi:10.1109/TCSI.2009.2025803. S2CID 5465045.

- ^ Meher, Pramod Kumar; Park, Sang Yoon (February 2013). "CORDIC Designs for Fixed Angle of Rotation". IEEE Transactions on Very Large Scale Integration (VLSI) Systems. 21 (2): 217–228. doi:10.1109/TVLSI.2012.2187080. S2CID 7059383.

- ^ Heffron, W. G.; LaPiana, F. (1970-12-11). "Technical Memorandum 70-2014-8: The Navigation System of the Lunar Roving Vehicle" (PDF). NASA. Washington, D.C., USA: Bellcomm. p. 14.

- ^ Smith, Earnest C.; Mastin, William C. (November 1973). "Technical Note D-7469: Lunar Roving Vehicle Navigation System Performance Review" (PDF). NASA. Huntsville, Alabama, USA: Marshall Space Flight Center. p. 17.

- ^ Shirriff, Ken (May 2020). "Extracting ROM constants from the 8087 math coprocessor's die". righto.com. Retrieved 2020-09-03.

The ROM contains 16 arctangent values, the arctans of 2^−n. It also contains 14 log values, the base-2 logs of (1+2^−n). These may seem like unusual values, but they are used in an efficient algorithm called CORDIC, which was invented in 1958.

- ^ "Getting started with the CORDIC accelerator using STM32CubeG4 MCU Package" (PDF). STMicroelectronics. Retrieved 2021-01-01.

- ^ "CMSIS/CMSIS/DSP_Lib/Source/ControllerFunctions/arm_sin_cos_f32.c". GitHub. ARM. Retrieved 2021-01-01.

- ^ "Error bounds of Taylor Expansion for Sine". Math Stack Exchange. Retrieved 2021-01-01.

- ^ Andraka, Ray (1998). "A survey of CORDIC algorithms for FPGA based computers" (PDF). ACM. North Kingstown, RI, USA: Andraka Consulting Group, Inc. 0-89791-978-5/98/01. Retrieved 2016-05-08.

- ^ "Class Math". Java Platform Standard (8 ed.). Oracle Corporation. 2018 [1993]. Archived from the original on 2018-08-06. Retrieved 2018-08-06.

- ^ "ldexp, ldexpf, ldexpl". cppreference.com. 2015-06-11. Archived from the original on 2018-08-06. Retrieved 2018-08-06.

- ^ "Section 8.3.9 Logarithmic, Exponential, and Scale". Intel 64 and IA-32 Architectures Software Developer's Manual Volume 1: Basic Architecture (PDF). Intel Corporation. September 2016. pp. 8–22.

- ^ Baykov, Vladimir. "The outline (autoreferat) of my PhD, published in 1972". baykov.de. Retrieved 2023-05-03.

- ^ Baykov, Vladimir. "Hardware implementation of elementary functions in computers". baykov.de. Retrieved 2023-05-03.

- ^ a b Baykov, Vladimir. "Special-purpose processors: iterative algorithms and structures". baykov.de. Retrieved 2023-05-03.

Further reading

- Parini, Joseph A. (1966-09-05). "DIVIC Gives Answer to Complex Navigation Questions". Electronics: 105–111. ISSN 0013-5070. (NB. DIVIC stands for DIgital Variable Increments Computer. Some sources erroneously refer to this as by J. M. Parini.)

- Anderson, Stanley F.; Earle, John G.; Goldschmidt, Robert Elliott; Powers, Don M. (1965-11-01). "The IBM System/360 Model 91: Floating-Point Execution Unit" (PDF). IBM Journal of Research and Development. 11 (1). Riverton, New Jersey, USA (published January 1967): 34–53. doi:10.1147/rd.111.0034. Archived from the original (PDF) on 2016-03-05. Retrieved 2016-01-02.

- Liccardo, Michael A. (September 1968). An Interconnect Processor with Emphasis on CORDIC Mode Operation (MSc thesis). Berkeley, CA, USA: University of California, Berkeley, Department of Electrical Engineering. OCLC 500565168.

- US patent 3576983A, Cochran, David S., "Digital calculator system for computing square roots", published 1971-05-04, issued 1971-05-04, assigned to Hewlett-Packard Co. ([14])

- Chen, Tien Chi (July 1972). "Automatic Computation of Exponentials, Logarithms, Ratios, and Square Roots" (PDF). IBM Journal of Research and Development. 16 (4): 380–388. doi:10.1147/rd.164.0380. ISSN 0018-8646. Archived from the original (PDF) on 2016-08-12. Retrieved 2016-01-02.

- Egbert, William E. (May 1977). "Personal Calculator Algorithms I: Square Roots" (PDF). Hewlett-Packard Journal. 28 (9). Palo Alto, California, USA: Hewlett-Packard: 22–24. Archived from the original (PDF) on 2015-12-18. Retrieved 2016-01-02. ([15])

- Egbert, William E. (June 1977). "Personal Calculator Algorithms II: Trigonometric Functions" (PDF). Hewlett-Packard Journal. 28 (10). Palo Alto, California, USA: Hewlett-Packard: 17–20. Archived from the original (PDF) on 2016-03-04. Retrieved 2016-01-02. ([16])

- Egbert, William E. (November 1977). "Personal Calculator Algorithms III: Inverse Trigonometric Functions" (PDF). Hewlett-Packard Journal. 29 (3). Palo Alto, California, USA: Hewlett-Packard: 22–23. Archived from the original (PDF) on 2016-03-04. Retrieved 2016-01-02. ([17])

- Egbert, William E. (April 1978). "Personal Calculator Algorithms IV: Logarithmic Functions" (PDF). Hewlett-Packard Journal. 29 (8). Palo Alto, California, USA: Hewlett-Packard: 29–32. Archived from the original (PDF) on 2016-03-04. Retrieved 2016-01-02. ([18])

- Senzig, Don (1975). "Calculator Algorithms". IEEE Compcon Reader Digest. IEEE: 139–141. IEEE Catalog No. 75 CH 0920-9C.

- Baykov, Vladimir D. (1972), Вопросы исследования вычисления элементарных функций по методу «цифра за цифрой» [Problems of elementary functions evaluation based on digit by digit (CORDIC) technique] (PhD thesis) (in Russian), Leningrad State University of Electrical Engineering

- Baykov, Vladimir D.; Smolov, Vladimir B. (1975). Apparaturnaja realizatsija elementarnikh funktsij v CVM Аппаратурная реализация элементарных функций в ЦВМ [Hardware implementation of elementary functions in computers] (in Russian). Leningrad State University. Archived from the original on 2019-03-02. Retrieved 2019-03-02.

- Baykov, Vladimir D.; Seljutin, S. A. (1982). Вычисление элементарных функций в ЭКВМ [Elementary functions evaluation in microcalculators] (in Russian). Moscow: Radio i svjaz (Радио и связь).

- Baykov, Vladimir D.; Smolov, Vladimir B. (1985). Специализированные процессоры: итерационные алгоритмы и структуры [Special-purpose processors: iterative algorithms and structures] (in Russian). Moscow: Radio i svjaz (Радио и связь).

- Coppens, Thomas, ed. (January 1980). "CORDIC constants in TI 58/59 ROM". Texas Instruments Software Exchange Newsletter. 2 (2). Kapellen, Belgium: TISOFT.

- Coppens, Thomas, ed. (April–June 1980). "Natural logarithm computation scheme / e^x computing scheme / 1/x computing scheme". Texas Instruments Software Exchange Newsletter. 2 (3). Kapellen, Belgium: TISOFT. (about CORDIC in TI-58/TI-59)

- TI Graphic Products Team (1995) [1993]. "Transcendental function algorithms". Dallas, Texas, USA: Texas Instruments, Consumer Products. Archived from the original on 2016-03-17. Retrieved 2019-03-02.

- Jorke, Günter; Lampe, Bernhard; Wengel, Norbert (1989). Arithmetische Algorithmen der Mikrorechentechnik (in German) (1 ed.). Berlin, Germany: VEB Verlag Technik. pp. 219, 261, 271–296. ISBN 3341005153. EAN 9783341005156. MPN 5539165. License 201.370/4/89. Retrieved 2015-12-01.

- Zechmeister, M. (2021). "Solving Kepler's equation with CORDIC double iterations". Monthly Notices of the Royal Astronomical Society. 500 (1). Göttingen, Germany: Institut für Astrophysik, Georg-August-Universität: 109–117. arXiv:2008.02894. doi:10.1093/mnras/staa2441.

- Frerking, Marvin E. (1994). Digital Signal Processing in Communication Systems (1 ed.).

- Kantabutra, Vitit (1996). "On hardware for computing exponential and trigonometric functions". IEEE Transactions on Computers. 45 (3): 328–339. doi:10.1109/12.485571.

- Johansson, Kenny (2008). "6.5 Sine and Cosine Functions". Low Power and Low Complexity Shift-and-Add Based Computations (PDF) (Dissertation thesis). Linköping Studies in Science and Technology (1 ed.). Linköping, Sweden: Department of Electrical Engineering, Linköping University. pp. 244–250. ISBN 978-91-7393-836-5. ISSN 0345-7524. No. 1201. Archived (PDF) from the original on 2017-08-13. Retrieved 2021-08-23. (x+268 pages)

- Banerjee, Ayan (2001). "FPGA realization of a CORDIC based FFT processor for biomedical signal processing". Microprocessors and Microsystems. 25 (3). Kharagpur, West Bengal, India: 131–142. doi:10.1016/S0141-9331(01)00106-5.

- Kahan, William Morton (2002-05-20). "Pseudo-Division Algorithms for Floating-Point Logarithms and Exponentials" (PDF). Berkeley, CA, USA: University of California. Archived from the original (PDF) on 2015-12-25. Retrieved 2016-01-15.

- Cockrum, Chris K. (Fall 2008). "Implementation of a CORDIC Algorithm in a Digital Down-Converter" (PDF).

- Lakshmi, Boppana; Dhar, Anindya Sundar (2009-10-06). "CORDIC Architectures: A Survey". VLSI Design. 2010. Kharagpur, West Bengal, India: Department of Electronics and Electrical Communication Engineering, Indian Institute of Technology (published 2010-10-10): 1–19. doi:10.1155/2010/794891. 794891.

- Savard, John J. G. (2018) [2006]. "Advanced Arithmetic Techniques". quadibloc. Archived from the original on 2018-07-03. Retrieved 2018-07-16.

External links

- Wang, Shaoyun (July 2011), CORDIC Bibliography Site, archived from the original on 2000-10-17 – via Chandra Department of Electrical and Computer Engineering, Cockrell School of Engineering, The University of Texas at Austin

- Soft CORDIC IP (verilog HDL code)

- CORDIC Bibliography Site

- BASIC Stamp, CORDIC math implementation

- CORDIC implementation in verilog

- CORDIC Vectoring with Arbitrary Target Value

- Python CORDIC implementation

- Simple C code for fixed-point CORDIC

- Tutorial and MATLAB Implementation – Using CORDIC to Estimate Phase of a Complex Number

- Descriptions of hardware CORDICs in Arx with testbenches in C++ and VHDL

- An Introduction to the CORDIC algorithm

- Implementation of the CORDIC Algorithm in a Digital Down-Converter

- Implementation of the CORDIC Algorithm: fixed point C code for trigonometric and hyperbolic functions, C code for test and performance verification