Causality

Causality is an influence by which one event, process, state, or object (a cause) contributes to the production of another event, process, state, or object (an effect) where the cause is partly responsible for the effect, and the effect is partly dependent on the cause. In general, a process has many causes,[1] which are also said to be causal factors for it, and all lie in its past. An effect can in turn be a cause of, or causal factor for, many other effects, which all lie in its future. Some writers have held that causality is metaphysically prior to notions of time and space.[2][3][4]

Causality is an abstraction that indicates how the world progresses.[5] As such it is a basic concept; it is more apt to be an explanation of other concepts of progression than something to be explained by other more fundamental concepts. The concept is like those of agency and efficacy. For this reason, a leap of intuition may be needed to grasp it.[6][7] Accordingly, causality is implicit in the logic and structure of ordinary language,[8] as well as explicit in the language of scientific causal notation.

In English studies of Aristotelian philosophy, the word "cause" is used as a specialized technical term, the translation of Aristotle's term αἰτία, by which Aristotle meant "explanation" or "answer to a 'why' question". Aristotle categorized the four types of answers as material, formal, efficient, and final "causes". In this case, the "cause" is the explanans for the explanandum, and failure to recognize that different kinds of "cause" are being considered can lead to futile debate. Of Aristotle's four explanatory modes, the one nearest to the concerns of the present article is the "efficient" one.

David Hume, as part of his opposition to rationalism, argued that pure reason alone cannot prove the reality of efficient causality; instead, he appealed to custom and mental habit, observing that all human knowledge derives solely from experience.

The topic of causality remains a staple in contemporary philosophy.

Concept

Metaphysics

The nature of cause and effect is a concern of the subject known as metaphysics. Kant thought that time and space were notions prior to human understanding of the progress or evolution of the world, and he also recognized the priority of causality. But he did not have the understanding that came with knowledge of Minkowski geometry and the special theory of relativity, that the notion of causality can be used as a prior foundation from which to construct notions of time and space.[2][3][4]

Ontology

A general metaphysical question about cause and effect is: "what kind of entity can be a cause, and what kind of entity can be an effect?"

One viewpoint on this question is that cause and effect are of one and the same kind of entity, causality being an asymmetric relation between them. That is to say, it would make good sense grammatically to say either "A is the cause and B the effect" or "B is the cause and A the effect", though only one of those two can be actually true. In this view, one opinion, proposed as a metaphysical principle in process philosophy, is that every cause and every effect is respectively some process, event, becoming, or happening.[3] An example is 'his tripping over the step was the cause, and his breaking his ankle the effect'. Another view is that causes and effects are 'states of affairs', with the exact natures of those entities being more loosely defined than in process philosophy.[9]

Another viewpoint on this question is the more classical one, that a cause and its effect can be of different kinds of entity. For example, in Aristotle's efficient causal explanation, an action can be a cause while an enduring object is its effect. For example, the generative actions of his parents can be regarded as the efficient cause, with Socrates being the effect, Socrates being regarded as an enduring object, in philosophical tradition called a 'substance', as distinct from an action.

Epistemology

Since causality is a subtle metaphysical notion, considerable intellectual effort, along with exhibition of evidence, is needed to establish knowledge of it in particular empirical circumstances. According to David Hume, the human mind is unable to perceive causal relations directly. On this ground, Hume distinguished between the regularity view of causality and the counterfactual notion.[10] According to the counterfactual view, X causes Y if and only if, without X, Y would not exist. Hume interpreted the latter as an ontological view, i.e., as a description of the nature of causality, but, given the limitations of the human mind, advised using the former (stating, roughly, that X causes Y if and only if the two events are spatiotemporally conjoined, and X precedes Y) as an epistemic definition of causality. An epistemic concept of causality is needed in order to distinguish between causal and noncausal relations. The contemporary philosophical literature on causality can be divided into five broad approaches. These are the regularity, probabilistic, counterfactual, mechanistic, and manipulationist views, of which the regularity and counterfactual views were introduced above. The five approaches can be shown to be reductive, i.e., they define causality in terms of relations of other types.[11] According to this reading, they define causality in terms of, respectively, empirical regularities (constant conjunctions of events), changes in conditional probabilities, counterfactual conditions, mechanisms underlying causal relations, and invariance under intervention.

Geometrical significance

Causality has the properties of antecedence and contiguity.[12][13] These are topological, and are ingredients for space-time geometry. As developed by Alfred Robb, these properties allow the derivation of the notions of time and space.[14] Max Jammer writes "the Einstein postulate ... opens the way to a straightforward construction of the causal topology ... of Minkowski space."[15] Causal efficacy propagates no faster than light.[16]

Thus, the notion of causality is metaphysically prior to the notions of time and space. In practical terms, this is because use of the relation of causality is necessary for the interpretation of empirical experiments. Interpretation of experiments is needed to establish the physical and geometrical notions of time and space.

Volition

The deterministic world-view holds that the history of the universe can be exhaustively represented as a progression of events following one after the other as cause and effect.[13] Incompatibilism holds that determinism is incompatible with free will, so if determinism is true, "free will" does not exist. Compatibilism, on the other hand, holds that determinism is compatible with, or even necessary for, free will.[17]

Necessary and sufficient causes

Causes may sometimes be distinguished into two types: necessary and sufficient.[18] A third type of causation, which requires neither necessity nor sufficiency, but which contributes to the effect, is called a "contributory cause".

- Necessary causes

- If x is a necessary cause of y, then the presence of y necessarily implies the prior occurrence of x. The presence of x, however, does not imply that y will occur.[19]

- Sufficient causes

- If x is a sufficient cause of y, then the presence of x necessarily implies the subsequent occurrence of y. However, another cause z may alternatively cause y. Thus the presence of y does not imply the prior occurrence of x.[19]

- Contributory causes

- For some specific effect, in a singular case, a factor that is a contributory cause is one among several co-occurrent causes. It is implicit that all of them are contributory. For the specific effect, in general, there is no implication that a contributory cause is necessary, though it may be so. In general, a factor that is a contributory cause is not sufficient, because it is by definition accompanied by other causes, which would not count as causes if it were sufficient. For the specific effect, a factor that is on some occasions a contributory cause might on some other occasions be sufficient, but on those other occasions it would not be merely contributory.[20]
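The definitions of necessary and sufficient causes above can be checked mechanically against a table of observed cases. The following is a minimal sketch, assuming each case is recorded as a pair of booleans (whether x occurred, whether y occurred). The function names and the oxygen-and-fire data are illustrative assumptions, and temporal order is ignored.

```python
def is_necessary(cases):
    """x is necessary for y if y never occurs without x."""
    return all(x for x, y in cases if y)


def is_sufficient(cases):
    """x is sufficient for y if x never occurs without y."""
    return all(y for x, y in cases if x)


# Hypothetical observations: (oxygen present, fire occurred).
oxygen_and_fire = [(True, True), (True, False), (False, False)]

print(is_necessary(oxygen_and_fire))   # True: no fire was seen without oxygen
print(is_sufficient(oxygen_and_fire))  # False: oxygen was seen without fire
```

A finite table can refute necessity or sufficiency, but it can only support them provisionally, since an unobserved case might break the pattern.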

J. L. Mackie argues that usual talk of "cause" in fact refers to INUS conditions (insufficient but non-redundant parts of a condition which is itself unnecessary but sufficient for the occurrence of the effect).[21] An example is a short circuit as a cause for a house burning down. Consider the collection of events: the short circuit, the proximity of flammable material, and the absence of firefighters. Together these are unnecessary but sufficient to the house's burning down (since many other collections of events certainly could have led to the house burning down, for example shooting the house with a flamethrower in the presence of oxygen and so forth). Within this collection, the short circuit is an insufficient (since the short circuit by itself would not have caused the fire) but non-redundant (because the fire would not have happened without it, everything else being equal) part of a condition which is itself unnecessary but sufficient for the occurrence of the effect. So, the short circuit is an INUS condition for the occurrence of the house burning down.
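Mackie's four-part condition can be tested by brute force on a small Boolean model of the house-fire example. This is only a sketch: the rule in `house_burns` is an assumed toy law (the electrical scenario or a flamethrower each suffice), and all names are hypothetical.

```python
from itertools import product

FACTORS = ("short_circuit", "flammable_material", "no_firefighters", "flamethrower")


def house_burns(short_circuit, flammable_material, no_firefighters, flamethrower):
    # Assumed toy law: either the full electrical scenario or a flamethrower suffices.
    return (short_circuit and flammable_material and no_firefighters) or flamethrower


def worlds():
    """Enumerate every assignment of True/False to the factors."""
    for values in product([False, True], repeat=len(FACTORS)):
        yield dict(zip(FACTORS, values))


def is_sufficient(condition):
    """The effect occurs in every world where all factors in `condition` hold."""
    return all(house_burns(**w) for w in worlds() if all(w[f] for f in condition))


def is_necessary(condition):
    """The effect never occurs unless all factors in `condition` hold."""
    return all(all(w[f] for f in condition) for w in worlds() if house_burns(**w))


def is_inus(factor, condition):
    rest = set(condition) - {factor}
    return (
        not is_sufficient({factor})      # Insufficient on its own
        and not is_sufficient(rest)      # Non-redundant within the condition
        and not is_necessary(condition)  # the condition is Unnecessary
        and is_sufficient(condition)     # but Sufficient
    )


electrical = {"short_circuit", "flammable_material", "no_firefighters"}
print(is_inus("short_circuit", electrical))        # True
print(is_inus("flamethrower", {"flamethrower"}))   # False: sufficient by itself
```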

Contrasted with conditionals

Conditional statements are not statements of causality. An important distinction is that statements of causality require the antecedent to precede or coincide with the consequent in time, whereas conditional statements do not require this temporal order. Confusion commonly arises since many different statements in English may be presented using "If ..., then ..." form (and, arguably, because this form is far more commonly used to make a statement of causality). The two types of statements are distinct, however.

For example, all of the following statements are true when interpreting "If ..., then ..." as the material conditional:

- If Barack Obama is president of the United States in 2011, then Germany is in Europe.

- If George Washington is president of the United States in 2011, then ⟨arbitrary statement⟩.

The first is true since both the antecedent and the consequent are true. The second is true in sentential logic and indeterminate in natural language, regardless of the consequent statement that follows, because the antecedent is false.
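The truth-functional reading can be made concrete in a few lines. The sketch below encodes the material conditional as "not antecedent, or consequent"; the variable names are illustrative, and the truth values assigned to them are the ones stated in the text.

```python
def material_conditional(antecedent, consequent):
    """Material conditional: false only when the antecedent is true and the consequent false."""
    return (not antecedent) or consequent


obama_president_2011 = True
germany_in_europe = True
washington_president_2011 = False

# True antecedent, true consequent.
print(material_conditional(obama_president_2011, germany_in_europe))  # True

# False antecedent: the conditional is true whatever the consequent is.
for arbitrary_statement in (True, False):
    print(material_conditional(washington_president_2011, arbitrary_statement))  # True
```

Nothing in the function refers to time or to any connection between the two statements, which is why a true material conditional carries no causal information.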

The ordinary indicative conditional has somewhat more structure than the material conditional. For instance, although the first comes closest, neither of the preceding two statements seems true on an ordinary indicative reading. But the sentence:

- If Shakespeare of Stratford-on-Avon did not write Macbeth, then someone else did.

intuitively seems to be true, even though there is no straightforward causal relation in this hypothetical situation between Shakespeare's not writing Macbeth and someone else's actually writing it.

Another sort of conditional, the counterfactual conditional, has a stronger connection with causality, yet even counterfactual statements are not all examples of causality. Consider the following two statements:

- If A were a triangle, then A would have three sides.

- If switch S were thrown, then bulb B would light.

In the first case, it would be incorrect to say that A's being a triangle caused it to have three sides, since the relationship between triangularity and three-sidedness is that of definition. The property of having three sides actually determines A's state as a triangle. Nonetheless, even when interpreted counterfactually, the first statement is true. An early version of Aristotle's "four cause" theory is described as recognizing "essential cause". In this version of the theory, that the closed polygon has three sides is said to be the "essential cause" of its being a triangle.[22] This use of the word 'cause' is now obsolete. Nevertheless, it is within the scope of ordinary language to say that it is essential to a triangle that it has three sides.

A full grasp of the concept of conditionals is important to understanding the literature on causality. In everyday language, loose conditional statements are often made, and they need to be interpreted carefully.

Questionable cause

Fallacies of questionable cause, also known as causal fallacies, non-causa pro causa (Latin for "non-cause for cause"), or false cause, are informal fallacies where a cause is incorrectly identified.

Theories

Counterfactual theories

Counterfactual theories define causation in terms of a counterfactual relation, and can often be seen as "floating" their account of causality on top of an account of the logic of counterfactual conditionals. Counterfactual theories reduce facts about causation to facts about what would have been true under counterfactual circumstances.[23] The idea is that causal relations can be framed in the form of "Had C not occurred, E would not have occurred." This approach can be traced back to David Hume's definition of the causal relation as that "where, if the first object had not been, the second never had existed."[24] More full-fledged analysis of causation in terms of counterfactual conditionals only came in the 20th century after development of the possible world semantics for the evaluation of counterfactual conditionals. In his 1973 paper "Causation," David Lewis proposed the following definition of the notion of causal dependence:[25]

- An event E causally depends on C if, and only if, (i) if C had occurred, then E would have occurred, and (ii) if C had not occurred, then E would not have occurred.

Causation is then analyzed in terms of counterfactual dependence. That is, C causes E if and only if there exists a sequence of events C, D1, D2, ... Dk, E such that each event in the sequence counterfactually depends on the previous. This chain of causal dependence may be called a mechanism.
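On this analysis, causation is the existence of a chain of stepwise dependence, which amounts to reachability in a directed graph. The sketch below assumes the direct dependence facts are already given as a mapping from each event to the events that causally depend on it; the event names and the function `causes` are hypothetical.

```python
def causes(dependents, c, e):
    """Return True if there is a chain c, D1, ..., Dk, e in which
    each event causally depends on the previous one."""
    frontier, seen = [c], {c}
    while frontier:
        event = frontier.pop()
        for nxt in dependents.get(event, ()):
            if nxt == e:
                return True
            if nxt not in seen:
                seen.add(nxt)
                frontier.append(nxt)
    return False


# Hypothetical dependence facts: each key is depended on by the listed events.
dependents = {
    "spark": ["fuse_burning"],
    "fuse_burning": ["explosion"],
}

print(causes(dependents, "spark", "explosion"))  # True, via fuse_burning
print(causes(dependents, "explosion", "spark"))  # False
```

The search says nothing about how the individual dependence facts are established; that is the job of the counterfactual semantics.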

Note that the analysis does not purport to explain how we make causal judgements or how we reason about causation, but rather to give a metaphysical account of what it is for there to be a causal relation between some pair of events. If correct, the analysis has the power to explain certain features of causation. Knowing that causation is a matter of counterfactual dependence, we may reflect on the nature of counterfactual dependence to account for the nature of causation. For example, in his paper "Counterfactual Dependence and Time's Arrow," Lewis sought to account for the time-directedness of counterfactual dependence in terms of the semantics of the counterfactual conditional.[26] If correct, this theory can serve to explain a fundamental part of our experience, which is that we can causally affect the future but not the past.

One challenge for the counterfactual account is overdetermination, whereby an effect has multiple causes. For instance, suppose Alice and Bob both throw bricks at a window and it breaks. If Alice hadn't thrown the brick, then it still would have broken, suggesting that Alice wasn't a cause; however, intuitively, Alice did cause the window to break. The Halpern-Pearl definitions of causality take account of examples like these.[27] The first and third Halpern-Pearl conditions are easiest to understand: AC1 requires that Alice threw the brick and the window broke in the actual world. AC3 requires that Alice throwing the brick is a minimal cause (cf. blowing a kiss and throwing a brick). Taking the "updated" version of AC2(a), the basic idea is that we have to find a set of variables and settings thereof such that preventing Alice from throwing a brick also stops the window from breaking. One way to do this is to stop Bob from throwing the brick. Finally, for AC2(b), we have to hold things as per AC2(a) and show that Alice throwing the brick breaks the window. (The full definition is a little more involved, involving checking all subsets of variables.)
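The brick example can be written as a two-variable structural model to show why the plain counterfactual test fails and how holding another variable at a non-actual value rescues it. This is a simplified illustration of the idea behind AC2 only, not the full Halpern-Pearl definition; the function names are hypothetical.

```python
def window_breaks(alice_throws, bob_throws):
    # Structural equation: either brick is enough to break the window.
    return alice_throws or bob_throws


ACTUAL = {"alice_throws": True, "bob_throws": True}


def depends_on(model, setting, var):
    """Does flipping `var` alone, within `setting`, change the outcome?"""
    flipped = dict(setting, **{var: not setting[var]})
    return model(**setting) != model(**flipped)


def depends_on_under_contingency(model, actual, var, contingency):
    """Same test, but with some other variables held at chosen
    (possibly non-actual) values first."""
    return depends_on(model, dict(actual, **contingency), var)


# Plain but-for test: the window breaks with or without Alice's throw.
print(depends_on(window_breaks, ACTUAL, "alice_throws"))  # False

# With Bob prevented from throwing, the outcome depends on Alice.
print(depends_on_under_contingency(
    window_breaks, ACTUAL, "alice_throws", {"bob_throws": False}))  # True
```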

Probabilistic causation

Interpreting causation as a deterministic relation means that if A causes B, then A must always be followed by B. In this sense, war does not cause deaths, nor does smoking cause cancer or emphysema. As a result, many turn to a notion of probabilistic causation. Informally, A ("The person is a smoker") probabilistically causes B ("The person has now or will have cancer at some time in the future"), if the information that A occurred increases the likelihood of B's occurrence. Formally, P{B|A} ≥ P{B}, where P{B|A} is the conditional probability that B will occur given the information that A occurred, and P{B} is the probability that B will occur having no knowledge whether A did or did not occur. This intuitive condition is not adequate as a definition for probabilistic causation because it is too general and thus does not meet our intuitive notion of cause and effect. For example, if A denotes the event "The person is a smoker," B denotes the event "The person now has or will have cancer at some time in the future" and C denotes the event "The person now has or will have emphysema some time in the future," then the following three relationships hold: P{B|A} ≥ P{B}, P{C|A} ≥ P{C} and P{B|C} ≥ P{B}. The last relationship states that knowing that the person has emphysema increases the likelihood that the person will have cancer. The reason for this is that having the information that the person has emphysema increases the likelihood that the person is a smoker, thus indirectly increasing the likelihood that the person will have cancer. However, we would not want to conclude that having emphysema causes cancer. Thus, we need additional conditions such as a temporal relationship of A to B and a rational explanation as to the mechanism of action. It is hard to quantify this last requirement and thus different authors prefer somewhat different definitions.
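The smoking example can be reproduced numerically. The sketch below builds a joint distribution in which A (smoking) raises the probability of both B (cancer) and C (emphysema), while B and C have no direct influence on each other. All the probabilities are made-up illustrative values, not epidemiological estimates.

```python
from fractions import Fraction as F
from itertools import product

# Assumed toy parameters.
P_A = F(3, 10)                                   # P{A}
P_B_GIVEN_A = {True: F(1, 5), False: F(1, 20)}   # P{B | A}, P{B | not A}
P_C_GIVEN_A = {True: F(3, 10), False: F(1, 50)}  # P{C | A}, P{C | not A}


def bern(p, value):
    """Probability that a Bernoulli(p) variable takes `value`."""
    return p if value else 1 - p


# Joint distribution over (a, b, c): B and C each depend on A only.
joint = {
    (a, b, c): bern(P_A, a) * bern(P_B_GIVEN_A[a], b) * bern(P_C_GIVEN_A[a], c)
    for a, b, c in product([True, False], repeat=3)
}


def prob(event, given=lambda a, b, c: True):
    """Conditional probability P{event | given}, by exact enumeration."""
    numerator = sum(p for w, p in joint.items() if event(*w) and given(*w))
    denominator = sum(p for w, p in joint.items() if given(*w))
    return numerator / denominator


p_b = prob(lambda a, b, c: b)
p_b_given_c = prob(lambda a, b, c: b, lambda a, b, c: c)
p_b_given_a = prob(lambda a, b, c: b, lambda a, b, c: a)
p_b_given_a_and_c = prob(lambda a, b, c: b, lambda a, b, c: a and c)

print(p_b_given_c > p_b)                 # True: C raises the probability of B
print(p_b_given_a_and_c == p_b_given_a)  # True: once A is known, C adds nothing
```

The second comparison shows one way the extra conditions mentioned above can be made precise: the probability raising by C disappears once the common factor A is held fixed.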

Causal calculus

When experimental interventions are infeasible or illegal, the derivation of a cause-and-effect relationship from observational studies must rest on some qualitative theoretical assumptions, for example, that symptoms do not cause diseases, usually expressed in the form of missing arrows in causal graphs such as Bayesian networks or path diagrams. The theory underlying these derivations relies on the distinction between conditional probabilities, as in P{cancer | smoking}, and interventional probabilities, as in P{cancer | do(smoking)}. The former reads: "the probability of finding cancer in a person known to smoke, having started, unforced by the experimenter, to do so at an unspecified time in the past", while the latter reads: "the probability of finding cancer in a person forced by the experimenter to smoke at a specified time in the past". The former is a statistical notion that can be estimated by observation with negligible intervention by the experimenter, while the latter is a causal notion which is estimated in an experiment with an important controlled randomized intervention. It is specifically characteristic of quantal phenomena that observations defined by incompatible variables always involve important intervention by the experimenter, as described quantitatively by the observer effect. In classical thermodynamics, processes are initiated by interventions called thermodynamic operations. In other branches of science, for example astronomy, the experimenter can often observe with negligible intervention.

The theory of "causal calculus"[28] (also known as do-calculus, Judea Pearl's Causal Calculus, Calculus of Actions) permits one to infer interventional probabilities from conditional probabilities in causal Bayesian networks with unmeasured variables. One very practical result of this theory is the characterization of confounding variables, namely, a sufficient set of variables that, if adjusted for, would yield the correct causal effect between variables of interest. It can be shown that a sufficient set for estimating the causal effect of X on Y is any set of non-descendants of X that d-separate X from Y after removing all arrows emanating from X. This criterion, called "backdoor", provides a mathematical definition of "confounding" and helps researchers identify accessible sets of variables worthy of measurement.
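The difference between conditioning and intervening can be illustrated on the smallest confounded graph, Z → X, Z → Y, X → Y, where Z is a non-descendant of X that satisfies the backdoor criterion. The sketch below compares the observational P{Y=1 | X=1} with the backdoor-adjusted estimate, the sum over z of P{Y=1 | X=1, Z=z} P{Z=z}. The graph, the parameter values, and the function names are all assumptions chosen for illustration.

```python
from fractions import Fraction as F
from itertools import product

# Assumed toy parameters for the graph Z -> X, Z -> Y, X -> Y.
P_Z = F(1, 2)                                # P{Z=1}
P_X_GIVEN_Z = {0: F(1, 5), 1: F(4, 5)}       # P{X=1 | Z=z}
P_Y_GIVEN_XZ = {(0, 0): F(1, 10), (1, 0): F(2, 5),
                (0, 1): F(3, 5), (1, 1): F(9, 10)}  # P{Y=1 | X=x, Z=z}


def bern(p, value):
    return p if value else 1 - p


# Observational joint distribution over (z, x, y).
joint = {
    (z, x, y): bern(P_Z, z) * bern(P_X_GIVEN_Z[z], x) * bern(P_Y_GIVEN_XZ[(x, z)], y)
    for z, x, y in product([0, 1], repeat=3)
}


def prob(pred):
    return sum(p for world, p in joint.items() if pred(*world))


def p_y1_given(x, z=None):
    """P{Y=1 | X=x} or, if z is given, P{Y=1 | X=x, Z=z}, from the joint."""
    def match(zz, xx, yy):
        return xx == x and (z is None or zz == z)
    return prob(lambda zz, xx, yy: match(zz, xx, yy) and yy == 1) / prob(match)


def backdoor_adjusted(x):
    """Sum over z of P{Y=1 | X=x, Z=z} * P{Z=z}."""
    total = F(0)
    for z in (0, 1):
        p_z = prob(lambda zz, xx, yy, z=z: zz == z)
        total += p_y1_given(x, z) * p_z
    return total


print(p_y1_given(1))         # 4/5   observational P{Y=1 | X=1}
print(backdoor_adjusted(1))  # 13/20 estimate of P{Y=1 | do(X=1)}
```

In this toy model the observational figure overstates the effect because units with Z=1 are both more likely to have X=1 and more likely to have Y=1.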

Structure learning

While derivations in causal calculus rely on the structure of the causal graph, parts of the causal structure can, under certain assumptions, be learned from statistical data. The basic idea goes back to Sewall Wright's 1921 work[29] on path analysis. A "recovery" algorithm was developed by Rebane and Pearl (1987)[30] which rests on Wright's distinction between the three possible types of causal substructures allowed in a directed acyclic graph (DAG):

- Type 1: X → Y → Z

- Type 2: X ← Y → Z

- Type 3: X → Y ← Z

Type 1 and type 2 represent the same statistical dependencies (i.e., X and Z are independent given Y) and are, therefore, indistinguishable within purely cross-sectional data. Type 3, however, can be uniquely identified, since X and Z are marginally independent and all other pairs are dependent. Thus, while the skeletons (the graphs stripped of arrows) of these three triplets are identical, the directionality of the arrows is partially identifiable. The same distinction applies when X and Z have common ancestors, except that one must first condition on those ancestors. Algorithms have been developed to systematically determine the skeleton of the underlying graph and, then, orient all arrows whose directionality is dictated by the conditional independencies observed.[28][31][32][33]
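The independence pattern that separates a collider from a chain or a fork can be verified exactly on small binary models. The sketch below builds one joint distribution for each of the three structures, the chain X → Y → Z, the fork X ← Y → Z, and the collider X → Y ← Z, and tests whether X and Z are independent marginally and given Y. The parameter values are arbitrary assumptions, and exact equality stands in for the statistical tests a real algorithm would apply to data.

```python
from fractions import Fraction as F
from itertools import product

HALF = F(1, 2)
NOISY_COPY = {0: F(1, 5), 1: F(4, 5)}  # assumed P{child=1 | parent}
COLLIDER_Y = {(0, 0): F(1, 10), (0, 1): HALF, (1, 0): HALF, (1, 1): F(9, 10)}


def bern(p, value):
    return p if value else 1 - p


def build(weight):
    """Joint distribution over (x, y, z) from a per-world probability function."""
    return {w: weight(*w) for w in product([0, 1], repeat=3)}


chain = build(lambda x, y, z: bern(HALF, x) * bern(NOISY_COPY[x], y) * bern(NOISY_COPY[y], z))
fork = build(lambda x, y, z: bern(HALF, y) * bern(NOISY_COPY[y], x) * bern(NOISY_COPY[y], z))
collider = build(lambda x, y, z: bern(HALF, x) * bern(HALF, z) * bern(COLLIDER_Y[(x, z)], y))


def marginal(joint, keep):
    """Sum out every coordinate whose index is not in `keep`."""
    out = {}
    for world, p in joint.items():
        key = tuple(world[i] for i in keep)
        out[key] = out.get(key, 0) + p
    return out


def x_z_marginally_independent(joint):
    pxz, px, pz = marginal(joint, (0, 2)), marginal(joint, (0,)), marginal(joint, (2,))
    return all(pxz[(x, z)] == px[(x,)] * pz[(z,)] for x, z in pxz)


def x_z_independent_given_y(joint):
    pxy, pyz, py = marginal(joint, (0, 1)), marginal(joint, (1, 2)), marginal(joint, (1,))
    return all(joint[(x, y, z)] * py[(y,)] == pxy[(x, y)] * pyz[(y, z)]
               for x, y, z in joint)


for name, joint in (("chain", chain), ("fork", fork), ("collider", collider)):
    print(name, x_z_marginally_independent(joint), x_z_independent_given_y(joint))
# chain False True
# fork False True
# collider True False
```

The chain and the fork give the same pair of answers, which is why they cannot be told apart from these independencies alone.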

Alternative methods of structure learning search through the many possible causal structures among the variables, and remove ones which are strongly incompatible with the observed correlations. In general this leaves a set of possible causal relations, which should then be tested by analyzing time series data or, preferably, designing appropriately controlled experiments. In contrast with Bayesian Networks, path analysis (and its generalization, structural equation modeling), serve better to estimate a known causal effect or to test a causal model than to generate causal hypotheses.

For nonexperimental data, causal direction can often be inferred if information about time is available. This is because (according to many, though not all, theories) causes must precede their effects temporally. This can be determined by statistical time series models, for instance, or with a statistical test based on the idea of Granger causality, or by direct experimental manipulation. The use of temporal data can permit statistical tests of a pre-existing theory of causal direction. For instance, our degree of confidence in the direction and nature of causality is much greater when supported by cross-correlations, ARIMA models, or cross-spectral analysis using vector time series data than by cross-sectional data.

Derivation theories

Nobel laureate Herbert A. Simon and philosopher Nicholas Rescher[34] claim that the asymmetry of the causal relation is unrelated to the asymmetry of any mode of implication that contraposes. On their account, a causal relation is not a relation between values of variables, but a function of one variable (the cause) onto another (the effect). So, given a system of equations, and a set of variables appearing in these equations, we can introduce an asymmetric relation among individual equations and variables that corresponds perfectly to our commonsense notion of a causal ordering. The system of equations must have certain properties, most importantly, if some values are chosen arbitrarily, the remaining values will be determined uniquely through a path of serial discovery that is perfectly causal. They postulate that the inherent serialization of such a system of equations may correctly capture causation in all empirical fields, including physics and economics.

Manipulation theories

Some theorists have equated causality with manipulability.[35][36][37][38] Under these theories, x causes y only in the case that one can change x in order to change y. This coincides with commonsense notions of causation, since often we ask causal questions in order to change some feature of the world. For instance, we are interested in knowing the causes of crime so that we might find ways of reducing it.

These theories have been criticized on two primary grounds. First, theorists complain that these accounts are circular. Attempting to reduce causal claims to manipulation requires that manipulation is more basic than causal interaction. But describing manipulations in non-causal terms has proved substantially difficult.

The second criticism centers around concerns of anthropocentrism. It seems to many people that causality is some existing relationship in the world that we can harness for our desires. If causality is identified with our manipulation, then this intuition is lost. In this sense, it makes humans overly central to interactions in the world.

Some attempts to defend manipulability theories are recent accounts that do not claim to reduce causality to manipulation. These accounts use manipulation as a sign or feature in causation without claiming that manipulation is more fundamental than causation.[28][39]

Process theories

Some theorists are interested in distinguishing between causal processes and non-causal processes (Russell 1948; Salmon 1984).[40][41] These theorists often want to distinguish between a process and a pseudo-process. As an example, a ball moving through the air (a process) is contrasted with the motion of a shadow (a pseudo-process). The former is causal in nature while the latter is not.

Salmon (1984)[40] claims that causal processes can be identified by their ability to transmit an alteration over space and time. An alteration of the ball (a mark by a pen, perhaps) is carried with it as the ball goes through the air. On the other hand, an alteration of the shadow (insofar as it is possible) will not be transmitted by the shadow as it moves along.

These theorists claim that the important concept for understanding causality is not causal relationships or causal interactions, but rather identifying causal processes. The former notions can then be defined in terms of causal processes.

A subgroup of the process theories is the mechanistic view on causality. It states that causal relations supervene on mechanisms. While the notion of mechanism is understood differently, the definition put forward by the group of philosophers referred to as the 'New Mechanists' dominates the literature.[42]

Fields

Science

For the scientific investigation of efficient causality, the cause and effect are each best conceived of as temporally transient processes.

Within the conceptual frame of the scientific method, an investigator sets up several distinct and contrasting temporally transient material processes that have the structure of experiments, and records candidate material responses, normally intending to determine causality in the physical world.[43] For instance, one may want to know whether a high intake of carrots causes humans to develop the bubonic plague. The quantity of carrot intake is a process that is varied from occasion to occasion. The occurrence or non-occurrence of subsequent bubonic plague is recorded. To establish causality, the experiment must fulfill certain criteria, only one example of which is mentioned here. For example, instances of the hypothesized cause must be set up to occur at a time when the hypothesized effect is relatively unlikely in the absence of the hypothesized cause; such unlikelihood is to be established by empirical evidence. A mere observation of a correlation is not nearly adequate to establish causality. In nearly all cases, establishment of causality relies on repetition of experiments and probabilistic reasoning. Hardly ever is causality established more firmly than as more or less probable. It is most convenient for establishment of causality if the contrasting material states of affairs are precisely matched, except for only one variable factor, perhaps measured by a real number.

Physics

One has to be careful in the use of the word cause in physics. Properly speaking, the hypothesized cause and the hypothesized effect are each temporally transient processes. For example, force is a useful concept for the explanation of acceleration, but force is not by itself a cause. More is needed. For example, a temporally transient process might be characterized by a definite change of force at a definite time. Such a process can be regarded as a cause. Causality is not inherently implied in equations of motion, but postulated as an additional constraint that needs to be satisfied (i.e. a cause always precedes its effect). This constraint has mathematical implications[44] such as the Kramers-Kronig relations.

Causality is one of the most fundamental and essential notions of physics.[45] Causal efficacy cannot 'propagate' faster than light. Otherwise, reference coordinate systems could be constructed (using the Lorentz transform of special relativity) in which an observer would see an effect precede its cause (i.e. the postulate of causality would be violated).
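The order reversal can be checked with the Lorentz transformation of a time interval, Δt' = γ(Δt − vΔx/c²). The sketch below applies it to two hypothetical signals, one travelling at twice the speed of light and one at exactly the speed of light, as seen by an observer moving at 0.8c. The function name and the scenario are illustrative assumptions.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def transformed_time_gap(dt, dx, v):
    """Time separation between two events in a frame moving at velocity v
    along x, given their separation (dt, dx) in the original frame."""
    gamma = 1.0 / math.sqrt(1.0 - (v / C) ** 2)
    return gamma * (dt - v * dx / C ** 2)


v_observer = 0.8 * C

# Hypothetical superluminal signal: covers 2c * 1 s of distance in 1 s.
superluminal = transformed_time_gap(dt=1.0, dx=2.0 * C, v=v_observer)

# Light signal: covers c * 1 s of distance in 1 s.
luminal = transformed_time_gap(dt=1.0, dx=1.0 * C, v=v_observer)

print(superluminal < 0)  # True: the "effect" precedes the "cause" in this frame
print(luminal > 0)       # True: the order is preserved
```

For any pair of events with |Δx| ≤ c|Δt| the sign of Δt' matches the sign of Δt for every |v| < c, so the temporal order of such events is the same for all observers.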

Causal notions appear in the context of the flow of mass-energy. Any actual process has causal efficacy that can propagate no faster than light. In contrast, an abstraction has no causal efficacy. Its mathematical expression does not propagate in the ordinary sense of the word, though it may refer to virtual or nominal 'velocities' with magnitudes greater than that of light. For example, wave packets are mathematical objects that have group velocity and phase velocity. The energy of a wave packet travels at the group velocity (under normal circumstances); since energy has causal efficacy, the group velocity cannot be faster than the speed of light. The phase of a wave packet travels at the phase velocity; since phase is not causal, the phase velocity of a wave packet can be faster than light.[46]
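A phase velocity above the speed of light alongside a group velocity below it can be seen in a simple dispersion relation. The sketch below assumes ω² = c²k² + ω₀², a form that arises for instance for waves in a waveguide or a plasma; this choice of dispersion relation, the cutoff value, and the function name are assumptions made for illustration and do not come from the text.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def phase_and_group_velocity(k, omega0):
    """Velocities for the assumed dispersion relation
    omega**2 = (C * k)**2 + omega0**2."""
    omega = math.sqrt((C * k) ** 2 + omega0 ** 2)
    v_phase = omega / k          # omega / k
    v_group = C ** 2 * k / omega  # d(omega) / dk
    return v_phase, v_group


v_phase, v_group = phase_and_group_velocity(k=1.0, omega0=1.0e9)

print(v_phase > C)  # True: the phase moves faster than light
print(v_group < C)  # True: the energy-carrying envelope does not
```

For this particular relation the product of the two velocities equals c², so the phase velocity exceeds c exactly when the group velocity falls below it.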

Causal notions are important in general relativity to the extent that the existence of an arrow of time demands that the universe's semi-Riemannian manifold be orientable, so that "future" and "past" are globally definable quantities.

Engineering

A causal system is a system whose output and internal states depend only on the current and previous input values. A system that has some dependence on input values from the future (in addition to possible past or current input values) is termed an acausal system, and a system that depends solely on future input values is an anticausal system. Acausal filters, for example, can only exist as postprocessing filters, because these filters can extract future values from a memory buffer or a file.
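The distinction shows up clearly in the response of two moving-average filters to a single impulse. The sketch below compares a trailing average, which uses only current and past samples, with a centered average, which also reads one future sample and therefore needs the whole signal buffered. The function names and window sizes are illustrative choices.

```python
def causal_moving_average(signal, window=3):
    """Output at n averages inputs n-window+1 .. n (current and past only)."""
    out = []
    for n in range(len(signal)):
        past = signal[max(0, n - window + 1): n + 1]
        out.append(sum(past) / len(past))
    return out


def centered_moving_average(signal, half_width=1):
    """Output at n averages inputs n-half_width .. n+half_width,
    so it depends on future samples and is acausal."""
    out = []
    for n in range(len(signal)):
        neighbours = signal[max(0, n - half_width): n + half_width + 1]
        out.append(sum(neighbours) / len(neighbours))
    return out


impulse = [0, 0, 0, 1, 0, 0, 0]  # single impulse at index 3

causal = causal_moving_average(impulse)
acausal = centered_moving_average(impulse)

print(causal[2])   # 0.0: no response before the impulse arrives
print(acausal[2])  # 0.333...: responds one sample before the impulse
```

The centered filter can run here only because the entire list is available in memory, which is the postprocessing situation described above.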

We have to be very careful with causality in physics and engineering. Cellier, Elmqvist, and Otter[47] describe causality forming the basis of physics as a misconception, because physics is essentially acausal. In their article they cite a simple example: "The relationship between voltage across and current through an electrical resistor can be described by Ohm's law: V = IR, yet, whether it is the current flowing through the resistor that causes a voltage drop, or whether it is the difference between the electrical potentials on the two wires that causes current to flow is, from a physical perspective, a meaningless question". In fact, if we explain cause-effect using the law, we need two explanations to describe an electrical resistor: as a voltage-drop-causer or as a current-flow-causer. There is no physical experiment in the world that can distinguish between action and reaction.

Biology, medicine and epidemiology

Austin Bradford Hill built upon the work of Hume and Popper and suggested in his paper "The Environment and Disease: Association or Causation?" that aspects of an association such as strength, consistency, specificity, and temporality be considered in attempting to distinguish causal from noncausal associations in the epidemiological situation. (See Bradford-Hill criteria.) He did not note, however, that temporality is the only necessary criterion among those aspects. Directed acyclic graphs (DAGs) are increasingly used in epidemiology to help clarify causal thinking.[48]

Psychology

Psychologists take an empirical approach to causality, investigating how people and non-human animals detect or infer causation from sensory information, prior experience and innate knowledge.

- Attribution

Attribution theory is the theory concerning how people explain individual occurrences of causation. Attribution can be external (assigning causality to an outside agent or force, claiming that some outside thing motivated the event) or internal (assigning causality to factors within the person, taking personal responsibility or accountability for one's actions and claiming that the person was directly responsible for the event). Taking causation one step further, the type of attribution a person provides influences their future behavior.

The intention behind the cause or the effect can be covered by the subject of action. See also accident; blame; intent; and responsibility.

- Causal powers

Whereas David Hume argued that causes are inferred from non-causal observations, Immanuel Kant claimed that people have innate assumptions about causes. Within psychology, Patricia Cheng[7] attempted to reconcile the Humean and Kantian views. According to her power PC theory, people filter observations of events through an intuition that causes have the power to generate (or prevent) their effects, thereby inferring specific cause-effect relations.

- Causation and salience

Our view of causation depends on what we consider to be the relevant events. Another way to view the statement "Lightning causes thunder" is to see lightning and thunder as two perceptions of the same event, viz., an electric discharge that we perceive first visually and then aurally.

- Naming and causality

David Sobel and Alison Gopnik from the Psychology Department of UC Berkeley designed a device known as the blicket detector which would turn on when an object was placed on it. Their research suggests that "even young children will easily and swiftly learn about a new causal power of an object and spontaneously use that information in classifying and naming the object."[49]

- Perception of launching events

Some researchers such as Anjan Chatterjee at the University of Pennsylvania and Jonathan Fugelsang at the University of Waterloo are using neuroscience techniques to investigate the neural and psychological underpinnings of causal launching events in which one object causes another object to move. Both temporal and spatial factors can be manipulated.[50]

See Causal Reasoning (Psychology) for more information.

Statistics and economics

Statistics and economics usually employ pre-existing data or experimental data to infer causality by regression methods. The body of statistical techniques involves substantial use of regression analysis. Typically a linear relationship such as

$$y_i = a_0 + a_1 x_{1,i} + a_2 x_{2,i} + \dots + a_k x_{k,i} + e_i$$

is postulated, in which $y_i$ is the $i$th observation of the dependent variable (hypothesized to be the caused variable), $x_{j,i}$ for $j = 1, \dots, k$ is the $i$th observation on the $j$th independent variable (hypothesized to be a causative variable), and $e_i$ is the error term for the $i$th observation (containing the combined effects of all other causative variables, which must be uncorrelated with the included independent variables). If there is reason to believe that none of the $x_j$s is caused by $y$, then estimates of the coefficients $a_j$ are obtained. If the null hypothesis that $a_j = 0$ is rejected, then the alternative hypothesis that $a_j \neq 0$ and equivalently that $x_j$ causes $y$ cannot be rejected. On the other hand, if the null hypothesis that $a_j = 0$ cannot be rejected, then equivalently the hypothesis of no causal effect of $x_j$ on $y$ cannot be rejected. Here the notion of causality is one of contributory causality as discussed above: If the true value $a_j \neq 0$, then a change in $x_j$ will result in a change in $y$ unless some other causative variable(s), either included in the regression or implicit in the error term, change in such a way as to exactly offset its effect; thus a change in $x_j$ is not sufficient to change $y$. Likewise, a change in $x_j$ is not necessary to change $y$, because a change in $y$ could be caused by something implicit in the error term (or by some other causative explanatory variable included in the model).
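The testing procedure described above can be sketched for the simplest case of a single regressor ($k = 1$). This is an illustrative sketch on simulated data, not a method from the cited sources: the helper `ols_simple`, the simulated coefficients, and the large-sample critical value 1.96 (an approximate two-sided 5% threshold) are all assumptions made for the example.

```python
import math
import random


def ols_simple(x, y):
    """Least-squares fit of y = a0 + a1*x + e.

    Returns (a0, a1, t), where t is the t-statistic for the null a1 = 0.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    a1 = sxy / sxx
    a0 = mean_y - a1 * mean_x
    residuals = [yi - (a0 + a1 * xi) for xi, yi in zip(x, y)]
    sigma2 = sum(r * r for r in residuals) / (n - 2)  # residual variance
    se_a1 = math.sqrt(sigma2 / sxx)                   # standard error of a1
    return a0, a1, a1 / se_a1


# Simulated data in which x really does contribute to y (true a1 = 2).
rng = random.Random(0)
x = [rng.gauss(0, 1) for _ in range(500)]
y = [1.0 + 2.0 * xi + rng.gauss(0, 1) for xi in x]

a0, a1, t = ols_simple(x, y)
reject_null = abs(t) > 1.96  # assumed large-sample 5% threshold
print(round(a1, 2), reject_null)
```

Rejecting the null here supports a causal reading only under the assumptions stated in the text, in particular that $y$ does not cause $x$ and that the error term is uncorrelated with $x$; the arithmetic alone cannot establish either.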

The above way of testing for causality requires belief that there is no reverse causation, in which $y$ would cause $x_j$. This belief can be established in one of several ways. First, the variable $x_j$ may be a non-economic variable: for example, if rainfall amount $x_j$ is hypothesized to affect the futures price $y$ of some agricultural commodity, it is impossible that in fact the futures price affects rainfall amount (provided that cloud seeding is never attempted). Second, the instrumental variables technique may be employed to remove any reverse causation by introducing a role for other variables (instruments) that are known to be unaffected by the dependent variable. Third, the principle that effects cannot precede causes can be invoked, by including on the right side of the regression only variables that precede in time the dependent variable; this principle is invoked, for example, in testing for Granger causality and in its multivariate analog, vector autoregression, both of which control for lagged values of the dependent variable while testing for causal effects of lagged independent variables.
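The third approach can be illustrated with a much-simplified, Granger-style check. The sketch below uses one lag only and compares coefficient estimates; a full Granger test would use several lags and a formal F-test. The helper `ols_two` and the simulated series are assumptions made for the example.

```python
import random


def ols_two(a, b, target):
    """Slopes (beta_a, beta_b) in target = c + beta_a*a + beta_b*b + error."""
    n = len(target)
    ma, mb, mt = sum(a) / n, sum(b) / n, sum(target) / n
    saa = sum((u - ma) ** 2 for u in a)
    sbb = sum((v - mb) ** 2 for v in b)
    sab = sum((u - ma) * (v - mb) for u, v in zip(a, b))
    sat = sum((u - ma) * (w - mt) for u, w in zip(a, target))
    sbt = sum((v - mb) * (w - mt) for v, w in zip(b, target))
    det = saa * sbb - sab ** 2  # determinant of the 2x2 normal equations
    return (sat * sbb - sbt * sab) / det, (sbt * saa - sat * sab) / det


# Simulated series: lagged x drives y, but lagged y does not drive x.
rng = random.Random(2)
x, y = [0.0], [0.0]
for _ in range(3000):
    x_prev, y_prev = x[-1], y[-1]
    x.append(0.5 * x_prev + rng.gauss(0, 1))
    y.append(0.3 * y_prev + 0.8 * x_prev + rng.gauss(0, 1))

# Regress y_t on y_{t-1} and x_{t-1}: does lagged x help predict y?
own_lag_y, x_to_y = ols_two(y[:-1], x[:-1], y[1:])
# Regress x_t on x_{t-1} and y_{t-1}: does lagged y help predict x?
own_lag_x, y_to_x = ols_two(x[:-1], y[:-1], x[1:])
print(round(x_to_y, 2), round(y_to_x, 2))
```

In this simulation the coefficient on lagged $x$ in the equation for $y$ is clearly non-zero, while the coefficient on lagged $y$ in the equation for $x$ is close to zero, matching the direction built into the data.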

Regression analysis controls for other relevant variables by including them as regressors (explanatory variables). This helps to avoid false inferences of causality due to the presence of a third, underlying, variable that influences both the potentially causative variable and the potentially caused variable: its effect on the potentially caused variable is captured by directly including it in the regression, so that effect will not be picked up as an indirect effect through the potentially causative variable of interest. Given the above procedures, coincidental (as opposed to causal) correlation can be probabilistically rejected if data samples are large and if regression results pass cross-validation tests showing that the correlations hold even for data that were not used in the regression. Asserting with certitude that a common cause is absent and the regression represents the true causal structure is in principle impossible.[51]
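The effect of controlling for a third variable can be shown on simulated data. In the sketch below, a hypothetical confounder `z` drives both `x` and `y`, while `x` has no effect on `y`. Controlling for `z` is done by regressing both variables on `z` and then regressing the residuals on each other, which gives the same coefficient on `x` as a regression of `y` on `x` and `z` together (the Frisch–Waugh–Lovell result). All names and coefficients are assumptions for illustration.

```python
import random


def slope(x, y):
    """Least-squares slope of y on x (with an intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / sxx


def residuals(x, y):
    """Residuals of y after regressing it on x (with an intercept)."""
    b = slope(x, y)
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    return [yi - (mean_y + b * (xi - mean_x)) for xi, yi in zip(x, y)]


rng = random.Random(1)
n = 5000
z = [rng.gauss(0, 1) for _ in range(n)]      # common cause
x = [zi + rng.gauss(0, 1) for zi in z]        # caused by z only
y = [2.0 * zi + rng.gauss(0, 1) for zi in z]  # caused by z only, not by x

naive = slope(x, y)                                   # omits z
controlled = slope(residuals(z, x), residuals(z, y))  # controls for z
print(round(naive, 2), round(controlled, 2))
```

The naive slope is far from zero even though `x` has no effect on `y`, and the controlled slope is close to zero. The example works only because the confounder is known and measured, which is exactly what cannot be guaranteed in practice.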

The problem of omitted variable bias, however, has to be balanced against the risk of inserting causal colliders, in which the addition of a new variable induces a correlation between $x_j$ and $y$ via Berkson's paradox.[52]
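Collider bias can also be reproduced on simulated data. The sketch below conditions on a collider by selecting observations on it, which is the classic setting of Berkson's paradox; adding the collider as a regressor is another way of conditioning on it. The variable names and the selection threshold are assumptions for illustration.

```python
import random


def slope(x, y):
    """Least-squares slope of y on x (with an intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / sxx


rng = random.Random(3)
n = 5000
x = [rng.gauss(0, 1) for _ in range(n)]
y = [rng.gauss(0, 1) for _ in range(n)]       # independent of x by construction
collider = [xi + yi for xi, yi in zip(x, y)]  # caused by both x and y

# Without conditioning, x and y are unrelated.
slope_all = slope(x, y)

# Conditioning on the collider: keep only observations where it is large.
selected = [(xi, yi) for xi, yi, ci in zip(x, y, collider) if ci > 1.0]
slope_selected = slope([p[0] for p in selected], [p[1] for p in selected])
print(round(slope_all, 2), round(slope_selected, 2))
```

Within the selected subsample a clear negative association appears between two variables that are independent in the full sample, so including a variable in an analysis is not automatically a safe way to guard against omitted variable bias.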

Apart from constructing statistical models of observational and experimental data, economists use axiomatic (mathematical) models to infer and represent causal mechanisms. Highly abstract theoretical models that isolate and idealize one mechanism dominate microeconomics. In macroeconomics, economists use broad mathematical models that are calibrated on historical data. A subgroup of calibrated models, dynamic stochastic general equilibrium (DSGE) models, are employed to represent (in a simplified way) the whole economy and simulate changes in fiscal and monetary policy.[53]

Management

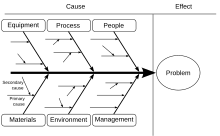

For quality control in manufacturing in the 1960s, Kaoru Ishikawa developed a cause and effect diagram, known as an Ishikawa diagram or fishbone diagram. The diagram sorts causes into categories, such as the six main categories shown here. These categories are then sub-divided. Ishikawa's method identifies "causes" in brainstorming sessions conducted among various groups involved in the manufacturing process. These groups can then be labeled as categories in the diagrams. The use of these diagrams has now spread beyond quality control, and they are used in other areas of management and in design and engineering. Ishikawa diagrams have been criticized for failing to make the distinction between necessary conditions and sufficient conditions. It seems that Ishikawa was not even aware of this distinction.[54]

Humanities

History

In the discussion of history, events are sometimes treated as if they were agents that can bring about other historical events. Thus, the combination of poor harvests, the hardships of the peasants, high taxes, lack of representation of the people, and kingly ineptitude is counted among the causes of the French Revolution. This is a somewhat Platonic and Hegelian view that reifies causes as ontological entities. In Aristotelian terminology, this use approximates the case of the efficient cause.

Some philosophers of history such as Arthur Danto have claimed that "explanations in history and elsewhere" describe "not simply an event—something that happens—but a change".[55] Like many practicing historians, they treat causes as intersecting actions and sets of actions which bring about "larger changes", in Danto's words: to decide "what are the elements which persist through a change" is "rather simple" when treating an individual's "shift in attitude", but "it is considerably more complex and metaphysically challenging when we are interested in such a change as, say, the break-up of feudalism or the emergence of nationalism".[56]

Much of the historical debate about causes has focused on the relationship between communicative and other actions, between singular and repeated ones, and between actions, structures of action or group and institutional contexts and wider sets of conditions.[57] John Gaddis has distinguished between exceptional and general causes (following Marc Bloch) and between "routine" and "distinctive links" in causal relationships: "in accounting for what happened at Hiroshima on August 6, 1945, we attach greater importance to the fact that President Truman ordered the dropping of an atomic bomb than to the decision of the Army Air Force to carry out his orders."[58] He has also pointed to the difference between immediate, intermediate and distant causes.[59] For his part, Christopher Lloyd puts forward four "general concepts of causation" used in history: the "metaphysical idealist concept, which asserts that the phenomena of the universe are products of or emanations from an omnipotent being or such final cause"; "the empiricist (or Humean) regularity concept, which is based on the idea of causation being a matter of constant conjunctions of events"; "the functional/teleological/consequential concept", which is "goal-directed, so that goals are causes"; and the "realist, structurist and dispositional approach, which sees relational structures and internal dispositions as the causes of phenomena".[60]

Law

According to law and jurisprudence, legal cause must be demonstrated to hold a defendant liable for a crime or a tort (i.e. a civil wrong such as negligence or trespass). It must be proven that causality, or a "sufficient causal link", relates the defendant's actions to the criminal event or damage in question. Causation is also an essential legal element that must be proven to qualify for remedy measures under international trade law.[61]

History

Hindu philosophy

Literature of the Vedic period (c. 1750–500 BCE) contains karma's Eastern origins.[62] Karma is the belief held by Sanatana Dharma and major religions that a person's actions cause certain effects in the current life and/or in a future life, positively or negatively. The various philosophical schools (darshanas) provide different accounts of the subject. The doctrine of satkaryavada affirms that the effect inheres in the cause in some way. The effect is thus either a real or apparent modification of the cause. The doctrine of asatkaryavada affirms that the effect does not inhere in the cause, but is a new arising. See Nyaya for some details of the theory of causation in the Nyaya school. In Brahma Samhita, Brahma describes Krishna as the prime cause of all causes.[63]

Bhagavad-gītā 18.14 identifies five causes for any action (knowing which it can be perfected): the body, the individual soul, the senses, the efforts and the supersoul.

According to Monier-Williams, in the Nyāya causation theory from Sutra I.2.I,2 in the Vaisheshika philosophy, effectual non-existence follows from causal non-existence, but causal non-existence does not follow from effectual non-existence. A cause precedes an effect. Using the metaphor of threads and cloth, the three causes are:

- Co-inherence cause: resulting from substantial contact, 'substantial causes', threads are substantial to cloth, corresponding to Aristotle's material cause.

- Non-substantial cause: Methods putting threads into cloth, corresponding to Aristotle's formal cause.

- Instrumental cause: Tools to make the cloth, corresponding to Aristotle's efficient cause.

Monier-Williams also proposed that Aristotle's and the Nyaya's causality are considered conditional aggregates necessary to man's productive work.[64]

Buddhist philosophy

Karma is the causality principle focusing on 1) causes, 2) actions, 3) effects, where it is the mind's phenomena that guide the actions that the actor performs. Buddhism trains the actor's actions for continued and uncontrived virtuous outcomes aimed at reducing suffering. This follows the Subject–verb–object structure.[citation needed]

The general or universal definition of pratityasamutpada (or "dependent origination" or "dependent arising" or "interdependent co-arising") is that everything arises in dependence upon multiple causes and conditions; nothing exists as a singular, independent entity. A traditional example in Buddhist texts is of three sticks standing upright and leaning against each other and supporting each other. If one stick is taken away, the other two will fall to the ground.[65][66]

Causality in the Chittamatrin Buddhist school approach, Asanga's (c. 400 CE) mind-only Buddhist school, asserts that objects cause consciousness in the mind's image. Because causes precede effects, which must be different entities, then subject and object are different. For this school, there are no objects which are entities external to a perceiving consciousness. The Chittamatrin and the Yogachara Svatantrika schools accept that there are no objects external to the observer's causality. This largely follows the Nikayas approach.[67][68][69][70]

The Vaibhashika (c. 500 CE) is an early Buddhist school which favors direct object contact and accepts simultaneous cause and effect. This is based on the consciousness example, which says that intentions and feelings are mutually accompanying mental factors that support each other like the poles of a tripod. In contrast, those who reject simultaneous cause and effect say that if the effect already exists, then it cannot effect the same way again. How past, present and future are accepted is a basis for the various Buddhist schools' viewpoints on causality.[71][72][73]

All the classic Buddhist schools teach karma. "The law of karma is a special instance of the law of cause and effect, according to which all our actions of body, speech, and mind are causes and all our experiences are their effects."[74]

Western philosophy

Aristotelian

Aristotle identified four kinds of answer or explanatory mode to various "Why?" questions. He thought that, for any given topic, all four kinds of explanatory mode were important, each in its own right. As a result of traditional specialized philosophical peculiarities of language, with translations between ancient Greek, Latin, and English, the word 'cause' is nowadays in specialized philosophical writings used to label Aristotle's four kinds.[22][75] In ordinary language, the word 'cause' has a variety of meanings, the most common of which refers to efficient causation, which is the topic of the present article.

- Material cause, the material whence a thing has come or that which persists while it changes, as for example, one's mother or the bronze of a statue (see also substance theory).[76]

- Formal cause, whereby a thing's dynamic form or static shape determines the thing's properties and function, as a human differs from a statue of a human or as a statue differs from a lump of bronze.[77]

- Efficient cause, which imparts the first relevant movement, as a human lifts a rock or raises a statue. This is the main topic of the present article.

- Final cause, the criterion of completion, or the end; it may refer to an action or to an inanimate process. Examples: Socrates takes a walk after dinner for the sake of his health; earth falls to the lowest level because that is its nature.

Of Aristotle's four kinds or explanatory modes, only one, the 'efficient cause', is a cause as defined in the leading paragraph of this present article. The other three explanatory modes might be rendered material composition, structure and dynamics, and, again, criterion of completion. The word that Aristotle used was αἰτία. For the present purpose, that Greek word would be better translated as "explanation" than as "cause" as those words are most often used in current English. Another translation of Aristotle is that he meant "the four Becauses" as four kinds of answer to "why" questions.[22]

Aristotle assumed efficient causality as referring to a basic fact of experience, not explicable by, or reducible to, anything more fundamental or basic.

In some works of Aristotle, the four causes are listed as (1) the essential cause, (2) the logical ground, (3) the moving cause, and (4) the final cause. In this listing, a statement of essential cause is a demonstration that an indicated object conforms to a definition of the word that refers to it. A statement of logical ground is an argument as to why an object statement is true. These are further examples of the idea that a "cause" in general in the context of Aristotle's usage is an "explanation".[22]

The word "efficient" used here can also be translated from Aristotle as "moving" or "initiating".[22]

Efficient causation was connected with Aristotelian physics, which recognized the four elements (earth, air, fire, water), and added the fifth element (aether). Water and earth by their intrinsic property gravitas or heaviness intrinsically fall toward, whereas air and fire by their intrinsic property levitas or lightness intrinsically rise away from, Earth's center—the motionless center of the universe—in a straight line while accelerating during the substance's approach to its natural place.

As air remained on Earth, however, and did not escape Earth while eventually achieving infinite speed (an absurdity), Aristotle inferred that the universe is finite in size and contains an invisible substance that holds planet Earth and its atmosphere, the sublunary sphere, centered in the universe. And since celestial bodies exhibit perpetual, unaccelerated motion orbiting planet Earth in unchanging relations, Aristotle inferred that the fifth element, aether, which fills space and composes celestial bodies, intrinsically moves in perpetual circles, the only constant motion between two points. (An object traveling a straight line from point A to B and back must stop at either point before returning to the other.)

Left to itself, a thing exhibits natural motion, but can, according to Aristotelian metaphysics, exhibit enforced motion imparted by an efficient cause. The form of plants endows plants with the processes of nutrition and reproduction, the form of animals adds locomotion, and the form of humankind adds reason atop these. A rock normally exhibits natural motion, explained by the rock's material cause of being composed of the element earth, but a living thing can lift the rock, an enforced motion diverting the rock from its natural place and natural motion. As a further kind of explanation, Aristotle identified the final cause, specifying a purpose or criterion of completion in light of which something should be understood.

Aristotle himself explained,

Cause means

(a) in one sense, that as the result of whose presence something comes into being—e.g., the bronze of a statue and the silver of a cup, and the classes which contain these [i.e., the material cause];

(b) in another sense, the form or pattern; that is, the essential formula and the classes which contain it—e.g. the ratio 2:1 and number in general is the cause of the octave—and the parts of the formula [i.e., the formal cause].

(c) The source of the first beginning of change or rest; e.g. the man who plans is a cause, and the father is the cause of the child, and in general that which produces is the cause of that which is produced, and that which changes of that which is changed [i.e., the efficient cause].

(d) The same as "end"; i.e. the final cause; e.g., as the "end" of walking is health. For why does a man walk? "To be healthy", we say, and by saying this we consider that we have supplied the cause [the final cause].

(e) All those means towards the end which arise at the instigation of something else, as, e.g., fat-reducing, purging, drugs, and instruments are causes of health; for they all have the end as their object, although they differ from each other as being some instruments, others actions [i.e., necessary conditions].

— Metaphysics, Book 5, section 1013a, translated by Hugh Tredennick[78]

Aristotle further discerned two modes of causation: proper (prior) causation and accidental (chance) causation. All causes, proper and accidental, can be spoken of as potential or as actual, particular or generic. The same language refers to the effects of causes, so that generic effects are assigned to generic causes, particular effects to particular causes, and actual effects to operating causes.

Averting infinite regress, Aristotle inferred the first mover—an unmoved mover. The first mover's motion, too, must have been caused, but, being an unmoved mover, must have moved only toward a particular goal or desire.

Pyrrhonism

While the plausibility of causality was accepted in Pyrrhonism,[79] it was equally accepted that it was plausible that nothing was the cause of anything.[80]

Middle Ages

In line with Aristotelian cosmology, Thomas Aquinas posed a hierarchy prioritizing Aristotle's four causes: "final > efficient > material > formal".[81] Aquinas sought to identify the first efficient cause—now simply first cause—as everyone would agree, said Aquinas, to call it God. Later in the Middle Ages, many scholars conceded that the first cause was God, but explained that many earthly events occur within God's design or plan, and thereby scholars sought freedom to investigate the numerous secondary causes.[82]

After the Middle Ages

For Aristotelian philosophy before Aquinas, the word cause had a broad meaning. It meant 'answer to a why question' or 'explanation', and Aristotelian scholars recognized four kinds of such answers. With the end of the Middle Ages, in many philosophical usages, the meaning of the word 'cause' narrowed. It often lost that broad meaning, and was restricted to just one of the four kinds. For authors such as Niccolò Machiavelli, in the field of political thinking, and Francis Bacon, concerning science more generally, Aristotle's moving cause was the focus of their interest. A widely used modern definition of causality in this newly narrowed sense was assumed by David Hume.[81] He undertook an epistemological and metaphysical investigation of the notion of moving cause. He denied that we can ever perceive cause and effect, except by developing a habit or custom of mind where we come to associate two types of object or event, always contiguous and occurring one after the other.[10] In Part III, section XV of his book A Treatise of Human Nature, Hume expanded this to a list of eight ways of judging whether two things might be cause and effect. The first three:

- "The cause and effect must be contiguous in space and time."

- "The cause must be prior to the effect."

- "There must be a constant union betwixt the cause and effect. 'Tis chiefly this quality, that constitutes the relation."

And then additionally there are three connected criteria which come from our experience and which are "the source of most of our philosophical reasonings":

- "The same cause always produces the same effect, and the same effect never arises but from the same cause. This principle we derive from experience, and is the source of most of our philosophical reasonings."

- Hanging upon the above, Hume says that "where several different objects produce the same effect, it must be by means of some quality, which we discover to be common amongst them."

- And "founded on the same reason": "The difference in the effects of two resembling objects must proceed from that particular, in which they differ."

And then two more:

- "When any object increases or diminishes with the increase or diminution of its cause, 'tis to be regarded as a compounded effect, deriv'd from the union of the several different effects, which arise from the several different parts of the cause."

- An "object, which exists for any time in its full perfection without any effect, is not the sole cause of that effect, but requires to be assisted by some other principle, which may forward its influence and operation."

In 1949, physicist Max Born distinguished determination from causality. For him, determination meant that actual events are so linked by laws of nature that certainly reliable predictions and retrodictions can be made from sufficient present data about them. He describes two kinds of causation: nomic or generic causation and singular causation. Nomic causality means that cause and effect are linked by more or less certain or probabilistic general laws covering many possible or potential instances; this can be recognized as a probabilized version of Hume's criterion 3. An occasion of singular causation is a particular occurrence of a definite complex of events that are physically linked by antecedence and contiguity, which may be recognized as criteria 1 and 2.[12]

References

- ^ Compare: Bunge, Mario (1960) [1959]. Causality and Modern Science. Vol. 187 (3, revised ed.) (published 2012). pp. 123–124. Bibcode:1960Natur.187...92W. doi:10.1038/187092a0. ISBN 9780486144870. S2CID 4290073. Retrieved 12 March 2018.

Multiple causation has been defended, and even taken for granted, by the most diverse thinkers [...] simple causation is suspected of artificiality on account of its very simplicity. Granted, the assignment of a single cause (or effect) to a set of effects (or causes) may be a superficial, nonilluminating hypothesis. But so is usually the hypothesis of simple causation. Why should we remain satisfied with statements of causation, instead of attempting to go beyond the first simple relation that is found?

- ^ a b Robb, A. A. (1911). Optical Geometry of Motion. Cambridge: W. Heffer and Sons Ltd. Retrieved 12 May 2021.

- ^ a b c Whitehead, A.N. (1929). Process and Reality. An Essay in Cosmology. Gifford Lectures Delivered in the University of Edinburgh During the Session 1927–1928. Cambridge: Cambridge University Press. ISBN 9781439118368.

- ^ a b Malament, David B. (July 1977). "The class of continuous timelike curves determines the topology of spacetime" (PDF). Journal of Mathematical Physics. 18 (7): 1399–1404. Bibcode:1977JMP....18.1399M. doi:10.1063/1.523436. Archived (PDF) from the original on 9 October 2022.

- ^ Mackie, J.L. (2002) [1980]. The Cement of the Universe: a Study of Causation. Oxford: Oxford University Press. p. 1.

... it is part of the business of philosophy to determine what causal relationships in general are, what it is for one thing to cause another, or what it is for nature to obey causal laws. As I understand it, this is an ontological question, a question about how the world goes on.

- ^ Whitehead, A.N. (1929). Process and Reality. An Essay in Cosmology. Gifford Lectures Delivered in the University of Edinburgh During the Session 1927–1928, Macmillan, New York; Cambridge University Press, Cambridge UK, "The sole appeal is to intuition."

- ^ a b Cheng, P.W. (1997). "From Covariation to Causation: A Causal Power Theory". Psychological Review. 104 (2): 367–405. doi:10.1037/0033-295x.104.2.367.

- ^ Copley, Bridget (27 January 2015). Causation in Grammatical Structures. Oxford University Press. ISBN 9780199672073. Retrieved 30 January 2016.

- ^ Armstrong, D.M. (1997). A World of States of Affairs, Cambridge University Press, Cambridge UK, ISBN 0-521-58064-1, pp. 89, 265.

- ^ a b Hume, David (1888). A Treatise on Human Nature. Oxford: Clarendon Press.

- ^ Maziarz, Mariusz (2020). The Philosophy of Causality in Economics: Causal Inferences and Policy Proposals. New York & London: Routledge.

- ^ a b Born, M. (1949). Natural Philosophy of Cause and Chance, Oxford University Press, London, p. 9.

- ^ a b Sklar, L. (1995). Determinism, pp. 117–119 in A Companion to Metaphysics, edited by Kim, J. Sosa, E., Blackwell, Oxford UK, pp. 177–181.

- ^ Robb, A.A. (1936). Geometry of Time and Space, Cambridge University Press, Cambridge UK.

- ^ Jammer, M. (1982). 'Einstein and quantum physics', pp. 59–76 in Albert Einstein: Historical and Cultural Perspectives; the Centennial Symposium in Jerusalem, edited by G. Holton, Y. Elkana, Princeton University Press, Princeton NJ, ISBN 0-691-08299-5, p. 61.

- ^ Naber, G.L. (1992). The Geometry of Minkowski Spacetime: An Introduction to the Mathematics of the Special Theory of Relativity, Springer, New York, ISBN 978-1-4419-7837-0, pp. 4–5.

- ^ Watson, G. (1995). Free will, pp. 175–182 in A Companion to Metaphysics, edited by Kim, J. Sosa, E., Blackwell, Oxford UK, pp. 177–181.

- ^ Epp, Susanna S.: "Discrete Mathematics with Applications, Third Edition", pp. 25–26. Brooks/Cole – Thomson Learning, 2004. ISBN 0-534-35945-0

- ^ a b "Causal Reasoning". www.istarassessment.org. Retrieved 2 March 2016.

- ^ Riegelman, R. (1979). "Contributory cause: Unnecessary and insufficient". Postgraduate Medicine. 66 (2): 177–179. doi:10.1080/00325481.1979.11715231. PMID 450828.

- ^ Mackie, John Leslie (1974). The Cement of the Universe: A Study of Causation. Clarendon Press. ISBN 9780198244059.

- ^ a b c d e Graham, D.W. (1987). Aristotle's Two Systems Archived 1 July 2015 at the Wayback Machine, Oxford University Press, Oxford UK, ISBN 0-19-824970-5

- ^ Ney, Alyssa (23 February 2023). Metaphysics: An Introduction (2 ed.). London: Routledge. doi:10.4324/9781351141208. ISBN 978-1-351-14120-8.

- ^ Hume, David (1748). An Enquiry concerning Human Understanding. Sec. VII.

- ^ Lewis, David (1973). "Causation". The Journal of Philosophy. 70 (17): 556–567. doi:10.2307/2025310. JSTOR 2025310.

- ^ Lewis, David (1979). "Counterfactual Dependence and Time's Arrow". Noûs. 13 (4): 455–476. doi:10.2307/2215339. JSTOR 2215339.

- ^ Halpern, Joseph Y. (2016). Actual Causality. The MIT Press.

- ^ a b c Pearl, Judea (2000). Causality: Models, Reasoning, and Inference Archived 31 August 2021 at the Wayback Machine, Cambridge University Press.

- ^ Wright, S. "Correlation and Causation". Journal of Agricultural Research. 20 (7): 557–585.

- ^ Rebane, G. and Pearl, J., "The Recovery of Causal Poly-trees from Statistical Data Archived 26 July 2020 at the Wayback Machine", Proceedings, 3rd Workshop on Uncertainty in AI, (Seattle) pp. 222–228, 1987

- ^ Spirtes, P. and Glymour, C., "An algorithm for fast recovery of sparse causal graphs", Social Science Computer Review, Vol. 9, pp. 62–72, 1991.

- ^ Spirtes, P. and Glymour, C. and Scheines, R., Causation, Prediction, and Search, New York: Springer-Verlag, 1993

- ^ Verma, T. and Pearl, J., "Equivalence and Synthesis of Causal Models", Proceedings of the Sixth Conference on Uncertainty in Artificial Intelligence, (July, Cambridge, Massachusetts), pp. 220–227, 1990. Reprinted in P. Bonissone, M. Henrion, L.N. Kanal and J.F. Lemmer (Eds.), Uncertainty in Artificial Intelligence 6, Amsterdam: Elsevier Science Publishers, B.V., pp. 225–268, 1991

- ^ Simon, Herbert; Rescher, Nicholas (1966). "Cause and Counterfactual". Philosophy of Science. 33 (4): 323–340. doi:10.1086/288105. S2CID 224834481.

- ^ Collingwood, R. (1940) An Essay on Metaphysics. Clarendon Press.

- ^ Gasking, D (1955). "Causation and Recipes". Mind. 64 (256): 479–487. doi:10.1093/mind/lxiv.256.479.

- ^ Menzies, P.; Price, H. (1993). "Causation as a Secondary Quality". British Journal for the Philosophy of Science. 44 (2): 187–203. CiteSeerX 10.1.1.28.9736. doi:10.1093/bjps/44.2.187. S2CID 160013822.

- ^ von Wright, G. (1971) Explanation and Understanding. Cornell University Press.

- ^ Woodward, James (2003) Making Things Happen: A Theory of Causal Explanation. Oxford University Press, ISBN 0-19-515527-0

- ^ a b Salmon, W. (1984) Scientific Explanation and the Causal Structure of the World. Princeton University Press.

- ^ Russell, B. (1948) Human Knowledge. Simon and Schuster.

- ^ Williamson, Jon (2011). "Mechanistic theories of causality part I". Philosophy Compass. 6 (6): 421–432. doi:10.1111/j.1747-9991.2011.00400.x.

- ^ Born, M. (1949). Natural Philosophy of Cause and Chance, Oxford University Press, Oxford UK, p. 18: "... scientific work will always be the search for causal interdependence of phenomena."

- ^ Kinsler, P. (2011). "How to be causal". Eur. J. Phys. 32 (6): 1687–1700. arXiv:1106.1792. Bibcode:2011EJPh...32.1687K. doi:10.1088/0143-0807/32/6/022. S2CID 56034806.

- ^ Einstein, A. (1910/2005). 'On Boltzmann's Principle and some immediate consequences thereof', unpublished manuscript of a 1910 lecture by Einstein, translated by B. Duplantier and E. Parks, reprinted on pp. 183–199 in Einstein, 1905–2005, Poincaré Seminar 2005, edited by T. Damour, O. Darrigol, B. Duplantier, V. Rivasseau, Birkhäuser Verlag, Basel, ISBN 3-7643-7435-7, from Einstein, Albert: The Collected Papers of Albert Einstein, 1987–2005, Hebrew University and Princeton University Press; p. 183: "All natural science is based upon the hypothesis of the complete causal connection of all events."

- ^ Griffiths, David (2017). Introduction to electrodynamics (Fourth ed.). Cambridge University Press. p. 418. ISBN 978-1-108-42041-9.

- ^ Cellier, Francois E., Hilding Elmqvist, and Martin Otter. "Modeling from physical principles." The Control Handbook, 1996 by CRC Press, Inc., ed. William S. Levine (1996): 99–108.

- ^ Chiolero, A; Paradis, G; Kaufman, JS (1 January 2014). "Assessing the possible direct effect of birth weight on childhood blood pressure: a sensitivity analysis". American Journal of Epidemiology. 179 (1): 4–11. doi:10.1093/aje/kwt228. PMID 24186972.

- ^ Gopnik, A; Sobel, David M. (September–October 2000). "Detecting Blickets: How Young Children Use Information about Novel Causal Powers in Categorization and Induction". Child Development. 71 (5): 1205–1222. doi:10.1111/1467-8624.00224. PMID 11108092.

- ^ Straube, B; Chatterjee, A (2010). "Space and time in perceptual causality". Frontiers in Human Neuroscience. 4: 28. doi:10.3389/fnhum.2010.00028. PMC 2868299. PMID 20463866.

- ^ Henschen, Tobias (2018). "The in-principle inconclusiveness of causal evidence in macroeconomics". European Journal for the Philosophy of Science. 8 (3): 709–733. doi:10.1007/s13194-018-0207-7. S2CID 158264284.

- ^ Pearl, Judea (2000). Causality. Cambridge University Press. ISBN 9780521773621.

- ^ Maziarz Mariusz, Mróz Robert (2020). "A rejoinder to Henschen: the issue of VAR and DSGE models". Journal of Economic Methodology. 27 (3): 266–268. doi:10.1080/1350178X.2020.1731102. S2CID 212838652.

- ^ Gregory, Frank Hutson (1992). . Journal of the Operational Research Society. 44 (4): 333–344. doi:10.1057/jors.1993.63. ISSN 0160-5682. S2CID 60817414.

- ^ Danto, Arthur (1965) Analytical Philosophy of History, 233.

- ^ Danto, Arthur (1965) Analytical Philosophy of History, 249.

- ^ Hewitson, Mark (2014) History and Causality, 86–116.

- ^ Gaddis, John L. (2002), The Landscape of History: How Historians Map the Past, 64.

- ^ Gaddis, John L. (2002), The Landscape of History: How Historians Map the Past, 95.

- ^ Lloyd, Christopher (1993) Structures of History, 159.

- ^ Moon, William J.; Ahn, Dukgeun (6 May 2010). "Alternative Approach to Causation Analysis in Trade Remedy Investigations". Journal of World Trade. SSRN 1601531.

- ^ Krishan, Y. (6 August 2010). "The Vedic Origins of the Doctrine of Karma". South Asian Studies. 4 (1): 51–55. doi:10.1080/02666030.1988.9628366.

- ^ "Brahma Samhita, Chapter 5: Hymn to the Absolute Truth". Bhaktivedanta Book Trust. Archived from the original on 7 May 2014. Retrieved 19 May 2014.

- ^ Williams, Monier (1875). Indian Wisdom or Examples of the Religious, Philosophical and Ethical Doctrines of the Hindus. London: Oxford. p. 81. ISBN 9781108007955.

- ^ Macy, Joanna (1991). "Dependent Co-Arising as Mutual Causality". Mutual Causality in Buddhism and General Systems Theory: The Dharma of Natural Systems. Albany: State University of New York Press. p. 56. ISBN 0-7914-0636-9.

- ^ Tanaka, Kenneth K. (1985). "Simultaneous Relation (Sahabhū-hetu): A Study in Buddhist Theory of Causation". The Journal of the International Association of Buddhist Studies. 8 (1): 94, 101.

- ^ Hopkins, Jeffrey (15 June 1996). Meditation on Emptiness (Rep Sub ed.). Wisdom Publications. p. 367. ISBN 978-0861711109.

- ^ Lusthaus, Dan. "What is and isn't Yogācāra". Yogacara Buddhism Research Associations. Yogacara Buddhism Research Associations: Resources for East Asian Language and Thought, A. Charles Muller Faculty of Letters, University of Tokyo [Site Established July 1995]. Retrieved 30 January 2016.

- ^ Suk-Fun, Ng (2014). "Time and causality in Yogācāra Buddhism". The HKU Scholars Hub.

- ^ Makeham, John (1 April 2014). Transforming Consciousness: Yogacara Thought in Modern China (1st ed.). Oxford University Press. p. 253. ISBN 978-0199358137.

- ^ Hopkins, Jeffrey (15 June 1996). Meditation on Emptiness (Rep Sub ed.). Wisdom Publications. p. 339. ISBN 978-0861711109.

- ^ Klein, Anne Carolyn (1 January 1987). Knowledge And Liberation: Tibetan Buddhist Epistemology In Support Of Transformative Religious Experience (2nd ed.). Snow Lion. p. 101. ISBN 978-1559391146. Retrieved 30 January 2016.

- ^ Bartley, Christopher (30 July 2015). An Introduction to Indian Philosophy: Hindu and Buddhist Ideas from Original Sources (Kindle ed.). Bloomsbury Academic. ISBN 9781472528513. Retrieved 30 January 2016.

- ^ Kelsang Gyatso, Geshe (1995). Joyful Path of Good Fortune: The Complete Guide to the Buddhist Path to Enlightenment (2nd rev. ed.). London: Tharpa. ISBN 978-0948006463. OCLC 34411408.

- ^ "WISDOM SUPREME | Aristotle's Four Causes". Archived from the original on 15 August 2018. Retrieved 9 October 2012.

- ^ Soccio, D.J. (2011). Archetypes of Wisdom: An Introduction to Philosophy (8th ed.). Wadsworth. p. 167. ISBN 9781111837792.

- ^ Falcon, Andrea (2012). Edward N. Zalta (ed.). "Aristotle on Causality". The Stanford Encyclopedia of Philosophy (Winter 2012 ed.).

In the Physics, Aristotle builds on his general account of the four causes by developing explanatory principles that are specific to the study of nature. Here Aristotle insists that all four modes of explanation are called for in the study of natural phenomena, and that the job of "the student of nature is to bring the why-question back to them all in the way appropriate to the science of nature" (Phys. 198 a 21–23). The best way to understand this methodological recommendation is the following: the science of nature is concerned with natural bodies insofar as they are subject to change, and the job of the student of nature is to provide the explanation of their natural change. The factors that are involved in the explanation of natural change turn out to be matter, form, that which produces the change, and the end of this change. Note that Aristotle does not say that all four explanatory factors are involved in the explanation of each and every instance of natural change. Rather, he says that an adequate explanation of natural change may involve a reference to all of them. Aristotle goes on by adding a specification on his doctrine of the four causes: the form and the end often coincide, and they are formally the same as that which produces the change (Phys. 198 a 23–26).

- ^ Aristotle. Aristotle in 23 Volumes, Vols. 17, 18, translated by Hugh Tredennick. Cambridge, MA, Harvard University Press; London, William Heinemann Ltd. 1933, 1989. (hosted at perseus.tufts.edu.)

- ^ Sextus Empiricus, Outlines of Pyrrhonism Book III Chapter 5 Section 17

- ^ Sextus Empiricus, Outlines of Pyrrhonism Book III Chapter 5 Section 20

- ^ a b William E. May (April 1970). "Knowledge of Causality in Hume and Aquinas". The Thomist. 34. Archived from the original on 1 May 2011. Retrieved 6 April 2011.

- ^ O'Meara, T.F. (2018). "The dignity of being a cause". Open Theology. 4 (1): 186–191. doi:10.1515/opth-2018-0013.

Further reading

- Chisholm, Hugh, ed. (1911). . Encyclopædia Britannica. Vol. 5 (11th ed.). Cambridge University Press. pp. 557–558.

- Arthur Danto (1965). Analytical Philosophy of History. Cambridge University Press.

- Idem, 'Complex Events', Philosophy and Phenomenological Research, 30 (1969), 66–77.

- Idem, 'On Explanations in History', Philosophy of Science, 23 (1956), 15–30.

- Green, Celia (2003). The Lost Cause: Causation and the Mind-Body Problem. Oxford: Oxford Forum. ISBN 0-9536772-1-4. Includes three chapters on causality at the microlevel in physics.

- Hewitson, Mark (2014). History and Causality. Palgrave Macmillan. ISBN 978-1-137-37239-0.

- Little, Daniel (1998). Microfoundations, Method and Causation: On the Philosophy of the Social Sciences. New York: Transaction.

- Lloyd, Christopher (1993). The Structures of History. Oxford: Blackwell.

- Idem (1986). Explanation in Social History. Oxford: Blackwell.

- Maurice Mandelbaum (1977). The Anatomy of Historical Knowledge. Baltimore: Johns Hopkins Press.

- Judea Pearl (2000). Causality: Models, Reasoning, and Inference. 2nd edition, 2009. Cambridge University Press. ISBN 978-0-521-77362-1

- Rosenberg, M. (1968). The Logic of Survey Analysis. New York: Basic Books, Inc.

- Spirtes, Peter, Clark Glymour and Richard Scheines. Causation, Prediction, and Search. MIT Press. ISBN 0-262-19440-6

- University of California journal articles, including Judea Pearl's articles between 1984 and 1998: Technical Reports search results.

- Miguel Espinoza, Théorie du déterminisme causal, L'Harmattan, Paris, 2006. ISBN 2-296-01198-5.

External links

- Causality at PhilPapers

- Causality at the Indiana Philosophy Ontology Project

- Causation – Internet Encyclopedia of Philosophy

- Metaphysics of Science – Internet Encyclopedia of Philosophy

- Causal Processes at the Stanford Encyclopedia of Philosophy

- The Art and Science of Cause and Effect – A slide show and tutorial lecture by Judea Pearl

- Donald Davidson: Causal Explanation of Action – The Internet Encyclopedia of Philosophy

- Causal inference in statistics: An overview – By Judea Pearl (September 2009)

- An R implementation of causal calculus

- TimeSleuth – A tool for discovering causality