CUDA

| Developer(s) | NVIDIA Corporation |
|---|---|
| Stable release | 3.2 / September 17, 2010 |
| Operating system | Windows 7, Windows Vista, Windows XP, Windows Server 2008, Windows Server 2003, Linux, Mac OS X |
| Type | GPGPU |
| License | Proprietary, Freeware |
| Website | Nvidia's CUDA zone |

CUDA (an acronym for Compute Unified Device Architecture) is a parallel computing architecture developed by NVIDIA. CUDA is the computing engine in NVIDIA graphics processing units (GPUs) that is accessible to software developers through variants of industry-standard programming languages. Programmers use 'C for CUDA' (C with NVIDIA extensions and certain restrictions), compiled through a PathScale Open64 C compiler,[1] to code algorithms for execution on the GPU. The CUDA architecture shares a range of computational interfaces with two competitors: the Khronos Group's Open Computing Language[2] and Microsoft's DirectCompute.[3] Third-party wrappers are also available for Python, Perl, Fortran, Java, Ruby, Lua, and MATLAB.

CUDA gives developers access to the virtual instruction set and memory of the parallel computational elements in CUDA GPUs. Using CUDA, the latest NVIDIA GPUs become accessible for computation in the same way as CPUs. Unlike CPUs, however, GPUs have a parallel throughput architecture that emphasizes executing many concurrent threads slowly, rather than executing a single thread very fast. This approach of solving general-purpose problems on GPUs is known as GPGPU.

In the computer game industry, in addition to graphics rendering, GPUs are used in game physics calculations (physical effects like debris, smoke, fire, fluids); examples include PhysX and Bullet. CUDA has also been used to accelerate non-graphical applications in computational biology, cryptography and other fields by an order of magnitude or more.[4][5][6][7] An example of this is the BOINC distributed computing client.[8]

CUDA provides both a low level API and a higher level API. The initial CUDA SDK was made public on 15 February 2007, for Microsoft Windows and Linux. Mac OS X support was later added in version 2.0[9], which supersedes the beta released February 14, 2008.[10] CUDA works with all NVIDIA GPUs from the G8X series onwards, including GeForce, Quadro and the Tesla line. NVIDIA states that programs developed for the GeForce 8 series will also work without modification on all future NVIDIA video cards, due to binary compatibility.

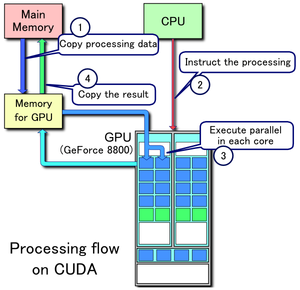

1. Copy data from main memory to GPU memory

2. The CPU instructs the GPU to start processing

3. The GPU executes the work in parallel on each core

4. Copy the result from GPU memory back to main memory
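
The four steps above can be modelled without a GPU. The following is a minimal CPU-only sketch in plain Python. The class `DeviceMemory` and the functions `launch`, `square_kernel` and `run_flow` are hypothetical names introduced for illustration; they are not part of CUDA or PyCUDA, and the loop runs sequentially where a real GPU would run the kernel invocations in parallel.

```python
# CPU-only model of the four-step processing flow. No GPU is used;
# every name below is hypothetical and exists only for illustration.

class DeviceMemory:
    """Stands in for a buffer in GPU memory, separate from host memory."""
    def __init__(self, size):
        self.data = [0.0] * size


def copy_host_to_device(dev, host):
    """Step 1: copy input data from main memory to (modelled) GPU memory."""
    dev.data[:len(host)] = host


def launch(kernel, grid_dim, block_dim, *args):
    """Steps 2 and 3: invoke the kernel once per (block, thread) pair.

    A real GPU executes these invocations in parallel; this model
    simply loops over them in order.
    """
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


def copy_device_to_host(dev):
    """Step 4: copy the result from (modelled) GPU memory to main memory."""
    return list(dev.data)


def square_kernel(block_idx, block_dim, thread_idx, buf, n):
    """Each invocation squares one element, selected by its global index."""
    i = block_idx * block_dim + thread_idx
    if i < n:  # guard: the last block may have surplus threads
        buf.data[i] = buf.data[i] * buf.data[i]


def run_flow(host_in, block_dim=4):
    n = len(host_in)
    dev = DeviceMemory(n)
    copy_host_to_device(dev, host_in)                    # step 1
    grid_dim = (n + block_dim - 1) // block_dim          # blocks needed
    launch(square_kernel, grid_dim, block_dim, dev, n)   # steps 2 and 3
    return copy_device_to_host(dev)                      # step 4


if __name__ == "__main__":
    print(run_flow([1.0, 2.0, 3.0, 4.0, 5.0]))
```

In an actual CUDA program, steps 1 and 4 correspond to explicit memory copies between host and device, and steps 2 and 3 to a kernel launch.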

Advantages

CUDA has several advantages over traditional general purpose computation on GPUs (GPGPU) using graphics APIs.

- Scattered reads – code can read from arbitrary addresses in memory.

- Shared memory – CUDA exposes a fast shared memory region (16KB in size) that can be shared amongst threads. This can be used as a user-managed cache, enabling higher bandwidth than is possible using texture lookups.[11]

- Faster downloads and readbacks to and from the GPU

- Full support for integer and bitwise operations, including integer texture lookups.

Limitations

- CUDA (with compute capability 1.x) uses a recursion-free, function-pointer-free subset of the C language, plus some simple extensions. However, unlike in other C language runtime environments, a single process must run spread across multiple disjoint memory spaces. Fermi GPUs now have (nearly) full support of C++, with the following exception:

- Code compiled for devices with compute capability 2.0 (Fermi) and greater may make use of C++ classes, as long as none of the member functions are virtual (this restriction will be removed in some future release). [See CUDA C Programming Guide 3.1 - Appendix D.6]

- Texture rendering is not supported.

- For double precision (only supported in newer GPUs like GTX 260[12]) there are some deviations from the IEEE 754 standard: round-to-nearest-even is the only supported rounding mode for reciprocal, division, and square root. In single precision, denormals and signalling NaNs are not supported; only two IEEE rounding modes are supported (chop and round-to-nearest even), and those are specified on a per-instruction basis rather than in a control word; and the precision of division/square root is slightly lower than single precision.

- The bus bandwidth and latency between the CPU and the GPU may be a bottleneck.

- Threads should run in groups of at least 32 for best performance, with the total number of threads numbering in the thousands. Branches in the program code do not impact performance significantly, provided that all 32 threads in a group take the same execution path; the SIMD execution model becomes a significant limitation for any inherently divergent task (e.g. traversing a space partitioning data structure during raytracing).

- Unlike OpenCL, CUDA runs only on GPUs from NVIDIA (GeForce 8 series and above, Quadro and Tesla).[13]
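
The effect of divergent branches within a group of 32 threads (a warp, in CUDA terminology) can be illustrated with a deliberately simplified cost model. The sketch below is plain Python and assumes that each distinct execution path taken inside a warp costs one serialized pass of equal length; real hardware cost depends on the length of each path and on where the threads reconverge. The function name `serialized_passes` is hypothetical.

```python
WARP_SIZE = 32  # threads that execute together, as stated in the text


def serialized_passes(path_per_thread):
    """Count execution passes under a simplified divergence model.

    path_per_thread[i] identifies the branch path chosen by thread i.
    Assumption: within one warp, each distinct path is executed in its
    own pass, so a warp costs as many passes as it has distinct paths.
    """
    passes = 0
    for start in range(0, len(path_per_thread), WARP_SIZE):
        warp = path_per_thread[start:start + WARP_SIZE]
        passes += len(set(warp))
    return passes


if __name__ == "__main__":
    n = 64  # two warps
    uniform = [0] * n                                    # every thread agrees
    per_warp = [(i // WARP_SIZE) % 2 for i in range(n)]  # warps differ, threads in a warp agree
    per_thread = [i % 2 for i in range(n)]               # neighbouring threads disagree
    print(serialized_passes(uniform))
    print(serialized_passes(per_warp))
    print(serialized_passes(per_thread))
```

Under this model, a branch that differs only between warps costs no more than a uniform branch, while a branch that differs between neighbouring threads doubles the number of passes.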

Supported GPUs

Compute capability table (version of CUDA supported)[14]

| Compute capability (version) | GPUs |
|---|---|
| 1.0 | G80 |
| 1.1 | G86, G84, G98, G96, G96b, G94, G94b, G92, G92b |
| 1.2 | GT218, GT216, GT215 |
| 1.3 | GT200, GT200b |
| 2.0 | GF100, GF104, GF106, GF108 |
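
As a small illustration, the table can be turned into a lookup that checks whether a chip meets the minimum compute capability for a feature. The sketch below is plain Python and its function names are hypothetical. The threshold for C++ classes (2.0) comes from the Limitations section; the threshold for double precision (1.3) is an assumption based on the GT200-class GTX 260 mentioned there.

```python
# Chip names and versions copied from the compute capability table.
_TABLE = {
    (1, 0): ["G80"],
    (1, 1): ["G86", "G84", "G98", "G96", "G96b", "G94", "G94b", "G92", "G92b"],
    (1, 2): ["GT218", "GT216", "GT215"],
    (1, 3): ["GT200", "GT200b"],
    (2, 0): ["GF100", "GF104", "GF106", "GF108"],
}

# Invert the table: chip name -> (major, minor) compute capability.
COMPUTE_CAPABILITY = {
    chip: version for version, chips in _TABLE.items() for chip in chips
}


def meets(chip, minimum):
    """True if `chip` has at least the `minimum` (major, minor) capability.

    Raises KeyError for chips that are not listed in the table.
    """
    return COMPUTE_CAPABILITY[chip] >= minimum


def supports_double_precision(chip):
    # Assumption: double precision requires compute capability 1.3.
    return meets(chip, (1, 3))


def supports_cpp_classes(chip):
    # From the Limitations section: C++ classes need compute capability 2.0.
    return meets(chip, (2, 0))


if __name__ == "__main__":
    for chip in ("G80", "GT200", "GF100"):
        print(chip, supports_double_precision(chip), supports_cpp_classes(chip))
```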

A table of devices officially supporting CUDA (Note that many applications require at least 256 MB of dedicated VRAM).[15]


See the Comparison of Nvidia graphics processing units for more information.

Example

This example code in C++ loads a texture from an image into an array on the GPU:

```cpp
// Texture references must be declared at file scope so the kernel can see them.
texture<float, 2, cudaReadModeElementType> tex;

// Kernel: each thread reads one texel and writes it to the output array.
__global__ void kernel(float* odata, int height, int width)
{
    unsigned int x = blockIdx.x*blockDim.x + threadIdx.x;
    unsigned int y = blockIdx.y*blockDim.y + threadIdx.y;
    if (x < width && y < height) {
        float c = tex2D(tex, x, y);
        odata[y*width+x] = c;
    }
}

// image:   host pointer to width*height floats
// d_odata: device pointer to an output buffer of the same size
void foo(const float* image, float* d_odata, int width, int height)
{
    cudaArray* cu_array;

    // Allocate array
    cudaChannelFormatDesc description = cudaCreateChannelDesc<float>();
    cudaMallocArray(&cu_array, &description, width, height);

    // Copy image data to array, starting at offset (0, 0)
    cudaMemcpyToArray(cu_array, 0, 0, image, width*height*sizeof(float), cudaMemcpyHostToDevice);

    // Set texture parameters (default)
    tex.addressMode[0] = cudaAddressModeClamp;
    tex.addressMode[1] = cudaAddressModeClamp;
    tex.filterMode = cudaFilterModePoint;
    tex.normalized = false; // do not normalize coordinates

    // Bind the array to the texture
    cudaBindTextureToArray(tex, cu_array);

    // Run kernel: round the grid size up so the whole image is covered
    dim3 blockDim(16, 16, 1);
    dim3 gridDim((width + blockDim.x - 1) / blockDim.x, (height + blockDim.y - 1) / blockDim.y, 1);
    kernel<<< gridDim, blockDim, 0 >>>(d_odata, height, width);

    // Unbind the array from the texture and release it
    cudaUnbindTexture(tex);
    cudaFreeArray(cu_array);
} //end foo()
```
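
The grid size in the example is computed with a rounding-up integer division, so that the blocks cover the whole image even when its dimensions are not multiples of the block size. The arithmetic can be checked in isolation with the following plain-Python sketch; the function names `grid_dim` and `idle_threads` are hypothetical, and the 16×16 block shape is taken from the example.

```python
def grid_dim(width, height, block=(16, 16)):
    """Number of blocks per axis needed to cover a width x height image.

    (n + b - 1) // b is integer division rounded up, the same expression
    used for gridDim in the example.
    """
    bx, by = block
    return ((width + bx - 1) // bx, (height + by - 1) // by)


def idle_threads(width, height, block=(16, 16)):
    """Threads launched beyond the image bounds.

    These are the threads that fail the bounds check
    `x < width && y < height` in the kernel and do no work.
    """
    bx, by = block
    gx, gy = grid_dim(width, height, block)
    return gx * bx * gy * by - width * height


if __name__ == "__main__":
    print(grid_dim(640, 480))      # dimensions are multiples of 16
    print(grid_dim(100, 50))       # dimensions are not multiples of 16
    print(idle_threads(100, 50))
```

When both dimensions are multiples of the block size, no threads are idle; otherwise the surplus threads in the last row and column of blocks are masked out by the bounds check.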

Below is an example in Python that computes the element-wise product of two arrays on the GPU. It uses PyCUDA, an unofficial set of Python language bindings.

```python
import pycuda.compiler as comp
import pycuda.driver as drv
import numpy
import pycuda.autoinit  # initializes the CUDA driver and creates a context

# Compile the CUDA C kernel at run time
mod = comp.SourceModule("""
__global__ void multiply_them(float *dest, float *a, float *b)
{
    const int i = threadIdx.x;
    dest[i] = a[i] * b[i];
}
""")

multiply_them = mod.get_function("multiply_them")

a = numpy.random.randn(400).astype(numpy.float32)
b = numpy.random.randn(400).astype(numpy.float32)

dest = numpy.zeros_like(a)

# One block of 400 threads, one thread per array element
multiply_them(
    drv.Out(dest), drv.In(a), drv.In(b),
    block=(400,1,1))

# Print the difference between the GPU result and the CPU result
print(dest - a*b)
```

Additional Python bindings that simplify matrix multiplication are provided by pycublas.

```python
import numpy
from pycublas import CUBLASMatrix

A = CUBLASMatrix( numpy.mat([[1,2,3],[4,5,6]],numpy.float32) )
B = CUBLASMatrix( numpy.mat([[2,3],[4,5],[6,7]],numpy.float32) )

C = A*B
print(C.np_mat())
```

Language bindings

- Python - PyCUDA, KappaCUDA

- Java - jCUDA, JCuda, JCublas, JCufft

- .NET - CUDA.NET

- MATLAB - Jacket, GPUmat

- Fortran - FORTRAN CUDA, PGI CUDA Fortran Compiler

- Perl - KappaCUDA

- Ruby - KappaCUDA

- Lua - KappaCUDA

Current CUDA architectures

The current-generation CUDA architecture (codename "Fermi"), standard on NVIDIA's GeForce 400 Series (GF100) GPUs released on 2010-03-27,[16] is designed from the ground up to natively support more programming languages, such as C++. It has eight times the peak double-precision floating-point performance of NVIDIA's previous-generation Tesla GPU. It also introduced several new features,[17] including:

- up to 512 CUDA cores and 3.0 billion transistors

- NVIDIA Parallel DataCache technology

- NVIDIA GigaThread engine

- ECC memory support

- Native support for Visual Studio

Current and future usages of CUDA architecture

- Accelerated rendering of 3D graphics

- Real-time cloth simulation (OptiTex.com)

- Distributed calculations, such as predicting the native conformation of proteins

- Medical analysis simulations, for example virtual reality based on CT and MRI scan images.

- Physical simulations, in particular in fluid dynamics.

- Environment statistics

- Accelerated encryption, decryption and compression

- Accelerated interconversion of video file formats

- Artificial intelligence

See also

- GeForce 8 series

- GeForce 9 series

- GeForce 200 Series

- GeForce 400 Series

- Nvidia Quadro - Nvidia's workstation graphics solution

- Nvidia Tesla - Nvidia's first dedicated general purpose GPU (graphics processing unit)

- GPGPU - general purpose computation on GPUs.

- OpenCL - The cross-platform standard supported by both NVidia and AMD/ATI

- DirectCompute - Microsoft API for GPU Computing in Windows Vista and Windows 7

- BrookGPU

- Vectorization

- Lib Sh

- Comparison of MPI, OpenMP, and Stream Processing

- Nvidia Corporation

- Graphics Processing Unit (GPU)

- Stream processing

- Shader

- Larrabee

- Molecular modeling on GPU

- AMD FireStream (ATI GPUs)

- Close to Metal

References

- ^ NVIDIA Clears Water Muddied by Larrabee Shane McGlaun (Blog) - August 5, 2008 - DailyTech

- ^ First OpenCL demo on a GPU on YouTube

- ^ DirectCompute Ocean Demo Running on NVIDIA CUDA-enabled GPU on YouTube

- ^ Giorgos Vasiliadis, Spiros Antonatos, Michalis Polychronakis, Evangelos P. Markatos and Sotiris Ioannidis (2008). "Gnort: High Performance Network Intrusion Detection Using Graphics Processors" (PDF). Proceedings of the 11th International Symposium on Recent Advances in Intrusion Detection (RAID). Boston, MA, USA.

- ^ Schatz, M.C., Trapnell, C., Delcher, A.L., Varshney, A. (2007). "High-throughput sequence alignment using Graphics Processing Units". BMC Bioinformatics. 8: 474. doi:10.1186/1471-2105-8-474. PMC 2222658. PMID 18070356.

- ^ Pyrit - Google Code http://code.google.com/p/pyrit/

- ^ Use your NVIDIA GPU for scientific computing, BOINC official site (December 18, 2008)

- ^ NVIDIA CUDA Software Development Kit (CUDA SDK) - Release Notes Version 2.0 for MAC OSX

- ^ CUDA 1.1 - Now on Mac OS X- (Posted on Feb 14, 2008)

- ^ Silberstein, Mark (2007). "Efficient computation of Sum-products on GPUs" (PDF).

- ^ CUDA and double precision floating point numbers

- ^ "CUDA-Enabled Products". CUDA Zone. NVIDIA Corporation. Retrieved 2008-11-03.

- ^ NVIDIA CUDA Compute Capability Comparative Table

- ^ CUDA-Enabled GPU Products

- ^ http://www.hardware.info/nl-NL/video/wmGTacRpaA/nVidia_GeForce_GTX_480_special/ Hardware.info broadcast about Nvidia GeForce GTX 470 and 480

- ^ http://www.nvidia.com/object/fermi_architecture.html The Current Generation CUDA Architecture, Code Named Fermi

External links


- Official site

- Nvidia Parallel Nsight

- Nvidia CUDA developer registration for professional developers and researchers

- Nvidia CUDA GPU Computing developer forums

- Programming Massively Parallel Processors: A Hands-on Approach

- Intro to GPGPU computing featuring CUDA and OpenCL examples

- A conversation with Jen-Hsun Huang, CEO Nvidia Charlie Rose, February 5, 2009

- Scientific Publications, Videos and Software using CUDA

- How-to Guide: Running CUDA on Visual Studio 2008

- Beyond3D – Introducing CUDA Nvidia's Vision for GPU Computing March 10, 2007

- University of Illinois Nvidia CUDA Course taught by Wen-mei Hwu and David Kirk, Spring 2009

- CUDA: Breaking the Intel & AMD Dominance

- NVidia CUDA Tutorial Slides (from DoD HPCMP2009)

- Ascalaph Liquid GPU molecular dynamics.

- CUDA implementation for multi-core processors

- Integrate CUDA with Visual C++, September 26, 2008

- CUDA.NET - .NET library for CUDA, Linux/Windows compliant

- Using NVIDIA GPU for scientific computing with BOINC software

- CUDA.CS.MSU.SU Russian CUDA developer community

- Enable Intellisense for CUDA in Visual Studio 2008, April 29, 2009

- CUDA Tutorials for high performance computing

- An introduction to CUDA (French)

- NVidia CUDA Tutorial & Examples (from ISC2009)

- GPUBrasil.com, First website on GPGPU in Portuguese

- 3D cloth Simulation OptiTex.com, Implementation of CUDA in Cloth simulation

- DDJ CUDA series, First in the Doctor Dobb's Journal series teaching CUDA (over 13 articles by Rob Farber)