History of computing hardware: Difference between revisions

Ancheta Wis (talk | contribs) m rv tests |


Revision as of 16:21, 5 September 2008

The history of computer hardware encompasses the hardware, its architecture, and its impact on software. The elements of computing hardware have undergone significant improvement over their history. This improvement has triggered world-wide use of the technology, performance has improved and the price has declined.[1] Computers have become commodities accessible to ever-increasing sectors of the world's population.[2] Computing hardware has become a platform for uses other than computation, such as automation, communication, control, entertainment, and education. Each field in turn has imposed its own requirements on the hardware, which has evolved in response to those requirements.[3]

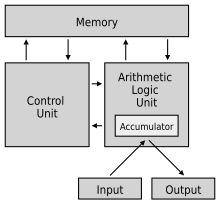

The von Neumann architecture unifies our current computing hardware implementations.[4] Since digital computers rely on digital storage, and tend to be limited by the size and speed of memory, the history of computer data storage is tied to the development of computers. The major elements of computing hardware implement abstractions: input,[5] output,[6] memory,[7] and processor. A processor is composed of control[8] and datapath.[9] In the von Neumann architecture, control of the datapath is stored in memory. This allowed control to become an automatic process; the datapath could be under software control, perhaps in response to events. Beginning with mechanical datapaths such as the abacus and astrolabe, the hardware first started using analogs for a computation, including water and even air as the analog quantities: analog computers have used lengths, pressures, voltages, and currents to represent the results of calculations.[10] Eventually the voltages or currents were standardized, and then digitized. Digital computing elements have ranged from mechanical gears, to electromechanical relays, to vacuum tubes, to transistors, and to integrated circuits, all of which are currently implementing the von Neumann architecture.[11]


Before computer hardware

Originally calculations were computed by humans, whose job title was computers.[12] These human computers were typically engaged in the calculation of a mathematical expression, say for astronomical ephemerides, for artillery firing tables, or for nautical navigation. The calculations of this period were specialized and expensive, requiring years of training in mathematics.

Earliest calculators

Devices have been used to aid computation for thousands of years, mostly using one-to-one correspondence with our fingers.[13] The earliest counting device was probably a form of tally stick. Later record keeping aids throughout the Fertile Crescent included clay shapes, which represented counts of items, probably livestock or grains, sealed in containers.[14] The abacus was used for arithmetic tasks; an early form of abacus was in use in Babylonia as early as 2400 BC. Since then, many other forms of reckoning boards or tables have been invented. In a medieval counting house, a checkered cloth would be placed on a table, and markers moved around on it according to certain rules, as an aid to calculating sums of money (this is the origin of "Exchequer" as a term for a nation's treasury).

A number of analog computers were constructed in ancient and medieval times to perform astronomical calculations. These include the Antikythera mechanism and the astrolabe from ancient Greece (c. 150–100 BC), which are generally regarded as the first mechanical analog computers.[15] Other early versions of mechanical devices used to perform some type of calculations include the planisphere and other mechanical computing devices invented by Abū Rayhān al-Bīrūnī (c. AD 1000); the equatorium and universal latitude-independent astrolabe by Abū Ishāq Ibrāhīm al-Zarqālī (c. AD 1015); the astronomical analog computers of other medieval Muslim astronomers and engineers; and the astronomical clock tower of Su Song (c. AD 1090) during the Song Dynasty.

Scottish mathematician and physicist John Napier noted multiplication and division of numbers could be performed by addition and subtraction, respectively, of logarithms of those numbers. While producing the first logarithmic tables Napier needed to perform many multiplications, and it was at this point that he designed Napier's bones, an abacus-like device used for multiplication and division.[16] Since real numbers can be represented as distances or intervals on a line, the slide rule was invented in the 1620s to allow multiplication and division operations to be carried out significantly faster than was previously possible.[17] Slide rules were used by generations of engineers and other mathematically inclined professional workers, until the invention of the pocket calculator. The engineers in the Apollo program to send a man to the moon made many of their calculations on slide rules, which were accurate to three or four significant figures.[18]
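Napier's observation can be illustrated with a short sketch. The function names below are hypothetical and chosen only for this example; the rounding helper is an assumption that loosely mimics the three-significant-figure reading of a slide rule and does not model any particular instrument.

```python
import math

def multiply_via_logs(a: float, b: float) -> float:
    """Multiply two positive numbers by adding their base-10 logarithms."""
    return 10 ** (math.log10(a) + math.log10(b))

def divide_via_logs(a: float, b: float) -> float:
    """Divide two positive numbers by subtracting their base-10 logarithms."""
    return 10 ** (math.log10(a) - math.log10(b))

def round_sig(x: float, digits: int = 3) -> float:
    """Round x to a given number of significant figures."""
    if x == 0:
        return 0.0
    exponent = math.floor(math.log10(abs(x)))
    return round(x, digits - 1 - exponent)

if __name__ == "__main__":
    product = multiply_via_logs(12.0, 34.0)
    # Keep only three significant figures, as a slide-rule user would read off.
    print(round_sig(product, 3))
```

A slide rule performs the same addition physically: two logarithmic scales are slid against each other so that lengths, rather than numbers, are added.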

German polymath Wilhelm Schickard built the first digital mechanical calculator in 1623, and thus became the father of the computing era.[19] Since his calculator used techniques such as cogs and gears first developed for clocks, it was also called a 'calculating clock'. It was put to practical use by his friend Johannes Kepler, who revolutionized astronomy when he condensed decades of astronomical observations into algebraic expressions. An original calculator by Pascal (1640) is preserved in the Zwinger Museum. Machines by Blaise Pascal (the Pascaline, 1642) and Gottfried Wilhelm von Leibniz (1671) followed. Leibniz once said "It is unworthy of excellent men to lose hours like slaves in the labour of calculation which could safely be relegated to anyone else if machines were used."[20]

Around 1820, Charles Xavier Thomas created the first successful, mass-produced mechanical calculator, the Thomas Arithmometer, that could add, subtract, multiply, and divide.[21] It was mainly based on Leibniz' work. Mechanical calculators, like the base-ten addiator, the comptometer, the Monroe, the Curta and the Addo-X remained in use until the 1970s. Leibniz also described the binary numeral system,[22] a central ingredient of all modern computers. However, up to the 1940s, many subsequent designs (including Charles Babbage's machines of the 1800s and even ENIAC of 1945) were based on the decimal system;[23] ENIAC's ring counters emulated the operation of the digit wheels of a mechanical adding machine.

In Japan, Ryōichi Yazu (矢頭 良一, Yazu Ryōichi, 1878–1908) had been studying engine-powered airplanes. He visited Mori Ōgai to show him a conceptual model of a calculator, and in 1901 he solicited further financing for his study. In 1902 he invented the JIDOSOROBAN (Automatic Soroban) and patented the Yazu Arithmometer. The calculator was gear-driven: it consisted of a single cylinder and 22 gears and accepted soroban-like input. It used a mixed base-2 and base-5 number system (a base 2-5, or bi-quinary, system). The carry and the end of a calculation were determined automatically.[24] More than 200 units of the arithmometer were produced and sold to the Ministry of War of Japan, the Home Ministry, and the Agricultural Experiment Station. Yazu then obtained funding for further study of his airplane model and succeeded in making a prototype engine, but the Wright brothers achieved powered human flight first, in 1903. Yazu died of disease five years later, in 1908. The Yazu Arithmometer was listed as Mechanical Engineering Heritage of Japan No. 30 in July 2008.[25][26][27]
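A mixed base-2 and base-5 representation of the kind used on a soroban can be sketched as follows. This is only an illustration of the number system: each decimal digit is split into a count of "five" beads (0 or 1) and a count of unit beads (0 to 4). The function names are hypothetical, and nothing here is meant to describe the internal mechanism of Yazu's machine.

```python
from typing import List, Tuple

def to_biquinary(digit: int) -> Tuple[int, int]:
    """Split a decimal digit into (fives, units), with fives in 0..1 and units in 0..4."""
    if not 0 <= digit <= 9:
        raise ValueError("digit must be between 0 and 9")
    return divmod(digit, 5)

def from_biquinary(fives: int, units: int) -> int:
    """Recombine a (fives, units) pair into a decimal digit."""
    return 5 * fives + units

def encode_number(n: int) -> List[Tuple[int, int]]:
    """Encode a non-negative integer digit by digit, most significant digit first."""
    return [to_biquinary(int(ch)) for ch in str(n)]

if __name__ == "__main__":
    for pair in encode_number(1902):
        print(pair, "->", from_biquinary(*pair))
```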

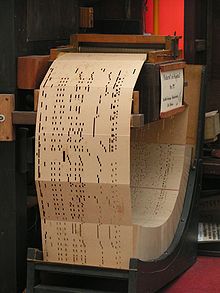

1801: punched card technology

As early as 1725 Basile Bouchon used a perforated paper loop in a loom to establish the pattern to be reproduced on cloth, and in 1726 his co-worker Jean-Baptiste Falcon improved on his design by using perforated paper cards attached to one another for efficiency in adapting and changing the program. The Bouchon-Falcon loom was semi-automatic and required manual feed of the program. In 1801, Joseph-Marie Jacquard developed a loom in which the pattern being woven was controlled by punched cards. The series of cards could be changed without changing the mechanical design of the loom. This was a landmark point in programmability.

In 1833, Charles Babbage moved on from developing his difference engine to developing a more complete design, the analytical engine, which would draw directly on Jacquard's punched cards for its programming.[28] In 1835, Babbage described his analytical engine. It was the plan of a general-purpose programmable computer, employing punch cards for input and a steam engine for power. One crucial invention was to use gears for the function served by the beads of an abacus. In a real sense, computers all contain automatic abacuses (the datapath, arithmetic logic unit, or floating-point unit). His initial idea was to use punch-cards to control a machine that could calculate and print logarithmic tables with huge precision (a specific purpose machine). Babbage's idea soon developed into a general-purpose programmable computer, his analytical engine. While his design was sound and the plans were probably correct, or at least debuggable, the project was slowed by various problems. Babbage was a difficult man to work with and argued with anyone who didn't respect his ideas. All the parts for his machine had to be made by hand. Small errors in each item can sometimes sum up to large discrepancies in a machine with thousands of parts, which required these parts to be much better than the usual tolerances needed at the time. The project dissolved in disputes with the artisan who built parts and was ended with the depletion of government funding. Ada Lovelace, Lord Byron's daughter, translated and added notes to the "Sketch of the Analytical Engine" by Federico Luigi, Conte Menabrea.[29]

A reconstruction of the Difference Engine II, an earlier, more limited design, has been operational since 1991 at the London Science Museum. With a few trivial changes, it works as Babbage designed it and shows that Babbage was right in theory. The museum used computer-operated machine tools to construct the necessary parts, following tolerances which a machinist of the period would have been able to achieve. The failure of Babbage to complete the engine can be chiefly attributed to difficulties not only related to politics and financing, but also to his desire to develop an increasingly sophisticated computer.[30] Following in the footsteps of Babbage, although unaware of his earlier work, was Percy Ludgate, an accountant from Dublin, Ireland. He independently designed a programmable mechanical computer, which he described in a work that was published in 1909.

In 1890, the United States Census Bureau used punched cards, sorting machines, and tabulating machines designed by Herman Hollerith to handle the flood of data from the decennial census mandated by the Constitution.[31] Hollerith's company eventually became the core of IBM. IBM developed punch card technology into a powerful tool for business data-processing and produced an extensive line of specialized unit record equipment. By 1950, the IBM card had become ubiquitous in industry and government. The warning printed on most cards intended for circulation as documents (checks, for example), "Do not fold, spindle or mutilate," became a motto for the post-World War II era.[32]

Leslie Comrie's articles on punched card methods and W.J. Eckert's publication of Punched Card Methods in Scientific Computation in 1940 described techniques which were sufficiently advanced to solve differential equations[33] or perform multiplication and division using floating point representations, all on punched cards and unit record machines. In the image of the tabulator (see left), note the patch panel, which is visible on the right side of the tabulator. A row of toggle switches is above the patch panel. The Thomas J. Watson Astronomical Computing Bureau at Columbia University performed astronomical calculations representing the state of the art in computing.[34]

Computer programming in the punch card era revolved around the computer center. The computer users, for example science and engineering students at universities, would submit their programming assignments to their local computer center in the form of a stack of cards, one card per program line. They then had to wait for the program to be queued for processing, compiled, and executed. In due course a printout of any results, marked with the submitter's identification, would be placed in an output tray outside the computer center. In many cases these results would consist solely of a printout of error messages regarding program syntax and the like, necessitating another edit-compile-run cycle.[35] Punched cards are still used and manufactured to this day, and their distinctive dimensions[36] (and 80-column capacity) can still be recognized in forms, records, and programs around the world.

1930s–1960s: desktop calculators

By the 1900s, earlier mechanical calculators, cash registers, accounting machines, and so on were redesigned to use electric motors, with gear position as the representation for the state of a variable. The word "computer" was a job title assigned to people who used these calculators to perform mathematical calculations. By the 1920s Lewis Fry Richardson's interest in weather prediction led him to propose human computers and numerical analysis to model the weather; to this day, the most powerful computers on Earth are needed to adequately model its weather using the Navier-Stokes equations.[37]

Companies like Friden, Marchant Calculator and Monroe made desktop mechanical calculators from the 1930s that could add, subtract, multiply and divide. During the Manhattan Project, future Nobel laureate Richard Feynman was the supervisor of the roomful of human computers, many of them women mathematicians, who understood the differential equations which were being solved for the war effort. After the war, even the renowned Stanisław Ulam was pressed into service to translate the mathematics into computable approximations for the hydrogen bomb.

In 1948, the Curta was introduced. This was a small, portable, mechanical calculator that was about the size of a pepper grinder. Over time, during the 1950s and 1960s a variety of different brands of mechanical calculator appeared on the market. The first all-electronic desktop calculator was the British ANITA Mk.VII, which used a Nixie tube display and 177 subminiature thyratron tubes. In June 1963, Friden introduced the four-function EC-130. It had an all-transistor design, 13-digit capacity on a 5-inch (130 mm) CRT, and introduced reverse Polish notation (RPN) to the calculator market at a price of $2200. The model EC-132 added square root and reciprocal functions. In 1965, Wang Laboratories produced the LOCI-2, a 10-digit transistorized desktop calculator that used a Nixie tube display and could compute logarithms.

Advanced analog computers

Before World War II, mechanical and electrical analog computers were considered the "state of the art", and many thought they were the future of computing. Analog computers take advantage of the strong similarities between the mathematics of small-scale properties — the position and motion of wheels or the voltage and current of electronic components — and the mathematics of other physical phenomena,[38] e.g. ballistic trajectories, inertia, resonance, energy transfer, momentum, etc. They model physical phenomena with electrical voltages and currents[39][40] as the analog quantities.

Centrally, these analog systems work by creating electrical analogs of other systems, allowing users to predict behavior of the systems of interest by observing the electrical analogs.[41] The most useful of the analogies was the way the small-scale behavior could be represented with integral and differential equations, and could be thus used to solve those equations. An ingenious example of such a machine, using water as the analog quantity, was the water integrator built in 1928; an electrical example is the Mallock machine built in 1941. A planimeter is a device which does integrals, using distance as the analog quantity. Until the 1980s, HVAC systems used air both as the analog quantity and the controlling element. Unlike modern digital computers, analog computers are not very flexible, and need to be reconfigured (i.e., reprogrammed) manually to switch them from working on one problem to another. Analog computers had an advantage over early digital computers in that they could be used to solve complex problems using behavioral analogues while the earliest attempts at digital computers were quite limited.

Since computers were rare in this era, the solutions were often hard-coded into paper forms such as graphs and nomograms,[42] which could then produce analog solutions to these problems, such as the distribution of pressures and temperatures in a heating system. Some of the most widely deployed analog computers included devices for aiming weapons, such as the Norden bombsight[43] and the fire-control systems,[44] such as Arthur Pollen's Argo system for naval vessels. Some stayed in use for decades after WWII; the Mark I Fire Control Computer was deployed by the United States Navy on a variety of ships from destroyers to battleships. Other analog computers included the Heathkit EC-1, and the hydraulic MONIAC Computer which modeled econometric flows.[45]

The art of analog computing reached its zenith with the differential analyzer,[46] invented in 1876 by James Thomson and built by H. W. Nieman and Vannevar Bush at MIT starting in 1927. Fewer than a dozen of these devices were ever built; the most powerful was constructed at the University of Pennsylvania's Moore School of Electrical Engineering, where the ENIAC was built. Digital electronic computers like the ENIAC spelled the end for most analog computing machines, but hybrid analog computers, controlled by digital electronics, remained in substantial use into the 1950s and 1960s, and later in some specialized applications. A digital device is limited by its precision, that is, by the number of digits it carries,[47] whereas an analog device is limited by its accuracy.[48] As electronics progressed during the twentieth century, the problems of operating at low voltages while maintaining high signal-to-noise ratios[49] were steadily addressed, as shown below; a digital circuit is a specialized form of analog circuit, intended to operate at standardized settings (in the same vein, logic gates can be realized as forms of digital circuits). As digital computers became faster and gained larger memory (e.g., RAM or internal storage), they almost entirely displaced analog computers. Computer programming, or coding, has arisen as another human profession.

The era of modern computing began with a flurry of development before and during World War II, as electronic circuit elements[50] replaced mechanical equivalents and digital calculations replaced analog calculations. Machines such as the Z3, the Atanasoff–Berry Computer, the Colossus computers, and the ENIAC were built by hand using circuits containing relays or valves (vacuum tubes), and often used punched cards or punched paper tape for input and as the main (non-volatile) storage medium.

In this era, a number of different machines were produced with steadily advancing capabilities. At the beginning of this period, nothing remotely resembling a modern computer existed, except in the long-lost plans of Charles Babbage and the mathematical musings of Alan Turing and others. At the end of the era, devices like the EDSAC had been built, and are universally agreed to be digital computers. Defining a single point in the series as the "first computer" misses many subtleties (see the table "Defining characteristics of some early digital computers of the 1940s" below).

Alan Turing's 1936 paper[51] proved enormously influential in computing and computer science in two ways. Its main purpose was to prove that there were problems (namely the halting problem) that could not be solved by any sequential process. In doing so, Turing provided a definition of a universal computer which executes a program stored on tape. This construct came to be called a Turing machine; it replaces Kurt Gödel's more cumbersome universal language based on arithmetics. Except for the limitations imposed by their finite memory stores, modern computers are said to be Turing-complete, which is to say, they have algorithm execution capability equivalent to a universal Turing machine.
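The idea of a machine driven by a table of rules and a tape can be sketched in a few lines. The simulator below is a minimal illustration, not Turing's original formulation: the rule format, the state names, and the binary-increment rule table are all assumptions made for this example. The step limit is there because, as the halting problem implies, a simulator cannot decide in general whether an arbitrary rule table will ever stop.

```python
BLANK = "_"

def run_turing_machine(rules, tape_input, start_state, halt_state, max_steps=10_000):
    """Run a one-tape machine; rules maps (state, symbol) -> (state, write, move)."""
    tape = dict(enumerate(tape_input))  # sparse tape: cell index -> symbol
    head, state, steps = 0, start_state, 0
    while state != halt_state:
        if steps >= max_steps:
            raise RuntimeError("step limit reached; the machine may never halt")
        symbol = tape.get(head, BLANK)
        state, write, move = rules[(state, symbol)]
        tape[head] = write
        head += {"L": -1, "R": 1, "N": 0}[move]
        steps += 1
    if not tape:
        return ""
    cells = [tape.get(i, BLANK) for i in range(min(tape), max(tape) + 1)]
    return "".join(cells).strip(BLANK)

# Hypothetical rule table: add one to a binary number written on the tape.
INCREMENT = {
    ("right", "0"): ("right", "0", "R"),    # scan right to the end of the number
    ("right", "1"): ("right", "1", "R"),
    ("right", BLANK): ("carry", BLANK, "L"),
    ("carry", "1"): ("carry", "0", "L"),    # 1 + carry = 0, keep carrying
    ("carry", "0"): ("halt", "1", "N"),     # 0 + carry = 1, done
    ("carry", BLANK): ("halt", "1", "N"),   # carry past the leftmost digit
}

if __name__ == "__main__":
    print(run_turing_machine(INCREMENT, "1011", "right", "halt"))
```

Changing the rule table changes what the machine computes while the simulator itself stays fixed, which is the sense in which a universal machine "executes a program".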

For a computing machine to be a practical general-purpose computer, there must be some convenient read-write mechanism, punched tape, for example. With a knowledge of Alan Turing's theoretical 'universal computing machine' John von Neumann defined an architecture which uses the same memory both to store programs and data: virtually all contemporary computers use this architecture (or some variant). While it is theoretically possible to implement a full computer entirely mechanically (as Babbage's design showed), electronics made possible the speed and later the miniaturization that characterize modern computers.

There were three parallel streams of computer development in the World War II era; the first stream largely ignored, and the second stream deliberately kept secret. The first was the German work of Konrad Zuse. The second was the secret development of the Colossus computers in the UK. Neither of these had much influence on the various computing projects in the United States. The third stream of computer development, Eckert and Mauchly's ENIAC and EDVAC, was widely publicized.[52][53]

Program-controlled computers

Working in isolation in Germany, Konrad Zuse started construction in 1936 of his first Z-series calculators featuring memory and (initially limited) programmability. Zuse's purely mechanical, but already binary Z1, finished in 1938, never worked reliably due to problems with the precision of parts.

Zuse's subsequent machine, the Z3,[54] was finished in 1941. It was based on telephone relays and did work satisfactorily. The Z3 thus became the first functional program-controlled, all-purpose, digital computer. In many ways it was quite similar to modern machines, pioneering numerous advances, such as floating point numbers. Replacement of the hard-to-implement decimal system (used in Charles Babbage's earlier design) by the simpler binary system meant that Zuse's machines were easier to build and potentially more reliable, given the technologies available at that time.

Programs were fed into Z3 on punched films. Conditional jumps were missing, but since the 1990s it has been proved theoretically that Z3 was still a universal computer (ignoring its physical storage size limitations). In two 1936 patent applications, Konrad Zuse also anticipated that machine instructions could be stored in the same storage used for data, the key insight of what became known as the von Neumann architecture, which was first implemented in the later British EDSAC design (1949). Zuse also claimed to have designed the first higher-level programming language, Plankalkül, in 1945 (published in 1948), although it was implemented for the first time in 2000 by a team around Raúl Rojas at the Free University of Berlin, five years after Zuse died.

Zuse suffered setbacks during World War II when some of his machines were destroyed in the course of Allied bombing campaigns. Apparently his work remained largely unknown to engineers in the UK and US until much later, although at least IBM was aware of it as it financed his post-war startup company in 1946 in return for an option on Zuse's patents.

Colossus

During World War II, the British at Bletchley Park (40 miles north of London) achieved a number of successes at breaking encrypted German military communications. The German encryption machine, Enigma, was attacked with the help of electro-mechanical machines called bombes. The bombe, designed by Alan Turing and Gordon Welchman after the Polish cryptographic bomba by Marian Rejewski (1938), came into use in 1941.[55] The bombes ruled out possible Enigma settings by performing chains of logical deductions implemented electrically. Most possibilities led to a contradiction, and the few remaining could be tested by hand.

The Germans also developed a series of teleprinter encryption systems, quite different from Enigma. The Lorenz SZ 40/42 machine was used for high-level Army communications, termed "Tunny" by the British. The first intercepts of Lorenz messages began in 1941. As part of an attack on Tunny, Professor Max Newman and his colleagues helped specify the Colossus.[56] The Mk I Colossus was built between March and December 1943 by Tommy Flowers and his colleagues at the Post Office Research Station at Dollis Hill in London and then shipped to Bletchley Park in January 1944.

Colossus was the first totally electronic computing device. The Colossus used a large number of valves (vacuum tubes). It had paper-tape input and was capable of being configured to perform a variety of boolean logical operations on its data, but it was not Turing-complete. Nine Mk II Colossi were built (The Mk I was converted to a Mk II making ten machines in total). Details of their existence, design, and use were kept secret well into the 1970s. Winston Churchill personally issued an order for their destruction into pieces no larger than a man's hand. Due to this secrecy the Colossi were not included in many histories of computing. A reconstructed copy of one of the Colossus machines is now on display at Bletchley Park.

American developments

In 1937, Claude Shannon produced his master's thesis[57] at MIT that implemented Boolean algebra using electronic relays and switches for the first time in history. Entitled A Symbolic Analysis of Relay and Switching Circuits, Shannon's thesis essentially founded practical digital circuit design. George Stibitz completed a relay-based computer he dubbed the "Model K" at Bell Labs in November 1937. Bell Labs authorized a full research program in late 1938 with Stibitz at the helm. Their Complex Number Calculator,[58] completed January 8, 1940, was able to perform calculations on complex numbers. In a demonstration to the American Mathematical Society conference at Dartmouth College on September 11, 1940, Stibitz was able to send the Complex Number Calculator remote commands over telephone lines by a teletype. It was the first computing machine ever used remotely, in this case over a phone line. Participants in the conference who witnessed the demonstration included John von Neumann, John Mauchly, and Norbert Wiener, who wrote about it in their memoirs.
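The correspondence between switching circuits and Boolean algebra can be sketched in code. In the illustration below, two contacts in series conduct only when both are closed (AND), two contacts in parallel conduct when either is closed (OR), and a normally closed contact conducts when its relay is not energized (NOT). The function names and the little-endian bit-list convention are assumptions for this example; the adder is a standard textbook construction, not a circuit taken from Shannon's thesis or from the Model K.

```python
def series(a: bool, b: bool) -> bool:
    """Two contacts in series: current flows only if both are closed (AND)."""
    return a and b

def parallel(a: bool, b: bool) -> bool:
    """Two contacts in parallel: current flows if either is closed (OR)."""
    return a or b

def normally_closed(a: bool) -> bool:
    """A normally closed contact opens when its relay is energized (NOT)."""
    return not a

def xor(a: bool, b: bool) -> bool:
    """Exclusive OR built only from the three primitives above."""
    return parallel(series(a, normally_closed(b)), series(normally_closed(a), b))

def half_adder(a: bool, b: bool):
    """Return (sum, carry) for two one-bit inputs."""
    return xor(a, b), series(a, b)

def full_adder(a: bool, b: bool, carry_in: bool):
    """Return (sum, carry_out) for two bits plus an incoming carry."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, parallel(c1, c2)

def add_bits(x_bits, y_bits):
    """Ripple-carry addition of two equal-length, least-significant-first bit lists."""
    carry, out = False, []
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        out.append(s)
    out.append(carry)
    return out

if __name__ == "__main__":
    # 3 + 1 in binary, least significant bit first
    print(add_bits([True, True], [True, False]))
```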

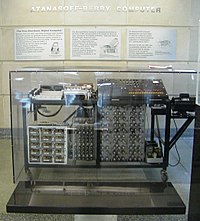

In 1939, John Vincent Atanasoff and Clifford E. Berry of Iowa State University developed the Atanasoff–Berry Computer (ABC),[59] a special purpose digital electronic calculator for solving systems of linear equations. The design used over 300 vacuum tubes for high speed and employed capacitors fixed in a mechanically rotating drum for memory. Though the ABC machine was not programmable, it was the first to use electronic circuits. ENIAC co-inventor John Mauchly examined the ABC in June 1941, and its influence on the design of the later ENIAC machine is a matter of contention among computer historians. The ABC was largely forgotten until it became the focus of the lawsuit Honeywell v. Sperry Rand, the ruling of which invalidated the ENIAC patent (and several others) as, among many reasons, having been anticipated by Atanasoff's work.

In 1939, development began at IBM's Endicott laboratories on the Harvard Mark I. Known officially as the Automatic Sequence Controlled Calculator,[60] the Mark I was a general purpose electro-mechanical computer built with IBM financing and with assistance from IBM personnel, under the direction of Harvard mathematician Howard Aiken. Its design was influenced by Babbage's Analytical Engine, using decimal arithmetic and storage wheels and rotary switches in addition to electromagnetic relays. It was programmable via punched paper tape, and contained several calculation units working in parallel. Later versions contained several paper tape readers and the machine could switch between readers based on a condition. Nevertheless, the machine was not quite Turing-complete. The Mark I was moved to Harvard University and began operation in May 1944.

ENIAC

The US-built ENIAC (Electronic Numerical Integrator and Computer) was the first electronic general-purpose computer. Built under the direction of John Mauchly and J. Presper Eckert at the University of Pennsylvania, it was 1,000 times faster than the Harvard Mark I. ENIAC's development and construction lasted from 1943 to full operation at the end of 1945.

When its design was proposed, many researchers believed that the thousands of delicate valves (i.e. vacuum tubes) would burn out often enough that the ENIAC would be so frequently down for repairs as to be useless. It was, however, capable of up to thousands of operations per second for hours at a time between valve failures.

ENIAC was unambiguously a Turing-complete device. A "program" on the ENIAC, however, was defined by the states of its patch cables and switches, a far cry from the stored program electronic machines that evolved from it. To program it meant to rewire it.[61] (Improvements completed in 1948 made it possible to execute stored programs set in function table memory, which made programming less a "one-off" effort, and more systematic.) It was possible to run operations in parallel, as it could be wired to operate multiple accumulators simultaneously. Thus the sequential operation which is the hallmark of a von Neumann machine occurred after ENIAC.

First-generation von Neumann machines

Even before the ENIAC was finished, Eckert and Mauchly recognized its limitations and started the design of a stored-program computer, EDVAC. John von Neumann was credited with a widely circulated report describing the EDVAC design in which both the programs and working data were stored in a single, unified store. This basic design, denoted the von Neumann architecture, would serve as the foundation for the world-wide development of ENIAC's successors.[62] In this generation of equipment, temporary or working storage was provided by acoustic delay lines, which used the propagation time of sound through a medium such as liquid mercury (or through a wire) to briefly store data. A series of acoustic pulses was sent along a tube; after a time, as each pulse reached the end of the tube, the circuitry detected whether the pulse represented a 1 or 0 and caused the oscillator to re-send the pulse. Others used Williams tubes, which use the ability of a television picture tube to store and retrieve data. By 1954, magnetic core memory[63] was rapidly displacing most other forms of temporary storage, and dominated the field through the mid-1970s.
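The recirculating behaviour of a delay line can be modelled as a queue: on each tick one pulse emerges from the far end and is re-sent (or replaced) at the near end. The class below is a simplified sketch under that assumption; the class name, the addressing scheme, and the tick counter are hypothetical and ignore all physical detail such as pulse shaping and timing. It does show the key consequence of the design: a bit can only be read or written at the moment it emerges, so access time depends on where the bit currently is in the line.

```python
from collections import deque

class DelayLine:
    """Toy model of a recirculating delay-line memory holding a fixed number of bits."""

    def __init__(self, bits):
        self.line = deque(bits)   # front of the deque is the next pulse to emerge
        self.size = len(bits)
        self.ticks = 0            # total pulse times elapsed

    def tick(self, new_bit=None):
        """Let one pulse emerge; re-send it unchanged unless new_bit replaces it."""
        out = self.line.popleft()
        self.line.append(out if new_bit is None else new_bit)
        self.ticks += 1
        return out

    def _wait_for(self, address):
        """Idle until the bit at the given address is about to emerge."""
        for _ in range((address - self.ticks) % self.size):
            self.tick()

    def read(self, address):
        self._wait_for(address)
        return self.tick()

    def write(self, address, bit):
        self._wait_for(address)
        self.tick(new_bit=bit)

    def snapshot(self):
        """Return the stored bits in address order (for inspection only)."""
        return [self.line[(i - self.ticks) % self.size] for i in range(self.size)]

if __name__ == "__main__":
    memory = DelayLine([1, 0, 1, 1])
    memory.write(1, 1)
    print(memory.snapshot(), "after", memory.ticks, "ticks")
```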

EDVAC was the first stored-program computer designed; however it was not the first to run. Eckert and Mauchly left the project and its construction floundered. The first working von Neumann machine was the Manchester "Baby" or Small-Scale Experimental Machine, developed by Frederic C. Williams and Tom Kilburn at University of Manchester in 1948;[64] it was followed in 1949 by the Manchester Mark I computer, a complete system, using Williams tube and magnetic drum memory, and introducing index registers.[65] The other contender for the title "first digital stored program computer" had been EDSAC, designed and constructed at the University of Cambridge. Operational less than one year after the Manchester "Baby", it was also capable of tackling real problems. EDSAC was actually inspired by plans for EDVAC (Electronic Discrete Variable Automatic Computer), the successor to ENIAC; these plans were already in place by the time ENIAC was successfully operational. Unlike ENIAC, which used parallel processing, EDVAC used a single processing unit. This design was simpler and was the first to be implemented in each succeeding wave of miniaturization, and increased reliability. Some view Manchester Mark I / EDSAC / EDVAC as the "Eves" from which nearly all current computers derive their architecture. Manchester University's machine became the prototype for the Ferranti Mark I. The first Ferranti Mark I machine was delivered to the University in February, 1951 and at least nine others were sold between 1951 and 1957.

The first universal programmable computer in the Soviet Union was created by a team of scientists under the direction of Sergei Alekseyevich Lebedev at the Kiev Institute of Electrotechnology, Soviet Union (now Ukraine). The computer MESM (МЭСМ, Small Electronic Calculating Machine) became operational in 1950. It had about 6,000 vacuum tubes and consumed 25 kW of power. It could perform approximately 3,000 operations per second. Another early machine was CSIRAC, an Australian design that ran its first test program in 1949. CSIRAC is the oldest computer still in existence and the first to have been used to play digital music.[66]

In October 1947, the directors of J. Lyons & Company, a British catering company famous for its teashops but with strong interests in new office management techniques, decided to take an active role in promoting the commercial development of computers. By 1951 the LEO I computer was operational and ran the world's first regular routine office computer job. On 17 November 1951, the J. Lyons company began weekly operation of a bakery valuations job on the LEO (Lyons Electronic Office). This was the first business application to go live on a stored program computer.[67]

In June 1951, the UNIVAC I (Universal Automatic Computer) was delivered to the U.S. Census Bureau. Remington Rand eventually sold 46 machines at more than $1 million each. UNIVAC was the first 'mass produced' computer; all predecessors had been 'one-off' units. It used 5,200 vacuum tubes and consumed 125 kW of power. Its memory was a mercury delay line capable of storing 1,000 words of 11 decimal digits plus sign (72-bit words). A key feature of the UNIVAC system was a newly invented type of metal magnetic tape, and a high-speed tape unit, for non-volatile storage. Magnetic media are still used in almost all computers.[68]

In 1952, IBM publicly announced the IBM 701 Electronic Data Processing Machine, the first in its successful 700/7000 series and IBM's first mainframe computer. The IBM 704, introduced in 1954, used magnetic core memory, which became the standard for large machines. The first implemented high-level general-purpose programming language, Fortran, was developed at IBM for the 704 during 1955 and 1956 and released in early 1957. (Konrad Zuse's 1945 design of the high-level language Plankalkül was not implemented at that time.) A volunteer user group was founded in 1955 to share software and experiences with the IBM 701; this group, which exists to this day, was a progenitor of open source.

IBM introduced a smaller, more affordable computer in 1954 that proved very popular. The IBM 650 weighed over 900 kg and its attached power supply weighed around 1350 kg; the two were housed in separate cabinets of roughly 1.5 meters by 0.9 meters by 1.8 meters. It cost $500,000 or could be leased for $3,500 a month. Its drum memory was originally only 2000 ten-digit words, and required arcane programming for efficient computing. Memory limitations such as this were to dominate programming for decades afterward, until hardware capabilities and programming models evolved that were more sympathetic to software development.

In 1955, Maurice Wilkes invented microprogramming,[69] which allows the base instruction set to be defined or extended by built-in programs (now called firmware or microcode).[70] It was widely used in the CPUs and floating-point units of mainframe and other computers, such as the IBM 360 series.[71]

In 1956, IBM sold its first magnetic disk system, RAMAC (Random Access Method of Accounting and Control). It used 50 24-inch (610 mm) metal disks, with 100 tracks per side. It could store 5 megabytes of data and cost $10,000 per megabyte.[72] (As of 2008, magnetic storage, in the form of hard disks, costs less than one fiftieth of a cent per megabyte.)
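
The RAMAC figures quoted above imply a few derived quantities, worked through in the short Python sketch below. Two assumptions are not stated in the text and are made here only for illustration: that both sides of every disk held data, and that one megabyte means one million bytes. The variable names are likewise illustrative.

```python
from fractions import Fraction

DISKS = 50
SIDES_PER_DISK = 2            # assumption: both surfaces of each disk hold data
TRACKS_PER_SIDE = 100
CAPACITY_MB = 5
BYTES_PER_MB = 1_000_000      # assumption: decimal megabytes

COST_PER_MB_1956_DOLLARS = 10_000
# "Less than one fiftieth of a cent" is treated as an upper bound for 2008.
COST_PER_MB_2008_CENTS = Fraction(1, 50)

total_tracks = DISKS * SIDES_PER_DISK * TRACKS_PER_SIDE
bytes_per_track = CAPACITY_MB * BYTES_PER_MB // total_tracks
system_storage_cost = CAPACITY_MB * COST_PER_MB_1956_DOLLARS

# Ratio of the 1956 price per megabyte to the 2008 upper bound.
# Exact rational arithmetic avoids floating-point rounding.
price_drop_factor = (COST_PER_MB_1956_DOLLARS * 100) / COST_PER_MB_2008_CENTS

print(total_tracks, bytes_per_track, system_storage_cost, price_drop_factor)
```

Under these assumptions the price per megabyte fell by a factor of at least fifty million between 1956 and 2008; since the 2008 figure is only an upper bound, the true factor would be larger.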

Second generation: transistors

In the second half of the 1950s, bipolar junction transistors (BJTs)[73] replaced vacuum tubes. Their use gave rise to the "second generation" computers. Initially, it was believed that very few computers would ever be produced or used.[74] This was due in part to their size, cost, and the skill required to operate them or interpret their results. Transistors[75] greatly reduced computers' size, initial cost and operating cost. The bipolar junction transistor[76] was invented in 1947.[77] If no electrical current flows through the base-emitter path of a bipolar transistor, the transistor's collector-emitter path blocks electrical current (and the transistor is said to "turn full off"). If sufficient current flows through the base-emitter path of a transistor, that transistor's collector-emitter path also passes current (and the transistor is said to "turn full on"). Current flow or current blockage represents binary 1 (true) or 0 (false), respectively.[78] Compared to vacuum tubes, transistors have many advantages: they are less expensive to manufacture and are much faster, switching from the condition 1 to 0 in millionths or billionths of a second. Transistor volume is measured in cubic millimeters, compared to vacuum tubes' cubic centimeters. Transistors' lower operating temperature increased their reliability compared to vacuum tubes. Transistorized computers could contain tens of thousands of binary logic circuits in a relatively compact space.

Typically, second-generation computers[79][80] were composed of large numbers of printed circuit boards, such as the IBM Standard Modular System,[81] each carrying one to four logic gates or flip-flops. A second-generation computer, the IBM 1401, captured about one third of the world market. IBM installed more than ten thousand 1401s between 1960 and 1964. This period also saw the only Italian attempt: the ELEA by Olivetti, produced in 110 units.

Transistorized electronics improved not only the CPU (Central Processing Unit), but also the peripheral devices. The IBM 350 RAMAC was introduced in 1956 and was the world's first disk drive. The second-generation disk data storage units were able to store tens of millions of letters and digits. Multiple peripherals could be connected to the CPU, increasing the total memory capacity to hundreds of millions of characters. Next to the fixed disk storage units, connected to the CPU via high-speed data transmission, were removable disk data storage units. A removable disk stack could be exchanged with another stack in a few seconds. Even though the removable disks' capacity was smaller than that of fixed disks, their interchangeability guaranteed a nearly unlimited quantity of data close at hand. Magnetic tape, meanwhile, provided archival capability for this data at a lower cost than disk.

Many second-generation CPUs delegated peripheral device communications to a secondary processor. For example, while the communication processor controlled card reading and punching, the main CPU executed calculations and binary branch instructions. One data bus would carry data between the main CPU and core memory at the CPU's fetch-execute cycle rate, and other data buses would typically serve the peripheral devices. On the PDP-1, the core memory's cycle time was 5 microseconds; consequently most arithmetic instructions took 10 microseconds (100,000 operations per second), because most operations took at least two memory cycles: one for the instruction, one for the operand data fetch.
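
The PDP-1 timing quoted above follows directly from the memory cycle time. The Python sketch below reproduces the arithmetic; the constant and variable names are illustrative, and the model ignores instructions that needed more than two memory cycles.

```python
CORE_CYCLE_US = 5             # core memory cycle time, in microseconds
CYCLES_PER_INSTRUCTION = 2    # one instruction fetch plus one operand fetch

MICROSECONDS_PER_SECOND = 1_000_000

instruction_time_us = CORE_CYCLE_US * CYCLES_PER_INSTRUCTION
operations_per_second = MICROSECONDS_PER_SECOND // instruction_time_us

print(f"{instruction_time_us} microseconds per instruction")
print(f"{operations_per_second} operations per second")
```

Because the processor was limited by memory rather than by its own logic, halving the memory cycle time would have doubled the instruction rate in this simple model.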

During the second generation, remote terminal units (often in the form of teletype machines like a Friden Flexowriter) saw greatly increased use. Telephone connections provided sufficient speed for early remote terminals and allowed hundreds of kilometers of separation between remote terminals and the computing center. Eventually these stand-alone computer networks would be generalized into an interconnected network of networks, the Internet.[82]

Post-1960: third generation and beyond

The explosion in the use of computers began with 'Third Generation' computers. These relied on Jack St. Clair Kilby's[83] and Robert Noyce's[84] independent invention of the integrated circuit (or microchip), which later led to the invention of the microprocessor,[85] by Ted Hoff, Federico Faggin, and Stanley Mazor at Intel.[86] The integrated circuit in the image on the right, for example, an Intel 8742, is an 8-bit microcontroller that includes a CPU running at 12 MHz, 128 bytes of RAM, 2048 bytes of EPROM, and I/O in the same chip.

During the 1960s there was considerable overlap between second- and third-generation technologies.[87] IBM implemented its IBM Solid Logic Technology modules in hybrid circuits for the IBM System/360 in 1964. As late as 1975, Sperry Univac continued the manufacture of second-generation machines such as the UNIVAC 494. The Burroughs large systems such as the B5000 were stack machines, which allowed for simpler programming. These pushdown automata were later also implemented in minicomputers and microprocessors, which influenced programming language design. Minicomputers served as low-cost computer centers for industry, business and universities.[88] It became possible to simulate analog circuits on minicomputers with the Simulation Program with Integrated Circuit Emphasis, or SPICE (1971), one of the programs for electronic design automation (EDA). The microprocessor led to the development of the microcomputer: small, low-cost computers that could be owned by individuals and small businesses. Microcomputers, the first of which appeared in the 1970s, became ubiquitous in the 1980s and beyond. Steve Wozniak, co-founder of Apple Computer, is credited with developing the first mass-market home computers. However, his first computer, the Apple I, came out some time after the MOS Technology KIM-1 and Altair 8800, and the first Apple computer with graphic and sound capabilities came out well after the Commodore PET. Computing has evolved with microcomputer architectures, with features added from their larger brethren, now dominant in most market segments.

Systems as complicated as computers require very high reliability. ENIAC remained in continuous operation from 1947 to 1955, eight years, before being shut down. Although a vacuum tube might fail, it could be replaced without bringing down the system. By the simple strategy of never shutting down ENIAC, failures were dramatically reduced. Hot-pluggable hard disks, like the hot-pluggable vacuum tubes of yesteryear, continue the tradition of repair during continuous operation. Semiconductor memories routinely have no errors when they operate, although operating systems like Unix have employed memory tests on start-up to detect failing hardware. Today, the requirement of reliable performance is made even more stringent when server farms are the delivery platform. Google has managed this by using fault-tolerant software to recover from hardware failures, and is even working on the concept of replacing entire server farms on-the-fly, during a service event.[89]

In the twenty-first century, multi-core CPUs became commercially available. Content-addressable memory (CAM)[90] has become inexpensive enough to be used in networking, although no computer system has yet implemented hardware CAMs for use in programming languages. Currently, CAMs (or associative arrays) in software are programming-language-specific. Semiconductor memory cell arrays are very regular structures, and manufacturers prove their processes on them; this allows price reductions on memory products. After semiconductor memories became commodities, computer software became less labor-intensive; programming codes became less arcane and more understandable.[91] When CMOS field-effect-transistor-based logic gates supplanted bipolar transistors, computer power consumption could decrease dramatically (a CMOS FET draws significant current only during the 'transition' between logic states, unlike the higher current draw of a BJT). This has allowed computing to become a commodity which is now ubiquitous, embedded in many forms, from greeting cards and telephones to satellites. Computing hardware and its software have even become a metaphor for the operation of the universe.[92]

An indication of the rapidity of development of this field can be inferred from the history of the seminal article.[93] By the time that anyone had time to write anything down, it was obsolete. After 1945, others read John von Neumann's First Draft of a Report on the EDVAC, and immediately started implementing their own systems. To this day, the pace of development has continued, worldwide.[94][95]

Notes

- ^ Patterson & Hennessy 1998, p. 3

- ^ Although the price of hardware has fallen dramatically in the past century, a computer system needs software and skilled use, to this day, and the total cost is still subject to economic forces, such as competition. For example, the open source movement has prompted some formerly proprietary software vendors to open up some of their software, so as to remain competitive in the market.

- ^ Keepon is a small robot which has merged automation, communication, control, entertainment, and education in 'one' research project.

- ^ Backus 1978

- ^ Historically, the media for 'input' have been sequences of events: punch card and paper tape hole punch, keyboard keystroke, or mouse click.

- ^ Historically, the media for 'output' have been sequences of states: punch card and paper tape hole punch, print mark, or light bulb.

- ^ 'Memory' is US usage; 'storage' is UK usage; the terms are not completely equivalent: 'memory' has the connotation of rapid access; 'storage' has the connotation of large capacity. (The use of the term 'storage' dates back to Ada Lovelace in the nineteenth century.) Magnetic core memory was much faster than disk, which was cheaper and had higher capacity. There is a hierarchy of storage, which trades capacity for speed of access. To this day, semiconductor memory is more expensive than disk or tape.

- ^ Historically, 'control' has been implemented by processes, which have been sequences of manual interventions (the earliest versions), switch settings (nineteenth century), patch panel connections (twentieth century), and then stored programs, perhaps in microcode.

- ^ The datapath for integers dates back to the ENIAC's decision (1945–1946) to implement arithmetic as part of the fundamental architecture, and to defer the implementation of floating point arithmetic to a later date as noted in Patterson & Hennessy 1998, pp. 312–334; other data types (audio, video, etc.) can take other datapaths.

- ^ Babbage's Analytical engine was intended to be steam powered.

- ^ The major advance in this architecture over its prototype, ENIAC, is that the computer program is stored in memory. Zuse's architecture did the same. However, von Neumann deferred his floating point implementation until after ENIAC, while Zuse's Z3 implemented floating point. Patterson & Hennessy 1998, pp. 34–35 note the Burks, Goldstine, and von Neumann "... paper was incredible for the period. Reading it today, you would never guess this landmark paper was written more than 50 years ago because it discusses most of the architectural concepts seen in modern computers."

- ^ The term 'computer'—meaning a piece of hardware—shifted to its current meaning in the years after the Manhattan Project, when people were still computers.

- ^ Georges Ifrah notes that humans learned to count on their hands. Ifrah shows, for example, a picture of Boethius (who lived 480–524 or 525) reckoning on his fingers in Ifrah 2000, p. 48.

- ^ According to Schmandt-Besserat 1981, these clay containers contained tokens, the total of which were the count of objects being transferred. The containers thus served as a bill of lading or an accounts book. In order to avoid breaking open the containers, marks were placed on the outside of the containers, for the count. Eventually (Schmandt-Besserat estimates it took 4000 years) the marks on the outside of the containers were all that were needed to convey the count, and the clay containers evolved into clay tablets with marks for the count.

- ^ Lazos 1994

- ^ A Spanish implementation of Napier's bones (1617), is documented in Montaner & Simon 1887, pp. 19–20.

- ^ Kells, Kern & Bland 1943, p. 92

- ^ Kells, Kern & Bland 1943, p. 82, as log(2)=.3010, or 4 places.

- ^ Schmidhuber

- ^ As quoted in Smith 1929, pp. 180–181

- ^ Discovering the Arithmometer, Cornell University

- ^ Leibniz 1703

- ^ Binary-coded decimal (BCD) is a numeric representation, or character encoding, which is still extant.

- ^ "Yazu Ryōichi: The dream for the sky and invention of Arithmometer" (in Japanese). Yomiuri Shinbun. Retrieved 2008-08-05.

- ^ The History of Japanese Mechanical Calculating Machines

- ^ Mechanical Calculator, "JIDOSOROBAN", The Japan Society of Mechanical Engineers

- ^ 8/9 page: Biquinary mechanical calculating machine,“Jido-Soroban” (automatic abacus), built by Ryoichi Yazu, National Science Museum of Japan

- ^ Jones

- ^ Menabrea & Lovelace 1843

- ^ Today, many in the computer field term this sort of obsession creeping featuritis.

- ^ Hollerith 1890

- ^ Lubar 1991

- ^ Eckert 1935

- ^ Eckert 1940, pp. 101–114. Chapter XII is "The Computation of Planetary Perturbations".

- ^ Fisk 2005

- ^ Hollerith selected the size of the punch card to fit in the metal containers which held the American dollar bills of the day. The dollar is now smaller than it was then.

- ^ Hunt 1998, pp. xiii–xxxvi

- ^ "The same equations have the same solutions." — R. P. Feynman

- ^ Electrical circuits are composed of elements with resistance, capacitance, and inductance; as of April 2008, researchers have found a fourth basic circuit element, called a memristor.

- ^ Chua 1971, pp. 507–519

- ^ See, for example, Horowitz & Hill 1989, pp. 1–44

- ^ Steinhaus 1999, pp. 92–95, 301

- ^ Norden

- ^ Singer 1946

- ^ Phillips

- ^ (in French) Coriolis 1836, pp. 5–9

- ^ The number of digits in the accumulator is a fundamental limitation to a computation. If a result exceeds the number of digits, this condition is called overflow.

- ^ The noise level, compared to the signal level, is a fundamental factor, see for example Davenport & Root 1958, pp. 112–364.

- ^ Ziemer, Tranter & Fannin 1993, p. 370.

- ^ In this era, the circuit elements were relays, resistors, capacitors, inductors, and vacuum tubes.

- ^ Turing 1937, pp. 230–265.

- ^ Moye 1996

- ^ Bergin 1996

- ^ Zuse

- ^ Welchman 1984, pp. 138–145, 295–309

- ^ Copeland 2006.

- ^ Shannon 1940

- ^ George Stibitz, US patent 2668661, "Complex Computer", issued 1954-02-09, assigned to AT&T, 102 pages.

- ^ January 15, 1941 notice in the Des Moines Register.

- ^ Da Cruz 2008

- ^ Six women did most of the programming of ENIAC.

- ^ von Neumann 1945, p. 1. The title page, as submitted by Goldstine, reads: "First Draft of a Report on the EDVAC by John von Neumann, Contract No. W-670-ORD-4926, Between the United States Army Ordnance Department and the University of Pennsylvania Moore School of Electrical Engineering".

- ^ An Wang filed October 1949, US patent 2708722, "Pulse transfer controlling devices", issued 1955-05-17.

- ^ Enticknap 1998, p. 1; Baby's 'first good run' was June 21, 1948.

- ^ Manchester 1998, by R.B.E. Napper, et al.

- ^ CSIRAC 2005

- ^ Martin 2008, p. 24 notes that David Caminer (1915–2008) served as the first corporate electronic systems analyst, for this first business computer system, a Leo computer, part of J. Lyons & Company. LEO would calculate an employee's pay, handle billing, and other office automation tasks.

- ^ Magnetic tape will be the primary data storage mechanism when CERN's Large Hadron Collider comes online in 2008.

- ^ Wilkes 1986, pp. 115–126

- ^ Horowitz & Hill 1989, p. 743

- ^ Patterson & Hennessy 1998, p. 424: note that when IBM was preparing its transition from the 700/7000 series to S/360, they emulated the software of the older systems in microcode, so as to be able to run older programs on the new IBM 360.

- ^ IBM 1956

- ^ Feynman, Leighton & Sands 1965, pp. III 14-11 to 14-12

- ^ Bowden 1970, pp. 43–53

- ^ A transistor is an electronic device of very pure germanium, silicon or other semiconductor monocrystal to which dopants have been added in very precisely controlled quantities, to selected sections of the device.

- ^ In 1947, Bardeen and Brattain prototyped the point-contact transistor, in form very much like a cat's whisker diode, which had inherent reliability problems. It was superseded by the BJT.

- ^ Americans John Bardeen, Walter Brattain and William Shockley shared the 1956 Nobel Prize in Physics for their invention of the transistor.

- ^ Cleary 1964, pp. 139–204

- ^ Second generation computers include the CDC 1604 (1960), DEC PDP-1 (1960), IBM 7030 Stretch (1961), IBM 7090 (1959), IBM 1401 (1959), IBM 1620 (1959), Sperry Rand Athena (1957), Univac LARC (1960), and Western Electric 1ESS Switch (1965).

- ^ The 1ESS switch could not be marketed as a 'computer' in order for AT&T to comply with their anti-monopoly consent decree, which also affected the Unix operating system.

- ^ IBM_SMS 1960

- ^ Mayo & Newcomb 2008, pp. 96–117; Jimbo Wales is quoted on p. 115.

- ^ Kilby 2000

- ^ Robert Noyce's Unitary circuit, US patent 2981877, "Semiconductor device-and-lead structure", issued 1961-04-25, assigned to Fairchild Semiconductor Corporation.

- ^ Intel_4004 1971

- ^ The Intel 4004 (1971) die was composed of 2300 transistors; by comparison, the Pentium Pro was composed of 5.5 million transistors, according to Patterson & Hennessy 1998, pp. 27–39

- ^ In the defense field, considerable work was done in the computerized implementation of equations such as Kalman 1960, pp. 35–45

- ^ Eckhouse & Morris 1979, pp. 1–2

- ^ "If you're running 10,000 machines, something is going to die every day." —Jeff Dean of Google, as quoted in Shankland 2008.

- ^ Kohonen 1980, pp. 1–368

- ^ For example, programming on a drum memory required that the programmer be aware of the real-time position of the read head, as the drum was spinning.

- ^ Smolin 2001, pp. 53–57. Pages 220–226 are annotated references and guide for further reading.

- ^ Burks, Goldstine & von Neumann 1947, pp. 1–464 reprinted in Datamation, September-October 1962. Note that preliminary discussion/design was the term later called system analysis/design, and even later, called system architecture.

- ^ IEEE_Annals 1979. DBLP summarizes the Annals of the History of Computing year by year, back to 1996, so far.

- ^ The fastest supercomputer of the top 500 is expected to be IBM Roadrunner, topping Blue Gene/L as of May 25, 2008.

References

- Backus, John (August 1978), "Can Programming be Liberated from the von Neumann Style?" (PDF), Communications of the ACM, 21 (8), 1977 ACM Turing Award Lecture.

- Bell, Gordon; Newell, Allen (1971), Computer Structures: Readings and Examples, New York: McGraw-Hill, ISBN 0-07-004357-4.

- Bergin, Thomas J. (ed.) (November 13–14, 1996), Fifty Years of Army Computing: from ENIAC to MSRC (PDF), A record of a symposium and celebration, Aberdeen Proving Ground: Army Research Laboratory and the U.S. Army Ordnance Center and School, retrieved 2008-05-17.

- Bowden, B. V. (1970), "The Language of Computers", American Scientist, 58: 43–53, retrieved 2008-05-17.

- Burks, Arthur W.; Goldstine, Herman; von Neumann, John (1947), Preliminary discussion of the Logical Design of an Electronic Computing Instrument, Princeton, NJ: Institute for Advanced Study, retrieved 2008-05-18.

- Chua, Leon O. (September 1971), "Memristor-The Missing Circuit Element", IEEE Transactions on Circuit Theory, CT-18 (5): 507–519.

- Cleary, J. F. (1964), GE Transistor Manual (7th ed.), General Electric, Semiconductor Products Department, Syracuse, NY, pp. 139–204, OCLC 223686427.

- Copeland, B. Jack (ed.) (2006), Colossus: The Secrets of Bletchley Park's Codebreaking Computers, Oxford University Press, ISBN 019284055X.

- Coriolis, Gaspard-Gustave (1836), "Note sur un moyen de tracer des courbes données par des équations différentielles", Journal de Mathématiques Pures et Appliquées, series I (in French), 1: 5–9, retrieved 2008-07-06.

- CSIRAC: Australia’s first computer, Commonwealth Scientific and Industrial Research Organisation (CSIRO), June 3, 2005, retrieved 2007-12-21.

- Da Cruz, Frank (February 28, 2008), "The IBM Automatic Sequence Controlled Calculator (ASCC)", Columbia University Computing History: A Chronology of Computing at Columbia University, Columbia University ACIS, retrieved 2008-05-17.

- Davenport, Wilbur B., Jr.; Root, William L. (1958), An Introduction to the Theory of Random Signals and Noise, McGraw-Hill, pp. 112–364, OCLC 573270.

- Eckert, Wallace (1935), "The Computation of Special Perturbations by the Punched Card Method", Astronomical Journal (1034).

- Eckert, Wallace (1940), "XII: The Computation of Planetary Perturbations", Punched Card Methods in Scientific Computation, Thomas J. Watson Astronomical Computing Bureau, Columbia University, pp. 101–114, OCLC 2275308.

- Eckhouse, Richard H., Jr.; Morris, L. Robert (1979), Minicomputer Systems: organization, programming, and applications (PDP-11), Prentice-Hall, pp. 1–2, ISBN 0135839149.

- Enticknap, Nicholas (Summer 1998), "Computing's Golden Jubilee", RESURRECTION (20), The Computer Conservation Society, ISSN 0958-7403, retrieved 2008-04-19.

- Feynman, R. P.; Leighton, Robert; Sands, Matthew (1965), Feynman Lectures on Physics, Reading, Mass: Addison-Wesley, pp. III 14-11 to 14-12, ISBN 0201020106, OCLC 531535.

- Fisk, Dale (2005), Punch cards (PDF), Columbia University ACIS, retrieved 2008-05-19.

- Hollerith, Herman (1890), In connection with the electric tabulation system which has been adopted by U.S. government for the work of the census bureau, Columbia University School of Mines.

- Horowitz, Paul; Hill, Winfield (1989), The Art of Electronics (2nd ed.), Cambridge University Press, ISBN 0521370957.

- Hunt, J. C. R. (1998), "Lewis Fry Richardson and his contributions to Mathematics, Meteorology and Models of Conflict" (PDF), Ann. Rev. Fluid Mech., 30: xiii–xxxvi, retrieved 2008-06-15.

- IBM_SMS (1960), IBM Standard Modular System SMS Cards, IBM, retrieved 2008-03-06.

- IBM (September 1956), IBM 350 disk storage unit, IBM, retrieved 2008-07-01.

- IEEE_Annals (series dates from 1979), Annals of the History of Computing, IEEE, retrieved 2008-05-19.

- Ifrah, Georges (2000), The Universal History of Numbers: From prehistory to the invention of the computer, John Wiley and Sons, p. 48, ISBN 0-471-39340-1. Translated from the French by David Bellos, E.F. Harding, Sophie Wood and Ian Monk. Ifrah supports his thesis by quoting idiomatic phrases from languages across the entire world.

- Intel_4004 (November 1971), Intel's First Microprocessor – the Intel® 4004, Intel Corp., retrieved 2008-05-17.

- Jones, Douglas W, Punched Cards: A brief illustrated technical history, The University of Iowa, retrieved 2008-05-15.

- Kalman, R.E. (1960), "A new approach to linear filtering and prediction problems" (PDF), Journal of Basic Engineering, 82 (1): 35–45, retrieved 2008-05-03.

- Kells; Kern; Bland (1943), The Log-Log Duplex Decitrig Slide Rule No. 4081: A Manual, Keuffel & Esser, p. 92.

- Kilby, Jack (2000), Nobel lecture (PDF), Stockholm: Nobel Foundation, retrieved 2008-05-15.

- Kohonen, Teuvo (1980), Content-addressable memories, Springer-Verlag, p. 368, ISBN 0387098232.

- Lazos (1994), The Antikythera Computer (Ο ΥΠΟΛΟΓΙΣΤΗΣ ΤΩΝ ΑΝΤΙΚΥΘΗΡΩΝ), ΑΙΟΛΟΣ PUBLICATIONS GR.

- Leibniz, Gottfried (1703), Explication de l'Arithmétique Binaire.

- Lubar, Steve (1991), "Do not fold, spindle or mutilate": A cultural history of the punched card, retrieved 2006-10-31.

- Manchester (1998, 1999), Mark I, Computer History Museum, The University of Manchester, retrieved 2008-04-19.

- Martin, Douglas (June 29, 2008), "David Caminer, 92 Dies; A Pioneer in Computers", New York Times, p. 24.

- Mayo, Keenan; Newcomb, Peter (July 2008), "Inventing the Internet: an oral history", Vanity Fair (575): 96–117, retrieved 2008-06-14. Jimbo Wales is quoted on p. 115.

- Mead, Carver; Conway, Lynn (1980), Introduction to VLSI Systems, Reading, Mass.: Addison-Wesley, ISBN 0201043580.

- Menabrea, Luigi Federico; Lovelace, Ada (1843), "Sketch of the Analytical Engine Invented by Charles Babbage", Scientific Memoirs, 3. With notes upon the Memoir by the Translator.

- Menninger, Karl (1992), Number Words and Number Symbols: A Cultural History of Numbers, Dover Publications. German to English translation, M.I.T., 1969.

- Montaner; Simon (1887), Diccionario Enciclopédico Hispano-Americano (Hispano-American Encyclopedic Dictionary).

- Moye, William T. (January 1996), ENIAC: The Army-Sponsored Revolution, retrieved 2008-05-17.

- Norden, M9 Bombsight, National Museum of the USAF, retrieved 2008-05-17.

- Noyce, Robert, US patent 2981877, "Semiconductor device-and-lead structure", issued 1961-04-25, assigned to Fairchild Semiconductor Corporation.

- Patterson, David; Hennessy, John (1998), Computer Organization and Design, San Francisco: Morgan Kaufmann, ISBN 1-55860-428-6.

- Pellerin, David; Thibault, Scott (April 22, 2005), Practical FPGA Programming in C, Prentice Hall Modern Semiconductor Design Series Sub Series: PH Signal Integrity Library, pp. 1–464, ISBN 0-13-154318-0.

- Phillips, A.W.H., The MONIAC (PDF), Reserve Bank Museum, retrieved 2006-05-17.

- Rojas, Raul; Hashagen, Ulf (eds., 2000). The First Computers: History and Architectures. Cambridge: MIT Press. ISBN 0-262-68137-4.

- Schmandt-Besserat, Denise (1981), "Decipherment of the earliest tablets", Science, 211: pp. 283—285, doi:10.1126/science.211.4479.283, PMID 17748027

{{citation}}:|pages=has extra text (help). - Schmidhuber, Jürgen, Wilhelm Schickard (1592–1635) Father of the computer age, retrieved 2008-05-15.

- Shankland, Stephen (May 30, 2008), Google spotlights data center inner workings, Cnet, retrieved 2008-05-31

- Shannon, Claude (1940), A symbolic analysis of relay and switching circuits, Massachusetts Institute of Technology, Dept. of Electrical Engineering.

- Simon, Herbert (1991), Models of My Life, Basic Books, Sloan Foundation Series.

- Singer (1946), Singer in World War II, 1939–1945 - the M5 Director, Singer Manufacturing Co., retrieved 2008-05-17.

- Smith, David Eugene (1929), A Source Book in Mathematics, New York: McGraw-Hill, pp. 180–181.

- Smolin, Lee (2001), Three roads to quantum gravity, Basic Books, pp. 53–57, ISBN 0-465-08735-4. Pages 220–226 are annotated references and guide for further reading.

- Steinhaus, H. (1999), Mathematical Snapshots (3rd ed.), New York: Dover, pp. 92–95, 301.

- Stern, Nancy (1981), From ENIAC to UNIVAC: An Appraisal of the Eckert-Mauchly Computers, Digital Press, ISBN 0-932376-14-2.

- Stibitz, George US patent 2668661, George Stibitz, "Complex Computer", issued 1954-02-09, assigned to AT&T.

- Turing, A.M. (1936), "On Computable Numbers, with an Application to the Entscheidungsproblem", Proceedings of the London Mathematical Society, 2, vol. 42 (published 1937), pp. 230–65 (and Turing, A.M. (1937), "On Computable Numbers, with an Application to the Entscheidungsproblem: A correction", Proceedings of the London Mathematical Society, 2, vol. 43, pp. 544–6).

- Ulam, Stanisław (1983), Adventures of a Mathematician, New York: Charles Scribner's Sons (autobiography).

- von Neumann, John (June 30, 1945), First Draft of a Report on the EDVAC, Moore School of Electrical Engineering: University of Pennsylvania

- Wang, An US patent 2708722, An Wang, "Pulse transfer controlling devices", issued 1955-05-17.

- Welchman, Gordon (1984), The Hut Six Story: Breaking the Enigma Codes, Penguin Books, pp. 138–145, 295–309.

- Wilkes, Maurice (1986), "The Genesis of Microprogramming", Ann. Hist. Comp., 8 (2): pp. 115–126.

- Ziemer, Roger E.; Tranter, William H.; Fannin, D. Ronald (1993), Signals and Systems: Continuous and Discrete, Macmillan, p. 370, ISBN 0-02-431641-5.

- Zuse, Z3 Computer (1938–1941), retrieved 2008-06-01.