Wikipedia:Bots/Requests for approval: Difference between revisions

{{BRFA|TedderBot|3|Open}}

{{BRFA|DASHBot|8|Open -> Trial}} & {{BRFA|WildBot|3|Open -> Trial}} for Harej

(One intermediate revision by the same user not shown)

Line 4: / Line 4:

<!-- Add NEW entries at the TOP of this section, on a new line directly below this message. -->

{{BRFA|RjwilmsiBot|2|Open}}

(changed line; content not captured)

{{BRFA|Redirectcreation Bot||Open}}

{{BRFA|TedderBot|2|Open}}

Line 17: / Line 16:

{{BRFA|WildBot|4|Open}}

{{BRFA|BotMultichill|5|Open}}

(changed line; content not captured)

{{BRFA|WildBot|3|Open}} (present in only one of the two revisions)

{{BRFA|Yobot|11|Open}}

{{BRFA|SoxBot|20|Open}}

Line 25: / Line 22:

=Bots in a trial period=

<!-- Add NEW trials here at the TOP of this section right BELOW this comment. --><!--Stop messing with BAGBot's comments!!! --ST --><!--NT-->

(two changed lines; content not captured)

{{BRFA|Addbot|22|Trial}}

<!-- Add NEW entries at the TOP of this section. The other top. -->

Line 37: / Line 36:

=Denied requests=

Bots that have been denied for operations will be listed here for informational purposes for at least 7 days before being archived. No other action is required for these bots. Older requests can be found in the [[:Category:Denied Wikipedia bot requests for approval|Archive]].<!--ND-->

{{BRFA|GeneGoBot||Denied|08:20, 23 January 2010 (UTC)}} (present in only one of the two revisions)

{{BRFA|Template Maintenance Bot||Denied|22:29, 28 December 2009 (UTC)}}

{{BRFA|IronBot||Denied|02:18, 19 December 2009 (UTC)}}

Revision as of 08:24, 23 January 2010

All editors are encouraged to participate in the requests below – your comments are appreciated more than you may think!

New to bots on Wikipedia? Read these primers!

- Approval process – How these discussions work

- Overview/Policy – What bots are/What they can (or can't) do

- Dictionary – Explains bot-related jargon

To run a bot on the English Wikipedia, you must first get it approved. Follow the instructions below to add a request. If you are not familiar with programming consider asking someone else to run a bot for you.


| Bot Name | Status | Created | Last editor | Date/Time | Last BAG editor | Date/Time |
|---|---|---|---|---|---|---|
| IznoBot 4 (T\|C\|B\|F) | Open | 2024-07-13, 15:47:52 | Izno | 2024-07-13, 15:48:19 | Never edited by BAG | n/a |
| Platybot (T\|C\|B\|F) | Open | 2024-07-08, 08:52:05 | BilledMammal | 2024-07-08, 11:47:35 | Never edited by BAG | n/a |
| BattyBot 81 (T\|C\|B\|F) | On hold | 2024-02-07, 14:12:49 | ProcrastinatingReader | 2024-02-15, 12:09:35 | ProcrastinatingReader | 2024-02-15, 12:09:35 |
| C1MM-bot 2 (T\|C\|B\|F) | In trial | 2024-06-25, 00:56:44 | Primefac | 2024-07-05, 17:53:58 | Primefac | 2024-07-05, 17:53:58 |
| BaranBOT 3 (T\|C\|B\|F) | In trial | 2024-06-19, 05:08:35 | Primefac | 2024-07-10, 15:32:21 | Primefac | 2024-07-10, 15:32:21 |
| DannyS712 bot III 74 (T\|C\|B\|F) | In trial | 2024-05-09, 00:02:12 | TheSandDoctor | 2024-07-13, 22:20:18 | TheSandDoctor | 2024-07-13, 22:20:18 |
| StradBot 2 (T\|C\|B\|F) | In trial | 2024-02-17, 03:20:39 | SD0001 | 2024-02-17, 05:58:51 | SD0001 | 2024-02-17, 05:58:51 |
| CapsuleBot 2 (T\|C\|B\|F) | Extended trial | 2023-06-14, 00:14:29 | Capsulecap | 2024-01-20, 02:36:30 | Primefac | 2024-01-15, 07:40:39 |
| AussieBot 1 (T\|C\|B\|F) | Extended trial: User response needed! | 2023-03-22, 01:57:36 | Hawkeye7 | 2024-02-18, 23:33:13 | Primefac | 2024-02-18, 20:10:45 |
| DoggoBot 10 (T\|C\|B\|F) | In trial | 2023-03-02, 02:55:00 | Frostly | 2024-02-21, 22:41:18 | Primefac | 2024-01-15, 07:40:49 |
| Mdann52 bot 14 (T\|C\|B\|F) | Trial complete | 2024-06-10, 17:46:58 | Mdann52 | 2024-07-06, 08:56:38 | Primefac | 2024-07-05, 17:57:38 |
| BaranBOT 2 (T\|C\|B\|F) | Trial complete | 2024-05-27, 14:01:46 | DreamRimmer | 2024-07-06, 14:03:24 | Primefac | 2024-06-27, 15:25:33 |
| PrimeBOT 39 (T\|C\|B\|F) | On hold | 2023-05-11, 12:48:50 | Primefac | 2023-09-22, 10:51:59 | Headbomb | 2023-07-02, 17:38:58 |

Current requests for approval

- The following discussion is an archived debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA. The result of the discussion was Approved.

Operator: Rjwilmsi

Automatic or Manually assisted: Automatic

Programming language(s): AWB

Source code available: AWB

Function overview: Tag redirect pages with WP:TMR tags

Links to relevant discussions (where appropriate): WP:TMR

Edit period(s): probably single run on release of new database dump

Estimated number of pages affected: Thousands. Exact number not yet clear.

Exclusion compliant (Y/N): Y

Already has a bot flag (Y/N): Y

Function details: Use a new general fix function in AWB being developed by me to tag redirect pages with one or more of the tags specified on WP:TMR. If possible I would like to keep the scope of authorisation open-ended to allow future expansion of the task without the need to bother the BAG for a small change. As it stands the current tagging criteria will be:

- where the redirect page title and the redirect target differ only by punctuation, tag with {{R from modification}} (e.g. diff) if not already tagged with that template.

- where the redirect page title equals the redirect target with diacritics replaced with the equivalent Latin character, tag with {{R from title without diacritics}} (no example as yet) if not already tagged with that template.

- Other similar tagging per WP:TMR. Clearly only some of the tags can be decided by a bot.
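The first two tagging criteria above can be sketched in a few lines of Python. This is only an illustration of the comparison logic, not the AWB general fix itself (which is not shown in this request); the function names and the example titles are hypothetical, and "punctuation" is assumed to mean any character in a Unicode punctuation category.

```python
import unicodedata


def strip_diacritics(title: str) -> str:
    """Drop combining marks after canonical decomposition (e.g. é -> e)."""
    decomposed = unicodedata.normalize("NFD", title)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))


def strip_punctuation(title: str) -> str:
    """Drop every character whose Unicode category is punctuation (P*)."""
    return "".join(
        ch for ch in title if not unicodedata.category(ch).startswith("P")
    )


def already_tagged(page_text: str, template: str) -> bool:
    """Case-insensitive check for an existing {{template ...}} on the page."""
    return ("{{" + template).lower() in page_text.lower()


def suggest_tags(redirect_title: str, target_title: str, page_text: str) -> list:
    """Return the WP:TMR templates that the two criteria would add."""
    candidates = []
    if redirect_title != target_title:
        # Criterion 1: titles differ only by punctuation.
        if strip_punctuation(redirect_title) == strip_punctuation(target_title):
            candidates.append("R from modification")
        # Criterion 2: redirect equals the target with diacritics removed.
        if redirect_title == strip_diacritics(target_title):
            candidates.append("R from title without diacritics")
    # Skip templates the redirect page already carries.
    return [t for t in candidates if not already_tagged(page_text, t)]
```

Note that canonical decomposition does not cover letters such as "ø" or "ł", which have no decomposed form, so a real implementation would presumably need an explicit mapping for those.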

Scope: mainspace en-wiki only. Redirect pages only.

Note on number of pages: I'm not currently clear on what database dump includes the title and text of redirects, so don't yet know volumes.

Discussion

If I could request that redirects to disambiguation pages that aren't already tagged get tagged with {{R from unknown disambiguation}}, that will give WP:Disambiguation something to work with - a generated list that can then be sorted into the various redirect classes for disambiguation pages. Josh Parris 10:29, 21 January 2010 (UTC)

Approved for trial (50 edits). Please provide a link to the relevant contributions and/or diffs when the trial is complete. MBisanz talk 16:46, 27 January 2010 (UTC)

Trial complete. No problems. Rjwilmsi 08:19, 28 January 2010 (UTC)

Approved. Nice work. Tim1357 (talk) 03:58, 30 January 2010 (UTC)

- The above discussion is preserved as an archive of the debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA.

- The following discussion is an archived debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA. The result of the discussion was

Withdrawn by operator.

Withdrawn by operator.

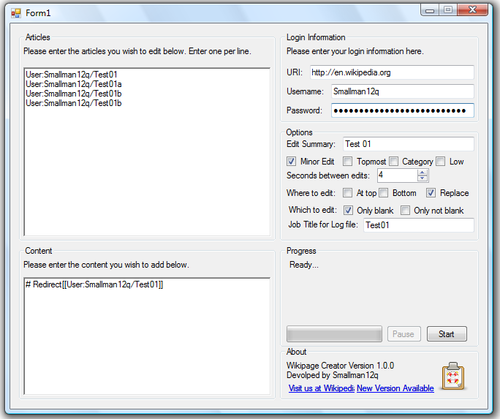

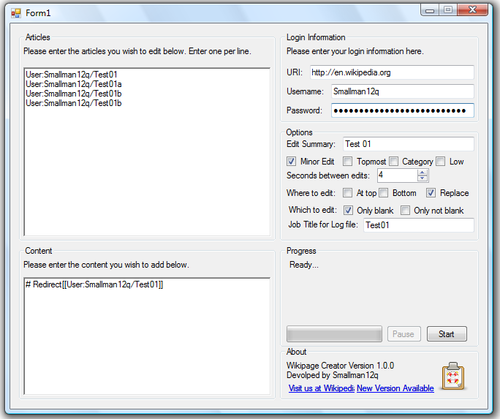

Operator: Smallman12q

Automatic or Manually assisted: Automatic

Programming language(s): VB.Net using the DotNetWikiBot Framework

Source code available: Available on request.

Function overview: To create redirect pages towards a target page.

Links to relevant discussions (where appropriate):

Edit period(s): Daily/weekly

Estimated number of pages affected: Few hundred per week

Exclusion compliant (Y/N): N/A

Already has a bot flag (Y/N): N

Function details: The purpose of the bot will be to create redirect pages based on a given list. It can also redirect to sections. Exclusion compliance is not applicable because the bot will only create a redirect on a blank page; there is therefore no need to check for bot templates.

For example, the bot could create a set of redirects to United States incarceration rate from a list such as

U.S. incarceration rate

American incarceration rate

Rate of incarceration in America

Rate of incarceration in the United States

Incarceration rate in the United States

The idea is to increase the number of redirects so more people can find what they are looking for.

- Addition 1

The bot could also check the page view statistics for a page for a minimum number prior to creating a redirect. It also could check the page view statistics for a redirect after a page has been created to see if the redirect is worth keeping.
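A minimal sketch of the behaviour described above, in Python rather than the bot's VB.Net: build the redirect wikitext, skip any title that already has a page, and optionally require a minimum page-view count first. Everything here is hypothetical: the function names, the `page_exists` callback, and the reading that the view threshold applies to the target page are assumptions for illustration, and no wiki API is called.

```python
from typing import Callable, Dict, Iterable, Optional


def redirect_wikitext(target: str, section: Optional[str] = None) -> str:
    """Wikitext for a redirect page, optionally pointing at a section."""
    link = f"{target}#{section}" if section else target
    return f"#REDIRECT [[{link}]]"


def plan_redirects(
    target: str,
    titles: Iterable[str],
    page_exists: Callable[[str], bool],
    target_views: int,
    min_views: int = 0,
    section: Optional[str] = None,
) -> Dict[str, str]:
    """Map each title that should be created to its redirect wikitext."""
    # Assumed reading of "Addition 1": skip targets viewed too rarely.
    if target_views < min_views:
        return {}
    text = redirect_wikitext(target, section)
    # Only blank (non-existent) pages are ever written to.
    return {
        title: text
        for title in titles
        if title != target and not page_exists(title)
    }
```

For example, with "U.S. incarceration rate" already existing, only the remaining titles from the list above would be planned for creation.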

Discussion

Any thoughts on this? Anyone besides me see a need for this? Smallman12q (talk) 19:01, 8 January 2010 (UTC)

- I don't really think this is a great idea. It has the potential to create an infinite number of redirects and some of them might be very low value. The javascript predictive title search and search engine should typically be enough to get readers where they need to be. –xenotalk 19:07, 8 January 2010 (UTC)

- I'm sure that there could be some that are of value. The idea is to make it much easier to create redirects. Smallman12q (talk) 19:35, 8 January 2010 (UTC)

- (e/c) I don't think this is necessary. We have a search engine for a reason. To use your example, when searching for all of the potential redirect titles, United States incarceration rate always comes up in the first page of results ("U.S. incarceration rate" is the only one that doesn't return it in the top 4 results). Mr.Z-man 19:16, 8 January 2010 (UTC)

- Same with mr. z man. However, there is a need for bots to create redirects for US states, and with variations on capitalizations. There are scripts in pywikipedia that do this. Tim1357 (talk) 21:55, 8 January 2010 (UTC)

- Different capitalization should be handled automatically by the search engine. Most US states are only 1-word, what redirects do they need? Mr.Z-man 15:50, 10 January 2010 (UTC)

- I suggest you float your proposal at Wikipedia talk:Redirects for discussion, the folk there have a good understanding of what constitutes a good redirect. Josh Parris 23:23, 8 January 2010 (UTC)

- I cleaned up over 3000 redirects created by a bot. I don't know how many wound up usable, probably less than 10%. It's not a good idea, imo. Redirects require thought, not bots. However, standard variations in capitalization from use laziness, if that's a problem for wikipedia, that might be useful. --IP69.226.103.13 | Talk about me. 05:10, 9 January 2010 (UTC)

- I'm hesitant to approve only because I'm unsure how the bot will heuristically decide a redirect is needed and what form it should take. MBisanz talk 05:48, 10 January 2010 (UTC)

- A list of article names that should be redirected is provided. The bot then goes through and creates the articles as redirects. Smallman12q (talk) 17:30, 10 January 2010 (UTC)

- Does this mean the idea is: a user requests that a list of redirects be created and the bot creates only those listed redirects? This is something different, a user tool, and that would be okay, if the bot only created redirects specified by another user. This could be useful. --IP69.226.103.13 | Talk about me. 22:55, 10 January 2010 (UTC)

- I'm still not too sure. If it's too easy to create redirects, users might just feed it huge lists of unnecessary redirects, so the end result wouldn't be much better than the original idea. If this is done, the bot should run a search via the API and verify that the requested title doesn't come up in the first 20 results. Mr.Z-man 23:41, 10 January 2010 (UTC)

- Well I've added a few more features to it. I believe it'll serve more as a tool for certain tasks...see below.

- Any thoughts as to whether this would be a useful tool? Smallman12q (talk) 14:22, 11 January 2010 (UTC)

- Before I release this tool...anybody have any thoughts? Smallman12q (talk) 14:33, 15 January 2010 (UTC)

- I withdraw my request as it will be developed as an automated tool. Smallman12q (talk) 23:59, 24 January 2010 (UTC)

Withdrawn by operator. Withdrawn by operator Q T C 02:32, 25 January 2010 (UTC)

- The above discussion is preserved as an archive of the debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA.

- The following discussion is an archived debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA. The result of the discussion was Approved.

Operator: Tedder

Automatic or Manually assisted: Automatic, supervised

Programming language(s): perl

Source code available: yes, github, password is outside of repository

Function overview: Rename transclusions of FOO to BAR. Specific request: Wikipedia:Bot requests#Change of template name.

Links to relevant discussions (where appropriate):

Trivial task, so the only consensus necessary should be a given BOTREQ: Wikipedia:Bot requests#Change of template name.

Edit period(s): Manually run (originally listed as one-time).

Estimated number of pages affected: Unknown, depends on request (originally listed as 58). Trials and delays between edits are possible.

Exclusion compliant (Y/N): Yes.

Already has a bot flag (Y/N): No.

Function details: Simple regex substitution: s/{{Space telescopes/{{Space observatories/g using "embeddedin" and "backlinks" on namespace 0 to find list. Logs to User:TedderBot/TranscludeReplace/log.

Keep in mind I'm asking for approval of any sort of transclusion type change, not just this specific one. It's still an incredibly limited scope, since this is my first (successful?) botreq.
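For readers who do not use perl, the substitution in the function details can be sketched in Python. This is not the bot's actual source: the function name is hypothetical, and the lookahead (which stops the pattern from matching longer template names that merely start with the old name) is an addition the one-line perl regex does not have.

```python
import re
from typing import Tuple


def rename_transclusions(wikitext: str, old: str, new: str) -> Tuple[str, int]:
    """Replace {{old ...}} with {{new ...}}; return the new text and edit count."""
    # Match "{{old" only when followed by "|" or "}}" (optionally after
    # whitespace), so that e.g. "{{Space telescopes list}}" is left alone.
    pattern = re.compile(r"\{\{\s*" + re.escape(old) + r"(?=\s*[|}])")
    # A function replacement avoids backslash handling in the new name.
    return pattern.subn(lambda match: "{{" + new, wikitext)


text = "{{Space telescopes}} and {{Space telescopes|state=collapsed}}"
updated, count = rename_transclusions(text, "Space telescopes", "Space observatories")
```

Returning the count alongside the text makes it easy to skip saving pages where nothing matched and to write a per-run summary to a log page.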

Discussion

- Can't 58 pages be simply edited with AWB? –Juliancolton | Talk 15:07, 4 January 2010 (UTC)

- Yes, definitely. And now the root problem has been taken care of. It'd be nice, however, to have this approved for use in the general case. tedder (talk) 15:45, 4 January 2010 (UTC)

- You're asking for approval for a bot that changes the used name of templates generally? Who decides which templates? Is the original problem fixed? Josh Parris 01:48, 5 January 2010 (UTC)

Hi. So the original problem is moot, but the idea is to have a bot that can run, on demand and with consensus of the given template community, to update template names. tedder (talk) 06:18, 5 January 2010 (UTC)

- Is the template community WP:TfD? Or Wikipedia:Redirects for discussion, or Wikipedia:Requested moves? Josh Parris 06:37, 5 January 2010 (UTC)

- Sorry, I meant the relevant wikiproject, the template talk, or whoever is discussing the template. For instance, {{WikiProject Oregon}} is at WP:WPORE. tedder (talk) 06:44, 5 January 2010 (UTC)

- How will these communities know that this bot is able to serve them? Josh Parris 06:48, 5 January 2010 (UTC)

- As was noted on the bot request, unless the old name needs to be repurposed for something else, or other rare reasons, we don't need to fix redirects that aren't broken. Mr.Z-man 17:10, 5 January 2010 (UTC)

Trying to understand the request: so this is the sort of bot that you want to have so that you can complete possible, future request-type-things? Even if it doesn't actually get its tasks from that particular page. Ale_Jrbtalk 18:33, 6 January 2010 (UTC)

- That's correct, Ale. It's not like I'd go through and unilaterally/arbitrarily change templates. I can see a group wanting to unify templates from {{PROJECTNAME}} to {{WikiProject PROJECTNAME}}, for instance. However, if it's not desired because the preference of a project is overruled by "don't fix what isn't broken", then this should be withdrawn. tedder (talk) 18:37, 6 January 2010 (UTC)

- I'm not sure if this is problematic dealing with wikiprojects, if the project can show a clear-cut consensus for a change. But I think the bigger projects might not show this readily, and the smaller projects may get no input. Is there a place where this can be discussed in general, like the community pumps, or someplace more specific, asking projects whether a dedicated bot for project templates would serve a purpose? I don't think it's far-fetched to assign a bot to doing tasks as they come up by community agreement. --IP69.226.103.13 | Talk about me. 05:30, 9 January 2010 (UTC)

- Is anybody still interested in this? –Juliancolton | Talk 22:32, 9 February 2010 (UTC)

IMO, this bot has to be rejected, someone do the changes manually and Tedder can request other tasks for its bot. -- Magioladitis (talk) 22:40, 11 February 2010 (UTC)

- Why does it "has to be rejected"(sic)? tedder (talk) 22:51, 11 February 2010 (UTC)

- I don't see the need for a general "template replacement bot". AWB can be used to do "{{foo}}" → "{{bar}}" replacements (assuming there is reason for it), so why would we need a bot to handle these? The operator is not requesting approval for more complex replacements (e.g., "{{foo|bar}}" → "{{baz|bur}}"), that would actually require a bot and specific code; they are requesting approval for a general-purpose, simple replacement bot. Other than slightly less work (esp. if there are a lot of pages involved), I don't see a reason for it: AWB would probably get the job done faster, because there are hundreds upon hundreds of users with access to it, and any one of them could complete the task, but in order for the task to be done with this bot, you would need to be notified. Unless, of course, I misunderstand the task? — The Earwig @ 23:32, 11 February 2010 (UTC)

- I replaced all Space telescopes -> Space observatories using AWB. -- Magioladitis (talk) 17:34, 13 February 2010 (UTC)

Approved for trial (50 edits). Please provide a link to the relevant contributions and/or diffs when the trial is complete. Please address Wikipedia:Bot requests/Archive 34#Update Template Transclusions, a 7000 transclusion request. Your source code doesn't seem particularly generalized. Do you intend to change to be more flexible? Josh Parris 08:00, 26 February 2010 (UTC)

- Why does the request say only one-time run and 58 pages affected? I thought it wanted to change only the pages I fixed. If this bot is gonna do more stuff, we have to change the request above. -- Magioladitis (talk) 08:54, 26 February 2010 (UTC)

- This is a good point. Tedder, please update the request to reflect your current proposal. Josh Parris 09:38, 26 February 2010 (UTC)

Doing... Making some changes, will modify before running, and will run against one fix before doing 50. tedder (talk) 19:17, 26 February 2010 (UTC)

- Okay, I genericized the script, added a page documenting the request, and then ran against (almost) 50 requests. Here's the log: User:TedderBot/TranscludeReplace/log. tedder (talk) 20:30, 26 February 2010 (UTC)

- Can you do minor changes at the same time? More specifically, in talk pages, if a change is made, change: "talkheader" to "talk header", "skiptotalk" to "skip to talk", "WPBS" to "WikiProjectBannerShell". This will help my project in making talk pages more readable.

- If you run it manually I 100% agree that you do it but I think some substitutions can be done automatically. Moreover, no need to log every edit. Just record date, which substitution you did and how many articles were affected. -- Magioladitis (talk) 03:05, 27 February 2010 (UTC)

- The script isn't really built to do AWB-style changes. I'm nervous that adding in those changes isn't going to get consensus- but if it does, it'd be a PERFECT use of this script- run it manually or automatically and keep fixing them up as WPBS keeps getting used, for instance. tedder (talk) 04:57, 27 February 2010 (UTC)

- I suggest that these changes can be done ONLY if the major substitution is done. My bot (Yobot) already has a similar approval and there are no complaints. -- Magioladitis (talk) 10:09, 27 February 2010 (UTC)

- The behaviour of your bot is as described in your BRFA. Do you intend to accommodate Magioladitis' request, or press on as the BRFA is currently framed? Josh Parris 12:07, 27 February 2010 (UTC)

- I don't intend to add in the additional behavior- trying to keep the bot with a narrow scope. tedder (talk) 14:23, 27 February 2010 (UTC)

Approved. Having reviewed the trial edits and the sources, and given Tedder's desire to keep it simple, this bot task seems useful and harmless. Josh Parris 06:23, 28 February 2010 (UTC)

- The above discussion is preserved as an archive of the debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA.

- The following discussion is an archived debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA. The result of the discussion was Approved.

Operator: Basilicofresco

Automatic or Manually assisted: Auto (where not stated differently)

Programming language(s): python (pywikipedia)

Source code available: standard pywikipedia

Function overview: remove useless piping within wikilinks links syntax

Links to relevant discussions (where appropriate): Wikipedia talk:Piped link#Changing existing links to piped links with capital first letter?

Edit period(s): every few months (or less) using the xml dump file

Estimated number of pages affected: 20k (rough guess)

Exclusion compliant (Y/N): Y

Already has a bot flag (Y/N): Y

Discussion regarding original proposal

The following discussion has been closed. Please do not modify it.

Function details: as stated in Wikipedia:Piped link#When not to use, we should never use piped links to convert the first letter to lower case. This well-tested bot will correct occurrences like [[Country code second-level domain|country code second-level domain]]. Already in use on Italian Wikipedia. More examples:

Discussion

Three things:

Tim1357 (talk) 15:15, 28 December 2009 (UTC)

TBH, I really don't like the idea of a bot making 16000 edits that will result in no visible change to the rendered page. It's like WP:NOTBROKEN, except with possibly fewer benefits. Mr.Z-man 22:43, 28 December 2009 (UTC)

There is nothing wrong with having another smackbot. However, I think we should ask Rich to give the bot's code to User:Basilicofresco, so we can cram as many general fixes into one edit as possible. Tim1357 (talk) 02:40, 30 December 2009 (UTC)

I like the idea of adding general fixes to other bots. --IP69.226.103.13 (talk) 16:59, 30 December 2009 (UTC)

Well, I can easily add other fixes, for example:

Basilicofresco (msg) 13:50, 3 January 2010 (UTC)

Yes, they are. The above examples are real. Do you prefer to carefully eye-check 3 million pages and manually edit about 4k pages? (1:820, guess based on random articles sampling) -- Basilicofresco (msg) 08:53, 4 January 2010 (UTC)

FrescoBot 2 bis

Never mind, if the majority considers my proposal about useless pipings "cosmetic", I can remove it. I'm here to help you, not to raise my editcount. So, what about the second group of replacements? -- Basilicofresco (msg) 20:59, 5 January 2010 (UTC)

Fixing also external links

I'm testing on Italian Wikipedia a new set of regex for syntax errors in external links. It probably would be nice to add them here in order to create a single task. I will add details here as soon as possible. -- Basilicofresco (msg) 09:02, 9 January 2010 (UTC)

Function details: (new proposal) Using replace.py I will apply several accurate regular expressions in order to correct these errors:

Example: wrong wikisource --> replaced wikisource = error as appears in the article --> replaced text as appears in the article

- External links

- [HTTP://www.google.it link] --> [http://www.google.it link] = link --> link

- [http://http://www.google.it link] --> [http://www.google.it link] = link --> link

- [http:www.google.it link] --> [http://www.google.it link] = [http:www.google.it link] --> link

- [http:/www.google.it link] --> [http://www.google.it link] = [http:/www.google.it link] --> link

- [http:///www.google.it link] --> [http://www.google.it link] = link --> link

- [[http://www.google.it link]] --> [http://www.google.it link] = [link] --> link

- [[http://www.google.it link] --> [http://www.google.it link] = [link --> link

- [http:://www.google.it link] --> [http://www.google.it link] = [http:://www.google.it link] --> link

- [http//www.google.it link] --> [http://www.google.it link] = [http//www.google.it link] --> link

- something[http://www.google.it link] --> something [http://www.google.it link] = somethinglink --> something link

- [http://www.google.it link]something --> [http://www.google.it link] something = linksomething--> link something

- few other very rare variants - (manually assisted)

- [http://images.google.com/imgres?imgurl=http://habitant.org/images/stignatius.jpg&imgrefurl=http://habitant.org/houghton/fcgenealogy.htm&h=287&w=320&sz=29&hl=en&start=33&um=1&tbnid=ibPawlbIEskUcM:&tbnh=106&tbnw=118&prev=/images%3Fq%3DHoughton%2BMI%26start%3D20%26ndsp%3D20%26svnum%3D10%26um%3D1%26hl%3Den%26sa%3DN Flat Broke Blues Band Photo Album] --> [http://www.flatbrokebluesband.com/photos.php Flat Broke Blues Band Photo Album] = Flat Broke Blues Band Photo Album --> Flat Broke Blues Band Photo Album

- [http://images.google.com/imgres?imgurl=http://habitant.org/images/stignatius.jpg&imgrefurl=http://habitant.org/houghton/fcgenealogy.htm&h=287&w=320&sz=29&hl=en&start=33&um=1&tbnid=ibPawlbIEskUcM:&tbnh=106&tbnw=118&prev=/images%3Fq%3DHoughton%2BMI%26start%3D20%26ndsp%3D20%26svnum%3D10%26um%3D1%26hl%3Den%26sa%3DN Google Image Result for http://www.flatbrokebluesband.com/photos.php<!-- Bot generated title -->] --> [http://www.flatbrokebluesband.com/photos.php] = Google Image Result for http://www.flatbrokebluesband.com/photos.php --> [1]

- Wikilinks

- [[Sonar||sidescan sonar]] --> [[Sonar|sidescan sonar]] = |sidescan sonar --> sidescan sonar

- [['''''sonar''''']] --> '''''[[sonar]]''''' = sonar --> sonar

- [['''sonar''']] --> '''[[sonar]]''' = sonar --> sonar

- [[''sonar'']] --> ''[[sonar]]'' = sonar --> sonar

- [["sonar"]] --> "[[sonar]]" = "sonar" --> "sonar" - I will avoid the few (24) exceptions, eg. "Them"

- [[(sonar)]] --> ([[sonar]]) = (sonar) --> (sonar) - I will avoid the few (28) exceptions, eg. (not adam)

- [['sonar']] --> '[[sonar]]' = 'sonar' --> 'sonar' - I will avoid the few (1) exceptions, eg. 'Hours'

- [[sonar,]] --> [[sonar]], = sonar, --> sonar, - I will avoid the few (1) exceptions, eg. Alors voilà,

- something[[sonar]] --> something [[sonar]] = somethingsonar --> something sonar

- something[[ sonar]] --> something [[sonar]] = somethingsonar --> something sonar

- [[1992-1998]] --> [[1992]]-[[1998]] = 1992-1998 --> 1992-1998 - any type of dash, I will avoid the few (26) exceptions, eg. 1967–1970, I will also avoid any decade eg. 1950-1959 (I just created these redirects to decades in order to capture any red-but-plausible wikilink)

- [[1992-98]] --> [[1992]]-[[1998|98]] = 1992-98 --> 1992-98 - any type of dash, I will avoid the few (2) exceptions, eg. 1806-20, I will avoid potentially ambiguous intervals (cross-century) eg. 1862-34

- [[Nile Delta ]]and --> [[Nile Delta]] and = Nile Delta and --> Nile Delta and - (manually assisted)

- [[sonar.]] --> [[sonar]]. = sonar. --> sonar. - (manually assisted)

- few other very rare variants - (manually assisted)

- Internal links conversion

- [http://en.wikipedia.org/wiki/ECFS_%28cable_system%29 ECFS] --> [[ECFS (cable system)|ECFS]] = ECFS --> ECFS (handles piping and common url encoding)

- [http://en.wikipedia.org/wiki/File:Flag_of_Brunei.svg Flag of Brunei] --> [[:File:Flag of Brunei.svg|Flag of Brunei]] = Flag of Brunei --> Flag of Brunei (properly handles files and categories)

- [http://fr.wikipedia.org/wiki/Ren%C3%A9-Maurice_Gattefoss%C3%A9 René-Maurice Gattefossé] --> [[:fr:René-Maurice Gattefossé|René-Maurice Gattefossé]] = René-Maurice Gattefossé --> René-Maurice Gattefossé (handles links to wikipedia in foreign languages)

- [http://en.wikipedia.org/wiki/Louise_Marie_Ad%C3%A9la%C3%AFde_de_Bourbon-Penthi%C3%A8vre Mme de Genliss] --> [[Louise Marie Adélaïde de Bourbon-Penthièvre|Mme de Genliss]] = Mme de Genliss --> Mme de Genliss (converts a good number of unicode sequences)

- [http://hi.wikipedia.org/wiki/%E0%A4%B5%E0%A4%BF%E0%A4%95%E0%A4%BF%E0%A4%AA%E0%A5%80%E0%A4%A1%E0%A4%BF%E0%A4%AF%E0%A4%BE:%E0%A4%87%E0%A4%82%E0%A4%9F%E0%A4%B0%E0%A4%A8%E0%A5%87%E0%A4%9F_%E0%A4%AA%E0%A4%B0_%E0%A4%B9%E0%A4%BF%E0%A4%A8%E0%A5%8D%E0%A4%A6%E0%A5%80_%E0%A4%95%E0%A5%87_%E0%A4%B8%E0%A4%BE%E0%A4%A7%E0%A4%A8 Tools and Techniques for Hindi Computing] --> [[:hi:%E0%A4%B5%E0%A4%BF%E0%A4%95%E0%A4%BF%E0%A4%AA%E0%A5%80%E0%A4%A1%E0%A4%BF%E0%A4%AF%E0%A4%BE:%E0%A4%87%E0%A4%82%E0%A4%9F%E0%A4%B0%E0%A4%A8%E0%A5%87%E0%A4%9F %E0%A4%AA%E0%A4%B0 %E0%A4%B9%E0%A4%BF%E0%A4%A8%E0%A5%8D%E0%A4%A6%E0%A5%80 %E0%A4%95%E0%A5%87 %E0%A4%B8%E0%A4%BE%E0%A4%A7%E0%A4%A8|Tools and Techniques for Hindi Computing]] = Tools and Techniques for Hindi Computing --> Tools and Techniques for Hindi Computing (does not screw up with exotic not-recognized unicode sequences)
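
As a rough illustration of two of the fix families listed above (damaged `http://` prefixes and "internal links" written as external links), here is a minimal Python sketch. It is not the bot's actual code, which is unpublished: the function names, the regular expressions and the assumption that a damaged prefix is followed by a host starting with `www.` are all hypothetical. Unlike the Hindi example above, this sketch decodes every percent-escape it meets.

```python
import re
from urllib.parse import unquote

# Assumption: the damaged prefix is followed by a host starting with "www.".
# Matches "[http:www", "[http:/www", "[http:///www", "[http:://www",
# "[http//www" and "[http://http://www".
BAD_PROTOCOL = re.compile(r"\[(?:http:*/*)+(?=www\.)")

# Hypothetical pattern for an external-style link to a Wikipedia page.
INTERNAL_LINK = re.compile(
    r"\[http://(?P<lang>[a-z-]+)\.wikipedia\.org/wiki/"
    r"(?P<title>[^\s\]]+) (?P<label>[^\]]+)\]"
)


def fix_protocol(text):
    """Normalise a damaged protocol prefix to a single 'http://'."""
    return BAD_PROTOCOL.sub("[http://", text)


def _to_wikilink(match, home_lang="en"):
    """Build a piped wikilink from one INTERNAL_LINK match."""
    # Decode percent-escapes (UTF-8) and turn underscores into spaces.
    title = unquote(match.group("title")).replace("_", " ")
    label = match.group("label")
    lang = match.group("lang")
    prefix = ""
    if lang != home_lang:
        # Link to another language edition, e.g. [[:fr:...]].
        prefix = f":{lang}:"
    elif title.startswith(("File:", "Category:")):
        # A leading colon links to the page instead of embedding/categorising.
        prefix = ":"
    return f"[[{prefix}{title}|{label}]]"


def convert_internal_links(text):
    """Rewrite external-style Wikipedia links as wikilinks."""
    return INTERNAL_LINK.sub(_to_wikilink, text)
```

A real run would still need the exception list and the manually assisted cases described above; the sketch only covers the mechanical part.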

Discussion about new proposal

What will you do in the event of people creating articles that you don't currently have in your exceptions? Ale_Jrbtalk 19:41, 16 January 2010 (UTC)[reply]

- Excluding year intervals, there are currently only 60 exceptions among 3162000 articles. This means 60/3162000 = 1/52700. The probability of replacing a red wikilink (article missing) with an "incorrect" red wikilink (missing quotes/brackets/etc.) is therefore about 1/52700. Pretty low. Moreover, any new article with such a peculiar name will likely come with a redirect from the cleaned name. The risk is negligible and acceptable, considering that this task will, for example, fix more than 3k broken wikilinks. What I can do, however, is periodically update the exception list, do my best to avoid any error and promptly correct any problem. -- Basilicofresco (msg) 13:15, 17 January 2010 (UTC)

- Periodic updates to the list sounds like a reasonable work-around. How often do you think you'll update the list of exception cases? Josh Parris 04:31, 19 January 2010 (UTC)[reply]

- I plan to run the script every time a new dump file is available, and I'm going to check for new exclusions before every run. It is the safest method. -- Basilicofresco (msg) 08:41, 19 January 2010 (UTC)

Perhaps a heuristic you could use is that if the link is a redirect, you can repair; if it's an article, you can't repair. How does this fit with the exceptions you have identified? Josh Parris 12:53, 18 January 2010 (UTC)[reply]

- Of course, I created the exclusion list starting from the existing articles with a matched name. -- Basilicofresco (msg) 19:51, 18 January 2010 (UTC)[reply]

Meanwhile I also tested the non-trivial conversion of "internal links" into wikilinks (take a look above). As you asked after my first proposal, I put together a good bunch of tasks. Let me know if there are any other common link problems I can solve, or if you would like me to also reintroduce the removal of useless piping. -- Basilicofresco (msg) 09:35, 21 January 2010 (UTC)

I would like to know which ones are currently fixed by WP:AWB and/or are part of WP:CHECKWIKI, i.e. which are fixed on a daily basis by many editors. -- Magioladitis (talk) 09:28, 24 January 2010 (UTC)

- I don't know. Probably a few fixes are included or partially included, but that is far from being a problem. Can I start with some test edits, so we can see whether AWB editors are really able to correct every error on every page on a daily basis? -- Basilicofresco (msg) 12:05, 24 January 2010 (UTC)

Info: This is what AWB can do atm. -- Magioladitis (talk) 15:23, 9 February 2010 (UTC) ...and some more. Basilicofresco, very good ideas! PS Better poke someone from BAG to get approved. -- Magioladitis (talk) 19:46, 9 February 2010 (UTC)[reply]

Update: I'm performing additional tests in order to further improve the above collection. I will soon ping a BAG operator. -- Basilicofresco (msg) 09:41, 12 February 2010 (UTC)[reply]

Useless piping

I checked the 2009/11/28 dump and found that only about 7% of the useless pipings existing at that date have been corrected since its creation (2 months and 15 days ago). This means that AWB "daily basis" fixing is simply not enough. Many of you criticized the first proposal (useless piping removal only) as "cosmetic only". OK, but now there is a whole collection of fixes, and adding useless piping removal as well seems appropriate and balanced, imho. See also Wikipedia:Piped link#When not to use. Is there any objection? -- Basilicofresco (msg) 16:07, 12 February 2010 (UTC)

- Useless piping (improved)

- [[Sidescan sonar|Sidescan sonar]] --> [[Sidescan sonar]] = Sidescan sonar --> Sidescan sonar

- [[sidescan sonar|Sidescan sonar]] --> [[Sidescan sonar]] = Sidescan sonar --> Sidescan sonar

- [[Sidescan sonar|sidescan sonar]] --> [[sidescan sonar]] = sidescan sonar --> sidescan sonar

- [[Breakfast_of_Champions|Breakfast of Champions]] --> [[Breakfast of Champions]] = Breakfast of Champions --> Breakfast of Champions

- [[Breakfast of Champions|"Breakfast of Champions"]] --> "[[Breakfast of Champions]]" = "Breakfast of Champions" --> "Breakfast of Champions" - also with ( ) and '' ''

- [[Breakfast_of_Champions|"Breakfast of Champions"]] --> "[[Breakfast of Champions]]" = "Breakfast of Champions" --> "Breakfast of Champions" - also with ( ) and '' ''

- other minor variants

Basilicofresco (msg) 16:07, 12 February 2010 (UTC)[reply]
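
The useless-piping rules listed above can be sketched roughly as follows in Python. This is only an illustration under stated assumptions, not the bot's code: the helper names are hypothetical, the comparison ignores only the case of the first letter and underscores in the target, and the "other minor variants" are not covered.

```python
import re

# A piped wikilink: [[target|label]] with no nested brackets or extra pipes.
PIPED = re.compile(r"\[\[([^\[\]|]+)\|([^\[\]|]+)\]\]")

# Wrappers that can be moved outside the link: quotes, parentheses, italics.
WRAPPERS = [('"', '"'), ("(", ")"), ("''", "''")]


def _same_page(target, label):
    """True if label equals target up to underscores and first-letter case."""
    t = target.replace("_", " ").strip()
    l = label.strip()
    if not t or not l:
        return False
    return t[0].lower() == l[0].lower() and t[1:] == l[1:]


def _simplify(match):
    target, label = match.group(1), match.group(2).strip()
    if _same_page(target, label):
        # The label alone links to the same page, so the pipe is useless.
        return f"[[{label}]]"
    for opener, closer in WRAPPERS:
        wrapped = (label.startswith(opener) and label.endswith(closer)
                   and len(label) > len(opener) + len(closer))
        if wrapped:
            inner = label[len(opener):len(label) - len(closer)]
            if _same_page(target, inner):
                # Move the wrapper outside the link and drop the pipe.
                return f"{opener}[[{inner}]]{closer}"
    return match.group(0)  # leave every other piped link untouched


def remove_useless_piping(text):
    return PIPED.sub(_simplify, text)
```

Links whose label differs from the target in any other way, such as `[[Sonar|sidescan sonar]]`, pass through unchanged.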

- I support these fixes if the number is so big. -- Magioladitis (talk) 16:13, 12 February 2010 (UTC)[reply]

- Info: that's what AWB can do v.5.0.1.0 (rev. 6203). -- Magioladitis (talk) 10:51, 13 February 2010 (UTC)[reply]

Basilicofresco, where will your list of exceptions be located? Will be updated manually or automatically? Can other editors update it? -- Magioladitis (talk) 10:53, 13 February 2010 (UTC)[reply]

- Exceptions were located in my userspace on it.wikipedia, but they are now also present here. I'm going to systematically check for new exclusions among page names in the fresh dump before every run (1 per month or less). Obviously suggestions are always welcome. -- Basilicofresco (msg) 18:47, 13 February 2010 (UTC)

- I think the majority of your fixes are good (i.e., the ones that change the way the page appears or the ones that change the final target of the link), and I would be interested in approving this bot for a trial. However, I'm not so sure if certain fixes you mentioned above are necessary, such as the ones that only change wikicode, and do not affect the final rendered page. A bot making an edit solely for cosmetic purposes, and not to fix links that are actually broken, may be a little wasteful (e.g., Sidescan sonar → Sidescan sonar). I think it would be best to stick with external links/wikilinks/internal link conversions, and avoid fixing useless piping for now. — The Earwig @ 01:43, 18 February 2010 (UTC)[reply]

- It's part of CHECKWIKI anyway. Some people solely do that. Why not a bot to save us time and effort? Of course it depends on the number of edits done per day. We need some estimate, but I don't think there will be many anyway. -- Magioladitis (talk) 07:29, 18 February 2010 (UTC)

- Useless piping is considered a middle-priority issue by the checkwiki project. And, as you can see, they pointed out that the problem should be corrected by "AWB, AutoEd, BOT". I checked the November dump again with a larger sample (900 old mistakes in the middle of the dump), and the test shows that only about 150 (17%) were corrected during the past 3 months. IMO the general fixes of AWB and AutoEd are in need of help. For this reason I asked again for your opinion. Other comments are welcome. -- Basilicofresco (msg) 08:10, 19 February 2010 (UTC)

- Fair enough.

Approved for trial (50 edits), with all fixes enabled. Please provide a link to the relevant contributions and/or diffs when the trial is complete. — The Earwig @ 16:11, 19 February 2010 (UTC)

- Done. The most common corrections are missing spaces near links, useless piping and wiki/interwikification of "fake" external links. -- Basilicofresco (msg) 18:52, 20 February 2010 (UTC)[reply]

Trial complete. Results look good. Any comments/objections before this task is approved? — The Earwig @ 22:23, 20 February 2010 (UTC)[reply]

- Is the code published somewhere? --Magioladitis (talk) 23:28, 20 February 2010 (UTC)

- No, but if everything goes fine, I will probably publish it in the near future. -- Basilicofresco (msg) 01:26, 21 February 2010 (UTC)

- Ps. I'm going to include also namespace 6 because it's useful and harmless (tested). Is it ok? -- Basilicofresco (msg) 06:52, 22 February 2010 (UTC)[reply]

If there are no objections, I'm ready to start. -- Basilicofresco (msg) 15:01, 24 February 2010 (UTC)[reply]

Approved. after reviewing the results, which provide a good example of the breadth of functionality to be exercised. Josh Parris 10:21, 25 February 2010 (UTC)[reply]

- The above discussion is preserved as an archive of the debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA.

- The following discussion is an archived debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA. The result of the discussion was

Approved.

Operator: Written by Kingpin13, will be run by <s>Sodam Yat</s> ThaddeusB to start off with

Automatic or Manually assisted: Automatic

Programming language(s): C# using DotNetWikiBot

Source code available: No

Function overview: Will take over from User:CSDWarnBot

Links to relevant discussions (where appropriate): recent discussion at WT:CSD / Discussion which led to CSDWarnBot's block

Edit period(s): Continuous

Estimated number of pages affected: 50+ per day, according to CSDWarnBot's edits

Exclusion compliant (Y/N): Yes

Already has a bot flag (Y/N): N

Function details: CSDWarnBot was stopped because it didn't wait before notifying users; this bot will wait 15 minutes (this time can very easily be changed if wanted). Basically, for each page tagged under CSD, the bot will check whether the creator of the page has been warned and, if not, warn the creator.
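
The decision described in the function details can be sketched as below. The bot itself is written in C# with DotNetWikiBot and its source is not available, so this Python sketch is only an illustration: the `TaggedPage` record and its fields are hypothetical, and how "still tagged" and "already warned" are actually detected is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class TaggedPage:
    """Hypothetical record of a page tagged for speedy deletion."""
    title: str
    creator: str
    tagged_at: datetime
    still_tagged: bool    # False if the tag was removed or the page deleted
    creator_warned: bool  # True if the creator has already been notified


def should_notify(page, now, delay=timedelta(minutes=15)):
    """Return True if the bot should warn the page creator now."""
    if not page.still_tagged:
        return False  # nothing left to warn about
    if now - page.tagged_at < delay:
        return False  # give the tagger time to leave a manual notice
    return not page.creator_warned
```

Keeping the delay as a parameter reflects the remark that the waiting time can easily be changed.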

Discussion

The main problem is what template the bot should use to notify the user. I'll have a look into creating one :). - Kingpin13 (talk) 19:06, 17 November 2009 (UTC)[reply]

- Note discussion on talk: Wikipedia talk:Bots/Requests for approval/SDPatrolBot II - Kingpin13 (talk) 08:58, 18 November 2009 (UTC)[reply]

- Can the bot also notify the CSDer that they should notify the creator of the article in a timely fashion? Time looks good.

- Why run by Sodam Yat? The user page suggests he is not willing to discuss issues about deletion:

It's rare that I actually re-visit a discussion that I'm not actively involved in. If I commented on an AFD or marked an article for Speedy Deletion, I'm not actually paying attention to it beyond that point. Should my points be proven wrong, or should evidence that I missed surface, I have no problem with an Admin reading my !vote the other way.

- Deletions are a matter of community consensus that results from discussions. A user who makes unilateral decisions and announces an unwillingness to engage in, or rather a lack of concern about, the discussion does not strike me as an appropriate bot operator. The second sentence is pointless; admins aren't going to say, "Oh, I read this guy's user page, and he won't mind if I discount his vote." So, essentially the user is commenting on AfDs and marking articles for speedy deletion, but is not amenable to community input once he's voiced his opinion on the article.

- So, he'll operate the bot and ignore what the bot does? IMO this bot should not go forward with this operator. --IP69.226.103.13 (talk) 11:24, 19 November 2009 (UTC)[reply]

- Firstly, I don't take that as him saying he doesn't care about it, rather that he doesn't manage to keep track of it. Anyway, I will be running this bot too, it's just I don't have a computer running 24/7, which Sodam Yat does. But I will most likely be the one paying attention to what the bot does, as I'm the one who has written it, and therefore the one who will actually be able to address concerns. - Kingpin13 (talk) 12:31, 19 November 2009 (UTC)[reply]

- P.S. Yes the bot can identify the nominator (and already does). I can have it leave them a message too. - Kingpin13 (talk) 12:34, 19 November 2009 (UTC)[reply]

- I think this is useful as it will cut down on repeat offenders who don't understand it's a courtesy to prioritize notifying the article's creator. --IP69.226.103.13 (talk) 19:27, 19 November 2009 (UTC)

- That was mainly because I rarely comment in AFD debates, and do not add them to my watchlist. So if I make an argument based on something presented, and that is later proven incorrect, chances are I will not recheck my !vote to make sure it is still the correct choice. If someone wants to bring something to my attention, I will be more than happy to talk about it. While I run the bot, I will make every effort to monitor it while I am available, and will try to check periodically even if I am away, in case something is going wrong. Sodam Yat (talk) 16:35, 19 November 2009 (UTC)[reply]

- My concerns are not alleviated. A bot operator has to keep track of what the bot does. I have to say that bot policy does not favor an operator who sees community consensus as his putting in his vote and then ignoring the discussion. Wikipedia is an encyclopedia written by community consensus. It's actually working. Bots require community consensus and operators who are communicating with the community. I am uncomfortable with this bot being operated at any time by this user, as I see high potential for unnecessary drama and incivility due to the operator's stated lack of involvement in developing community consensus for deletion discussions. This is particularly a problem with this bot and this bot operator. User:Sodam Yat has offered, on my user talk page, to attempt to find another operator to work with Kingpin13 on running the bot, and I think he should be taken up on this offer.

- As usual, I have no issues with Kingpin13 as operator or coder. --IP69.226.103.13 (talk) 19:27, 19 November 2009 (UTC)[reply]

- In light of the concerns raised, I withdraw my offer to run the bot. If I am able to find someone else to run it, I will do so, but even if I cannot I can't in good conscience run a bot with opposition. Sodam Yat (talk) 19:50, 19 November 2009 (UTC)[reply]

- Well, that's a shame IMO. I've contacted ThaddeusB, since he mentioned something about it.. - Kingpin13 (talk) 20:02, 19 November 2009 (UTC)[reply]

- If I don't get back to this, just a note that I see no issues with ThaddeusB as a bot operator. --IP69.226.103.13 (talk) 20:07, 19 November 2009 (UTC)[reply]

- Kingpin has asked me to run the bot and I would be happy to do so. Reducing BITE on Wikipedia is one of the things I care deeply about, so I will of course respond to any questions/complaints that come in. My C is fairly rudimentary, but I trust that Kingpin will make sure any bugs that may arise get ironed out.

As far as the bot goes, I have two comments:

- I think a 10 minute delay would be sufficient.

- The templated message needs to be carefully crafted. I trust a draft version of it will be released soon.

- --ThaddeusB (talk) 01:16, 20 November 2009 (UTC)[reply]

- So basically, the bot will do the following: For certain CSD types, it will wait until at least 10 minutes after the CSD tag was placed on the article and then notify the creator of the article if they have not been notified already. Will it also not bother notifying if the creator has edited the article within that 10 minutes? Will it catch a situation where the creator was notified and deleted the notification? Was the suggestion at Wikipedia talk:Bots/Requests for approval/SDPatrolBot II#Template bombing implemented? If the bot's templates are not to be fully protected, will it verify that they have not been vandalized before substing them? Will the bot have a non-admin shutoff? Does the exclusion compliance include {{bots|optout=something}} (is there even an appropriate "something"?)?

- The bot's userpage also needs to be updated to indicate just which CSD types will be processed and which template(s) will be used, and to indicate the current operator(s); an editnotice pointing out that posting to the bot's talk page to contest a CSD is inappropriate and futile would probably also be helpful. Also, please verify that the account password is only known to the appropriate person or persons (i.e. if Sodam Yat ever knew the password, it must have been changed). Anomie⚔ 03:33, 2 December 2009 (UTC)

- The bot doesn't care if the article has been edited since the CSD tag was placed. But it won't notify if the CSD tag has been removed, or the page deleted (it won't be able to read the page anyway). The bot will actually be searching the history for the nominator, so removal of the warning shouldn't matter. The template bombing check has not yet been implemented, but it should be pretty easy; I have just yet to actually write the code. I don't plan on having a non-admin shutoff, but I could if it's wanted. At the moment, only I know the password; once the bot is approved I'll let ThaddeusB know. I'll either have fully protected templates, or keep them in code and off-wiki. ThaddeusB: 10 minutes is good by me. And I will try to get around to the template creation soon :), - Kingpin13 (talk) 14:21, 2 December 2009 (UTC)

- P.S. About the exclusion compliance; at the moment the only parameter in optout which will stop the bot is the bot's name, I could add more if wanted..? - Kingpin13 (talk) 14:30, 2 December 2009 (UTC)[reply]

- Sounds good about the templates and the password. The bot's userpage still needs updating, the rest of the questions were really just to throw ideas out there. Do consider implementing the check for not notifying if the creator edited the article since the CSD went up along with the anti-bombing, unless there is something I'm missing where they are likely to not have noticed the CSD when they made the edit. Let me know when the anti-bombing and templates are ready and I'll give a trial. Anomie⚔ 20:48, 2 December 2009 (UTC)[reply]

- I don't see a point in a non-admin shut off for this bot with this task and operator, even with change of operator, the task itself does not require a non-admin shut-off, imo. Looks fine, details covered, monitored by competent bot operators/watchers. --69.226.100.7 (talk) 19:49, 22 December 2009 (UTC)[reply]

- {{OperatorAssistanceNeeded}} Status report Mr. Pin? MBisanz talk 00:59, 16 December 2009 (UTC)[reply]

- The difficult thing is the templates :/. I'll try very hard to get them started today :D. - Kingpin13 (talk) 09:46, 16 December 2009 (UTC)[reply]

- Great, thanks for the update. MBisanz talk 10:22, 30 December 2009 (UTC)[reply]

- Haha, really sorry about the slow progress of this :/ - Kingpin13 (talk) 21:52, 30 December 2009 (UTC)[reply]

- No hurry, it'll just be attributed to BAG's ponderous ways. --IP69.226.103.13 | Talk about me. 07:33, 2 January 2010 (UTC)

How does the bot detect whether someone has been notified? — Carl (CBM · talk) 23:09, 6 January 2010 (UTC)[reply]

- At the moment it is just if the user's talk page has been edited since the page was created (to avoid template bombing). - Kingpin13 (talk) 10:06, 14 January 2010 (UTC)[reply]

Kingpin: these templates may be of use to you: Wikipedia:Bots/Requests for approval/SoxBot VII 3. (X! · talk) · @751 · 17:01, 9 January 2010 (UTC)[reply]

- Thanks X! - Kingpin13 (talk) 10:06, 14 January 2010 (UTC)[reply]

- Hi Kingpin, I understand the bot will not be able to notify the author if their page has been both tagged and deleted within ten minutes. I wouldn't want to delay implementation of the Bot, as I still see the bot as very much needed even with that limitation, but I would appreciate it if you could find a workaround for this. ϢereSpielChequers 18:55, 9 January 2010 (UTC)[reply]

- Well, I can shorten it to a matter of seconds/a minute (depending on the number of articles the bot is looking through). But it depends partly on ThaddeusB.

I have now created the warnings (they can be seen at User:SDPatrolBot II/Warning Examples). I haven't created one for every CSD, but if someone else wants to make some more I can add them to the bot. - Kingpin13 (talk) 10:06, 14 January 2010 (UTC)

- Thanks, I think the gap between article tag and informing the author is important as it allows for manual and tailored messages; I was just wondering if there was some workaround that would still inform the author even if the article had been deleted in the interim. Is the problem that without making it an admin bot it can't see the deleted articles; or would it require it to be continuously running, keeping a log of tagged articles and then checking ten minutes later so that it could avoid warning the author if either the article was still there and no longer tagged or the author had been notified in the meantime? ϢereSpielChequers 11:09, 14 January 2010 (UTC)

- Yuh, the problem is that the bot (as a non-admin bot) can't see deleted edits, and although it does keep a log of the pages which have been tagged for deletion, it checks the page just before notifying the user, so the only workaround would be for the bot to be an admin (which isn't possible with me as op). BAG: The bot should be pretty much ready for a trial if we want :) - Kingpin13 (talk) 16:47, 16 January 2010 (UTC)[reply]

- A couple quick notes: I am sure changing the delay at some future point would not be an issue, if the need arises. I am an admin, so if admin functionality is decided to be necessary, that won't be an issue. --ThaddeusB (talk) 01:05, 21 January 2010 (UTC)[reply]

- But it would prove to be a bit of a problem with me coding it. I'd be happy to give the source code to an admin willing to take a shot at making this work with deleted pages. - Kingpin13 (talk) 13:09, 22 January 2010 (UTC)[reply]

Approved for trial (50 edits). Please provide a link to the relevant contributions and/or diffs when the trial is complete. Tim1357 (talk) 16:41, 24 January 2010 (UTC)[reply]

- Grr, trying to get this done asap, but for some reason DotNetWikiBot is refusing to work properly in this particular project (it was fine before, and is working in other projects), I'm working on finding the cause of this. Sorry for the delay - Kingpin13 (talk) 20:58, 27 January 2010 (UTC)[reply]

Trial complete. I messed up the first few edits, - Kingpin13 (talk) 16:57, 2 February 2010 (UTC)[reply]

Approved. Looks like everything went well. Good luck! (X! · talk) · @783 · 17:47, 3 February 2010 (UTC)[reply]

- The above discussion is preserved as an archive of the debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA.

- The following discussion is an archived debate. Please do not modify it. To request review of this BRFA, please start a new section at WT:BRFA. The result of the discussion was

Request Expired.

Operator: ThaddeusB

Automatic or Manually assisted: automatic, unsupervised

Programming language(s): Perl

Source code available: here

Function overview: fill in tables with data on prehistoric creatures

Edit period(s): one time run

Estimated number of pages affected: 13

Exclusion compliant (Y/N): N/A - this only applies to user & talk pages, correct?

Already has a bot flag (Y/N): N

Function details: Using a large database of information downloaded from http://paleodb.org and http://strata.geology.wisc.edu/jack/ the first function of this bot will be to fill in the tables found in various "list of" articles. A sample entry has been filled in here. Any data that is missing from the database will simply be left blank.

Only a tiny number of pages will be affected, but the amount of bot filled in content will be immense. As such, I am suggesting the bot trial be something along the lines of "the first 10 entries on each page" rather than a number of edits.

A copy of the database is available here (425k). The database is organized as follows:

Genus--Valid?--Naming Scientist--Year named--Time period it lived during--Approx dates lived--locations

- A "1" in the valid column means it is currently listed as a valid genus, "NoData" means it couldn't be determined - most likely because there are two genera with the same name, and "No-{explanation}" means it is not currently listed as a valid genus.

- Data preceded by a "*" means it was derived from Sepkoski's data, using the dates found here (compiled by User:Abyssal). All other data came from paleodb, using their fossil collection data for more precise dates (when available.)

- Spot checking of my data is encouraged, although I'm confident no novel errors have been introduced. If anyone knows of additional sources to derive similar data, let me know and I'll incorporate those sources into the database.
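
To make the record layout above concrete, here is a minimal Python sketch of a parser for one database line. The bot itself is written in Perl, so this is only an illustration: the `GenusRecord` class, the status labels, the assumption that locations are comma-separated, and the assumption that the "*" marker sits at the start of individual fields are all hypothetical.

```python
from dataclasses import dataclass, field

# Column order as described above, separated by "--".
COLUMNS = ("genus", "valid", "author", "year", "period", "dates", "locations")


@dataclass
class GenusRecord:
    genus: str
    status: str
    author: str
    year: str
    period: str
    dates: str
    from_sepkoski: bool
    locations: list = field(default_factory=list)


def parse_status(raw):
    """Translate the 'Valid?' column into a readable status."""
    if raw == "1":
        return "Valid"
    if raw == "NoData":
        return "Unknown"
    if raw.startswith("No-"):
        return "Invalid: " + raw[len("No-"):]
    raise ValueError(f"unexpected validity value: {raw!r}")


def parse_record(line):
    """Split one 'a--b--c--...' line into a GenusRecord."""
    parts = line.strip().split("--")
    if len(parts) != len(COLUMNS):
        raise ValueError(f"expected {len(COLUMNS)} fields, got {len(parts)}")
    genus, valid, author, year, period, dates, locations = parts
    return GenusRecord(
        genus=genus,
        status=parse_status(valid),
        author=author,
        year=year,
        # Assumption: a leading "*" marks data derived from Sepkoski.
        period=period.lstrip("*"),
        dates=dates.lstrip("*"),
        from_sepkoski=period.startswith("*") or dates.startswith("*"),
        # Assumption: locations are comma-separated.
        locations=[p.strip() for p in locations.split(",") if p.strip()],
    )
```

For example, `parse_record("Advenaster--1--Hess--1955--Late Bajocian to Late Callovian--DATES--Switzerland, Poland")` would give a record with status "Valid" and two locations; the `DATES` placeholder stands in for a value not shown here.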

List of pages to be affected: (might be expanded slightly if others are found)

- List of prehistoric starfish

- List of prehistoric barnacles

- List of crinoid genera

- List of prehistoric echinoids

- List of edrioasteroids

- List of graptolites

- List of prehistoric sea cucumbers

- List of hyoliths

- List of prehistoric malacostracans

- List of prehistoric brittle stars

- List of prehistoric ostracods

- List of prehistoric chitons

- List of prehistoric stylophorans

Discussion

Source code will be published shortly, although the code itself is a quite simple "read from db publish to Wikipedia" operation. --ThaddeusB (talk) 01:48, 3 September 2009 (UTC)

- Now available here. --ThaddeusB (talk) 13:16, 8 September 2009 (UTC)

I have asked the relevant projects for input: [2] --ThaddeusB (talk) 02:08, 3 September 2009 (UTC)

- Wikipedia talk:WikiProject Gastropods#BOT notice.

- List of genera of Monoplacophora would be fine. (Uncertain Monoplacophora/Gastropoda should be on separate list. See Monoplacophora#Fossil species.) --Snek01 (talk) 09:29, 3 September 2009 (UTC)

Bullets?

Maybe the countries should be a bulleted list to save horizontal space. IE:

- Poland

- Switzerland

as opposed to "Switzerland, Poland". Abyssal (talk) 15:30, 3 September 2009 (UTC)

- Which is preferable: Option 1

| Genus | Authors | Year | Status | Age | Location(s) | Notes |
|---|---|---|---|---|---|---|
| Advenaster | Hess | 1955 | Valid | Late Bajocian to Late Callovian | Switzerland, Poland | |
| SampleEntry | Hess | 1955 | Valid | Early Cretaceous to present | Switzerland, Poland, United States, France | |
| Bad Genus | | | Invalid | | | rank changed to subgenus, name to Genus (Sub genus) |

- Option 2

| Genus | Authors | Year | Status | Age | Location(s) | Notes |
|---|---|---|---|---|---|---|
| Advenaster | Hess | 1955 | Valid | Late Bajocian to Late Callovian | • Switzerland<br>• Poland | |
| SampleEntry | Hess | 1955 | Valid | Early Cretaceous to present | • Switzerland<br>• Poland<br>• United States<br>• France | |
| Bad Genus | | | Invalid | | | rank changed to subgenus, name to Genus (Sub genus) |

- or Option 3

| Genus | Authors | Year | Status | Age | Location(s) | Notes |
|---|---|---|---|---|---|---|
| Advenaster | Hess | 1955 | Valid | Late Bajocian to Late Callovian | | |
| SampleEntry | Hess | 1955 | Valid | Early Cretaceous to present | | |
| Bad Genus | | | Invalid | | | rank changed to subgenus, name to Genus (Sub genus) |

- Any is fine by me. --ThaddeusB (talk) 18:49, 3 September 2009 (UTC)

- Definitely 2 or 3, although I don't care which. I love that you're using the sort template. <3 Also, could you have the year link to the "year in paleontology" article? And link to the countries? Abyssal (talk) 04:35, 4 September 2009 (UTC)

- No problem, I will link the date & countries. --ThaddeusB (talk) 13:45, 4 September 2009 (UTC)

- Hmm, WP:Context and all that - re countries. Surprise links to "year in" are not that great either. Rich Farmbrough, 09:51, 6 September 2009 (UTC).

Invalid genera should be in a separate table, because that is better for the general public. If there is any reason to have them together, then that could also be OK. Such tables will also be useful. --Snek01 (talk) 11:48, 4 September 2009 (UTC)

- I'm neutral on linking the countries, although I will point out that such links would allow someone to easily figure out where in the world the fossil was found. I view the "year in" links as completely appropriate, as the naming of a new genus is something that is/should be covered in those articles. --ThaddeusB (talk) 13:16, 8 September 2009 (UTC)

- The bot is only going by the entries that already exist in the tables & it looks like there are only about 5 invalid entries across the dozen or so pages. As such, I'll leave it up to regular editors to pull the entries out of the main table rather than trying to write a function to do it.

Improper age sorting (fixed)

A sample update has been performed here. --ThaddeusB (talk) 13:16, 8 September 2009 (UTC)

- I haven't had time to look closely at it, but I notice the age sorting isn't working right, but I can't figure out why. Any idea? Abyssal (talk) 18:31, 8 September 2009 (UTC)

- Hmm, could you be more specific as it seems to work for me. The first click put it from most recent to oldest and the second from oldest to most recent. --ThaddeusB (talk) 00:57, 9 September 2009 (UTC)

- The first 20% or so is alright, but after the Miocene (when viewed in ascending order) it starts listing Jurassic stages, then it goes back to Pleistocene, and for some reason Cretaceous ages are listed as if they were the oldest. It's looked this way both at home and at school. The browser I used is Firefox. Abyssal (talk) 19:09, 9 September 2009 (UTC)

- OK, I figured out the problem. Apparently the {{sort}} template doesn't work properly with numbers, so everything is being sorted "alphabetically" - that is 1 < 10 < 2 etc. It was only coincidence that the first 20% is still correct (and I didn't look down far enough to realize the error). I'll add a fix for this tonight & re-upload the sample page. --ThaddeusB (talk) 20:05, 9 September 2009 (UTC)

- Good sleuthing! Abyssal (talk) 22:28, 9 September 2009 (UTC)

I believe the latest upload fixes the issues. --ThaddeusB (talk) 21:19, 10 September 2009 (UTC)

- How did you fix the problem? (I'll need to use the same method for other articles.) Abyssal (talk) 02:28, 11 September 2009 (UTC)

- By adding enough zeros in front of the numbers to make them correctly sort as strings. That is, by converting "1.23" to "001.23", "45" to "045", etc. --ThaddeusB (talk) 20:41, 12 September 2009 (UTC)
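For editors who need the same fix on other articles, the zero-padding described above can be sketched in a few lines of Python. This is an illustration only, not the bot's code: `pad_sort_key` is a hypothetical helper, and the default width of 3 is an assumption that every age has at most three digits before the decimal point.

```python
def pad_sort_key(value: str, width: int = 3) -> str:
    """Left-pad the integer part with zeros so that plain string
    comparison gives the same order as numeric comparison."""
    whole, dot, frac = value.partition(".")
    return whole.zfill(width) + dot + frac


ages = ["1.23", "10", "2", "45", "145.5"]

# Plain string sorting compares character by character, so "10" sorts before "2".
print(sorted(ages))

# Sorting on the padded keys restores numeric order.
print(sorted(ages, key=pad_sort_key))

# The two conversions given in the reply above.
print(pad_sort_key("1.23"), pad_sort_key("45"))
```

Only the integer part is padded. The fractional part already compares correctly digit by digit once the integer parts have equal length.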

Need for outside input

Just a thought, but in light of the Anybot debacle - it might be a good idea to put a call out to the WikiProjects and recruit some marine biologists/fossil guys/crustacean guys/etc. to come take a look at your trial edits and check them over with a fine-tooth comb before the bot is given final approval. If Anybot taught us anything, it's how simple errors in interpreting database content can lead to masses of incorrect information going live to the 'pedia and remaining there for months, unnoticed. --Kurt Shaped Box (talk) 22:28, 10 September 2009 (UTC)

- I certainly want several people to look over the data and have notified the 6 most relevant WikiProjects. So far, those notices don't seem to have attracted many people. :( --ThaddeusB (talk) 22:45, 10 September 2009 (UTC)

- Can you try asking specific editors by the type of articles you intend to produce? Asking editors if they will check the data? --69.225.12.99 (talk) 02:34, 11 September 2009 (UTC)

13 pages? Why do we need to approve a bot for that? Run your program. Dump the output to a screen. Post it by hand. Preview. Save. A bot will save you a few minutes whilst vastly increasing the risk to the project. Hesperian 23:55, 10 September 2009 (UTC)

- First off, let's not be ridiculous - me running a program locally & manually uploading the data is no less of a risk than me running a program locally & it automatically uploading the data. Now, there are several reasons I am requesting approval rather than just uploading the data:

- Yes it will only edit a few pages, but the amount of data it will import is immense, as we are talking about the automated filling of several thousand table entries. The amount of info that is being auto generated and added deserves community consent, IMO.

- I want as many people as possible to look it over to make sure the bot isn't adding inaccurate info. If I just uploaded it all in my name, it wouldn't get the same scrutiny.

- There is a planned second part of this task (automated creation of stubs) that will edit thousands of articles. This will be a separate BRFA, but the idea here is to get any bugs/inaccurate input data fixed on a relatively non-controversial task before moving on to a possibly controversial one. If the bot can handle accurately adding content to existing articles, then there is concrete evidence that it should be able to create stubs with prose based on the same information.

- I hope that clears it up. --ThaddeusB (talk) 00:20, 11 September 2009 (UTC)

- I obviously agree with Thad. This sort of tedious point-by-point extraction of information from a database is what Wikipedia bots are made for. As someone who has filled similar tables out manually, I can vouch that using a bot for this purpose is the most effective way to accomplish it. Abyssal (talk) 02:18, 11 September 2009 (UTC)

- I agree with that, Abyssal. I run scripts like that myself. But you don't need a bot account to run a script against an external data source. You only need a bot account to post the formatted results to Wikipedia. Personally I prefer to run my scripts, examine the results, tweak the scripts and run them again if necessary, iterate, eventually load the results into an edit window, preview, tweak, and finally save. It is a lesser risk to do it this way. The risk is only the same if you are going to copy-paste-save without examining the results, just like a bot would do. And in that case, I cannot comprehend why a bot is necessary. Once you've generated the data, posting 13 pages by hand will take you 6½ minutes. Thaddeus, I sure hope you'll be spending more time than that implementing and testing your bot. So where is the benefit? I also dispute the scrutiny argument. People don't scrutinise bots more; they largely ignore them until they screw up royally. And no, you don't need to obtain community consent before you edit Wikipedia, even if you are posting big pages. Hesperian 05:42, 16 September 2009 (UTC)

- I have already examined the input data, tested the bot, and reviewed its results fairly extensively. I've probably put around 40 hours into it in fact. I am well aware that I didn't need approval to do this task. I merely feel it is better to do it with approval than without. This vetting process has already led to some subtle improvements that likely would have never happened if I'd only reviewed the output on my own. --ThaddeusB (talk) 13:09, 16 September 2009 (UTC)
